《PyTorch深度学习实践9》——卷积神经网络-高级篇(Advanced-Convolution Neural Network)

Posted ☆下山☆

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了《PyTorch深度学习实践9》——卷积神经网络-高级篇(Advanced-Convolution Neural Network)相关的知识,希望对你有一定的参考价值。

一、 1 ∗ 1 1*1 1∗1卷积

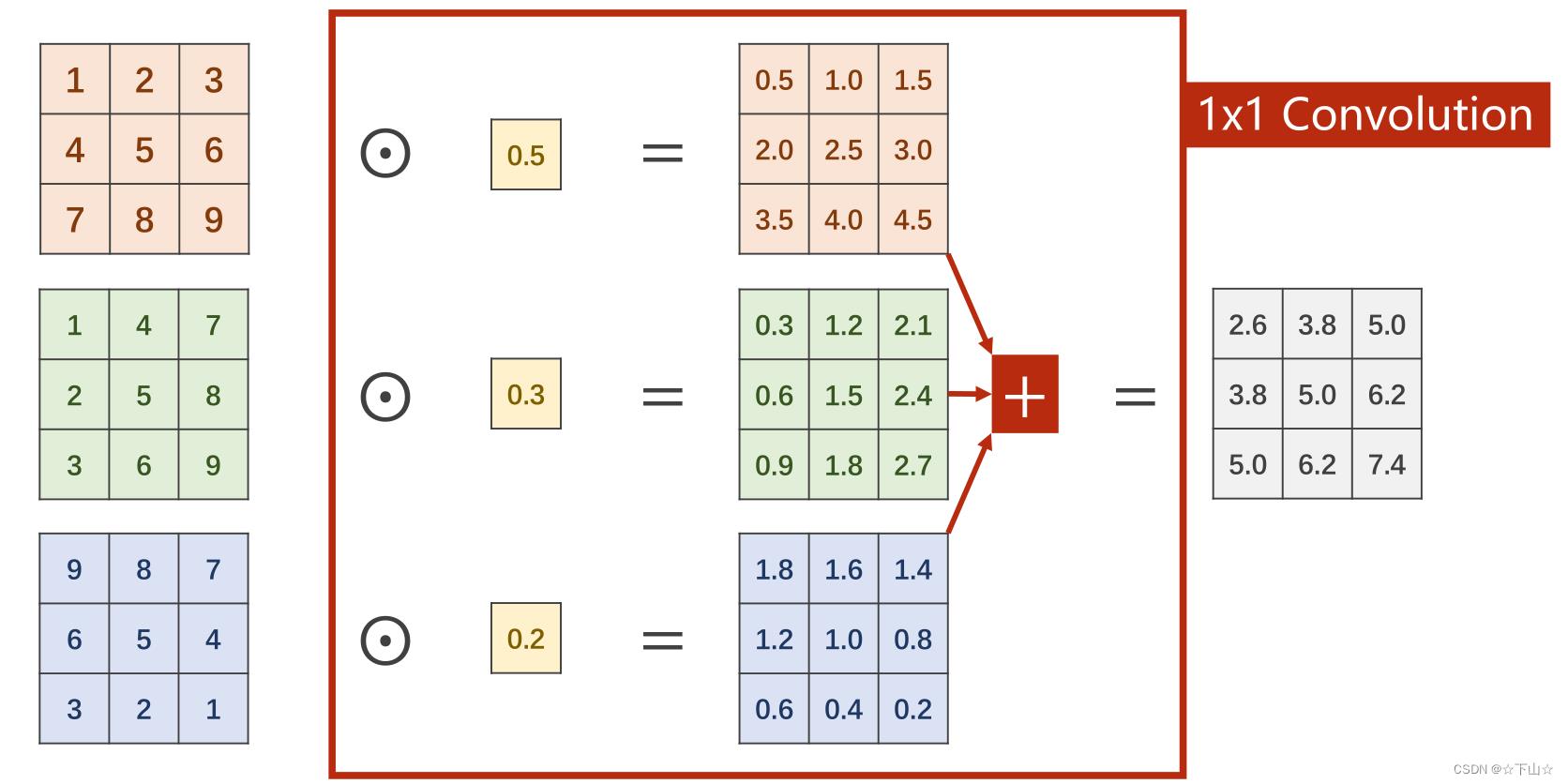

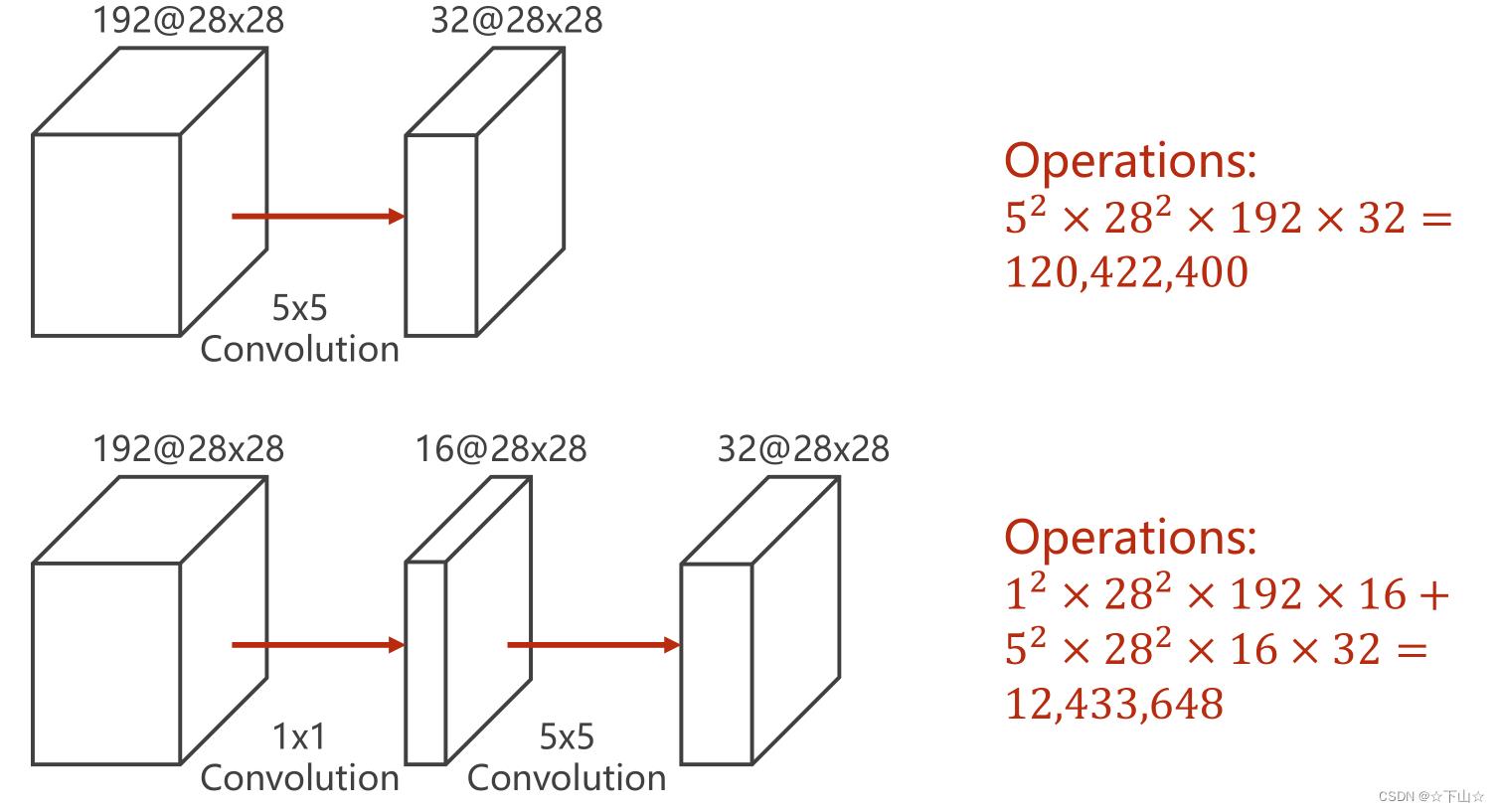

由下面两张图,可以看出

1

∗

1

1*1

1∗1卷积可以显著降低计算量。

通常

1

∗

1

1*1

1∗1卷积还有以下功能:

一是用于信息聚合,同时增加非线性,

1

∗

1

1*1

1∗1卷积可以看作是对所有通道的信息进行线性加权,即信息聚合,同时,在卷积之后可以使用非线性激活,可以一定程度地增加模型的表达能力;二是用于通道数的变换,可以增加或者减少输出特征图的通道数。

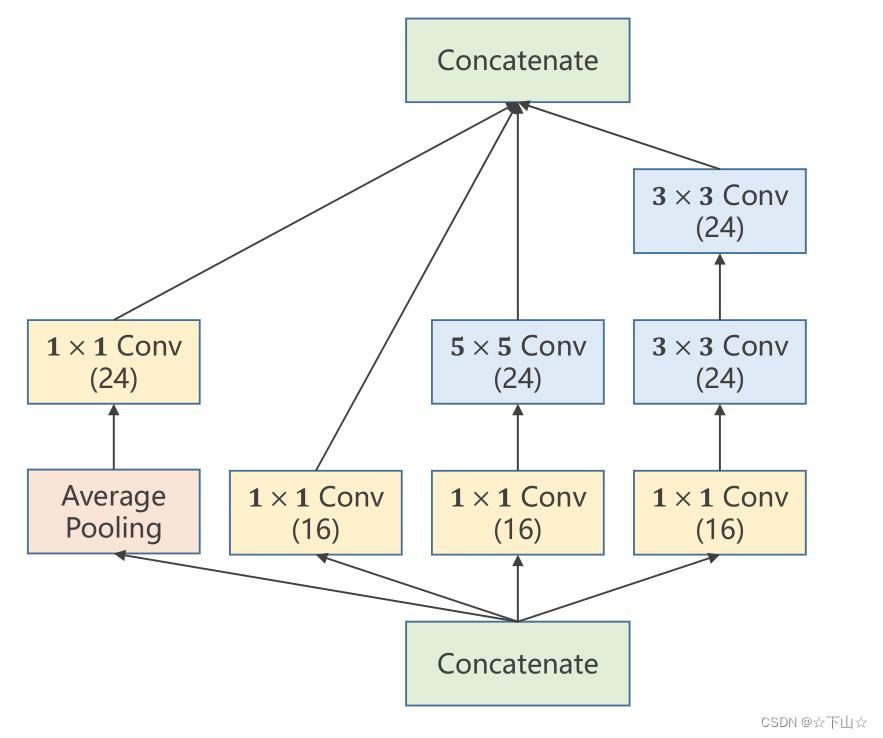

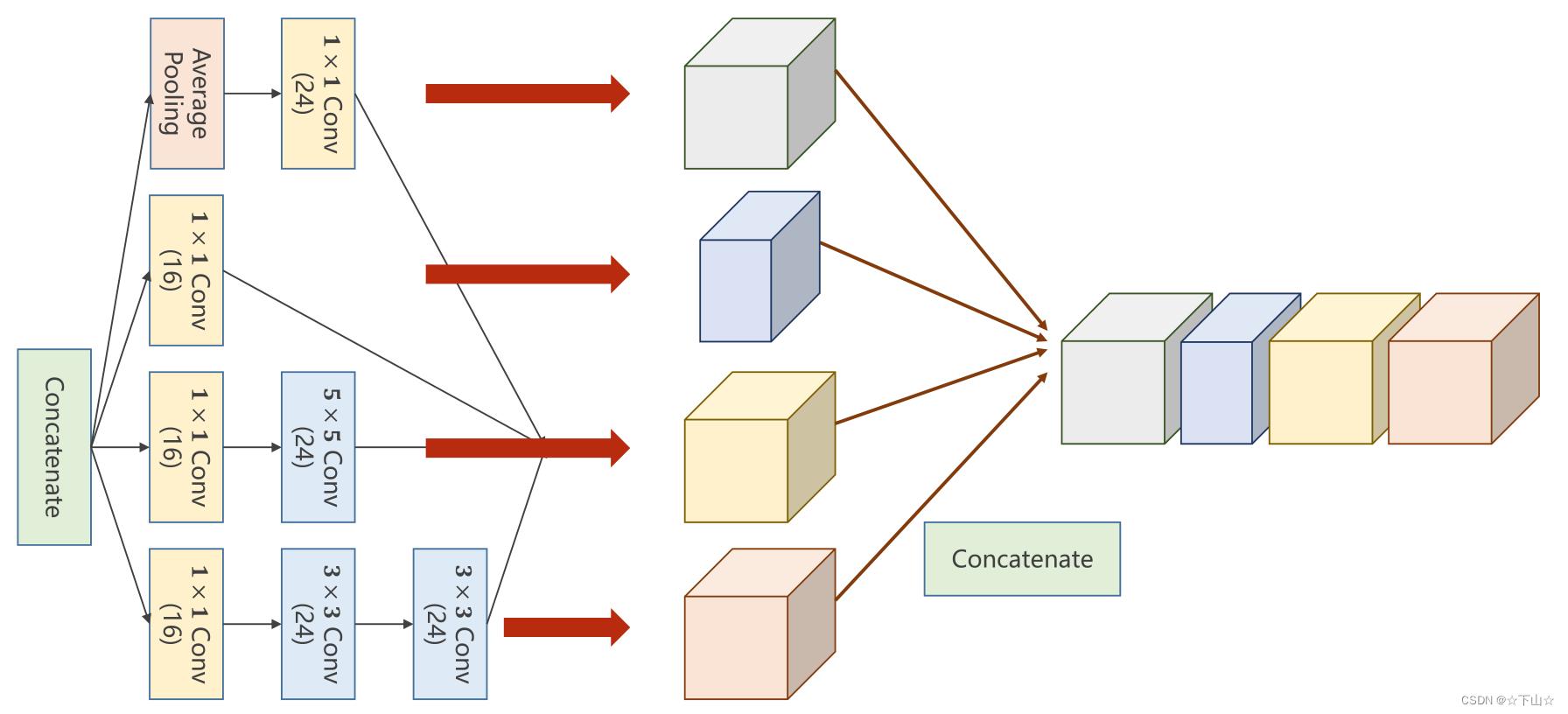

二、Inception Module

Inception V1首次使用了并行的结构。每个Inception块使用多个大小不同的卷积核,与传统的堆叠式网络每层只用一个尺寸的卷积核的结构完全不同。

Inception块的多个不同的卷积核可以提取到不同类型的特征,同时,每个卷积核的感受野也不一样,因此可以获得多尺度的特征,最后再将这些特征拼接起来。同时,为了降低计算成本,可以使用

1

∗

1

1*1

1∗1卷积进行降维。

import torch

import torch.nn as nn

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# prepare dataset

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))]) # 归一化,均值和方差

train_dataset = datasets.MNIST(root='', train=True, download=True, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='', train=False, download=True, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

# design model using class

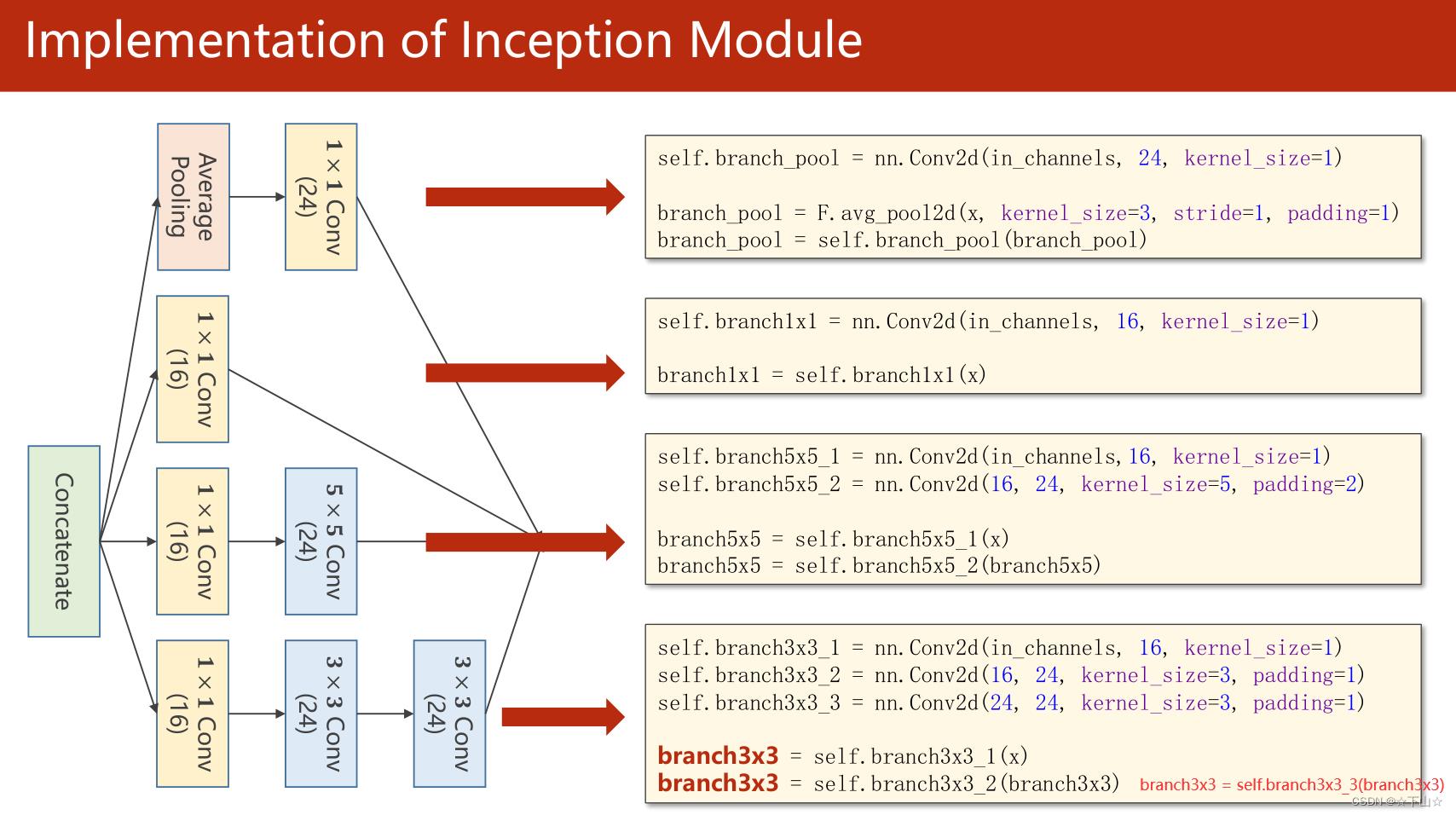

class InceptionA(nn.Module):

def __init__(self, in_channels):

super(InceptionA, self).__init__()

self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)

self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)

self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch3x3 = self.branch3x3_3(branch3x3)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3, branch_pool]

return torch.cat(outputs, dim=1) #沿着channel拼接。 b,c,w,h c对应的是dim=1

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(88, 20, kernel_size=5) # 88 = 24x3 + 16

self.incep1 = InceptionA(in_channels=10) # 与conv1 中的10对应

self.incep2 = InceptionA(in_channels=20) # 与conv2 中的20对应

self.mp = nn.MaxPool2d(2)

self.fc = nn.Linear(1408, 10)

def forward(self, x):

in_size = x.size(0)

x = F.relu(self.mp(self.conv1(x)))

x = self.incep1(x)

x = F.relu(self.mp(self.conv2(x)))

x = self.incep2(x)

x = x.view(in_size, -1)

x = self.fc(x)

return x

model = Net()

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# training cycle forward, backward, update

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %.2f %% ' % (100 * correct / total))

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

test()

[1, 300] loss: 0.797

[1, 600] loss: 0.168

[1, 900] loss: 0.130

accuracy on test set: 97.21 %

[2, 300] loss: 0.106

[2, 600] loss: 0.096

[2, 900] loss: 0.088

accuracy on test set: 97.55 %

[3, 300] loss: 0.080

[3, 600] loss: 0.071

[3, 900] loss: 0.068

accuracy on test set: 98.22 %

[4, 300] loss: 0.058

[4, 600] loss: 0.059

[4, 900] loss: 0.061

accuracy on test set: 98.34 %

[5, 300] loss: 0.051

[5, 600] loss: 0.057

[5, 900] loss: 0.048

accuracy on test set: 98.51 %

[6, 300] loss: 0.048

[6, 600] loss: 0.043

[6, 900] loss: 0.047

accuracy on test set: 98.92 %

[7, 300] loss: 0.040

[7, 600] loss: 0.044

[7, 900] loss: 0.038

accuracy on test set: 98.81 %

[8, 300] loss: 0.034

[8, 600] loss: 0.041

[8, 900] loss: 0.037

accuracy on test set: 98.76 %

[9, 300] loss: 0.032

[9, 600] loss: 0.035

[9, 900] loss: 0.034

accuracy on test set: 98.82 %

[10, 300] loss: 0.031

[10, 600] loss: 0.033

[10, 900] loss: 0.031

accuracy on test set: 98.96 %

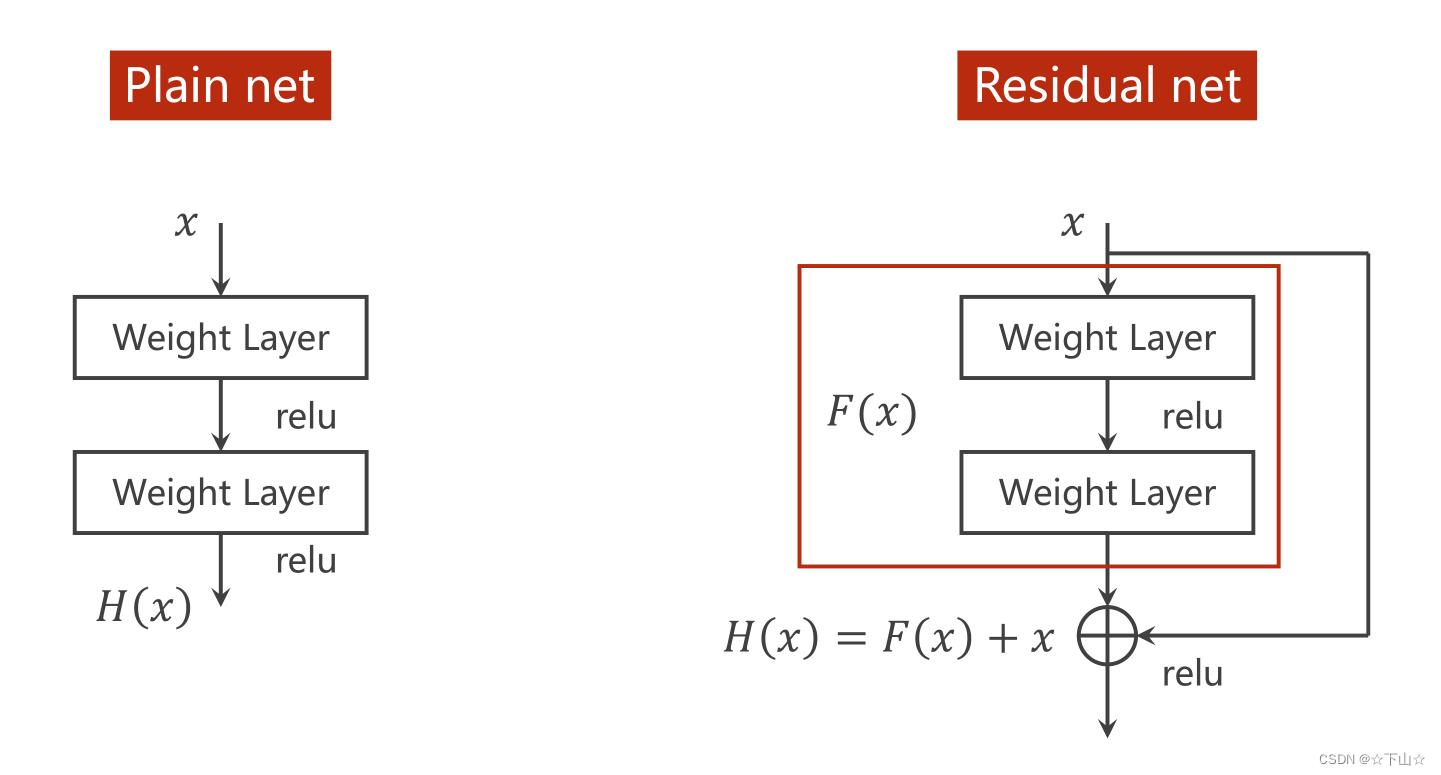

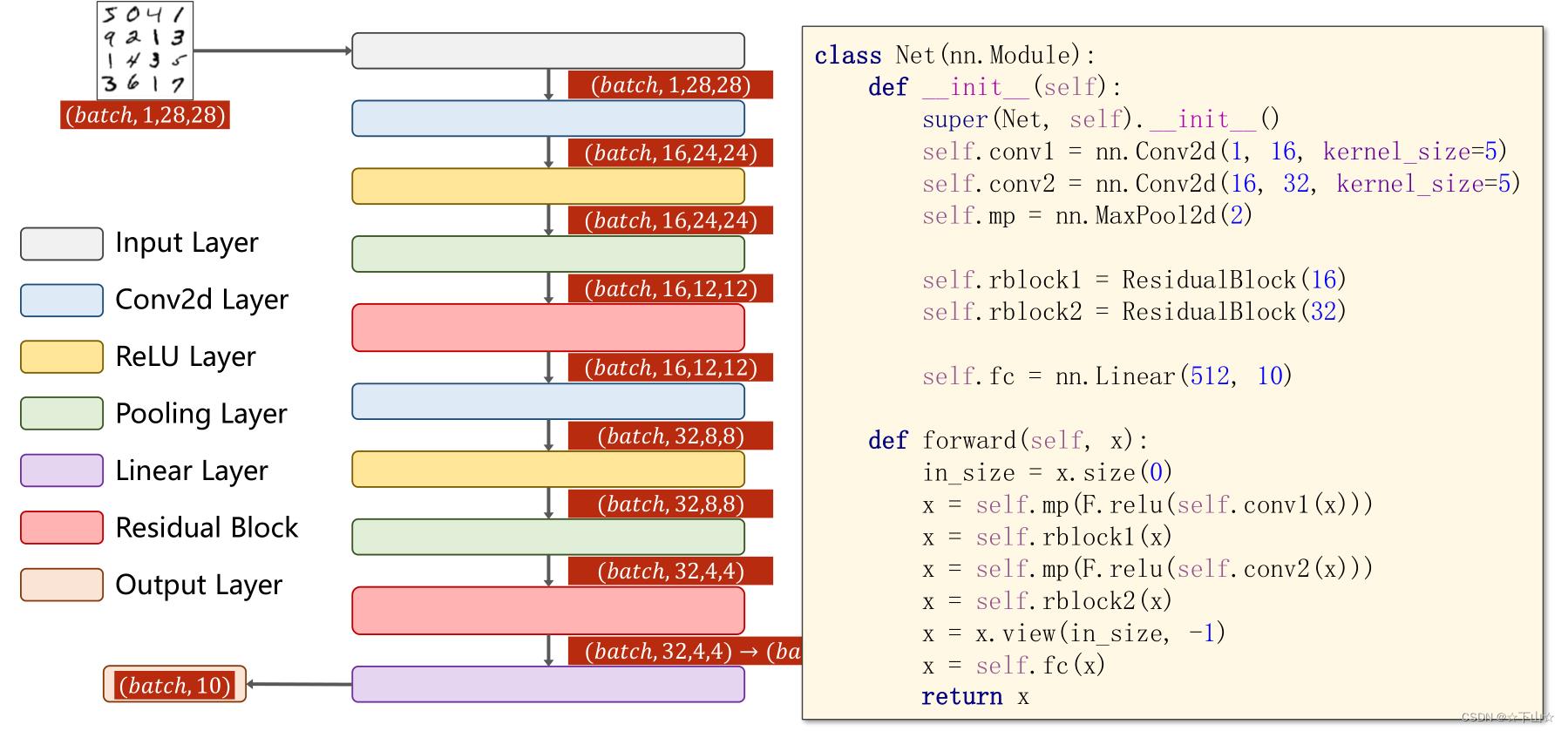

三、Simple Residual Network

残差网络从一定程度上解决了模型退化问题(由于优化困难而导致,随着网络的加深,训练集的准确率反而下降了),它在一个块的输入和输出之间引入一条直接的通路,称为跳跃连接。

跳跃连接的引入使得信息的流通更加顺畅:一是在前向传播时,将输入与输出的信息进行融合,能够更有效的利用特征;二是在反向传播时,总有一部分梯度通过跳跃连接反传到输入上,这缓解了梯度消失的问题。

import torch

import torch.nn as nn

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# prepare dataset

batch_size = 64

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))]) # 归一化,均值和方差

train_dataset = datasets.MNIST(root='', train=True, download=True, transform=transform)

train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

test_dataset = datasets.MNIST(root='', train=False, download=True, transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

# design model using class

class ResidualBlock(nn.Module):

def __init__(self, channels):

super(ResidualBlock, self).__init__()

self.channels = channels

self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

def forward(self, x):

y = F.relu(self.conv1(x))

y = self.conv2(y)

return F.relu(x + y)

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 16, kernel_size=5)

self.conv2 = nn.Conv2d(16, 32, kernel_size=5) # 88 = 24x3 + 16

self.rblock1 = ResidualBlock(16)

self.rblock2 = ResidualBlock(32)

self.mp = nn.MaxPool2d(2)

self.fc = nn.Linear(512, 10)

def forward(self, x):

in_size = x.size(0)

x = self.mp(F.relu(self.conv1(x)))

x = self.rblock1(x)

x = self.mp(F.relu(self.conv2(x)))

x = self.rblock2(x)

x = x.view(in_size, -1)

x = self.fc(x)

return x

model = Net()

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# training cycle forward, backward, update

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %.2f %% ' % (100 * correct / total))

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

test()

本文为系列文章:

| 上一篇 | 《Pytorch深度学习实践》目录 | 下一篇 |

|---|---|---|

| 卷积神经网络-基础篇(Basic-Convolution Neural Network) | 资料 | 循环神经网络-基础篇(Basic-Recurrent Neural Network) |

以上是关于《PyTorch深度学习实践9》——卷积神经网络-高级篇(Advanced-Convolution Neural Network)的主要内容,如果未能解决你的问题,请参考以下文章