基于OpenCV立体视觉标定和校正

Posted lijiayu2015

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了基于OpenCV立体视觉标定和校正相关的知识,希望对你有一定的参考价值。

这几天学习双目视觉标定,分别使用了两种工具:OpenCV和Matlab。Matlab的效果非常稳定,但是一开始OpenCV的效果很糟糕,要不是出现中断就是标定出来的结果数值很大。经过了几天的不断调试和更改,终于把OpenCV的立体视觉标定和校正的程序写出来了。立体标定时计算空间上的两台摄像机几何关系的过程,立体校正则是对个体图像进行纠正,保证这些图像可以从平面对准的两幅图像获得。程序的框架如下:

1.读取左右相机图片序列

双目相机的图片序列放在Demon的路径下,左右相机的图像的名字分别存放在两个txt文件中,程序分别通过这两个txt文件读取对应的图片序列。主注意:我们假设已经将摄像机排列好了,其图像扫描线是粗略物理对齐,从而使得每台摄像机本质上都具有相同的视场。

2.提取图片角点,并分别标定左右相机内参矩阵和畸变向量

调用cvFindChessboardCorners找出图像中的角点,然后调用cvFindCornerSubPix计算亚像素精度角点位置,将全部找出来的角点位置压入一个矩阵序列中,以及初始化角点在世界坐标系的对应位置序列,本程序的世界坐标系长度单位取标定板放个边长。然后用cvCalibrateCamera2分别标定做右相机的内参矩阵和畸变系数向量。将该过程封装成一个函数,具体过程请参考程序注释:

/*单个相机标定函数:

输入参数:

const char* imageList IN保存图片名的txt文件

CvMat* object_points OUT世界坐标系点的矩阵

CvMat* image_points OUT图像坐标系矩阵

CvMat* intrinsic_matrix OUT返回的内参数矩阵

CvMat* distortion_coeffs OUT返回的畸变向量

int n_boards IN图片的数量

int board_w IN每张图片x方向角点数量

int board_h IN每张图片y方向角点数量

CvSize* imgSize OUT每张图片的像素尺寸

*/

static void SingleCalib(const char* imageList, CvMat* object_points, CvMat* image_points, CvMat* intrinsic_matrix, CvMat* distortion_coeffs,

int n_boards, int board_w, int board_h, CvSize* imgSize)

{

//定义文件类

FILE* f;

fopen_s(&f, imageList, "rt");

int board_n = board_w*board_h;//每张图片中角点总数量

CvSize board_sz = cvSize(board_w, board_h);//角点尺寸矩阵

CvPoint2D32f* corners = new CvPoint2D32f[board_w*board_h];//定义用于存放每张图片角点数量的一维点数组

CvMat* point_counts = cvCreateMat(n_boards, 1, CV_32SC1);//向量,每个元素代表每张图片角点的数量

int successes = 0;//找到全部角点的图片数量

int step = 0;//用于记录每张图片角点的起始位置

//文件读取不成功:

if (!f)

{

fprintf(stderr, "can not open file %s\\n", imageList);//要读写, 得知道从哪里读, 往哪里写吧?stderr -- 标准错误输出设备

return;

}

//利用i循环读取文件中的字符,然后用于读取图片

for (int i = 0;; i++)

{

//读取图片

char buf[1024];//存放读取的字符数组

int count = 0, result = 0;//count找的的角点数量,result找角点结果标志,全部角点找到非零,否者为0;

if (!fgets(buf, sizeof(buf)-3, f))//提取文件的字符存放到buf

break;

size_t len = strlen(buf);//len为字符数组的长度

while (len > 0 && isspace(buf[len - 1]))//int isspace(int c)检查参数c是否为空格字符,也就是判断是否为空格(' ')、定位字符(' \\t ')、CR(' \\r ')、换行(' \\n ')

buf[--len] = '\\0'; //、垂直定位字符(' \\v ')或翻页(' \\f ')的情况。,既在非空白字符的后面以为添加‘\\0’

if (buf[0] == '#')//开头为'#',结束此次循环

continue;

IplImage* img = cvLoadImage(buf, 0);//读取图片

if (!img)

break;

//获取图片尺寸

*imgSize = cvGetSize(img);

//提取角点

result = cvFindChessboardCorners(img, cvSize(board_w, board_h), corners, &count, CV_CALIB_CB_ADAPTIVE_THRESH | CV_CALIB_CB_NORMALIZE_IMAGE);

if (result)

{

//Calibration will suffer without subpixel interpolation

//函数 cvFindCornerSubPix 通过迭代来发现具有亚象素精度的角点位置

cvFindCornerSubPix(img, corners, count, cvSize(11, 11), cvSize(-1, -1), cvTermCriteria(CV_TERMCRIT_EPS + CV_TERMCRIT_ITER, 30, 0.1));

/*TermCriteria迭代算法的终止准则:

typedef struct CvTermCriteria0d

{

int type; CV_TERMCRIT_ITER 和CV_TERMCRIT_EPS二值之一,或者二者的组合

int max_iter; 最大迭代次数

double epsilon; 结果的精确性

}

一般表示迭代终止的条件,如果为CV_TERMCRIT_ITER,则用最大迭代次数作为终止条件,如果为CV_TERMCRIT_EPS 则用精度作为迭代条件,

如果为CV_TERMCRIT_ITER+CV_TERMCRIT_EPS则用最大迭代次数或者精度作为迭代条件,看哪个条件先满足。*/

//开始保存角点的其实位置

step = successes*board_n;

//将角点从数组corners压入矩阵image_points;以及给对应的object_points赋值

for (int i = step, j = 0; j < board_n; ++i, ++j)

{

CV_MAT_ELEM(*image_points, float, i, 0) = corners[j].x;

CV_MAT_ELEM(*image_points, float, i, 1) = corners[j].y;

CV_MAT_ELEM(*object_points, float, i, 0) = (j / board_w);

CV_MAT_ELEM(*object_points, float, i, 1) = (j % board_w);

CV_MAT_ELEM(*object_points, float, i, 2) = 0.0f;

}

//给对应图片的point_counts赋值

CV_MAT_ELEM(*point_counts, int, successes, 0) = board_n;

successes++;

}

//释放该角点图像

cvReleaseImage(&img);

}

//关闭文件

fclose(f);

//初始化相机内参矩阵

CV_MAT_ELEM(*intrinsic_matrix, float, 0, 0) = 1.0f;

CV_MAT_ELEM(*intrinsic_matrix, float, 1, 1) = 1.0f;

//标定相机的内参矩阵和畸变系数向量

cvCalibrateCamera2(object_points, image_points, point_counts, *imgSize, intrinsic_matrix, distortion_coeffs, NULL, NULL, 0);

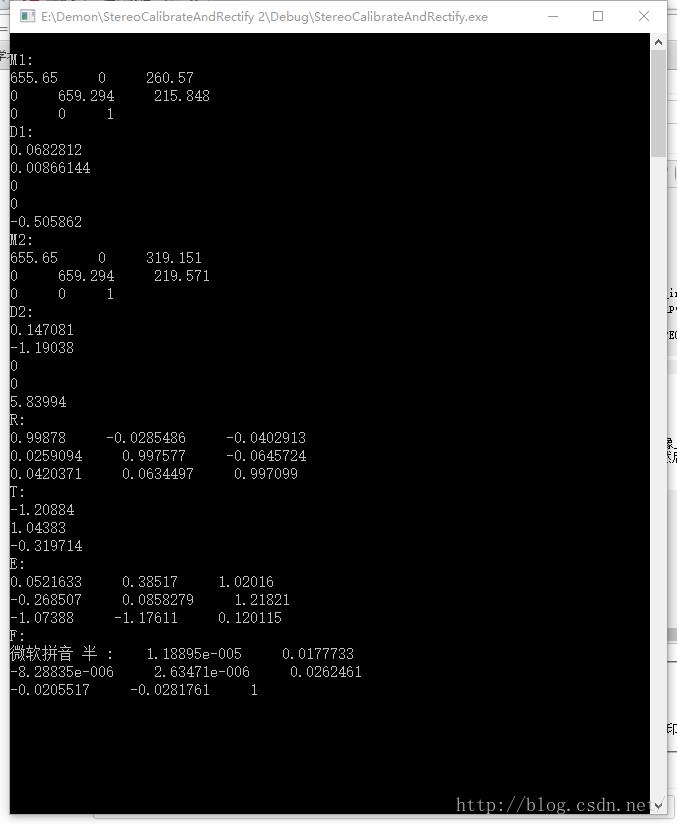

}3.立体标定,计算两摄像机相对旋转矩阵 R,平移向量 T, 本征矩阵E, 基础矩阵F

第2步获得了在世界坐标系和图片坐标系下角点位置序列,以及两个相机的内参矩阵和畸变系数向量,然后调用cvStereoCalibrate计算摄像机相对旋转矩阵 R,平移向量 T, 本征矩阵E, 基础矩阵F,,并同时调整第2步计算的做右相机内参矩阵和畸变系数向量。但是处于个人习惯还是喜欢将该过程封装到一个函数里:

static void StereoCalib(CvMat* _left_object_points, CvMat* _left_image_points, CvMat* _right_image_points, CvMat* left_intrinsic, CvMat* right_intrinsic,

CvMat* left_distortion, CvMat* right_distortion, CvMat* _R, CvMat* _T, CvMat* _E, CvMat* _F, int _n_boards, int _board_w, int _board_h, CvSize _imgSize)

{

int board_n = _board_w*_board_h;//每张图片中角点总数量

//初始化Number_perImg

CvMat* Number_perImg = cvCreateMat(1, _n_boards, CV_32SC1);

int* pInt;

pInt = (int*)(Number_perImg->data.ptr);

for (int i = 0; i < _n_boards; ++i)

{

*pInt = board_n;

pInt++;

}

//Show(用于调试)

/*for (int i = 0; i < _n_boards; i++)

{

cout << CV_MAT_ELEM(*Number_perImg, int, 0, i) << endl;

}*/

//立体标定.计算 _R, _T, _E, _F,,并同时调整Number_perImg, left_intrinsic, D_left,right_intrinsic, D_right

cvStereoCalibrate(_left_object_points, _left_image_points, _right_image_points, Number_perImg, left_intrinsic, left_distortion,

right_intrinsic, right_distortion, _imgSize, _R, _T, _E, _F,

cvTermCriteria(CV_TERMCRIT_ITER + CV_TERMCRIT_EPS, 100, 1e-5), CV_CALIB_FIX_ASPECT_RATIO + CV_CALIB_ZERO_TANGENT_DIST + CV_CALIB_SAME_FOCAL_LENGTH);

}

计算得到如下的结果(到目前为止还是很顺利的嘛!):

4.上述标定结果和Matlab的标定结果对比差别不大。下面通过另一种方法检查标定结果的误差,通过检查图像上的点与另一幅图像的极线的距离远近来评价标定精度。首先,通过cvUndistortPoints()对原始点进行去畸变处理,然后使用cvComputeCorrespondEplilines()来计算极线,然后计算这些点和极线的距离(理想情况下,距离为0),累计这些距离的绝对误差,然后求其平均值。具体过程我也把它封装成一个函数:

/*计算标定误差函数

CvMat* left_image_points IN左相机图像坐标系点的矩阵

CvMat* right_image_points IN右相机图像坐标系点的矩阵

CvMat* left_intrinsic INandOUT左相机的内参矩阵,经立体标定调整后输出

CvMat* right_intrinsic INandOUT右相机的内参矩阵,经立体标定调整后输出

CvMat* left_distortion INandOUT左相机的畸变向量,经立体标定调整后输出

CvMat* right_distortion INandOUT右相机的畸变向量,经立体标定调整后输出

CvMat* _F OUT基础矩阵

int n_boards IN图片的数量

int board_w IN每张图片x方向角点数量

int board_h IN每张图片y方向角点数量

*/

double Calib_Quality_Check(CvMat* _left_image_points, CvMat* _right_image_points, CvMat* left_intrinsic, CvMat* right_intrinsic,

CvMat* left_distortion, CvMat* right_distortion, CvMat* _F, int _n_boards, int _board_w, int _board_h)

{

int board_n = _board_w*_board_h;//每张图片中角点总数量

//定义极线

CvMat* Lines1 = cvCreateMat(1, _n_boards*board_n, CV_32FC3);

CvMat* Lines2 = cvCreateMat(1, _n_boards*board_n, CV_32FC3);

//校正点的畸变

<span style="font-family: Arial, Helvetica, sans-serif;">cvUndistortPoints</span><span style="font-family: Arial, Helvetica, sans-serif;">(_left_image_points, _left_image_points, left_intrinsic, D_left, 0, left_intrinsic);//代码运行到这里出现中断</span>

<span style="font-family: Arial, Helvetica, sans-serif;">cvUndistortPoints</span><span style="font-family: Arial, Helvetica, sans-serif;">(_right_image_points, _right_image_points, right_intrinsic, D_right, 0, right_intrinsic);</span>

//计算极线

cvComputeCorrespondEpilines(_left_image_points, 1, _F, Lines1);

cvComputeCorrespondEpilines(_right_image_points, 2, _F, Lines2);

//计算左相机图像点和极线的距离

float a, b, c;//极线系数ax+by+c=0

double err, avgErr = 0;//误差变量

float* p_temp = (float*)cvPtr2D(_left_image_points, 0, 0);//用于临时计算的点

float* l_temp = (float*)cvPtr2D(Lines2, 0, 0,0);//用于临时计算的极线

for (int i = 0; i < _n_boards*board_n; i++)

{

//提取点坐标

x = *p_temp;

y = *(p_temp + 1);

p_temp += 2;

//提取极线系数

a = *l_temp;

b = *(l_temp + 1);

c = *(l_temp + 2);

l_temp += 3;

//计算点到直线的距离

err = fabs(a*x + b*y + c) / sqrt(a*a + b*b);

//累加误差

avgErr += err;

}

//计算右相机图像点和极线的距离

p_temp = (float*)cvPtr2D(_right_image_points, 0, 0);//用于临时计算的点

l_temp = (float*)cvPtr2D(Lines1, 0, 0);//用于临时计算的极线

for (int i = 0; i < _n_boards*board_n; i++)

{

//提取点坐标

x = *p_temp;

y = *(p_temp + 1);

p_temp += 2;

//提取极线系数

a = *l_temp;

b = *(l_temp + 1);

c = *(l_temp + 2);

l_temp += 3;

//计算点到直线的距离

err = fabs(a*x + b*y + c) / sqrt(a*a + b*b);

//累加误差

avgErr += err;

}

//求误差的平均值

avgErr /= (_n_boards*board_n);

return avgErr;

}

void

cvUndisto(const CvMat* _src, CvMat* _dst, const CvMat* _cameraMatrix,

const CvMat* _distCoeffs,

const CvMat* _R, const CvMat* _P)CV_ASSERT(CV_IS_MAT(_src) && CV_IS_MAT(_dst) &&

(_src->rows == 1 || _src->cols == 1) &&

(_dst->rows == 1 || _dst->cols == 1) &&

CV_ARE_SIZES_EQ(_src, _dst) &&

(CV_MAT_TYPE(_src->type) == CV_32FC2 || CV_MAT_TYPE(_src->type) == CV_64FC2) &&

(CV_MAT_TYPE(_dst->type) == CV_32FC2 || CV_MAT_TYPE(_dst->type) == CV_64FC2));CvMat* left_image_points = cvCreateMat(n_boards*board_n, 2, CV_32FC1);//左相机图片坐标中的角点

CvMat* right_image_points = cvCreateMat(n_boards*board_n, 2, CV_32FC1);//右相机图片坐标中的角点(CV_MAT_TYPE(_src->type) == CV_32FC2 || CV_MAT_TYPE(_src->type) == CV_64FC2) &&(CV_MAT_TYPE(_dst->type) == CV_32FC2 || CV_MAT_TYPE(_dst->type) == CV_64FC2))/*计算标定误差函数

CvMat* left_image_points IN左相机图像坐标系点的矩阵

CvMat* right_image_points IN右相机图像坐标系点的矩阵

CvMat* left_intrinsic INandOUT左相机的内参矩阵,经立体标定调整后输出

CvMat* right_intrinsic INandOUT右相机的内参矩阵,经立体标定调整后输出

CvMat* left_distortion INandOUT左相机的畸变向量,经立体标定调整后输出

CvMat* right_distortion INandOUT右相机的畸变向量,经立体标定调整后输出

CvMat* _F OUT基础矩阵

int n_boards IN图片的数量

int board_w IN每张图片x方向角点数量

int board_h IN每张图片y方向角点数量

*/

double Calib_Quality_Check(CvMat* _left_image_points, CvMat* _right_image_points, CvMat* left_intrinsic, CvMat* right_intrinsic,

CvMat* left_distortion, CvMat* right_distortion, CvMat* _F, int _n_boards, int _board_w, int _board_h)

{

int board_n = _board_w*_board_h;//每张图片中角点总数量

//整理LeftPoints。因为_left_image_points数据类型是_n_boards*board_n*3*CV_32FC1,left数据类型是1*_n_boards*board_n*CV_32FC2,对参数进行转换

CvMat* left = cvCreateMat(1, _n_boards*board_n, CV_32FC2);

float *p = (float*)cvPtr2D(left, 0, 0);

float x, y;

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*_left_image_points, float, i, 0);

y = CV_MAT_ELEM(*_left_image_points, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//整理LeftPoints。因为_left_image_points数据类型是_n_boards*board_n*3*CV_32FC1,left数据类型是1*_n_boards*board_n*CV_32FC2,对参数进行转换

CvMat* right = cvCreateMat(1, _n_boards*board_n, CV_32FC2);

p = (float*)cvPtr2D(right, 0, 0);

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*_right_image_points, float, i, 0);

y = CV_MAT_ELEM(*_right_image_points, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//调整畸变向量。因为left_distortion数据类型是5*1的向量,D_left数据类型是1*5向量,对参数进行转换

CvMat* D_left = cvCreateMat(1, 5, CV_32FC1);

for (int i = 0; i < 5; ++i)

{

CV_MAT_ELEM(*D_left, float, 0, i) = CV_MAT_ELEM(*left_distortion, float, i, 0);

}

//调整畸变向量。因为right_distortion数据类型是5*1的向量,D_right数据类型是1*5向量,对参数进行转换

CvMat* D_right = cvCreateMat(1, 5, CV_32FC1);

for (int i = 0; i < 5; ++i)

{

CV_MAT_ELEM(*D_right, float, 0, i) = CV_MAT_ELEM(*right_distortion, float, i, 0);

}

//定义极线

CvMat* Lines1 = cvCreateMat(1, _n_boards*board_n, CV_32FC3);

CvMat* Lines2 = cvCreateMat(1, _n_boards*board_n, CV_32FC3);

//校正点的畸变

cvUndistortPoints(_left_image_points, _left_image_points, left_intrinsic, D_left, 0, left_intrinsic);

cvUndistortPoints(_right_image_points, _right_image_points, right_intrinsic, D_right, 0, right_intrinsic);

//计算极线

cvComputeCorrespondEpilines(_left_image_points, 1, _F, Lines1);

cvComputeCorrespondEpilines(_right_image_points, 2, _F, Lines2);

//计算左相机图像点和极线的距离

float a, b, c;//极线系数ax+by+c=0

double err, avgErr = 0;//误差变量

float* p_temp = (float*)cvPtr2D(_left_image_points, 0, 0);//用于临时计算的点

float* l_temp = (float*)cvPtr2D(Lines2, 0, 0,0);//用于临时计算的极线

for (int i = 0; i < _n_boards*board_n; i++)

{

//提取点坐标

x = *p_temp;

y = *(p_temp + 1);

p_temp += 2;

//提取极线系数

a = *l_temp;

b = *(l_temp + 1);

c = *(l_temp + 2);

l_temp += 3;

//计算点到直线的距离

err = fabs(a*x + b*y + c) / sqrt(a*a + b*b);

//累加误差

avgErr += err;

}

//计算右相机图像点和极线的距离

p_temp = (float*)cvPtr2D(_right_image_points, 0, 0);//用于临时计算的点

l_temp = (float*)cvPtr2D(Lines1, 0, 0);//用于临时计算的极线

for (int i = 0; i < _n_boards*board_n; i++)

{

//提取点坐标

x = *p_temp;

y = *(p_temp + 1);

p_temp += 2;

//提取极线系数

a = *l_temp;

b = *(l_temp + 1);

c = *(l_temp + 2);

l_temp += 3;

//计算点到直线的距离

err = fabs(a*x + b*y + c) / sqrt(a*a + b*b);

//累加误差

avgErr += err;

}

//求误差的平均值

avgErr /= (_n_boards*board_n);

return avgErr;

}

4.计算相机校正的左右相机的校正矩阵,使相机两平面完全行对准

当相机两平面完全行对准时,计算立体视差是最简单的,为了使相机两平面完全行对准,OpenCV提供了非标定和标定的方法来计算相机校正的左右相机的校正矩阵。接下来,选择非标定(Hartley)方法cvStereoRectifyUncalibrated()或者标定(Bouguet)方法cvStereoRectify()来计算相机变换。

如果选择非标定方法,在使用

cvInitUndistortRectifyMap( const CvMat* camera_matrix,const CvMat* dist_coeffs,const CvMat *R, const CvMat* new_camera_matrix,CvArr* mapx, CvArr* mapy );而选择标定方法则可以直接从cvStereoRectify()读出cvInitUndistortRectifyMap()的输入参数。

我还是把这个过程封装成一个函数的形式:

/*立体校正的映射矩阵mapx和mapy函数

CvMat* left_image_points IN左相机图像坐标系点的矩阵

CvMat* right_image_points IN右相机图像坐标系点的矩阵

CvMat* left_intrinsic INandOUT左相机的内参矩阵,经立体标定调整后输出

CvMat* right_intrinsic INandOUT右相机的内参矩阵,经立体标定调整后输出

CvMat* left_distortion INandOUT左相机的畸变向量,经立体标定调整后输出

CvMat* right_distortion INandOUT右相机的畸变向量,经立体标定调整后输出

CvMat* R OUT相机相对旋转矩阵

CvMat* T OUT相机相对平移矩阵

CvMat* F OUT基础矩阵

CvSize* imgSize IN每张图片的像素尺寸

int n_boards IN图片的数量

int board_w IN每张图片x方向角点数量

int board_h IN每张图片y方向角点数量

bool useUncalibrated IN是否采用非标定算法Hartly计算立体校正的映射矩阵mapx和mapy

CvMat* mxl OUT左相机立体校正的映射矩阵mapx

CvMat* myl OUT左相机立体校正的映射矩阵mapy

CvMat* mxr OUT右相机立体校正的映射矩阵mapx

CvMat* myr OUT右相机立体校正的映射矩阵mapy

*/

/*mapx和mapy是函数返回的查找映射表,。对目标图像上的每一个像素来说,映射表说明应该从什么位置插值源像素;

并且映射表可以直接被Remap()嵌入使用。函数cvInitUndistortRectifyMap()被左右摄像机分别调用,这样可以获得它们的各自不同的重映射参数mapx和mapy,

每次我们有新的左右立体图像需要校正时可以使用左右映射表*/

static void ComputeRectification(CvMat* imagePoints_left, CvMat* imagePoints_right, CvMat* _left_intrinsic, CvMat* _right_intrinsic, CvMat* _left_distortion, CvMat* _right_distortion,

CvMat* R, CvMat* T, CvMat* F, CvSize imageSize, int _n_boards, int _board_w, int _board_h, CvMat* mxl, CvMat* myl, CvMat* mxr, CvMat* myr)

{

//定义和初始化左右相机的行对准旋转矩阵

CvMat* Rl = cvCreateMat(3, 3, CV_64FC1);

cvZero(Rl);

CvMat* Rr = cvCreateMat(3, 3, CV_64FC1);

cvZero(Rr);

//计算相机共面和行对准的校正项

if (useUncalibrated == false)//useUncalibrated == 0,使用Bouguet方法计算校正项

{

//定义和初始化做右相机投影矩阵,该矩阵可以计算校正后的相机内参数矩阵

CvMat* Pl = cvCreateMat(3, 4, CV_64FC1);

cvZero(Pl);

CvMat* Pr = cvCreateMat(3, 4, CV_64FC1);

cvZero(Pr);

//计算相机校正项,计算得到 Rl, Rr, Pl, Pr

cvStereoRectify(<span style="font-family: Arial, Helvetica, sans-serif;">_left_intrinsic</span><span style="font-family: Arial, Helvetica, sans-serif;">, </span><span style="font-family: Arial, Helvetica, sans-serif;">_right_intrinsic</span><span style="font-family: Arial, Helvetica, sans-serif;">, </span><span style="font-family: Arial, Helvetica, sans-serif;">_left_distortion</span><span style="font-family: Arial, Helvetica, sans-serif;">, </span><span style="font-family: Arial, Helvetica, sans-serif;">_right_distortion</span><span style="font-family: Arial, Helvetica, sans-serif;">, imageSize,</span><span style="font-family: Arial, Helvetica, sans-serif;">R, T, Rl, Rr, Pl, Pr, 0, 0, );//运行到此处出现中断</span><span style="font-family: Arial, Helvetica, sans-serif;">

</span> //Show(用于调试)

/*cout << endl << "Pr:" << endl;

for (int i = 0; i < 3; i++)

{

const double *p = (const double*)(Pr->data.ptr + i*Pr->step);

cout << *p << " " << *(p + 1) << " " << *(p + 2)<<" "<<*(p+3) << endl;

}*/

/*通过比较转换矩阵P位置[0][3]和位置[1][3]的大小 判断相机是水平布置还是垂直布置。

因为这两个位置的元素分别表示右相机坐标系原点移动到左相机坐标系原点的x和y方向移动距离。*/

isVerticalStereo = fabs(CV_MAT_ELEM(*Pr, double, 1, 3)) > fabs(CV_MAT_ELEM(*Pr, double, 0, 3));

//Precompute maps for cvRemap()

//下面通过函数cvInitUndistortRectifyMap()计算立体校正的映射矩阵mx和my

cvInitUndistortRectifyMap(_left_intrinsic, D_left, Rl, Pl, mxl, myl);

cvInitUndistortRectifyMap(_right_intrinsic, D_right, Rr, Pr, mxr, myr);

}

else//useUncalibrated! = 0,使用Hartley方法计算校正项

{

int board_n = _board_w*_board_h;//每张图片中角点总数量

CvMat* Hl = cvCreateMat(3, 3, CV_64F);//Hartley方法计算得到的校正项

CvMat* Hr = cvCreateMat(3, 3, CV_64F);//Hartley方法计算得到的校正项

CvMat *invM = cvCreateMat(3, 3, CV_64F);//用于临时存放相机内参数的逆

//整理imagePoints_left和imagePoints_right

float x, y;//用于传值的临时变量

float* p;//用于传值的临时变量

//LeftPoints

CvMat* left = cvCreateMat(1, _n_boards*board_n, CV_32FC2);

p = (float*)cvPtr2D(left, 0, 0);

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*imagePoints_left, float, i, 0);

y = CV_MAT_ELEM(*imagePoints_left, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//Show

/*p = (float*)cvPtr2D(left, 0, 0);

for (int i = 0; i < n_boards*board_n; i++)

{

cout << *p << " " << *(p + 1) << endl;

p += 2;

}*/

//RightPoints

CvMat* right = cvCreateMat(1, _n_boards*board_n, CV_32FC2);

p = (float*)cvPtr2D(right, 0, 0);

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*imagePoints_right, float, i, 0);

y = CV_MAT_ELEM(*imagePoints_right, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//Show

/*p = (float*)cvPtr2D(right, 0, 0);

for (int i = 0; i < n_boards*board_n; i++)

{

cout << *p << " " << *(p + 1) << endl;

p += 2;

}*/

//通过两个相机的图像点计算基础矩阵F

cvFindFundamentalMat(left, right, F);

//使用非标定算法Hartley立体校正,获得做右相机的校正单应性矩阵H1,H2

cvStereoRectifyUncalibrated(left, right, F, imageSize, Hl, Hr, 3);

/*使用cvStereoRectifyUncalibrated()的结果H1,H2来校正立体摄像机,必须先预处理一下单应性矩阵Hl和我Hr,

如果没有先前标定中获得Mrect,令Mrect=M,分别计算左右校正旋转矩阵Rl=inv(Mrect_l)Hl(Ml)和Rr=inv(Mrect_r)Hr(Mr),

最后还需要分别计算左右相机的畸变系数向量*/

//Show(用于调试)

/*cout << endl << "M1:";

for (int i = 0; i < 3; i++)

{

cout << endl;

for (int j = 0; j < 3; j++)

{

cout << CV_MAT_ELEM(*_left_intrinsic, float, i, j) << " ";

}

}*/

//预处理Rl=inv(Mrect_l)Hl(Ml)

cvInvert(M_left, invM);

cvMatMul(Hl, M_left, Rl);

cvMatMul(invM, Rl, Rl);

//预处理Rr=inv(Mrect_r)Hr(Mr)

cvInvert(M_right, invM);//_iM为_M1的逆矩阵

cvMatMul(Hr, M_right, Rr);//_R1=_H1*_M1

cvMatMul(invM, Rr, Rr);//_R2=_iM*_R1

//Precompute map for cvRemap(),用预处理后的_R1和_R2,以及前面计算的相机参数代入cvInitUndistortRectifyMap计算mapx和mapy

cvInitUndistortRectifyMap(M_left, D_left, Rl, M_left, mxl, myl);

cvInitUndistortRectifyMap(M_right, D_right, Rr, M_right, mxr, myr);

}

}cvRodrigues2( const CvMat* src, CvMat* dst, CvMat* jacobian )if (!CV_ARE_DEPTHS_EQ(src, dst))

CV_Error(CV_StsUnmatchedFormats, "All the matrices must have the same data type");/*立体校正的映射矩阵mapx和mapy函数

CvMat* left_image_points IN左相机图像坐标系点的矩阵

CvMat* right_image_points IN右相机图像坐标系点的矩阵

CvMat* left_intrinsic INandOUT左相机的内参矩阵,经立体标定调整后输出

CvMat* right_intrinsic INandOUT右相机的内参矩阵,经立体标定调整后输出

CvMat* left_distortion INandOUT左相机的畸变向量,经立体标定调整后输出

CvMat* right_distortion INandOUT右相机的畸变向量,经立体标定调整后输出

CvMat* R OUT相机相对旋转矩阵

CvMat* T OUT相机相对平移矩阵

CvMat* F OUT基础矩阵

CvSize* imgSize IN每张图片的像素尺寸

int n_boards IN图片的数量

int board_w IN每张图片x方向角点数量

int board_h IN每张图片y方向角点数量

bool useUncalibrated IN是否采用非标定算法Hartly计算立体校正的映射矩阵mapx和mapy

CvMat* mxl OUT左相机立体校正的映射矩阵mapx

CvMat* myl OUT左相机立体校正的映射矩阵mapy

CvMat* mxr OUT右相机立体校正的映射矩阵mapx

CvMat* myr OUT右相机立体校正的映射矩阵mapy

*/

/*mapx和mapy是函数返回的查找映射表,。对目标图像上的每一个像素来说,映射表说明应该从什么位置插值源像素;

并且映射表可以直接被Remap()嵌入使用。函数cvInitUndistortRectifyMap()被左右摄像机分别调用,这样可以获得它们的各自不同的重映射参数mapx和mapy,

每次我们有新的左右立体图像需要校正时可以使用左右映射表*/

static void ComputeRectification(CvMat* imagePoints_left, CvMat* imagePoints_right, CvMat* _left_intrinsic, CvMat* _right_intrinsic, CvMat* _left_distortion, CvMat* _right_distortion,

CvMat* R, CvMat* T, CvMat* F, CvSize imageSize, int _n_boards, int _board_w, int _board_h, CvMat* mxl, CvMat* myl, CvMat* mxr, CvMat* myr)

{

/*将参数变量重新整理成CV_64F类型的,我也不知道为什么,cvStereoRectify如果传入CV_32FC1的参数会中断,改为CV_64FC1则成功*/

//整理左相机内参矩阵。因为_left_intrinsic数据类型为CV_32FC1,M_left为CV_64FC1

CvMat* M_left = cvCreateMat(3, 3, CV_64FC1);

for (int i = 0; i < 3; i++)

for (int j = 0; j < 3; j++)

CV_MAT_ELEM(*M_left, double, i, j) = CV_MAT_ELEM(*_left_intrinsic, float, i, j);

//整理右相机内参矩阵。因为_left_intrinsic数据类型为CV_32FC1,M_left为CV_64FC1

CvMat* M_right = cvCreateMat(3, 3, CV_64FC1);

for (int i = 0; i < 3; i++)

for (int j = 0; j < 3; j++)

CV_MAT_ELEM(*M_right, double, i, j) = CV_MAT_ELEM(*_right_intrinsic, float, i, j);

//调整畸变向量。因为left_distortion数据类型是5*1的向量,D_left数据类型是1*5向量,同时转换为CV_64FC1。

CvMat* D_left = cvCreateMat(1, 5, CV_64FC1);

for (int i = 0; i < 5; ++i)

{

CV_MAT_ELEM(*D_left, double, 0, i) = CV_MAT_ELEM(*_left_distortion, float, i, 0);

}

//调整畸变向量。因为right_distortion数据类型是5*1的向量,D_right数据类型是1*5向量,同时转换为CV_64FC1。

CvMat* D_right = cvCreateMat(1, 5, CV_64FC1);

for (int i = 0; i < 5; ++i)

{

CV_MAT_ELEM(*D_right, double, 0, i) = CV_MAT_ELEM(*_right_distortion, float, i, 0);

}

//转换旋转矩阵。CV_32FC1转换为CV_64FC1

CvMat* RR = cvCreateMat(3, 3, CV_64FC1);

for (int i = 0; i < 3; i++)

for (int j = 0; j < 3; j++)

CV_MAT_ELEM(*RR, double, i, j) = CV_MAT_ELEM(*R, float, i, j);

//定义平移向量。CV_32FC1转换为CV_64FC1

CvMat* TT = cvCreateMat(3, 1, CV_64FC1);

for (int i = 0; i < 3; ++i)

{

CV_MAT_ELEM(*TT, double, i, 0) = CV_MAT_ELEM(*T, float, i, 0);

}

//定义和初始化左右相机的行对准旋转矩阵

CvMat* Rl = cvCreateMat(3, 3, CV_64FC1);

cvZero(Rl);

CvMat* Rr = cvCreateMat(3, 3, CV_64FC1);

cvZero(Rr);

//计算相机共面和行对准的校正项

if (useUncalibrated == false)//useUncalibrated == 0,使用Bouguet方法计算校正项

{

//定义和初始化做右相机投影矩阵,该矩阵可以计算校正后的相机内参数矩阵

CvMat* Pl = cvCreateMat(3, 4, CV_64FC1);

cvZero(Pl);

CvMat* Pr = cvCreateMat(3, 4, CV_64FC1);

cvZero(Pr);

//计算相机校正项,计算得到 Rl, Rr, Pl, Pr

cvStereoRectify(M_left, M_right, D_left, D_right, imageSize,

RR, TT, Rl, Rr, Pl, Pr, 0, 0, -1.0, cvSize(0, 0), (CvRect*)0, (CvRect*)0);

//Show(用于调试)

/*cout << endl << "Pr:" << endl;

for (int i = 0; i < 3; i++)

{

const double *p = (const double*)(Pr->data.ptr + i*Pr->step);

cout << *p << " " << *(p + 1) << " " << *(p + 2)<<" "<<*(p+3) << endl;

}*/

/*通过比较转换矩阵P位置[0][3]和位置[1][3]的大小 判断相机是水平布置还是垂直布置。

因为这两个位置的元素分别表示右相机坐标系原点移动到左相机坐标系原点的x和y方向移动距离。*/

isVerticalStereo = fabs(CV_MAT_ELEM(*Pr, double, 1, 3)) > fabs(CV_MAT_ELEM(*Pr, double, 0, 3));

//Precompute maps for cvRemap()

//下面通过函数cvInitUndistortRectifyMap()计算立体校正的映射矩阵mx和my

cvInitUndistortRectifyMap(_left_intrinsic, D_left, Rl, Pl, mxl, myl);

cvInitUndistortRectifyMap(_right_intrinsic, D_right, Rr, Pr, mxr, myr);

}

else//useUncalibrated! = 0,使用Hartley方法计算校正项

{

int board_n = _board_w*_board_h;//每张图片中角点总数量

CvMat* Hl = cvCreateMat(3, 3, CV_64FC1);//Hartley方法计算得到的校正项

CvMat* Hr = cvCreateMat(3, 3, CV_64FC1);//Hartley方法计算得到的校正项

CvMat *invM = cvCreateMat(3, 3, CV_64FC1);//用于临时存放相机内参数的逆

//整理imagePoints_left和imagePoints_right

float x, y;//用于传值的临时变量

double* p;//用于传值的临时变量

//LeftPoints

CvMat* left = cvCreateMat(1, _n_boards*board_n, CV_64FC2);

p = (double*)cvPtr2D(left, 0, 0);

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*imagePoints_left, float, i, 0);

y = CV_MAT_ELEM(*imagePoints_left, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//Show

/*p = (float*)cvPtr2D(left, 0, 0);

for (int i = 0; i < n_boards*board_n; i++)

{

cout << *p << " " << *(p + 1) << endl;

p += 2;

}*/

//RightPoints

CvMat* right = cvCreateMat(1, _n_boards*board_n, CV_64FC2);

p = (double*)cvPtr2D(right, 0, 0);

for (int i = 0; i < _n_boards*board_n; ++i)

{

x = CV_MAT_ELEM(*imagePoints_right, float, i, 0);

y = CV_MAT_ELEM(*imagePoints_right, float, i, 1);

*p = x;

p++;

*p = y;

p++;

}

//Show

/*p = (float*)cvPtr2D(right, 0, 0);

for (int i = 0; i < n_boards*board_n; i++)

{

cout << *p << " " << *(p + 1) << endl;

p += 2;

}*/

//通过两个相机的图像点计算基础矩阵F

cvFindFundamentalMat(left, right, F);

//使用非标定算法Hartley立体校正,获得做右相机的校正单应性矩阵H1,H2

cvStereoRectifyUncalibrated(left, right, F, imageSize, Hl, Hr, 3);

/*使用cvStereoRectifyUncalibrated()的结果H1,H2来校正立体摄像机,必须先预处理一下单应性矩阵Hl和我Hr,

如果没有先前标定中获得Mrect,令Mrect=M,分别计算左右校正旋转矩阵Rl=inv(Mrect_l)Hl(Ml)和Rr=inv(Mrect_r)Hr(Mr),

最后还需要分别计算左右相机的畸变系数向量*/

//Show(用于调试)

/*cout << endl << "M1:";

for (int i = 0; i < 3; i++)

{

cout << endl;

for (int j = 0; j < 3; j++)

{

cout << CV_MAT_ELEM(*_left_intrinsic, float, i, j) << " ";

}

}*/

//预处理Rl=inv(Mrect_l)Hl(Ml)

cvInvert(M_left, invM);

cvMatMul(Hl, M_left, Rl);

cvMatMul(invM, Rl, Rl);

//预处理Rr=inv(Mrect_r)Hr(Mr)

cvInvert(M_right, invM);//_iM为_M1的逆矩阵

cvMatMul(Hr, M_right, Rr);//_R1=_H1*_M1

cvMatMul(invM, Rr, Rr);//_R2=_iM*_R1

//Precompute map for cvRemap(),用预处理后的_R1和_R2,以及前面计算的相机参数代入cvInitUndistortRectifyMap计算mapx和mapy

cvInitUndistortRectifyMap(M_left, D_left, Rl, M_left, mxl, myl);

cvInitUndistortRectifyMap(M_right, D_right, Rr, M_right, mxr, myr);

}

}5.校正图片和计算视差映射矩阵

以上是关于基于OpenCV立体视觉标定和校正的主要内容,如果未能解决你的问题,请参考以下文章

双目三维重建系统(双目标定+立体校正+双目测距+点云显示)Python