[Python AI] 34. BERT Model: Building a BERT Model with the keras-bert Library for Weibo Sentiment Analysis

Posted Eastmount


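Starting with this column, the author formally studies Python deep learning, neural networks, and artificial intelligence. The previous post opened a new topic, BERT, covering installation and basic usage of the keras-bert library and a text classification task. This post uses the keras-bert library to build a BERT model and applies it to Weibo sentiment analysis. It is an introductory article, and I hope it helps!

The code in this post follows the blog of “山阴少年” and, combined with my own experience, reproduces and explains that code in detail. I hope it is useful, especially to beginners, and I strongly recommend following that author's articles.

The Weibo sentiment predictions look like this: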

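Text: 《长津湖》这部电影真的非常好看,今天看完好开心,爱了爱了。强烈推荐大家,哈哈!!! (The film 《长津湖》 is really great; I was so happy after watching it today, loved it. Strongly recommended, haha!!!)

Predicted label: 喜悦 (joy)

Text: 听到这个消息真心难受,很伤心,怎么这么悲剧。保佑保佑,哭 (Hearing this news really hurts, I am so sad, how can it be this tragic. Bless them, crying)

Predicted label: 哀伤 (sadness)

Text: 愤怒,我真的挺生气的,怒其不争,哀其不幸啊! (Angry, I really am furious; angry that they do not fight back, sad for their misfortune!)

Predicted label: 愤怒 (anger)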

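This column draws on the author's earlier blog posts, AI experience, and related videos and papers, and will cover more Python AI cases and applications as it goes deeper. These are introductory articles that I hope will help; please bear with any errors or shortcomings. As a beginner in AI myself, I hope we can grow together through these posts, written one stroke at a time. After so many years of blogging, this is my first attempt at a paid column, to earn a little milk-powder money for my baby, but most posts, especially the introductory ones, will remain free, and I will write this column with care so that it is worthy of its readers. Message me any time with questions; I only hope you learn something from this series. Let's keep at it together!

- Keras code: https://github.com/eastmountyxz/AI-for-Keras

- TensorFlow code: https://github.com/eastmountyxz/AI-for-TensorFlow

Previous posts in this series: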

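- [Python AI] 1. TensorFlow 2.0 environment setup and an introduction to neural networks
- [Python AI] 2. TensorFlow basics and a straight-line (linear) prediction example
- [Python AI] 3. TensorFlow basics: Session, variables, placeholders, and activation functions
- [Python AI] 4. Building a regression neural network in TensorFlow and the Optimizer
- [Python AI] 5. TensorBoard visualization basics and drawing the whole neural network
- [Python AI] 6. Classification with TensorFlow and the MNIST handwritten digit recognition example
- [Python AI] 7. What overfitting is and using dropout to address overfitting in neural networks
- [Python AI] 8. Convolutional neural networks (CNN) explained and writing a CNN in TensorFlow
- [Python AI] 9. Installing gensim Word2Vec and computing Chinese short-text similarity on 《庆余年》
- [Python AI] 10. Custom CNN image classification with TensorFlow + OpenCV, compared with KNN image classification
- [Python AI] 11. How to save neural network parameters in TensorFlow
- [Python AI] 12. RNN and LSTM explained and writing an RNN classification example in TensorFlow
- [Python AI] 13. Evaluating neural networks, plotting loss curves, and computing the F-score for an image classification example
- [Python AI] 14. LSTM RNN regression example: predicting a sine curve
- [Python AI] 15. Unsupervised learning: Autoencoder principles and a clustering visualization example
- [Python AI] 16. Keras environment setup, basics, and a regression neural network example
- [Python AI] 17. Building a classification neural network in Keras and the MNIST digit image example
- [Python AI] 18. Building a convolutional neural network in Keras and CNN principles explained
- [Python AI] 19. Building an RNN classification example in Keras and RNN principles explained
- [Python AI] 20. Keras + RNN text classification vs. traditional machine learning text classification
- [Python AI] 21. Word2Vec + CNN Chinese text classification explained, compared with machine learning classifiers (RF\DTC\SVM\KNN\NB\LR)
- [Python AI] 22. Sentiment analysis and emotion computation based on the Dalian University of Technology sentiment lexicon
- [Python AI] 23. Sentiment classification with machine learning and TF-IDF (with detailed NLP data cleaning)
- [Python AI] 24. Setting up Keras on the 易学智能 GPU platform for LSTM-based malicious URL request classification
- [Python AI] 26. BiLSTM-CRF medical named entity recognition (part 1): data preprocessing
- [Python AI] 27. BiLSTM-CRF medical named entity recognition (part 2): model construction
- [Python AI] 28. A long-form summary of Chinese text classification with Keras deep learning (CNN, TextCNN, LSTM, BiLSTM, BiLSTM+Attention)
- [Python AI] 29. What is a generative adversarial network (GAN)? Basic principles and code (1)
- [Python AI] 30. Building a CNN in Keras to recognize images of handwritten Arabic characters
- [Python AI] 31. BiLSTM Weibo sentiment classification in Keras and LDA topic mining
- [Python AI] 32. BERT model (1): keras-bert basic usage and pretrained models
- [Python AI] 33. BERT model (2): building a BERT model with keras-bert for text classification
- [Python AI] 34. BERT model (3): building a BERT model with keras-bert for Weibo sentiment analysis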

I. Introducing the BERT Model

The theory behind BERT will be covered in a later post, mainly through the Google paper and the model's strengths.

- BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding

- https://arxiv.org/pdf/1810.04805.pdf

- https://github.com/google-research/bert

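BERT (Bidirectional Encoder Representations from Transformers) is a pretrained language representation model proposed by the Google AI team in 2018. It achieved striking results on SQuAD 1.1, a leading machine reading comprehension benchmark, and set the best results on 11 different NLP tasks, including pushing the GLUE benchmark to 80.4% (a 7.6% absolute improvement) and MultiNLI accuracy to 86.7% (a 5.6% absolute improvement). BERT can be expected to bring a milestone change to NLP, and it is among the field's most important recent advances.

Rather than pretraining with a traditional unidirectional language model, or with a shallow concatenation of two unidirectional language models, BERT uses a masked language model (MLM) objective so that it can learn deep bidirectional language representations. Later posts cover the architecture in detail; this is only an introduction, and readers are encouraged to read the original paper.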
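To make the masked language model idea a little more concrete, here is a minimal sketch of the masking step described in the BERT paper: roughly 15% of positions are selected for prediction, and of those, 80% are replaced by `[MASK]`, 10% by a random token, and 10% are left unchanged. The function name `mask_tokens`, the toy vocabulary `VOCAB`, and the per-token coin flip (instead of sampling an exact 15% of positions) are assumptions made for illustration; this is not the code used by keras-bert or by Google's implementation.

```python
import random

MASK = "[MASK]"
VOCAB = ["我", "你", "好", "电", "影", "看"]  # toy vocabulary (assumption)

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Return (masked_tokens, labels) using the 80/10/10 masking rule.

    labels[i] holds the original token at selected positions, None elsewhere.
    """
    rng = random.Random(seed)
    masked, labels = list(tokens), [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected for prediction
        labels[i] = tok
        r = rng.random()
        if r < 0.8:
            masked[i] = MASK               # 80%: replace with [MASK]
        elif r < 0.9:
            masked[i] = rng.choice(VOCAB)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return masked, labels

tokens = list("这部电影真的非常好看")
masked, labels = mask_tokens(tokens, mask_prob=0.5, seed=1)
print(masked)
print(labels)
```

During pretraining the model sees `masked` and is trained to recover the non-`None` entries of `labels`, which forces it to use context on both sides of each position.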

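II. Dataset

Dataset description:

| Item | Description |
|---|---|
| Overview | More than 360,000 sentiment-labeled Sina Weibo posts covering 4 emotions: about 200,000 joy posts and about 50,000 each for anger, disgust, and low mood |
| Suggested experiments | Sentiment / opinion / review polarity analysis |
| Source | Sina Weibo |
| Original dataset | Weibo sentiment analysis dataset collected from the web; specific author and origin unknown |
| Statistics | 361,744 posts in total: joy 199,496; anger 51,714; disgust 55,267; low mood 55,267 |
| Class labels | 0: 喜悦 (joy), 1: 愤怒 (anger), 2: 厌恶 (disgust), 3: 低落 (low mood) |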

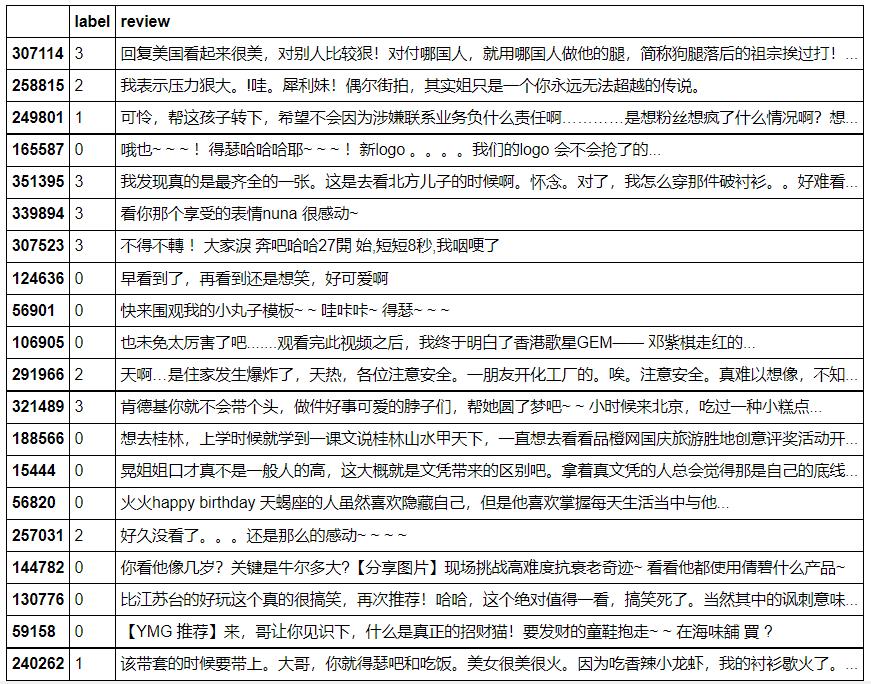

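Data sample:

Note: only while running the experiments did the author discover that the “厌恶-55267” (disgust) and “低落-55267” (low mood) subsets contain exactly the same data, so the task is treated here as a three-class problem. The approach matters more than the particular data.

Download:

- https://github.com/eastmountyxz/Datasets-Text-Mining

Reference: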

- https://github.com/SophonPlus/ChineseNlpCorpus/blob/master/datasets/simplifyweibo_4_moods/intro.ipynb
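The duplicated-class problem noted above is the kind of thing a quick sanity check can catch before training. The sketch below counts posts per label and reports label pairs whose texts are exactly the same multiset. The helper names and the toy `rows` list are hypothetical; with the real dataset the `(label, text)` pairs would come from the CSV file.

```python
from collections import Counter

def class_counts(rows):
    """Count posts per label; rows is an iterable of (label, text) pairs."""
    return Counter(label for label, _ in rows)

def classes_with_identical_texts(rows):
    """Return pairs of labels whose multisets of texts are exactly equal."""
    texts_by_label = {}
    for label, text in rows:
        texts_by_label.setdefault(label, Counter())[text] += 1
    labels = sorted(texts_by_label)
    return [(a, b)
            for i, a in enumerate(labels)
            for b in labels[i + 1:]
            if texts_by_label[a] == texts_by_label[b]]

# Toy rows standing in for the real CSV (hypothetical data).
rows = [(0, "今天好开心"), (1, "气死我了"), (2, "真难受"), (3, "真难受")]
print(class_counts(rows))
print(classes_with_identical_texts(rows))
```

On the toy rows the second call reports the pair `(2, 3)`, mirroring the situation where two label ids share identical posts.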

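III. Weibo Sentiment Analysis with Machine Learning

First, the machine learning version of Weibo sentiment analysis. The steps are:

- Read the data

- Preprocess the data (Chinese word segmentation)

- Compute TF-IDF

- Build the classification model

- Predict and evaluate

The complete code is as follows: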

# -*- coding: utf-8 -*-
"""
Created on Mon Sep 27 22:21:53 2021
@author: xiuzhang
"""
import jieba
import pandas as pd
import numpy as np
from collections import Counter
from scipy.sparse import coo_matrix
from sklearn import feature_extraction
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.feature_extraction.text import TfidfTransformer
from sklearn.model_selection import train_test_split
from sklearn.metrics import classification_report
from sklearn.linear_model import LogisticRegression
from sklearn.ensemble import RandomForestClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn import svm
from sklearn import neighbors
from sklearn.naive_bayes import MultinomialNB
from sklearn.ensemble import AdaBoostClassifier

#-----------------------------------------------------------------------------
# Read the data
train_path = 'data/weibo_3_moods_train.csv'
test_path = 'data/weibo_3_moods_test.csv'
types = {0: '喜悦', 1: '愤怒', 2: '哀伤'}
label_map = {'喜悦': 0, '愤怒': 1, '哀伤': 2}   # label name -> integer id
pd_train = pd.read_csv(train_path)
pd_test = pd.read_csv(test_path)
print('训练集数目(总体):%d' % pd_train.shape[0])
print('测试集数目(总体):%d' % pd_test.shape[0])

# Chinese word segmentation
stopwords = ["[", "]", ")", "(", ")", "(", "【", "】", "!", ",", "$",
             "·", "?", ".", "、", "-", "—", ":", ":", "《", "》", "=",
             "。", "…", "“", "?", "”", "~", " ", "-", "+", "\\", "‘",
             "~", ";", "’", "...", "..", "&", "#", "....", ",",
             "0", "1", "2", "3", "4", "5", "6", "7", "8", "9", "10",
             "的", "和", "之", "了", "哦", "那", "一个", ]

def cut_dataset(df):
    """Segment every post with jieba and map its label name to an integer."""
    words, labels = [], []
    for dict_label, dict_content in zip(df['label'], df['content']):
        data = str(dict_content).strip("\n")   # float (NaN) => str
        data = data.replace(",", "")           # drop commas, otherwise the text splits into extra columns
        seg_list = jieba.cut(data, cut_all=False)
        cut_words = " ".join(seg for seg in seg_list if seg not in stopwords)
        words.append(cut_words)
        labels.append(label_map.get(dict_label, -1))   # unknown labels become -1
    return words, labels

train_words, train_labels = cut_dataset(pd_train)
test_words, test_labels = cut_dataset(pd_test)
print(len(train_labels), len(train_words))   # 209043 209043
print(len(test_labels), len(test_words))     # 97366 97366
print(test_labels[:5])                       # first five integer labels

#-----------------------------------------------------------------------------
# TF-IDF computation
# Convert the texts to a term-count matrix: a[i][j] is the count of word j in text i
vectorizer = CountVectorizer(min_df=100)   # min_df limits the vocabulary to avoid MemoryError
# TfidfTransformer computes the tf-idf weight of every word
transformer = TfidfTransformer()
# The inner fit_transform builds the count matrix; the outer one computes tf-idf
tfidf = transformer.fit_transform(vectorizer.fit_transform(train_words + test_words))
for n in tfidf[:5]:
    print(n)
print(type(tfidf))

# All words in the bag-of-words vocabulary
# (get_feature_names_out needs scikit-learn >= 1.0; older versions use get_feature_names)
word = vectorizer.get_feature_names_out()
for n in word[:10]:
    print(n)
print("单词数量:", len(word))

# Extract the tf-idf matrix: w[i][j] is the tf-idf weight of word j in text i
X = coo_matrix(tfidf, dtype=np.float32).toarray()   # sparse matrix => dense array
print(X.shape)
print(X[:10])

X_train = X[:len(train_labels)]
X_test = X[len(train_labels):]
y_train = train_labels
y_test = test_labels
print(len(X_train), len(X_test), len(y_train), len(y_test))

#-----------------------------------------------------------------------------
# Classification model
clf = MultinomialNB()
#clf = svm.LinearSVC()
#clf = LogisticRegression(solver='liblinear')
#clf = RandomForestClassifier(n_estimators=10)
#clf = neighbors.KNeighborsClassifier(n_neighbors=7)
#clf = AdaBoostClassifier()
clf.fit(X_train, y_train)
print('模型的准确度:{}'.format(clf.score(X_test, y_test)))
pre = clf.predict(X_test)
print("分类")
print(len(pre), len(y_test))
print(classification_report(y_test, pre, digits=4))
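To show what the TF-IDF step in the script above computes, here is a small pure-Python sketch on three toy documents. It follows what I understand to be the default settings of scikit-learn's `TfidfTransformer` (raw counts as tf, smoothed idf `ln((1 + n) / (1 + df)) + 1`, then L2 normalization of each row). The function name `tfidf` and the toy documents are assumptions for illustration. One difference to keep in mind: this sketch splits on whitespace and keeps every token, whereas `CountVectorizer` with its default token pattern drops single-character tokens.

```python
import math
from collections import Counter

def tfidf(docs):
    """TF-IDF for whitespace-tokenized docs: smoothed idf, L2-normalized rows."""
    tokenized = [doc.split() for doc in docs]
    vocab = sorted({w for toks in tokenized for w in toks})
    n = len(docs)
    # Document frequency: number of documents containing each word.
    df = Counter(w for toks in tokenized for w in set(toks))
    idf = {w: math.log((1 + n) / (1 + df[w])) + 1 for w in vocab}
    rows = []
    for toks in tokenized:
        tf = Counter(toks)
        row = [tf[w] * idf[w] for w in vocab]
        norm = math.sqrt(sum(v * v for v in row)) or 1.0
        rows.append([v / norm for v in row])
    return vocab, rows

# Toy, already-segmented documents (hypothetical data).
docs = ["电影 好看 开心", "消息 难受 伤心", "电影 生气"]
vocab, X = tfidf(docs)
for word, weight in zip(vocab, X[0]):
    print(word, round(weight, 4))
```

In the first document, “电影” appears in two of the three documents and so receives a lower weight than “好看”, which appears in only one. That down-weighting of common words is the reason for using TF-IDF rather than raw counts.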

The output is as follows:

训练集数目(总体):209043
测试集数目(总体):97366
Building prefix dict from the default dictionary ...
Dumping model to file cache C:\Users\xdtech\AppData\Local\Temp\jieba.cache
Loading model cost 0.885 seconds.
Prefix dict has been built succesfully.
<class 'scipy.sparse.csr.csr_matrix'>
单词数量: 6997
(306409, 6997)
[[0. 0. 0. ... 0. 0. 0.]
 [0. 0. 0. ... 0. 0. 0.]
 [0. 0. 0. ... 0. 0. 0.]
 ...
 [0. 0. 0. ... 0. 0. 0.]
 [0. 0. 0. ... 0. 0. 0.]
 [0. 0. 0. ... 0. 0. 0.]]
209043 97366 209043 97366
模型的准确度:0.6670398290984533
分类
97366 97366
             precision    recall  f1-score   support

          0     0.6666    0.9833    0.7945     61453
          1     0.6365    0.1184    0.1997     17461
          2     0.7071    0.1330    0.2240     18452

avg / total     0.6689    0.6670    0.5797     97366
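The `avg / total` row in this report is a support-weighted average, so the large joy class (label 0) dominates it. As a short worked example, the sketch below recomputes the weighted F1 from the per-class rows and compares it with the unweighted (macro) average. The numbers are copied from the report above; the variable names are my own.

```python
# Per-class (f1, support) copied from the classification report.
report = {0: (0.7945, 61453), 1: (0.1997, 17461), 2: (0.2240, 18452)}

total = sum(support for _, support in report.values())
weighted_f1 = sum(f1 * support for f1, support in report.values()) / total
macro_f1 = sum(f1 for f1, _ in report.values()) / len(report)

print(f"support total = {total}")
print(f"weighted F1   = {weighted_f1:.4f}")
print(f"macro F1      = {macro_f1:.4f}")
```

The weighted value comes out at about 0.5797, matching the report, while the macro average is only about 0.4061. The gap reflects the low recall on the anger and sadness classes (0.1184 and 0.1330), which suggests the Naive Bayes baseline predicts joy for most posts.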

IV. Weibo Sentiment Analysis with the BERT Model

1. Model training

blog34_kerasbert_train.py

The code is as follows:

# -*- coding: utf-8 -*-
"""
Created on Wed Nov 24 00:09:48 2021
@author: xiuzhang
"""
import os
import json
import codecs
import numpy as np
import pandas as pd
import tensorflow as tf
from keras_bert import load_trained_model_from_checkpoint, Tokenizer
from keras.layers import *
from keras.models import Model
from keras.optimizers import Adam

os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
os.environ["CUDA_VISIBLE_DEVICES"] = "0"

# Cap the GPU memory each process may use: 0.9 allows up to 90% of GPU memory for training
# (tf.GPUOptions, tf.ConfigProto and tf.Session are TensorFlow 1.x APIs)
gpu_options = tf.GPUOptions(per_process_gpu_memory_fraction=0.9)
sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options))

maxlen = 300
BATCH_SIZE = 8
config_path = 'chinese_L-12_H-768_A-12/bert_config.json'
checkpoint_path = 'chinese_L-12_H-768_A-12/bert_model.ckpt'
dict_path = 'chinese_L-12_H-768_A-12/vocab.txt'

# Read the vocab file into a token -> id dictionary
token_dict = {}
with codecs.open(dict_path, 'r', 'utf-8') as reader:
    for line in reader:
        token = line.strip()
        token_dict[token] = len(token_dict)

#------------------------------ class and function definitions ------------------------------
# Character-level tokenizer: characters not in the vocabulary become [UNK]
class OurTokenizer(Tokenizer):
    def _tokenize(self, text):
        R = []
        for c in text:
            if c in self._token_dict:
                R.append(c)
            else:
                R.append('[UNK]')   # every remaining character is [UNK]
        return R

tokenizer = OurTokenizer(token_dict)

# Pad every sequence in a batch to the length of the longest one
def seq_padding(X, padding=0):
    L = [len(x) for x in X]
    ML = max(L)
    return np.array([
        np.concatenate([x, [padding] * (ML - len(x))]) if len(x) < ML else x for x in X
    ])

class DataGenerator:
    def __init__(self, data, batch_size=BATCH_SIZE):
        self.data = data
        self.batch_size = batch_size
        self.steps = len(self.data) // self.batch_size
        if len(self.data) % self.batch_size != 0:
            self.steps += 1

    def __len__(self):
        return self.steps

    def __iter__(self):
        while True:
            idxs = list(range(len(self.data)))
            np.random.shuffle(idxs)   # new random order every epoch
            X1, X2, Y = [], [], []
            for i in idxs:
                d = self.data[i]
                text = d[0][:maxlen]                    # truncate long posts
                x1, x2 = tokenizer.encode(first=text)   # token ids, segment ids
                y = d[1]
                X1.append(x1)
                X2.append(x2)
                Y.append(y)
                # Emit a batch when it is full or the data is exhausted
                if len(X1) == self.batch_size or i == idxs[-1]:
                    X1 = seq_padding(X1)
                    X2 = seq_padding(X2)
                    Y = seq_padding(Y)
                    yield [X1, X2], Y
                    X1, X2, Y = [], [], []

# Build the model
def create_cls_model(num_labels
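The tokenizer and padding steps in the training script can be illustrated without keras-bert or a downloaded checkpoint. The sketch below is a pure-Python stand-in under stated assumptions: `encode` mirrors my understanding of what `tokenizer.encode(first=text)` returns for a single sentence (token ids wrapped in `[CLS]` and `[SEP]`, plus all-zero segment ids), and `pad_batch` plays the role of `seq_padding`. The function names and the tiny `toy_vocab` are hypothetical; the real vocabulary is read from `vocab.txt`.

```python
def encode(text, token_dict, maxlen=300):
    """Character-level encode: [CLS] + chars + [SEP]; unknown chars -> [UNK]."""
    chars = [c if c in token_dict else "[UNK]" for c in text[:maxlen]]
    tokens = ["[CLS]"] + chars + ["[SEP]"]
    ids = [token_dict[t] for t in tokens]
    segments = [0] * len(ids)   # single sentence, so every segment id is 0
    return ids, segments

def pad_batch(seqs, padding=0):
    """Right-pad each sequence to the longest length in the batch."""
    longest = max(len(s) for s in seqs)
    return [s + [padding] * (longest - len(s)) for s in seqs]

# Toy vocabulary (hypothetical); ids follow list order, so [PAD] is 0.
toy_vocab = {t: i for i, t in enumerate(
    ["[PAD]", "[UNK]", "[CLS]", "[SEP]", "开", "心", "难", "受"])}

encoded = [encode(t, toy_vocab) for t in ["开心", "好难受"]]
ids = pad_batch([e[0] for e in encoded])
segments = pad_batch([e[1] for e in encoded])
print(ids)
print(segments)
```

Here “好” is not in the toy vocabulary, so it maps to the `[UNK]` id, and the shorter post is padded with zeros so that both rows have the same length. Padding to the longest sequence in each batch, rather than to a fixed `maxlen`, keeps batches of short posts small.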
