Big Data Data Warehouse Environment Setup


1. Hive Installation and Deployment

1) Upload apache-hive-3.1.2-bin.tar.gz to the /opt/software directory on the Linux host

2) Extract apache-hive-3.1.2-bin.tar.gz into the /opt/module/ directory

[maxwell@hadoop102 software]$ tar -zxvf /opt/software/apache-hive-3.1.2-bin.tar.gz -C /opt/module/

3) Rename the extracted apache-hive-3.1.2-bin directory to hive

[maxwell@hadoop102 software]$ cd /opt/module/

[maxwell@hadoop102 module]$ ls -ltr

total 0

drwxr-xr-x. 7 maxwell maxwell 245 Apr 2 2019 jdk1.8.0_212

drwxrwxr-x. 3 maxwell maxwell 18 Mar 22 23:20 hadoop-3.1.3

drwxr-xr-x. 13 maxwell maxwell 204 Mar 23 11:02 hadoop

drwxrwxr-x. 8 maxwell maxwell 160 Mar 23 14:50 zookeeper

drwxr-xr-x. 9 maxwell maxwell 130 Mar 23 15:23 kafka

drwxrwxr-x. 9 maxwell maxwell 239 Mar 25 13:30 flume

drwxrwxr-x. 2 maxwell maxwell 76 Mar 25 21:58 db_log

drwxrwxr-x. 3 maxwell maxwell 117 Mar 27 17:14 applog

drwxr-xr-x. 11 maxwell maxwell 117 Mar 28 12:56 datax

drwxrwxr-x. 5 maxwell maxwell 237 Mar 29 09:25 maxwell

drwxrwxr-x. 9 maxwell maxwell 153 Mar 29 10:05 apache-hive-3.1.2-bin

[maxwell@hadoop102 module]$ mv /opt/module/apache-hive-3.1.2-bin/ /opt/module/hive

[maxwell@hadoop102 module]$ ls -ltr

total 0

drwxr-xr-x. 7 maxwell maxwell 245 Apr 2 2019 jdk1.8.0_212

drwxrwxr-x. 3 maxwell maxwell 18 Mar 22 23:20 hadoop-3.1.3

drwxr-xr-x. 13 maxwell maxwell 204 Mar 23 11:02 hadoop

drwxrwxr-x. 8 maxwell maxwell 160 Mar 23 14:50 zookeeper

drwxr-xr-x. 9 maxwell maxwell 130 Mar 23 15:23 kafka

drwxrwxr-x. 9 maxwell maxwell 239 Mar 25 13:30 flume

drwxrwxr-x. 2 maxwell maxwell 76 Mar 25 21:58 db_log

drwxrwxr-x. 3 maxwell maxwell 117 Mar 27 17:14 applog

drwxr-xr-x. 11 maxwell maxwell 117 Mar 28 12:56 datax

drwxrwxr-x. 5 maxwell maxwell 237 Mar 29 09:25 maxwell

drwxrwxr-x. 9 maxwell maxwell 153 Mar 29 10:05 hive

4) Edit /etc/profile.d/my_env.sh and add the environment variables

[maxwell@hadoop102 ~]$ cd /opt/software/

[maxwell@hadoop102 software]$

[maxwell@hadoop102 software]$ sudo vim /etc/profile.d/my_env.sh

Add the following:

#HIVE_HOME

export HIVE_HOME=/opt/module/hive

export PATH=$PATH:$HIVE_HOME/bin

Restart the Xshell session, or source /etc/profile.d/my_env.sh, so that the environment variables take effect

[maxwell@hadoop102 software]$

[maxwell@hadoop102 software]$ source /etc/profile.d/my_env.sh

[maxwell@hadoop102 software]$
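A quick way to confirm that the new variables took effect is to check whether $HIVE_HOME/bin is really an entry on PATH. The sketch below is only an illustration: the helper name path_contains is hypothetical, and the fallback /opt/module/hive is the install path used in this walkthrough.

```bash
#!/usr/bin/env bash
# Return 0 if directory $1 appears as a whole entry in the
# colon-separated list $2 (hypothetical helper, not part of Hive).
path_contains() {
  case ":$2:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Fall back to this walkthrough's install path if HIVE_HOME is unset.
HIVE_HOME="${HIVE_HOME:-/opt/module/hive}"

if path_contains "$HIVE_HOME/bin" "$PATH"; then
  echo "OK: $HIVE_HOME/bin is on PATH"
else
  echo "MISSING: $HIVE_HOME/bin is not on PATH; source /etc/profile.d/my_env.sh again"
fi
```

Wrapping both strings in colons makes the match apply to whole PATH entries only, so /opt/module/hive alone does not count as a match for /opt/module/hive/bin.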

5) Resolve the logging JAR conflict: go to the /opt/module/hive/lib directory and rename the conflicting JAR

[maxwell@hadoop102 lib]$ ls -ltr log4j-slf4j-impl-2.10.0.jar

-rw-rw-r--. 1 maxwell maxwell 24173 Apr 15 2020 log4j-slf4j-impl-2.10.0.jar

[maxwell@hadoop102 lib]$ pwd

/opt/module/hive/lib

[maxwell@hadoop102 lib]$ mv log4j-slf4j-impl-2.10.0.jar log4j-slf4j-impl-2.10.0.jar.bak

[maxwell@hadoop102 lib]$ ls -ltr log4j-slf4j-impl-2.10.0.jar.bak

-rw-rw-r--. 1 maxwell maxwell 24173 Apr 15 2020 log4j-slf4j-impl-2.10.0.jar.bak
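The conflict typically arises because Hive and Hadoop each ship their own SLF4J binding JAR. As a rough check, the sketch below lists candidate binding JARs in a set of directories. The function name list_slf4j_bindings is hypothetical, the two file-name patterns are assumptions based on the usual JAR names, and the Hadoop library path assumes the standard layout under /opt/module/hadoop.

```bash
#!/usr/bin/env bash
# List JARs that look like SLF4J-to-log4j bindings in the given directories.
# A renamed *.jar.bak file is not matched, so it no longer shows up here.
list_slf4j_bindings() {
  for dir in "$@"; do
    [ -d "$dir" ] || continue
    find "$dir" -maxdepth 1 -type f \
      \( -name 'log4j-slf4j-impl-*.jar' -o -name 'slf4j-log4j12-*.jar' \) | sort
  done
}

# Directories are assumptions taken from this walkthrough's layout.
list_slf4j_bindings /opt/module/hive/lib /opt/module/hadoop/share/hadoop/common/lib
```

If the rename above worked, the Hive lib directory should contribute no lines to the output.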

2. Configuring the Hive Metastore in MySQL

2.1 Copy the driver

Copy the MySQL JDBC driver into Hive's lib directory

[maxwell@hadoop102 lib]$ cp /opt/software/mysql-connector-java-5.1.27-bin.jar /opt/module/hive/lib/

2.2 Configure the Metastore to use MySQL

Create a new hive-site.xml file in the $HIVE_HOME/conf directory with the following content

[maxwell@hadoop102 conf]$ vim hive-site.xml

[maxwell@hadoop102 conf]$ cat hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop102:3306/metastore?useSSL=false&amp;useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>centos123</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<property>

<name>hive.server2.thrift.port</name>

<value>10000</value>

</property>

<property>

<name>hive.server2.thrift.bind.host</name>

<value>hadoop102</value>

</property>

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

<property>

<name>hive.cli.print.header</name>

<value>true</value>

</property>

<property>

<name>hive.cli.print.current.db</name>

<value>true</value>

</property>

</configuration>

[maxwell@hadoop102 conf]$ pwd

/opt/module/hive/conf
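Because hive-site.xml is XML, a literal & inside a value such as the JDBC URL has to be written as &amp;, otherwise the file is not well-formed. The sketch below is one simple way to spot a bare ampersand using only sed and grep. The function name find_bare_ampersands is hypothetical, and the check is a line-based heuristic rather than a full XML parser.

```bash
#!/usr/bin/env bash
# Print (with line numbers) every line of file $1 that still contains '&'
# after all well-formed entity references have been stripped out.
# Exit status is 0 if such a line exists, 1 if none is found.
find_bare_ampersands() {
  sed -E 's/&(amp|lt|gt|quot|apos|#[0-9]+|#x[0-9A-Fa-f]+);//g' "$1" | grep -n '&'
}

# The path is an assumption based on this walkthrough's install location.
conf="${HIVE_HOME:-/opt/module/hive}/conf/hive-site.xml"
if [ -f "$conf" ]; then
  if find_bare_ampersands "$conf"; then
    echo "Rewrite each '&' on the lines above as '&amp;'"
  else
    echo "No bare ampersands found in $conf"
  fi
fi
```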

3. Starting Hive

3.1 Initialize the metastore database

1) Log in to MySQL

[maxwell@hadoop102 conf]$ mysql -uroot -pcentos123

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 342

Server version: 5.7.16-log MySQL Community Server (GPL)

Copyright (c) 2000, 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

2) Create the Hive metastore database

mysql>

mysql> create database metastore;

Query OK, 1 row affected (0.00 sec)

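3) Initialize the Hive metastore schema (run from the shell, after leaving the MySQL client)

[maxwell@hadoop102 conf]$ schematool -initSchema -dbType mysql -verbose

4) Change the metastore character set

The Hive metastore database uses the Latin1 character set by default. Latin1 cannot store Chinese characters, so any Chinese comments in a CREATE TABLE statement show up garbled. To fix this, make the following changes.

Change the character set of the metastore columns that store comments to UTF-8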

[maxwell@hadoop102 conf]$ mysql -uroot -pcentos123

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 348

Server version: 5.7.16-log MySQL Community Server (GPL)

Copyright (c) 2000, 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> use metastore

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Database changed

mysql>

(1) Column comments

mysql> alter table COLUMNS_V2 modify column COMMENT varchar(256) character set utf8;

Query OK, 0 rows affected (0.04 sec)

Records: 0 Duplicates: 0 Warnings: 0

(2) Table comments

mysql> alter table TABLE_PARAMS modify column PARAM_VALUE mediumtext character set utf8;

Query OK, 0 rows affected (0.06 sec)

Records: 0 Duplicates: 0 Warnings: 0

(3) Exit MySQL

mysql> quit;

Bye

3.2 Start the Hive client

[maxwell@hadoop102 hive]$ ls -ltr

total 52

-rwxr-xr-x. 1 maxwell maxwell 230 Aug 23 2019 NOTICE

-rwxr-xr-x. 1 maxwell maxwell 20798 Aug 23 2019 LICENSE

-rwxr-xr-x. 1 maxwell maxwell 2469 Aug 23 2019 RELEASE_NOTES.txt

drwxrwxr-x. 4 maxwell maxwell 34 Mar 29 10:05 examples

drwxrwxr-x. 3 maxwell maxwell 157 Mar 29 10:05 bin

drwxrwxr-x. 4 maxwell maxwell 35 Mar 29 10:05 scripts

drwxrwxr-x. 7 maxwell maxwell 68 Mar 29 10:05 hcatalog

drwxrwxr-x. 2 maxwell maxwell 44 Mar 29 10:05 jdbc

drwxrwxr-x. 4 maxwell maxwell 12288 Mar 29 10:15 lib

drwxrwxr-x. 2 maxwell maxwell 4096 Mar 29 10:18 conf

[maxwell@hadoop102 hive]$ pwd

/opt/module/hive

[maxwell@hadoop102 hive]$ bin/hive

which: no hbase in (/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/opt/module/jdk1.8.0_212/bin:/opt/module/hadoop/bin:/opt/module/hadoop/sbin:/opt/module/kafka/bin:/home/maxwell/.local/bin:/home/maxwell/bin:/opt/module/jdk1.8.0_212/bin:/opt/module/hadoop/bin:/opt/module/hadoop/sbin:/opt/module/kafka/bin:/opt/module/hive/bin)

Hive Session ID = 22be5461-43f7-4ce9-87dc-0adb2a4dcd41

Logging initialized using configuration in jar:file:/opt/module/hive/lib/hive-common-3.1.2.jar!/hive-log4j2.properties Async: true

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Hive Session ID = a52aa9bb-2b0f-4c22-9760-107a1fd26b7a

hive (default)> show databases;

OK

database_name

default

Time taken: 0.92 seconds, Fetched: 1 row(s)

Big Data Technology Architecture (Components): Hive Environment Setup 1

1.0.1 Environment Setup

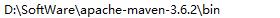

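1.0.1.0 Installing Maven

1.0.1.0.1 Download the package

1.0.1.0.2 Configure environment variables

1.0.1.0.3 Adjust the Maven repository

Open $MAVEN_HOME/conf/settings.xml and set the local repository path and the mirror URL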

<settings xmlns="http://maven.apache.org/SETTINGS/1.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/SETTINGS/1.0.0

https://maven.apache.org/xsd/settings-1.0.0.xsd">

<localRepository>your own path</localRepository>

<mirrors>

<mirror>

<id>alimaven</id>

<name>aliyun maven</name>

<url>http://maven.aliyun.com/nexus/content/groups/public/</url>

<mirrorOf>central</mirrorOf>

</mirror>

</mirrors>

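</settings>

1.0.1.1 Installing Cygwin

Cygwin is installed mainly to provide the base build environment needed to compile the source code on Windows

1.0.1.1.1 Download the package

1.0.1.1.2 Install the build packages

Install Cygwin together with the gcc-related build packages: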

binutils

gcc

gcc-mingw

gdb

1.0.1.2 Download the source package

https://downloads.apache.org/hive/hive-2.3.9/

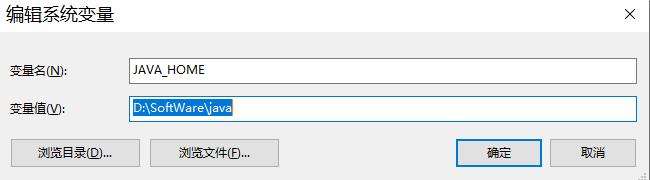

1.0.1.3 Installing the JDK

1.0.1.3.1 Download the package

1.0.1.3.2 Configure environment variables

1.0.1.3.2.1 Create the JAVA_HOME system variable

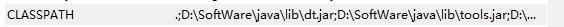

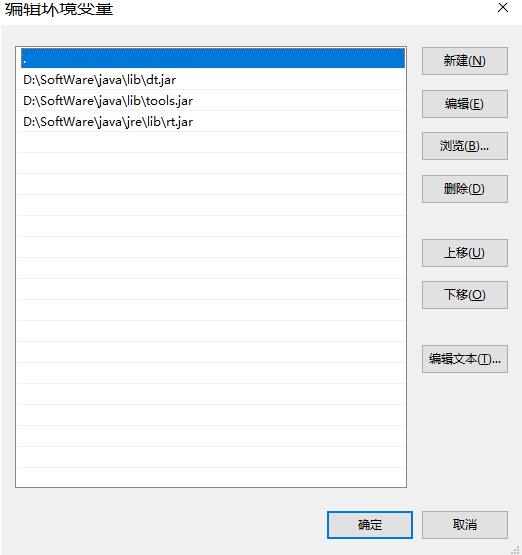

1.0.1.3.2.2 Create the CLASSPATH system variable

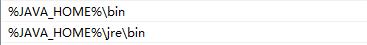

1.0.1.3.2.3 Append to the Path variable

1.0.1.4 Installing Hadoop

1.0.1.4.1 Download the package

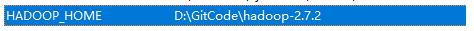

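1.0.1.4.2 Configure environment variables

Create a HADOOP_HOME system variable, then append it to the global Path variable

1.0.1.4.3 Edit the configuration files

Note: the downloaded package may not contain the files listed below, but it does provide template files that can simply be renamed. Because this walkthrough is for personal study, only the basic core settings need to be configured. The files below are located in the $HADOOP_HOME/etc/hadoop directory.

1.0.1.4.3.1 Edit core-site.xml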

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

1.0.1.4.3.2 Edit yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

</configuration>

1.0.1.4.3.3 Edit mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

1.0.1.4.4 Initialization and startup
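Before initializing, it can help to read back the values just written to the configuration files, for example from the Cygwin shell. The sketch below is a minimal reader for Hadoop-style property files. The function name get_property is hypothetical, it assumes each <name> and <value> element sits on its own line as in the listings above, and the fallback directory /opt/module/hadoop is only an assumption.

```bash
#!/usr/bin/env bash
# Print the <value> that follows <name>$2</name> in config file $1.
# Line-based sketch: assumes one <name> or <value> element per line.
get_property() {
  awk -v key="$2" '
    index($0, "<name>" key "</name>") { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }
  ' "$1"
}

# Report a few of the keys set in this walkthrough, if the files exist.
conf_dir="${HADOOP_HOME:-/opt/module/hadoop}/etc/hadoop"
for pair in core-site.xml:fs.defaultFS mapred-site.xml:mapreduce.framework.name; do
  file="$conf_dir/${pair%%:*}"
  key="${pair#*:}"
  if [ -f "$file" ]; then
    echo "$key = $(get_property "$file" "$key")"
  fi
done
```

Matching the key with index() rather than a regular expression keeps the dots in property names from being treated as wildcards.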

# First format the NameNode: open cmd and run the command below

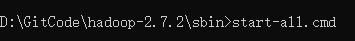

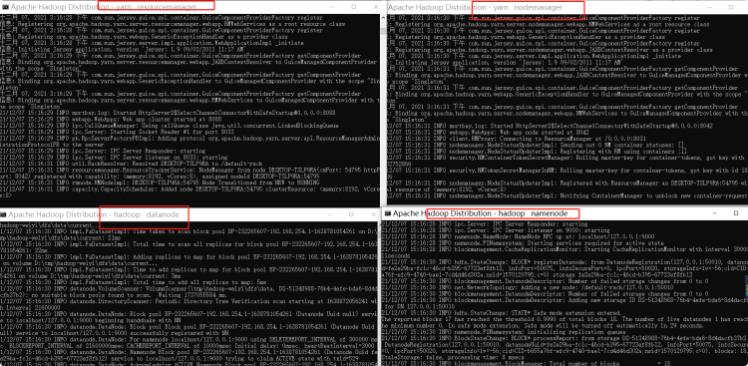

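$HADOOP_HOME/bin> hadoop namenode -format

# Once formatting has finished, start Hadoop

$HADOOP_HOME/sbin> start-all.cmd

Once all of the Hadoop daemon processes have started successfully, the basic Hadoop environment is up and running!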
