TensorFlow2: A Step-by-Step Guide to Training on the MNIST Dataset, Part 1

Posted by 我是小白呀


Preface: This article was compiled by the editors of 小常识网 (cha138.com). It covers training on the MNIST dataset with TensorFlow2 (part 1), and we hope you find it useful as a reference.


Overview

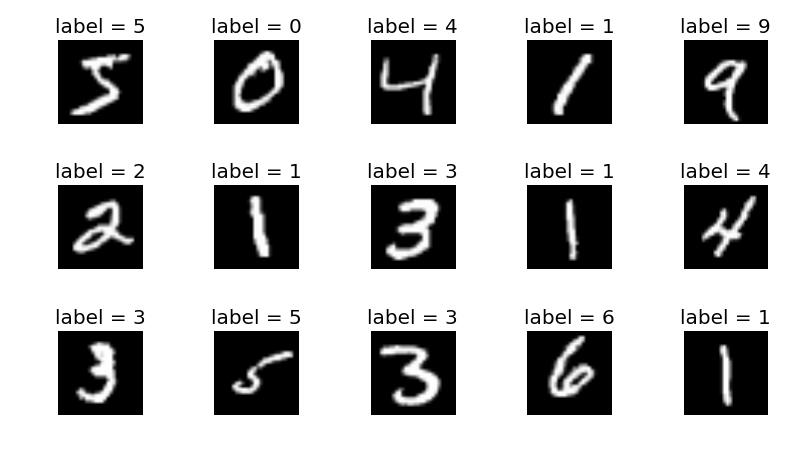

MNIST contains handwritten digits from 0 to 9: 60,000 training images and 10,000 test images. Each image is a single-channel 28×28 grayscale image.

get_data function

def get_data():
    """
    Load the data
    :return: the batched training set and test set
    """
    # Load the data
    (X_train, y_train), (X_test, y_test) = tf.keras.datasets.mnist.load_data()

    # Debug output
    print("X_train:", X_train.shape, "y_train:", y_train.shape)
    print("X_test:", X_test.shape, "y_test:", y_test.shape)

    # Build the training set: shuffle, then batch, preprocess and repeat
    train_db = tf.data.Dataset.from_tensor_slices((X_train, y_train)).shuffle(60000, seed=0)
    train_db = train_db.batch(batch_size).map(pre_processing).repeat(iteration_num)  # repeat 45 times (45 epochs)

    # Build the test set
    test_db = tf.data.Dataset.from_tensor_slices((X_test, y_test)).shuffle(10000, seed=0)
    test_db = test_db.batch(batch_size).map(pre_processing)

    return train_db, test_db
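We can quickly check how many batches this pipeline produces with a small pure-Python sketch. The helper `batches_per_pass` is hypothetical and written only for this illustration. By default, `tf.data.Dataset.batch` keeps the final partial batch unless `drop_remainder=True` is passed. Because `.repeat()` comes after `.batch()`, every epoch ends with its own short batch. Under those assumptions, the sketch explains two things in the output at the end of the article: the training log stops a little after step 10000, and every accuracy line starts with 39.

```python
def batches_per_pass(num_samples, batch_size):
    """Number of batches in one pass over the data when the last partial batch
    is kept (the default drop_remainder=False of tf.data.Dataset.batch).
    Hypothetical helper for illustration; integer ceiling division."""
    return (num_samples + batch_size - 1) // batch_size


batch_size = 256     # same value as in the article
iteration_num = 45   # number of times the batched training set is repeated

train_batches = batches_per_pass(60000, batch_size)  # 234 full batches + 1 batch of 96
test_batches = batches_per_pass(10000, batch_size)   # 39 full batches + 1 batch of 16
total_steps = train_batches * iteration_num          # steps seen by the training loop

print(train_batches, test_batches, total_steps)      # 235 40 10575
print("last test-batch index:", test_batches - 1)    # last test-batch index: 39
```

The order of the two calls matters. If `.repeat()` were placed before `.batch()`, the 45 epochs would form one stream of 2,700,000 samples. That stream would be cut into 10547 batches, with only one partial batch at the very end.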

pre_processing function

def pre_processing(x, y):
    '''
    Data preprocessing
    :param x: features
    :param y: labels
    :return: the preprocessed x, y
    '''
    # Convert x
    x = tf.cast(x, tf.float32) / 255  # scale pixel values to the range 0~1
    x = tf.reshape(x, [-1, 784])  # flatten each 28*28 image into 784 values

    # Convert y
    y = tf.cast(y, tf.int32)  # cast the labels to int32
    y = tf.one_hot(y, depth=10)  # one-hot encode the labels

    return x, y
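If you want to check the shapes without TensorFlow, the same preprocessing can be sketched in NumPy. The steps are: scale to [0, 1], flatten 28×28 to 784, and one-hot encode the labels. `pre_processing_np` is a hypothetical name, and using NumPy is an assumption of this sketch. The article itself does these steps with `tf.cast`, `tf.reshape` and `tf.one_hot`.

```python
import numpy as np


def pre_processing_np(x, y, num_classes=10):
    """NumPy analogue of pre_processing (hypothetical helper, for illustration).

    x: uint8 array of shape [batch, 28, 28]; y: integer labels of shape [batch].
    Returns float32 features of shape [batch, 784] in [0, 1] and one-hot labels.
    """
    x = x.astype(np.float32) / 255.0                                # scale 0..255 to 0..1
    x = x.reshape(-1, 28 * 28)                                      # flatten each image to 784 values
    y = np.eye(num_classes, dtype=np.float32)[y.astype(np.int64)]   # one-hot encode
    return x, y


if __name__ == "__main__":
    # Two fake 28x28 "images" instead of real MNIST data.
    fake_x = np.zeros((2, 28, 28), dtype=np.uint8)
    fake_x[0, 0, 0] = 255
    fake_y = np.array([3, 7], dtype=np.uint8)
    x, y = pre_processing_np(fake_x, fake_y)
    print(x.shape, y.shape)  # (2, 784) (2, 10)
    print(x[0, 0], y[1])     # 1.0 [0. 0. 0. 0. 0. 0. 0. 1. 0. 0.]
```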

main function

def main():
    """
    Main function
    :return: None
    """
    # Get the batched data
    train_db, test_db = get_data()

    # w1: shape [784, 512], truncated normal with mean 0 and standard deviation 0.1
    w1 = tf.Variable(tf.random.truncated_normal([784, 512], stddev=0.1))
    # b1: shape [512], initialized to 0
    b1 = tf.Variable(tf.zeros([512]))
    # w2: shape [512, 256], truncated normal with mean 0 and standard deviation 0.1
    w2 = tf.Variable(tf.random.truncated_normal([512, 256], stddev=0.1))
    # b2: shape [256], initialized to 0
    b2 = tf.Variable(tf.zeros([256]))
    # w3: shape [256, 10], truncated normal with mean 0 and standard deviation 0.1
    w3 = tf.Variable(tf.random.truncated_normal([256, 10], stddev=0.1))
    # b3: shape [10], initialized to 0
    b3 = tf.Variable(tf.zeros([10]))

    for step, (x, y) in enumerate(train_db):
        with tf.GradientTape() as tape:
            # First hidden layer
            h1 = x @ w1 + b1
            h1 = tf.nn.relu(h1)  # activation
            # Second hidden layer
            h2 = h1 @ w2 + b2
            h2 = tf.nn.relu(h2)  # activation
            # Output layer
            out = h2 @ w3 + b3
            # Compute the loss (mean squared error against the one-hot labels)
            loss = tf.square(y - out)
            loss = tf.reduce_mean(loss)

        # Compute the gradients
        grads = tape.gradient(loss, [w1, b1, w2, b2, w3, b3])

        # Update the weights with plain gradient descent
        for p, g in zip([w1, b1, w2, b2, w3, b3], grads):
            p.assign_sub(learning_rate * g)

        # Print the loss every 1000 batches
        if step % 1000 == 0:
            print(step, 'loss:', float(loss))

        # Compute the test-set accuracy every 5000 batches
        if step % 5000 == 0:
            total, total_correct = 0., 0
            # This loop reuses the name `step`, so the accuracy line printed below
            # shows the index of the last test batch (39), not the training step
            for step, (x, y) in enumerate(test_db):
                # First hidden layer
                h1 = x @ w1 + b1
                h1 = tf.nn.relu(h1)  # activation
                # Second hidden layer
                h2 = h1 @ w2 + b2
                h2 = tf.nn.relu(h2)  # activation
                # Output layer
                out = h2 @ w3 + b3
                # [256, 10] => [256]
                pred = tf.argmax(out, axis=1)
                # Convert back from one-hot encoding
                y = tf.argmax(y, axis=1)
                # Check which predictions are correct
                correct = tf.equal(pred, y)
                # bool tensor => int tensor => numpy
                total_correct += tf.reduce_sum(tf.cast(correct, dtype=tf.int32)).numpy()
                total += x.shape[0]
            print(step, "Accuracy", total_correct / total * 100, "%")
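The NumPy sketch below shows concretely what `tape.gradient` and `assign_sub` compute in this loop. It builds the same 784-512-256-10 network and derives the gradients of the mean-squared-error loss by hand. It illustrates the technique under several assumptions and is not the article's code:

* The weights come from a plain normal distribution, not a truncated one.
* Random numbers stand in for an MNIST batch.
* The step size is chosen for that random data.
* The helper names (`init_params`, `forward`, `loss_and_grads`, `sgd_step`) are hypothetical.

```python
import numpy as np


def init_params(rng):
    """Same shapes as w1..b3 in main(). Assumption: plain normal with stddev 0.1,
    not the truncated normal that tf.random.truncated_normal draws."""
    params = []
    for fan_in, fan_out in [(784, 512), (512, 256), (256, 10)]:
        params.append(rng.normal(0.0, 0.1, size=(fan_in, fan_out)))  # weight matrix
        params.append(np.zeros(fan_out))                             # bias vector
    return params


def forward(params, x):
    """784 -> 512 (ReLU) -> 256 (ReLU) -> 10, mirroring h1, h2, out in main()."""
    w1, b1, w2, b2, w3, b3 = params
    z1 = x @ w1 + b1
    h1 = np.maximum(z1, 0.0)
    z2 = h1 @ w2 + b2
    h2 = np.maximum(z2, 0.0)
    out = h2 @ w3 + b3
    return z1, h1, z2, h2, out


def loss_and_grads(params, x, y):
    """Mean squared error over all batch*10 entries, like
    tf.reduce_mean(tf.square(y - out)), plus hand-derived gradients."""
    w1, b1, w2, b2, w3, b3 = params
    z1, h1, z2, h2, out = forward(params, x)
    loss = np.mean((y - out) ** 2)

    d_out = 2.0 * (out - y) / out.size    # dL/d(out), mean over every entry
    g_w3 = h2.T @ d_out
    g_b3 = d_out.sum(axis=0)
    d_z2 = (d_out @ w3.T) * (z2 > 0)      # back through the second ReLU
    g_w2 = h1.T @ d_z2
    g_b2 = d_z2.sum(axis=0)
    d_z1 = (d_z2 @ w2.T) * (z1 > 0)       # back through the first ReLU
    g_w1 = x.T @ d_z1
    g_b1 = d_z1.sum(axis=0)
    return loss, [g_w1, g_b1, g_w2, g_b2, g_w3, g_b3]


def sgd_step(params, grads, lr):
    """In-place update, the NumPy analogue of p.assign_sub(learning_rate * g)."""
    for p, g in zip(params, grads):
        p -= lr * g


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    params = init_params(rng)
    # Random stand-in for one preprocessed batch (assumption: not real MNIST data).
    x = rng.random((8, 784))
    y = np.eye(10)[rng.integers(0, 10, size=8)]
    for step in range(3):
        loss, grads = loss_and_grads(params, x, y)
        sgd_step(params, grads, lr=1e-4)  # small step chosen for this random data
        print(step, "loss:", loss)
```

Writing the backward pass by hand shows why `tf.GradientTape` is convenient. Every layer added to `main` would need another pair of these hand-derived lines.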

Full code

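import tensorflow as tf

# Hyperparameters
batch_size = 256  # number of samples per training batch
learning_rate = 0.001  # learning rate
iteration_num = 45  # number of epochs (how many times the training set is repeated)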

def get_data():
    """
    Load the data
    :return: the batched training set and test set
    """
    # Load the data
    (X_train, y_train), (X_test, y_test) = tf.keras.datasets.mnist.load_data()

    # Debug output
    print("X_train:", X_train.shape, "y_train:", y_train.shape)
    print("X_test:", X_test.shape, "y_test:", y_test.shape)

    # Build the training set: shuffle, then batch, preprocess and repeat
    train_db = tf.data.Dataset.from_tensor_slices((X_train, y_train)).shuffle(60000, seed=0)
    train_db = train_db.batch(batch_size).map(pre_processing).repeat(iteration_num)  # repeat 45 times (45 epochs)

    # Build the test set
    test_db = tf.data.Dataset.from_tensor_slices((X_test, y_test)).shuffle(10000, seed=0)
    test_db = test_db.batch(batch_size).map(pre_processing)

    return train_db, test_db

def pre_processing(x, y):
    '''
    Data preprocessing
    :param x: features
    :param y: labels
    :return: the preprocessed x, y
    '''
    # Convert x
    x = tf.cast(x, tf.float32) / 255  # scale pixel values to the range 0~1
    x = tf.reshape(x, [-1, 784])  # flatten each 28*28 image into 784 values

    # Convert y
    y = tf.cast(y, tf.int32)  # cast the labels to int32
    y = tf.one_hot(y, depth=10)  # one-hot encode the labels

    return x, y

def main():
    """
    Main function
    :return: None
    """
    # Get the batched data
    train_db, test_db = get_data()

    # w1: shape [784, 512], truncated normal with mean 0 and standard deviation 0.1
    w1 = tf.Variable(tf.random.truncated_normal([784, 512], stddev=0.1))
    # b1: shape [512], initialized to 0
    b1 = tf.Variable(tf.zeros([512]))
    # w2: shape [512, 256], truncated normal with mean 0 and standard deviation 0.1
    w2 = tf.Variable(tf.random.truncated_normal([512, 256], stddev=0.1))
    # b2: shape [256], initialized to 0
    b2 = tf.Variable(tf.zeros([256]))
    # w3: shape [256, 10], truncated normal with mean 0 and standard deviation 0.1
    w3 = tf.Variable(tf.random.truncated_normal([256, 10], stddev=0.1))
    # b3: shape [10], initialized to 0
    b3 = tf.Variable(tf.zeros([10]))

    for step, (x, y) in enumerate(train_db):
        with tf.GradientTape() as tape:
            # First hidden layer
            h1 = x @ w1 + b1
            h1 = tf.nn.relu(h1)  # activation
            # Second hidden layer
            h2 = h1 @ w2 + b2
            h2 = tf.nn.relu(h2)  # activation
            # Output layer
            out = h2 @ w3 + b3
            # Compute the loss (mean squared error against the one-hot labels)
            loss = tf.square(y - out)
            loss = tf.reduce_mean(loss)

        # Compute the gradients
        grads = tape.gradient(loss, [w1, b1, w2, b2, w3, b3])

        # Update the weights with plain gradient descent
        for p, g in zip([w1, b1, w2, b2, w3, b3], grads):
            p.assign_sub(learning_rate * g)

        # Print the loss every 1000 batches
        if step % 1000 == 0:
            print(step, 'loss:', float(loss))

        # Compute the test-set accuracy every 5000 batches
        if step % 5000 == 0:
            total, total_correct = 0., 0
            # This loop reuses the name `step`, so the accuracy line printed below
            # shows the index of the last test batch (39), not the training step
            for step, (x, y) in enumerate(test_db):
                # First hidden layer
                h1 = x @ w1 + b1
                h1 = tf.nn.relu(h1)  # activation
                # Second hidden layer
                h2 = h1 @ w2 + b2
                h2 = tf.nn.relu(h2)  # activation
                # Output layer
                out = h2 @ w3 + b3
                # [256, 10] => [256]
                pred = tf.argmax(out, axis=1)
                # Convert back from one-hot encoding
                y = tf.argmax(y, axis=1)
                # Check which predictions are correct
                correct = tf.equal(pred, y)
                # bool tensor => int tensor => numpy
                total_correct += tf.reduce_sum(tf.cast(correct, dtype=tf.int32)).numpy()
                total += x.shape[0]
            print(step, "Accuracy", total_correct / total * 100, "%")


if __name__ == "__main__":
    main()
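For a sense of scale, the three weight matrices and three bias vectors created in `main` hold a little over half a million trainable numbers. The tiny sketch below counts them. The function `count_params` is hypothetical and is not part of the article's code.

```python
def count_params(layer_sizes):
    """Total weights + biases of a stack of fully connected layers.

    layer_sizes lists the layer widths in order; [784, 512, 256, 10] matches
    w1/b1, w2/b2 and w3/b3 in main(). Hypothetical helper for illustration.
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += fan_in * fan_out + fan_out  # weight matrix + bias vector
    return total


print(count_params([784, 512, 256, 10]))  # 535818
```

Most of these parameters sit in `w1`: 784 × 512 = 401,408 weights. That first layer dominates both the memory footprint and the cost of each `x @ w1`.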

Output:

X_train: (60000, 28, 28) y_train: (60000,)
X_test: (10000, 28, 28) y_test: (10000,)
0 loss: 1.8554267883300781
39 Accuracy 12.479999999999999 %
1000 loss: 0.21533580124378204
2000 loss: 0.1755288988351822
3000 loss: 0.13523665070533752
4000 loss: 0.12891730666160583
5000 loss: 0.11436235904693604
39 Accuracy 55.95 %
6000 loss: 0.10332286357879639
7000 loss: 0.09788000583648682
8000 loss: 0.09040157496929169
9000 loss: 0.07946525514125824
10000 loss: 0.08393758535385132
39 Accuracy 68.33 %
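Each accuracy line is `total_correct / total * 100`. The first evaluation (12.48 %) therefore means 1,248 of the 10,000 test images were classified correctly. The long tail of digits in `12.479999999999999` is ordinary floating-point rounding. The evaluation loop can be sketched in NumPy as follows. `batched_accuracy` is a hypothetical helper, and the example batches are made up for illustration.

```python
import numpy as np


def batched_accuracy(batches):
    """Accuracy in percent over (logits, one_hot_labels) batches.

    Mirrors the evaluation loop in main(): argmax over the 10 outputs,
    argmax over the one-hot labels, count matches, divide by the sample count.
    """
    total, total_correct = 0.0, 0
    for out, y in batches:
        pred = np.argmax(out, axis=1)    # [n, 10] => [n]
        label = np.argmax(y, axis=1)     # back from one-hot
        total_correct += int(np.sum(pred == label))
        total += out.shape[0]
    return total_correct / total * 100


if __name__ == "__main__":
    eye = np.eye(10)
    # Batch 1: both predictions correct; batch 2: one of two correct.
    batch1 = (np.array([[0.1, 0.9] + [0.0] * 8, [0.8, 0.1] + [0.0] * 8]), eye[[1, 0]])
    batch2 = (np.array([[0.0] * 9 + [1.0], [0.5] + [0.0] * 9]), eye[[9, 3]])
    print(batched_accuracy([batch1, batch2]), "%")  # 75.0 %
```

Counting correct predictions per batch and dividing once at the end weights every image equally. This matters because the last test batch holds only 16 images.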
