TensorFlow2 Hands-On: Implementing Custom Layers

Posted by 我是小白呀

Overview

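By building custom networks, we can create our own layers and models and chain them together with existing ones, which lets us implement a wide variety of network architectures.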

Sequential

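Sequential is a Keras container that stacks multiple layers into a single model.

With Sequential we can combine built-in layers with layers we implement ourselves; a single forward pass then carries the data from the first layer through to the last.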

Signature:

```python
tf.keras.Sequential(
    layers=None, name=None
)
```
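Besides passing a list of layers to the constructor, layers can also be appended one at a time with `add()`. A minimal sketch comparing the two styles; the layer sizes and the names `mlp_list` and `mlp_add` are arbitrary choices for illustration:

```python
import tensorflow as tf

# Option 1: pass the layers as a list to the constructor.
model_a = tf.keras.Sequential(
    [
        tf.keras.layers.Dense(64, activation="relu"),
        tf.keras.layers.Dense(10),
    ],
    name="mlp_list",
)

# Option 2: start from an empty container and append layers with add().
model_b = tf.keras.Sequential(name="mlp_add")
model_b.add(tf.keras.layers.Dense(64, activation="relu"))
model_b.add(tf.keras.layers.Dense(10))

# Both containers now hold the same stack of two Dense layers.
print(len(model_a.layers), len(model_b.layers))
```

The list form is more compact; `add()` is handy when layers are created in a loop.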

Example:

```python
import tensorflow as tf

# A 5-layer network
model = tf.keras.Sequential([
    tf.keras.layers.Dense(256, activation=tf.nn.relu),
    tf.keras.layers.Dense(128, activation=tf.nn.relu),
    tf.keras.layers.Dense(64, activation=tf.nn.relu),
    tf.keras.layers.Dense(32, activation=tf.nn.relu),
    tf.keras.layers.Dense(10)
])
```
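To illustrate that Sequential can mix built-in and custom layers, here is a minimal sketch. `Scale` is a hypothetical custom layer invented for this example, and the input width of 784 is an assumption; a single call on the model runs the input through every layer in order:

```python
import tensorflow as tf


class Scale(tf.keras.layers.Layer):
    """Hypothetical custom layer: multiplies its input by a constant factor."""

    def __init__(self, factor, **kwargs):
        super().__init__(**kwargs)
        self.factor = factor

    def call(self, inputs):
        return inputs * self.factor


# Built-in Dense layers and the custom Scale layer in one container.
model = tf.keras.Sequential([
    tf.keras.layers.Dense(32, activation="relu"),
    Scale(0.5),
    tf.keras.layers.Dense(10),
])

x = tf.random.normal([4, 784])  # a batch of 4 inputs with 784 features (assumed size)
y = model(x)                    # one call runs Dense -> Scale -> Dense
print(y.shape)                  # (4, 10)
```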

Model & Layer

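By subclassing `Model` and `Layer` and implementing their `__init__` and `call()` methods, we can define our own layers and models: `__init__` creates the sub-layers and variables, and `call()` defines the forward computation that runs each time the object is called.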

Model:

```python
import tensorflow as tf


class My_Model(tf.keras.Model):  # subclass Model
    def __init__(self):
        """
        Initialize the model.
        """
        super(My_Model, self).__init__()
        self.fc1 = My_Dense(784, 256)  # layer 1
        self.fc2 = My_Dense(256, 128)  # layer 2
        self.fc3 = My_Dense(128, 64)  # layer 3
        self.fc4 = My_Dense(64, 32)  # layer 4
        self.fc5 = My_Dense(32, 10)  # layer 5

    def call(self, inputs, training=None):
        """
        Run when the model is called.
        :param inputs: input tensor
        :param training: defaults to None
        :return: the model output
        """
        x = self.fc1(inputs)
        x = tf.nn.relu(x)
        x = self.fc2(x)
        x = tf.nn.relu(x)
        x = self.fc3(x)
        x = tf.nn.relu(x)
        x = self.fc4(x)
        x = tf.nn.relu(x)
        x = self.fc5(x)
        return x
```
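The `training` argument of `call()` is unused in the model above, but it matters for layers that behave differently during training and inference, such as Dropout. Keras passes `training=True` from `fit()` and `training=False` from `evaluate()` and `predict()`. A sketch assuming a hypothetical `DropoutMLP` model that forwards the flag to its Dropout layer:

```python
import tensorflow as tf


class DropoutMLP(tf.keras.Model):
    """Hypothetical model that forwards `training` to a Dropout layer."""

    def __init__(self):
        super().__init__()
        self.fc1 = tf.keras.layers.Dense(64, activation="relu")
        self.drop = tf.keras.layers.Dropout(0.5)
        self.fc2 = tf.keras.layers.Dense(10)

    def call(self, inputs, training=None):
        x = self.fc1(inputs)
        x = self.drop(x, training=training)  # drops units only when training=True
        return self.fc2(x)


model = DropoutMLP()
x = tf.random.normal([8, 784])

# Inference mode: Dropout is a no-op, so repeated calls give identical outputs.
a = model(x, training=False)
b = model(x, training=False)
print(bool(tf.reduce_all(tf.equal(a, b))))  # True
```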

Layer:

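```python
import tensorflow as tf


class My_Dense(tf.keras.layers.Layer):  # subclass Layer
    def __init__(self, input_dim, output_dim):
        """
        Initialize the layer.
        :param input_dim: number of input features
        :param output_dim: number of output features
        """
        super(My_Dense, self).__init__()
        # Add the trainable variables (add_weight replaces the deprecated add_variable)
        self.kernel = self.add_weight(name="w", shape=[input_dim, output_dim])  # weights
        self.bias = self.add_weight(name="b", shape=[output_dim])  # bias

    def call(self, inputs, training=None):
        """
        Run when the layer is called; computes the output.
        :param inputs: input tensor
        :param training: defaults to None
        :return: the computed output
        """
        # y = x @ w + b
        out = inputs @ self.kernel + self.bias
        return out
```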
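`My_Dense` requires the caller to supply `input_dim`. Keras layers can also create their variables lazily in `build(input_shape)`, which Keras calls once, on the first call, with the shape of the input. A sketch of that alternative; `LazyDense` is a hypothetical name, and the zero initializer for the bias is our own choice (`My_Dense` keeps the default initializer):

```python
import tensorflow as tf


class LazyDense(tf.keras.layers.Layer):
    """Hypothetical variant of My_Dense that infers input_dim in build()."""

    def __init__(self, units, **kwargs):
        super().__init__(**kwargs)
        self.units = units

    def build(self, input_shape):
        input_dim = int(input_shape[-1])  # last axis = number of input features
        self.kernel = self.add_weight(name="w", shape=[input_dim, self.units])
        self.bias = self.add_weight(name="b", shape=[self.units], initializer="zeros")

    def call(self, inputs):
        # y = x @ W + b, the same computation as My_Dense.call
        return inputs @ self.kernel + self.bias


layer = LazyDense(256)
out = layer(tf.ones([2, 3072]))  # the first call triggers build() with shape (2, 3072)
print(out.shape)                  # (2, 256)
print(layer.kernel.shape)         # (3072, 256)
```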

Case Study

Dataset

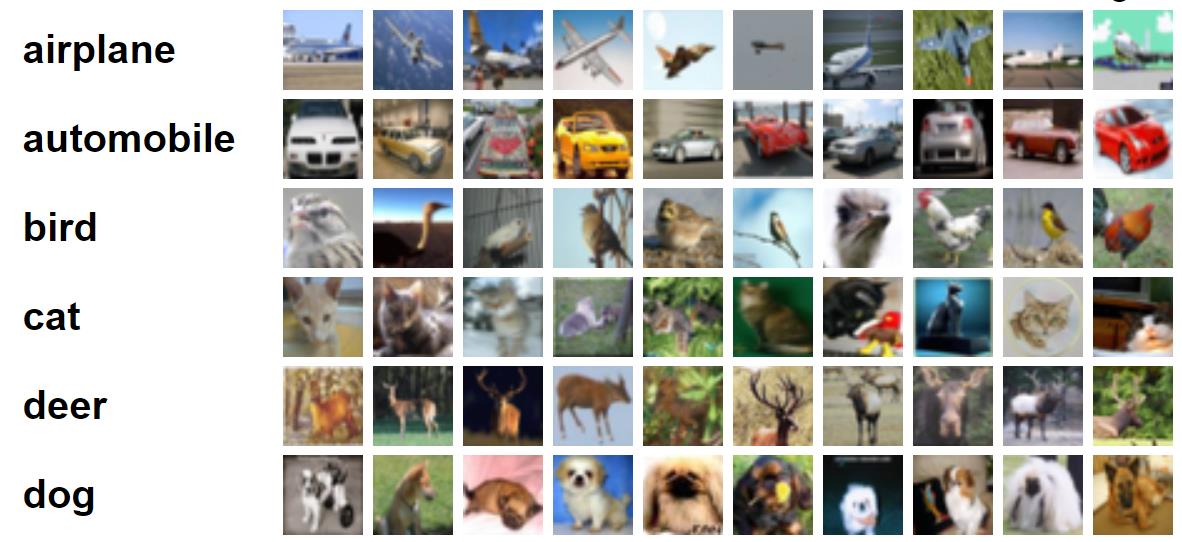

CIFAR-10 is a dataset of 60,000 color images (32x32 pixels) covering 10 classes of objects: 50,000 images form the training set and 10,000 the test set.
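The labels 0 to 9 correspond, in order, to airplane, automobile, bird, cat, deer, dog, frog, horse, ship and truck. A small sketch that turns the network's `(batch, 10)` logits into class names; `CIFAR10_CLASSES` and `decode` are hypothetical helpers, not part of Keras:

```python
import numpy as np

# Label index -> class name for CIFAR-10 (labels 0-9 in order).
CIFAR10_CLASSES = [
    "airplane", "automobile", "bird", "cat", "deer",
    "dog", "frog", "horse", "ship", "truck",
]


def decode(logits):
    """Map each row of a (batch, 10) logits array to its predicted class name."""
    return [CIFAR10_CLASSES[i] for i in np.argmax(logits, axis=-1)]


# Example: row 0 scores class 0 highest, row 1 scores class 3 highest.
fake_logits = np.zeros((2, 10))
fake_logits[0, 0] = 5.0
fake_logits[1, 3] = 5.0
print(decode(fake_logits))  # ['airplane', 'cat']
```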

Complete Code

```python
import tensorflow as tf


def pre_process(x, y):
    # Convert x
    x = 2 * tf.cast(x, dtype=tf.float32) / 255 - 1  # scale pixels from [0, 255] to [-1, 1]
    x = tf.reshape(x, [-1, 32 * 32 * 3])  # flatten each image into a 3072-dim vector

    # Convert y
    y = tf.convert_to_tensor(y)  # make sure y is a tensor
    y = tf.one_hot(y, depth=10)  # one-hot encode the labels

    return x, y


def get_data():
    """
    Load CIFAR-10 and build the training and test pipelines.
    :return: train_db, test_db
    """
    # Load the data
    (X_train, y_train), (X_test, y_test) = tf.keras.datasets.cifar10.load_data()

    # Debug: print the shapes
    print(X_train.shape)  # (50000, 32, 32, 3)
    print(y_train.shape)  # (50000, 1)

    # Squeeze out the label axis
    y_train = tf.squeeze(y_train)  # (50000, 1) => (50000,)
    y_test = tf.squeeze(y_test)  # (10000, 1) => (10000,)

    # Training set: shuffle, batch, preprocess, then repeat iteration_num (20) times,
    # so one Keras epoch covers 20 passes over the training data
    train_db = tf.data.Dataset.from_tensor_slices((X_train, y_train)).shuffle(10000, seed=0)
    train_db = train_db.batch(batch_size).map(pre_process).repeat(iteration_num)

    # Test set
    test_db = tf.data.Dataset.from_tensor_slices((X_test, y_test)).shuffle(10000, seed=0)
    test_db = test_db.batch(batch_size).map(pre_process)

    return train_db, test_db


class My_Dense(tf.keras.layers.Layer):  # subclass Layer
    def __init__(self, input_dim, output_dim):
        """
        Initialize the layer.
        :param input_dim: number of input features
        :param output_dim: number of output features
        """
        super(My_Dense, self).__init__()
        # Add the trainable variables
        self.kernel = self.add_weight(name="w", shape=[input_dim, output_dim])  # weights
        self.bias = self.add_weight(name="b", shape=[output_dim])  # bias

    def call(self, inputs, training=None):
        """
        Run when the layer is called; computes the output.
        :param inputs: input tensor
        :param training: defaults to None
        :return: the computed output
        """
        # y = x @ w + b
        out = inputs @ self.kernel + self.bias
        return out


class My_Model(tf.keras.Model):  # subclass Model
    def __init__(self):
        """
        Initialize the model.
        """
        super(My_Model, self).__init__()
        self.fc1 = My_Dense(32 * 32 * 3, 256)  # layer 1
        self.fc2 = My_Dense(256, 128)  # layer 2
        self.fc3 = My_Dense(128, 64)  # layer 3
        self.fc4 = My_Dense(64, 32)  # layer 4
        self.fc5 = My_Dense(32, 10)  # layer 5

    def call(self, inputs, training=None):
        """
        Run when the model is called.
        :param inputs: input tensor
        :param training: defaults to None
        :return: the model output
        """
        x = self.fc1(inputs)
        x = tf.nn.relu(x)
        x = self.fc2(x)
        x = tf.nn.relu(x)
        x = self.fc3(x)
        x = tf.nn.relu(x)
        x = self.fc4(x)
        x = tf.nn.relu(x)
        x = self.fc5(x)
        return x


# Hyperparameters
batch_size = 256  # number of samples per batch
learning_rate = 0.001  # learning rate
iteration_num = 20  # number of times the training set is repeated per Keras epoch

optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)  # optimizer
loss = tf.keras.losses.CategoricalCrossentropy(from_logits=True)  # loss on raw logits

network = My_Model()  # instantiate the network

# Debug: print the summary
network.build(input_shape=[None, 32 * 32 * 3])
print(network.summary())

# Compile
network.compile(optimizer=optimizer,
                loss=loss,
                metrics=["accuracy"])

if __name__ == "__main__":
    # Get the training and test datasets
    train_db, test_db = get_data()

    # Fit
    network.fit(train_db, epochs=5, validation_data=test_db, validation_freq=1)
```
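To check the preprocessing without downloading CIFAR-10, the same `pre_process` transformation can be run on synthetic data with CIFAR-10's shapes. This is a sketch: the random arrays merely stand in for the real images and labels, and the no-op `convert_to_tensor` call is omitted:

```python
import numpy as np
import tensorflow as tf


def pre_process(x, y):
    # Same scaling, flattening and one-hot encoding as in the complete code.
    x = 2 * tf.cast(x, dtype=tf.float32) / 255 - 1
    x = tf.reshape(x, [-1, 32 * 32 * 3])
    y = tf.one_hot(y, depth=10)
    return x, y


# Synthetic stand-in with CIFAR-10 shapes: uint8 images and (N, 1) labels.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(512, 32, 32, 3)).astype(np.uint8)
labels = rng.integers(0, 10, size=(512, 1)).astype(np.uint8)

db = tf.data.Dataset.from_tensor_slices((images, np.squeeze(labels, axis=1)))
db = db.batch(256).map(pre_process)

x, y = next(iter(db))
print(x.shape, y.shape)  # (256, 3072) (256, 10)
print(float(tf.reduce_min(x)) >= -1.0, float(tf.reduce_max(x)) <= 1.0)  # True True
```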

Output:

```
Model: "my__model"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
my__dense (My_Dense)         multiple                  786688
_________________________________________________________________
my__dense_1 (My_Dense)       multiple                  32896
_________________________________________________________________
my__dense_2 (My_Dense)       multiple                  8256
_________________________________________________________________
my__dense_3 (My_Dense)       multiple                  2080
_________________________________________________________________
my__dense_4 (My_Dense)       multiple                  330
=================================================================
Total params: 830,250
Trainable params: 830,250
Non-trainable params: 0
_________________________________________________________________
None
(50000, 32, 32, 3)
(50000, 1)
2021-06-15 14:35:26.600766: I tensorflow/compiler/mlir/mlir_graph_optimization_pass.cc:176] None of the MLIR Optimization Passes are enabled (registered 2)
Epoch 1/5
3920/3920 [==============================] - 39s 10ms/step - loss: 0.9676 - accuracy: 0.6595 - val_loss: 1.8961 - val_accuracy: 0.5220
Epoch 2/5
3920/3920 [==============================] - 41s 10ms/step - loss: 0.3338 - accuracy: 0.8831 - val_loss: 3.3207 - val_accuracy: 0.5141
Epoch 3/5
3920/3920 [==============================] - 41s 10ms/step - loss: 0.1713 - accuracy: 0.9410 - val_loss: 4.2247 - val_accuracy: 0.5122
Epoch 4/5
3920/3920 [==============================] - 41s 10ms/step - loss: 0.1237 - accuracy: 0.9581 - val_loss: 4.9458 - val_accuracy: 0.5050
Epoch 5/5
3920/3920 [==============================] - 42s 11ms/step - loss: 0.1003 - accuracy: 0.9666 - val_loss: 5.2425 - val_accuracy: 0.5097
```
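The 3920 steps per epoch in the log follow from the pipeline: `batch()` keeps the final partial batch, so one pass over 50,000 images is ceil(50000 / 256) = 196 batches, and `repeat(iteration_num)` multiplies that by 20. Each Keras epoch therefore covers 20 passes over the training data (100 passes over 5 epochs), which is consistent with training accuracy climbing to about 0.97 while val_accuracy stays near 0.51 and val_loss keeps rising, i.e. the model overfits. The arithmetic:

```python
import math

train_size = 50_000  # CIFAR-10 training images
batch_size = 256     # from the hyperparameters above
repeats = 20         # iteration_num passed to Dataset.repeat()

batches_per_pass = math.ceil(train_size / batch_size)  # the last batch is partial
steps_per_epoch = batches_per_pass * repeats
print(batches_per_pass, steps_per_epoch)  # 196 3920
```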
