Hadoop Installation

Posted 你∈我


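Change ownership of hadoop313

From the directory that contains hadoop313 (/opt/soft in the paths used throughout this guide), recursively change the owner and group of everything under the hadoop directory to root: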

chown -R root:root ./hadoop313/

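Edit the configuration files

(Note: some names inside the configuration files, such as the hostname hadoop1 and the install paths, need to be changed to match your own environment.)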

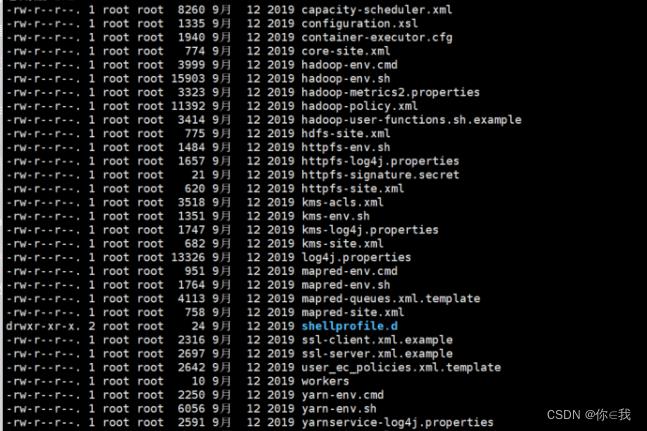

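Change to the /opt/soft/hadoop313/etc/hadoop directory, where the configuration files live.

Create a folder named data under /opt/soft/hadoop313 to serve as Hadoop's local temporary directory on the NameNode. This is the path that hadoop.tmp.dir points to in core-site.xml.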
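As a minimal sketch of this step, the helper below creates the data folder under the install root. The function name make_data_dir is hypothetical, and the default root /opt/soft/hadoop313 is an assumption taken from the hadoop.tmp.dir value used in core-site.xml.

```shell
# Hypothetical helper: create the local data directory under a Hadoop install root.
# The default root is an assumption based on the paths used in this guide.
make_data_dir() {
  root="${1:-/opt/soft/hadoop313}"
  # -p makes the call safe to repeat if the directory already exists
  mkdir -p "$root/data" && echo "$root/data"
}
```

Calling `make_data_dir` with no argument uses the default root; pass another path if Hadoop is installed elsewhere.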

Configure core-site.xml

vim ./core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop1:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/soft/hadoop313/data</value>

<description>Local Hadoop temporary directory on the NameNode</description>

</property>

<property>

<name>hadoop.http.staticuser.user</name>

<value>root</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

<description>Read/write buffer size: 128 KB</description>

</property>

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.groups</name>

<value>*</value>

</property>

</configuration>
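After saving the file, it can help to read a value back and confirm it is what you intended. The following is a minimal sketch using awk; get_prop is a hypothetical helper, not a Hadoop command, and it assumes each property is laid out as in the listing above, with the value element following its name element.

```shell
# Hypothetical helper: print the <value> that follows <name>KEY</name> in a Hadoop XML file.
# Usage: get_prop FILE KEY
get_prop() {
  awk -v key="$2" '
    # Remember that the wanted <name> element has been seen
    index($0, "<name>" key "</name>") { found = 1 }
    # The first <value> after it is the one we want
    found && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }
  ' "$1"
}
```

For example, `get_prop core-site.xml fs.defaultFS` should print the hdfs:// address configured above. The helper prints nothing if the key is absent.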

Configure hadoop-env.sh

vim hadoop-env.sh

export JAVA_HOME=/opt/soft/jdk180

Configure hdfs-site.xml

vim hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

<description>Number of replicas kept for each block in Hadoop</description>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/opt/soft/hadoop313/data/dfs/name</value>

<description>Directory where the NameNode stores HDFS namespace metadata</description>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/opt/soft/hadoop313/data/dfs/data</value>

<description>Directory where the DataNode physically stores data blocks</description>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

<description>Disable permission checking</description>

</property>

</configuration>

Configure mapred-site.xml

vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

<description>Job execution framework: local, classic or yarn</description>

<final>true</final>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>/opt/soft/hadoop313/etc/hadoop:/opt/soft/hadoop313/share/hadoop/common/lib/*:/opt/soft/hadoop313/share/hadoop/common/*:/opt/soft/hadoop313/share/hadoop/hdfs/*:/opt/soft/hadoop313/share/hadoop/hdfs/lib/*:/opt/soft/hadoop313/share/hadoop/mapreduce/*:/opt/soft/hadoop313/share/hadoop/mapreduce/lib/*:/opt/soft/hadoop313/share/hadoop/yarn/*:/opt/soft/hadoop313/share/hadoop/yarn/lib/*

</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop1:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop1:19888</value>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>1024</value>

</property>

</configuration>

Configure yarn-site.xml

vim yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>20000</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

</property>

<property>

<name>yarn.nodemanager.localizer.address</name>

<value>hadoop1:8040</value>

</property>

<property>

<name>yarn.nodemanager.address</name>

<value>hadoop1:8050</value>

</property>

<property>

<name>yarn.nodemanager.webapp.address</name>

<value>hadoop1:8042</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/opt/soft/hadoop313/yarndata/yarn</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/opt/soft/hadoop313/yarndata/log</value>

</property>

</configuration>

Configure environment variables

vim /etc/profile

#HADOOP_HOME

export HADOOP_HOME=/opt/soft/hadoop313

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/lib

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export HDFS_JOURNALNODE_USER=root

export HDFS_ZKFC_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export HADOOP_YARN_HOME=$HADOOP_HOME

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_LIBEXEC_DIR=$HADOOP_HOME/libexec

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

Once the configuration is complete, reload the current shell environment:

source /etc/profile

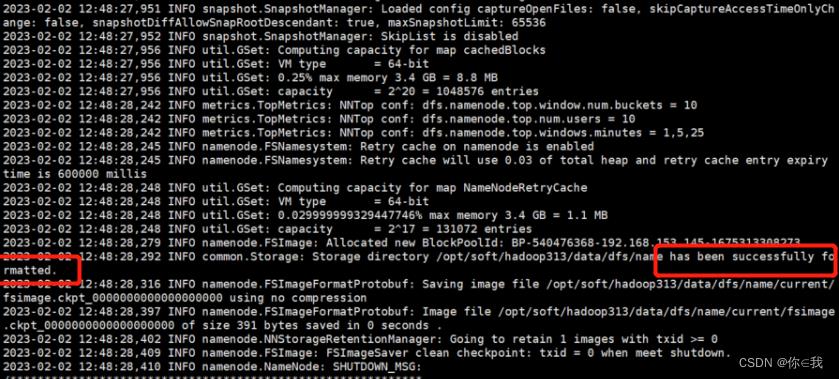
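A quick sanity check after reloading the profile is to confirm that HADOOP_HOME points at a real install. The sketch below is one way to do that; check_hadoop_home is a hypothetical helper, and the three subdirectories it looks for are the ones that the PATH and HADOOP_CONF_DIR entries above rely on.

```shell
# Hypothetical helper: confirm that a Hadoop home directory has the expected layout.
# With no argument it falls back to the HADOOP_HOME environment variable.
check_hadoop_home() {
  home="${1:-${HADOOP_HOME:-}}"
  [ -n "$home" ] || { echo "HADOOP_HOME is not set"; return 1; }
  for sub in bin sbin etc/hadoop; do
    [ -d "$home/$sub" ] || { echo "missing: $home/$sub"; return 1; }
  done
  echo "ok: $home"
}
```

Running `check_hadoop_home` in the shell where /etc/profile was sourced reports the first missing directory, if any.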

Initialize (format the NameNode)

hdfs namenode -format

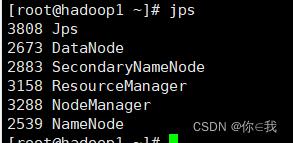
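Formatting is normally done only once: formatting a NameNode that already holds metadata generates a new cluster ID, which existing DataNode directories will no longer match. A minimal guard, assuming the name directory is the dfs.namenode.name.dir value from hdfs-site.xml, could look like this; is_formatted is a hypothetical helper.

```shell
# Hypothetical guard: succeed if a NameNode directory already looks formatted.
# A formatted name directory contains a current/VERSION file.
is_formatted() {
  [ -f "$1/current/VERSION" ]
}
```

It can be combined with the format command as `is_formatted /opt/soft/hadoop313/data/dfs/name || hdfs namenode -format`, so the format only runs on a fresh directory.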

Start all services

start-all.sh

Run jps. If the output looks like the following, startup succeeded:

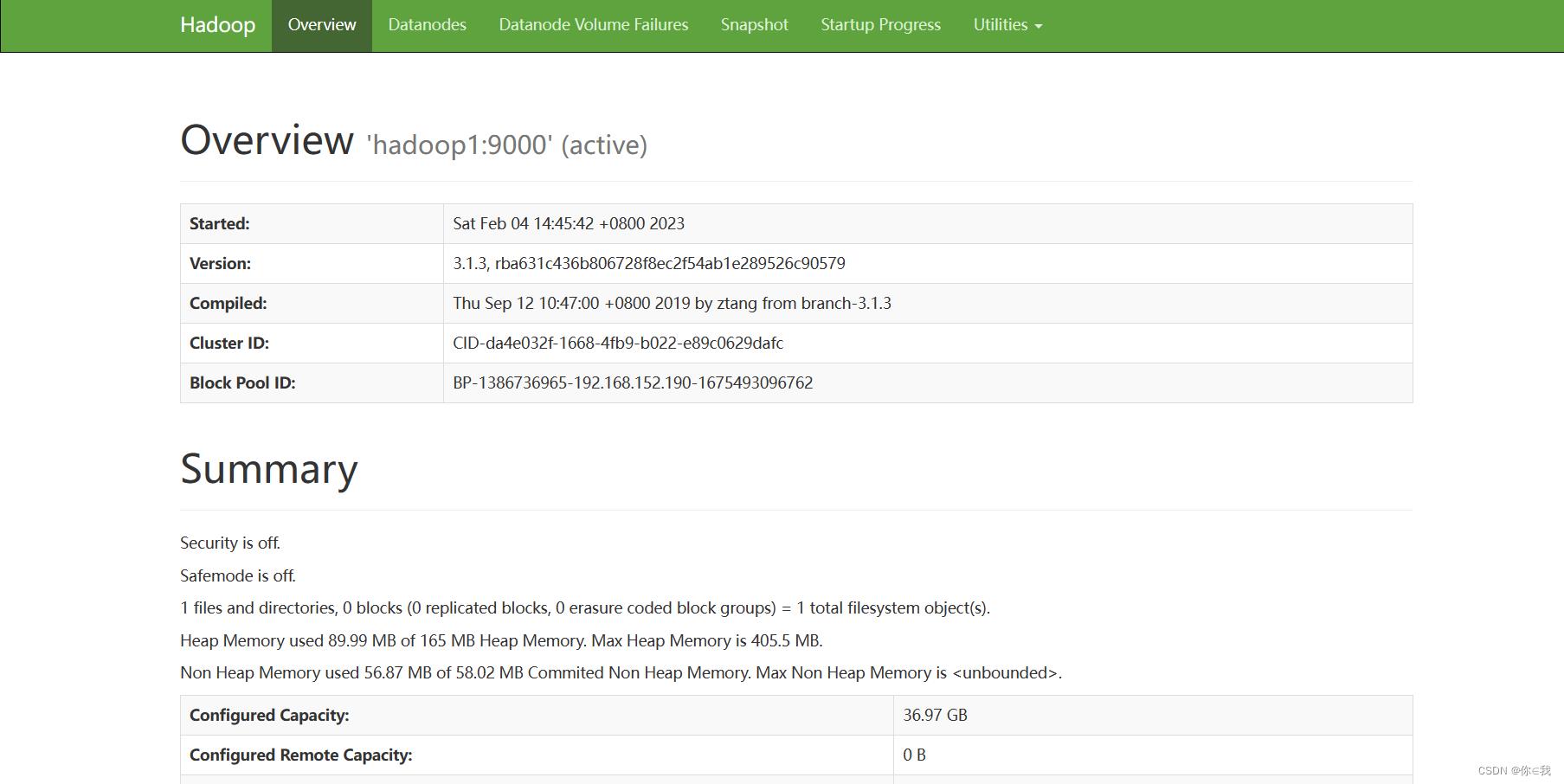
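The same check can be scripted. The sketch below reads jps output on standard input and prints any expected daemon that is absent; missing_daemons is a hypothetical helper, and the daemon list assumes a single-node setup started with start-all.sh.

```shell
# Hypothetical helper: read jps output on stdin and list expected daemons that are absent.
# Each jps line has the form "<pid> <name>", so the name is compared exactly
# (NameNode must not be satisfied by SecondaryNameNode).
missing_daemons() {
  out=$(cat)
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    printf '%s\n' "$out" | awk -v d="$d" '$2 == d { hit = 1 } END { exit !hit }' \
      || echo "$d"
  done
}
```

Use it as `jps | missing_daemons`; empty output means all five daemons were found.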

Visiting IP:9870 in a browser, where IP is the address of this machine, should bring up the NameNode web UI.

For example: 192.168.111.111:9870
