Deep RL Bootcamp Lecture 4A: Policy Gradients

Posted by ecoflex



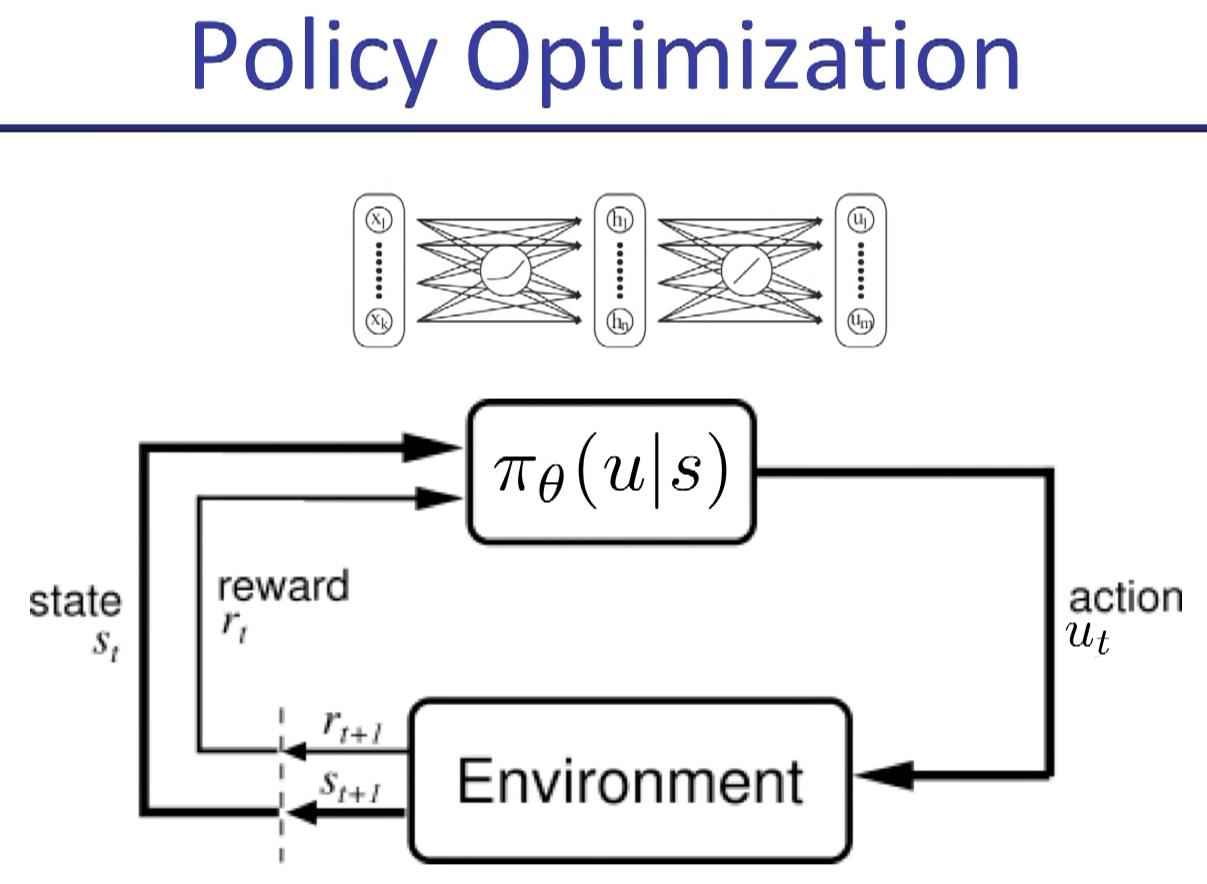

In policy gradient methods, the action is usually written as "u" instead of "a".
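For reference, here is the standard likelihood-ratio policy gradient written in this notation. The trajectory $\tau = (s_0, u_0, \dots, s_H, u_H)$, horizon $H$, and number of sampled trajectories $m$ follow common convention and are not copied from the slide images:

$$
U(\theta) = \mathbb{E}\Big[\sum_{t=0}^{H} R(s_t, u_t);\ \pi_\theta\Big] = \sum_{\tau} P(\tau; \theta)\, R(\tau)
$$

$$
\nabla_\theta U(\theta) \approx \hat{g} = \frac{1}{m} \sum_{i=1}^{m} \nabla_\theta \log P\big(\tau^{(i)}; \theta\big)\, R\big(\tau^{(i)}\big),
\qquad
\nabla_\theta \log P\big(\tau^{(i)}; \theta\big) = \sum_{t=0}^{H} \nabla_\theta \log \pi_\theta\big(u_t^{(i)} \mid s_t^{(i)}\big)
$$

The dynamics model drops out of the last expression, which is why the gradient can be estimated from sampled trajectories alone.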

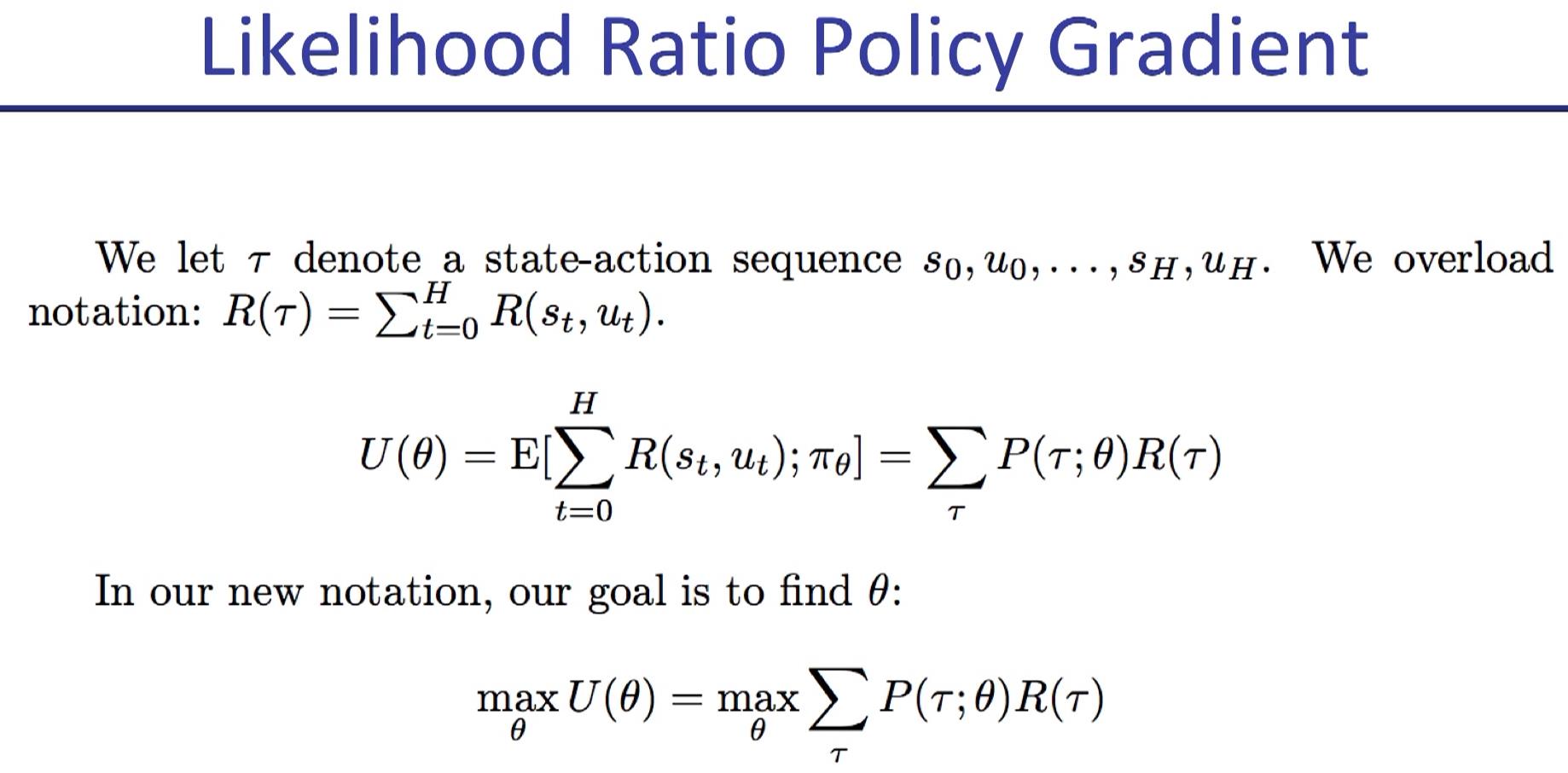

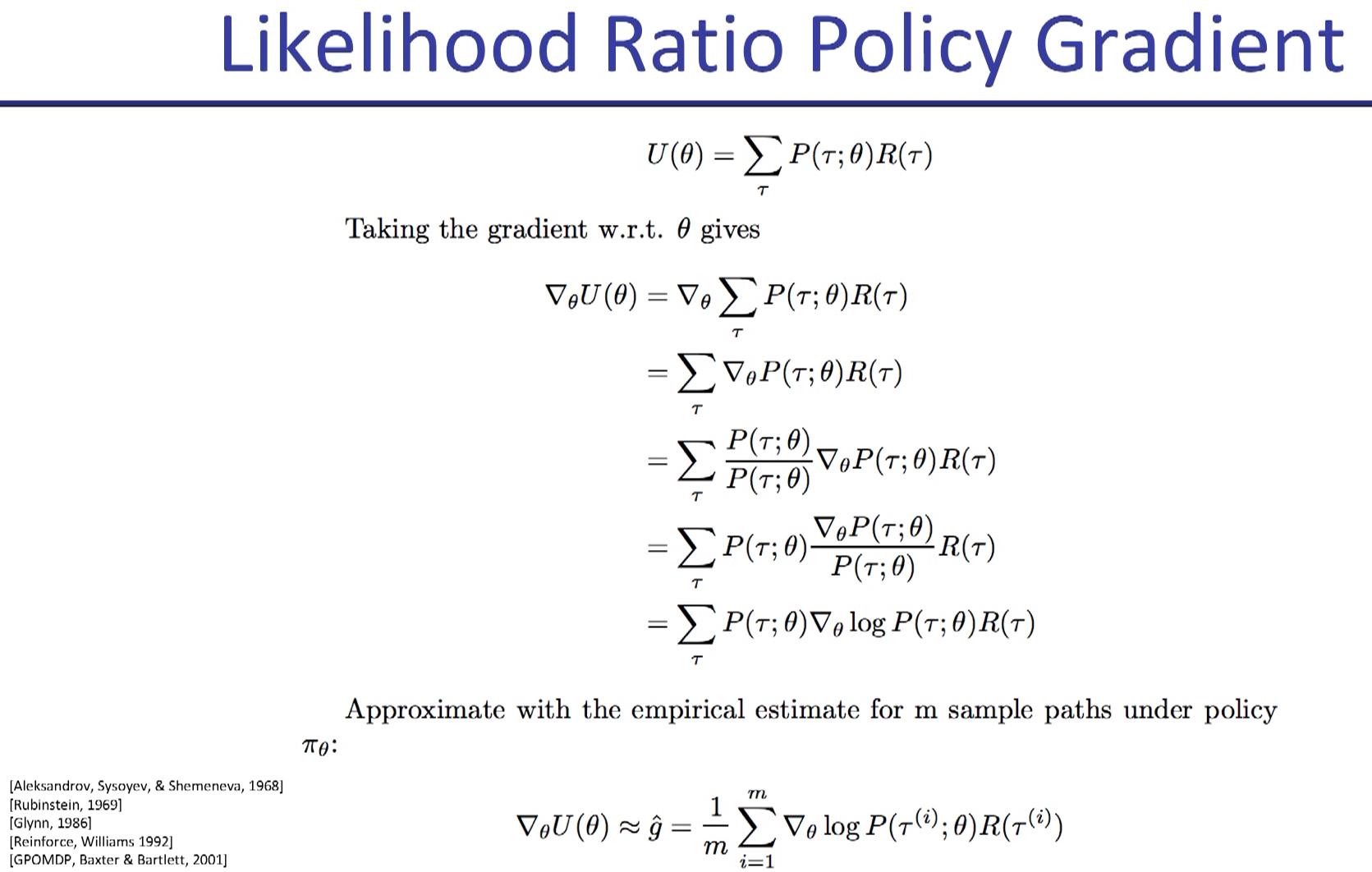

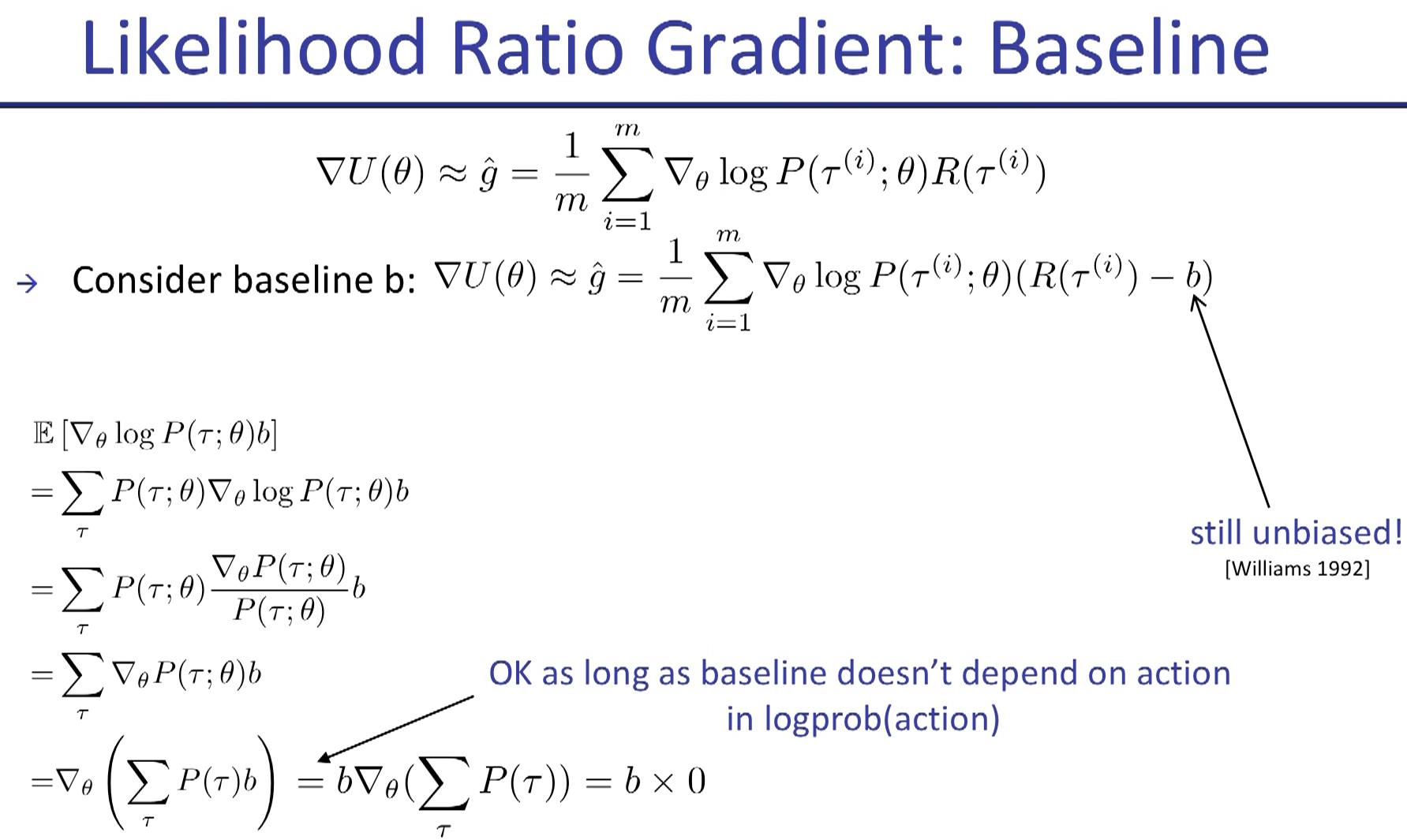

Use this new form to estimate how good an update to the policy parameters is.
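A minimal sketch of that idea, assuming the "new form" is the importance-sampling rewrite $U(\theta) = \mathbb{E}_{\tau \sim \theta_{\text{old}}}\big[\tfrac{P(\tau \mid \theta)}{P(\tau \mid \theta_{\text{old}})} R(\tau)\big]$, which scores a candidate parameter vector using only trajectories sampled from the old policy. To keep it short, each "trajectory" here is a single action from a hypothetical 3-action softmax policy; the function names, logits, and rewards are all illustrative.

```python
import math
import random


def softmax(logits):
    # Numerically stable softmax over a list of logits.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def is_utility_estimate(old_logits, new_logits, rewards, n_samples=100_000, seed=0):
    """Estimate U(theta_new) = E_{u ~ pi_new}[R(u)] from samples drawn under pi_old."""
    rng = random.Random(seed)
    p_old = softmax(old_logits)
    p_new = softmax(new_logits)
    # Sample actions from the OLD policy only.
    actions = rng.choices(range(len(p_old)), weights=p_old, k=n_samples)
    # For a one-step trajectory the ratio P(tau|theta) / P(tau|theta_old) reduces to
    # pi_new(u) / pi_old(u); for longer trajectories the dynamics terms cancel the same way.
    total = sum(p_new[u] / p_old[u] * rewards[u] for u in actions)
    return total / n_samples


def exact_utility(logits, rewards):
    # Exact expected reward of the policy, for comparison.
    return sum(p * r for p, r in zip(softmax(logits), rewards))


old_logits = [0.0, 0.0, 0.0]
new_logits = [0.0, 0.5, 1.0]   # a hypothetical candidate update
rewards = [1.0, 2.0, 3.0]

estimate = is_utility_estimate(old_logits, new_logits, rewards)
print(f"IS estimate of U(new): {estimate:.3f}, exact: {exact_utility(new_logits, rewards):.3f}")
```

The importance weights grow large when the new policy puts probability where the old one rarely sampled, so this estimate is only trustworthy for updates that stay close to the old policy.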

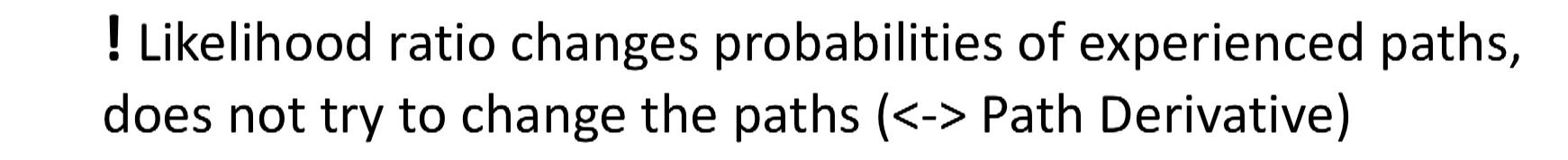

If all three sampled paths have positive reward, should the policy increase the probability of all of them?
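Not necessarily. In expectation the gradient still favors the better paths, but each sampled term pushes up its own path's probability in proportion to $R(\tau)$, which makes the estimate noisy. Subtracting a baseline $b$ (weighting each term by $R(\tau) - b$) leaves the expected gradient unchanged while reducing its variance. The sketch below checks this exactly for a hypothetical one-step problem: a 3-action softmax policy where each action stands in for one of the three paths, all rewards are positive, and $b$ is the average reward. The setup and names are illustrative assumptions, not taken from the lecture.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def grad_log_pi(action, probs):
    # Softmax policy over logits theta: d log pi(action) / d theta_k = 1[k == action] - pi(k)
    return [(1.0 if k == action else 0.0) - p for k, p in enumerate(probs)]


def estimator_stats(logits, rewards, baseline):
    """Exact mean and total variance of the one-sample estimator
    g(u) = grad log pi(u) * (R(u) - baseline), with u ~ pi."""
    probs = softmax(logits)
    n = len(probs)
    samples = [[g * (rewards[u] - baseline) for g in grad_log_pi(u, probs)] for u in range(n)]
    mean = [sum(probs[u] * samples[u][k] for u in range(n)) for k in range(n)]
    var = sum(probs[u] * sum((samples[u][k] - mean[k]) ** 2 for k in range(n)) for u in range(n))
    return mean, var


logits = [0.0, 0.0, 0.0]          # uniform initial policy
rewards = [1.0, 2.0, 3.0]         # all three "paths" have positive reward
b = sum(p * r for p, r in zip(softmax(logits), rewards))  # average reward

mean_no_b, var_no_b = estimator_stats(logits, rewards, baseline=0.0)
mean_b, var_b = estimator_stats(logits, rewards, baseline=b)

print("weights without baseline:", rewards)                    # all positive
print("weights with baseline:   ", [r - b for r in rewards])   # worst pushed down, best pushed up
print("same expected gradient:", mean_no_b, mean_b)
print(f"variance: {var_no_b:.3f} -> {var_b:.3f}")              # 2.889 -> 0.222
```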

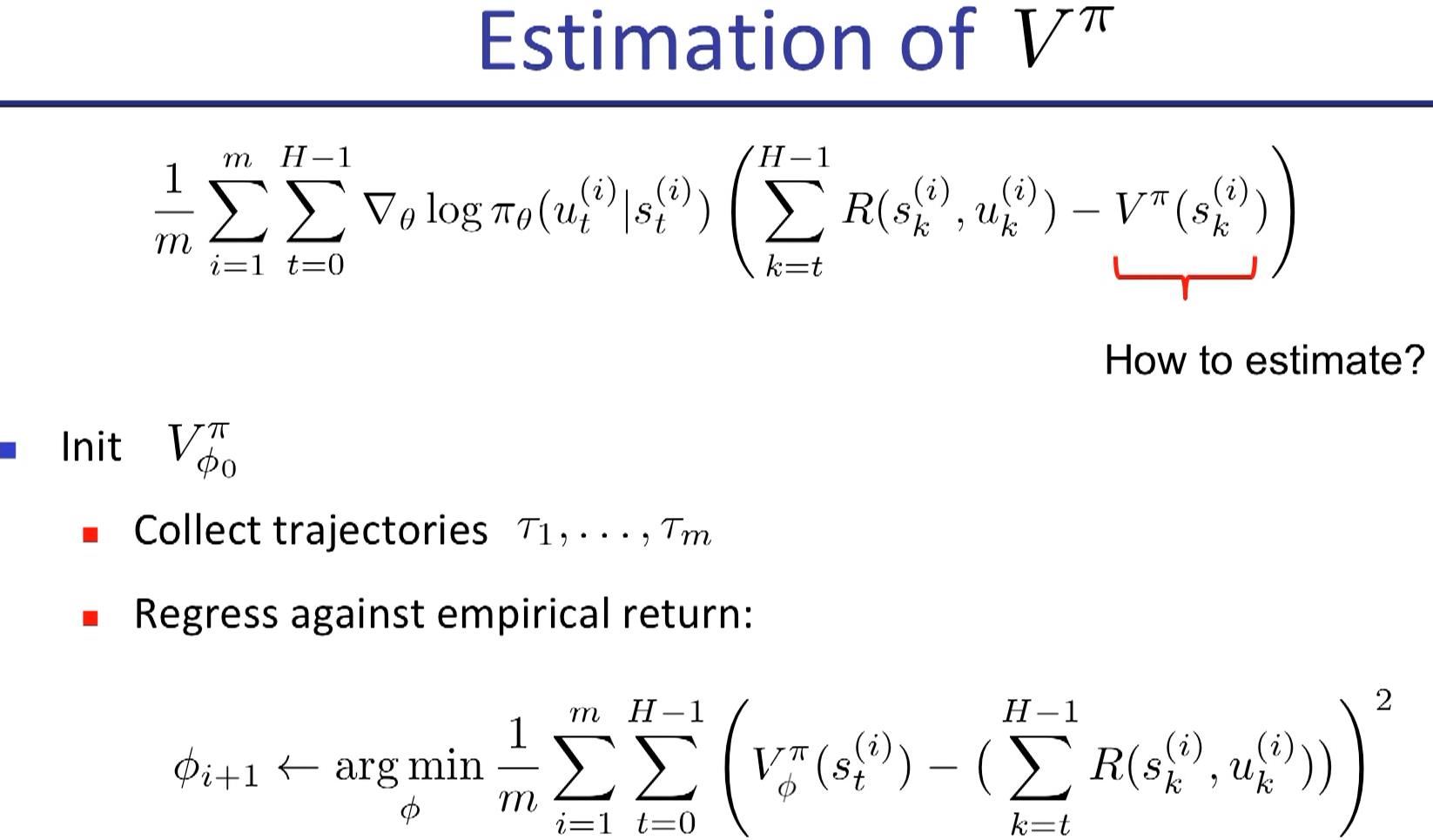

Monte Carlo estimate of $V^\pi$

TD (temporal-difference) estimate of $V^\pi$
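To make the difference concrete, here is a minimal tabular sketch, assuming a lookup table `V` rather than the fitted value function one would use in practice. The Monte Carlo update moves $V(s_t)$ toward the full observed return $G_t$, while the TD(0) update moves it toward the bootstrapped target $r_t + \gamma V(s_{t+1})$. The three-state chain and all names are hypothetical.

```python
from collections import defaultdict


def discounted_returns(rewards, gamma):
    # G_t = r_t + gamma * G_{t+1}, computed backwards from the end of the episode.
    g = 0.0
    out = []
    for r in reversed(rewards):
        g = r + gamma * g
        out.append(g)
    return out[::-1]


def mc_update(V, states, rewards, gamma, alpha):
    # Monte Carlo: move V(s_t) toward the actual return observed from s_t.
    for s, g in zip(states, discounted_returns(rewards, gamma)):
        V[s] += alpha * (g - V[s])
    return V


def td0_update(V, states, rewards, next_states, dones, gamma, alpha):
    # TD(0): move V(s_t) toward r_t + gamma * V(s_{t+1}), bootstrapping on the current estimate.
    for s, r, s_next, done in zip(states, rewards, next_states, dones):
        target = r if done else r + gamma * V[s_next]
        V[s] += alpha * (target - V[s])
    return V


# One episode on a chain A -> B -> C -> end, with reward only at the last step.
states = ["A", "B", "C"]
rewards = [0.0, 0.0, 1.0]
next_states = ["B", "C", None]
dones = [False, False, True]

V_mc = mc_update(defaultdict(float), states, rewards, gamma=1.0, alpha=1.0)
V_td = td0_update(defaultdict(float), states, rewards, next_states, dones, gamma=1.0, alpha=1.0)
print({s: V_mc[s] for s in states})  # {'A': 1.0, 'B': 1.0, 'C': 1.0}
print({s: V_td[s] for s in states})  # {'A': 0.0, 'B': 0.0, 'C': 1.0}
```

After one pass, Monte Carlo has already propagated the final reward back to A, while TD moves it backward one step per pass. In exchange, TD targets have lower variance and can be computed before the episode ends.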

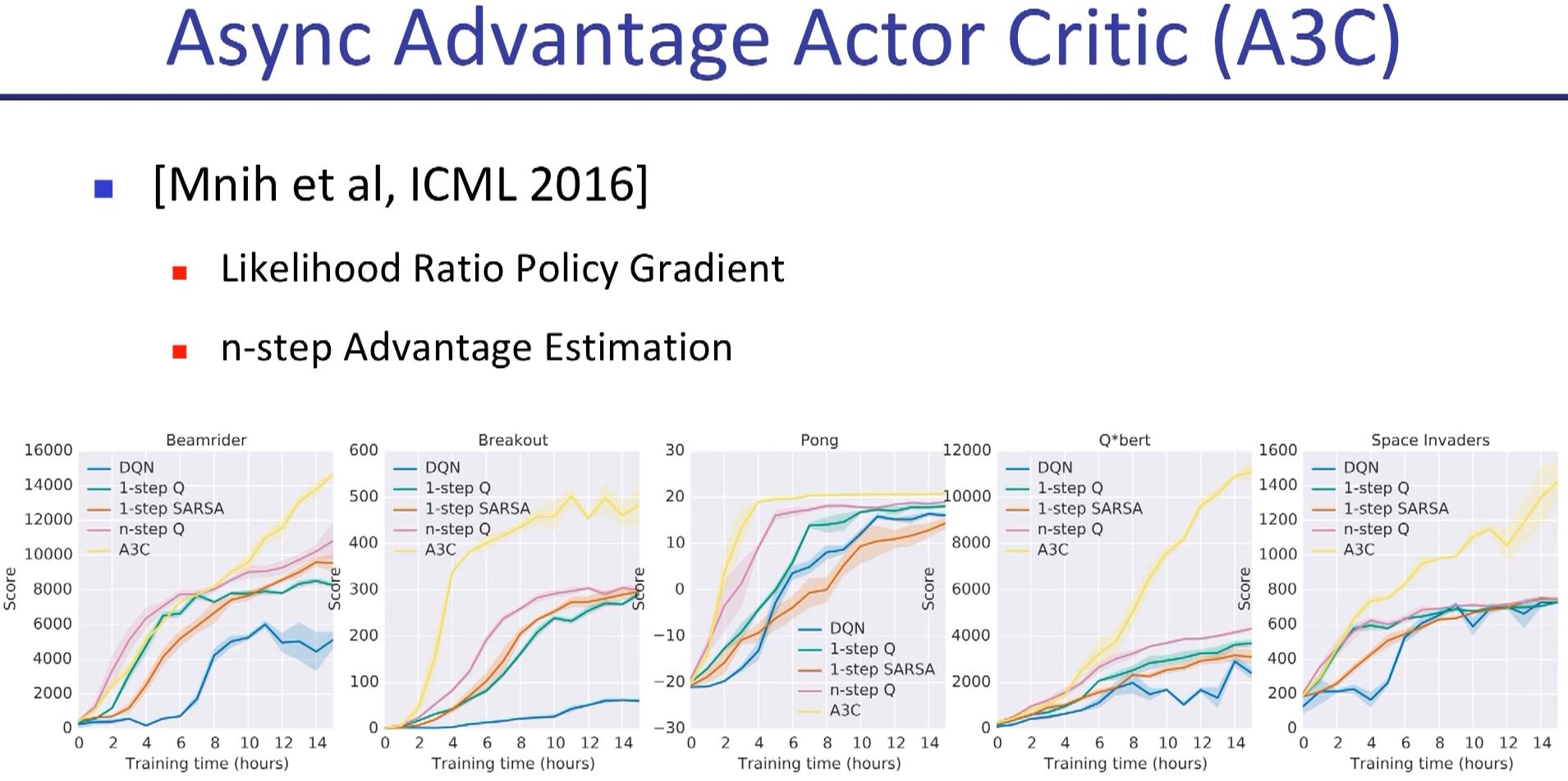

In real-world time, this would take 2 weeks to train.

But it could be much faster in a simulator (MuJoCo).

We don't know whether a set of hyperparameters is going to work until enough iterations have passed. This makes tuning tricky, and using a simulator can alleviate the problem.

Question: how can a robot's learned knowledge be transferred to the real world if we are not sure how well the simulator matches reality?

Answer: randomly initialize many simulators and check how robust the algorithm is across them.
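A minimal sketch of that check, assuming a made-up 1-D point-mass "simulator" whose mass and friction are drawn at random for each instance, and a fixed PD controller standing in for the learned policy. Everything here (dynamics, parameter ranges, gains) is hypothetical; the point is only the pattern of evaluating one policy across many randomized simulators and looking at the worst case as well as the average.

```python
import random


def simulate(gains, mass, friction, steps=200, dt=0.05):
    """Hypothetical point mass starting at x = 1; the policy tries to bring it to x = 0."""
    kp, kd = gains
    x, v = 1.0, 0.0
    for _ in range(steps):
        u = -kp * x - kd * v                # the fixed policy being evaluated (a PD controller)
        accel = (u - friction * v) / mass   # dynamics depend on the randomized parameters
        v += dt * accel                     # semi-implicit Euler step
        x += dt * v
    return abs(x)                           # final distance to the target: lower is better


def evaluate_randomized(gains, n_sims=100, seed=0):
    """Run the same policy in many randomly initialized simulators."""
    rng = random.Random(seed)
    costs = []
    for _ in range(n_sims):
        mass = rng.uniform(0.5, 2.0)
        friction = rng.uniform(0.0, 0.5)
        costs.append(simulate(gains, mass, friction))
    return sum(costs) / len(costs), max(costs)


mean_cost, worst_cost = evaluate_randomized(gains=(4.0, 3.0))
print(f"mean final error {mean_cost:.2e}, worst {worst_cost:.2e}")
```

A policy that only looks good on average but fails badly in a few randomized simulators is a warning sign that it may not survive the mismatch with the real robot.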

This video shows that even a robot built over two years of work by a team of experts still isn't good at locomotion.

Hindsight experience replay (HER)

Marcin Andrychowicz from OpenAI

The agent is set up to find the best way to get pizza, but it ends up finding ice cream instead. In hindsight, the agent treats the ice cream as if it had been the goal all along, so the episode still teaches it something useful instead of counting as a plain failure.
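A minimal sketch of the relabeling step, assuming the "final" strategy (reuse the last state actually reached as a substitute goal), states that double as goals, and a sparse reward of 0 on reaching the goal and -1 otherwise. The episode and all names are hypothetical stand-ins for the pizza/ice-cream example.

```python
from typing import Hashable, List, NamedTuple, Tuple


class Transition(NamedTuple):
    state: Hashable
    action: Hashable
    next_state: Hashable
    goal: Hashable
    reward: float


def sparse_reward(achieved: Hashable, goal: Hashable) -> float:
    # 0 when the goal is reached, -1 otherwise.
    return 0.0 if achieved == goal else -1.0


def her_final(episode: List[Tuple[Hashable, Hashable, Hashable]], goal: Hashable) -> List[Transition]:
    """Store each transition twice: once with the original goal and once with the
    goal replaced, in hindsight, by the state the episode actually ended in."""
    achieved = episode[-1][2]
    buffer = []
    for s, a, s_next in episode:
        buffer.append(Transition(s, a, s_next, goal, sparse_reward(s_next, goal)))
        buffer.append(Transition(s, a, s_next, achieved, sparse_reward(s_next, achieved)))
    return buffer


episode = [("start", "left", "hallway"), ("hallway", "left", "ice_cream")]
for t in her_final(episode, goal="pizza"):
    print(t.goal, t.next_state, t.reward)
```

Every transition aimed at pizza gets reward -1, but the relabeled copies include a successful "reach ice cream" transition that an off-policy learner can train on.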

https://zhuanlan.zhihu.com/p/29486661

https://zhuanlan.zhihu.com/p/31527085
