Artificial Intelligence Practice: TensorFlow Notes, Week 5

Posted lijinfrank


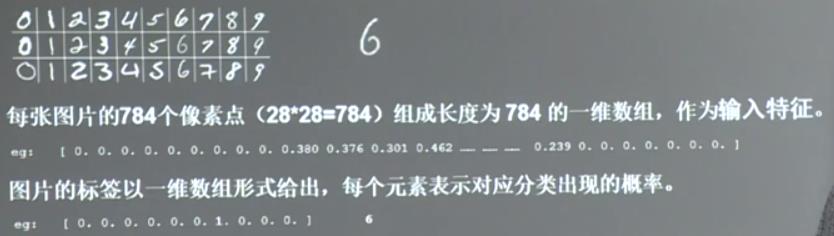

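Week 5: MNIST Digit Recognition

The MNIST dataset:

Provides 60,000 images of handwritten digits 0-9, each 28×28 pixels, with labels, for training.

Provides 10,000 images of handwritten digits 0-9, each 28×28 pixels, with labels, for testing.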

The following two lines download the dataset:

    from tensorflow.examples.tutorials.mnist import input_data
    mnist = input_data.read_data_sets('./data/', one_hot=True)

Output:

    Successfully downloaded train-images-idx3-ubyte.gz 9912422 bytes.
    Extracting ./data/train-images-idx3-ubyte.gz
    Successfully downloaded train-labels-idx1-ubyte.gz 28881 bytes.
    Extracting ./data/train-labels-idx1-ubyte.gz
    Successfully downloaded t10k-images-idx3-ubyte.gz 1648877 bytes.
    Extracting ./data/t10k-images-idx3-ubyte.gz
    Successfully downloaded t10k-labels-idx1-ubyte.gz 4542 bytes.
    Extracting ./data/t10k-labels-idx1-ubyte.gz

Print the number of samples in each subset:

    print("train data size:", mnist.train.num_examples)
    print("validation data size:", mnist.validation.num_examples)
    print("test data size:", mnist.test.num_examples)

Output:

    train data size: 55000
    validation data size: 5000
    test data size: 10000

The 60,000 training images are split into 55,000 for training and 5,000 for validation.

mnist.train.images[0] returns the pixel values of image 0, and mnist.train.labels[0] returns the label of image 0.
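Because the data is loaded with one_hot=True, each label is a length-10 vector rather than a single digit, and each image is a flat vector of 784 pixel values. The following is a minimal plain-Python sketch of those two representations, without TensorFlow; the helper names `one_hot` and `decode` are made up for illustration and are not part of the MNIST loader.

```python
# Plain-Python sketch (no TensorFlow) of the label and image layout.
# `one_hot` and `decode` are hypothetical helpers used only for illustration.

def one_hot(digit, num_classes=10):
    """Return a list with 1.0 at index `digit` and 0.0 elsewhere."""
    return [1.0 if i == digit else 0.0 for i in range(num_classes)]

def decode(label):
    """Return the index of the largest entry, i.e. the digit it encodes."""
    return max(range(len(label)), key=lambda i: label[i])

label = one_hot(7)
print(label)
print(decode(label))  # 7

# A 28x28 image flattened row by row has 28 * 28 = 784 entries,
# which is the second dimension of mnist.train.images.
image = [[0.0] * 28 for _ in range(28)]
flat = [pixel for row in image for pixel in row]
print(len(flat))  # 784
```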

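To feed the network one small batch at a time, define BATCH_SIZE and call mnist.train.next_batch(BATCH_SIZE). It randomly draws BATCH_SIZE images and labels from the training set, which are assigned to xs and ys:

    BATCH_SIZE = 200  # number of samples in one batch
    xs, ys = mnist.train.next_batch(BATCH_SIZE)
    print("xs shape:", xs.shape)  # shape of the image batch

Output:

    xs shape: (200, 784)

    print("ys shape:", ys.shape)  # shape of the label batch

Output:

    ys shape: (200, 10)

Some operations used later: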

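tf.get_collection(""): fetches all variables in the named collection and returns them as a list.

tf.add_n([]): adds the tensors in the list element-wise.

tf.cast(x, dtype): converts x to type dtype.

tf.argmax(x, axis): returns the index of the largest value along axis; for example, tf.argmax([1, 0, 0], 0) returns 0.

os.path.join("home", "name"): returns home/name, joining home and name with the platform's path separator.

string.split(): splits the string at the given separator and returns the list of pieces; for example, './model/mnist_model-1001'.split('-')[-1] returns '1001'.

with tf.Graph().as_default() as g: nodes defined inside this block belong to computation graph g.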
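Several of the operations listed above can be mimicked in plain Python to see what they return. This is only a sketch for 1-D lists: `add_n` and `argmax` here are local stand-ins written for illustration, not the TensorFlow functions, and the checkpoint name is built from the example string used above.

```python
import os

def add_n(tensors):
    """Element-wise sum of equally sized lists, like tf.add_n on 1-D tensors."""
    return [sum(values) for values in zip(*tensors)]

def argmax(values):
    """Index of the first largest element, like tf.argmax on a 1-D tensor."""
    return max(range(len(values)), key=lambda i: values[i])

print(add_n([[1, 2], [3, 4], [5, 6]]))  # [9, 12]
print(argmax([1, 0, 0]))                # 0

# Build a checkpoint-style name, then recover the step count from it.
checkpoint = os.path.join("model", "mnist_model") + "-1001"
print(checkpoint.split("-")[-1])        # 1001
```

Note that split returns strings, so the recovered step is the text '1001' rather than an integer.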

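Saving the model:

    saver = tf.train.Saver()  # instantiate the saver object
    with tf.Session() as sess:  # inside the training loop, save the current session every N steps
        for i in range(STEPS):
            if i % N == 0:  # N is a placeholder for the save interval; the file is named MODEL_SAVE_PATH/MODEL_NAME-global_step
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

Loading the model: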

    with tf.Session() as sess:
        ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)  # directory the checkpoints were saved to
        if ckpt and ckpt.model_checkpoint_path:
            saver.restore(sess, ckpt.model_checkpoint_path)

Instantiating a saver that restores the moving averages:

    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY)  # moving-average decay rate
    ema_restore = ema.variables_to_restore()
    saver = tf.train.Saver(ema_restore)

Computing accuracy:

    correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

5.2 A Modular Template for Building Neural Networks

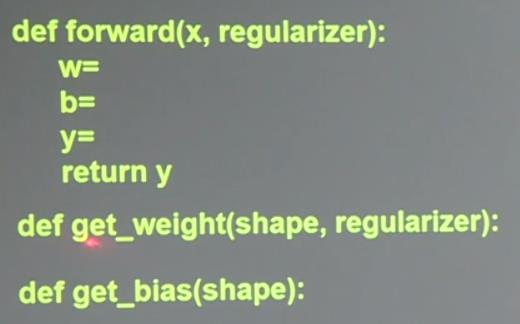

1. Forward propagation

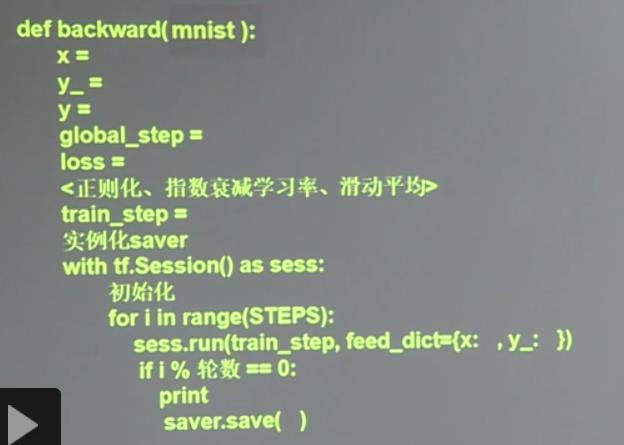
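The forward pass used in these notes (see forward in the complete code) is a two-layer network: a relu hidden layer followed by a linear output layer. As a rough sketch of that computation for a single sample, here it is in plain Python on a tiny 2-3-2 network; the sizes, weights, and the `dense` helper are invented for illustration and stand in for the 784-500-10 TensorFlow version.

```python
def relu(values):
    """Element-wise max(0, v)."""
    return [max(0.0, v) for v in values]

def dense(x, w, b):
    """Compute x.w + b for one sample; w is a list of rows (inputs x outputs)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) + b[j]
            for j in range(len(b))]

def forward(x, w1, b1, w2, b2):
    """Hidden layer with relu, then a linear output layer (no activation)."""
    hidden = relu(dense(x, w1, b1))
    return dense(hidden, w2, b2)

# Tiny 2-3-2 example with hand-picked weights.
x = [1.0, -2.0]
w1 = [[1.0, 0.0, -1.0],
      [0.0, 1.0, 1.0]]
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 0.0],
      [0.0, 1.0],
      [1.0, 1.0]]
b2 = [0.5, -0.5]
print(forward(x, w1, b1, w2, b2))  # [1.5, -0.5]
```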

2. Backward propagation

Some commonly used modules

1. Loss function (loss) with regularization
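The regularized loss in this module is the mean softmax cross entropy over the batch plus the sum of the L2 penalties on the weights. A minimal plain-Python sketch of that arithmetic follows; the function names are made up for illustration, and the L2 term is written as scale * sum(w^2) / 2, which is the form tf.contrib.layers.l2_regularizer computes.

```python
import math

def softmax_cross_entropy(logits, label_index):
    """Cross entropy of softmax(logits) against an integer class label."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = math.log(sum(math.exp(v - peak) for v in logits))
    return -(logits[label_index] - peak - log_sum)

def l2_penalty(weights, scale):
    """scale * sum(w^2) / 2 over a flat list of weights."""
    return scale * sum(w * w for w in weights) / 2.0

def total_loss(batch_logits, batch_labels, weight_lists, scale):
    """Mean cross entropy over the batch plus the summed L2 penalties."""
    ce = [softmax_cross_entropy(logits, label)
          for logits, label in zip(batch_logits, batch_labels)]
    cem = sum(ce) / len(ce)
    return cem + sum(l2_penalty(w, scale) for w in weight_lists)

# Two equal logits give a cross entropy of log(2); the penalty adds 0.1 * 5 / 2.
print(total_loss([[0.0, 0.0]], [0], [[1.0, 2.0]], 0.1))
```

The integer label passed to softmax_cross_entropy plays the role of tf.argmax(y_, 1), which turns a one-hot label back into a class index.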

Add to the backward propagation code:

    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    loss = cem + tf.add_n(tf.get_collection('losses'))

Add to the forward propagation code:

    if regularizer is not None:
        tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))

2. Learning rate (learning_rate)
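An exponentially decaying learning rate follows base * decay_rate ^ (global_step / decay_steps); with staircase=True the exponent is truncated to an integer, so the rate drops in steps. The sketch below reproduces that formula in plain Python. The function is a local stand-in written for illustration, and the value 275 assumes 55,000 training samples with a batch size of 200, as used later in these notes.

```python
def exponential_decay(base, global_step, decay_steps, decay_rate, staircase=False):
    """Learning rate after `global_step` steps of exponential decay."""
    if staircase:
        exponent = global_step // decay_steps  # integer number of completed decay periods
    else:
        exponent = global_step / decay_steps
    return base * decay_rate ** exponent

# 55000 samples / batch size 200 = 275 steps per decay period (assumed values).
for step in (0, 274, 275, 550):
    print(step, exponential_decay(0.1, step, 275, 0.99, staircase=True))
```

With staircase=True the rate stays at 0.1 for the first 274 steps and is multiplied by 0.99 once every 275 steps.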

Add to the backward propagation code:

    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        LEARNING_RATE_STEP,
        LEARNING_RATE_DECAY,
        staircase=True)

3. Moving average (ema)
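A moving average keeps a "shadow" copy of each parameter that is updated as shadow = decay * shadow + (1 - decay) * value. When a step counter is supplied, tf.train.ExponentialMovingAverage uses min(decay, (1 + num_updates) / (10 + num_updates)) as the effective decay, so the shadow value tracks the parameter more quickly early in training. The following plain-Python sketch shows one update; `ema_update` is a made-up name for illustration.

```python
def ema_update(shadow, value, decay, num_updates=None):
    """Return the new shadow value after one moving-average update."""
    if num_updates is not None:
        # Early in training the effective decay is smaller than `decay`.
        decay = min(decay, (1.0 + num_updates) / (10.0 + num_updates))
    return decay * shadow + (1.0 - decay) * value

# Track a parameter that jumps from 0 to 1 and then stays there.
shadow = 0.0
for step in range(5):
    shadow = ema_update(shadow, 1.0, 0.99, num_updates=step)
    print(step, shadow)
```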

反向传播中加入

ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY,global_step)

ema_op = ema.apply(tf.trainable_variables())

with tf.control_dependencies([train_step,ema_op]):

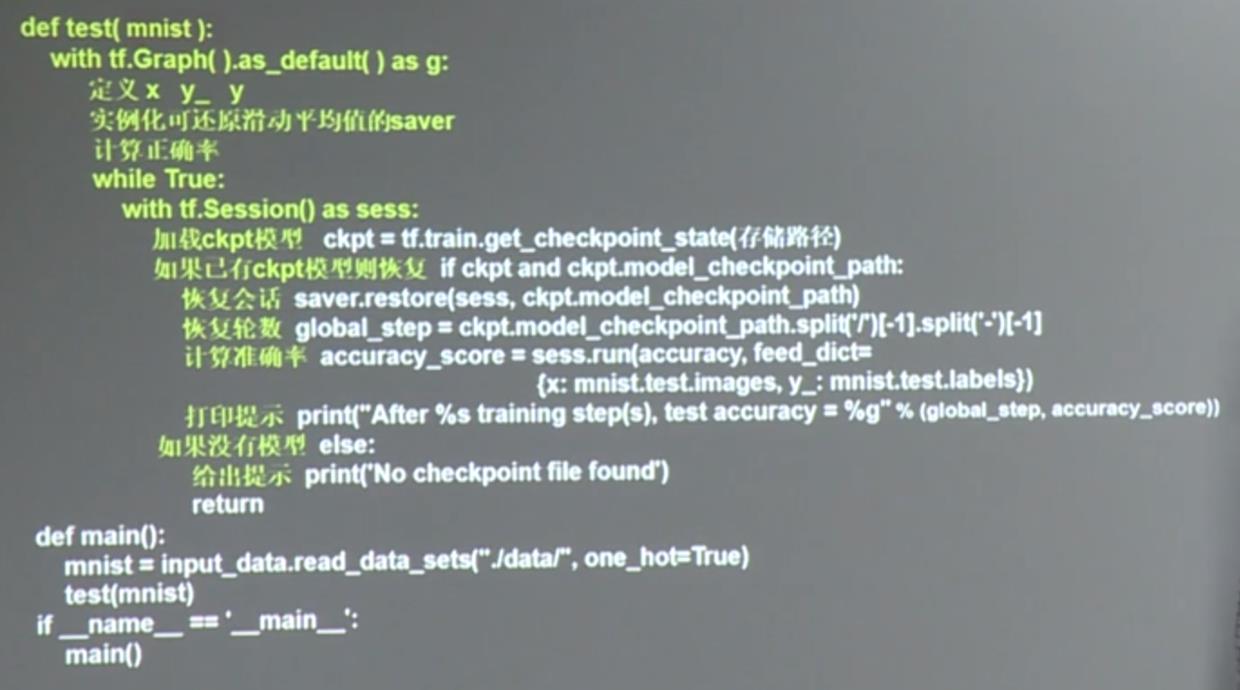

train_op = tf.no_op(name=‘train‘)3.测试代码

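5.3 Handwritten Digit Recognition Accuracy

Code walkthrough:

Forward propagation: mnist_forward.py

Backward propagation: mnist_backward.py

Test that prints the accuracy: mnist_test.py

TensorFlow syntax used in the code:

1. tf.truncated_normal(shape, mean, stddev):

shape is the shape of the generated tensor, mean is the mean, and stddev is the standard deviation. The function draws values from a normal distribution whose mean and standard deviation you choose, but the distribution is truncated: any value that differs from the mean by more than two standard deviations is discarded and redrawn. An ordinary normal sampler can produce values arbitrarily far from the mean, whereas values from this function never differ from the mean by more than two standard deviations.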
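The redraw rule described above can be sketched in plain Python with rejection sampling. This is an illustration of the idea only, not TensorFlow's implementation; the function below returns a single float rather than a tensor, and the seed is arbitrary.

```python
import random

def truncated_normal(mean=0.0, stddev=1.0, rng=random):
    """Draw from N(mean, stddev), redrawing until within 2 stddev of the mean."""
    while True:
        value = rng.gauss(mean, stddev)
        if abs(value - mean) <= 2.0 * stddev:
            return value

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [truncated_normal(0.0, 0.1, rng) for _ in range(1000)]
print(max(abs(s) for s in samples) <= 0.2)  # True
```

With stddev=0.1, as used for the weights in these notes, every sample lies in [-0.2, 0.2].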

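2. tf.train.Saver:

TensorFlow saves and restores neural network models through the tf.train.Saver class. The save method of a tf.train.Saver object writes the TensorFlow model to the given path.

3. tf.equal(A, B): compares two matrices or vectors element by element, returning True where the elements are equal and False otherwise. The result has the same shape as A.

4. tf.cast():

cast(x, dtype, name=None) converts x to type dtype. For example, a bool tensor cast to tf.float32 becomes a tensor of 1.0 and 0.0.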
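Taken together, tf.argmax, tf.equal, tf.cast and tf.reduce_mean give the accuracy: compare predicted and true class indices, turn the booleans into 1.0 and 0.0, and average them. A plain-Python sketch of those three steps on a made-up batch of four predictions:

```python
def argmax(values):
    """Index of the largest element."""
    return max(range(len(values)), key=lambda i: values[i])

def accuracy(predictions, labels):
    """Fraction of rows whose predicted class matches the one-hot label."""
    correct = [argmax(p) == argmax(l) for p, l in zip(predictions, labels)]  # like tf.equal
    as_float = [float(c) for c in correct]                                   # like tf.cast
    return sum(as_float) / len(as_float)                                     # like tf.reduce_mean

predictions = [[0.1, 0.9], [0.8, 0.2], [0.3, 0.7], [0.6, 0.4]]
labels = [[0, 1], [1, 0], [1, 0], [0, 1]]
print(accuracy(predictions, labels))  # 0.5
```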

5. The usual way to load a TensorFlow model

Complete code

1. Loading the dataset

    from tensorflow.examples.tutorials.mnist import input_data
    mnist = input_data.read_data_sets('./data/', one_hot=True)

mnist_forward.py

    import tensorflow as tf

    INPUT_NODE = 784    # each image is 28*28 = 784 pixels, so there are 784 input nodes
    OUTPUT_NODE = 10    # 10 output nodes, one for each digit 0-9
    LAYER1_NODE = 500   # 500 nodes in the hidden layer

    def get_weight(shape, regularizer):
        # initialize w from a truncated normal distribution with standard deviation 0.1
        w = tf.Variable(tf.truncated_normal(shape, stddev=0.1))
        if regularizer is not None:  # add the L2 penalty to the 'losses' collection when requested
            tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
        return w

    def get_bias(shape):  # create the bias
        b = tf.Variable(tf.zeros(shape))  # initialize the bias to 0
        return b

    def forward(x, regularizer):
        w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)  # w1 has shape 784*500
        b1 = get_bias([LAYER1_NODE])  # hidden-layer bias b1 has shape [500]
        y1 = tf.nn.relu(tf.matmul(x, w1) + b1)  # the hidden layer uses the relu activation
        w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)  # w2 has shape 500*10
        b2 = get_bias([OUTPUT_NODE])  # output-layer bias b2 has shape [10]
        y = tf.matmul(y1, w2) + b2  # the output layer has no activation function
        return y

mnist_backward.py

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    import mnist_forward
    import os

    BATCH_SIZE = 200                # number of samples fed to the network per step
    LEARNING_RATE_BASE = 0.1        # base learning rate
    LEARNING_RATE_DECAY = 0.99      # learning rate decay rate
    REGULARIZER = 0.0001            # regularization coefficient
    STEPS = 50000                   # number of training steps
    MOVING_AVERAGE_DECAY = 0.99     # moving-average decay rate
    MODEL_SAVE_PATH = "./model/"    # directory the model is saved to
    MODEL_NAME = "mnist_model"      # file name the model is saved under

    def backward(mnist):
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])    # placeholder for x, shape None*784
        y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])  # placeholder for y_, shape None*10
        y = mnist_forward.forward(x, REGULARIZER)       # run forward propagation
        global_step = tf.Variable(0, trainable=False)   # step counter, starts at 0 and is not trainable

        # loss function including regularization
        ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
        cem = tf.reduce_mean(ce)
        loss = cem + tf.add_n(tf.get_collection('losses'))

        # exponentially decaying learning rate
        learning_rate = tf.train.exponential_decay(
            LEARNING_RATE_BASE,
            global_step,
            mnist.train.num_examples / BATCH_SIZE,
            LEARNING_RATE_DECAY,
            staircase=True)

        # training step
        train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

        # moving average
        ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
        ema_op = ema.apply(tf.trainable_variables())
        with tf.control_dependencies([train_step, ema_op]):
            train_op = tf.no_op(name='train')

        saver = tf.train.Saver()  # create a Saver to manage all variables in the model

        with tf.Session() as sess:
            # initialize all variables
            init_op = tf.global_variables_initializer()
            sess.run(init_op)

            for i in range(STEPS):
                xs, ys = mnist.train.next_batch(BATCH_SIZE)  # read BATCH_SIZE images and labels per step
                _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
                if i % 1000 == 0:
                    print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
                    # save the current session to a checkpoint
                    saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

    def main():
        mnist = input_data.read_data_sets("./data/", one_hot=True)
        backward(mnist)

    if __name__ == '__main__':
        main()

mnist_test.py

    #coding:utf-8
    import time
    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    import mnist_forward
    import mnist_backward

    TEST_INTERVAL_SECS = 5  # wait 5 seconds between evaluation rounds

    def test(mnist):
        with tf.Graph().as_default() as g:  # rebuild the computation graph
            x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
            y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
            y = mnist_forward.forward(x, None)  # forward propagation, no regularization

            # instantiate a saver that restores moving averages, so that each
            # parameter is loaded with its moving-average value
            ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
            ema_restore = ema.variables_to_restore()
            saver = tf.train.Saver(ema_restore)

            # compute accuracy
            correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
            accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

            while True:
                with tf.Session() as sess:
                    # look up the latest checkpoint in the save directory
                    ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
                    if ckpt and ckpt.model_checkpoint_path:
                        saver.restore(sess, ckpt.model_checkpoint_path)
                        # the step count is the suffix of the checkpoint file name
                        global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                        accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
                        print("After %s training step(s), test accuracy = %g" % (global_step, accuracy_score))
                    else:
                        print('No checkpoint file found')
                        return
                time.sleep(TEST_INTERVAL_SECS)  # pause before the next round

    def main():
        mnist = input_data.read_data_sets("./data/", one_hot=True)  # load the dataset
        test(mnist)  # call the test function

    if __name__ == '__main__':
        main()