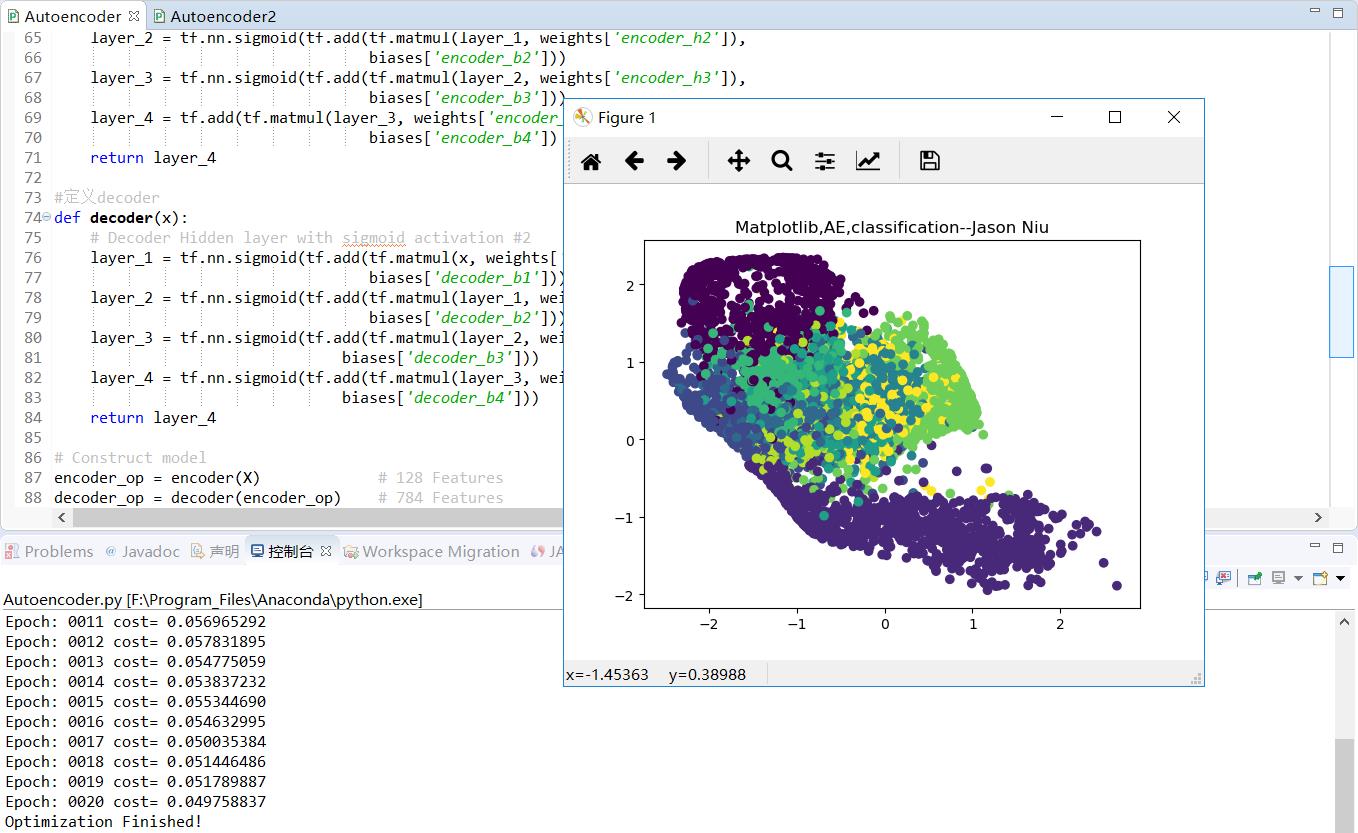

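TF AE: Using an autoencoder on a TensorFlow built-in dataset for unsupervised classification from the code after the encoder and before the decoder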

Posted by 一个处女座的IT


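import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

# Import MNIST data (tensorflow.examples.tutorials is only available in TensorFlow 1.x)
from tensorflow.examples.tutorials.mnist import input_data

# one_hot=False keeps the labels as integers 0-9, used later to color the scatter plot
mnist = input_data.read_data_sets("/niu/mnist_data/", one_hot=False)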

# Parameters
learning_rate = 0.001
training_epochs = 20
batch_size = 256
display_step = 1
examples_to_show = 10

# Network parameters
n_input = 784  # MNIST data input (img shape: 28*28 pixels, i.e. 784 features)

# tf Graph input (only pictures)
X = tf.placeholder("float", [None, n_input])

# Hidden layer settings
n_hidden_1 = 128
n_hidden_2 = 64
n_hidden_3 = 10
n_hidden_4 = 2

weights = {
    'encoder_h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
    'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
    'encoder_h3': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_3])),
    'encoder_h4': tf.Variable(tf.random_normal([n_hidden_3, n_hidden_4])),
    'decoder_h1': tf.Variable(tf.random_normal([n_hidden_4, n_hidden_3])),
    'decoder_h2': tf.Variable(tf.random_normal([n_hidden_3, n_hidden_2])),
    'decoder_h3': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_1])),
    'decoder_h4': tf.Variable(tf.random_normal([n_hidden_1, n_input])),
}

biases = {
    'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
    'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
    'encoder_b3': tf.Variable(tf.random_normal([n_hidden_3])),
    'encoder_b4': tf.Variable(tf.random_normal([n_hidden_4])),
    'decoder_b1': tf.Variable(tf.random_normal([n_hidden_3])),
    'decoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
    'decoder_b3': tf.Variable(tf.random_normal([n_hidden_1])),
    'decoder_b4': tf.Variable(tf.random_normal([n_input])),
}
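To get a feel for the size of the network that the `weights` and `biases` dictionaries describe, here is a minimal sketch in plain Python. It walks the layer widths 784 → 128 → 64 → 10 → 2 → 10 → 64 → 128 → 784 and counts trainable parameters. The names `layer_sizes` and `count_parameters` are illustrative and not part of the original script.

```python
# Layer widths taken from the script: input, four encoder layers, then the mirrored decoder.
layer_sizes = [784, 128, 64, 10, 2, 10, 64, 128, 784]


def count_parameters(sizes):
    """Return (per_layer, total) parameter counts for fully connected layers."""
    per_layer = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        # One weight matrix of shape [fan_in, fan_out] plus one bias vector of length fan_out.
        per_layer.append(fan_in * fan_out + fan_out)
    return per_layer, sum(per_layer)


per_layer, total = count_parameters(layer_sizes)
for fan_in, fan_out, n in zip(layer_sizes[:-1], layer_sizes[1:], per_layer):
    print(f"{fan_in:>4} -> {fan_out:<4} {n:>7} parameters")
print("total:", total)
```

Almost all of the parameters sit in the first encoder layer and the last decoder layer; the 2-unit bottleneck itself contributes only a few dozen.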

def encoder(x):
    # Encoder hidden layers with sigmoid activation
    layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                   biases['encoder_b1']))
    layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                   biases['encoder_b2']))
    layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']),
                                   biases['encoder_b3']))
    # The last encoder layer is linear (no sigmoid); it outputs the 2-D code
    layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']),
                     biases['encoder_b4'])
    return layer_4

# Define the decoder
def decoder(x):
    # Decoder hidden layers with sigmoid activation
    layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                   biases['decoder_b1']))
    layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                   biases['decoder_b2']))
    layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']),
                                   biases['decoder_b3']))
    layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']),
                                   biases['decoder_b4']))
    return layer_4
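Note that the last encoder layer has no sigmoid, while every decoder layer does. A plausible reason (an interpretation, not something the original post states) is that a sigmoid would confine each of the 2 code values to the interval (0, 1), whereas a linear layer lets the codes spread freely in the plane. The decoder's final sigmoid, by contrast, matches the [0, 1] range of the MNIST pixels. The small sketch below uses only the standard library; `sigmoid` is an illustrative helper.

```python
import math


def sigmoid(z):
    """Logistic function, the nonlinearity that tf.nn.sigmoid applies elementwise."""
    return 1.0 / (1.0 + math.exp(-z))


pre_activations = [-6.0, -1.0, 0.0, 1.0, 6.0]

# Linear bottleneck: values pass through unchanged and keep their full range.
linear_codes = list(pre_activations)

# Sigmoid bottleneck: every value is squashed into the open interval (0, 1).
sigmoid_codes = [sigmoid(z) for z in pre_activations]

print("linear :", linear_codes)
print("sigmoid:", [round(v, 4) for v in sigmoid_codes])
```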

# Construct model
encoder_op = encoder(X)           # 2 features (the bottleneck code)
decoder_op = decoder(encoder_op)  # 784 features

# Prediction
y_pred = decoder_op  # After reconstruction

# Targets (labels) are the input data.
y_true = X  # Before encoding

# Mean squared reconstruction error
cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))
optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)
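The cost is the mean squared error between the input and its reconstruction, averaged over every pixel of every image in the batch. As a sketch of the same arithmetic in plain Python (the helper name `mean_squared_error` and the toy values are made up for illustration):

```python
def mean_squared_error(y_true, y_pred):
    """Mean of squared differences over all elements of a 2-D batch (list of rows)."""
    squared = [
        (t - p) ** 2
        for row_true, row_pred in zip(y_true, y_pred)
        for t, p in zip(row_true, row_pred)
    ]
    return sum(squared) / len(squared)


# Two toy "images" with three pixels each, values in [0, 1] like MNIST.
y_true = [[0.0, 1.0, 0.5], [1.0, 0.0, 0.0]]
y_pred = [[0.0, 0.5, 0.5], [1.0, 0.5, 0.0]]

mse = mean_squared_error(y_true, y_pred)
print(mse)
```

Because the target is the input itself, no labels enter the cost, which is what makes the training unsupervised.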

# Launch the graph
with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    total_batch = int(mnist.train.num_examples / batch_size)

    # Training cycle
    for epoch in range(training_epochs):
        # Loop over all batches
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # max(x) = 1, min(x) = 0
            # Run optimization op (backprop) and cost op (to get loss value)
            _, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})
        # Display logs per epoch step
        if epoch % display_step == 0:
            print("Epoch:", '%04d' % (epoch + 1),
                  "cost=", "{:.9f}".format(c))

    print("Optimization Finished!")

    # Encode the test images into 2-D codes and plot them, colored by digit label
    encode_result = sess.run(encoder_op, feed_dict={X: mnist.test.images})
    plt.scatter(encode_result[:, 0], encode_result[:, 1], c=mnist.test.labels)
    plt.title('Matplotlib,AE,classification--Jason Niu')
    plt.show()
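The training loop runs `int(mnist.train.num_examples / batch_size)` optimization steps per epoch. Assuming the usual 55,000-image training split that `read_data_sets` returns with its default validation size (an assumption; the post does not state the number), the arithmetic works out as follows:

```python
num_examples = 55000   # assumed size of mnist.train; not stated in the post
batch_size = 256
training_epochs = 20

steps_per_epoch = int(num_examples / batch_size)   # the fractional batch is dropped
images_per_epoch = steps_per_epoch * batch_size
total_steps = steps_per_epoch * training_epochs

print(steps_per_epoch, images_per_epoch, total_steps)
```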
