Refactoring the TensorFlow MNIST Program


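The program is split into three files. mnist_inference.py defines the forward pass and the network's parameters, packaged as a standalone library module. mnist_train.py defines the training process and saves an intermediate checkpoint of the model at regular intervals. mnist_eval.py defines the evaluation process.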

mnist_inference.py:

    # coding=utf8
    import tensorflow as tf

    # 1. Parameters that define the network structure.
    INPUT_NODE = 784
    OUTPUT_NODE = 10
    LAYER1_NODE = 500


    # 2. Create (or fetch) a weight variable through tf.get_variable.
    def get_weight_variable(shape, regularizer):
        weights = tf.get_variable(
            "weights", shape,
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        # Store the regularization term in the 'losses' collection so that
        # the training script can add it to the total loss.
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(weights))
        return weights


    # 3. Forward pass. Variable scopes name the variables of each layer, so
    # they do not all have to be passed between functions as arguments,
    # which keeps the program readable.
    def inference(input_tensor, regularizer):
        with tf.variable_scope('layer1'):
            weights = get_weight_variable([INPUT_NODE, LAYER1_NODE], regularizer)
            biases = tf.get_variable(
                "biases", [LAYER1_NODE],
                initializer=tf.constant_initializer(0.0))
            layer1 = tf.nn.relu(tf.matmul(input_tensor, weights) + biases)

        with tf.variable_scope('layer2'):
            weights = get_weight_variable([LAYER1_NODE, OUTPUT_NODE], regularizer)
            biases = tf.get_variable(
                "biases", [OUTPUT_NODE],
                initializer=tf.constant_initializer(0.0))
            layer2 = tf.matmul(layer1, weights) + biases

        return layer2
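To make the arithmetic behind `inference` and the `'losses'` collection concrete, here is a minimal pure-Python sketch. It is not the TensorFlow implementation: the tiny layer sizes, the `params` dictionary, and the helper names (`matmul`, `add_bias`, `relu`, `l2_penalty`, `forward`) are assumptions made for illustration. The penalty mirrors what `tf.contrib.layers.l2_regularizer(scale)` computes, namely `scale * sum(w ** 2) / 2`.

```python
# Pure-Python sketch of the two-layer forward pass and the L2 terms.
# All names and sizes here are illustrative, not part of the original files.

def matmul(a, b):
    # Multiply a (rows x k) by b (k x cols), both given as lists of lists.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def add_bias(m, bias):
    return [[v + b for v, b in zip(row, bias)] for row in m]

def relu(m):
    return [[max(0.0, v) for v in row] for row in m]

def l2_penalty(weights, scale):
    # scale * sum(w^2) / 2, the quantity an L2 regularizer adds per weight matrix.
    return scale * sum(v * v for row in weights for v in row) / 2.0

def forward(inputs, params, scale=None):
    losses = []  # plays the role of the 'losses' collection
    hidden = relu(add_bias(matmul(inputs, params["w1"]), params["b1"]))
    logits = add_bias(matmul(hidden, params["w2"]), params["b2"])
    if scale is not None:
        losses.append(l2_penalty(params["w1"], scale))
        losses.append(l2_penalty(params["w2"], scale))
    return logits, losses

params = {
    "w1": [[1.0, -1.0], [0.5, 2.0], [0.0, 1.0]],  # 3 inputs -> 2 hidden units
    "b1": [0.0, 0.0],
    "w2": [[1.0], [2.0]],                         # 2 hidden units -> 1 output
    "b2": [0.5],
}
logits, losses = forward([[1.0, 2.0, 3.0]], params, scale=0.1)
print(logits, sum(losses))
```

Passing `scale=None` corresponds to calling `inference(x, None)`, as the evaluation script does: the forward pass is identical, but no regularization terms are collected.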

mnist_train.py:

    # coding=utf8
    import os

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    import mnist_inference

    # 1. Parameters for training and checkpointing.
    BATCH_SIZE = 100
    LEARNING_RATE_BASE = 0.8
    LEARNING_RATE_DECAY = 0.99
    REGULARIZATION_RATE = 0.0001
    TRAINING_STEPS = 30000
    MOVING_AVERAGE_DECAY = 0.99
    MODEL_SAVE_PATH = "MNIST_model/"
    MODEL_NAME = "mnist_model"


    # 2. Define the training process.
    def train(mnist):
        # Input and label placeholders.
        x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')
        y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-input')

        regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)
        y = mnist_inference.inference(x, regularizer)
        global_step = tf.Variable(0, trainable=False)

        # Loss, learning rate, moving averages, and the training op.
        variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
        variables_averages_op = variable_averages.apply(tf.trainable_variables())
        cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(
            logits=y, labels=tf.argmax(y_, 1))
        cross_entropy_mean = tf.reduce_mean(cross_entropy)
        loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))
        learning_rate = tf.train.exponential_decay(
            LEARNING_RATE_BASE,
            global_step,
            mnist.train.num_examples / BATCH_SIZE,
            LEARNING_RATE_DECAY,
            staircase=True)
        train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(
            loss, global_step=global_step)
        with tf.control_dependencies([train_step, variables_averages_op]):
            train_op = tf.no_op(name='train')

        # Saver used to persist the model.
        saver = tf.train.Saver()
        # Create the checkpoint directory up front; saver.save may fail in
        # some TensorFlow 1.x releases if it does not exist.
        if not os.path.exists(MODEL_SAVE_PATH):
            os.makedirs(MODEL_SAVE_PATH)

        with tf.Session() as sess:
            tf.global_variables_initializer().run()

            for i in range(TRAINING_STEPS):
                xs, ys = mnist.train.next_batch(BATCH_SIZE)
                _, loss_value, step = sess.run(
                    [train_op, loss, global_step], feed_dict={x: xs, y_: ys})
                # Report the loss and save a checkpoint every 1000 iterations.
                if i % 1000 == 0:
                    print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
                    saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME),
                               global_step=global_step)


    def main(argv=None):
        mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
        train(mnist)


    if __name__ == '__main__':
        main()
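The learning-rate schedule in the training script can be worked out by hand. The sketch below reimplements the staircase formula that `tf.train.exponential_decay` documents, `base * decay_rate ** floor(global_step / decay_steps)`, in plain Python. The function name `decayed_learning_rate` is hypothetical, and the value of 550 decay steps assumes the default split of 55,000 training examples with a batch size of 100.

```python
# Plain-Python sketch of staircase exponential decay (illustrative only).

def decayed_learning_rate(base, global_step, decay_steps, decay_rate, staircase=True):
    if staircase:
        # The rate drops once per completed block of decay_steps steps.
        exponent = global_step // decay_steps
    else:
        exponent = global_step / float(decay_steps)
    return base * decay_rate ** exponent

DECAY_STEPS = 55000 // 100  # assumed: 55,000 training examples / BATCH_SIZE

for step in (0, 549, 550, 30000):
    print(step, decayed_learning_rate(0.8, step, DECAY_STEPS, 0.99))
```

With these settings the rate stays at 0.8 for the first 550 steps, which is one pass over the training set, and is then multiplied by 0.99 after each further pass.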

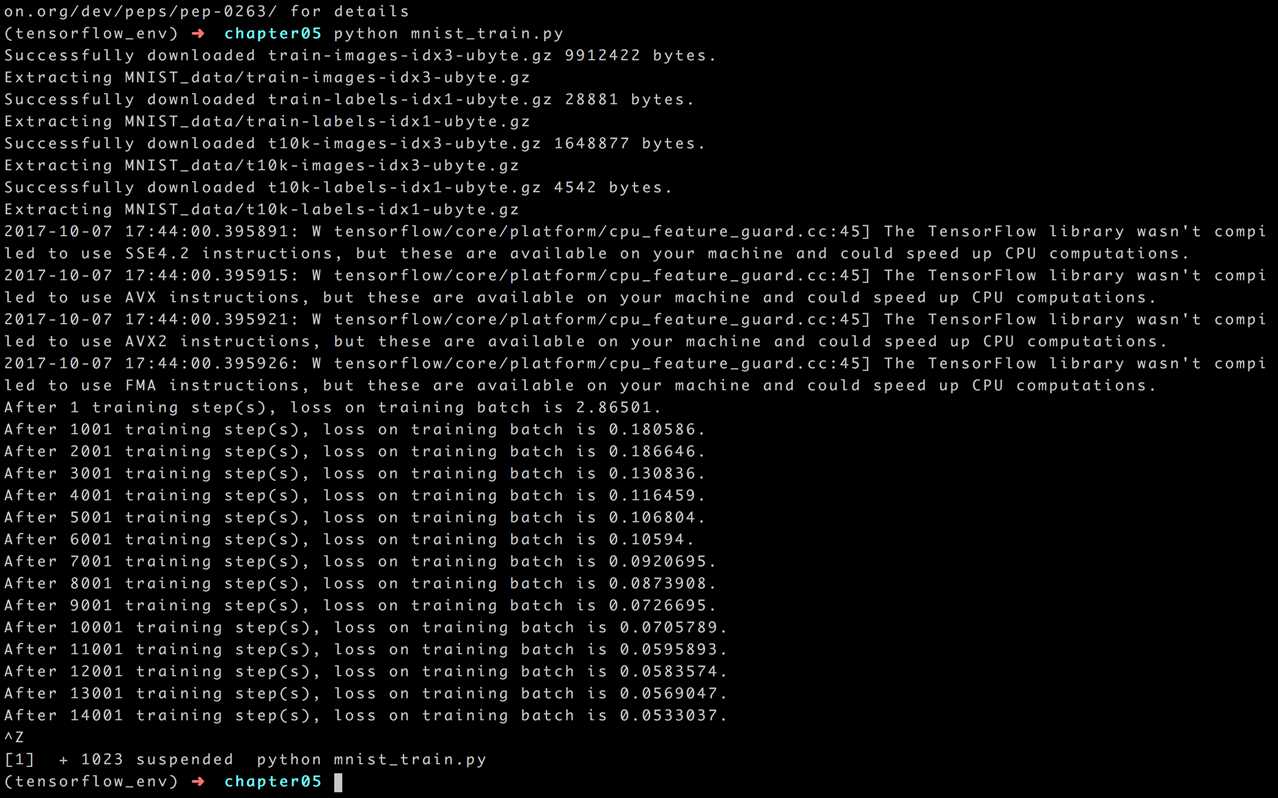

结果如下:

mnist_eval.py:

    # coding=utf8
    import time

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    import mnist_inference
    import mnist_train

    # 1. Load the latest model every 10 seconds.
    # Interval between loads, in seconds.
    EVAL_INTERVAL_SECS = 10


    def evaluate(mnist):
        with tf.Graph().as_default() as g:
            x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')
            y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-input')
            validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels}

            # No regularizer is needed at evaluation time.
            y = mnist_inference.inference(x, None)
            correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
            accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

            # Restore the moving-average values in place of the raw variables.
            variable_averages = tf.train.ExponentialMovingAverage(mnist_train.MOVING_AVERAGE_DECAY)
            variables_to_restore = variable_averages.variables_to_restore()
            saver = tf.train.Saver(variables_to_restore)

            while True:
                with tf.Session() as sess:
                    ckpt = tf.train.get_checkpoint_state(mnist_train.MODEL_SAVE_PATH)
                    if ckpt and ckpt.model_checkpoint_path:
                        saver.restore(sess, ckpt.model_checkpoint_path)
                        # The step count is the suffix of the checkpoint file name.
                        global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                        accuracy_score = sess.run(accuracy, feed_dict=validate_feed)
                        print("After %s training step(s), validation accuracy = %g" % (global_step, accuracy_score))
                    else:
                        print('No checkpoint file found')
                        return
                time.sleep(EVAL_INTERVAL_SECS)


    def main(argv=None):
        mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
        evaluate(mnist)


    if __name__ == '__main__':
        main()
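The evaluation script restores moving-average ("shadow") values rather than the raw trained variables. As a rough sketch of how those shadow values evolve during training, the plain-Python code below follows the update rule documented for `tf.train.ExponentialMovingAverage` when `num_updates` is supplied: the effective decay is `min(decay, (1 + num_updates) / (10 + num_updates))`, and the shadow value moves toward the current variable value by a factor of `1 - decay`. The function names are hypothetical and the scalar values are made up for illustration.

```python
# Plain-Python sketch of the moving-average update (illustrative only).

def effective_decay(decay, num_updates):
    # Early in training the decay is lowered so the average can catch up quickly.
    return min(decay, (1.0 + num_updates) / (10.0 + num_updates))

def update_shadow(shadow, value, decay, num_updates):
    d = effective_decay(decay, num_updates)
    return d * shadow + (1.0 - d) * value

shadow = 0.0
for num_updates, value in enumerate([5.0, 5.0, 5.0]):
    shadow = update_shadow(shadow, value, 0.99, num_updates)
    print(num_updates, effective_decay(0.99, num_updates), shadow)
```

With `MOVING_AVERAGE_DECAY = 0.99`, the dynamic term stops limiting the decay after roughly 890 updates; from then on each shadow value changes by only 1% of the gap to the current variable per step.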

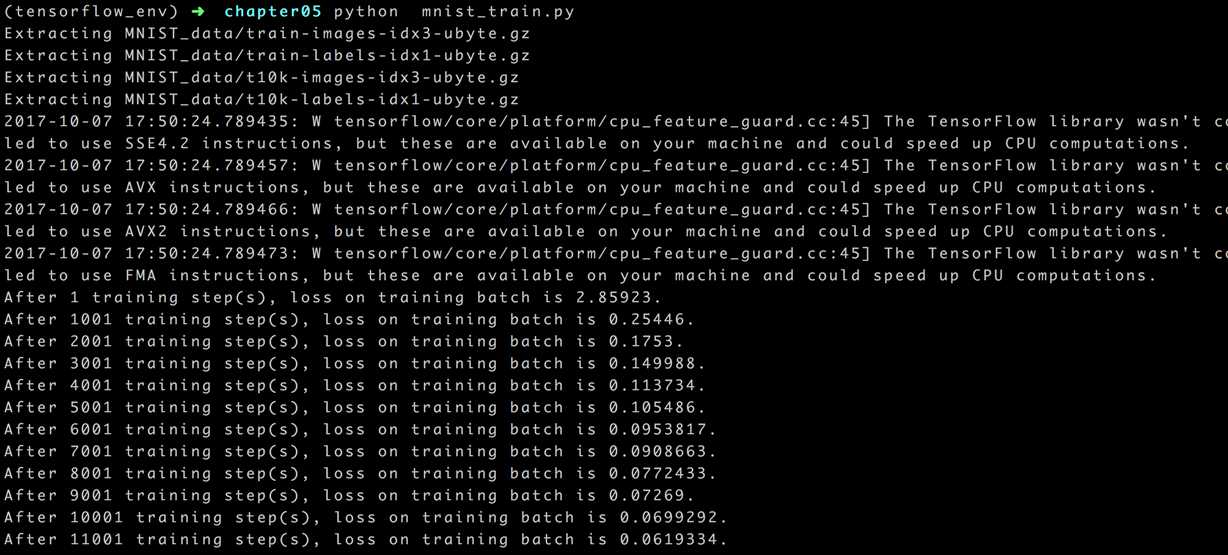

结果如下:
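The step count that mnist_eval.py prints comes from the checkpoint file name, because mnist_train.py passes `global_step` to `saver.save`, which appends `-<step>` to the model name. The sketch below shows that parsing in plain Python along with the step numbers the training loop would save. The helper names are hypothetical, `os.path.basename` stands in for the original `split('/')[-1]`, and the example path is an illustration built from `MODEL_SAVE_PATH` and `MODEL_NAME`.

```python
# Plain-Python sketch of checkpoint naming and step parsing (illustrative only).
import os

def step_from_checkpoint_path(path):
    # "MNIST_model/mnist_model-29001" -> 29001
    return int(os.path.basename(path).rsplit("-", 1)[-1])

def saved_steps(training_steps, save_every):
    # The loop saves when i % save_every == 0; by then global_step is i + 1,
    # because the training op has already incremented it.
    return [i + 1 for i in range(0, training_steps, save_every)]

steps = saved_steps(30000, 1000)
print(steps[:3], steps[-1])
print(step_from_checkpoint_path("MNIST_model/mnist_model-29001"))
```

This is why the reported steps are 1, 1001, 2001, and so on rather than multiples of 1000.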
