Elasticsearch: An Introduction to Aggregations

Posted by the Elastic China Community official blog

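Put simply, an aggregation in Elasticsearch summarizes your data as metrics, statistics, or other analytics. Aggregations help you answer questions like:

- What is the average load time of my website?

- Who are my most valuable customers, based on transaction volume?

- What would be considered a large file on my network?

- How many products are in each product category?

Elasticsearch organizes aggregations into three categories:

- Metric aggregations: calculate metrics, such as a sum or an average, from field values.

- Bucket aggregations: group documents into buckets (also called bins) based on field values, ranges, or other criteria.

- Pipeline aggregations: take their input from other aggregations instead of from documents or fields, and compute new aggregations.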
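
To make the three categories concrete, here is a rough plain-Python sketch. It does not call Elasticsearch; the sample records and variable names are invented for illustration only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical sample records (not taken from any real index).
docs = [
    {"category": "books", "price": 10},
    {"category": "books", "price": 20},
    {"category": "toys", "price": 30},
]

# Metric aggregation: compute a single number from a field's values.
avg_price = mean(d["price"] for d in docs)

# Bucket aggregation: group documents by a field value.
buckets = defaultdict(list)
for d in docs:
    buckets[d["category"]].append(d)

# Metric sub-aggregation: the average price inside each bucket.
avg_per_bucket = {
    key: mean(d["price"] for d in group) for key, group in buckets.items()
}

# Pipeline aggregation: its input is the output of other aggregations,
# here the largest of the per-bucket averages.
max_bucket_avg = max(avg_per_bucket.values())

print(avg_price, avg_per_bucket, max_bucket_avg)
```

The point of the sketch is only the data flow: metrics reduce documents to numbers, buckets partition documents, and pipelines consume the results of the other two.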

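I covered some of this in an earlier article, “Elasticsearch:aggregation 介绍”. In today's article, I give a deliberately simple introduction so that you come away with a clearer picture of aggregations.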

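Prerequisites

First, follow the article “Elastic:开发者上手指南” (the Elastic developer getting-started guide) to install your own Elasticsearch and Kibana. Choose the installation method that suits your platform. You can install Elastic Stack 8.2, the latest release at the time of writing.

Preparing the data

Next, we create some sample data to practice on. Open Kibana and navigate to Dev Tools: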

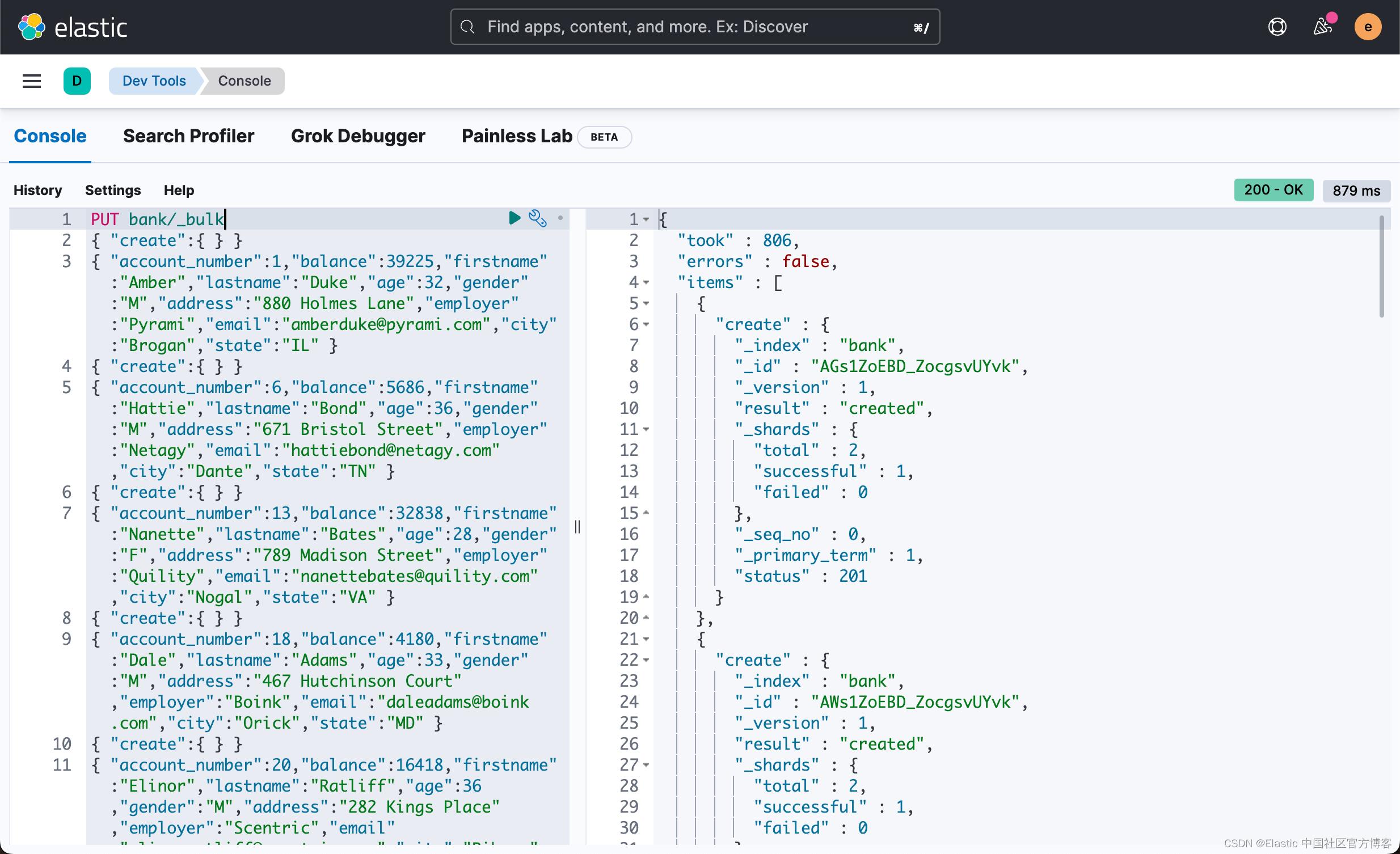

We use the _bulk API to import the data. In Console, enter the following request and run it:

PUT bank/_bulk
{"create":{}}
{"account_number":1,"balance":39225,"firstname":"Amber","lastname":"Duke","age":32,"gender":"M","address":"880 Holmes Lane","employer":"Pyrami","email":"amberduke@pyrami.com","city":"Brogan","state":"IL"}
{"create":{}}
{"account_number":6,"balance":5686,"firstname":"Hattie","lastname":"Bond","age":36,"gender":"M","address":"671 Bristol Street","employer":"Netagy","email":"hattiebond@netagy.com","city":"Dante","state":"TN"}
{"create":{}}
{"account_number":13,"balance":32838,"firstname":"Nanette","lastname":"Bates","age":28,"gender":"F","address":"789 Madison Street","employer":"Quility","email":"nanettebates@quility.com","city":"Nogal","state":"VA"}
{"create":{}}
{"account_number":18,"balance":4180,"firstname":"Dale","lastname":"Adams","age":33,"gender":"M","address":"467 Hutchinson Court","employer":"Boink","email":"daleadams@boink.com","city":"Orick","state":"MD"}
{"create":{}}
{"account_number":20,"balance":16418,"firstname":"Elinor","lastname":"Ratliff","age":36,"gender":"M","address":"282 Kings Place","employer":"Scentric","email":"elinorratliff@scentric.com","city":"Ribera","state":"WA"}
{"create":{}}
{"account_number":25,"balance":40540,"firstname":"Virginia","lastname":"Ayala","age":39,"gender":"F","address":"171 Putnam Avenue","employer":"Filodyne","email":"virginiaayala@filodyne.com","city":"Nicholson","state":"PA"}
{"create":{}}
{"account_number":32,"balance":48086,"firstname":"Dillard","lastname":"Mcpherson","age":34,"gender":"F","address":"702 Quentin Street","employer":"Quailcom","email":"dillardmcpherson@quailcom.com","city":"Veguita","state":"IN"}
{"create":{}}
{"account_number":37,"balance":18612,"firstname":"Mcgee","lastname":"Mooney","age":39,"gender":"M","address":"826 Fillmore Place","employer":"Reversus","email":"mcgeemooney@reversus.com","city":"Tooleville","state":"OK"}
{"create":{}}
{"account_number":44,"balance":34487,"firstname":"Aurelia","lastname":"Harding","age":37,"gender":"M","address":"502 Baycliff Terrace","employer":"Orbalix","email":"aureliaharding@orbalix.com","city":"Yardville","state":"DE"}
{"create":{}}
{"account_number":49,"balance":29104,"firstname":"Fulton","lastname":"Holt","age":23,"gender":"F","address":"451 Humboldt Street","employer":"Anocha","email":"fultonholt@anocha.com","city":"Sunriver","state":"RI"}

After running the request above, we have a newly created index named bank that contains 10 documents:

GET bank/_count

{
  "count" : 10,
  "_shards" : {
    "total" : 1,
    "successful" : 1,
    "skipped" : 0,
    "failed" : 0
  }
}
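
The body of the _bulk request above is newline-delimited JSON: each document is preceded by an action line, and the body must end with a newline. As a minimal sketch of how such a body could be generated, assuming you hold the documents as Python dictionaries (the helper name `build_bulk_body` is hypothetical, and the two sample documents are trimmed versions of the ones above):

```python
import json


def build_bulk_body(docs, action="create"):
    """Return an NDJSON string: one action line followed by one source line per document."""
    lines = []
    for doc in docs:
        lines.append(json.dumps({action: {}}))  # action line, e.g. {"create": {}}
        lines.append(json.dumps(doc))           # the document source on a single line
    # The final line of a bulk body must end with a newline character.
    return "\n".join(lines) + "\n"


# Two trimmed sample documents, for illustration only.
sample = [
    {"account_number": 1, "balance": 39225, "city": "Brogan"},
    {"account_number": 6, "balance": 5686, "city": "Dante"},
]

print(build_bulk_body(sample))
```

The resulting string can be pasted under `PUT bank/_bulk` in Console or sent as the request body by any HTTP client.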

Next, to give you a feel for aggregations, I show how to run them in practice.

Before aggregating, let's first get to know our data:

GET bank/_mapping

{
  "bank" : {
    "mappings" : {
      "properties" : {
        "account_number" : {
          "type" : "long"
        },
        "address" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "age" : {
          "type" : "long"
        },
        "balance" : {
          "type" : "long"
        },
        "city" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "email" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "employer" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "firstname" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "gender" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "lastname" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        },
        "state" : {
          "type" : "text",
          "fields" : { "keyword" : { "type" : "keyword", "ignore_above" : 256 } }
        }
      }
    }
  }
}

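The mapping shows that some fields are of type text. By default, a text field cannot be aggregated. If the field is a multi-field with a keyword sub-field, however, you can aggregate on that sub-field (for example, city.keyword). At the time of writing, all field types other than text and annotated_text support aggregations.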
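
As a small illustration of that rule, the sketch below walks a flat mapping and lists the field paths you could aggregate on. It is a simplification written for this article: the function name is hypothetical, it only handles top-level fields and their multi-fields, and it treats text and annotated_text as the only non-aggregatable types.

```python
def aggregatable_fields(properties, non_aggregatable=("text", "annotated_text")):
    """List field paths whose type is not in non_aggregatable, including multi-fields."""
    result = []
    for name, spec in properties.items():
        if spec.get("type") not in non_aggregatable:
            result.append(name)
        # Multi-fields live under "fields" and are addressed as parent.child.
        for sub_name, sub_spec in spec.get("fields", {}).items():
            if sub_spec.get("type") not in non_aggregatable:
                result.append(f"{name}.{sub_name}")
    return sorted(result)


# A trimmed copy of the bank mapping, for illustration only.
properties = {
    "age": {"type": "long"},
    "balance": {"type": "long"},
    "city": {
        "type": "text",
        "fields": {"keyword": {"type": "keyword", "ignore_above": 256}},
    },
}

print(aggregatable_fields(properties))
```

For the trimmed mapping, the text field city is skipped while its city.keyword sub-field is kept alongside the numeric fields.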

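Running an aggregation

You can run an aggregation as part of a search by specifying the search API's aggs parameter. The following search runs a terms aggregation on city.keyword:

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "aggs": {
    "cities_distribution": {
      "terms": {
        "field": "city.keyword",
        "size": 10
      }
    }
  }
}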

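In most cases we set size to 0 so that the search returns no documents, only the aggregation results. This keeps the response payload small, and it also allows the response to be cached so that the same aggregation returns faster the next time it runs. The request above returns: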

{
  "aggregations" : {
    "cities_distribution" : {
      "doc_count_error_upper_bound" : 0,
      "sum_other_doc_count" : 0,
      "buckets" : [
        { "key" : "Brogan", "doc_count" : 1 },
        { "key" : "Dante", "doc_count" : 1 },
        { "key" : "Nicholson", "doc_count" : 1 },
        { "key" : "Nogal", "doc_count" : 1 },
        { "key" : "Orick", "doc_count" : 1 },
        { "key" : "Ribera", "doc_count" : 1 },
        { "key" : "Sunriver", "doc_count" : 1 },
        { "key" : "Tooleville", "doc_count" : 1 },
        { "key" : "Yardville", "doc_count" : 1 },
        { "key" : "Veguita", "doc_count" : 1 }
      ]
    }
  }
}

The result shows the number of documents for each city. Here every city has exactly one document.
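
To see what a terms aggregation computes, here is a plain-Python sketch over the ten city values from the sample data. It is not how Elasticsearch implements the aggregation (it ignores shards, so there is no doc_count_error_upper_bound), and the function name `terms_agg` is made up for this example. It assumes buckets are ordered by descending document count with ties broken by key, which is consistent with the alphabetical order seen in the response above.

```python
from collections import Counter


def terms_agg(values, size=10):
    """Count documents per distinct value and keep the top `size` buckets."""
    counts = Counter(values)
    # Order by doc_count descending, then by key ascending to break ties.
    ordered = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return {
        "buckets": [{"key": k, "doc_count": c} for k, c in ordered[:size]],
        # Documents that fall into buckets beyond the top `size`.
        "sum_other_doc_count": sum(c for _, c in ordered[size:]),
    }


cities = [
    "Brogan", "Dante", "Nogal", "Orick", "Ribera",
    "Nicholson", "Veguita", "Tooleville", "Yardville", "Sunriver",
]

print(terms_agg(cities, size=10))
print(terms_agg(cities, size=5))
```

With a size smaller than the number of distinct cities, the documents that do not make it into the returned buckets are counted in sum_other_doc_count.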

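Changing an aggregation's scope

On very large datasets, aggregations can be time-consuming. You can use a query to limit the documents that an aggregation runs on:

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "query": {
    "range": {
      "balance": {
        "gte": 4000,
        "lte": 10000
      }
    }
  },
  "aggs": {
    "cities_distribution": {
      "terms": {
        "field": "city.keyword",
        "size": 10
      }
    }
  }
}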

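Here the range query restricts the aggregation to documents whose balance is between 4000 and 10000 (inclusive). The aggregation then works on a smaller set of documents, which in many cases makes it run faster. The request above returns: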

{
  "aggregations" : {
    "cities_distribution" : {
      "doc_count_error_upper_bound" : 0,
      "sum_other_doc_count" : 0,
      "buckets" : [
        { "key" : "Dante", "doc_count" : 1 },
        { "key" : "Orick", "doc_count" : 1 }
      ]
    }
  }
}
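
The two-step logic (first select documents with the query, then aggregate only those) can be mimicked in plain Python on the sample data. This is only a sketch of the semantics; the tuple layout and the function name `cities_in_balance_range` are assumptions made for the example.

```python
from collections import Counter

# (balance, city) pairs copied from the ten sample documents.
accounts = [
    (39225, "Brogan"), (5686, "Dante"), (32838, "Nogal"), (4180, "Orick"),
    (16418, "Ribera"), (40540, "Nicholson"), (48086, "Veguita"),
    (18612, "Tooleville"), (34487, "Yardville"), (29104, "Sunriver"),
]


def cities_in_balance_range(rows, gte, lte):
    """Apply the range filter first, then count documents per city."""
    matching = [city for balance, city in rows if gte <= balance <= lte]
    return Counter(matching)


buckets = cities_in_balance_range(accounts, gte=4000, lte=10000)
print(sorted(buckets.items()))
```

Only the two accounts with balances 5686 and 4180 pass the filter, so only their cities appear as buckets.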

Running multiple aggregations

You can also specify multiple aggregations in the same request:

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "aggs": {
    "cities_distribution": {
      "terms": {
        "field": "city.keyword",
        "size": 5
      }
    },
    "average_age": {
      "avg": {
        "field": "age"
      }
    }
  }
}

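Here we use two aggregations: one computes the distribution of documents across cities, and the other computes the average age. The request above returns: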

{
  "aggregations" : {
    "cities_distribution" : {
      "doc_count_error_upper_bound" : 0,
      "sum_other_doc_count" : 5,
      "buckets" : [
        { "key" : "Brogan", "doc_count" : 1 },
        { "key" : "Dante", "doc_count" : 1 },
        { "key" : "Nicholson", "doc_count" : 1 },
        { "key" : "Nogal", "doc_count" : 1 },
        { "key" : "Orick", "doc_count" : 1 }
      ]
    },
    "average_age" : {
      "value" : 33.7
    }
  }
}

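Running sub-aggregations

Bucket aggregations support bucket or metric sub-aggregations. For example, a histogram aggregation with an avg sub-aggregation calculates an average value for each bucket of documents. There is no level or depth limit for nesting sub-aggregations.

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "aggs": {
    "balance_distribution": {
      "histogram": {
        "field": "balance",
        "interval": 5000
      },
      "aggs": {
        "average_age": {
          "avg": {
            "field": "age"
          }
        }
      }
    }
  }
}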

The request above returns:

{
  "aggregations" : {
    "balance_distribution" : {
      "buckets" : [
        { "key" : 0.0, "doc_count" : 1, "average_age" : { "value" : 33.0 } },
        { "key" : 5000.0, "doc_count" : 1, "average_age" : { "value" : 36.0 } },
        { "key" : 10000.0, "doc_count" : 0, "average_age" : { "value" : null } },
        { "key" : 15000.0, "doc_count" : 2, "average_age" : { "value" : 37.5 } },
        { "key" : 20000.0, "doc_count" : 0, "average_age" : { "value" : null } },
        { "key" : 25000.0, "doc_count" : 1, "average_age" : { "value" : 23.0 } },
        { "key" : 30000.0, "doc_count" : 2, "average_age" : { "value" : 32.5 } },
        { "key" : 35000.0, "doc_count" : 1, "average_age" : { "value" : 32.0 } },
        { "key" : 40000.0, "doc_count" : 1, "average_age" : { "value" : 39.0 } },
        { "key" : 45000.0, "doc_count" : 1, "average_age" : { "value" : 34.0 } }
      ]
    }
  }
}
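
The bucket keys and per-bucket averages above can be reproduced by hand. The sketch below is a plain-Python imitation, not the Elasticsearch implementation: it rounds each balance down to a multiple of the interval, collects the ages per bucket, and fills the empty buckets between the smallest and largest key, as the response above does. The function name `histogram_with_avg` is hypothetical.

```python
import math
from statistics import mean

# (balance, age) pairs copied from the ten sample documents.
accounts = [
    (39225, 32), (5686, 36), (32838, 28), (4180, 33), (16418, 36),
    (40540, 39), (48086, 34), (18612, 39), (34487, 37), (29104, 23),
]


def histogram_with_avg(rows, interval):
    """Bucket rows by balance and compute the average age in each bucket."""
    groups = {}
    for balance, age in rows:
        # Bucket key: the value rounded down to a multiple of the interval.
        key = math.floor(balance / interval) * interval
        groups.setdefault(key, []).append(age)

    buckets = []
    key = min(groups)
    while key <= max(groups):
        ages = groups.get(key, [])
        buckets.append({
            "key": float(key),
            "doc_count": len(ages),
            # An empty bucket has no average, shown as null in the response.
            "average_age": mean(ages) if ages else None,
        })
        key += interval
    return buckets


for bucket in histogram_with_avg(accounts, 5000):
    print(bucket)
```

For example, the balances 16418 and 18612 both land in the bucket with key 15000, whose average age is (36 + 39) / 2 = 37.5.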

Adding custom metadata

Use the meta object to associate custom metadata with an aggregation:

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "aggs": {
    "state_dist": {
      "terms": {
        "field": "state.keyword",
        "size": 5
      },
      "meta": {
        "my-metadata-field": "foo"
      }
    }
  }
}

The response returns the meta object in place:

{
  "aggregations" : {
    "state_dist" : {
      "meta" : {
        "my-metadata-field" : "foo"
      },
      "doc_count_error_upper_bound" : 0,
      "sum_other_doc_count" : 5,
      "buckets" : [
        { "key" : "DE", "doc_count" : 1 },
        { "key" : "IL", "doc_count" : 1 },
        { "key" : "IN", "doc_count" : 1 },
        { "key" : "MD", "doc_count" : 1 },
        { "key" : "OK", "doc_count" : 1 }
      ]
    }
  }
}

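Returning the aggregation type

By default, aggregation results include the aggregation's name but not its type. To return the aggregation type, use the typed_keys query parameter.

GET bank/_search?typed_keys&filter_path=aggregations
{
  "size": 0,
  "aggs": {
    "agg_dist": {
      "histogram": {
        "field": "age",
        "interval": 10
      }
    }
  }
}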

The request above returns:

{
  "aggregations" : {
    "histogram#agg_dist" : {
      "buckets" : [
        { "key" : 20.0, "doc_count" : 2 },
        { "key" : 30.0, "doc_count" : 8 }
      ]
    }
  }
}

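The aggregation type is returned as a prefix of the name, separated by #. Here the key histogram#agg_dist tells us that agg_dist is a histogram aggregation.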
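
On the client side, a typed key can be split back into its type and name. The following is a minimal sketch; the helper `split_typed_key` is hypothetical, and the response dictionary is a hand-copied version of the result above rather than the output of a live call.

```python
def split_typed_key(key):
    """Split 'type#name' into (type, name); return (None, key) if there is no type prefix."""
    agg_type, sep, name = key.partition("#")
    return (agg_type, name) if sep else (None, key)


# Hand-copied from the typed_keys response shown earlier.
response = {
    "aggregations": {
        "histogram#agg_dist": {
            "buckets": [
                {"key": 20.0, "doc_count": 2},
                {"key": 30.0, "doc_count": 8},
            ]
        }
    }
}

for key, body in response["aggregations"].items():
    agg_type, name = split_typed_key(key)
    print(agg_type, name, len(body["buckets"]))
```

Knowing the type up front lets a client choose how to parse each aggregation's body without guessing from its shape.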

Using scripts in an aggregation

When a field doesn't exactly match the aggregation you need, you should aggregate on a runtime field:

GET bank/_search?filter_path=aggregations
{
  "size": 0,
  "runtime_mappings": {
    "name": {
      "type": "keyword",
      "script": {
        "source": "emit(doc['firstname.keyword'].value + ' ' + doc['lastname.keyword'].value)"
      }
    }
  },
  "aggs": {
    "name": {
      "terms": {
        "field": "name",
        "size": 5
      }
    }
  }
}

The request above returns:

{
  "aggregations" : {
    "name" : {
      "doc_count_error_upper_bound" : 0,
      "sum_other_doc_count" : 5,
      "buckets" : [
        { "key" : "Amber Duke", "doc_count" : 1 },
        { "key" : "Aurelia Harding", "doc_count" : 1 },
        { "key" : "Dale Adams", "doc_count" : 1 },
        { "key" : "Dillard Mcpherson", "doc_count" : 1 },
        { "key" : "Elinor Ratliff", "doc_count" : 1 }
      ]
    }
  }
}

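Here we concatenate firstname and lastname into a single name field and run a terms aggregation on it. Scripts calculate field values dynamically, which adds a little overhead to the aggregation. In addition to the time spent calculating, some aggregations, such as terms and filters, can't use some of their optimizations with runtime fields. In total, the performance cost of using a runtime field varies from aggregation to aggregation.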

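Aggregation caches

For faster responses, Elasticsearch caches the results of frequently run aggregations in the shard request cache. To get cached results, use the same preference string for each search. If you don't need search hits, set size to 0 to avoid filling the cache.

Elasticsearch routes searches with the same preference string to the same shards. If the shards' data doesn't change between searches, the shards return cached aggregation results.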
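
The preference string is passed as the preference query parameter of the search request. As a small sketch of building such a request path consistently, assuming a made-up session identifier (`session-42`) and a hypothetical helper name:

```python
from urllib.parse import urlencode


def search_path(index, preference):
    """Build a _search path that always carries the same preference string."""
    params = {"preference": preference, "filter_path": "aggregations"}
    return f"{index}/_search?{urlencode(params)}"


# "session-42" is an arbitrary, hypothetical preference value.
path = search_path("bank", "session-42")
print(path)
```

Reusing the same path (and the same request body with size set to 0) for repeated searches gives the shards a chance to answer from their cache.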

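Limits for long values

When running aggregations, Elasticsearch uses double values to hold and represent numeric data. As a result, aggregations on long numbers greater than 2^53 are approximate.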
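
The 2^53 limit comes from the double-precision format itself, so it can be observed in any language that uses IEEE 754 doubles. A quick Python check (Python's float is a double; the helper name `survives_double` is made up for the example):

```python
LIMIT = 2 ** 53  # 9007199254740992


def survives_double(n):
    """Return True if the integer n is unchanged after a round trip through a double."""
    return int(float(n)) == n


# Every integer up to 2^53 has an exact double representation.
print(survives_double(LIMIT - 1))
print(survives_double(LIMIT))

# Above 2^53, neighbouring integers start to collapse onto the same double.
print(survives_double(LIMIT + 1))
print(float(LIMIT) == float(LIMIT + 1))
```

An aggregation over long values beyond this limit can therefore differ slightly from the exact integer result.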

For further reading, see the “Aggregations” chapter of “Elastic:开发者上手指南”.
