编程艺术剖析 darknet load_weights 接口

Posted 极智视界

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了编程艺术剖析 darknet load_weights 接口相关的知识,希望对你有一定的参考价值。

欢迎关注我的公众号 [极智视界],回复001获取Google编程规范

O_o >_< o_O O_o ~_~ o_O

本文分析下 darknet load_weights 接口,这个接口主要做模型权重的加载。

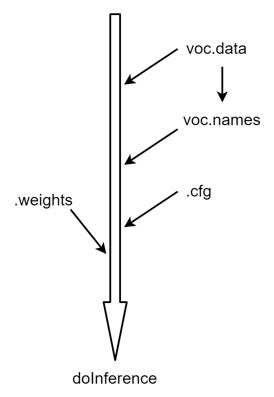

1、darknet 数据加载流程

之前的文章已经介绍了一下 darknet 目标检测的数据加载流程,并介绍了.data、.names 和 .cfg 的加载实现。

接下来这里 load_weights 接口主要做 .weights 模型权重的加载。

2、load_weights 接口

先来看一下接口调用:

load_weights(&net, weightfile);

其中 net 为 network 结构体的实例,weightfile 为权重的文件路径,看一下 load_weights 的实现:

/// parser.c

void load_weights(network *net, char *filename)

{

load_weights_upto(net, filename, net->n);

}

主要调用了 load_weights_upto 函数:

/// parser.c

void load_weights_upto(network *net, char *filename, int cutoff)

{

#ifdef GPU

if(net->gpu_index >= 0){

cuda_set_device(net->gpu_index); // 设置 gpu_index

}

#endif

fprintf(stderr, "Loading weights from %s...", filename);

fflush(stdout); // 强制马上输出

FILE *fp = fopen(filename, "rb");

if(!fp) file_error(filename);

int major;

int minor;

int revision;

fread(&major, sizeof(int), 1, fp); // 一些标志位的加载

fread(&minor, sizeof(int), 1, fp);

fread(&revision, sizeof(int), 1, fp);

if ((major * 10 + minor) >= 2) {

printf("\\n seen 64");

uint64_t iseen = 0;

fread(&iseen, sizeof(uint64_t), 1, fp);

*net->seen = iseen;

}

else {

printf("\\n seen 32");

uint32_t iseen = 0;

fread(&iseen, sizeof(uint32_t), 1, fp);

*net->seen = iseen;

}

*net->cur_iteration = get_current_batch(*net);

printf(", trained: %.0f K-images (%.0f Kilo-batches_64) \\n", (float)(*net->seen / 1000), (float)(*net->seen / 64000));

int transpose = (major > 1000) || (minor > 1000);

int i;

for(i = 0; i < net->n && i < cutoff; ++i){ // 识别不同算子进行权重加载

layer l = net->layers[i];

if (l.dontload) continue;

if(l.type == CONVOLUTIONAL && l.share_layer == NULL){

load_convolutional_weights(l, fp);

}

if (l.type == SHORTCUT && l.nweights > 0) {

load_shortcut_weights(l, fp);

}

if (l.type == IMPLICIT) {

load_implicit_weights(l, fp);

}

if(l.type == CONNECTED){

load_connected_weights(l, fp, transpose);

}

if(l.type == BATCHNORM){

load_batchnorm_weights(l, fp);

}

if(l.type == CRNN){

load_convolutional_weights(*(l.input_layer), fp);

load_convolutional_weights(*(l.self_layer), fp);

load_convolutional_weights(*(l.output_layer), fp);

}

if(l.type == RNN){

load_connected_weights(*(l.input_layer), fp, transpose);

load_connected_weights(*(l.self_layer), fp, transpose);

load_connected_weights(*(l.output_layer), fp, transpose);

}

if(l.type == GRU){

load_connected_weights(*(l.input_z_layer), fp, transpose);

load_connected_weights(*(l.input_r_layer), fp, transpose);

load_connected_weights(*(l.input_h_layer), fp, transpose);

load_connected_weights(*(l.state_z_layer), fp, transpose);

load_connected_weights(*(l.state_r_layer), fp, transpose);

load_connected_weights(*(l.state_h_layer), fp, transpose);

}

if(l.type == LSTM){

load_connected_weights(*(l.wf), fp, transpose);

load_connected_weights(*(l.wi), fp, transpose);

load_connected_weights(*(l.wg), fp, transpose);

load_connected_weights(*(l.wo), fp, transpose);

load_connected_weights(*(l.uf), fp, transpose);

load_connected_weights(*(l.ui), fp, transpose);

load_connected_weights(*(l.ug), fp, transpose);

load_connected_weights(*(l.uo), fp, transpose);

}

if (l.type == CONV_LSTM) {

if (l.peephole) {

load_convolutional_weights(*(l.vf), fp);

load_convolutional_weights(*(l.vi), fp);

load_convolutional_weights(*(l.vo), fp);

}

load_convolutional_weights(*(l.wf), fp);

if (!l.bottleneck) {

load_convolutional_weights(*(l.wi), fp);

load_convolutional_weights(*(l.wg), fp);

load_convolutional_weights(*(l.wo), fp);

}

load_convolutional_weights(*(l.uf), fp);

load_convolutional_weights(*(l.ui), fp);

load_convolutional_weights(*(l.ug), fp);

load_convolutional_weights(*(l.uo), fp);

}

if(l.type == LOCAL){

int locations = l.out_w*l.out_h;

int size = l.size*l.size*l.c*l.n*locations;

fread(l.biases, sizeof(float), l.outputs, fp);

fread(l.weights, sizeof(float), size, fp);

#ifdef GPU

if(gpu_index >= 0){

push_local_layer(l);

}

#endif

}

if (feof(fp)) break;

}

fprintf(stderr, "Done! Loaded %d layers from weights-file \\n", i);

fclose(fp);

}

以上有几个点不容易看懂,如以下这段:

int major;

int minor;

int revision;

fread(&major, sizeof(int), 1, fp);

fread(&minor, sizeof(int), 1, fp);

fread(&revision, sizeof(int), 1, fp);

这个最好结合保存权重的接口一起来看,load_weights 是 save_weights 的解码过程,来看一下 save_weights_upto 的前面部分:

void save_weights_upto(network net, char *filename, int cutoff, int save_ema)

{

#ifdef GPU

if(net.gpu_index >= 0){

cuda_set_device(net.gpu_index);

}

#endif

fprintf(stderr, "Saving weights to %s\\n", filename);

FILE *fp = fopen(filename, "wb");

if(!fp) file_error(filename);

int major = MAJOR_VERSION;

int minor = MINOR_VERSION;

int revision = PATCH_VERSION;

fwrite(&major, sizeof(int), 1, fp); // 先打上 major

fwrite(&minor, sizeof(int), 1, fp); // 再打上 minor

fwrite(&revision, sizeof(int), 1, fp); // 再打上 revision

(*net.seen) = get_current_iteration(net) * net.batch * net.subdivisions; // remove this line, when you will save to weights-file both: seen & cur_iteration

fwrite(net.seen, sizeof(uint64_t), 1, fp); // 最后打上 net.seen

......

}

从上面的 save_weights 接口可以看出 darknet 的权重在前面会先打上几个标志:major、minor、revision、net.seen,然后再连续存储各层的权重数据,这样就不难理解 load_weights 的时候做这个解码了,以下是这几个参数的宏定义:

/// version.h

#define MAJOR_VERSION 0

#define MINOR_VERSION 2

#define PATCH_VERSION 5

再回到 load_weights,在加载这些标志后是加载各层的权重,以卷积权重加载来说,里面的逻辑分两个:

(1) 单 conv,使用 fread 根据特定大小依次加载 biases 和 weights;

(2) conv + bn 融合,使用 fread 根据特定大小依次加载 biases、scales、rolling_mean、rolling_variance、weights。

来看实现:

/// parser.c

void load_convolutional_weights(layer l, FILE *fp)

{

if(l.binary){

//load_convolutional_weights_binary(l, fp);

//return;

}

int num = l.nweights;

int read_bytes;

read_bytes = fread(l.biases, sizeof(float), l.n, fp); // load biases

if (read_bytes > 0 && read_bytes < l.n) printf("\\n Warning: Unexpected end of wights-file! l.biases - l.index = %d \\n", l.index);

//fread(l.weights, sizeof(float), num, fp); // as in connected layer

if (l.batch_normalize && (!l.dontloadscales)){

read_bytes = fread(l.scales, sizeof(float), l.n, fp); // load scales

if (read_bytes > 0 && read_bytes < l.n) printf("\\n Warning: Unexpected end of wights-file! l.scales - l.index = %d \\n", l.index);

read_bytes = fread(l.rolling_mean, sizeof(float), l.n, fp); // load rolling_mean

if (read_bytes > 0 && read_bytes < l.n) printf("\\n Warning: Unexpected end of wights-file! l.rolling_mean - l.index = %d \\n", l.index);

read_bytes = fread(l.rolling_variance, sizeof(float), l.n, fp); // load rolling_variance

if (read_bytes > 0 && read_bytes < l.n) printf("\\n Warning: Unexpected end of wights-file! l.rolling_variance - l.index = %d \\n", l.index);

if(0){

int i;

for(i = 0; i < l.n; ++i){

printf("%g, ", l.rolling_mean[i]);

}

printf("\\n");

for(i = 0; i < l.n; ++i){

printf("%g, ", l.rolling_variance[i]);

}

printf("\\n");

}

if(0){

fill_cpu(l.n, 0, l.rolling_mean, 1);

fill_cpu(l.n, 0, l.rolling_variance, 1);

}

}

read_bytes = fread(l.weights, sizeof(float), num, fp); // load weights

if (read_bytes > 0 && read_bytes < l.n) printf("\\n Warning: Unexpected end of wights-file! l.weights - l.index = %d \\n", l.index);

//if(l.adam){

// fread(l.m, sizeof(float), num, fp);

// fread(l.v, sizeof(float), num, fp);

//}

//if(l.c == 3) scal_cpu(num, 1./256, l.weights, 1);

if (l.flipped) {

transpose_matrix(l.weights, (l.c/l.groups)*l.size*l.size, l.n);

}

//if (l.binary) binarize_weights(l.weights, l.n, (l.c/l.groups)*l.size*l.size, l.weights);

#ifdef GPU

if(gpu_index >= 0){

push_convolutional_layer(l);

}

#endif

}

再说一下 fread,这个函数在框架源码中数据读取方面会用的比较多,来看一下这个 C 语言的函数:

size_t fread(void *ptr, size_t size, size_t nmemb, FILE *stream)

参数说明:

- ptr:指向带有最小尺寸

size * nmemb字节的内存块的指针; - size:要读取的每个元素的大小,以字节为单位;

- nmemb:元素的个数,每个元素的大小为 size 字节;

- stream:指向 FILE 对象的指针,指定了一个输入流;

返回值:成功读取的元素个数以 size_t 对象返回,返回值与 nmenb 参数一样,若不一样,则可能发生了读错误或达到了文件尾。

好了,以上分析了 darknet 的 load_weights 接口及 weights 数据结构,再结合之前的文章就已经集齐了 darkent 目标检测数据加载部分的解读,希望我的分享对你的学习能有一点帮助。

【公众号传送】

扫描下方二维码即可关注我的微信公众号【极智视界】,获取更多AI经验分享,让我们用极致+极客的心态来迎接AI !

以上是关于编程艺术剖析 darknet load_weights 接口的主要内容,如果未能解决你的问题,请参考以下文章

编程艺术剖析 darknet 链表查找 option_find_xx 接口