100 Deep Learning Examples - Convolutional Neural Network (CNN) Color Image Classification | Day 2

Posted by K同学啊


I. Preparation

1. Set up the GPU

Skip this step if you are running on a CPU.

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    gpu0 = gpus[0]  # If several GPUs are available, use only the first one
    tf.config.experimental.set_memory_growth(gpu0, True)  # Allocate GPU memory on demand
    tf.config.set_visible_devices([gpu0], "GPU")

2. Load the data

import tensorflow as tf
from tensorflow.keras import datasets, layers, models
import matplotlib.pyplot as plt

# CIFAR-10 is downloaded on first use
(train_images, train_labels), (test_images, test_labels) = datasets.cifar10.load_data()

3. Normalize

# Scale pixel values from 0-255 into the range 0 to 1.
train_images, test_images = train_images / 255.0, test_images / 255.0

train_images.shape, test_images.shape, train_labels.shape, test_labels.shape

((50000, 32, 32, 3), (10000, 32, 32, 3), (50000, 1), (10000, 1))

4. Visualize

class_names = ['airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck']

plt.figure(figsize=(20, 10))

# Show the first 20 training images with their class names
for i in range(20):
    plt.subplot(5, 10, i + 1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(train_images[i], cmap=plt.cm.binary)  # cmap has no effect on RGB images
    plt.xlabel(class_names[train_labels[i][0]])  # labels have shape (N, 1), hence the [0]

plt.show()
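The `[0]` in the label lookup follows from the `(50000, 1)` label shape printed earlier: each label is a one-element row, not a bare integer. A minimal sketch in plain Python, where `example_labels` is a made-up stand-in for the first rows of `train_labels`:

```python
class_names = ['airplane', 'automobile', 'bird', 'cat', 'deer',
               'dog', 'frog', 'horse', 'ship', 'truck']

# Hypothetical labels laid out like train_labels: one single-element row per image.
example_labels = [[6], [9], [4]]

# row[0] unwraps the class index before it is used to index class_names.
names = [class_names[row[0]] for row in example_labels]
print(names)
```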

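II. Build the CNN

model = models.Sequential([
    layers.Conv2D(32, (3, 3), activation='relu', input_shape=(32, 32, 3)),  # Conv layer 1, 3x3 kernels
    layers.MaxPooling2D((2, 2)),                   # Pooling layer 1, 2x2 downsampling
    layers.Conv2D(64, (3, 3), activation='relu'),  # Conv layer 2, 3x3 kernels
    layers.MaxPooling2D((2, 2)),                   # Pooling layer 2, 2x2 downsampling
    layers.Conv2D(64, (3, 3), activation='relu'),  # Conv layer 3, 3x3 kernels

    layers.Flatten(),                     # Flatten layer, bridges the conv layers and the dense layers
    layers.Dense(64, activation='relu'),  # Fully connected layer, further feature extraction
    layers.Dense(10)                      # Output layer, one logit per class (no softmax)
])

model.summary()  # Print the network structure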

Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
conv2d (Conv2D)              (None, 30, 30, 32)        896
_________________________________________________________________
max_pooling2d (MaxPooling2D) (None, 15, 15, 32)        0
_________________________________________________________________
conv2d_1 (Conv2D)            (None, 13, 13, 64)        18496
_________________________________________________________________
max_pooling2d_1 (MaxPooling2 (None, 6, 6, 64)          0
_________________________________________________________________
conv2d_2 (Conv2D)            (None, 4, 4, 64)          36928
_________________________________________________________________
flatten (Flatten)            (None, 1024)              0
_________________________________________________________________
dense (Dense)                (None, 64)                65600
_________________________________________________________________
dense_1 (Dense)              (None, 10)                650
=================================================================
Total params: 122,570
Trainable params: 122,570
Non-trainable params: 0
_________________________________________________________________
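The output shapes and parameter counts in the summary can be reproduced by hand. The sketch below is only an illustration of that arithmetic, assuming the Keras defaults used in the model (stride 1, no padding, one bias per filter or unit); the helper names `conv2d_valid`, `max_pool` and `dense` are made up for this example and are not Keras functions.

```python
def conv2d_valid(size, channels_in, filters, kernel=3):
    """Stride-1, unpadded convolution with a square kernel.

    Returns (output side length, output channels, parameter count)."""
    out_size = size - kernel + 1
    params = (kernel * kernel * channels_in + 1) * filters  # +1 is the bias
    return out_size, filters, params


def max_pool(size, pool=2):
    """Non-overlapping pooling floors the division, so 13 becomes 6."""
    return size // pool


def dense(units_in, units_out):
    """One weight per input-output pair plus one bias per output unit."""
    return (units_in + 1) * units_out


size, channels = 32, 3  # CIFAR-10 images are 32x32 RGB
counts = []

size, channels, p = conv2d_valid(size, channels, 32)  # 30x30x32
counts.append(p)
size = max_pool(size)                                  # 15x15x32
size, channels, p = conv2d_valid(size, channels, 64)  # 13x13x64
counts.append(p)
size = max_pool(size)                                  # 6x6x64
size, channels, p = conv2d_valid(size, channels, 64)  # 4x4x64
counts.append(p)

flat = size * size * channels  # length of the flattened vector
counts.append(dense(flat, 64))
counts.append(dense(64, 10))

print(counts, sum(counts))
```

The pooling layers contribute no parameters, which is why they show 0 in the summary. Most of the weights sit in the first dense layer, because it connects all 1024 flattened values to 64 units.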

III. Compile

# from_logits=True because the output layer has no softmax
model.compile(optimizer='adam',
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
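Since the output layer emits raw logits, the loss has to apply softmax itself, which is what `from_logits=True` asks for. The following is a rough plain-Python sketch of the per-sample quantity, not the TensorFlow implementation; the function name `sparse_ce_from_logits` and the example logits are made up for illustration.

```python
import math


def sparse_ce_from_logits(logits, label):
    """Cross-entropy for one sample with an integer label.

    Equals -log(softmax(logits)[label]), computed as
    log-sum-exp(logits) - logits[label] for numerical stability."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


# All ten classes equally likely: the loss is ln(10).
uniform = [0.0] * 10
loss_uniform = sparse_ce_from_logits(uniform, 3)

# A strongly favoured class: small loss if it is right, large if it is wrong.
confident = [0.0] * 10
confident[3] = 10.0
loss_right = sparse_ce_from_logits(confident, 3)
loss_wrong = sparse_ce_from_logits(confident, 0)

print(loss_uniform, loss_right, loss_wrong)
```

The uniform case gives a useful reference point: a 10-class model that guesses evenly has a loss of ln(10), roughly 2.30, so a loss below that value means the model has already learned something.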

IV. Train the model

history = model.fit(train_images, train_labels, epochs=10,
                    validation_data=(test_images, test_labels))

Epoch 1/10
1563/1563 [==============================] - 9s 4ms/step - loss: 1.7862 - accuracy: 0.3390 - val_loss: 1.2697 - val_accuracy: 0.5406
Epoch 2/10
1563/1563 [==============================] - 5s 3ms/step - loss: 1.2270 - accuracy: 0.5595 - val_loss: 1.0731 - val_accuracy: 0.6167
Epoch 3/10
1563/1563 [==============================] - 5s 3ms/step - loss: 1.0355 - accuracy: 0.6337 - val_loss: 0.9678 - val_accuracy: 0.6610
Epoch 4/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.9221 - accuracy: 0.6727 - val_loss: 0.9589 - val_accuracy: 0.6648
Epoch 5/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.8474 - accuracy: 0.7022 - val_loss: 0.8962 - val_accuracy: 0.6853
Epoch 6/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.7814 - accuracy: 0.7292 - val_loss: 0.9124 - val_accuracy: 0.6873
Epoch 7/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.7398 - accuracy: 0.7398 - val_loss: 0.8924 - val_accuracy: 0.6929
Epoch 8/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.7008 - accuracy: 0.7542 - val_loss: 0.9809 - val_accuracy: 0.6854
Epoch 9/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.6474 - accuracy: 0.7732 - val_loss: 0.8549 - val_accuracy: 0.7137
Epoch 10/10
1563/1563 [==============================] - 5s 3ms/step - loss: 0.6041 - accuracy: 0.7889 - val_loss: 0.8909 - val_accuracy: 0.7046

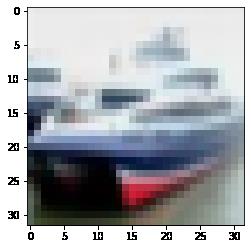
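The `1563/1563` in the training log is the number of batches per epoch. `model.fit` was called without a `batch_size`, so Keras falls back to its default of 32. A small sketch of the arithmetic (the helper `steps_per_epoch` is a made-up name, not a Keras function):

```python
import math


def steps_per_epoch(num_samples, batch_size=32):
    """Number of batches needed to cover the data once; the last one may be partial."""
    return math.ceil(num_samples / batch_size)


train_steps = steps_per_epoch(50000)  # training set size
test_steps = steps_per_epoch(10000)   # test set size, as used by model.evaluate

# Size of the final, partial training batch.
last_batch = 50000 - (train_steps - 1) * 32

print(train_steps, test_steps, last_batch)
```

The same reasoning explains the `313/313` that `model.evaluate` prints for the 10,000 test images.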

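V. Predict

For each image the model returns one score (logit) per class. Because the final Dense(10) layer has no softmax, these scores are not probabilities, but the larger a score is, the more likely the image belongs to that class.

import numpy as np

plt.imshow(test_images[1])

pre = model.predict(test_images)

# Pick the class with the highest score for the second test image
print(class_names[np.argmax(pre[1])])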

ship

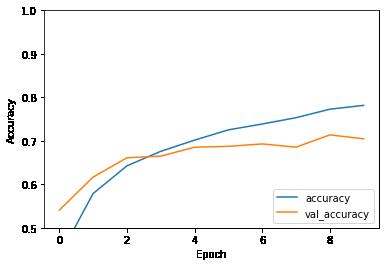
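If actual probabilities are wanted, softmax can be applied to the model's output scores. The sketch below does this in plain Python for a single image; `example_logits` is a made-up vector standing in for one row of `pre`, not real model output.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max to avoid overflow in exp
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class_names = ['airplane', 'automobile', 'bird', 'cat', 'deer',
               'dog', 'frog', 'horse', 'ship', 'truck']

# Hypothetical scores for one image; index 8 ('ship') is the largest.
example_logits = [-1.2, 0.3, -0.5, 0.1, -2.0, -0.7, -1.5, -0.9, 4.1, 1.0]

probs = softmax(example_logits)
best = max(range(len(probs)), key=probs.__getitem__)

print(class_names[best], probs[best])
```

Softmax preserves the ordering of the scores, so the predicted class is the same whether `argmax` is taken over the logits or over the probabilities.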

VI. Evaluate the model

import matplotlib.pyplot as plt

# Plot training and validation accuracy per epoch
plt.plot(history.history['accuracy'], label='accuracy')
plt.plot(history.history['val_accuracy'], label='val_accuracy')
plt.xlabel('Epoch')
plt.ylabel('Accuracy')
plt.ylim([0.5, 1])  # Note: the first-epoch training accuracy (0.3390) falls below this range
plt.legend(loc='lower right')
plt.show()

test_loss, test_acc = model.evaluate(test_images, test_labels, verbose=2)

313/313 - 0s - loss: 0.8909 - accuracy: 0.7046

print(test_acc)

0.7045999765396118
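The accuracy curves can also be read numerically. The sketch below copies the per-epoch `accuracy` and `val_accuracy` values from the training log above and computes the gap between them; the variable names are made up for this illustration.

```python
# Values copied from the training log, epochs 1 to 10.
train_acc = [0.3390, 0.5595, 0.6337, 0.6727, 0.7022,
             0.7292, 0.7398, 0.7542, 0.7732, 0.7889]
val_acc = [0.5406, 0.6167, 0.6610, 0.6648, 0.6853,
           0.6873, 0.6929, 0.6854, 0.7137, 0.7046]

# Positive gap: the model does better on training data than on validation data.
gaps = [round(t - v, 4) for t, v in zip(train_acc, val_acc)]

# Epoch (1-based) with the highest validation accuracy.
best_epoch = max(range(len(val_acc)), key=val_acc.__getitem__) + 1

# First epoch in which training accuracy exceeds validation accuracy.
first_overtake = next(i + 1 for i, g in enumerate(gaps) if g > 0)

print(gaps[-1], best_epoch, first_overtake)
```

Training accuracy keeps climbing while validation accuracy levels off around 0.70, so the widening gap in the later epochs is a likely sign of overfitting. Note also that the validation data here is the test set itself, which is why the final `val_accuracy` and `test_acc` agree.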

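Other posts in this series:

- 100 Deep Learning Examples - Convolutional Neural Network (CNN) MNIST Handwritten Digit Recognition | Day 1

- 100 Deep Learning Examples - Convolutional Neural Network (CNN) Color Image Classification | Day 2

- 100 Deep Learning Examples - Convolutional Neural Network (CNN) Clothing Image Classification | Day 3

- 100 Deep Learning Examples - Convolutional Neural Network (CNN) Flower Recognition | Day 4

- 100 Deep Learning Examples - Convolutional Neural Network (CNN) Weather Recognition | Day 5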
