Impala查询卡顿分析案例

Posted hadoop123

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了Impala查询卡顿分析案例相关的知识,希望对你有一定的参考价值。

最近在开发时遇到查询卡顿(stuck)的情况,感觉比较有代表性,因此记录一下排查过程。在生产环境中也可以用类似的方法找到卡顿的源头。

注:本文所用的Impala版本为3.4.0-SNAPSHOT。Impala由C++和Java写成,分别对应BE (Backend) 和 FE (Frontend) 两部分。

案例描述

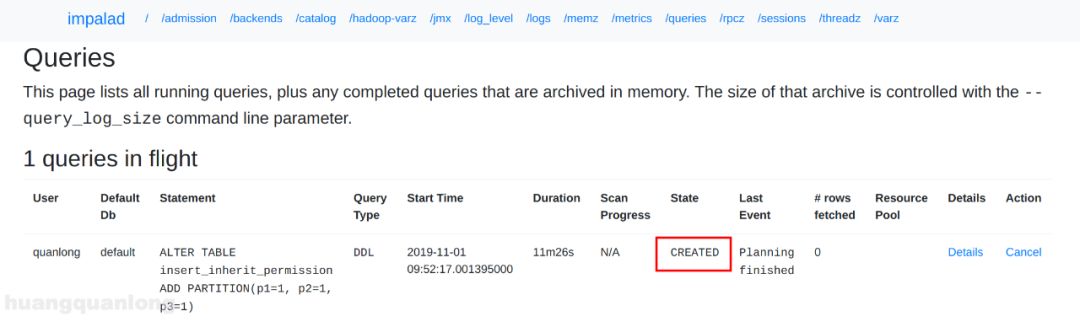

使用Alter Table语句新建一个partition时,查询一直不返回。在Coordinator网页上可以看到查询一直处于CREATED状态,且持续长达十几分钟:

这个查询设置了 sync_ddl=true,在忙碌的集群里是有可能十几分钟未完成的,但在我的测试环境里就不应该这样。

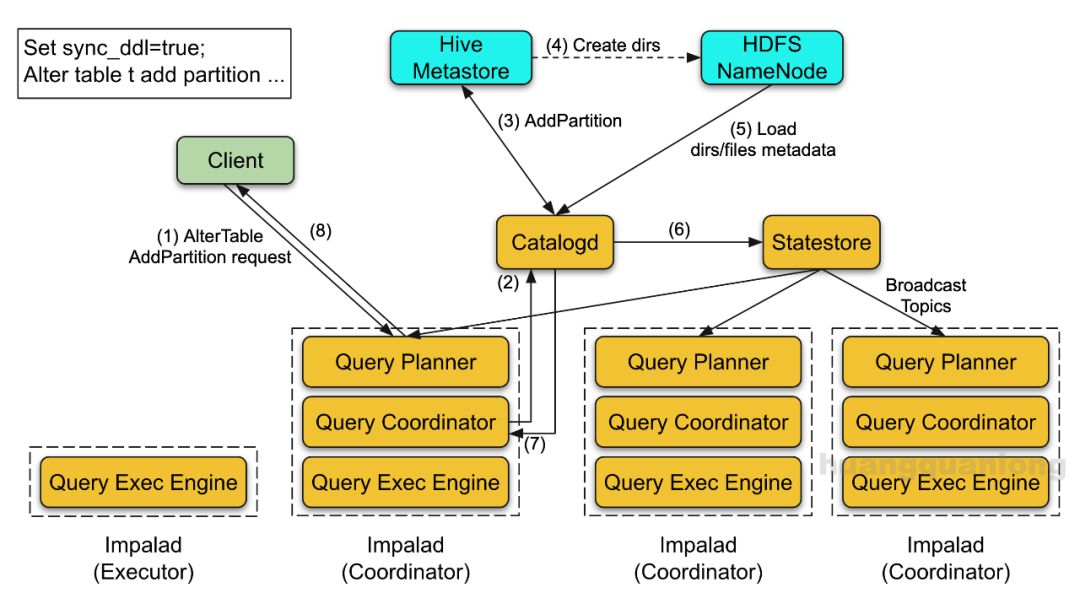

Alter Table Add Partition语句属于DDL,设置sync_ddl=true时,其执行流程如下:

图中各个步骤如下:

(1) 客户端发送查询请求

(2) Coordinator向Catalogd发送ExecDdl请求

(3) Catalogd向HMS发送AddPartition请求

(4) HMS创建Partition,并在HDFS中创建partition目录

(5) Catalogd向NameNode加载新Partition的文件信息

(6) Catalogd在执行完DDL后,将元数据的更新发送给Statestore。Statestore会将更新广播给所有Coordinator。

(7) Catalogd返回包含更新的topic version给Coordinator

(8) Coordinator等到所有Coordinator都接收到了该version的更新时再返回给客户端

卡顿可能发生在上面任何一步中,需要从头开始排查。

查找卡顿源头

I. 找到Coordinator卡住的线程

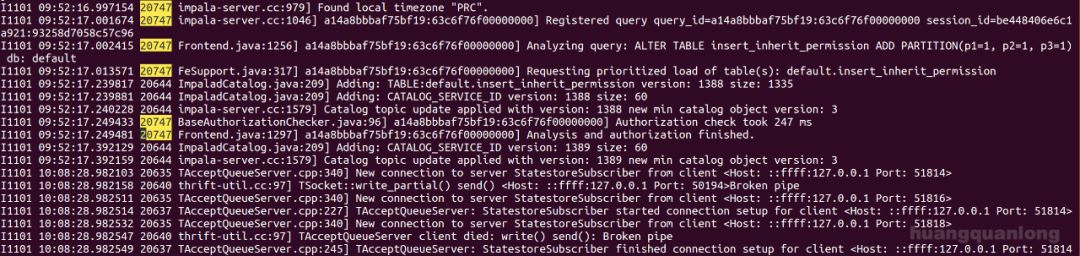

查询是发给Coordinator的,因此从它开始。先查日志,找到这个查询相关的log。每个查询在被解析的时候都会打一行 “Analyzing ...” 这样的日志,借此找到查询:

$ grep -i analyzing /tmp/impalad_node2.INFO | grep 'ALTER TABLE insert_inherit_permission'

I1101 09:52:17.002415 20747 Frontend.java:1256] a14a8bbbaf75bf19:63c6f76f00000000] Analyzing query: ALTER TABLE insert_inherit_permission ADD PARTITION(p1=1, p2=1, p3=1) db: default我的查询是 "ALTER TABLE insert_inherit_permission ADD PARTITION(p1=1, p2=1, p3=1)",在grep的时候可以加上些关键字快速定位。在我的测试环境中,日志是在 /tmp/impalad_node2.INFO,一般在CDH集群中,日志是在 /var/log/impalad/impalad.INFO。

日志中第三列就是线程id,因此FE处理这个查询的线程id是20747。可以看下这个线程所有的log,看到最后是停在 "Analysis and authorization finished":

既然已经做完语义分析和权限鉴定了,查询是卡在哪里呢?

先通过 jstack 看是否有相关信息,jstack输出中的nid就是十六进制的线程id,在我的例子中并没有发现线程20747的堆栈,因此要查看所有BE的线程了。BE是C++写的,理论上我们可以通过gdb attach上impalad,然后打出线程堆栈。但这样会停住整个impalad进程,可能会导致心跳超时而查询被取消的情况。为了不污染现场环境,可以使用gcore触发一次core dump。

$ gcore

usage: gcore [-o filename] pid

$ sudo gcore -o impalad_core 19731

......

[New LWP 20697]

[New LWP 20747]

[Thread debugging using libthread_db enabled]

Using host libthread_db library "/lib/x86_64-linux-gnu/libthread_db.so.1".

Installing openjdk unwinder

Traceback (most recent call last):

File "/usr/share/gdb/auto-load/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/amd64/server/libjvm.so-gdb.py", line 52, in <module>

class Types(object):

File "/usr/share/gdb/auto-load/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/amd64/server/libjvm.so-gdb.py", line 66, in Types

nmethodp_t = gdb.lookup_type('nmethod').pointer()

gdb.error: No type named nmethod.

pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

185 ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S: No such file or directory.

warning: target file /proc/19731/cmdline contained unexpected null characters

Saved corefile impalad_core.19731Dump的时候会被形如 "[New LWP xxxxx]" 的输出刷屏,还可能会有warning,但不用管,只要最后是成功的就行了。生成的core文件一般会比较大,因为把进程的内存也dump下来了。比如在我的开发环境中,core文件就有12GB。

$ ll -h impalad_core.19731

-rw-r--r-- 1 root root 12G 11月 1 10:08 impalad_core.19731生产环境中的内存可能会更大,仍用core dump就不太合适了,后面我们再介绍其它的办法。有了core文件后,就可以用gdb来分析了。可能会占用大量内存,因此在生产环境中最好换台机器做分析。

$ gdb -c impalad_core.19731 /home/quanlong/workspace/Impala/be/build/latest/service/impalad

GNU gdb (Ubuntu 7.11.1-0ubuntu1~16.5) 7.11.1

Copyright (C) 2016 Free Software Foundation, Inc.

License GPLv3+: GNU GPL version 3 or later <http://gnu.org/licenses/gpl.html>

This is free software: you are free to change and redistribute it.

There is NO WARRANTY, to the extent permitted by law. Type "show copying"

and "show warranty" for details.

This GDB was configured as "x86_64-linux-gnu".

Type "show configuration" for configuration details.

For bug reporting instructions, please see:

<http://www.gnu.org/software/gdb/bugs/>.

Find the GDB manual and other documentation resources online at:

<http://www.gnu.org/software/gdb/documentation/>.

For help, type "help".

Type "apropos word" to search for commands related to "word"...

Reading symbols from /home/quanlong/workspace/Impala/be/build/latest/service/impalad...done.

[New LWP 19731]

[New LWP 19762]

...

[New LWP 20697]

[New LWP 20747]

[Thread debugging using libthread_db enabled]

Using host libthread_db library "/lib/x86_64-linux-gnu/libthread_db.so.1".

Core was generated by `/home/quanlong/workspace/Impala/be/build/latest/service/impalad'.

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

185 ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S: No such file or directory.

[Current thread is 1 (Thread 0x7f2f3fde48c0 (LWP 19731))]

Installing openjdk unwinder

Traceback (most recent call last):

File "/usr/share/gdb/auto-load/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/amd64/server/libjvm.so-gdb.py", line 52, in <module>

class Types(object):

File "/usr/share/gdb/auto-load/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/amd64/server/libjvm.so-gdb.py", line 66, in Types

nmethodp_t = gdb.lookup_type('nmethod').pointer()

gdb.error: No type named nmethod.

(gdb)找到线程20747并查看堆栈,gdb里的线程编号并不是线程id,但thread指令只能跳到指定编号的线程,因此我们要先用 "thread find 20747" 找到线程编号,然后再切到那个线程用bt指令打印堆栈:

(gdb) thread find 20747

Thread 285 has target id 'Thread 0x7f2e8f3f2700 (LWP 20747)'

(gdb) thread 285

[Switching to thread 285 (Thread 0x7f2e8f3f2700 (LWP 20747))]

#0 0x00007f2f3f7da87f in __libc_recv (fd=403, buf=0x10e9e000, n=131072, flags=0) at ../sysdeps/unix/sysv/linux/x86_64/recv.c:28

28 ../sysdeps/unix/sysv/linux/x86_64/recv.c: No such file or directory.

(gdb) bt

#0 0x00007f2f3f7da87f in __libc_recv (fd=403, buf=0x10e9e000, n=131072, flags=0) at ../sysdeps/unix/sysv/linux/x86_64/recv.c:28

#1 0x0000000004ccd3af in apache::thrift::transport::TSocket::read(unsigned char*, unsigned int) ()

......输出的结果会比较长,如图所示:

最重要的是划红线的部分,也就是这块:

#15 0x0000000002513982 in impala::ClientConnection<impala::CatalogServiceClientWrapper>::DoRpc

#16 0x000000000250fb8c in impala::ClientConnection<impala::CatalogServiceClientWrapper>::DoRpcWithRetry

#17 0x000000000250cced in impala::CatalogOpExecutor::Exec

#18 0x00000000022b9012 in impala::ClientRequestState::ExecDdlRequest

#19 0x00000000022b4253 in impala::ClientRequestState::Exec

#20 0x0000000002241cea in impala::ImpalaServer::ExecuteInternal

#21 0x000000000224131d in impala::ImpalaServer::Execute所以Coordinator是向Catalogd发了RPC请求,正在等待结果返回。堆栈里有代码行号,也可查看相关代码。

现在可以确认Coordinator是卡在RPC上了,接下来需要确认Catalogd在做什么。

II. 找到Catalogd卡住的线程

Catalogd的日志里并没有发现明显的问题,还是得看线程堆栈。我这里catalogd比较闲,jstack结果中只有一个是跟ExecDdl相关的:

"Thread-10" #43 prio=5 os_prio=0 tid=0x0000000012324800 nid=0x510c in Object.wait() [0x00007f6808952000]

java.lang.Thread.State: TIMED_WAITING (on object monitor)

at java.lang.Object.wait(Native Method)

at org.apache.impala.catalog.CatalogServiceCatalog.waitForSyncDdlVersion(CatalogServiceCatalog.java:2635)

- locked <0x0000000722aff010> (a org.apache.impala.catalog.TopicUpdateLog)

at org.apache.impala.service.CatalogOpExecutor.execDdlRequest(CatalogOpExecutor.java:414)

at org.apache.impala.service.JniCatalog.execDdl(JniCatalog.java:167)因此源头就是卡在FE的 CatalogServiceCatalog.waitForSyncDdlVersion() 里,线程id是0x510c,也就是20748. 回看日志,最后一行输出是 "Loaded file and block metadata for ..."

因此该表的metadata加载已经完成了,进入waitForSyncDdlVersion是因为该查询使用了sync_ddl=true,catalogd需要等到更新被发送出去才能知道对应的statestore topic version,才能返回给coordinator。关于metadata的广播及sync_ddl的实现篇幅较长,我们在后续的文章中再介绍。

到此我的诊断就结束了,可以结合代码去debug。然而在生产环境中catalogd一般很忙碌,不一定能直接锁定jstack中的线程。有一个想法是通过coordinator线程确定跟catalogd连接的端口,再以此找到catalogd对应的线程,但fd是所有线程共享的,并没法通过端口来找到线程。因此目前也没有比较好的办法,好在catalogd的大部分逻辑都是java实现的,可以多打几次jstack,对比看哪些线程一直存在来排查。

使用Minidump替代Core Dump

生产环境中的impalad内存一般会比较大,如果使用core dump,生成的core文件也会很大,不利于分析。实际上我们感兴趣的是各个线程的堆栈,并不需要所有的内存数据。Google Breakpad 提供的 Minidumps 提供了新的解决方案,它只会导出崩溃原因、各个线程不超过8KB的栈内存、运行环境(OS、CPU等)和进程信息(加载的模块等)。这样导出的 Minidump 文件就非常小,在本例中,core dump文件的大小是 12GB,minidump 文件的大小却只有 3MB!

使用要求

Impala版本不低于2.7:Impala 2.7(对应的CDH版本是cdh5.9)开始支持外界触发的Minidump,只需要给进程发送SIGUSR1信号。

编译对应版本的Impala源码:需要使用 Debug 模式编译才能保留所有符号信息(symbol),还需要使用toolchain中的breakpad工具来处理minidump。另外编译环境要跟线上环境一致,比如线上环境为ubuntu16.04,编译时也要找ubuntu16.04的机器来做。关于如何编译,可参考文档:https://cwiki.apache.org/confluence/display/IMPALA/Building+Impala

使用方法

1. 生成Minidump

Wrote minidump to /tmp/minidumps/impalad/2d32f167-9189-4fdc-9fc4ef9d-624b3a1d.dmp把这个minidump文件传送到编译Impala的机器上。

2. 生成符号信息

在编译Impala的目录(下称IMPALA_HOME)里,可以找到 bin/dump_breakpad_symbols.py,用它来生成breakpad形式的符号信息,假设说我们希望把符号信息存到 /tmp/syms,指令如下:

$ cd $IMPALA_HOME

$ source bin/impala-config.sh

$ bin/dump_breakpad_symbols.py -f be/build/latest/service/libfesupport.so be/build/latest/service/impalad be/build/latest/util/libloggingsupport.so -d /tmp/syms

INFO:root:Processing binary file: be/build/latest/service/libfesupport.so

INFO:root:Processing binary file: be/build/latest/service/impalad

INFO:root:Processing binary file: be/build/latest/util/libloggingsupport.so生成的文件大小是421MB,比Minidump文件还大一些,因为里面有各种文件名、函数名等:

$ du -sh /tmp/syms

421M /tmp/syms

$ head /tmp/syms/impalad/0894679AAB4BBE9079BA0C593F2114890/impalad.sym

MODULE Linux x86_64 0894679AAB4BBE9079BA0C593F2114890 impalad

INFO CODE_ID 9A6794084BAB90BE79BA0C593F211489

FILE 0 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/exception.c

FILE 1 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/hdfs.c

FILE 2 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/jclasses.c

FILE 3 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/jni_helper.c

FILE 4 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/os/posix/mutexes.c

FILE 5 /container.redhat6/build/cdh/hadoop/3.0.0-cdh6.x-SNAPSHOT/rpm/BUILD/hadoop-3.0.0-cdh6.x-SNAPSHOT/hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfs/os/posix/thread_local_storage.c

FILE 6 /home/quanlong/workspace/Impala/be/generated-sources/gen-cpp/BackendGflags_types.cpp

FILE 7 /home/quanlong/workspace/Impala/be/generated-sources/gen-cpp/BackendGflags_types.h3. 解析Minidump

使用Impala toolchain里的breakpad工具,假设对应的版本是 IMPALA_BREAKPAD_VERSION, 指令如下:

$IMPALA_TOOLCHAIN/breakpad-$IMPALA_BREAKPAD_VERSION/bin/minidump_stackwalk $MINIDUMP_FILE $BREAKPAD_SYMBOLS > $RESULT_FILE在本例中就是:

$ $IMPALA_HOME/toolchain/breakpad-97a98836768f8f0154f8f86e5e14c2bb7e74132e-p2/bin/minidump_stackwalk /tmp/minidumps/impalad/2d32f167-9189-4fdc-9fc4ef9d-624b3a1d.dmp /tmp/syms > /tmp/resolved.txt

......

2019-11-03 08:33:22: basic_code_modules.cc:110: INFO: No module at 0x0

2019-11-03 08:33:22: basic_code_modules.cc:110: INFO: No module at 0x0

2019-11-03 08:33:22: minidump_processor.cc:326: INFO: Processed /tmp/minidumps/impalad/2d32f167-9189-4fdc-9fc4ef9d-624b3a1d.dmp

2019-11-03 08:33:22: minidump.cc:4980: INFO: Minidump closing minidump中间会有刷屏的输出,只要最终解析成功就行了。生成的文件内容如下:

Operating system: Linux

0.0.0 Linux 4.15.0-66-generic #75~16.04.1-Ubuntu SMP Tue Oct 1 14:01:08 UTC 2019 x86_64

CPU: amd64

family 6 model 158 stepping 10

1 CPU

GPU: UNKNOWN

Crash reason: DUMP_REQUESTED

Crash address: 0x4d78597

Process uptime: not available

Thread 0 (crashed)

0 impalad!google_breakpad::ExceptionHandler::WriteMinidump() + 0x57

rax = 0x00007ffeed518510 rdx = 0x0000000000000000

rcx = 0x0000000004d7857f rbx = 0x0000000000000000

rsi = 0x0000000000000001 rdi = 0x00007ffeed5183a8

rbp = 0x00007ffeed518ab0 rsp = 0x00007ffeed518310

r8 = 0x0000000000000000 r9 = 0x0000000000000024

r10 = 0x00007f2f3f7db340 r11 = 0x00007ffeed518980

r12 = 0x00007ffeed518970 r13 = 0x0000000002428f99

r14 = 0x0000000000000000 r15 = 0x00007ffeed5189d0

rip = 0x0000000004d78597

Found by: given as instruction pointer in context

1 impalad!google_breakpad::ExceptionHandler::WriteMinidump(std::string const&, bool (*)(google_breakpad::MinidumpDescriptor const&, void*, bool), void*) + 0xf0

rbx = 0x00007f2f3caf0140 rbp = 0x00007ffeed518ab0

rsp = 0x00007ffeed518960 r12 = 0x00007ffeed518970

r13 = 0x0000000002428f99 r14 = 0x0000000000000000

r15 = 0x00007ffeed5189d0 rip = 0x0000000004d78e60

Found by: call frame info

2 impalad!impala::HandleSignal [minidump.cc : 103 + 0x1e]

rbx = 0x0000000000000000 rbp = 0x00007ffeed518af0

rsp = 0x00007ffeed518ac0 r12 = 0x00007ffeed519250

r13 = 0x00000000111263d0 r14 = 0x0000000000006d62

r15 = 0x0000000000000000 rip = 0x00000000024290ec

Found by: call frame info

3 libpthread-2.23.so + 0x11390

rbx = 0x0000000000000000 rbp = 0x00007ffeed519240

rsp = 0x00007ffeed518b00 r12 = 0x00007ffeed519250

r13 = 0x00000000111263d0 r14 = 0x0000000000006d62

r15 = 0x0000000000000000 rip = 0x00007f2f3f7db390

Found by: call frame info

4 impalad!boost::thread::join_noexcept() + 0x5c

rbp = 0x00007ffeed519260 rsp = 0x00007ffeed519250

rip = 0x0000000003c6a13c

Found by: previous frame's frame pointer

...先是OS、CPU等环境信息,然后就是各个线程的堆栈信息,每个堆栈的各个寄存器值也都显示出来了。第一个线程是breakpad做minidump的线程,因为Crash reason是DUMP_REQUESTED(实际上进程还活着,并没有crash)。如果是在线上环境进程崩溃,则第一个线程会是导致崩溃的线程。

回到Coordinator卡顿的分析,我们先是从日志找到了线程id为20747:

I1101 09:52:17.002415 20747 Frontend.java:1256] a14a8bbbaf75bf19:63c6f76f00000000] Analyzing query: ALTER TABLE insert_inherit_permission ADD PARTITION(p1=1, p2=1, p3=1) db: default可惜breakpad并没有打出像gdb一样的LWP信息,线程的编号也跟gdb的不一样。但据观察,线程id(即LWP id)一般会出现在线程堆栈里前几个调用的寄存器里,经常可以借此找到线程。比如我们关心的线程id是20747,转换成16进制就是510b,在输出文件 /tmp/resolved.txt 里搜510b,刚好只有一个线程其堆栈里前几个调用的寄存器rbx值为 0x000000000000510b,而这个线程的堆栈也正就是 ExecDdl 的堆栈:

Thread 283

...

13 impalad!impala::CatalogServiceClient::recv_ExecDdl(impala::TDdlExecResponse&) [CatalogService.cpp : 1908 + 0x1f]

rbx = 0x0000000000000000 rbp = 0x00007f2e8f3ecf20

rsp = 0x00007f2e8f3ece60 r12 = 0x0000000000000000

r13 = 0x00000000110d1ba8 r14 = 0x0000000002512a7c

r15 = 0x0000000000000000 rip = 0x0000000001c319a4

Found by: call frame info

14 impalad!impala::CatalogServiceClientWrapper::ExecDdl(impala::TDdlExecResponse&, impala::TDdlExecRequest const&, bool*) [catalog-service-client-wrapper.h : 47 + 0x13]

rbx = 0x0000000000000000 rbp = 0x00007f2e8f3ecf90

rsp = 0x00007f2e8f3ecf30 r12 = 0x0000000000000000

r13 = 0x00000000110d1ba8 r14 = 0x0000000002512a7c

r15 = 0x0000000000000000 rip = 0x0000000002512b51

Found by: call frame info

15 impalad!impala::Status impala::ClientConnection<impala::CatalogServiceClientWrapper>::DoRpc<void (impala::CatalogServiceClientWrapper::*)(impala::TDdlExecResponse&, impala::TDdlExecRequest const&, bool*), impala::TDdlExecRequest, impala::TDdlExecResponse>(void (impala::CatalogServiceClientWrapper::* const&)(impala::TDdlExecResponse&, impala::TDdlExecRequest const&, bool*), impala::TDdlExecRequest const&, impala::TDdlExecResponse*) [client-cache.h : 243 + 0x31]

rbx = 0x0000000000000000 rbp = 0x00007f2e8f3ed070

rsp = 0x00007f2e8f3ecfa0 r12 = 0x0000000000000000

r13 = 0x00000000110d1ba8 r14 = 0x0000000002512a7c

r15 = 0x0000000000000000 rip = 0x0000000002513982

Found by: call frame info

...

31 impalad!boost::_bi::bind_t<void, boost::_mfi::mf2<void, impala::ThriftThread, boost::shared_ptr<apache::thrift::concurrency::Runnable>, impala::Promise<unsigned long, (impala::PromiseMode)0>*>, boost::_bi::list3<boost::_bi::value<impala::ThriftThread*>, boost::_bi::value<boost::shared_ptr<apache::thrift::concurrency::Runnable> >, boost::_bi::value<impala::Promise<unsigned long, (impala::PromiseMode)0>*> > >::operator()() [bind_template.hpp : 20 + 0x22]

rbx = 0x000000000000510b rbp = 0x00007f2e8f3f18a0

rsp = 0x00007f2e8f3f1860 r12 = 0x0000000000000000

r13 = 0x00007f2e903f9c60 r14 = 0x000000000c7b95c8

r15 = 0x000000000c2822b0 rip = 0x0000000001fe8aa9

Found by: call frame info

32 impalad!boost::detail::function::void_function_obj_invoker0<boost::_bi::bind_t<void, boost::_mfi::mf2<void, impala::ThriftThread, boost::shared_ptr<apache::thrift::concurrency::Runnable>, impala::Promise<unsigned long, (impala::PromiseMode)0>*>, boost::_bi::list3<boost::_bi::value<impala::ThriftThread*>, boost::_bi::value<boost::shared_ptr<apache::thrift::concurrency::Runnable> >, boost::_bi::value<impala::Promise<unsigned long, (impala::PromiseMode)0>*> > >, void>::invoke(boost::detail::function::function_buffer&) [function_template.hpp : 153 + 0xc]

rbx = 0x000000000000510b rbp = 0x00007f2e8f3f18d0

rsp = 0x00007f2e8f3f18b0 r12 = 0x0000000000000000

r13 = 0x00007f2e903f9c60 r14 = 0x000000000c7b95c8

r15 = 0x000000000c2822b0 rip = 0x0000000001fe89bc

Found by: call frame info

33 impalad!boost::function0<void>::operator()() const [function_template.hpp : 767 + 0x11]

rbx = 0x000000000000510b rbp = 0x00007f2e8f3f1910

rsp = 0x00007f2e8f3f18e0 r12 = 0x0000000000000000

r13 = 0x00007f2e903f9c60 r14 = 0x000000000c7b95c8

r15 = 0x000000000c2822b0 rip = 0x0000000001f04e58

Found by: call frame info

34 impalad!impala::Thread::SuperviseThread(std::string const&, std::string const&, boost::function<void ()>, impala::ThreadDebugInfo const*, impala::Promise<long, (impala::PromiseMode)0>*) [thread.cc : 360 + 0xf]

rbx = 0x000000000000510b rbp = 0x00007f2e8f3f1c70

rsp = 0x00007f2e8f3f1920 r12 = 0x0000000000000000

r13 = 0x00007f2e903f9c60 r14 = 0x000000000c7b95c8

r15 = 0x000000000c2822b0 rip = 0x000000000247bf47

Found by: call frame info

...堆栈里同时也有代码的行号,感兴趣的朋友可以下载整个 resolved.txt 文件 (压缩后73KB) 研究研究:https://github.com/stiga-huang/BlogFiles/blob/master/Debugging%20on%20Stuck%20Query/resolved.txt.gz

在这之后就知道Coordinator是卡在等待ExecDdl的RPC返回了,后面同样可以对catalogd做分析。

总结

Impalad查询卡顿时,如果日志中无法发现异常,对于BE部分可以使用core dump或minidump来做线程堆栈分析,对于FE部分可以用jstack做分析。日志中的线程id对应gdb中的 LWP id 和 jstack 中的 nid (16进制),在minidump中找不到明显对应,可试图在寄存器值中匹配线程。

(注:BE是指Backend,主要是Impala的C++部分;FE是指Frontend,主要是Impala的Java部分)

指令回顾

gcore [-o filename] pid

gdb -c core_file program_binary

gdb指令

thread find thread_id (查找对应线程id在gdb中的线程编号)

thread id (跳到对应编号的线程)

bt (打印堆栈)

jstack pid

kill -s SIGUSR1 pid (触发impala进程生成minidump)

$IMPALA_HOME/bin/dump_breakpad_symbols.py -f binaries -d output_symbol_dir (生成Breakpad形式的符号文件)

$IMPALA_TOOLCHAIN/breakpad-$IMPALA_BREAKPAD_VERSION/bin/minidump_stackwalk $MINIDUMP_FILE $BREAKPAD_SYMBOLS > $RESULT_FILE (解析Minidump)

参考文档

https://cwiki.apache.org/confluence/display/IMPALA/Debugging+Impala+Core+Dumps+on+Another+System

https://cwiki.apache.org/confluence/display/IMPALA/Debugging+Impala+Minidumps

https://cwiki.apache.org/confluence/display/IMPALA/Building+Impala

作者简介:黄权隆,Cloudera研发工程师,Apache Impala PMC & Comitter,毕业于北大计算机系网络所数据库实验室,曾就职于Hulu大数据基础架构团队,负责大数据系统的维护和二次开发,主要负责Impala和HBase方向。现就职于Cloudera,专注于Impala开发。

以上是关于Impala查询卡顿分析案例的主要内容,如果未能解决你的问题,请参考以下文章

单表千亿电信大数据场景,使用Spark+CarbonData替换Impala案例