手把手教你搭建神经网络(使用Vision Transformer进行图像分类)

Posted 羽峰码字

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了手把手教你搭建神经网络(使用Vision Transformer进行图像分类)相关的知识,希望对你有一定的参考价值。

大家好,我是羽峰,今天要和大家分享的是一个基于Vision Transformer的图像分类研究。文章会把整个代码进行分割讲解,完整看完,相信你一定会有所收获。

欢迎关注“羽峰码字”

目录

1. 介绍

本示例实现了Alexey Dosovitskiy等人的 Vision Transformer (ViT) 模型。 该模型主要功能是进行图像分类,使用CIFAR-100数据集进行复现。 ViT模型可将Transformer体系结构自觉地应用于图像补丁序列,而无需使用卷积层。

此示例需要TensorFlow 2.4或更高版本以及TensorFlow Addons,可以使用以下命令进行安装:

pip install -U tensorflow-addons2. 前期准备

2.1 一些基本API

import numpy as np

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

import tensorflow_addons as tfa2.2 一些超参数的配置

learning_rate = 0.001

weight_decay = 0.0001

batch_size = 256

num_epochs = 100

image_size = 72 # We'll resize input images to this size

patch_size = 6 # Size of the patches to be extract from the input images

num_patches = (image_size // patch_size) ** 2

projection_dim = 64

num_heads = 4

transformer_units = [

projection_dim * 2,

projection_dim,

] # Size of the transformer layers

transformer_layers = 8

mlp_head_units = [2048, 1024] # Size of the dense layers of the final classifier3. 数据

3.1 准备数据

num_classes = 100

input_shape = (32, 32, 3)

(x_train, y_train), (x_test, y_test) = keras.datasets.cifar100.load_data()

print(f"x_train shape: x_train.shape - y_train shape: y_train.shape")

print(f"x_test shape: x_test.shape - y_test shape: y_test.shape")x_train shape: (50000, 32, 32, 3) - y_train shape: (50000, 1) x_test shape: (10000, 32, 32, 3) - y_test shape: (10000, 1)

3.2 数据扩充

data_augmentation = keras.Sequential(

[

layers.experimental.preprocessing.Normalization(),

layers.experimental.preprocessing.Resizing(image_size, image_size),

layers.experimental.preprocessing.RandomFlip("horizontal"),

layers.experimental.preprocessing.RandomRotation(factor=0.02),

layers.experimental.preprocessing.RandomZoom(

height_factor=0.2, width_factor=0.2

),

],

name="data_augmentation",

)

# Compute the mean and the variance of the training data for normalization.

data_augmentation.layers[0].adapt(x_train)4. 多层感知器(MLP)

def mlp(x, hidden_units, dropout_rate):

for units in hidden_units:

x = layers.Dense(units, activation=tf.nn.gelu)(x)

x = layers.Dropout(dropout_rate)(x)

return x5. 将patch创建实施为一层

class Patches(layers.Layer):

def __init__(self, patch_size):

super(Patches, self).__init__()

self.patch_size = patch_size

def call(self, images):

batch_size = tf.shape(images)[0]

patches = tf.image.extract_patches(

images=images,

sizes=[1, self.patch_size, self.patch_size, 1],

strides=[1, self.patch_size, self.patch_size, 1],

rates=[1, 1, 1, 1],

padding="VALID",

)

patch_dims = patches.shape[-1]

patches = tf.reshape(patches, [batch_size, -1, patch_dims])

return patches让我们显示示例图像的patch

import matplotlib.pyplot as plt

plt.figure(figsize=(4, 4))

image = x_train[np.random.choice(range(x_train.shape[0]))]

plt.imshow(image.astype("uint8"))

plt.axis("off")

resized_image = tf.image.resize(

tf.convert_to_tensor([image]), size=(image_size, image_size)

)

patches = Patches(patch_size)(resized_image)

print(f"Image size: image_size X image_size")

print(f"Patch size: patch_size X patch_size")

print(f"Patches per image: patches.shape[1]")

print(f"Elements per patch: patches.shape[-1]")

n = int(np.sqrt(patches.shape[1]))

plt.figure(figsize=(4, 4))

for i, patch in enumerate(patches[0]):

ax = plt.subplot(n, n, i + 1)

patch_img = tf.reshape(patch, (patch_size, patch_size, 3))

plt.imshow(patch_img.numpy().astype("uint8"))

plt.axis("off")Image size: 72 X 72 Patch size: 6 X 6 Patches per image: 144 Elements per patch: 108

6 实现patch编码层

PatchEncoder层将通过将patch投影到大小为projection_dim的向量中来线性地对其进行变换。 此外,它还向嵌入的向量中添加了可学习的位置。

class PatchEncoder(layers.Layer):

def __init__(self, num_patches, projection_dim):

super(PatchEncoder, self).__init__()

self.num_patches = num_patches

self.projection = layers.Dense(units=projection_dim)

self.position_embedding = layers.Embedding(

input_dim=num_patches, output_dim=projection_dim

)

def call(self, patch):

positions = tf.range(start=0, limit=self.num_patches, delta=1)

encoded = self.projection(patch) + self.position_embedding(positions)

return encoded7.建立ViT模型

ViT模型由多个Transformer模块组成,这些模块使用layer.MultiHeadAttention层作为应用于补丁(patch)序列的自注意力机制。 Transformer块产生一个[batch_size,num_patches,projection_dim]张量,该张量通过带有softmax的分类器头进行处理以产生最终的类概率输出。

与 paper中描述的技术不同,后者将可学习的嵌入添加到已编码补丁的序列中以用作图像表示,最终Transformer块的所有输出均经过layer.Flatten()整形并用作输入的图像表示。请注意,也可以改用layers.GlobalAveragePooling1D图层来聚合Transformer块的输出,尤其是在色块数量和投影尺寸较大的情况下。

def create_vit_classifier():

inputs = layers.Input(shape=input_shape)

# Augment data.

augmented = data_augmentation(inputs)

# Create patches.

patches = Patches(patch_size)(augmented)

# Encode patches.

encoded_patches = PatchEncoder(num_patches, projection_dim)(patches)

# Create multiple layers of the Transformer block.

for _ in range(transformer_layers):

# Layer normalization 1.

x1 = layers.LayerNormalization(epsilon=1e-6)(encoded_patches)

# Create a multi-head attention layer.

attention_output = layers.MultiHeadAttention(

num_heads=num_heads, key_dim=projection_dim, dropout=0.1

)(x1, x1)

# Skip connection 1.

x2 = layers.Add()([attention_output, encoded_patches])

# Layer normalization 2.

x3 = layers.LayerNormalization(epsilon=1e-6)(x2)

# MLP.

x3 = mlp(x3, hidden_units=transformer_units, dropout_rate=0.1)

# Skip connection 2.

encoded_patches = layers.Add()([x3, x2])

# Create a [batch_size, projection_dim] tensor.

representation = layers.LayerNormalization(epsilon=1e-6)(encoded_patches)

representation = layers.Flatten()(representation)

representation = layers.Dropout(0.5)(representation)

# Add MLP.

features = mlp(representation, hidden_units=mlp_head_units, dropout_rate=0.5)

# Classify outputs.

logits = layers.Dense(num_classes)(features)

# Create the Keras model.

model = keras.Model(inputs=inputs, outputs=logits)

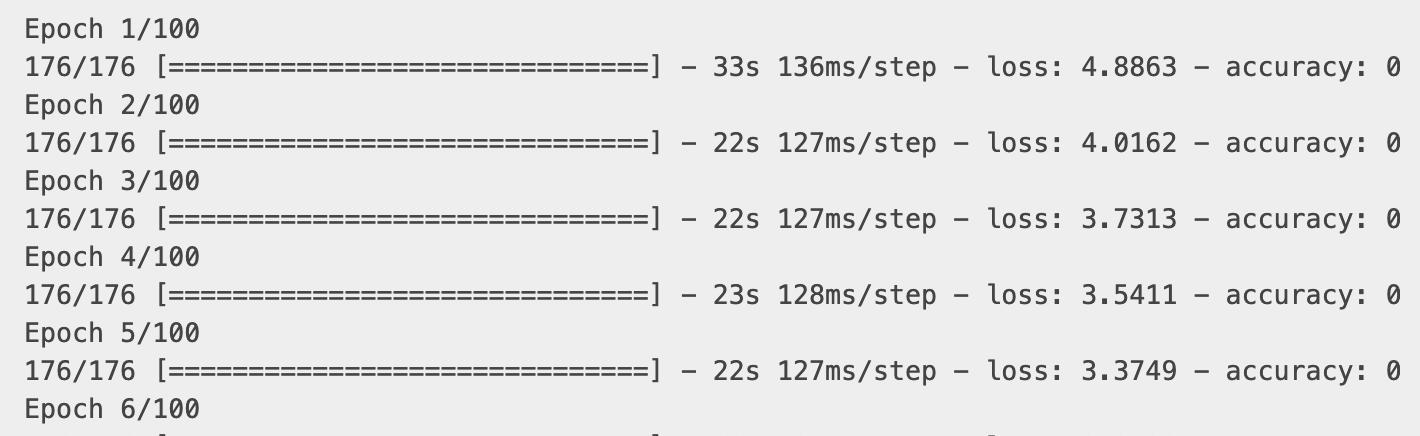

return model8. 编译,训练和评估模型

def run_experiment(model):

optimizer = tfa.optimizers.AdamW(

learning_rate=learning_rate, weight_decay=weight_decay

)

model.compile(

optimizer=optimizer,

loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),

metrics=[

keras.metrics.SparseCategoricalAccuracy(name="accuracy"),

keras.metrics.SparseTopKCategoricalAccuracy(5, name="top-5-accuracy"),

],

)

checkpoint_filepath = "/tmp/checkpoint"

checkpoint_callback = keras.callbacks.ModelCheckpoint(

checkpoint_filepath,

monitor="val_accuracy",

save_best_only=True,

save_weights_only=True,

)

history = model.fit(

x=x_train,

y=y_train,

batch_size=batch_size,

epochs=num_epochs,

validation_split=0.1,

callbacks=[checkpoint_callback],

)

model.load_weights(checkpoint_filepath)

_, accuracy, top_5_accuracy = model.evaluate(x_test, y_test)

print(f"Test accuracy: round(accuracy * 100, 2)%")

print(f"Test top 5 accuracy: round(top_5_accuracy * 100, 2)%")

return history

vit_classifier = create_vit_classifier()

history = run_experiment(vit_classifier)

经过100个周期后,ViT模型的测试数据达到了约55%的精度和82%的top-5精度。 这些在CIFAR-100数据集上不是竞争性结果,因为从零开始对同一数据进行训练的ResNet50V2可以达到67%的准确度。

请注意,通过使用JFT-300M数据集对ViT模型进行预训练,然后在目标数据集上进行微调,可以实现paper 中报告的最新结果。 要在不进行预训练的情况下提高模型质量,您可以尝试对模型进行更多的训练,使用更多的Transformer图层,调整输入图像的大小,更改色块大小,或增加投影尺寸。 此外,如本文所述,模型的质量不仅受体系结构选择的影响,还受学习速率计划,优化器,权重衰减等参数的影响。在实践中,建议对ViT进行微调 使用大型高分辨率数据集进行预训练的模型。

至此,今天的分享结束了。强烈建议新手能按照上述步骤一步步实践下来,必有收获。

今天代码翻译于:https://keras.io/examples/vision/image_classification_with_vision_transformer/,新入门的小伙伴可以好好看看这个网站,很基础,很适合新手。

当然,这里不得不重点推荐一下这三个网站:

https://tensorflow.google.cn/tutorials/keras/classification

https://keras.io/examples

https://keras.io/zh/

其中keras中文网址中能找到各种API定义,都是中文通俗易懂,如果想看英文直接到https://keras.io/,就可以,这里也有很多案例,也是很基础明白。入门时可以看看。

我是羽峰,还在成长道路上摸爬滚打的小白,希望能够结识一起成长的你,公众号“羽峰码字”,欢迎来撩。

以上是关于手把手教你搭建神经网络(使用Vision Transformer进行图像分类)的主要内容,如果未能解决你的问题,请参考以下文章