Web Crawler Final Project

Posted by 宇健



import re

import jieba
import matplotlib.pyplot as plt
import requests
from bs4 import BeautifulSoup
from datetime import datetime
from wordcloud import WordCloud

# Fetch the details of a single news article
def getNewsDetail(newsUrl):
    res = requests.get(newsUrl)
    res.encoding = 'gb2312'
    soupd = BeautifulSoup(res.text, 'html.parser')
    # The header line holds both the publication time and the source
    timeSource = soupd.select('.post_time_source')[0].text
    detail = {
        'title': soupd.select('#epContentLeft')[0].h1.text,
        'newsUrl': newsUrl,
        'time': datetime.strptime(
            re.search(r'(\d{4}.\d{2}.\d{2}\s\d{2}.\d{2}.\d{2})', timeSource).group(1),
            '%Y-%m-%d %H:%M:%S'),
        'source': re.search('来源:(.*)', timeSource).group(1),
        'content': soupd.select('#endText')[0].text,
    }
    return detail
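The time and source parsing in getNewsDetail() can be tried offline on a plain string. The following is a minimal sketch, not the author's code: the helper name `parse_time_and_source` and the sample header text are made up for illustration, and it assumes the header looks like `YYYY-MM-DD HH:MM:SS 来源: name`. Unlike the function above, it returns `None` instead of raising when a pattern does not match, and it accepts both the ASCII and the full-width colon after 来源.

```python
import re
from datetime import datetime


def parse_time_and_source(text):
    """Extract the publication time and the source name from a header string.

    Hypothetical helper for illustration; returns None for a missing part.
    """
    time_match = re.search(r'(\d{4}-\d{2}-\d{2}\s\d{2}:\d{2}:\d{2})', text)
    # Accept either the ASCII colon or the full-width colon after the label
    source_match = re.search(r'来源[::]\s*(.*)', text)
    published = None
    if time_match:
        published = datetime.strptime(time_match.group(1), '%Y-%m-%d %H:%M:%S')
    source = source_match.group(1).strip() if source_match else None
    return published, source


sample = '2018-04-27 09:30:15 来源: 网易科技'  # made-up header text
print(parse_time_and_source(sample))
```

Returning `None` rather than calling `.group(1)` on a failed match lets the caller decide whether to skip an article whose header has an unexpected layout.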

# Segment the news text with jieba and extract keywords
def getKeyWords():
    with open('news.txt', 'r', encoding='utf-8') as f:
        content = f.read()
    # Keep only Chinese characters, join them into punctuation-free text,
    # segment it with jieba, and deduplicate the resulting words
    wordSet = set(jieba.lcut(''.join(re.findall('[\u4e00-\u9fa5]', content))))
    # Count how often each word occurs, dropping meaningless single-character words
    wordDict = {word: content.count(word) for word in wordSet if len(word) >= 2}
    # Sort by frequency, most frequent first
    dictList = sorted(wordDict.items(), key=lambda item: item[1], reverse=True)
    keyWords = [word for word, count in dictList]
    writekeyword(keyWords)
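The ranking step in getKeyWords() can also be written with `collections.Counter`. This is a sketch under stated assumptions rather than a drop-in replacement: `rank_keywords` is a hypothetical name, the token list stands in for the output of jieba, and the function counts token occurrences, whereas the code above counts substring occurrences in the raw text, so the two can rank words differently.

```python
from collections import Counter


def rank_keywords(tokens, min_length=2):
    """Return the distinct tokens ordered from most to least frequent.

    Tokens shorter than min_length (single characters by default) are dropped.
    """
    counts = Counter(token for token in tokens if len(token) >= min_length)
    return [word for word, _ in counts.most_common()]


# Made-up tokens standing in for segmented news text
tokens = ['网络', '的', '手机', '网络', '是', '手机', '网络', '数据']
print(rank_keywords(tokens))  # ['网络', '手机', '数据']
```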

# Append the news content to a file
def writeNews(pagedetail):
    # Write to news.txt, the file that getKeyWords() reads
    with open('news.txt', 'a', encoding='utf-8') as f:
        for detail in pagedetail:
            f.write(detail['content'])

# Write the keywords to the word cloud file
def writekeyword(keywords):
    # Write to keywords.txt, the file that getWordCloud() reads
    with open('keywords.txt', 'a', encoding='utf-8') as f:
        for word in keywords:
            f.write(' ' + word)

# Fetch all news articles on one list page
def getListPage(listUrl):
    res = requests.get(listUrl)
    res.encoding = 'utf-8'
    soup = BeautifulSoup(res.text, 'html.parser')
    pagedetail = []  # Details of every article on this page
    for news in soup.select('#news-flow-content')[0].select('li'):
        newsdetail = getNewsDetail(news.select('a')[0]['href'])  # Call getNewsDetail() for each article
        pagedetail.append(newsdetail)
    return pagedetail
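As written, getListPage() stops at the first article whose page lacks one of the expected elements, because `select(...)[0]` raises `IndexError` and a failed `re.search` raises `AttributeError`. One possible way to keep crawling is sketched below. The helper `collect_details` and the fake fetcher are hypothetical and not part of the original script; the fetcher is passed in as a parameter so the example runs without network access.

```python
def collect_details(urls, fetch_detail):
    """Call fetch_detail on every URL, skipping pages that fail to parse.

    Returns the parsed details plus a list of (url, error) pairs for review.
    """
    details, failed = [], []
    for url in urls:
        try:
            details.append(fetch_detail(url))
        except (IndexError, AttributeError, ValueError) as exc:
            # IndexError: a selector matched nothing; AttributeError: a regex
            # did not match; ValueError: strptime rejected the timestamp
            failed.append((url, repr(exc)))
    return details, failed


def fake_fetch(url):
    """Stand-in for getNewsDetail(); fails on URLs containing 'bad'."""
    if 'bad' in url:
        raise IndexError('list index out of range')
    return {'newsUrl': url, 'content': 'text'}


details, failed = collect_details(['http://example.com/a', 'http://example.com/bad'], fake_fetch)
print(len(details), len(failed))  # 1 1
```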

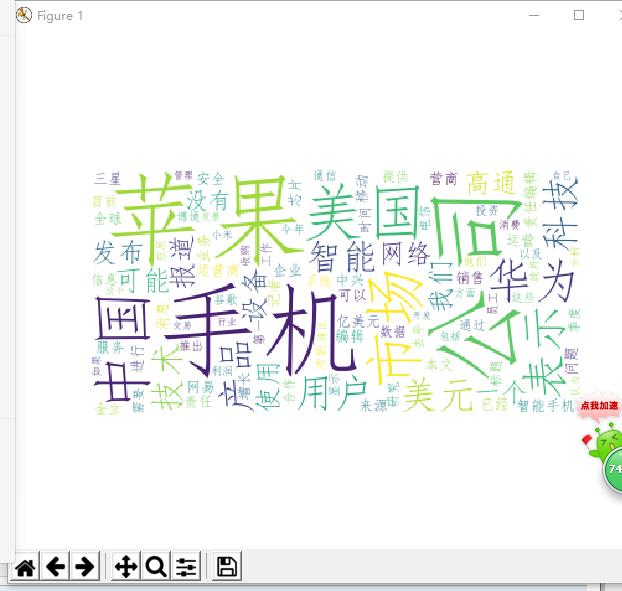

# Generate the word cloud image
def getWordCloud():
    with open('keywords.txt', 'r', encoding='utf-8') as f:
        keywords = f.read()  # Read the word cloud file
    # Build the word cloud with a font that can render Chinese characters
    wc = WordCloud(font_path=r'C:\Windows\Fonts\simfang.ttf', background_color='white', max_words=100).generate(keywords)
    wc.to_file('kwords.png')
    plt.imshow(wc)
    plt.axis('off')
    plt.show()

pagedetail = getListPage('http://tech.163.com/internet/')  # Fetch the news on the first page
writeNews(pagedetail)

for i in range(2, 21):  # The NetEase news channel keeps only 20 pages, so stop at page 20
    listUrl = 'http://tech.163.com/special/tele_2016_%02d/' % i  # Page numbers are zero-padded to two digits
    pagedetail = getListPage(listUrl)
    writeNews(pagedetail)

getKeyWords()  # Extract the keywords and write them to a file
getWordCloud()  # Read the keyword file and generate the word cloud
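The list page URLs used by the script follow a simple pattern: the first page has its own address, and later pages use a two-digit, zero-padded page number. A small sketch of building the full list up front is shown below. The function name `build_list_urls` is made up, and the total of 20 pages is taken from the comment in the script, not verified against the site.

```python
def build_list_urls(first_page, pattern, last_page):
    """Return the first page URL followed by pages 2..last_page (inclusive)."""
    return [first_page] + [pattern % n for n in range(2, last_page + 1)]


urls = build_list_urls(
    'http://tech.163.com/internet/',
    'http://tech.163.com/special/tele_2016_%02d/',
    20,  # assumed page count, per the script's comment
)
print(len(urls))  # 20
print(urls[1])    # http://tech.163.com/special/tele_2016_02/
```

Note that `range(2, last_page + 1)` is needed to include the last page, since `range` excludes its upper bound.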
