Making training mini-batches

Posted by 刘川枫


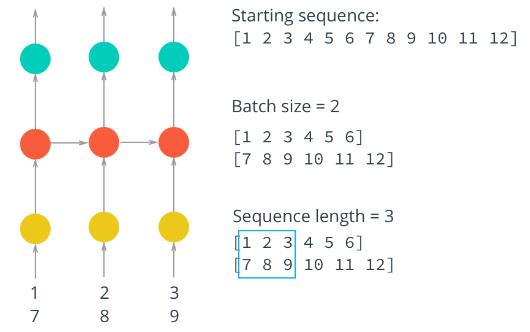

Here is where we'll make our mini-batches for training. Remember that we want our batches to be multiple sequences of some desired number of sequence steps. Considering a simple example, our batches would look like this:

We have our text encoded as integers as one long array in encoded. Let's create a function that will give us an iterator for our batches. I like using generator functions to do this. Then we can pass encoded into this function and get our batch generator.

The first thing we need to do is discard some of the text so we only have completely full batches. Each batch contains N×M characters, where N is the batch size (the number of sequences) and M is the number of steps. Then, to get the number of batches we can make from some array arr, divide the length of arr by the number of characters per batch, N×M, rounding down. Once you know the number of batches and the number of characters per batch, multiply them to get the total number of characters to keep.
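To make the arithmetic concrete, here is a minimal sketch. The 1,050-element array is a made-up stand-in for encoded, and the sizes are chosen only for illustration.

```python
import numpy as np

# Hypothetical stand-in for the encoded text: 1,050 integer "characters"
arr = np.arange(1050)
n_seqs, n_steps = 10, 50  # N and M

characters_per_batch = n_seqs * n_steps        # N * M = 500
n_batches = len(arr) // characters_per_batch   # 1050 // 500 = 2
n_keep = n_batches * characters_per_batch      # 2 * 500 = 1000

# Drop the trailing characters that would not fill a whole batch
trimmed = arr[:n_keep]
print(characters_per_batch, n_batches, len(trimmed))  # 500 2 1000
```

The last 50 characters are discarded because they cannot fill a third batch.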

After that, we need to split arr into N sequences. You can do this using arr.reshape(size), where size is a tuple containing the dimension sizes of the reshaped array. We know we want N sequences (n_seqs below), so let's make that the size of the first dimension. For the second dimension, you can use -1 as a placeholder in the size, and NumPy will work out the appropriate length for you. After this, you should have an array that is N×(M∗K), where K is the number of batches.
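A small sketch of the reshape step, using tiny values of N, M, and K picked only so the result is easy to read:

```python
import numpy as np

n_seqs, n_steps, n_batches = 2, 3, 2           # N, M, K (illustrative values)
arr = np.arange(n_seqs * n_steps * n_batches)  # 12 values: 0..11

# -1 tells NumPy to infer the second dimension: 12 / 2 = 6 = M * K
reshaped = arr.reshape((n_seqs, -1))
print(reshaped.shape)  # (2, 6)
print(reshaped)
# [[ 0  1  2  3  4  5]
#  [ 6  7  8  9 10 11]]
```

Each row is one contiguous stretch of the original array, so every sequence continues from one batch to the next.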

Now that we have this array, we can iterate through it to get our batches. The idea is that each batch is an N×M window on the array. For each subsequent batch, the window moves over by n_steps. We also want to create both the input and target arrays. Remember that the targets are the inputs shifted over one character. You'll usually see the first input character used as the last target character, so something like this:

y[:, :-1], y[:, -1] = x[:, 1:], x[:, 0]

where x is the input batch and y is the target batch.
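To see what that assignment does, here is a tiny example with a hand-written 2×4 input batch (the values are arbitrary):

```python
import numpy as np

# A made-up input batch: 2 sequences, 4 steps each
x = np.array([[1, 2, 3, 4],
              [5, 6, 7, 8]])

y = np.zeros_like(x)
# Shift each row left by one; the first input wraps around to the last target
y[:, :-1], y[:, -1] = x[:, 1:], x[:, 0]
print(y)
# [[2 3 4 1]
#  [6 7 8 5]]
```

For this wrap-around shift, np.roll(x, -1, axis=1) gives the same result.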

The way I like to do this window is to use range to take steps of size n_steps from 0 to arr.shape[1], the total number of steps in each sequence. That way, the integers you get from range always point to the start of a batch, and each window is n_steps wide.
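As a quick illustration of the window positions, assume each sequence has 200 steps in total (a hypothetical value standing in for arr.shape[1]):

```python
n_steps = 50
total_steps = 200  # hypothetical value of arr.shape[1]

# Each start index marks the left edge of one batch window
starts = list(range(0, total_steps, n_steps))
print(starts)  # [0, 50, 100, 150]

# Each window covers columns [n, n + n_steps)
windows = [(n, n + n_steps) for n in starts]
print(windows)  # [(0, 50), (50, 100), (100, 150), (150, 200)]
```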

```python
import numpy as np


def get_batches(arr, n_seqs, n_steps):
    '''Create a generator that returns batches of size
       n_seqs x n_steps from arr.

       Arguments
       ---------
       arr: Array you want to make batches from
       n_seqs: Batch size, the number of sequences per batch
       n_steps: Number of sequence steps per batch
    '''
    # Get the number of characters per batch and number of batches we can make
    characters_per_batch = n_seqs * n_steps
    n_batches = len(arr) // characters_per_batch

    # Keep only enough characters to make full batches
    arr = arr[:n_batches * characters_per_batch]

    # Reshape into n_seqs rows
    arr = arr.reshape((n_seqs, -1))

    for n in range(0, arr.shape[1], n_steps):
        # The features
        x = arr[:, n:n + n_steps]
        # The targets, shifted by one
        y = np.zeros_like(x)
        y[:, :-1], y[:, -1] = x[:, 1:], x[:, 0]
        yield x, y


# encoded is the integer-encoded text array described above
batches = get_batches(encoded, 10, 50)
x, y = next(batches)

print('x\n', x[:10, :10])
print('\ny\n', y[:10, :10])
```
