Setting Up MongoDB 3.2 Replica Sets and a Sharded Cluster


All three machines run the following operating system:

    $ cat /etc/issue
    CentOS release 6.4 (Final)
    Kernel \r on an \m
    $ uname -r
    2.6.32-358.el6.x86_64
    $ uname -m
    x86_64

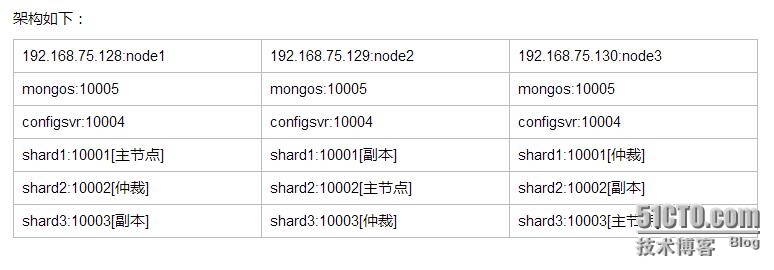

The original architecture diagram has been lost, so the table below summarizes the layout instead.

| Node | IP | shard1 (port 10001) | shard2 (port 10002) | shard3 (port 10003) | configsvr | mongos |
| --- | --- | --- | --- | --- | --- | --- |
| node1 | 192.168.75.128 | primary | arbiter | secondary | 10004 | 10005 |
| node2 | 192.168.75.129 | secondary | primary | arbiter | 10004 | 10005 |
| node3 | 192.168.75.130 | arbiter | secondary | primary | 10004 | 10005 |
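The layout above follows a simple rotation: each shard puts its primary on one node, its secondary on the next node, and its arbiter on the remaining one. The sketch below reproduces that rotation in plain JavaScript. The names `NODES`, `ROLES`, `roleOf` and `layout` are made up for illustration, and "primary" here means the intended primary; the actual primary is whichever member wins the replica set election.

```javascript
// Hypothetical helper: derive each node's role per shard from the rotation in the layout.
var NODES = ["192.168.75.128", "192.168.75.129", "192.168.75.130"];
var ROLES = ["primary", "secondary", "arbiter"];

// nodeIndex and shardIndex are 0-based: shard k places its intended primary on node k,
// its secondary on node k+1 and its arbiter on node k+2 (wrapping around).
function roleOf(nodeIndex, shardIndex) {
  var offset = (nodeIndex - shardIndex + NODES.length) % NODES.length;
  return ROLES[offset];
}

// Build one row per node, with the role it plays in shard1, shard2 and shard3.
function layout() {
  var rows = [];
  for (var n = 0; n < NODES.length; n++) {
    var row = { host: NODES[n] };
    for (var s = 0; s < NODES.length; s++) {
      row["shard" + (s + 1)] = roleOf(n, s);
    }
    rows.push(row);
  }
  return rows;
}
```

Calling `layout()` returns three rows; every node ends up holding exactly one primary, one secondary and one arbiter, which spreads the write load evenly across the machines.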

Create the mongodb user

    # groupadd mongodb
    # useradd -g mongodb mongodb
    # mkdir /data
    # chown -R mongodb:mongodb /data
    # su - mongodb

Create the directories and files

    $ mkdir -pv /data/{config,shard1,shard2,shard3,mongos,logs,configsvr,keyfile}
    $ touch /data/keyfile/zxl
    $ touch /data/logs/shard{1..3}.log
    $ touch /data/logs/{configsvr,mongos}.log
    $ touch /data/config/shard{1..3}.conf
    $ touch /data/config/{configsvr,mongos}.conf

Download MongoDB

    $ wget https://fastdl.mongodb.org/linux/mongodb-linux-x86_64-rhel62-3.2.3.tgz
    $ tar xzf mongodb-linux-x86_64-rhel62-3.2.3.tgz -C /data
    $ ln -s /data/mongodb-linux-x86_64-rhel62-3.2.3 /data/mongodb

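Add MongoDB to the PATH. Single quotes keep $PATH unexpanded, so it is evaluated each time the profile is loaded rather than frozen at the moment the line is written.

    $ echo 'export PATH=$PATH:/data/mongodb/bin' >> ~/.bash_profile
    $ source ~/.bash_profile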

The contents of shard1.conf:

    $ cat /data/config/shard1.conf
    systemLog:
      destination: file
      path: /data/logs/shard1.log
      logAppend: true
    processManagement:
      fork: true
      pidFilePath: "/data/shard1/shard1.pid"
    net:
      port: 10001
    storage:
      dbPath: "/data/shard1"
      engine: wiredTiger
      journal:
        enabled: true
      directoryPerDB: true
    operationProfiling:
      slowOpThresholdMs: 10
      mode: "slowOp"
    #security:
    #  keyFile: "/data/keyfile/zxl"
    #  clusterAuthMode: "keyFile"
    replication:
      oplogSizeMB: 50
      replSetName: "shard1_zxl"
      secondaryIndexPrefetch: "all"

The contents of shard2.conf:

    $ cat /data/config/shard2.conf
    systemLog:
      destination: file
      path: /data/logs/shard2.log
      logAppend: true
    processManagement:
      fork: true
      pidFilePath: "/data/shard2/shard2.pid"
    net:
      port: 10002
    storage:
      dbPath: "/data/shard2"
      engine: wiredTiger
      journal:
        enabled: true
      directoryPerDB: true
    operationProfiling:
      slowOpThresholdMs: 10
      mode: "slowOp"
    #security:
    #  keyFile: "/data/keyfile/zxl"
    #  clusterAuthMode: "keyFile"
    replication:
      oplogSizeMB: 50
      replSetName: "shard2_zxl"
      secondaryIndexPrefetch: "all"

The contents of shard3.conf:

    $ cat /data/config/shard3.conf
    systemLog:
      destination: file
      path: /data/logs/shard3.log
      logAppend: true
    processManagement:
      fork: true
      pidFilePath: "/data/shard3/shard3.pid"
    net:
      port: 10003
    storage:
      dbPath: "/data/shard3"
      engine: wiredTiger
      journal:
        enabled: true
      directoryPerDB: true
    operationProfiling:
      slowOpThresholdMs: 10
      mode: "slowOp"
    #security:
    #  keyFile: "/data/keyfile/zxl"
    #  clusterAuthMode: "keyFile"
    replication:
      oplogSizeMB: 50
      replSetName: "shard3_zxl"
      secondaryIndexPrefetch: "all"
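The three shard configuration files differ only in the shard number, which shows up in the log path, the pid file, the data directory, the port and the replica set name. As a rough sketch of that pattern, the hypothetical function `renderShardConf` below generates the text of each file from the number alone. It is an illustration, not a tool from the original setup, and it leaves out the commented-out security section.

```javascript
// Hypothetical generator for /data/config/shardN.conf, following the files shown above.
function renderShardConf(n) {
  var name = "shard" + n;
  var port = 10000 + n; // 10001, 10002, 10003
  return [
    "systemLog:",
    "  destination: file",
    "  path: /data/logs/" + name + ".log",
    "  logAppend: true",
    "processManagement:",
    "  fork: true",
    "  pidFilePath: \"/data/" + name + "/" + name + ".pid\"",
    "net:",
    "  port: " + port,
    "storage:",
    "  dbPath: \"/data/" + name + "\"",
    "  engine: wiredTiger",
    "  journal:",
    "    enabled: true",
    "  directoryPerDB: true",
    "operationProfiling:",
    "  slowOpThresholdMs: 10",
    "  mode: \"slowOp\"",
    "replication:",
    "  oplogSizeMB: 50",
    "  replSetName: \"" + name + "_zxl\"",
    "  secondaryIndexPrefetch: \"all\""
  ].join("\n") + "\n";
}
```

Generating the files this way avoids copy-and-paste slips such as leaving a stale shard number in one of the paths.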

The contents of configsvr.conf:

    $ cat /data/config/configsvr.conf
    systemLog:
      destination: file
      path: /data/logs/configsvr.log
      logAppend: true
    processManagement:
      fork: true
      pidFilePath: "/data/configsvr/configsvr.pid"
    net:
      port: 10004
    storage:
      dbPath: "/data/configsvr"
      engine: wiredTiger
      journal:
        enabled: true
    #security:
    #  keyFile: "/data/keyfile/zxl"
    #  clusterAuthMode: "keyFile"
    sharding:
      clusterRole: configsvr

The contents of mongos.conf:

    $ cat /data/config/mongos.conf
    systemLog:
      destination: file
      path: /data/logs/mongos.log
      logAppend: true
    processManagement:
      fork: true
      pidFilePath: /data/mongos/mongos.pid
    net:
      port: 10005
    sharding:
      configDB: 192.168.75.128:10004,192.168.75.129:10004,192.168.75.130:10004
    #security:
    #  keyFile: "/data/keyfile/zxl"
    #  clusterAuthMode: "keyFile"

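Note: the steps above were performed on node1 only. Repeat them on the other two machines (create the user, create the directories and files, install MongoDB), and copy the configuration files into the matching directories on node2 and node3. After copying, check that the files are owned by user and group mongodb. As for the number of config servers, MongoDB officially recommends either one or three, in other words an odd number. The official MongoDB documentation explains the reasoning.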
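The configDB value in mongos.conf is just the config server addresses joined with commas, and the note above says to use either one or three of them. A minimal sketch of both points, assuming a hypothetical helper named `buildConfigDB` (it is not part of MongoDB):

```javascript
// Hypothetical helper: build the sharding.configDB value for mongos.conf.
function buildConfigDB(hosts, port) {
  // Enforce the one-or-three recommendation mentioned in the note above.
  if (hosts.length !== 1 && hosts.length !== 3) {
    throw new Error("expected 1 or 3 config servers, got " + hosts.length);
  }
  return hosts.map(function (h) { return h + ":" + port; }).join(",");
}
```

For the three nodes in this walkthrough, `buildConfigDB(["192.168.75.128", "192.168.75.129", "192.168.75.130"], 10004)` yields the same string that appears in mongos.conf.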

Start the shard1, shard2 and shard3 mongod instances on each machine

    $ mongod -f /data/config/shard1.conf
    mongod: /usr/lib64/libcrypto.so.10: no version information available (required by m
    mongod: /usr/lib64/libcrypto.so.10: no version information available (required by m
    mongod: /usr/lib64/libssl.so.10: no version information available (required by mong
    mongod: relocation error: mongod: symbol TLSv1_1_client_method, version libssl.so.1n file libssl.so.10 with link time reference

Note: mongod fails to start and prints the messages above.

Fix: the system OpenSSL libraries are too old for this build. Installing openssl-devel on all three machines resolves it.

    $ su - root
    Password:
    # yum install openssl-devel -y

Switch back to the mongodb user and start the shard1, shard2 and shard3 mongod instances on all three machines again

    $ mongod -f /data/config/shard1.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 1737
    child process started successfully, parent exiting
    $ mongod -f /data/config/shard2.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 1760
    child process started successfully, parent exiting
    $ mongod -f /data/config/shard3.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 1783
    child process started successfully, parent exiting

Connect to the mongod listening on port 10001 on node1

    $ mongo --port 10001
    MongoDB shell version: 3.2.3
    connecting to: 127.0.0.1:10001/test
    Welcome to the MongoDB shell.
    For interactive help, type "help".
    For more comprehensive documentation, see
        http://docs.mongodb.org/
    Questions? Try the support group
        http://groups.google.com/group/mongodb-user
    Server has startup warnings:
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten]
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten] ** WARNING: /sys/kernel/mm/transparent_hugepage/enabled is 'always'.
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten] ** We suggest settin
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten]
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten] ** WARNING: /sys/kernel/mm/transparent_hugepage/defrag is 'always'.
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten] ** We suggest settin
    2016-03-08T13:28:18.508+0800 I CONTROL [initandlisten]

Note: the shell reports startup warnings about transparent huge pages.

Fix: run the following on all three machines.

    $ su - root
    Password:
    # echo never > /sys/kernel/mm/transparent_hugepage/enabled
    # echo never > /sys/kernel/mm/transparent_hugepage/defrag
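After writing `never`, reading those two files back shows every available option, with the active one wrapped in square brackets. The sketch below pulls out the active value from such a line so the change can be checked. The function name `activeThpSetting` is made up for this example, and the sample strings passed to it are illustrative rather than captured from these machines.

```javascript
// Hypothetical helper: extract the active option from the content of
// /sys/kernel/mm/transparent_hugepage/enabled (or .../defrag).
// The kernel lists every option and brackets the active one.
function activeThpSetting(content) {
  var match = /\[(\w+)\]/.exec(content);
  return match ? match[1] : null;
}
```

For example, `activeThpSetting("always madvise [never]")` returns `"never"`. Values written with echo are lost on reboot, so the two echo commands have to be run again (for instance from a boot script) after every restart.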

Stop the mongod instances on all three machines, then start them again.

    $ netstat -ntpl|grep mongo|awk '{print $NF}'|awk -F'/' '{print $1}'|xargs kill
    $ mongod -f /data/config/shard1.conf
    $ mongod -f /data/config/shard2.conf
    $ mongod -f /data/config/shard3.conf
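The shutdown one-liner works because the last column of each `netstat -ntpl` line has the form PID/program. The first awk prints that last field and the second splits it on the slash and keeps the PID. The same two steps, sketched in JavaScript with a hypothetical function name and a sample line whose layout is assumed:

```javascript
// Hypothetical re-implementation of the two awk steps in the pipeline above.
function pidFromNetstatLine(line) {
  var fields = line.trim().split(/\s+/);
  var last = fields[fields.length - 1]; // awk '{print $NF}'      -> "1737/mongod"
  return last.split("/")[0];            // awk -F'/' '{print $1}' -> "1737"
}

// Assumed sample line in the usual netstat -ntpl column layout.
var sampleLine = "tcp        0      0 0.0.0.0:10001      0.0.0.0:*      LISTEN      1737/mongod";
var samplePid = pidFromNetstatLine(sampleLine);
```

The PIDs are then handed to kill, which sends SIGTERM by default, so each mongod gets the chance to shut down cleanly.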

Configure the replica sets

On node1, configure the shard1 replica set

    $ mongo --port 10001
    MongoDB shell version: 3.2.3
    connecting to: 127.0.0.1:10001/test
    > use admin
    switched to db admin
    > config = { _id:"shard1_zxl", members:[
    ... {_id:0,host:"192.168.75.128:10001"},
    ... {_id:1,host:"192.168.75.129:10001"},
    ... {_id:2,host:"192.168.75.130:10001",arbiterOnly:true}
    ... ]
    ... }
    {
      "_id" : "shard1_zxl",
      "members" : [
        { "_id" : 0, "host" : "192.168.75.128:10001" },
        { "_id" : 1, "host" : "192.168.75.129:10001" },
        { "_id" : 2, "host" : "192.168.75.130:10001", "arbiterOnly" : true }
      ]
    }
    > rs.initiate(config)
    { "ok" : 1 }

On node2, configure the shard2 replica set

    $ mongo --port 10002
    MongoDB shell version: 3.2.3
    connecting to: 127.0.0.1:10002/test
    Welcome to the MongoDB shell.
    For interactive help, type "help".
    For more comprehensive documentation, see
        http://docs.mongodb.org/
    Questions? Try the support group
        http://groups.google.com/group/mongodb-user
    > use admin
    switched to db admin
    > config = { _id:"shard2_zxl", members:[
    ... {_id:0,host:"192.168.75.129:10002"},
    ... {_id:1,host:"192.168.75.130:10002"},
    ... {_id:2,host:"192.168.75.128:10002",arbiterOnly:true}
    ... ]
    ... }
    {
      "_id" : "shard2_zxl",
      "members" : [
        { "_id" : 0, "host" : "192.168.75.129:10002" },
        { "_id" : 1, "host" : "192.168.75.130:10002" },
        { "_id" : 2, "host" : "192.168.75.128:10002", "arbiterOnly" : true }
      ]
    }
    > rs.initiate(config)
    { "ok" : 1 }

On node3, configure the shard3 replica set

    $ mongo --port 10003
    MongoDB shell version: 3.2.3
    connecting to: 127.0.0.1:10003/test
    Welcome to the MongoDB shell.
    For interactive help, type "help".
    For more comprehensive documentation, see
        http://docs.mongodb.org/
    Questions? Try the support group
        http://groups.google.com/group/mongodb-user
    > use admin
    switched to db admin
    > config = {_id:"shard3_zxl", members:[
    ... {_id:0,host:"192.168.75.130:10003"},
    ... {_id:1,host:"192.168.75.128:10003"},
    ... {_id:2,host:"192.168.75.129:10003",arbiterOnly:true}
    ... ]
    ... }
    {
      "_id" : "shard3_zxl",
      "members" : [
        { "_id" : 0, "host" : "192.168.75.130:10003" },
        { "_id" : 1, "host" : "192.168.75.128:10003" },
        { "_id" : 2, "host" : "192.168.75.129:10003", "arbiterOnly" : true }
      ]
    }
    > rs.initiate(config)
    { "ok" : 1 }
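The three configuration documents passed to rs.initiate() share one shape: two data-bearing members followed by an arbiter. Here is a small sketch that builds such a document from a replica set name, a port and an ordered host list. `buildReplSetConfig` is a hypothetical helper written for this article, not a MongoDB function; only the resulting document layout comes from the sessions above.

```javascript
// Hypothetical helper: build the document passed to rs.initiate().
// hosts must be ordered as [first data member, second data member, arbiter].
function buildReplSetConfig(name, port, hosts) {
  return {
    _id: name,
    members: hosts.map(function (host, i) {
      var member = { _id: i, host: host + ":" + port };
      if (i === 2) {
        member.arbiterOnly = true; // the third host only votes and stores no data
      }
      return member;
    })
  };
}
```

In the mongo shell the result could be used as `rs.initiate(buildReplSetConfig("shard2_zxl", 10002, ["192.168.75.129", "192.168.75.130", "192.168.75.128"]))`, which matches the document typed by hand on node2.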

Note: the steps above configure the replica sets. Use commands such as rs.status() to check the state of each replica set.
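The document returned by rs.status() is long; the part that matters for a quick health check is each member's `name` and `stateStr`. A possible way to condense it is sketched below. The helper names are invented for this example, and the sample document is a hand-written, heavily trimmed stand-in for real rs.status() output.

```javascript
// Hypothetical helper: map each member's address to its state string.
function summarizeStatus(status) {
  var summary = {};
  status.members.forEach(function (m) {
    summary[m.name] = m.stateStr;
  });
  return summary;
}

// Hypothetical helper: true when exactly one member reports PRIMARY.
function hasSinglePrimary(status) {
  return status.members.filter(function (m) {
    return m.stateStr === "PRIMARY";
  }).length === 1;
}

// Trimmed, hand-written sample in the shape of rs.status() output (assumption).
var sampleStatus = {
  set: "shard1_zxl",
  members: [
    { name: "192.168.75.128:10001", stateStr: "PRIMARY" },
    { name: "192.168.75.129:10001", stateStr: "SECONDARY" },
    { name: "192.168.75.130:10001", stateStr: "ARBITER" }
  ]
};
```

In the mongo shell, `summarizeStatus(rs.status())` would give the same kind of summary for the live replica set.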

Start the configsvr and mongos processes on all three machines

    $ mongod -f /data/config/configsvr.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 6317
    child process started successfully, parent exiting
    $ mongos -f /data/config/mongos.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 6345
    child process started successfully, parent exiting

Configure sharding

On node1, add the shards through mongos

    $ mongo --port 10005
    MongoDB shell version: 3.2.3
    connecting to: 127.0.0.1:10005/test
    mongos> use admin
    switched to db admin
    mongos> db.runCommand({addshard:"shard1_zxl/192.168.75.128:10001,192.168.75.129:10001,192.168.75.130:10001"});
    { "shardAdded" : "shard1_zxl", "ok" : 1 }
    mongos> db.runCommand({addshard:"shard2_zxl/192.168.75.128:10002,192.168.75.129:10002,192.168.75.130:10002"});
    { "shardAdded" : "shard2_zxl", "ok" : 1 }
    mongos> db.runCommand({addshard:"shard3_zxl/192.168.75.128:10003,192.168.75.129:10003,192.168.75.130:10003"});
    { "shardAdded" : "shard3_zxl", "ok" : 1 }
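Each addshard call takes a string of the form replica set name, a slash, then the comma-separated member addresses. As a sketch of that pattern, the hypothetical helpers below generate the three command documents for any list of hosts, assuming the same port scheme (10001 to 10003) and the same `shardN_zxl` naming used in this walkthrough.

```javascript
// Hypothetical helper: "replSet/host1:port,host2:port,..."
function shardConnectionString(replSet, hosts, port) {
  return replSet + "/" + hosts.map(function (h) { return h + ":" + port; }).join(",");
}

// Hypothetical helper: the three documents to pass to db.runCommand() on mongos.
function addShardCommands(hosts) {
  var commands = [];
  for (var n = 1; n <= 3; n++) {
    commands.push({
      addshard: shardConnectionString("shard" + n + "_zxl", hosts, 10000 + n)
    });
  }
  return commands;
}
```

In the mongo shell connected to mongos, each element could be run with `db.getSiblingDB("admin").runCommand(cmd)`. To reuse this on other machines, only the host list needs to change.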

#db.runCommand({addshard:"shard1_zxl/192.168.33.131:10001,192.168.33.132:10001,192.168.33.136:10001"});

#db.runCommand({addshard:"shard2_zxl/192.168.33.131:10002,192.168.33.132:10002,192.168.33.136:10002"});

#db.runCommand({addshard:"shard3_zxl/192.168.33.131:10003,192.168.33.132:10003,192.168.33.136:10003"});

Note: adjust the addresses above to match your own environment, then run the commands.

View the shard information:

    mongos> sh.status()
    --- Sharding Status ---
      sharding version: {
        "_id" : 1,
        "minCompatibleVersion" : 5,
        "currentVersion" : 6,
        "clusterId" : ObjectId("56de6f4176b47beaa9c75e9d")
      }
      shards:
        { "_id" : "shard1_zxl", "host" : "shard1_zxl/192.168.75.128:10001,192.168.75.129:10001" }
        { "_id" : "shard2_zxl", "host" : "shard2_zxl/192.168.75.129:10002,192.168.75.130:10002" }
        { "_id" : "shard3_zxl", "host" : "shard3_zxl/192.168.75.128:10003,192.168.75.130:10003" }
      active mongoses:
        "3.2.3" : 3
      balancer:
        Currently enabled: yes
        Currently running: no
        Failed balancer rounds in last 5 attempts: 0
        Migration Results for the last 24 hours:
          No recent migrations
      databases:

Enable sharding on the database, which is named zxl here:

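    mongos> sh.enableSharding("zxl")
    { "ok" : 1 }

Shard the haha collection in the zxl database on the fields age and name. When the collection is empty, the index on the shard key is created automatically.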

    mongos> sh.shardCollection("zxl.haha",{age: 1, name: 1})
    { "collectionsharded" : "zxl.haha", "ok" : 1 }

Insert 10000 test documents into the haha collection:

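    mongos> use zxl
    mongos> for (i=1;i<=10000;i++) db.haha.insert({name: "user"+i, age: (i%150)})
    WriteResult({ "nInserted" : 1 })

Switching to the zxl database first matters: the session above was still on admin, and db.haha would otherwise refer to admin.haha. Run sh.status() on mongos again to see how the data is spread across the shards. That completes the replica set and sharding setup; the important part is understanding how replica sets and sharding work. Here is the output anyway: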

    mongos> sh.status()
    --- Sharding Status ---
      sharding version: {
        "_id" : 1,
        "minCompatibleVersion" : 5,
        "currentVersion" : 6,
        "clusterId" : ObjectId("56de6f4176b47beaa9c75e9d")
      }
      shards:
        { "_id" : "shard1_zxl", "host" : "shard1_zxl/192.168.75.128:10001,192.168.75.129:10001" }
        { "_id" : "shard2_zxl", "host" : "shard2_zxl/192.168.75.129:10002,192.168.75.130:10002" }
        { "_id" : "shard3_zxl", "host" : "shard3_zxl/192.168.75.128:10003,192.168.75.130:10003" }
      active mongoses:
        "3.2.3" : 3
      balancer:
        Currently enabled: yes
        Currently running: no
        Failed balancer rounds in last 5 attempts: 0
        Migration Results for the last 24 hours:
          2 : Success
      databases:
        { "_id" : "zxl", "primary" : "shard3_zxl", "partitioned" : true }
          zxl.haha
            shard key: { "age" : 1, "name" : 1 }
            unique: false
            balancing: true
            chunks:
              shard1_zxl 1
              shard2_zxl 1
              shard3_zxl 1
            { "age" : { "$minKey" : 1 }, "name" : { "$minKey" : 1 } } -->> { "age" : 2, "name" : "user2" } on : shard1_zxl Timestamp(2, 0)
            { "age" : 2, "name" : "user2" } -->> { "age" : 22, "name" : "user22" } on : shard2_zxl Timestamp(3, 0)
            { "age" : 22, "name" : "user22" } -->> { "age" : { "$maxKey" : 1 }, "name" : { "$maxKey" : 1 } } on : shard3_zxl Timestamp(3, 1)

That completes the MongoDB 3.2 replica set and sharded cluster setup. It is worth reading up on what each role does and how the underlying mechanisms work.
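To make the chunk ranges reported by sh.status() more concrete, the sketch below routes a document to a shard by comparing its compound key (age first, then name) against the three chunk boundaries, treating each range as lower-bound inclusive and upper-bound exclusive. It is a simplified model, not MongoDB's routing code: the `MIN` and `MAX` sentinels and all function names are invented here, and the comparison only covers numbers and ASCII strings rather than the full BSON ordering.

```javascript
// Sentinels standing in for $minKey and $maxKey (illustrative only).
var MIN = { special: "min" };
var MAX = { special: "max" };

// Compare two field values; MIN sorts before everything, MAX after everything.
function cmpValue(a, b) {
  if (a === b) return 0;
  if (a === MIN || b === MAX) return -1;
  if (a === MAX || b === MIN) return 1;
  return a < b ? -1 : (a > b ? 1 : 0);
}

// Compound key comparison: age decides first, name breaks ties.
function cmpKey(a, b) {
  var byAge = cmpValue(a.age, b.age);
  return byAge !== 0 ? byAge : cmpValue(a.name, b.name);
}

// Chunk boundaries copied from the sh.status() output.
var CHUNKS = [
  { min: { age: MIN, name: MIN }, max: { age: 2, name: "user2" }, shard: "shard1_zxl" },
  { min: { age: 2, name: "user2" }, max: { age: 22, name: "user22" }, shard: "shard2_zxl" },
  { min: { age: 22, name: "user22" }, max: { age: MAX, name: MAX }, shard: "shard3_zxl" }
];

// Find the chunk whose range [min, max) contains the document's key.
function shardFor(doc) {
  for (var i = 0; i < CHUNKS.length; i++) {
    if (cmpKey(CHUNKS[i].min, doc) <= 0 && cmpKey(doc, CHUNKS[i].max) < 0) {
      return CHUNKS[i].shard;
    }
  }
  return null;
}

// Replay the 10000 test inserts and count how many land in each chunk.
function countPerShard() {
  var counts = {};
  for (var i = 1; i <= 10000; i++) {
    var shard = shardFor({ name: "user" + i, age: i % 150 });
    counts[shard] = (counts[shard] || 0) + 1;
  }
  return counts;
}
```

Calling `countPerShard()` shows that most of the test documents fall into the last range, since only ages below 22 can reach the first two chunks. On a live cluster the balancer keeps splitting and migrating chunks as data grows, so the boundaries shown here are just a snapshot.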

This article comes from the “村里的男孩” blog. Please keep this attribution: http://noodle.blog.51cto.com/2925423/1749028
