PMON failed to acquire latch, see PMON dump

Posted

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了PMON failed to acquire latch, see PMON dump相关的知识,希望对你有一定的参考价值。

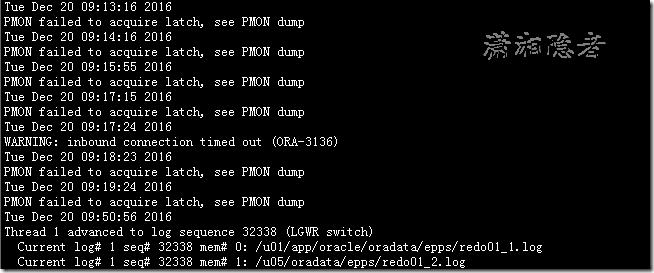

前几天,一台Oracle数据库(Oracle Database 10g Release 10.2.0.4.0 - 64bit Production)监控出现"PMON failed to acquire latch, see PMON dump"错误,连接数据库出现短暂异常,告警日志中具体错误如下所示:

Tue Dec 20 09:13:16 2016

PMON failed to acquire latch, see PMON dump

Tue Dec 20 09:14:16 2016

PMON failed to acquire latch, see PMON dump

Tue Dec 20 09:15:55 2016

PMON failed to acquire latch, see PMON dump

Tue Dec 20 09:17:15 2016

PMON failed to acquire latch, see PMON dump

Tue Dec 20 09:17:24 2016

WARNING: inbound connection timed out (ORA-3136)

Tue Dec 20 09:18:23 2016

PMON failed to acquire latch, see PMON dump

Tue Dec 20 09:19:24 2016

PMON failed to acquire latch, see PMON dump

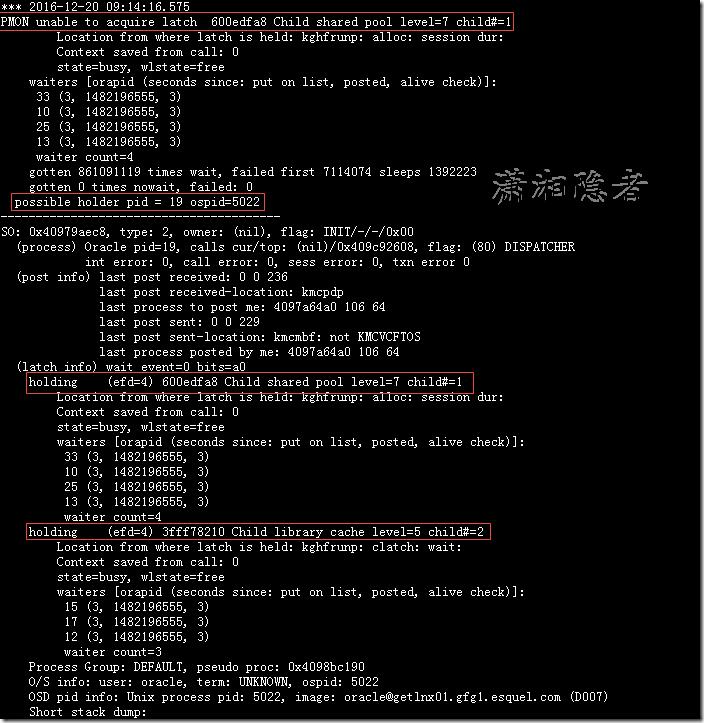

在生成的epps_pmon_4988.trc 跟踪文件里面,发现有些详细信息,你会发现PMON进程不能获取\'Child shared pool\' latch,它被一个pid = 19 ospid=5022的进程给阻塞了。而ospid这个进程是一个Dispatcher的进程。

*** 2016-12-20 09:14:16.575

PMON unable to acquire latch 600edfa8 Child shared pool level=7 child#=1 Location from where latch is held: kghfrunp: alloc: session dur:

Context saved from call: 0

state=busy, wlstate=free

waiters [orapid (seconds since: put on list, posted, alive check)]:

33 (3, 1482196555, 3)

10 (3, 1482196555, 3)

25 (3, 1482196555, 3)

13 (3, 1482196555, 3)

waiter count=4

gotten 861091119 times wait, failed first 7114074 sleeps 1392223

gotten 0 times nowait, failed: 0

possible holder pid = 19 ospid=5022

----------------------------------------

SO: 0x40979aec8, type: 2, owner: (nil), flag: INIT/-/-/0x00

(process) Oracle pid=19, calls cur/top: (nil)/0x409c92608, flag: (80) DISPATCHERint error: 0, call error: 0, sess error: 0, txn error 0

(post info) last post received: 0 0 236

last post received-location: kmcpdp

last process to post me: 4097a64a0 106 64last post sent: 0 0 229

last post sent-location: kmcmbf: not KMCVCFTOS

last process posted by me: 4097a64a0 106 64(latch info) wait_event=0 bits=a0

holding (efd=4) 600edfa8 Child shared pool level=7 child#=1 Location from where latch is held: kghfrunp: alloc: session dur:

Context saved from call: 0

state=busy, wlstate=free

waiters [orapid (seconds since: put on list, posted, alive check)]:

33 (3, 1482196555, 3)

10 (3, 1482196555, 3)

25 (3, 1482196555, 3)

13 (3, 1482196555, 3)

waiter count=4

holding (efd=4) 3fff78210 Child library cache level=5 child#=2 Location from where latch is held: kghfrunp: clatch: wait:

Context saved from call: 0

state=busy, wlstate=free

waiters [orapid (seconds since: put on list, posted, alive check)]:

15 (3, 1482196555, 3)

17 (3, 1482196555, 3)

12 (3, 1482196555, 3)

waiter count=3

Process Group: DEFAULT, pseudo proc: 0x4098bc190

O/S info: user: oracle, term: UNKNOWN, ospid: 5022

OSD pid info: Unix process pid: 5022, image: oracle@xx.xxx.xxx.com (D007)Short stack dump:

ksdxfstk()+32<-ksdxcb()+1547<-sspuser()+111<-__restore_rt()+0<-kghfrunp()+1506<-kghfnd()+1389<-kghalo()+587<-kmnsbm()+578<-nsb

al()+428<-nsbalc()+123<-nsdo()+17278<-nsopen()+2315<-nsanswer()+512<-kmnans()+37<-kmdahd()+385<-kmdmai()+5220<-kmmrdp()+564<-o

pirip()+1193<-opidrv()+582<-sou2o()+114<-opimai_real()+317<-main()+116<-__libc_start_main()+244<-_start()+41

Dump of memory from 0x0000000409747C68 to 0x0000000409747E70

409747C60 00000001 00000000 [........]

409747C70 FE9BEE10 00000003 0000003A 0003129B [........:.......]

409747C80 FEA7D5D0 00000003 0000003A 0003129B [........:.......]

409747C90 FE9DAD30 00000003 0000003A 0003129B [0.......:.......]

Repeat 2 times

409747CC0 FEAB01F0 00000003 0000003A 0003129B [........:.......]

409747CD0 FE9DAD30 00000003 0000003A 0003129B [0.......:.......]

409747CE0 FEA44E70 00000003 0000003A 0003129B [pN......:.......]

409747CF0 FEAA6FF0 00000003 0000003A 0003129B [.o......:.......]

409747D00 FEAB8AD0 00000003 0000003A 0003129B [........:.......]

409747D10 FEA14FF0 00000003 0000003A 0003129B [.O......:.......]

409747D20 FE9A77F0 00000003 0000003A 0003129B [.w......:.......]

Repeat 1 times

409747D40 FEA3CEB0 00000003 0000003A 0003129B [........:.......]

Repeat 1 times

409747D60 FE9C64B0 00000003 0000003A 0003129B [.d......:.......]

Repeat 1 times

409747D80 FEA062B0 00000003 0000003A 0003129B [.b......:.......]

Repeat 3 times

409747DC0 FEAA6FF0 00000003 0000003A 0003129B [.o......:.......]

409747DD0 FEA8F9D0 00000003 0000003A 0003129B [........:.......]

409747DE0 FE9F7570 00000003 0000003A 0003129B [pu......:.......]

409747DF0 FEA91530 00000003 0000003A 0003129B [0.......:.......]

409747E00 FE9BEE10 00000003 0000003A 0003129B [........:.......]

409747E10 FE9BB750 00000003 0000003A 0003129B [P.......:.......]

409747E20 FEA90C10 00000003 0000003A 0003129B [........:.......]

409747E30 FEA8B9F0 00000003 0000003A 0003129B [........:.......]

409747E40 FE9C5270 00000003 0000003A 0003129B [pR......:.......]

409747E50 FEAE12B0 00000003 0000003A 0003129B [........:.......]

409747E60 FE9C5270 00000003 0000003A 0003129B [pR......:.......]

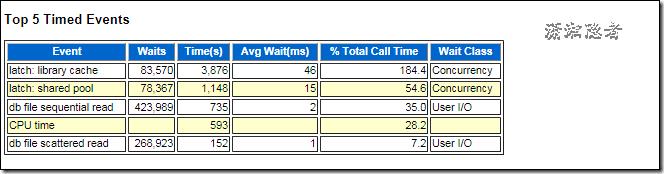

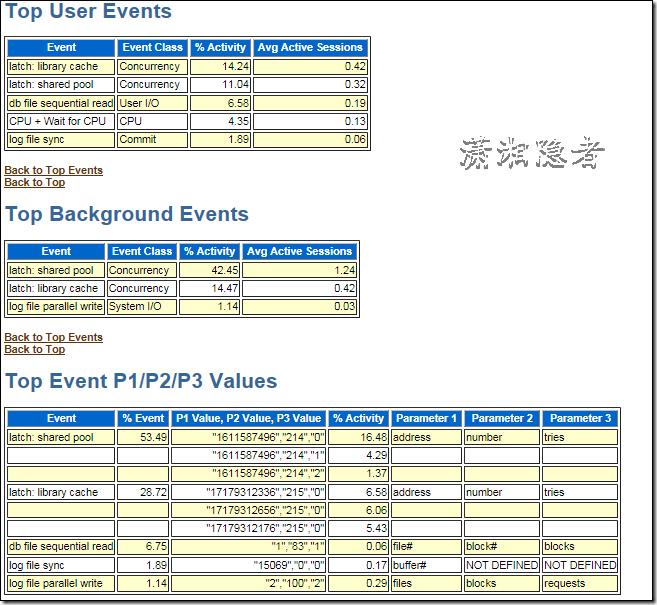

由于当时没有出现问题时,并没有及时发现,没有Collect HangAnalyze traces,所以再深入一点的挖掘root case已经很难了。当时手工生成了一个快照(9:26),也就是说9:00 ~ 9:26这段时间生成的快照刚刚覆盖了出现问题的时间段。生成了这个时段的AWR报告,在这个时间段latch:library cache 和latch:shared pool等待事件是主要等待事件。

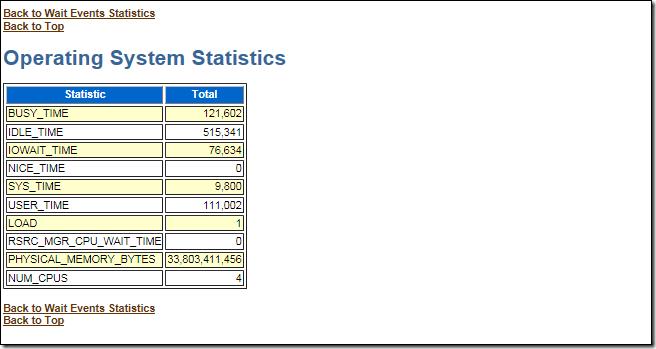

出现问题的时间段,数据库服务器是比较空闲的。

生成了20-Dec-16 09:11:16到20-Dec-16 09:21:16时段的ASH报告。如下所示,latch:library cache 和latch:shared pool为主要等待事件,但是Avg Active Sessions很小。

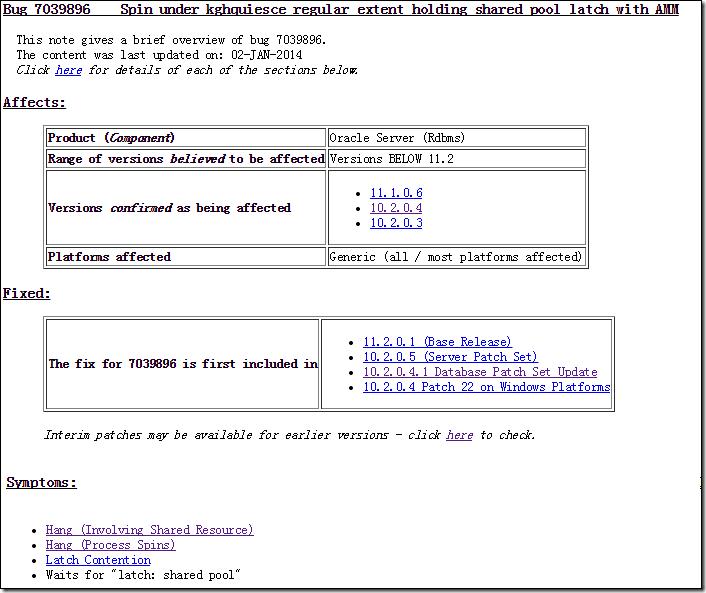

所以觉得很有可能是跟Bug有关系,后面在Oracle MetaLink查了一下是否有相关Bug,如下一些相关资料:

Bug 7039896 Spin under kghquiesce_regular_extent holding shared pool latch with AMM

Bug 6488694 - DATABASE HUNG WITH PMON FAILED TO ACQUIRE LATCH MESSAGE

Note 7039896.8 - Bug 7039896 - Spin under kghquiesce_regular_extent holding shared pool latch with AMM

Pmon Failed To Acquire Latch" Messages in Alert Log -Database Hung (文档 ID 468740.1)

Hang (Involving Shared Resource)

A process may hold a shared resource a lot longer than normally expected leading to many other processes having to wait for that resource. Such a resource could be a lock, a library cache pin, a latch etc.. The overall symptom is that typically numerous processes all appear to be stuck, although some processes may continue unhindered if they do not need the blocked resource.

A process enters a tight CPU loop so appears to hang but is actually consuming CPU.

This issue can result in latch contention within the database.

Waits for "latch: shared pool"

我们数据库版本为Oracle Database 10g Release 10.2.0.4.0 - 64bit Production, 所以Bug 7039896是会影响的这个数据库的, 而出现的现象也很符合,但是有一点就是并没有涉及MMAN进程。而且查过V$SGA_RESIZE_OPS,那个时间段并没有相关组件的增长、收缩。另外跟Bug 也非常类似,但是trc文件并没有发现跟MMAN进程有关系。 这个问题还是第一次出现,而且出现过一次后,最近几天都没有出现,所以更加确信是Bug引起的。当然是要找个时间应用Bug 7039896的相关补丁。

另外,在查找这个问题的时候,在官方文档看到一个如何处理、诊断\'PMON failed to acquire latch, see PMON dump\'的详细文档,本想收录于此,不过还是保持为PDF文件较好,需要可从下面链接下载。

以上是关于PMON failed to acquire latch, see PMON dump的主要内容,如果未能解决你的问题,请参考以下文章

kafka启动报错:Failed to acquire lock on file .lock in /tmp/kafka-log

Failed to acquire connection "SAP_PRD_NEW.SAPSR3". Connection may not be configured correc

kafka.common.KafkaException: Failed to acquire lock on file .lock in /tmp/kafka-logs. A Kafka instan

PMON (ospid: 2853): terminating the instance due to error 471

druid数据源集成p6spy:Failed to obtain JDBC Connection: dbType not support : null