『GreenPlum Series』 Installing a 4-Node GreenPlum Cluster (Illustrated Tutorial)

Posted by 何石的博客 (He Shi's blog)


Preface: This article was compiled by the editors of 小常识网 (cha138.com). It covers installing a 4-node GreenPlum cluster (illustrated tutorial), and we hope you find it useful.

The target architecture is shown in the figure above.

I. Hardware Assessment

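- CPU clock speed and core count: a CPU-core-to-disk ratio of at least 12:12 is recommended. While executing, each instance can use only one CPU core for computation, so a high clock speed is recommended.

- Memory capacity

- Network bandwidth: matters for data redistribution operations.

- RAID performance: stripe width setting; write-back behavior.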

II. Operating System

1. On SUSE or RedHat, use XFS (with ext3 for the operating system).

On Solaris, use ZFS (with UFS for the operating system).

2. System packages

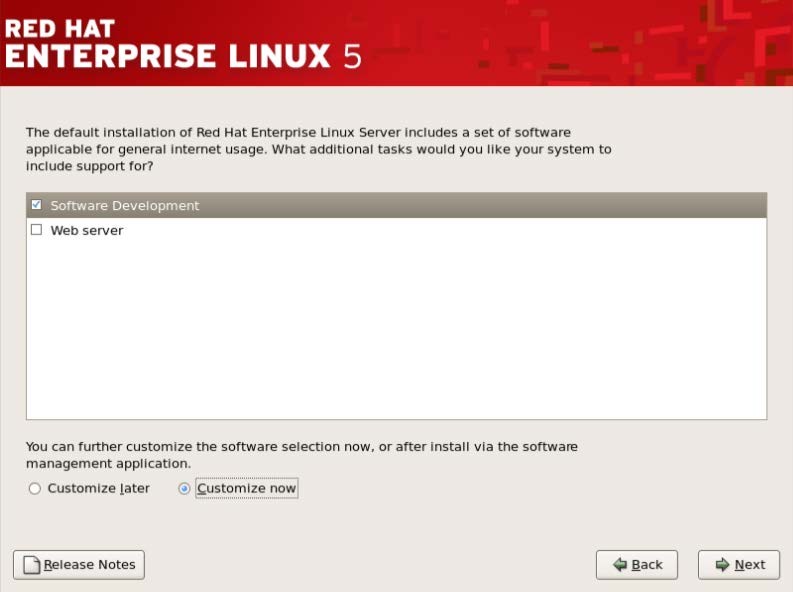

On the package selection screen, make the selections described below, then keep clicking [Next] until the installation starts.

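- [Desktop Environments]: clear everything.

- [Applications]: leave [Editors] and [Text-based Internet] unchanged; clear everything else.

- [Development]: select all of [Development Libraries] and [Development Tools]; clear everything else.

- [Servers]: clear everything.

- [Base System]: clear [X Window System].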

3. Note: SELINUX & IPTABLES

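On RedHat 6.x there is no configuration screen after the first reboot, and SELINUX and IPTABLES are both enabled by default. After logging in, do the following:

Disable SELINUX: check its status with `getenforce`. If the result is not "Disabled", run `setenforce 0` to turn it off for the current boot (strictly, this switches SELinux to permissive mode). To disable SELINUX permanently, edit /etc/selinux/config and set SELINUX=disabled; this takes effect after a reboot.

Disable IPTABLES: check its status with `service iptables status`. If the result is not "Firewall is stopped", stop it with `service iptables stop`. To disable IPTABLES permanently, run `chkconfig iptables off`.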
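The permanent SELinux change above is a one-line edit of /etc/selinux/config. A minimal sketch of scripting it follows. It assumes GNU sed (for `sed -i`), takes the file path as an argument so it can be tried on a copy first, and the function name `set_selinux_disabled` is purely illustrative:

```shell
#!/bin/sh
# Hypothetical helper: force the SELINUX= line of a config file to "disabled".
# '^SELINUX=' does not match the neighbouring SELINUXTYPE= line.
set_selinux_disabled() {
    local cfg="$1"
    if grep -q '^SELINUX=' "$cfg"; then
        # Replace whatever mode is configured (enforcing/permissive)
        sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
    else
        # No SELINUX= line yet: append one
        echo 'SELINUX=disabled' >> "$cfg"
    fi
}
```

On a real host you would run `set_selinux_disabled /etc/selinux/config` as root; the setting applies from the next reboot.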


4. Disable the OOM killer (RedHat 5 does not have this issue).

5. Replace the contents of /etc/sysctl.conf with the following. The settings take effect after a reboot, or immediately after running sysctl -p:

```
kernel.shmmax = 5000000000
kernel.shmmni = 4096
kernel.shmall = 40000000000
# SEMMSL SEMMNS SEMOPM SEMMNI
kernel.sem = 250 5120000 100 20480
kernel.sysrq = 1
kernel.core_uses_pid = 1
kernel.msgmnb = 65536
kernel.msgmax = 65536
kernel.msgmni = 2048
net.ipv4.tcp_syncookies = 1
net.ipv4.ip_forward = 0
net.ipv4.conf.default.accept_source_route = 0
net.ipv4.tcp_tw_recycle = 1
net.ipv4.tcp_max_syn_backlog = 4096
net.ipv4.conf.default.rp_filter = 1
net.ipv4.conf.default.arp_filter = 1
net.ipv4.conf.all.arp_filter = 1
net.ipv4.ip_local_port_range = 1025 65535
net.core.netdev_max_backlog = 10000
vm.overcommit_memory = 2
```
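gpcheck, run later in this article, compares several of these keys with its own expected values (it flags kernel.shmmax, kernel.sem and kernel.shmall). A rough sketch for checking a sysctl.conf-style file beforehand; the helper names are hypothetical, not part of Greenplum, and the parsing only handles plain `key = value` lines:

```shell
#!/bin/sh
# Print the value of key $2 in sysctl.conf-style file $1 (last assignment wins),
# with runs of blanks squeezed to single spaces. Prints nothing if absent.
sysctl_conf_value() {
    awk -v k="$2" '
        /^[ \t]*[#;]/ { next }                 # skip comment lines
        index($0, "=") {
            name = substr($0, 1, index($0, "=") - 1)
            gsub(/[ \t]/, "", name)
            if (name == k) v = substr($0, index($0, "=") + 1)
        }
        END {
            gsub(/[ \t]+/, " ", v); sub(/^ /, "", v); sub(/ $/, "", v)
            print v
        }' "$1"
}

# Compare one key with an expected value; returns 1 on mismatch.
check_sysctl() {
    local actual
    actual=$(sysctl_conf_value "$1" "$2")
    if [ "$actual" = "$3" ]; then
        echo "OK    $2 = $actual"
    else
        echo "DIFF  $2: file has '$actual', expected '$3'"
        return 1
    fi
}
```

For example, `check_sysctl /etc/sysctl.conf vm.overcommit_memory 2`. This only reads the file; `sysctl -n kernel.shmmax` shows the value the running kernel is actually using.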

6. Append the following to the end of /etc/security/limits.conf:

```
* soft nofile 65536
* hard nofile 65536
* soft nproc 131072
* hard nproc 131072
* soft core unlimited
```

Note: on RedHat 6.x you must also change 1024 to 131072 in /etc/security/limits.d/90-nproc.conf.
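When the same limits must be added on every host, an idempotent append keeps re-runs from duplicating lines. A minimal sketch using the five entries above; `append_once` and `add_gp_limits` are hypothetical names, and the RHEL 6 90-nproc.conf change still has to be made separately:

```shell
#!/bin/sh
# Append a line to a file only if that exact line is not already present.
append_once() {
    # -x: whole-line match; -F: fixed string, so the leading '*' is literal
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Add the limits.conf entries used in this article to file $1.
add_gp_limits() {
    local entry
    for entry in \
        '* soft nofile 65536' \
        '* hard nofile 65536' \
        '* soft nproc 131072' \
        '* hard nproc 131072' \
        '* soft core unlimited'; do
        append_once "$1" "$entry"
    done
}
```

On a host this would be `add_gp_limits /etc/security/limits.conf`, run as root.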

7. On Linux, the XFS file system is recommended.

GP recommends the following mount options:

rw,noatime,inode64,allocsize=16m

For example, to mount the XFS-formatted device /dev/sdb on /data1, the /etc/fstab entry is:

/dev/sdb /data1 xfs rw,noatime,inode64,allocsize=16m 1 1

To use XFS, install the corresponding rpm package and format the disk device:

```
# rpm -ivh xfsprogs-2.9.4-4.el5.x86_64.rpm
# mkfs.xfs -f /dev/sdb
```

8. The Linux disk I/O scheduler supports different policies for disk access. The default is CFQ; GP recommends deadline.

To see a drive's I/O scheduler, use the following command. The example shows a correct configuration:

```
# cat /sys/block/{devname}/queue/scheduler
noop anticipatory [deadline] cfq
```
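gpcheck later reports exactly this setting (`IO scheduler 'cfq' does not match expected value 'deadline'`). A small sketch for spotting it yourself: the active scheduler is the bracketed entry in the scheduler file. Function names are illustrative:

```shell
#!/bin/sh
# Extract the active scheduler (the bracketed word) from a scheduler line,
# e.g. "noop anticipatory [deadline] cfq" -> "deadline".
active_scheduler() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([^]]*\)\].*/\1/p'
}

# List block devices whose active scheduler is not deadline.
# Devices without a readable scheduler file are skipped.
report_non_deadline() {
    local f dev cur
    for f in /sys/block/*/queue/scheduler; do
        [ -r "$f" ] || continue
        dev=${f#/sys/block/}; dev=${dev%%/*}
        cur=$(active_scheduler "$(cat "$f")")
        if [ -n "$cur" ] && [ "$cur" != deadline ]; then
            echo "$dev: $cur"
        fi
    done
    return 0
}
```

As root, `echo deadline > /sys/block/sda/queue/scheduler` switches a device at runtime, but the change is lost on reboot; the grub edit is what makes it permanent.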

To change the I/O scheduler, edit the boot parameters in /boot/grub/menu.lst and append "elevator=deadline" to the end of the kernel line. A correctly configured example:

```
[root@gp_test1 ~]# vi /boot/grub/menu.lst
# grub.conf generated by anaconda
#
# Note that you do not have to rerun grub after making changes to this file
# NOTICE: You have a /boot partition. This means that
# all kernel and initrd paths are relative to /boot/, eg.
# root (hd0,0)
# kernel /vmlinuz-version ro root=/dev/vg00/LV_01
# initrd /initrd-version.img
#boot=/dev/sda
default=0
timeout=5
splashimage=(hd0,0)/grub/splash.xpm.gz
hiddenmenu
title Red Hat Enterprise Linux Server (2.6.18-308.el5)
	root (hd0,0)
	kernel /vmlinuz-2.6.18-308.el5 ro root=/dev/vg00/LV_01 rhgb quiet elevator=deadline
	initrd /initrd-2.6.18-308.el5.img
```

Note: edit this file with care; a wrong change can leave the operating system unable to boot.
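Because a bad edit can keep the host from booting, it may be safer to script the change and keep a backup. A sketch under these assumptions: a GRUB legacy file (menu.lst/grub.conf) with one `kernel` line per boot entry, GNU sed, and a hypothetical function name:

```shell
#!/bin/sh
# Append elevator=deadline to every active "kernel" line that does not
# already set an elevator. A .bak copy of the file is kept first.
add_elevator_deadline() {
    local cfg="$1"
    cp -p "$cfg" "$cfg.bak" || return 1
    # Commented lines ("# kernel ...") do not match ^[[:space:]]*kernel
    sed -i '/^[[:space:]]*kernel[[:space:]]/{/elevator=/!s/$/ elevator=deadline/;}' "$cfg"
}
```

Lines that already contain `elevator=` are left alone, so running it twice is harmless.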

9. Set the read-ahead (blockdev) value of every disk device to 65536.

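The official documentation recommends 16384, but the translator considers 65536 more reasonable. The value is a count of 512-byte sectors to read ahead, so the read-ahead size in KB is the blockdev setting divided by 2. The translator relates this to GP's default block size of 32 KB: 65536 sectors is 32768 KB (32 MB), exactly 1024 such blocks.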
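The unit conversion is easy to get wrong, so here it is worked through. A minimal sketch, assuming only that blockdev counts 512-byte sectors:

```shell
#!/bin/sh
# blockdev --setra/--getra values are counts of 512-byte sectors, not bytes.
SECTOR_BYTES=512

ra_bytes() { echo $(( $1 * SECTOR_BYTES )); }        # sectors -> bytes
ra_kib()   { echo $(( $1 * SECTOR_BYTES / 1024 )); } # sectors -> KiB (sectors / 2)

# Compare the official recommendation with the value used in this article.
for ra in 16384 65536; do
    kib=$(ra_kib "$ra")
    printf '%6d sectors = %6d KiB = %2d MiB = %4d blocks of 32 KiB\n' \
        "$ra" "$kib" $((kib / 1024)) $((kib / 32))
done
```

For 16384 it reports 8192 KiB (8 MiB); for 65536, 32768 KiB (32 MiB).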

Check a disk's read-ahead setting:

```
# blockdev --getra devname
```

For example:

```
# blockdev --getra /dev/sda
65536
```

To make the read-ahead setting persist across reboots, append a line like the following to the end of /etc/rc.d/rc.local:

```
blockdev --setra 65536 /dev/mapper/vg00-LV_01
```

To change the read-ahead setting temporarily (until the next reboot), run:

```
# blockdev --setra sectors devname
```

For example:

```
# blockdev --setra 65536 /dev/sda
```

III. Running the GP Installer

1. Installation

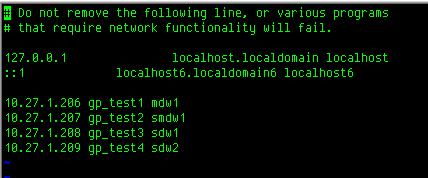

Getting the hosts configuration right is important.

```
# hostname gp_test1
# vi /etc/sysconfig/network
# vi /etc/hosts
```

Then reboot.

Create a file named host_file containing the hostnames of every host in the Greenplum deployment:

```
mdw1
smdw1
sdw1
sdw2
```

Create hostfile_segonly containing the hostnames of all segment hosts:

```
sdw1
sdw2
```

Create hostfile_exkeys containing the hostname for each network interface of every Greenplum host (servers may have two NICs):

```
mdw1
smdw1
sdw1
sdw2
```
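Since typos and stray spaces in these lists cause trouble later, one option is to generate them. A sketch that writes the three files with this article's hostnames into a given directory. The function name is hypothetical, and on dual-NIC servers hostfile_exkeys would need one name per interface rather than a copy of host_file:

```shell
#!/bin/sh
# Write host_file, hostfile_segonly and hostfile_exkeys into directory $1,
# one hostname per line with no trailing whitespace.
write_hostfiles() {
    local dir="$1"
    mkdir -p "$dir" || return 1
    printf '%s\n' mdw1 smdw1 sdw1 sdw2 > "$dir/host_file"
    printf '%s\n' sdw1 sdw2            > "$dir/hostfile_segonly"
    printf '%s\n' mdw1 smdw1 sdw1 sdw2 > "$dir/hostfile_exkeys"
}
```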

```
[root@gp_test1 gp_files]# gpseginstall -f host_file -u gpadmin -p gpadmin
20150312:00:06:39:003425 gpseginstall:gp_test1:root-[INFO]:-Installation Info: link_name None binary_path /opt/greenplum binary_dir_location /opt binary_dir_name greenplum
20150312:00:06:40:003425 gpseginstall:gp_test1:root-[INFO]:-check cluster password access
20150312:00:06:41:003425 gpseginstall:gp_test1:root-[INFO]:-de-duplicate hostnames
20150312:00:06:41:003425 gpseginstall:gp_test1:root-[INFO]:-master hostname: gp_test1
20150312:00:06:41:003425 gpseginstall:gp_test1:root-[INFO]:-check for user gpadmin on cluster
20150312:00:06:42:003425 gpseginstall:gp_test1:root-[INFO]:-add user gpadmin on master
20150312:00:06:42:003425 gpseginstall:gp_test1:root-[INFO]:-add user gpadmin on cluster
20150312:00:06:43:003425 gpseginstall:gp_test1:root-[INFO]:-chown -R gpadmin:gpadmin /opt/greenplum
20150312:00:06:44:003425 gpseginstall:gp_test1:root-[INFO]:-rm -f /opt/greenplum.tar; rm -f /opt/greenplum.tar.gz
20150312:00:06:44:003425 gpseginstall:gp_test1:root-[INFO]:-cd /opt; tar cf greenplum.tar greenplum
20150312:00:07:03:003425 gpseginstall:gp_test1:root-[INFO]:-gzip /opt/greenplum.tar
20150312:00:07:34:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: mkdir -p /opt
20150312:00:07:34:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: rm -rf /opt/greenplum
20150312:00:07:35:003425 gpseginstall:gp_test1:root-[INFO]:-scp software to remote location
20150312:00:07:52:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: gzip -f -d /opt/greenplum.tar.gz
20150312:00:11:09:003425 gpseginstall:gp_test1:root-[INFO]:-md5 check on remote location
20150312:00:11:15:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: cd /opt; tar xf greenplum.tar
20150312:00:14:40:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: rm -f /opt/greenplum.tar
20150312:00:14:41:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: chown -R gpadmin:gpadmin /opt/greenplum
20150312:00:14:42:003425 gpseginstall:gp_test1:root-[INFO]:-rm -f /opt/greenplum.tar.gz
20150312:00:14:42:003425 gpseginstall:gp_test1:root-[INFO]:-Changing system passwords ...
20150312:00:14:44:003425 gpseginstall:gp_test1:root-[INFO]:-exchange ssh keys for user root
20150312:00:14:51:003425 gpseginstall:gp_test1:root-[INFO]:-exchange ssh keys for user gpadmin
20150312:00:14:58:003425 gpseginstall:gp_test1:root-[INFO]:-/opt/greenplum//sbin/gpfixuserlimts -f /etc/security/limits.conf -u gpadmin
20150312:00:14:58:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: . /opt/greenplum//greenplum_path.sh; /opt/greenplum//sbin/gpfixuserlimts -f /etc/security/limits.conf -u gpadmin
20150312:00:14:59:003425 gpseginstall:gp_test1:root-[INFO]:-version string on master: gpssh version 4.3.4.1 build 2
20150312:00:14:59:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: . /opt/greenplum//greenplum_path.sh; /opt/greenplum//bin/gpssh --version
20150312:00:14:59:003425 gpseginstall:gp_test1:root-[INFO]:-remote command: . /opt/greenplum/greenplum_path.sh; /opt/greenplum/bin/gpssh --version
20150312:00:15:05:003425 gpseginstall:gp_test1:root-[INFO]:-SUCCESS -- Requested commands completed
[root@gp_test1 gp_files]#
```

2. Verify the installation

1) Log in to the master host as gpadmin:

$ su - gpadmin

2) Source the path file in the GPDB installation directory:

$ source /opt/greenplum/greenplum_path.sh

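3) Use gpssh to confirm that you can log in to every host with the GP software installed without being prompted for a password. Use the hostfile_exkeys file, which must list the hostnames of all network interfaces on all hosts (in this cluster it has the same contents as host_file, which the example uses). For example: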

$ gpssh -f host_file -e ls -l $GPHOME

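If you reach every host without a password prompt, the installation is fine. Every host should show the same contents in the installation path, owned by the gpadmin user.

If you are prompted for a password, re-exchange the SSH keys with: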

$ gpssh-exkeys -f host_file

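3. Install the Oracle compatibility functions

Optionally, many Oracle compatibility functions are available in GPDB.

Before using them, run the installation script once for each database: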

$GPHOME/share/postgresql/contrib/orafunc.sql

For example, to install the functions in the testdb database:

$ psql -d testdb -f /opt/greenplum/share/postgresql/contrib/orafunc.sql

To uninstall the Oracle compatibility functions, run this script:

$GPHOME/share/postgresql/contrib/uninstall_orafunc.sql
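Because the install script has to be run once per database, a loop helps when there are several. A minimal sketch; it assumes greenplum_path.sh has been sourced so GPHOME is set, and both `install_orafunc` and the PSQL override (handy for a dry run with PSQL=echo) are illustrative, not Greenplum features:

```shell
#!/bin/sh
# Run orafunc.sql in each database named on the command line.
# Stops at the first database where psql fails.
install_orafunc() {
    local sql db
    if [ -z "$GPHOME" ]; then
        echo "GPHOME not set; source greenplum_path.sh first" >&2
        return 1
    fi
    sql="$GPHOME/share/postgresql/contrib/orafunc.sql"
    for db in "$@"; do
        ${PSQL:-psql} -d "$db" -f "$sql" || return 1
    done
}
```

For example, `install_orafunc testdb otherdb` as gpadmin; prefixing `PSQL=echo` only prints the psql arguments.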

4. Create the data storage areas

1) Create the data directory location on the master host

[root@gp_test1 Server]# mkdir /data/

[root@gp_test1 Server]# mkdir /data/master

[root@gp_test1 Server]# chown -R gpadmin:gpadmin /data/

2) Use gpssh to create the same data storage location on the standby master as on the master

[root@gp_test1 data]# source /opt/greenplum/greenplum_path.sh

[root@gp_test1 data]# gpssh -h smdw1 -e 'mkdir /data/'

[smdw1] mkdir /data/

[root@gp_test1 data]# gpssh -h smdw1 -e 'mkdir /data/master'

[smdw1] mkdir /data/master

[root@gp_test1 data]#

[root@gp_test1 data]# gpssh -h smdw1 -e 'chown -R gpadmin:gpadmin /data/'

[smdw1] chown -R gpadmin:gpadmin /data/

3) Create the data directory locations on all segment hosts

Tip: gpssh -h targets the given hostname; gpssh -f targets the hosts listed in a file.

Use the hostfile_segonly file created earlier to specify the list of segment hosts. For example:

[root@gp_test1 data]# gpssh -f /dba_files/gp_files/hostfile_segonly -e 'mkdir /data'

[sdw2] mkdir /data

[sdw1] mkdir /data

[root@gp_test1 data]# gpssh -f /dba_files/gp_files/hostfile_segonly -e 'mkdir /data/primary'

[sdw2] mkdir /data/primary

[sdw1] mkdir /data/primary

[root@gp_test1 data]# gpssh -f /dba_files/gp_files/hostfile_segonly -e 'mkdir /data/mirror'

[sdw2] mkdir /data/mirror

[sdw1] mkdir /data/mirror

[root@gp_test1 data]# gpssh -f /dba_files/gp_files/hostfile_segonly -e 'chown -R gpadmin:gpadmin /data/'

[sdw2] chown -R gpadmin:gpadmin /data/

[sdw1] chown -R gpadmin:gpadmin /data/

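4) Configure NTP to synchronize the system clocks

GP recommends using NTP (Network Time Protocol) to synchronize the system clocks of all hosts in the GPDB system.

On the segment hosts, NTP should use the master host as the primary time source and the standby as the secondary time source. On the master and standby, configure NTP to use your preferred time source (if there is no better option, the master itself can serve as the top-level time source).

Configuring NTP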

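1. On the master host, log in as root and edit /etc/ntp.conf. Set the server parameter to the data center's NTP time server. For example (assuming 10.6.220.20 is the IP address of the data center NTP server):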

server 10.6.220.20

2. On each segment host, log in as root and edit /etc/ntp.conf. Set the first server parameter to the master host and the second to the standby host. For example:

server mdw1 prefer

server smdw1

3. On the standby host, log in as root and edit /etc/ntp.conf. Set the first server parameter to the master host and the second to the data center time server. For example:

server mdw1 prefer

server 10.6.220.20
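The three roles above differ only in their `server` lines. A sketch that prints them per role, using the example address 10.6.220.20 (overridable through a hypothetical DC_NTP variable) and this article's hostnames; the function name is also illustrative:

```shell
#!/bin/sh
# Data center NTP server from the example above; override with DC_NTP=...
DC_NTP=${DC_NTP:-10.6.220.20}

# Print the ntp.conf "server" lines for a role: master, standby or segment.
ntp_server_lines() {
    case "$1" in
        master)  printf 'server %s\n' "$DC_NTP" ;;
        standby) printf 'server mdw1 prefer\nserver %s\n' "$DC_NTP" ;;
        segment) printf 'server mdw1 prefer\nserver smdw1\n' ;;
        *)       echo "unknown role: $1" >&2; return 1 ;;
    esac
}
```

For example, the output of `ntp_server_lines segment` is what sdw1 and sdw2 would carry in /etc/ntp.conf in place of their existing server lines.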

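4. On the master host, use the NTP daemon to synchronize the system clocks of all segment hosts. For example, with gpssh (hostfile_allhosts lists every host; in this setup host_file serves that purpose):

```
# gpssh -f hostfile_allhosts -v -e 'ntpd'
```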

5. To keep the cluster clocks synchronized automatically, start the ntpd service on each NTP client and enable it at boot:

```
# /etc/init.d/ntpd start
# chkconfig --level 35 ntpd on
```

Alternatively, run `service ntpd start`, then use `ntsysv` to select the ntpd service.

Here is the /etc/ntp.conf of the NTP host (the master), including the author's modifications:

```
# Permit time synchronization with our time source, but do not
# permit the source to query or modify the service on this system.
restrict default kod nomodify notrap nopeer noquery
restrict -6 default kod nomodify notrap nopeer noquery

# Permit all access over the loopback interface. This could
# be tightened as well, but to do so would effect some of
# the administrative functions.
restrict 127.0.0.1
restrict -6 ::1
restrict 10.27.1.0 mask 255.255.255.0 nomodify

# Hosts on local network are less restricted.
#restrict 192.168.1.0 mask 255.255.255.0 nomodify notrap

# Use public servers from the pool.ntp.org project.
# Please consider joining the pool (http://www.pool.ntp.org/join.html).
#broadcast 192.168.1.255 key 42 # broadcast server
#broadcastclient # broadcast client
#broadcast 224.0.1.1 key 42 # multicast server
#multicastclient 224.0.1.1 # multicast client
#manycastserver 239.255.254.254 # manycast server
#manycastclient 239.255.254.254 key 42 # manycast client

# Undisciplined Local Clock. This is a fake driver intended for backup
# and when no outside source of synchronized time is available.
server 127.127.1.0 # local clock
fudge 127.127.1.0 stratum 10

# Drift file. Put this in a directory which the daemon can write to.
# No symbolic links allowed, either, since the daemon updates the file
# by creating a temporary in the same directory and then rename()'ing
# it to the file.
enable auth monitor
driftfile /var/lib/ntp/drift
statsdir /var/lib/ntp/ntpstats/
filegen peerstats file peerstats type day enable
filegen loopstats file loopstats type day enable
filegen clockstats file clockstats type day enable

# Key file containing the keys and key identifiers used when operating
# with symmetric key cryptography.
keys /etc/ntp/keys
trustedkey 0
requestkey 0
controlkey

# Specify the key identifiers which are trusted.
#trustedkey 4 8 42

# Specify the key identifier to use with the ntpdc utility.
#requestkey 8

# Specify the key identifier to use with the ntpq utility.
#controlkey 8
```

5. Check the system environment

Log in to the master host as gpadmin.

Source the greenplum_path.sh file:

source /opt/greenplum/greenplum_path.sh

Create a file named hostfile_gpcheck containing the hostnames of all GP hosts, with no extra whitespace:

$ vi /dba_files/gp_files/hostfile_gpcheck

```
mdw1
smdw1
sdw1
sdw2
```

You can check that the file is correct with the following command:

```
$ gpssh -f /dba_files/gp_files/hostfile_gpcheck -e hostname
```

It should return the hostname of every host.
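gpcheck and gpssh read these files line by line, so blank lines, stray whitespace (including Windows line endings) or duplicate names can cause confusing failures. A minimal check, with a hypothetical function name:

```shell
#!/bin/sh
# Sanity-check a host file: no blank lines, no leading/trailing whitespace
# (a trailing \r counts), no duplicate hostnames. Offending lines are printed
# with their line numbers; returns 1 if any problem is found.
check_hostfile() {
    local file="$1" rc=0 dups
    if grep -nE '^[[:space:]]*$' "$file"; then
        echo "blank line(s) found" >&2; rc=1
    fi
    if grep -nE '^[[:space:]]|[[:space:]]$' "$file"; then
        echo "leading/trailing whitespace found" >&2; rc=1
    fi
    dups=$(sort "$file" | uniq -d)
    if [ -n "$dups" ]; then
        echo "duplicate hostnames: $dups" >&2; rc=1
    fi
    return "$rc"
}
```

For example, `check_hostfile /dba_files/gp_files/hostfile_gpcheck` before running gpcheck.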

```
[gpadmin@gp_test1 ~]$ gpcheck -f /dba_files/gp_files/hostfile_gpcheck -m mdw1 -s smdw1
20150314:20:07:34:017843 gpcheck:gp_test1:gpadmin-[INFO]:-dedupe hostnames
20150314:20:07:34:017843 gpcheck:gp_test1:gpadmin-[INFO]:-Detected platform: Generic Linux Cluster
20150314:20:07:34:017843 gpcheck:gp_test1:gpadmin-[INFO]:-generate data on servers
20150314:20:07:34:017843 gpcheck:gp_test1:gpadmin-[INFO]:-copy data files from servers
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[INFO]:-delete remote tmp files
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[INFO]:-Using gpcheck config file: /opt/greenplum//etc/gpcheck.cnf
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(None): utility will not check all settings when run as non-root user
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): on device (fd0) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): on device (hdc) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): on device (sda) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): /etc/sysctl.conf value for key 'kernel.shmmax' has value '5000000000' and expects '500000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): /etc/sysctl.conf value for key 'kernel.sem' has value '250 5120000 100 20480' and expects '250 512000 100 2048'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test4): /etc/sysctl.conf value for key 'kernel.shmall' has value '40000000000' and expects '4000000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): on device (fd0) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): on device (hdc) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): on device (sda) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): /etc/sysctl.conf value for key 'kernel.shmmax' has value '5000000000' and expects '500000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): /etc/sysctl.conf value for key 'kernel.sem' has value '250 5120000 100 20480' and expects '250 512000 100 2048'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test1): /etc/sysctl.conf value for key 'kernel.shmall' has value '40000000000' and expects '4000000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): on device (fd0) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): on device (hdc) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): on device (sda) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): /etc/sysctl.conf value for key 'kernel.shmmax' has value '5000000000' and expects '500000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): /etc/sysctl.conf value for key 'kernel.sem' has value '250 5120000 100 20480' and expects '250 512000 100 2048'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test2): /etc/sysctl.conf value for key 'kernel.shmall' has value '40000000000' and expects '4000000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): on device (fd0) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): on device (hdc) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): on device (sda) IO scheduler 'cfq' does not match expected value 'deadline'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): /etc/sysctl.conf value for key 'kernel.shmmax' has value '5000000000' and expects '500000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): /etc/sysctl.conf value for key 'kernel.sem' has value '250 5120000 100 20480' and expects '250 512000 100 2048'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): /etc/sysctl.conf value for key 'kernel.shmall' has value '40000000000' and expects '4000000000'
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[ERROR]:-GPCHECK_ERROR host(gp_test3): potential NTPD issue. gpcheck start time (Sat Mar 14 20:07:34 2015) time on machine (Sat Mar 14 20:07:14 2015)
20150314:20:07:35:017843 gpcheck:gp_test1:gpadmin-[INFO]:-gpcheck completing...
[gpadmin@gp_test1 ~]$
```

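IV. Checking Hardware Performance

1. Check network performance

The network test options are parallel (-r N), serial (-r n), and full-matrix (-r M). The test runs a network benchmark that streams data from the current host to the remote hosts for 5 seconds. By default, data is sent to every remote host in parallel, and the minimum, maximum, average, and median transfer rates are reported in MB/s. If the overall transfer rate is lower than expected (under 100 MB/s), run the serial test with -r n to get per-host results. To run the matrix test, specify -r M so that every host sends data to and receives data from every other specified host; this verifies whether the network layer can sustain a full-matrix workload.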

```
[gpadmin@gp_test1 gp_files]$ gpcheckperf -f hostfile_exkeys -r N -d /tmp > subnet1.out
[gpadmin@gp_test1 gp_files]$ vi subnet1.out
/opt/greenplum//bin/gpcheckperf -f hostfile_exkeys -r N -d /tmp

-------------------
-- NETPERF TEST
-------------------

====================
== RESULT
====================
Netperf bisection bandwidth test
mdw1 -> smdw1 = 366.560000
sdw1 -> sdw2 = 362.050000
smdw1 -> mdw1 = 363.960000
sdw2 -> sdw1 = 366.690000

Summary:
sum = 1459.26 MB/sec
min = 362.05 MB/sec
max = 366.69 MB/sec
avg = 364.81 MB/sec
median = 366.56 MB/sec
```
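The summary lines can be recomputed from the per-pair rates, which helps when comparing runs. A sketch in awk with a hypothetical function name; the median convention (the upper of the two middle values for an even count) is inferred from the output above, not taken from gpcheckperf documentation:

```shell
#!/bin/sh
# Read one rate (MB/s) per line on stdin and print sum/min/max/avg/median.
summarize_rates() {
    sort -n | awk '
        { v[NR] = $1; sum += $1 }
        END {
            if (NR == 0) exit 1
            printf "sum = %.2f MB/sec\n", sum
            printf "min = %.2f MB/sec\n", v[1]
            printf "max = %.2f MB/sec\n", v[NR]
            printf "avg = %.2f MB/sec\n", sum / NR
            printf "median = %.2f MB/sec\n", v[int(NR / 2) + 1]
        }'
}
```

For example, `awk -F'= ' '/->/ {print $2}' subnet1.out | summarize_rates` reproduces the sum, min, max and median of the report above.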

2. Check disk I/O and memory bandwidth

```
[gpadmin@gp_test1 gp_files]$ gpcheckperf -f hostfile_segonly -d /data/mirror -r ds
/opt/greenplum//bin/gpcheckperf -f hostfile_segonly -d /data/mirror -r ds

--------------------
-- DISK WRITE TEST
--------------------

--------------------
-- DISK READ TEST
--------------------

--------------------
-- STREAM TEST
--------------------

====================
== RESULT
====================

disk write avg time (sec): 752.24
disk write tot bytes: 4216225792
disk write tot bandwidth (MB/s): 5.46
disk write min bandwidth (MB/s): 0.00 [sdw2]
disk write max bandwidth (MB/s): 5.46 [sdw1]

disk read avg time (sec): 105.09
disk read tot bytes: 8432451584
disk read tot bandwidth (MB/s): 76.53
disk read min bandwidth (MB/s): 37.94 [sdw2]
disk read max bandwidth (MB/s): 38.59 [sdw1]

stream tot bandwidth (MB/s): 7486.56
stream min bandwidth (MB/s): 3707.16 [sdw2]
stream max bandwidth (MB/s): 3779.40 [sdw1]
```

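Looking at these numbers, you may think my 4 hosts are pretty underpowered.

Ahem, they are: all 4 are actually virtual machines carved out of a single physical host with VMware ESX.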

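V. Initializing GreenPlum

1. Confirm that the previous steps are complete.

2. Create a host file containing only the segment host addresses; if a host has multiple network interfaces, list all of them.

3. For the configuration file, start from the template: cp $GPHOME/docs/cli_help/gpconfigs/gpinitsystem_config /dba_files/gp_files

4. Note: you can configure only the primary segment instances at initialization, and deploy the mirror segment instances later with the gpaddmirrors command.

Modify it as follows: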

```
# FILE NAME: gpinitsystem_config

# Configuration file needed by the gpinitsystem

################################################
#### REQUIRED PARAMETERS
################################################

#### Name of this Greenplum system enclosed in quotes. (The system's code name.)
ARRAY_NAME="Greenplum DW4P 2M2S(2m2p)"

#### Naming convention for utility-generated data directories. (Segment name prefix.)
SEG_PREFIX=gpseg

#### Base number by which primary segment port numbers
#### are calculated. (Starting port number.)
PORT_BASE=40000

#### File system location(s) where primary segment data directories
#### will be created. The number of locations in the list dictate
#### the number of primary segments that will get created per
#### physical host (if multiple addresses for a host are listed in
#### the hostfile, the number of segments will be spread evenly across
#### the specified interface addresses).
#### Data directories for the primary segments. The number of entries in
#### DATA_DIRECTORY sets how many instances each segment host gets. If the
#### host file lists several interfaces for a segment host, the instances
#### are spread evenly across all listed interfaces.
#### In this example the host file lists 2 segment hosts, sdw1 and sdw2,
#### each with a single NIC.
declare -a DATA_DIRECTORY=(/data1/primary /data1/primary)

#### OS-configured hostname or IP address of the master host.
#### Hostname of the machine running the master.
MASTER_HOSTNAME=mdw1

#### File system location where the master data directory
#### will be created.
#### Master data directory.
MASTER_DIRECTORY=/data/master

#### Port number for the master instance.
#### Master port.
MASTER_PORT=5432

#### Shell utility used to connect to remote hosts.
#### Remote shell used to reach the other hosts.
TRUSTED_SHELL=ssh

#### Maximum log file segments between automatic WAL checkpoints.
#### CHECK_POINT_SEGMENTS sets the number of WAL segments between checkpoints.
#### More checkpoint segments can improve performance for large data loads,
#### but lengthen crash recovery. See the documentation for details.
#### The default is 8; to be conservative, keep the default. This test
#### environment is a single IBM 3650 M3 (er, probably a Lenovo 3650 by now).
#### With several server-class hosts that have enough resources
#### (>16 GB RAM, >16 cores), consider CHECK_POINT_SEGMENTS=256.
CHECK_POINT_SEGMENTS=8

#### Default server-side character set encoding.
#### Character set.
ENCODING=UNICODE

################################################
#### OPTIONAL MIRROR PARAMETERS
################################################

#### Base number by which mirror segment port numbers
#### are calculated.
#### Starting port number for the mirror segments.
#MIRROR_PORT_BASE=50000

#### Base number by which primary file replication port
#### numbers are calculated.
#### Starting port for primary-to-mirror file replication on the primaries.
#REPLICATION_PORT_BASE=41000

#### Base number by which mirror file replication port
#### numbers are calculated.
#### Starting port for file replication on the mirrors.
#MIRROR_REPLICATION_PORT_BASE=51000

#### File system location(s) where mirror segment data directories
#### will be created. The number of mirror locations must equal the
#### number of primary locations as specified in the
#### DATA_DIRECTORY parameter.
#### Mirror segment data directories.
#declare -a MIRROR_DATA_DIRECTORY=(/data1/mirror /data1/mirror /data1/mirror /data2/mirror /data2/mirror /data2/mirror)

################################################
#### OTHER OPTIONAL PARAMETERS
################################################

#### Create a database of this name after initialization.
#DATABASE_NAME=name_of_database

#### Specify the location of the host address file here instead of
#### with the -h option of gpinitsystem.
#MACHINE_LIST_FILE=/home/gpadmin/gpconfigs/hostfile_gpinitsystem
```
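The primary segment table in the gpinitsystem output in this article lists host, data directory, port, dbid and content ID for each segment. A sketch that reproduces it from PORT_BASE, SEG_PREFIX, the segment hosts and DATA_DIRECTORY. The numbering rules in the comments are inferred from that output, not taken from Greenplum's code, and the function name is hypothetical:

```shell
#!/bin/sh
# Usage: layout_primaries PORT_BASE SEG_PREFIX "host1 host2 ..." dir1 dir2 ...
# Inferred rules:
#   port    = PORT_BASE + position of the directory on its host (0, 1, ...)
#   content = running segment number across all hosts (0, 1, 2, ...)
#   dbid    = content + 2 (dbid 1 being the master)
#   dir     = <data directory>/<SEG_PREFIX><content>
layout_primaries() {
    local port_base="$1" prefix="$2" hosts="$3" content=0 host dir i
    shift 3
    for host in $hosts; do
        i=0
        for dir in "$@"; do
            echo "$host $dir/$prefix$content $((port_base + i)) $((content + 2)) $content"
            i=$((i + 1))
            content=$((content + 1))
        done
    done
}
```

With the corrected directories, `layout_primaries 40000 gpseg "sdw1 sdw2" /data/primary /data/primary` gives the four rows for gpseg0 to gpseg3 that gpinitsystem printed.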

Next, we initialize the GP cluster.

```
[gpadmin@gp_test1 gp_files]$ gpinitsystem -c gpinitsystem_config -h hostfile_segonly -s smdw1 -S
20150317:11:49:33:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking configuration parameters, please wait...
/bin/mv: overwrite "gpinitsystem_config", overriding mode 0444? yes
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Reading Greenplum configuration file gpinitsystem_config
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Locale has not been set in gpinitsystem_config, will set to default value
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Locale set to en_US.utf8
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[WARN]:-Master hostname mdw1 does not match hostname output
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking to see if mdw1 can be resolved on this host
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Can resolve mdw1 to this host
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-No DATABASE_NAME set, will exit following template1 updates
20150317:11:49:43:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-MASTER_MAX_CONNECT not set, will set to default value 250
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking configuration parameters, Completed
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Commencing multi-home checks, please wait...
..
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Configuring build for standard array
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[WARN]:-Option -S supplied, but no mirrors have been defined, ignoring -S option
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Commencing multi-home checks, Completed
20150317:11:49:44:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Building primary segment instance array, please wait...
....
20150317:11:49:46:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking Master host
20150317:11:49:47:026105 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking new segment hosts, please wait...
.
/bin/touch: cannot touch "/data1/primary/tmp_file_test": No such file or directory
20150317:11:49:49:gpinitsystem:gp_test1:gpadmin-[FATAL]:-Cannot write to /data1/primary on sdw1 Script Exiting!
```

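Ahem, not a great start. I had mistyped /data/primary as /data1/primary, hence the "No such file or directory" error.

At this point, check whether a backout script such as `/home/gpadmin/gpAdminLogs/backout_gpinitsystem_<user>_<timestamp>` was generated. A failed gpinitsystem can leave an incomplete system behind; the backout script cleans it up by removing the data directories, postgres processes, and log files that gpinitsystem created.

After running the backout script and fixing the cause of the gpinitsystem failure, you can retry initializing the GPDB cluster.

No backout script was generated here, so I corrected the DATA_DIRECTORY entries in gpinitsystem_config and ran it again: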
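The failure above came from a DATA_DIRECTORY path that did not exist. A pre-flight sketch that sources gpinitsystem_config in bash (the file uses bash syntax such as `declare -a`) and checks that each entry exists and is writable. It only inspects the machine it runs on, so it would have to be run on each segment host, whereas gpinitsystem checks sdw1 and sdw2 remotely; the function name is hypothetical:

```shell
#!/bin/sh
# Check every DATA_DIRECTORY entry of a gpinitsystem_config file ($1)
# on the local machine. Returns 1 if any entry is missing or not writable.
check_data_dirs() {
    # Evaluate the config in bash, since it uses bash arrays
    bash -c '
        . "$1" || exit 1
        if [ "${#DATA_DIRECTORY[@]}" -eq 0 ]; then
            echo "DATA_DIRECTORY is not set"; exit 1
        fi
        rc=0
        for d in "${DATA_DIRECTORY[@]}"; do
            if [ -d "$d" ] && [ -w "$d" ]; then
                echo "ok      $d"
            else
                echo "MISSING $d (absent or not writable)"
                rc=1
            fi
        done
        exit "$rc"
    ' check_data_dirs "$1"
}
```

For example, `check_data_dirs /dba_files/gp_files/gpinitsystem_config` on a segment host would have reported /data1/primary as missing.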

gpinitsystem -c gpinitsystem_config -h hostfile_segonly -s smdw1 -S

[gpadmin@gp_test1 gp_files]$ gpinitsystem -c gpinitsystem_config -h hostfile_segonly -s smdw1 -S20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking configuration parameters, please wait... 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-ReadingGreenplum configuration file gpinitsystem_config 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Locale has not been setin gpinitsystem_config, will set to default value 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Localeset to en_US.utf8 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-Master hostname mdw1 does not match hostname output 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking to see if mdw1 can be resolved on this host 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Can resolve mdw1 to this host 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-No DATABASE_NAME set, will exit following template1 updates 20150317:15:28:50:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-MASTER_MAX_CONNECT not set, will set to default value 250 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking configuration parameters,Completed 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Commencing multi-home checks, please wait... .. 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Configuring build for standard array 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-Option-S supplied, but no mirrors have been defined, ignoring -S option 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Commencing multi-home checks,Completed 20150317:15:28:51:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Building primary segment instance array, please wait... .... 20150317:15:28:53:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-CheckingMaster host 20150317:15:28:53:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking new segment hosts, please wait... .... 
20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checking new segment hosts,Completed 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-GreenplumDatabaseCreationParameters 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:--------------------------------------- 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-MasterConfiguration 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:--------------------------------------- 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master instance name =Greenplum DW4P 2M2S(2m2p) 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master hostname = mdw1 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master port =5432 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master instance dir =/data/master/gpseg-1 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master LOCALE = en_US.utf8 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Greenplum segment prefix = gpseg 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-MasterDatabase= 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master connections =250 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master buffers =128000kB 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Segment connections =750 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Segment buffers =128000kB 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Checkpoint segments =8 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Encoding= UNICODE 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Postgres param file =Off 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Initdb to be used =/opt/greenplum/bin/initdb 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-GP_LIBRARY_PATH is =/opt/greenplum/lib 20150317:15:28:58:030282 
gpinitsystem:gp_test1:gpadmin-[INFO]:-Ulimit check =Passed 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Array host connect type =Single hostname per node 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Master IP address [1]=10.27.1.206 20150317:15:28:58:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-StandbyMaster= smdw1 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Primary segment # = 2 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Standby IP address =10.27.1.207 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-TotalDatabase segments =4 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Trusted shell = ssh 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Number segment hosts =2 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Mirroring config = OFF 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:---------------------------------------- 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-GreenplumPrimarySegmentConfiguration 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:---------------------------------------- 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-sdw1 /data/primary/gpseg0 4000020 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-sdw1 /data/primary/gpseg1 4000131 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-sdw2 /data/primary/gpseg2 4000042 20150317:15:28:59:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-sdw2 /data/primary/gpseg3 4000153 Continue with Greenplum creation Yy/Nn> y 20150317:15:29:24:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Building the Master instance database, please wait... 
20150317:15:30:20:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Starting the Masterin admin mode 20150317:15:30:26:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Commencing parallel build of primary segment instances 20150317:15:30:26:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Spawning parallel processes batch [1], please wait... .... 20150317:15:30:27:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Waitingfor parallel processes batch [1], please wait... ................................................................................................................. 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:------------------------------------------------ 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Parallel process exit status 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:------------------------------------------------ 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Total processes marked as completed =4 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Total processes marked as killed =0 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Total processes marked as failed =0 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:------------------------------------------------ 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Deleting distributed backout files 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Removing back out file 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-No errors generated from parallel processes 20150317:15:32:21:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Starting initialization of standby master smdw1 20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Validating environment and parameters for standby initialization... 
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Checking for filespace directory /data/master/gpseg-1 on smdw1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:------------------------------------------------------
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum standby master initialization parameters
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:------------------------------------------------------
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum master hostname = gp_test1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum master data directory =/data/master/gpseg-1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum master port =5432
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum standby master hostname = smdw1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum standby master port =5432
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum standby master data directory =/data/master/gpseg-1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Greenplum update system catalog =On
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:------------------------------------------------------
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Filespace locations
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:------------------------------------------------------
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-pg_system -> /data/master/gpseg-1
20150317:15:32:22:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Syncing Greenplum Database extensions to standby
20150317:15:32:23:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-The packages on smdw1 are consistent.
20150317:15:32:23:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Updating pg_hba.conf file...
20150317:15:32:23:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Updating pg_hba.conf file on segments...
20150317:15:32:30:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Adding standby master to catalog...
20150317:15:32:30:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Database catalog updated successfully.
20150317:15:32:41:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Updating filespace flat files
20150317:15:32:41:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Updating filespace flat files
20150317:15:32:41:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Removing pg_hba.conf backup...
20150317:15:32:41:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Starting standby master
20150317:15:32:41:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Checking if standby master is running on host: smdw1 in directory: /data/master/gpseg-1
20150317:15:33:25:013395 gpinitstandby:gp_test1:gpadmin-[INFO]:-Successfully created standby master on smdw1
20150317:15:33:25:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Successfully completed standby master initialization
20150317:15:33:33:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Restarting the Greenplum instance in production mode
20150317:15:33:33:013771 gpstop:gp_test1:gpadmin-[INFO]:-Starting gpstop with args: -a -i -m -d /data/master/gpseg-1
20150317:15:33:33:013771 gpstop:gp_test1:gpadmin-[INFO]:-Gathering information and validating the environment...
20150317:15:33:33:013771 gpstop:gp_test1:gpadmin-[INFO]:-Obtaining Greenplum Master catalog information
20150317:15:33:33:013771 gpstop:gp_test1:gpadmin-[INFO]:-Obtaining Segment details from master...
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-Greenplum Version: 'postgres (Greenplum Database) 4.3.4.1 build 2'
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-There are 0 connections to the database
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-Commencing Master instance shutdown with mode='immediate'
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-Master host=gp_test1
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-Commencing Master instance shutdown with mode=immediate
20150317:15:33:34:013771 gpstop:gp_test1:gpadmin-[INFO]:-Master segment instance directory=/data/master/gpseg-1
20150317:15:33:35:013771 gpstop:gp_test1:gpadmin-[INFO]:-Attempting forceful termination of any leftover master process
20150317:15:33:35:013771 gpstop:gp_test1:gpadmin-[INFO]:-Terminating processes for segment /data/master/gpseg-1
20150317:15:33:35:013771 gpstop:gp_test1:gpadmin-[ERROR]:-Failed to kill processes for segment /data/master/gpseg-1: ([Errno 3] No such process)
20150317:15:33:35:013858 gpstart:gp_test1:gpadmin-[INFO]:-Starting gpstart with args: -a -d /data/master/gpseg-1
20150317:15:33:35:013858 gpstart:gp_test1:gpadmin-[INFO]:-Gathering information and validating the environment...
20150317:15:33:35:013858 gpstart:gp_test1:gpadmin-[INFO]:-Greenplum Binary Version: 'postgres (Greenplum Database) 4.3.4.1 build 2'
20150317:15:33:35:013858 gpstart:gp_test1:gpadmin-[INFO]:-Greenplum Catalog Version: '201310150'
20150317:15:33:35:013858 gpstart:gp_test1:gpadmin-[INFO]:-Starting Master instance in admin mode
20150317:15:33:36:013858 gpstart:gp_test1:gpadmin-[INFO]:-Obtaining Greenplum Master catalog information
20150317:15:33:36:013858 gpstart:gp_test1:gpadmin-[INFO]:-Obtaining Segment details from master...
20150317:15:33:36:013858 gpstart:gp_test1:gpadmin-[INFO]:-Setting new master era
20150317:15:33:36:013858 gpstart:gp_test1:gpadmin-[INFO]:-Master Started...
20150317:15:33:38:013858 gpstart:gp_test1:gpadmin-[INFO]:-Shutting down master
20150317:15:33:40:013858 gpstart:gp_test1:gpadmin-[INFO]:-Commencing parallel segment instance startup, please wait...
...
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Process results...
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-----------------------------------------------------
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Successful segment starts =4
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Failed segment starts =0
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Skipped segment starts (segments are marked down in configuration)=0
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-----------------------------------------------------
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Successfully started 4 of 4 segment instances
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-----------------------------------------------------
20150317:15:33:43:013858 gpstart:gp_test1:gpadmin-[INFO]:-Starting Master instance gp_test1 directory /data/master/gpseg-1
20150317:15:33:47:013858 gpstart:gp_test1:gpadmin-[INFO]:-Command pg_ctl reports Master gp_test1 instance active
20150317:15:33:47:013858 gpstart:gp_test1:gpadmin-[INFO]:-Starting standby master
20150317:15:33:47:013858 gpstart:gp_test1:gpadmin-[INFO]:-Checking if standby master is running on host: smdw1 in directory: /data/master/gpseg-1
20150317:15:34:00:013858 gpstart:gp_test1:gpadmin-[INFO]:-Database successfully started
20150317:15:34:00:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Completed restart of Greenplum instance in production mode
20150317:15:34:00:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Loading gp_toolkit...
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Scanning utility log file for any warning messages
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-*******************************************************
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-Scan of log file indicates that some warnings or errors
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-were generated during the array creation
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Please review contents of log file
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-/home/gpadmin/gpAdminLogs/gpinitsystem_20150317.log
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-To determine level of criticality
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-These messages could be from a previous run of the utility
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-that was called today!
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[WARN]:-*******************************************************
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Greenplum Database instance successfully created
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-------------------------------------------------------
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-To complete the environment configuration, please
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-update gpadmin .bashrc file with the following
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-1. Ensure that the greenplum_path.sh file is sourced
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-2. Add "export MASTER_DATA_DIRECTORY=/data/master/gpseg-1"
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-   to access the Greenplum scripts for this instance:
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-   or, use -d /data/master/gpseg-1 option for the Greenplum scripts
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-   Example gpstate -d /data/master/gpseg-1
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Script log file =/home/gpadmin/gpAdminLogs/gpinitsystem_20150317.log
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-To remove instance, run gpdeletesystem utility
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Standby Master smdw1 has been configured
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-To activate the Standby Master Segment in the event of Master
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-failure review options for gpactivatestandby
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-------------------------------------------------------
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-The Master /data/master/gpseg-1/pg_hba.conf post gpinitsystem
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-has been configured to allow all hosts within this new
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-array to intercommunicate. Any hosts external to this
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-new array must be explicitly added to this file
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-Refer to the Greenplum Admin support guide which is
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-located in the /opt/greenplum//docs directory
20150317:15:34:05:030282 gpinitsystem:gp_test1:gpadmin-[INFO]:-------------------------------------------------------
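The log above reports that warnings or errors were written during array creation and asks for /home/gpadmin/gpAdminLogs/gpinitsystem_20150317.log to be reviewed. A minimal sketch for pulling out only the problem entries follows; it assumes every entry carries a bracketed level tag such as `[WARN]` or `[ERROR]`, as in the output shown, and the function name `gp_log_problems` is hypothetical.

```shell
# Hypothetical helper: print only the WARN/ERROR/FATAL entries of a
# gpinitsystem log. Assumes the "...gpadmin-[LEVEL]:-message" layout
# seen in the console output above.
gp_log_problems() {
    local log_file="$1"
    if [ ! -r "$log_file" ]; then
        echo "cannot read: $log_file" >&2
        return 2
    fi
    # -E enables extended regex; the brackets are escaped so the level tag
    # is matched literally. grep exits with status 1 when nothing matches.
    grep -E '\[(WARN|ERROR|FATAL)\]' "$log_file"
}
```

Usage: `gp_log_problems /home/gpadmin/gpAdminLogs/gpinitsystem_20150317.log`. In the console output of this run, the only `[ERROR]` is gpstop's `[Errno 3] No such process`; errno 3 is ESRCH, meaning the leftover master process it tried to kill had already exited, so it is most likely harmless here, and gpstart then reports 4 of 4 segments started. Because the log file is named per day, entries from an earlier run on the same day will also appear, as the log itself warns.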

Log in to the default postgres database with psql -d postgres and take a look:

[gpadmin@gp_test1 /]$ psql -d postgres
psql (8.2.15)
Type "help" for help.

postgres=#
postgres=#
postgres=# help
You are using psql, the command-line interface to PostgreSQL.
Type:  \copyright for distribution terms
       \h for help with SQL commands
       \? for help
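The gpinitsystem output earlier asks that gpadmin's .bashrc source greenplum_path.sh and export MASTER_DATA_DIRECTORY, so that psql and the gp* utilities (for example `gpstate` without `-d`) work in every new login shell. A minimal, idempotent sketch follows; the helper name `add_gp_env` and the install prefix `/opt/greenplum` are assumptions (the prefix is inferred from the `/opt/greenplum//docs` path printed in the log), so set `GPHOME_DIR` to the actual install directory if it differs.

```shell
# Assumed install prefix, inferred from "/opt/greenplum//docs" in the log;
# override by exporting GPHOME_DIR before running.
GPHOME_DIR="${GPHOME_DIR:-/opt/greenplum}"

# Hypothetical helper: append the two settings gpinitsystem asks for to an
# rc file, skipping any line that is already present.
add_gp_env() {
    local rc_file="$1"
    local line
    touch "$rc_file" || return 1
    for line in \
        "source ${GPHOME_DIR}/greenplum_path.sh" \
        "export MASTER_DATA_DIRECTORY=/data/master/gpseg-1"; do
        # -q: quiet, -x: whole-line match, -F: fixed string (no regex)
        grep -qxF "$line" "$rc_file" || printf '%s\n' "$line" >> "$rc_file"
    done
}
```

Run it as gpadmin on the master: `add_gp_env ~/.bashrc && source ~/.bashrc`; afterwards `gpstate` should locate the instance through MASTER_DATA_DIRECTORY. Checking each line with `grep -qxF` before appending means running the helper twice does not duplicate the entries.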