Linux Disk Quotas and RAID


Contents:

I. Disk quotas on Linux

II. RAID on Linux

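I. Disk quotas

Disk quotas are set and checked per filesystem. They keep users from consuming more space than they are allowed, and they keep the whole filesystem from being filled up by accident.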

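Overview

Enforced in the kernel

Enabled per filesystem

Different policies can be applied to different users or groups

Limits are set on blocks or on inodes

Soft limit: a warning threshold; it can be exceeded temporarily (during the grace period)

Hard limit: the maximum usable amount; it cannot be exceeded

Quota sizes: block limits are given in KB, inode limits as a number of files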
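To make the relation between the two limits concrete, here is a minimal shell sketch. It is not part of the quota tools: the function name quota_state is hypothetical, and it ignores the grace period that the kernel also enforces. A limit of 0 is treated as "no limit", which is how the quota tools represent an unset limit.

```shell
#!/bin/sh
# quota_state USED SOFT HARD
# Prints "ok", "soft-exceeded" or "hard-reached".
# All values are in the same unit (for example 1 KB blocks).
# A limit of 0 means "no limit". Hypothetical helper, for illustration only.
quota_state() {
    used=$1; soft=$2; hard=$3
    if [ "$hard" -gt 0 ] && [ "$used" -ge "$hard" ]; then
        echo "hard-reached"
    elif [ "$soft" -gt 0 ] && [ "$used" -gt "$soft" ]; then
        echo "soft-exceeded"
    else
        echo "ok"
    fi
}

quota_state 296960 300000 500000   # below the soft limit
quota_state 307200 300000 500000   # between soft and hard limit
quota_state 500000 300000 500000   # at the hard limit
```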

Initialization

Mount options for the partition: usrquota, grpquota

Initialize the quota database: quotacheck

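Enforcement

Turn quotas on or off: quotaon, quotaoff

Edit a user's quota in an editor: edquota username

Set a quota directly from the shell (the four numbers are the block soft limit, block hard limit, inode soft limit and inode hard limit):

setquota username 4096 5120 40 50 /foo

Use one user's quota as the template for another user:

edquota -p user1 user2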
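When the same limits have to be applied to several users, the setquota line above can be generated in a loop. The sketch below only prints the commands so they can be reviewed before running them as root. The function name gen_setquota and the user names are hypothetical; -u is setquota's option for user quotas.

```shell
#!/bin/sh
# gen_setquota FS BLOCK_SOFT BLOCK_HARD INODE_SOFT INODE_HARD USER...
# Prints one setquota command per user instead of executing it (dry run).
# Hypothetical helper, for illustration only.
gen_setquota() {
    fs=$1; bsoft=$2; bhard=$3; isoft=$4; ihard=$5
    shift 5
    for user in "$@"; do
        printf 'setquota -u %s %s %s %s %s %s\n' \
            "$user" "$bsoft" "$bhard" "$isoft" "$ihard" "$fs"
    done
}

gen_setquota /foo 4096 5120 40 50 user1 user2
```

Piping the output to sh as root would apply the limits; printing first keeps a typo from touching real quotas.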

Reporting

Per-user report: quota

Summary for a filesystem: repquota

Other tools: warnquota

Example: create a partition, format it, mount it on /home, and enable disk quotas on it

1. Create a 10 GB partition and format it as ext4

    # fdisk /dev/sdb
    # lsblk /dev/sdb
    NAME   MAJ:MIN RM SIZE RO TYPE MOUNTPOINT
    sdb      8:16   0  90G  0 disk
    └─sdb1   8:17   0  10G  0 part
    # mkfs.ext4 /dev/sdb1

2. Move the files under /home to another directory, mount /dev/sdb1 on /home, then move the data back.

    # mv /home/* /testdir/
    # ls /home/
    # mount /dev/sdb1 /home/       // mount /dev/sdb1 on /home
    # ls /home/
    lost+found
    # mv /testdir/* /home/         // move the original /home data back
    # ls /home/
    lost+found  xiaoshui

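3. Add the partition to /etc/fstab with the mount options usrquota,grpquota. Because it was mounted a moment ago without these options, unmount it and mount it again.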

    # vi /etc/fstab

    #
    # /etc/fstab
    # Created by anaconda on Mon Jul 25 09:34:22 2016
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk'
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
    #
    /dev/mapper/vg0-root                      /         ext4    defaults           1 1
    UUID=7bbc50de-dfee-4e22-8cb6-04d8520b6422 /boot     ext4    defaults           1 2
    /dev/mapper/vg0-usr                       /usr      ext4    defaults           1 2
    /dev/mapper/vg0-var                       /var      ext4    defaults           1 2
    /dev/mapper/vg0-swap                      swap      swap    defaults           0 0
    tmpfs                                     /dev/shm  tmpfs   defaults           0 0
    devpts                                    /dev/pts  devpts  gid=5,mode=620     0 0
    sysfs                                     /sys      sysfs   defaults           0 0
    proc                                      /proc     proc    defaults           1 0
    /dev/sdb1                                 /home     ext4    usrquota,grpquota  0 0

    # umount /home/
    # mount -a

4. Create the quota database

    # quotacheck -cug /home

5. Enable quotas

    # quotaon -p /home       // check whether quotas are enabled
    group quota on /home (/dev/sdb1) is off
    user quota on /home (/dev/sdb1) is off
    # quotaon /home          // enable quotas

6. Configure the quota limits

    # edquota xiaoshui       // soft is the soft limit, hard is the hard limit
    Disk quotas for user xiaoshui (uid 500):
      Filesystem   blocks     soft     hard   inodes   soft   hard
      /dev/sdb1        32   300000   500000        8      0      0

7. Switch to user xiaoshui and test whether the settings above have taken effect

    # su - xiaoshui                                  // switch to user xiaoshui
    $ pwd
    /home/xiaoshui
    $ dd if=/dev/zero of=file1 bs=1M count=290       // first create a 290 MB file
    290+0 records in
    290+0 records out
    304087040 bytes (304 MB) copied, 1.08561 s, 280 MB/s     // created successfully, no error
    $ dd if=/dev/zero of=file1 bs=1M count=300       // overwrite file1 with a 300 MB file
    sdb1: warning, user block quota exceeded.        // a warning appears
    300+0 records in
    300+0 records out
    314572800 bytes (315 MB) copied, 0.951662 s, 331 MB/s
    $ ll -h                                          // the file was still created
    total 300M
    -rw-rw-r-- 1 xiaoshui xiaoshui 300M Aug 26 08:16 file1
    $ dd if=/dev/zero of=file1 bs=1M count=500       // overwrite file1 again with a 500 MB file
    sdb1: warning, user block quota exceeded.
    sdb1: write failed, user block limit reached.    // the user's block hard limit has been reached
    dd: writing `file1': Disk quota exceeded
    489+0 records in
    488+0 records out
    511967232 bytes (512 MB) copied, 2.344 s, 218 MB/s
    $ ll -h                                          // there is no 500 MB file: dd was stopped at the hard limit
    total 489M
    -rw-rw-r-- 1 xiaoshui xiaoshui 489M Aug 26 08:19 file1
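The thresholds seen in this test follow from the limits set with edquota, which are counted in 1 KB blocks. As a rough check, the sketch below converts both limits to whole MiB; the helper name kb_to_mib is hypothetical. It ignores the few blocks the user already occupied before the test.

```shell
#!/bin/sh
# kb_to_mib KB
# Number of whole MiB that fit into KB one-kilobyte blocks (integer division).
# Hypothetical helper, for illustration only.
kb_to_mib() {
    echo $(( $1 / 1024 ))
}

soft_kb=300000   # soft limit from the edquota step
hard_kb=500000   # hard limit from the edquota step

echo "soft limit: $(kb_to_mib "$soft_kb") whole MiB"
echo "hard limit: $(kb_to_mib "$hard_kb") whole MiB"
```

This agrees with the session: the 290 MiB file stays under the soft limit, the 300 MiB file triggers the warning, and the 500 MiB attempt stops with 488 complete records written.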

8. Report the quota status

    # quota xiaoshui         // blocks is the user's current usage; the * marks usage above the soft quota, and the hard limit has been reached
    Disk quotas for user xiaoshui (uid 500):
         Filesystem   blocks    quota    limit   grace   files   quota   limit   grace
          /dev/sdb1  500004*   300000   500000   6days      10       0       0
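The asterisk after the blocks value is what a script can look for. The following sketch is an assumption about how such a check might be written, not a feature of the quota tools: the function name over_quota_filesystems is hypothetical, and it expects the single-line-per-filesystem layout shown above (quota may wrap lines when the device name is long, which this sketch does not handle).

```shell
#!/bin/sh
# over_quota_filesystems
# Reads the output of "quota USER" on stdin and prints each filesystem
# whose blocks column carries a trailing "*" (usage above the soft quota).
# Hypothetical helper, for illustration only.
over_quota_filesystems() {
    # Skip the two header lines, then test the second column.
    awk 'NR > 2 && $2 ~ /\*$/ { print $1 }'
}

# Sample input copied from the report above.
over_quota_filesystems <<'EOF'
Disk quotas for user xiaoshui (uid 500):
     Filesystem   blocks    quota    limit   grace   files   quota   limit   grace
      /dev/sdb1  500004*   300000   500000   6days      10       0       0
EOF
```

On a live system the input would come from the quota command itself, for example: quota xiaoshui | over_quota_filesystems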

RAID

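I. What is RAID

RAID: Redundant Arrays of Inexpensive (Independent) Disks. Several disks are combined into one "array" to provide better performance, redundancy, or both.

Advantages:

Higher I/O throughput:

disks are read and written in parallel

Higher durability:

achieved through disk redundancy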

II. RAID levels

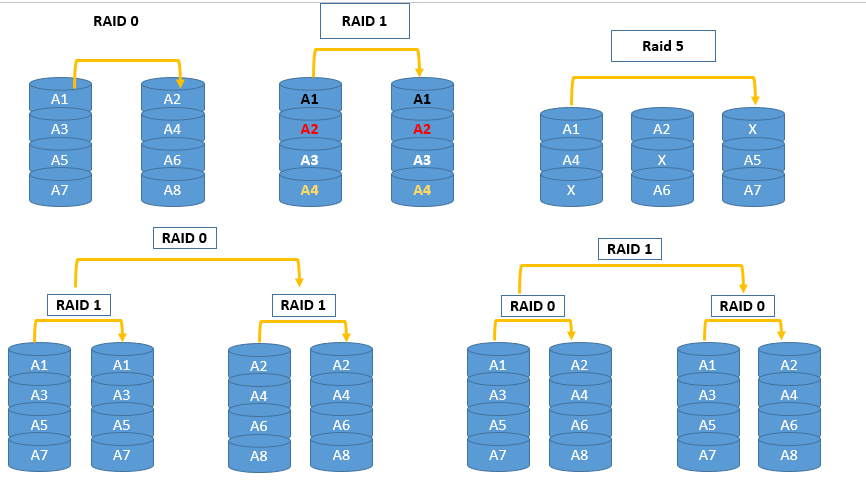

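RAID-0: striped volume (stripe)

Read and write performance improve

Usable space: N*min(S1,S2,...)

No fault tolerance

Minimum number of disks: 2, 2+

RAID-1: mirrored volume (mirror)

Read performance improves; write performance drops slightly

Usable space: 1*min(S1,S2,...)

Provides redundancy

Minimum number of disks: 2, 2N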

RAID-2

..

RAID-5

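Read and write performance improve

Usable space: (N-1)*min(S1,S2,...)

Fault tolerant: uses parity; at most 1 disk may fail

Minimum number of disks: 3, 3+

RAID-6

Read and write performance improve

Usable space: (N-2)*min(S1,S2,...)

Fault tolerant: at most 2 disks may fail

Minimum number of disks: 4, 4+

RAID-10

Read and write performance improve

Usable space: N*min(S1,S2,...)/2

Fault tolerant: each mirror pair can lose at most one disk

Minimum number of disks: 4, 4+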

RAID-01

RAID 0 first, then RAID 1
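The usable-space formulas above can be collected into one small shell function. This is a sketch for checking the arithmetic, not an mdadm feature; the function name raid_usable is hypothetical. N is the number of active member disks (spares do not count) and the third argument is min(S1,S2,...), the size of the smallest member.

```shell
#!/bin/sh
# raid_usable LEVEL N MIN_SIZE
# Prints the usable space of an array, in the same unit as MIN_SIZE,
# using the formulas listed above.
# Hypothetical helper, for illustration only.
raid_usable() {
    level=$1; n=$2; s=$3
    case $level in
        0)  echo $(( n * s )) ;;
        1)  echo "$s" ;;
        5)  echo $(( (n - 1) * s )) ;;
        6)  echo $(( (n - 2) * s )) ;;
        10) echo $(( n * s / 2 )) ;;
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

# Three 101376 KB members in RAID 5, as in the walkthrough that follows.
raid_usable 5 3 101376
```

The result for the last call, 202752, matches the Array Size that mdadm -D reports for the RAID 5 array built from three members with a Used Dev Size of 101376.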

Implementing RAID 5 in software

1. Prepare the disk partitions

    # lsblk
    NAME   MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    sda      8:0    0   80G  0 disk
    ├─sda1   8:1    0  488M  0 part /boot
    ├─sda2   8:2    0   40G  0 part /
    ├─sda3   8:3    0   20G  0 part /usr
    ├─sda4   8:4    0  512B  0 part
    ├─sda5   8:5    0    2G  0 part [SWAP]
    └─sda6   8:6    0    1M  0 part
    sdb      8:16   0   80G  0 disk
    └─sdb1   8:17   0    1G  0 part
    sdc      8:32   0   20G  0 disk
    sdd      8:48   0   20G  0 disk
    ├─sdd1   8:49   0  100M  0 part
    ├─sdd2   8:50   0  100M  0 part
    ├─sdd3   8:51   0  100M  0 part
    ├─sdd4   8:52   0    1K  0 part
    └─sdd5   8:53   0   99M  0 part
    sde      8:64   0   20G  0 disk
    └─sde1   8:65   0  100M  0 part
    sdf      8:80   0   20G  0 disk
    sr0     11:0    1  7.2G  0 rom

Prepare four disk partitions and set their partition type to Linux RAID (fd). Here they are /dev/sdd{1,2,3} and /dev/sde1.

2. Create the RAID 5 array

    # mdadm -C /dev/md0 -a yes -l 5 -n 3 -x1 /dev/sd{d{1,2,3},e1}
    mdadm: /dev/sdd1 appears to be part of a raid array:
           level=raid5 devices=4 ctime=Mon Aug 29 19:43:21 2016
    mdadm: /dev/sdd2 appears to be part of a raid array:
           level=raid0 devices=0 ctime=Thu Jan 1 08:00:00 1970
    mdadm: partition table exists on /dev/sdd2 but will be lost or
           meaningless after creating array
    Continue creating array? yes
    mdadm: Defaulting to version 1.2 metadata
    mdadm: array /dev/md0 started.
    # mdadm -D /dev/md0
    /dev/md0:
            Version : 1.2
      Creation Time : Thu Sep 1 14:39:53 2016
         Raid Level : raid5
         Array Size : 202752 (198.03 MiB 207.62 MB)
      Used Dev Size : 101376 (99.02 MiB 103.81 MB)
       Raid Devices : 3
      Total Devices : 4
        Persistence : Superblock is persistent

        Update Time : Thu Sep 1 14:39:54 2016
              State : clean
     Active Devices : 3
    Working Devices : 4
     Failed Devices : 0
      Spare Devices : 1

             Layout : left-symmetric
         Chunk Size : 512K

               Name : localhost.localdomain:0 (local to host localhost.localdomain)
               UUID : f4eaf910:514ae7ab:6dd6d28f:b6cfcc10
             Events : 18

        Number   Major   Minor   RaidDevice State
           0       8       49        0      active sync   /dev/sdd1
           1       8       50        1      active sync   /dev/sdd2
           4       8       51        2      active sync   /dev/sdd3

           3       8       65        -      spare   /dev/sde1

3. Create the filesystem

    # mkfs.ext4 /dev/md0
    mke2fs 1.42.9 (28-Dec-2013)
    Filesystem label=
    OS type: Linux
    Block size=1024 (log=0)
    Fragment size=1024 (log=0)
    Stride=512 blocks, Stripe width=1024 blocks
    50800 inodes, 202752 blocks
    10137 blocks (5.00%) reserved for the super user
    First data block=1
    Maximum filesystem blocks=33816576
    25 block groups
    8192 blocks per group, 8192 fragments per group
    2032 inodes per group
    Superblock backups stored on blocks:
            8193, 24577, 40961, 57345, 73729

    Allocating group tables: done
    Writing inode tables: done
    Creating journal (4096 blocks): done
    Writing superblocks and filesystem accounting information: done
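The Stride and Stripe width values in the mke2fs output can be derived from the array geometry: stride is the RAID chunk size divided by the filesystem block size, and stripe width is the stride multiplied by the number of data-bearing disks (for RAID 5, the member count minus one). The sketch below reproduces the numbers; the function names ext_stride and ext_stripe_width are hypothetical.

```shell
#!/bin/sh
# ext_stride CHUNK_KB BLOCK_KB
# Filesystem blocks per RAID chunk.
# Hypothetical helper, for illustration only.
ext_stride() {
    echo $(( $1 / $2 ))
}

# ext_stripe_width CHUNK_KB BLOCK_KB DATA_DISKS
# Filesystem blocks per full stripe (stride times data-bearing disks).
# Hypothetical helper, for illustration only.
ext_stripe_width() {
    echo $(( ($1 / $2) * $3 ))
}

chunk_kb=512     # Chunk Size reported by mdadm -D
block_kb=1       # Block size=1024 bytes in the mke2fs output
data_disks=2     # RAID 5 with 3 active members: 3 - 1

echo "stride=$(ext_stride "$chunk_kb" "$block_kb")"
echo "stripe width=$(ext_stripe_width "$chunk_kb" "$block_kb" "$data_disks")"
```

Here mke2fs picked the values up from the md device on its own; computing them by hand is mainly useful for verifying that detection.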

4. Generate the configuration file, start the RAID 5 array, and mount it

    # mdadm -Ds /dev/md0 > /etc/mdadm.conf
    # mdadm -A /dev/md0
    mdadm: /dev/md0 has been started with 3 drives and 1 spare.
    # mount /dev/md0 /testdir/

5. Test

    # cd /testdir/
    # ls
    lost+found
    # cp /etc/* .                       // copy files into the mounted directory
    # mdadm -D /dev/md0                 // check the state of the array
    /dev/md0:
            Version : 1.2
      Creation Time : Thu Sep 1 14:39:53 2016
         Raid Level : raid5
         Array Size : 202752 (198.03 MiB 207.62 MB)
      Used Dev Size : 101376 (99.02 MiB 103.81 MB)
       Raid Devices : 3
      Total Devices : 4
        Persistence : Superblock is persistent

        Update Time : Thu Sep 1 14:47:58 2016
              State : clean
     Active Devices : 3
    Working Devices : 4
     Failed Devices : 0
      Spare Devices : 1

             Layout : left-symmetric
         Chunk Size : 512K

               Name : localhost.localdomain:0 (local to host localhost.localdomain)
               UUID : f4eaf910:514ae7ab:6dd6d28f:b6cfcc10
             Events : 18

        Number   Major   Minor   RaidDevice State          // the three partitions are running normally
           0       8       49        0      active sync   /dev/sdd1
           1       8       50        1      active sync   /dev/sdd2
           4       8       51        2      active sync   /dev/sdd3

           3       8       65        -      spare   /dev/sde1
    # mdadm /dev/md0 -f /dev/sdd1       // simulate a failure of /dev/sdd1
    mdadm: set /dev/sdd1 faulty in /dev/md0
    # mdadm -D /dev/md0                 // check the state again
    /dev/md0:
            Version : 1.2
      Creation Time : Thu Sep 1 14:39:53 2016
         Raid Level : raid5
         Array Size : 202752 (198.03 MiB 207.62 MB)
      Used Dev Size : 101376 (99.02 MiB 103.81 MB)
       Raid Devices : 3
      Total Devices : 4
        Persistence : Superblock is persistent

        Update Time : Thu Sep 1 14:51:01 2016
              State : clean
     Active Devices : 3
    Working Devices : 3
     Failed Devices : 1
      Spare Devices : 0

             Layout : left-symmetric
         Chunk Size : 512K

               Name : localhost.localdomain:0 (local to host localhost.localdomain)
               UUID : f4eaf910:514ae7ab:6dd6d28f:b6cfcc10
             Events : 37

        Number   Major   Minor   RaidDevice State
           3       8       65        0      active sync   /dev/sde1
           1       8       50        1      active sync   /dev/sdd2
           4       8       51        2      active sync   /dev/sdd3

           0       8       49        -      faulty   /dev/sdd1     // /dev/sdd1 is marked faulty, and the former spare /dev/sde1 has taken its place automatically
    # cat fstab                         // files can still be read normally

    #
    # /etc/fstab
    # Created by anaconda on Mon Jul 25 12:06:44 2016
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk'
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
    #
    UUID=136f7cbb-d8f6-439b-aa73-3958bd33b05f /      xfs   defaults  0 0
    UUID=bf3d4b2f-4629-4fd7-8d70-a21302111564 /boot  xfs   defaults  0 0
    UUID=cbf33183-93bf-4b4f-81c0-ea6ae91cd4f6 /usr   xfs   defaults  0 0
    UUID=5e11b173-f7e2-4994-95b9-55cc4c41f20b swap   swap  defaults  0 0

    # mdadm /dev/md0 -r /dev/sdd1       // simulate pulling the disk out
    # mdadm -D /dev/md0
    ... (output omitted) ...
        Number   Major   Minor   RaidDevice State
           3       8       65        0      active sync   /dev/sde1     // /dev/sdd1 is no longer listed
           1       8       50        1      active sync   /dev/sdd2
           4       8       51        2      active sync   /dev/sdd3
    # mdadm /dev/md0 -a /dev/sdd1       // simulate adding a disk
    mdadm: added /dev/sdd1
    # mdadm -D /dev/md0                 // check the state
    ... (output omitted) ...
        Number   Major   Minor   RaidDevice State
           3       8       65        0      active sync   /dev/sde1
           1       8       50        1      active sync   /dev/sdd2
           4       8       51        2      active sync   /dev/sdd3

           5       8       49        -      spare   /dev/sdd1     // /dev/sdd1 was added successfully
    # mdadm /dev/md0 -f /dev/sdd2       // RAID 5 stores parity, so one disk may fail; the original data can be reconstructed from the parity
    mdadm: set /dev/sdd2 faulty in /dev/md0
    # mdadm -D /dev/md0
    ... (output omitted) ...
        Number   Major   Minor   RaidDevice State
           5       8       49        0      active sync   /dev/sdd1
           2       0        0        2      removed
           4       8       51        2      active sync   /dev/sdd3

           1       8       50        -      faulty   /dev/sdd2
           3       8       65        -      faulty   /dev/sde1
    // In this state the data files can still be read, but performance drops, so the array should be repaired immediately
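Spotting faulty members by eye in the mdadm -D output gets tedious. As a sketch only, the device table can be filtered with awk; the function name faulty_devices is hypothetical, and it assumes the table layout shown in this walkthrough, where the device path is the last field of each row.

```shell
#!/bin/sh
# faulty_devices
# Reads "mdadm -D /dev/mdX" output on stdin and prints the device path of
# every row whose state contains "faulty".
# Hypothetical helper, for illustration only.
faulty_devices() {
    awk '/faulty/ && $NF ~ /^\/dev\// { print $NF }'
}

# Sample rows copied from the last table above.
faulty_devices <<'EOF'
    Number   Major   Minor   RaidDevice State
       5       8       49        0      active sync   /dev/sdd1
       2       0        0        2      removed
       4       8       51        2      active sync   /dev/sdd3

       1       8       50        -      faulty   /dev/sdd2
       3       8       65        -      faulty   /dev/sde1
EOF
```

On a live system the input would come from mdadm itself, for example: mdadm -D /dev/md0 | faulty_devices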

This article comes from the "学無止境" blog; please keep this attribution: http://dashui.blog.51cto.com/11254923/1845194
