Using the Eclipse Plugin for Hadoop 2.6.2

Reposting is welcome; please credit the source and give a link to the original article in a prominent place on the page.

First, the download address for the Eclipse plugin: http://download.csdn.net/download/zdfjf/9421244

1. Installing the plugin

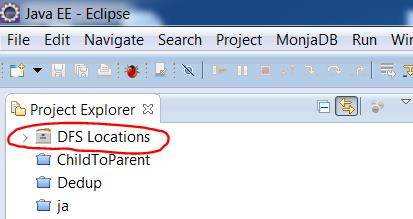

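After downloading the plugin, place it in the plugins folder under the Eclipse installation directory and restart Eclipse. A DFS Locations entry then appears in the Project Explorer window; it corresponds to the files stored in HDFS. No directory structure is shown yet, but don't worry: it appears once the configuration in the next step is done.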
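The copy step can also be scripted. The following is only a minimal sketch, assuming the plugin jar has already been downloaded; the class name `PluginInstaller` and both command-line arguments are hypothetical and not part of the plugin or of Eclipse.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;

// Hypothetical helper: copies a downloaded plugin jar into Eclipse's plugins folder.
final class PluginInstaller {

    /** Copies pluginJar into eclipseHome/plugins and returns the installed path. */
    static Path install(Path pluginJar, Path eclipseHome) throws IOException {
        Path pluginsDir = eclipseHome.resolve("plugins");
        if (!Files.isDirectory(pluginsDir)) {
            throw new IOException("No plugins folder found under " + eclipseHome);
        }
        Path target = pluginsDir.resolve(pluginJar.getFileName());
        // Overwrite an older copy of the same jar if one is already installed.
        return Files.copy(pluginJar, target, StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("Usage: PluginInstaller <plugin.jar> <eclipse-home>");
            System.exit(2);
        }
        Path installed = install(Paths.get(args[0]), Paths.get(args[1]));
        System.out.println("Installed " + installed + "; restart Eclipse to load it.");
    }
}
```

Eclipse still has to be restarted afterwards; the sketch only replaces the manual file copy.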

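I suddenly remembered that there is a post on cnblogs (博客园) that covers this part very well, and I don't think I could write it better. So I won't waste time: follow the post by Xiapi Studio (虾皮工作室) at http://www.cnblogs.com/xia520pi/archive/2012/05/20/2510723.html to finish this part of the configuration. What follows deals with the problems that remain after configuration and keep the program from running successfully. After repeated debugging, here are the code and the corresponding settings that worked for me.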

2. Code

```java
/**
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements.  See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership.  The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License.  You may obtain a copy of the License at
 *
 *     http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing, software
 * distributed under the License is distributed on an "AS IS" BASIS,
 * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
 * See the License for the specific language governing permissions and
 * limitations under the License.
 */
package org.apache.hadoop.examples;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

  // Emits (word, 1) for every whitespace-separated token of each input line.
  public static class TokenizerMapper
       extends Mapper<Object, Text, Text, IntWritable> {

    private final static IntWritable one = new IntWritable(1);
    private Text word = new Text();

    @Override
    public void map(Object key, Text value, Context context
                    ) throws IOException, InterruptedException {
      StringTokenizer itr = new StringTokenizer(value.toString());
      while (itr.hasMoreTokens()) {
        word.set(itr.nextToken());
        context.write(word, one);
      }
    }
  }

  // Sums the counts for each word; also used as the combiner.
  public static class IntSumReducer
       extends Reducer<Text, IntWritable, Text, IntWritable> {
    private IntWritable result = new IntWritable();

    @Override
    public void reduce(Text key, Iterable<IntWritable> values,
                       Context context
                       ) throws IOException, InterruptedException {
      int sum = 0;
      for (IntWritable val : values) {
        sum += val.get();
      }
      result.set(sum);
      context.write(key, result);
    }
  }

  public static void main(String[] args) throws Exception {
    // Line 69 of the original numbered listing: submit as the cluster
    // user "hadoop" instead of the local Windows user.
    System.setProperty("HADOOP_USER_NAME", "hadoop");
    Configuration conf = new Configuration();
    // Lines 71 and 72: select YARN and the ResourceManager explicitly,
    // otherwise the job runs in local mode.
    conf.set("mapreduce.framework.name", "yarn");
    conf.set("yarn.resourcemanager.address", "192.168.0.1:8032");
    // Line 73: the job is submitted from Windows to a Linux cluster.
    conf.set("mapreduce.app-submission.cross-platform", "true");
    String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
    if (otherArgs.length < 2) {
      System.err.println("Usage: wordcount <in> [<in>...] <out>");
      System.exit(2);
    }
    // Job.getInstance replaces the deprecated Job(Configuration, String) constructor.
    Job job = Job.getInstance(conf, "word count1");
    job.setJarByClass(WordCount.class);
    job.setMapperClass(TokenizerMapper.class);
    job.setCombinerClass(IntSumReducer.class);
    job.setReducerClass(IntSumReducer.class);
    job.setOutputKeyClass(Text.class);
    job.setOutputValueClass(IntWritable.class);
    // Every argument except the last is an input path; the last is the output path.
    for (int i = 0; i < otherArgs.length - 1; ++i) {
      FileInputFormat.addInputPath(job, new Path(otherArgs[i]));
    }
    FileOutputFormat.setOutputPath(job,
      new Path(otherArgs[otherArgs.length - 1]));
    System.exit(job.waitForCompletion(true) ? 0 : 1);
  }
}
```

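Line 69 of the listing sets `HADOOP_USER_NAME` to `hadoop`, because my Windows user name is frank while the user name on the cluster is hadoop. Lines 71 and 72 (the `mapreduce.framework.name` and `yarn.resourcemanager.address` settings) are there because the configuration files did not take effect; without these two lines the job runs in local mode and is never submitted to the cluster. Line 73 (the `mapreduce.app-submission.cross-platform` setting) is needed because the submission is cross-platform, from Windows to Linux.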
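To illustrate why setting the `HADOOP_USER_NAME` system property changes the submitting user, here is a simplified, Hadoop-free sketch of the lookup order. It assumes simple (non-Kerberos) authentication, where, to my understanding, the client checks the `HADOOP_USER_NAME` environment variable first, then the JVM system property of the same name, and only then falls back to the OS login. The class `HadoopUserLookup` is hypothetical, and reading `user.name` is a stand-in for Hadoop's actual OS login lookup.

```java
import java.util.Map;
import java.util.Properties;

// Hypothetical illustration of the user-name lookup order; not Hadoop code.
final class HadoopUserLookup {

    static final String KEY = "HADOOP_USER_NAME";

    /** Returns the user a job would be submitted as, given an environment and system properties. */
    static String resolveUser(Map<String, String> env, Properties props) {
        String user = env.get(KEY);               // 1. environment variable
        if (user == null) {
            user = props.getProperty(KEY);        // 2. JVM system property
        }
        if (user == null) {
            user = props.getProperty("user.name"); // 3. stand-in for the OS login
        }
        return user;
    }

    public static void main(String[] args) {
        // Mirrors the System.setProperty call in the WordCount listing.
        System.setProperty(KEY, "hadoop");
        System.out.println(resolveUser(System.getenv(), System.getProperties()));
    }
}
```

Under this order, an environment variable set on the Windows side would take precedence over the property set in `main`, which is worth checking if the job is still submitted as the wrong user.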

Now comes the most important step. Attention, attention, attention: important things get said three times.

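The plugin is supposed to package the project into a jar automatically and upload it to run, but this is broken and the automatic packaging no longer happens. So we have to package the project into a jar ourselves, add that jar to the build path as an external dependency of the project, and then right-click and choose Run As -> Run on Hadoop. The job then runs successfully.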
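In Eclipse the jar is normally produced through the export wizard; as an alternative, the packaging step can be sketched with the JDK's `java.util.jar` classes. This is a minimal sketch, not the plugin's own mechanism: the class name `JarPacker` and both arguments (the folder holding the compiled classes and the jar to write) are assumptions for illustration.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.JarEntry;
import java.util.jar.JarOutputStream;
import java.util.jar.Manifest;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper: packs a folder of compiled classes into a jar.
final class JarPacker {

    private static final String MANIFEST_PATH = "META-INF/MANIFEST.MF";

    static void pack(Path classesDir, Path jarFile) throws IOException {
        // A manifest is only written if it carries a version attribute.
        Manifest manifest = new Manifest();
        manifest.getMainAttributes().put(Attributes.Name.MANIFEST_VERSION, "1.0");

        // Collect the files first, so the jar itself is never picked up while being written.
        List<Path> files;
        try (Stream<Path> walk = Files.walk(classesDir)) {
            files = walk.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
        }

        try (OutputStream out = Files.newOutputStream(jarFile);
             JarOutputStream jar = new JarOutputStream(out, manifest)) {
            for (Path file : files) {
                // Jar entry names always use forward slashes, also on Windows.
                String name = classesDir.relativize(file).toString().replace('\\', '/');
                if (name.equals(MANIFEST_PATH)) {
                    continue; // already written by the JarOutputStream constructor
                }
                jar.putNextEntry(new JarEntry(name));
                Files.copy(file, jar);
                jar.closeEntry();
            }
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("Usage: JarPacker <classes-dir> <output.jar>");
            System.exit(2);
        }
        pack(Paths.get(args[0]), Paths.get(args[1]));
    }
}
```

The resulting jar is what gets added to the project's build path as an external jar before choosing Run on Hadoop.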

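P.S. This is just the approach that worked for me. Problems of all kinds come up during configuration, and their causes vary. So search more, think more, and solve the problem.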
