Hadoop 3.2.0 Cluster (4 Nodes, No HA)

Posted by magic-chenyang

1. Prepare the environment

1.1 Configure host name resolution (/etc/hosts)

# cat /etc/hosts

172.27.133.60 hadoop-01

172.27.133.61 hadoop-02

172.27.133.62 hadoop-03

172.27.133.63 hadoop-04

1.2 Configure passwordless SSH login
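The configuration files in section 2 refer to the nodes by name, so it can be worth confirming on each node that every name has an entry in /etc/hosts before going further. A minimal sketch, assuming a hypothetical helper named missing_hosts (it is not part of Hadoop or OpenSSH):

```shell
# Print every expected host name that has no entry in the given hosts file.
# Usage: missing_hosts HOSTS_FILE NAME...
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Match the name as a whole field after the address column; skip comment lines.
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit found ? 0 : 1 }' "$hosts_file"; then
            printf '%s\n' "$name"
        fi
    done
}

# Example (prints nothing when all four names are present):
#   missing_hosts /etc/hosts hadoop-01 hadoop-02 hadoop-03 hadoop-04
```

Empty output means every name was found. The check only reads the file; it does not test that the addresses are reachable.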

# ssh-keygen

# ssh-copy-id [email protected]/03/04

1.3 Disable SELinux and the firewall

# cat /etc/selinux/config

SELINUX=disabled

# systemctl stop firewalld

# systemctl disable firewalld

1.4 Set up the Java and Hadoop environment

# mkdir -p /usr/local/java /data/hadoop

# tar -xf /data/software/jdk-8u171-linux-x64.tar.gz -C /usr/local/java

# tar -xf /data/software/hadoop-3.2.0.tar.gz -C /data/hadoop

# cat /etc/profile

export HADOOP_HOME=/data/hadoop/hadoop-3.2.0

export JAVA_HOME=/usr/local/java/jdk1.8.0_171

export PATH=$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

# source /etc/profile

# java -version

java version "1.8.0_171"

Java(TM) SE Runtime Environment (build 1.8.0_171-b11)

Java HotSpot(TM) 64-Bit Server VM (build 25.171-b11, mixed mode)

2. Configure Hadoop

2.1 Set JAVA_HOME in the Hadoop environment scripts

# cd /data/hadoop/hadoop-3.2.0/etc/hadoop

# vim hadoop-env.sh mapred-env.sh yarn-env.sh

Add JAVA_HOME to each of the three scripts:

export JAVA_HOME=/usr/local/java/jdk1.8.0_171
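Editing three files by hand is easy to get partly wrong, so the same change can be scripted. The following is a minimal sketch, assuming a hypothetical helper named add_java_home; it appends the export line only where no uncommented one exists, so it can be rerun safely.

```shell
# Append an export line for JAVA_HOME to each Hadoop env script that lacks one.
# Usage: add_java_home CONF_DIR JAVA_HOME_PATH
add_java_home() {
    conf_dir=$1
    java_home=$2
    for script in hadoop-env.sh mapred-env.sh yarn-env.sh; do
        target="$conf_dir/$script"
        # Commented-out lines ("# export JAVA_HOME=") do not count as set.
        if ! grep -q '^export JAVA_HOME=' "$target" 2>/dev/null; then
            printf 'export JAVA_HOME=%s\n' "$java_home" >> "$target"
        fi
    done
}

# Example with the paths used in this walkthrough:
#   add_java_home /data/hadoop/hadoop-3.2.0/etc/hadoop /usr/local/java/jdk1.8.0_171
```

Note that the helper creates a script if it is missing, which should not happen on an unpacked Hadoop 3.2.0 tree.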

# hadoop version

Hadoop 3.2.0

Source code repository https://github.com/apache/hadoop.git -r e97acb3bd8f3befd27418996fa5d4b50bf2e17bf

Compiled by sunilg on 2019-01-08T06:08Z

Compiled with protoc 2.5.0

From source with checksum d3f0795ed0d9dc378e2c785d3668f39

This command was run using /data/hadoop/hadoop-3.2.0/share/hadoop/common/hadoop-common-3.2.0.jar

2.2 Modify the Hadoop configuration files

In the hadoop-3.2.0/etc/hadoop directory, edit core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml, and workers. Adjust the parameter values to match your own environment.
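After editing, it can help to read a property back from a file to confirm the value that is actually set. The sketch below assumes a hypothetical helper named get_prop and relies on each <name> and <value> element sitting on a single line, as in the files in this section. It is a plain text match, not an XML parser.

```shell
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
# Usage: get_prop SITE_FILE PROPERTY_NAME
get_prop() {
    awk -v want="$2" '
        /<name>/ {
            # Remember the most recent property name seen.
            line = $0
            sub(/.*<name>/, "", line); sub(/<\/name>.*/, "", line)
            current = line
        }
        /<value>/ && current == want {
            # First value after the wanted name: print it and stop.
            line = $0
            sub(/.*<value>/, "", line); sub(/<\/value>.*/, "", line)
            print line
            exit
        }' "$1"
}

# Example:
#   get_prop /data/hadoop/hadoop-3.2.0/etc/hadoop/core-site.xml fs.defaultFS
```

On a node where Hadoop is installed, `hdfs getconf -confKey fs.defaultFS` reports the effective value after defaults and all site files are merged, which is the more reliable check once the environment from section 1.4 is in place.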

2.2.1 Modify core-site.xml

(file system)

<configuration>

<property>

<!-- Address of the HDFS NameNode -->

<name>fs.defaultFS</name>

<value>hdfs://hadoop-01:9000</value>

</property>

<property>

<!-- Directory for temporary files; create the tmp directory under /data/hadoop first -->

<name>hadoop.tmp.dir</name>

<value>/data/hadoop/tmp</value>

</property>

</configuration>

# mkdir /data/hadoop/tmp

2.2.2 Modify hdfs-site.xml

(replica count)

<configuration>

<property>

<!-- Web address of the master node (NameNode) -->

<name>dfs.namenode.http-address</name>

<value>hadoop-01:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop-02:50070</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/data/hadoop/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/data/hadoop/dfs/data</value>

</property>

<property>

<!-- Replication factor set to 2 (the Hadoop default is 3) -->

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

<description>When set to false, files can be created on HDFS without permission checks. This is convenient, but you need to guard against accidental deletion.</description>

</property>

</configuration>

2.2.3 Modify mapred-site.xml

(resource scheduling framework)

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop-01:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop-01:19888</value>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>

</property>

</configuration>

2.2.4 Modify yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop-01</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>172.27.133.60:8088</value>

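<description>To reach the UI from an external network, replace this IP with the externally reachable one. If this property is not set, the address defaults to the ResourceManager host name on port 8088.</description>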

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>2048</value>

<description>Maximum memory that a single container request can be allocated, in MB. The default is 8192 MB.</description>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

<description>Disables the virtual memory check. This is useful when installing on virtual machines and helps avoid problems in later steps.</description>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>

</property>

</configuration>

2.2.5 Modify workers

Add the worker node addresses, one per line, as IPs or host names:

hadoop-02

hadoop-03

hadoop-04

2.3 Copy the configured directory to the worker nodes
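The copy in this step repeats one command per worker, so the commands can be generated from the workers file instead of typed by hand. A minimal sketch, assuming a hypothetical helper named print_copy_commands; the remote user root is also an assumption, chosen because the start scripts in section 2.4 run the daemons as root. The helper only prints commands and executes nothing.

```shell
# Print one scp command per host listed in a workers file (nothing is executed).
# Usage: print_copy_commands WORKERS_FILE SRC_DIR REMOTE_USER
print_copy_commands() {
    workers_file=$1
    src_dir=$2
    remote_user=$3
    # The "|| [ -n ... ]" part keeps a final line that has no trailing newline.
    while IFS= read -r host || [ -n "$host" ]; do
        # Ignore blank lines and comment lines.
        case $host in
            ''|'#'*) continue ;;
        esac
        printf 'scp -r %s %s@%s:%s\n' "$src_dir" "$remote_user" "$host" "$src_dir"
    done < "$workers_file"
}

# Example:
#   print_copy_commands /data/hadoop/hadoop-3.2.0/etc/hadoop/workers /data/hadoop root
```

Review the printed commands first; piping the output to `sh` then runs them in order.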

# scp -r /data/hadoop [email protected]:/data/hadoop

# scp -r /data/hadoop [email protected]:/data/hadoop

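# scp -r /data/hadoop [email protected]:/data/hadoop

2.4 Configure users in the HDFS and YARN start/stop scripts

2.4.1 Add the HDFS users

Add the HDFS user variables on the blank second line of both scripts: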

# cat sbin/start-dfs.sh

# cat sbin/stop-dfs.sh

#!/usr/bin/env bash

HDFS_DATANODE_USER=root

HDFS_DATANODE_SECURE_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

···

2.4.2 Add the YARN users

Add the YARN user variables on the blank second line of both scripts:

# cat sbin/start-yarn.sh

# cat sbin/stop-yarn.sh

#!/usr/bin/env bash

YARN_RESOURCEMANAGER_USER=root

HDFS_DATANODE_SECURE_USER=yarn

YARN_NODEMANAGER_USER=root

···

Without these variables, startup fails with the following errors:

ERROR: Attempting to launch hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined. Aborting launch.

Starting datanodes

ERROR: Attempting to launch hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined. Aborting launch.

Starting secondary namenodes [localhost.localdomain]

ERROR: Attempting to launch hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined. Aborting launch.

3. Initialize and start

3.1 Format HDFS

# bin/hdfs namenode -format

3.2 Start the cluster

3.2.1 Start everything in one step

# sbin/start-all.sh

3.2.2 Start step by step

# sbin/start-dfs.sh

# sbin/start-yarn.sh

4. Verify

4.1 List the processes

Master node hadoop-01

# jps
32069 SecondaryNameNode
2405 NameNode
2965 ResourceManager
3645 Jps

Worker node hadoop-02

# jps
25616 NodeManager
25377 DataNode
25508 SecondaryNameNode
25945 Jps

Worker node hadoop-03

# jps
14946 NodeManager
15207 Jps
14809 DataNode

Worker node hadoop-04

# jps
14433 Jps
14034 DataNode
14171 NodeManager
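Reading four jps listings by eye is error-prone, so the comparison against the expected daemons can be scripted. A minimal sketch, assuming a hypothetical helper named missing_daemons that takes the jps output as text; the expected names per role are taken from the listings above.

```shell
# Print each expected daemon name that does not appear in the given jps output.
# Usage: missing_daemons "JPS_OUTPUT" DAEMON...
missing_daemons() {
    jps_output=$1
    shift
    for daemon in "$@"; do
        # jps prints "<pid> <class name>"; compare the second field exactly,
        # so NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit found ? 0 : 1 }'; then
            printf '%s\n' "$daemon"
        fi
    done
}

# Examples:
#   on hadoop-01:      missing_daemons "$(jps)" NameNode ResourceManager
#   on a worker node:  missing_daemons "$(jps)" DataNode NodeManager
```

Empty output means every expected daemon is running on that node.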

4.2 Open the web pages

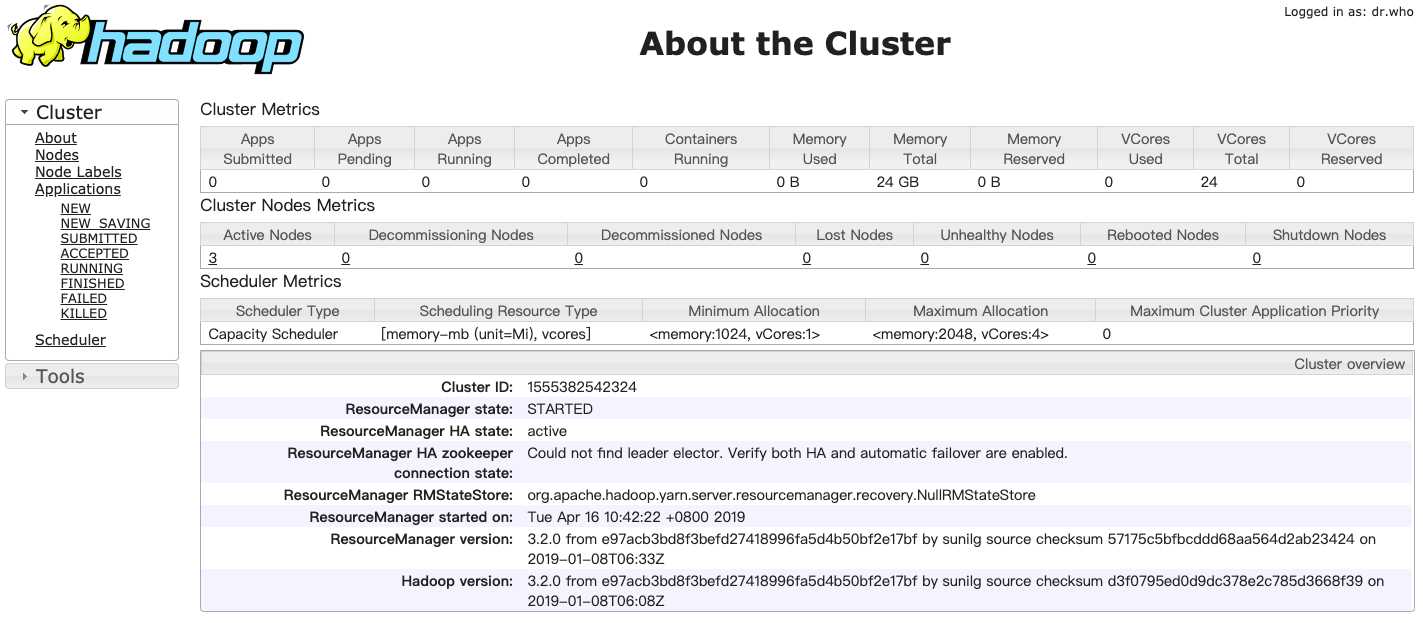

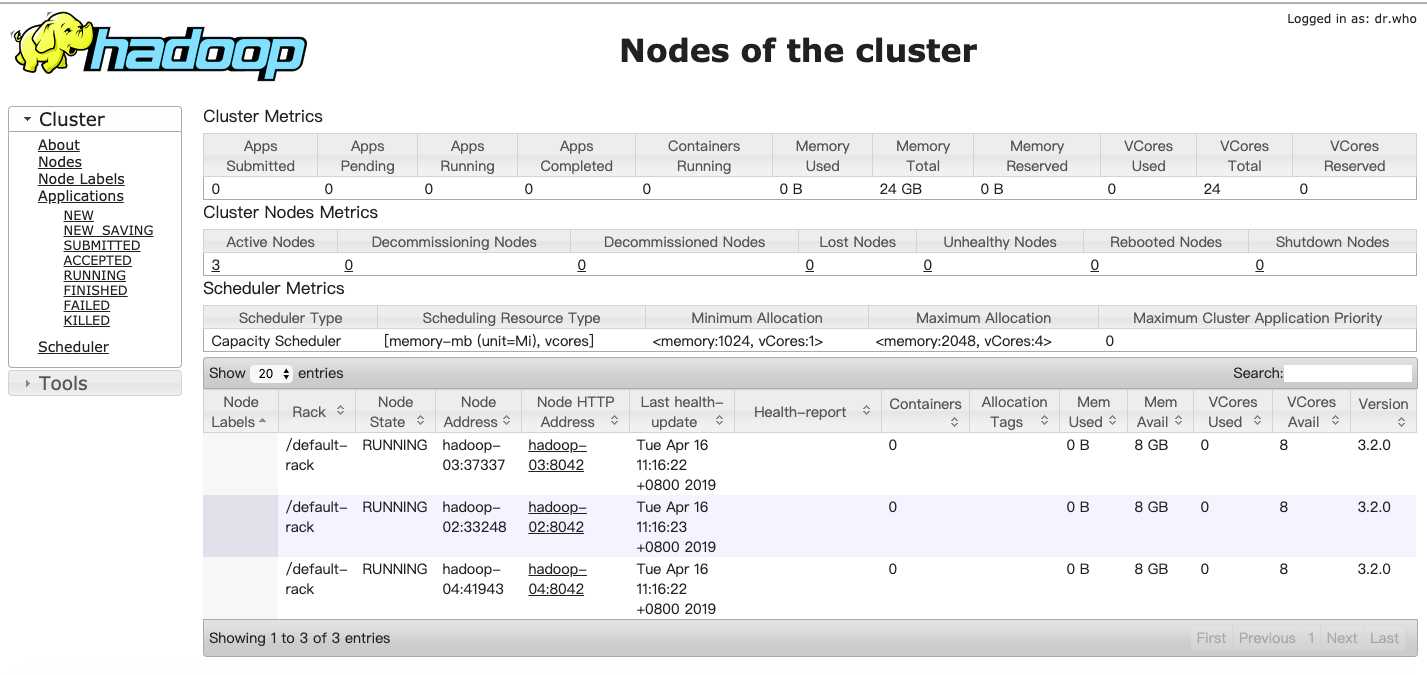

Open http://hadoop-01:8088 to view the ResourceManager page.

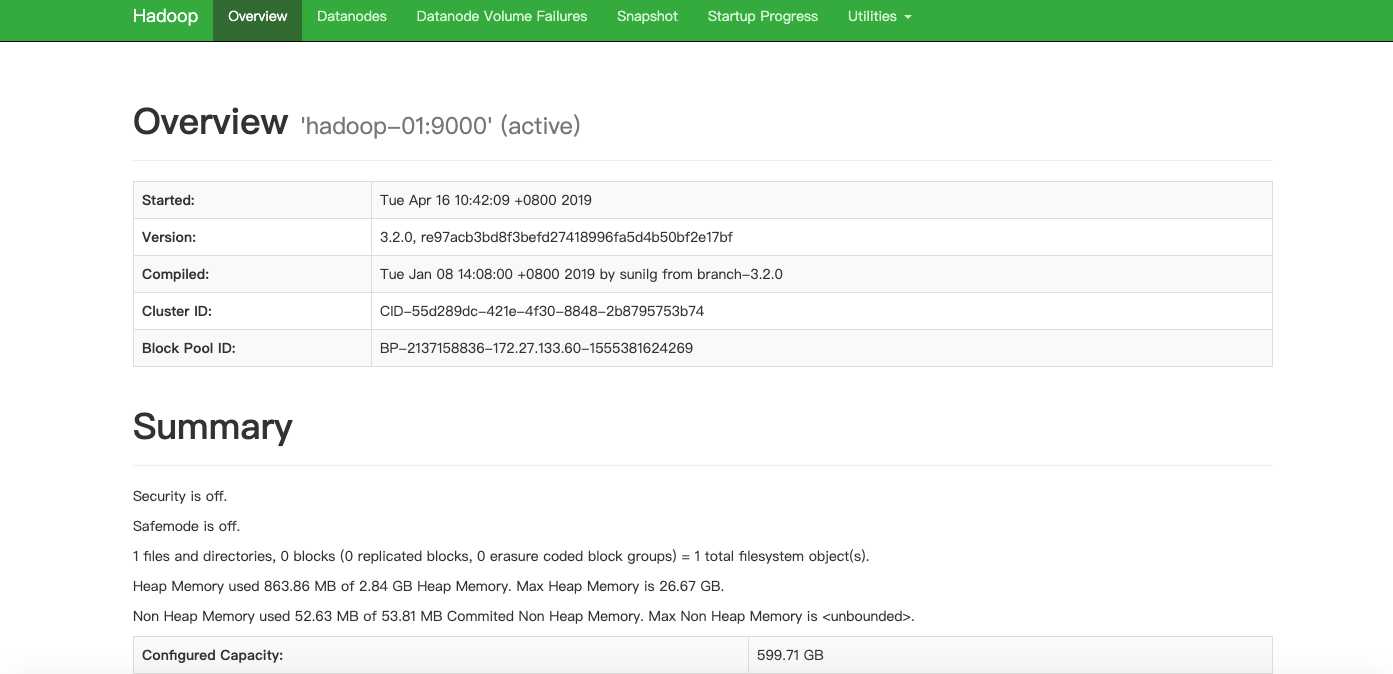

Open http://hadoop-01:50070 to view the Hadoop NameNode page.
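The same two pages can be probed from a terminal when no browser is at hand. A minimal sketch, assuming curl is installed; ui_urls and check_ui are hypothetical helper names, and the ports are the ones configured earlier in this walkthrough.

```shell
# Print the two web UI addresses used in this walkthrough for a given master host.
ui_urls() {
    printf 'http://%s:8088\n' "$1"    # ResourceManager
    printf 'http://%s:50070\n' "$1"   # NameNode (port set in hdfs-site.xml)
}

# Print "<status> <url>" for each URL; curl reports 000 when it cannot connect.
check_ui() {
    for url in "$@"; do
        # -s hides progress, -o discards the body, -w prints only the status code.
        status=$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$url")
        printf '%s %s\n' "$status" "$url"
    done
}

# Example:
#   check_ui $(ui_urls hadoop-01)
```

A 2xx or 3xx status suggests the page is being served; 000 usually points to a daemon that is not running or a port blocked by a firewall.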
