Hard and Soft Links & Using RAID and LVM Disk Array Technology

Posted qw-kk


0. Hard and Soft Links

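Hard and soft links are often compared to shortcuts on Windows, but they behave differently.

Hard link: an additional directory entry that points to the same inode as the original file, and therefore to the same data blocks. The hard link and the original file are the same file under different names. If the original name is deleted, the data remains accessible through the hard link with identical content. A hard link cannot cross partitions (file systems), and it cannot be created for a directory.

Soft link: also called a symbolic link. It stores only the path name of the target, so it can link across partitions and file systems. Once the original file is deleted, however, the link stops working.

Both kinds of link are created with a single command, ln.

The ln command creates link files. Its format is: ln [options] source link-name. By default it creates a hard link.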

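| Option | Purpose |
|---|---|
| -s | Create a symbolic (soft) link |
| -f | Force creation, removing an existing destination file |
| -i | Prompt before overwriting |
| -v | Print each link as it is created |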

[root@localhost ~]# ls

anaconda-ks.cfg Documents initial-setup-ks.cfg Pictures Templates

Desktop Downloads Music Public Videos

[root@localhost ~]# ln anaconda-ks.cfg ana

[root@localhost ~]# ls

ana Documents Music Templates

anaconda-ks.cfg Downloads Pictures Videos

Desktop initial-setup-ks.cfg Public

[root@localhost ~]# ln -s initial-setup-ks.cfg init

[root@localhost ~]# ls

ana Documents initial-setup-ks.cfg Public

anaconda-ks.cfg Downloads Music Templates

Desktop init Pictures Videos

[root@localhost ~]# ls -l

total 12

-rw-------. 2 root root 1044 May 17 19:35 ana

-rw-------. 2 root root 1044 May 17 19:35 anaconda-ks.cfg

drwxr-xr-x. 2 root root 6 May 17 11:40 Desktop

drwxr-xr-x. 2 root root 6 May 17 11:40 Documents

drwxr-xr-x. 2 root root 6 May 17 11:40 Downloads

lrwxrwxrwx. 1 root root 20 May 22 07:31 init -> initial-setup-ks.cfg

-rw-r--r--. 1 root root 1095 May 17 11:36 initial-setup-ks.cfg

drwxr-xr-x. 2 root root 6 May 17 11:40 Music

drwxr-xr-x. 2 root root 6 May 17 11:40 Pictures

drwxr-xr-x. 2 root root 6 May 17 11:40 Public

drwxr-xr-x. 2 root root 6 May 17 11:40 Templates

drwxr-xr-x. 2 root root 6 May 17 11:40 Videos

[root@localhost ~]#

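Creating a hard link increases the link count stored in the file's inode. The number shown before the file owner in the ls -l output is that count (2 for both ana and anaconda-ks.cfg above). The file's data is freed only when the count drops to 0.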
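The link-count behaviour described above can be checked in a throwaway directory. This is a minimal sketch, assuming GNU coreutils (stat -c) on Linux; the file names original.txt, hard.txt and soft.txt are illustrative and do not appear in the walkthrough.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Work in a temporary directory so nothing in the real tree is touched.
workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir"

echo "hello" > original.txt
ln original.txt hard.txt        # hard link: a second name for the same inode
ln -s original.txt soft.txt     # symbolic link: stores only the path

links_before=$(stat -c %h original.txt)   # link count with both names present
same_inode=no
[ "$(stat -c %i original.txt)" = "$(stat -c %i hard.txt)" ] && same_inode=yes

rm original.txt                 # drop one name; the data survives via hard.txt
links_after=$(stat -c %h hard.txt)
hard_content=$(cat hard.txt)

soft_state=ok
[ -e soft.txt ] || soft_state=dangling    # -e follows the link; the target is gone

echo "links_before=$links_before same_inode=$same_inode"
echo "links_after=$links_after hard_content=$hard_content soft_state=$soft_state"
```

The count goes from 2 back to 1 when the original name is removed, the hard link still returns the content, and the symbolic link is left dangling.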

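1. RAID (Redundant Array of Independent Disks)

RAID improves disk read/write throughput and, at the levels that provide redundancy, protects against data loss when a disk fails.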

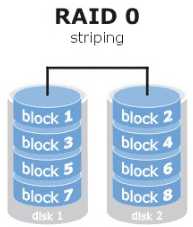

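1.1 RAID 0

RAID 0 stripes data across the member disks: chunks are written to disk1 and disk2 in turn, so reads and writes proceed in parallel and throughput improves. It has no data backup or error recovery capability, so the failure of any single disk destroys the data of the whole array.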

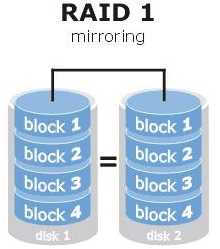

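1.2 RAID 1

RAID 1 binds two or more disks together and writes the same data to all of them at once, so each disk holds a mirror copy. When one disk fails, the array normally keeps serving data from the remaining copy, and the failed disk can be replaced by hot swapping. This protects the data, but disk utilization drops (with two disks only 50% of the raw capacity is usable), and writing every block to several disks adds some load on the system.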

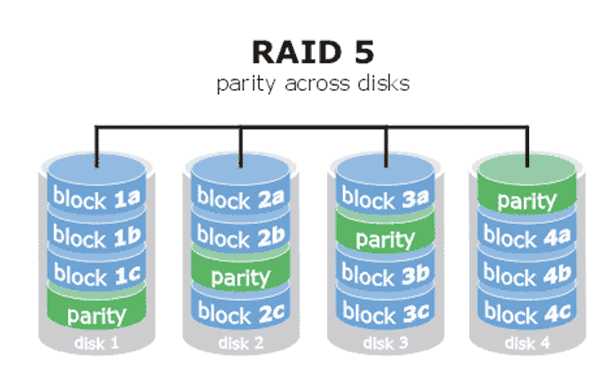

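1.3 RAID 5

RAID 5 stores parity information for the data on the member disks. The parity is not kept on one dedicated disk; it is distributed across all members, so each stripe's parity lives on a different disk from the data it protects. If any single disk fails, its contents can be recomputed from the data and parity on the remaining disks. RAID 5 is a compromise between read/write speed, data safety, and storage cost.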

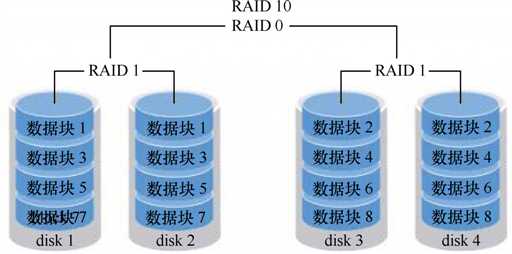

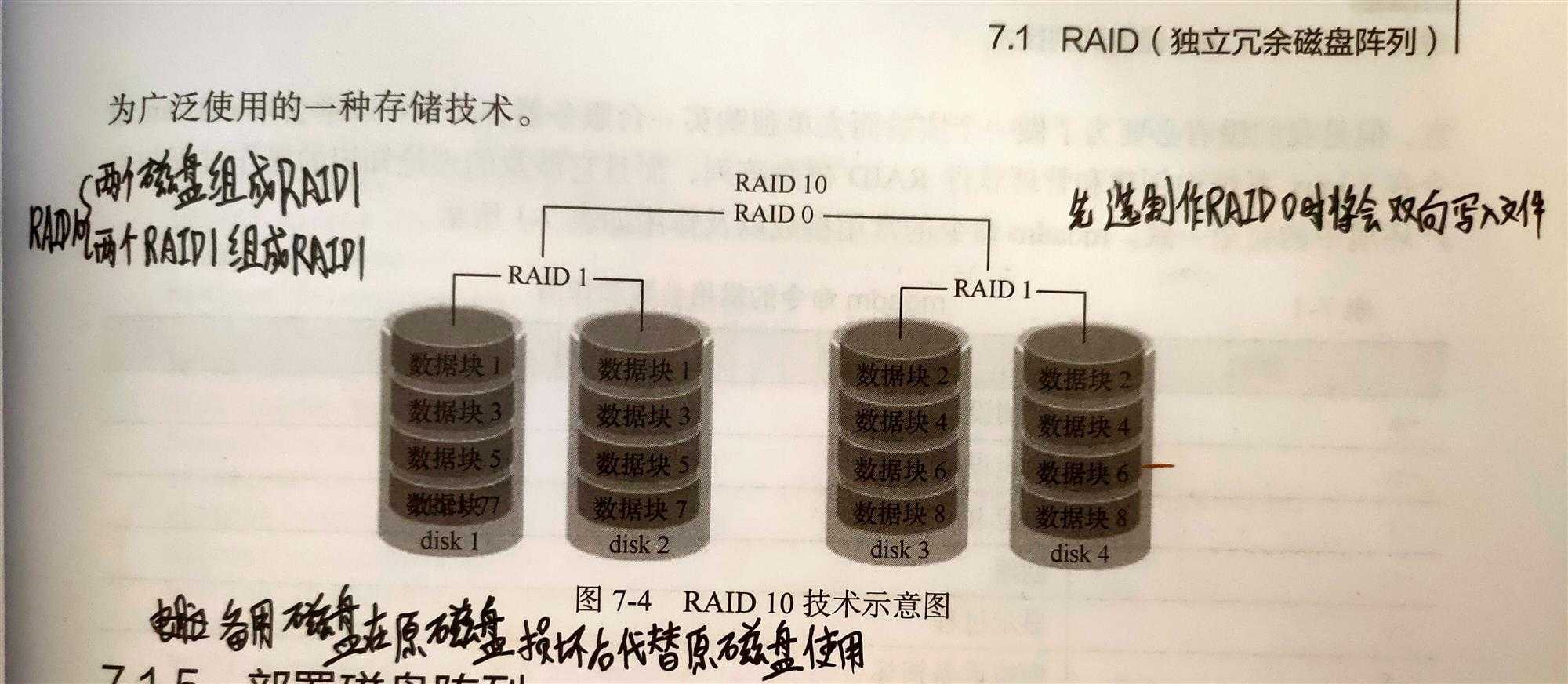

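1.4 RAID 10

The name reads "one zero", not "ten": RAID 10 is a combination of RAID 1 and RAID 0. In theory up to 50% of the disks can fail without data loss, as long as the failed disks are not all the members of the same mirror group. RAID 10 inherits the high read/write speed of RAID 0 and the data safety of RAID 1, and if cost is not a concern it generally outperforms RAID 5, which is why it is widely used today.

Construction order: first build RAID 1 arrays from pairs of disks to protect the data, then apply RAID 0 across the RAID 1 arrays to raise read/write speed.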
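The capacity trade-offs of the four levels can be put into numbers. The helper below is a sketch only: usable_gib is a hypothetical function name, all disks are assumed to be the same size, and RAID 10 is assumed to keep two copies of each block.

```shell
#!/usr/bin/env bash

# usable_gib LEVEL DISKS SIZE_GIB -> prints the usable capacity in GiB
usable_gib() {
    local level=$1 disks=$2 size=$3
    case "$level" in
        0)  echo $(( disks * size )) ;;         # striping only, no redundancy
        1)  echo "$size" ;;                     # every disk holds a full copy
        5)  echo $(( (disks - 1) * size )) ;;   # one disk's worth of parity
        10) echo $(( disks / 2 * size )) ;;     # mirrored pairs, then striped
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

usable_gib 10 4 5   # four 5 GiB disks in RAID 10 -> 10
usable_gib 5 3 5    # three 5 GiB disks in RAID 5 -> 10
```

Four 5 GiB disks in RAID 10 and three 5 GiB disks in RAID 5 both come out at 10 GiB usable.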

1.5 Deploying a Disk Array

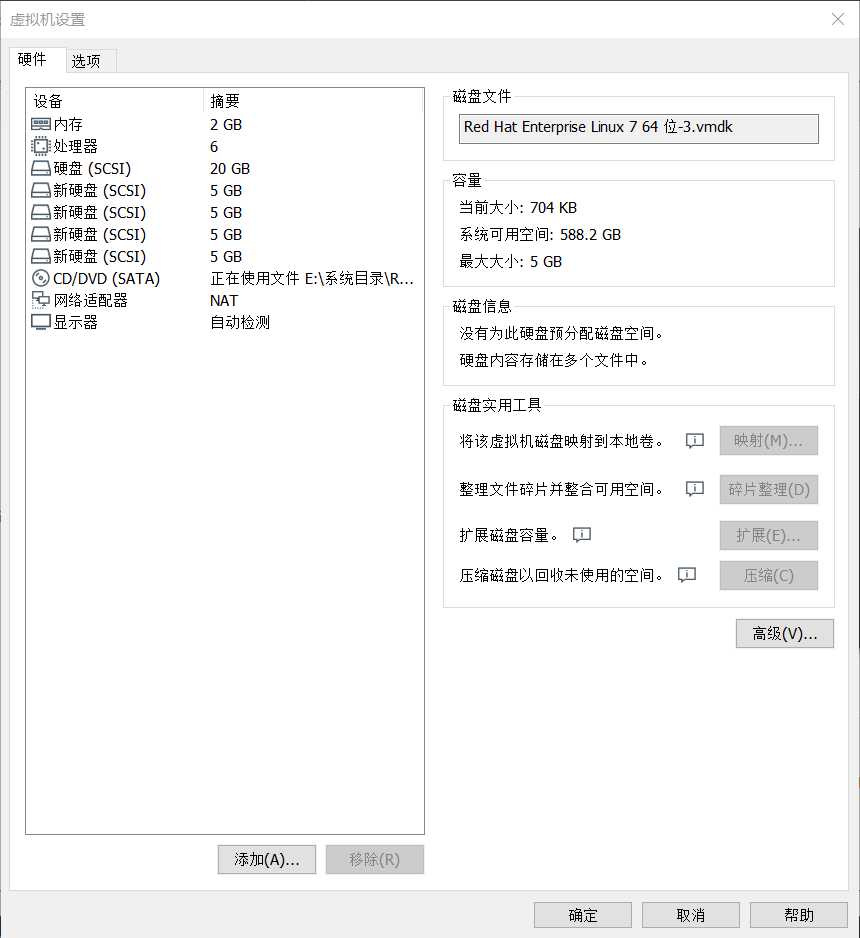

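First, add four disks to the virtual machine; they will be used to build a RAID 10 array.

The mdadm command manages software RAID arrays on Linux. Its format is: mdadm [mode] <RAID device name> [options] [member device names].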

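| Option | Purpose |
|---|---|
| -a | With -C, "-a yes" creates the device file automatically; in manage mode, "-a <device>" adds a device to the array |
| -n | Number of member devices |
| -l | RAID level |
| -x | Number of spare devices |
| -C | Create an array |
| -v | Show progress |
| -f | Mark a device as faulty (simulate a failure) |
| -r | Remove a device |
| -Q | Show summary information |
| -D | Show detailed information |
| -S | Stop the array |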

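Now deploy the array. In the mdadm command, -C creates a RAID array and -v prints the progress; /dev/md0 is the device name of the array being created; -a yes creates the device file automatically; -n 4 uses four disks as members; -l 10 selects RAID 10; the names of the four member disks come last.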
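For readers who find the short flags hard to remember, the same invocation can be spelled with mdadm's long options. The sketch below only builds and prints the command; the array name md_create_cmd is illustrative, and the device names are the ones used in this walkthrough.

```shell
#!/usr/bin/env bash

# Build the command as an array so it can be inspected before it is run.
md_create_cmd=(
    mdadm --create --verbose /dev/md0
    --auto=yes
    --raid-devices=4
    --level=10
    /dev/sdb /dev/sdc /dev/sdd /dev/sde
)

# Dry run: print only. To execute for real (as root, on disposable disks),
# replace the echo with: "${md_create_cmd[@]}"
echo "${md_create_cmd[*]}"
```

Keeping the command in an array makes it easy to review or log before it touches any disk.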

[root@localhost Desktop]# ls -ld /dev/sd*

brw-rw----. 1 root disk 8, 0 May 22 2020 /dev/sda

brw-rw----. 1 root disk 8, 1 May 22 2020 /dev/sda1

brw-rw----. 1 root disk 8, 2 May 22 2020 /dev/sda2

brw-rw----. 1 root disk 8, 16 May 22 2020 /dev/sdb

brw-rw----. 1 root disk 8, 32 May 22 2020 /dev/sdc

brw-rw----. 1 root disk 8, 48 May 22 2020 /dev/sdd

brw-rw----. 1 root disk 8, 64 May 22 2020 /dev/sde

# Create the disk array

[root@localhost Desktop]# mdadm -Cv /dev/md0 -a yes -n 4 -l 10 /dev/sdb /dev/sdc /dev/sdd /dev/sde

mdadm: layout defaults to n2

mdadm: layout defaults to n2

mdadm: chunk size defaults to 512K

mdadm: size set to 5238272K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

[root@localhost Desktop]#

# Format the disk array

[root@localhost Desktop]# mkfs.ext4 /dev/md0 # format the array as ext4

mke2fs 1.42.9 (28-Dec-2013)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=128 blocks, Stripe width=256 blocks

655360 inodes, 2619136 blocks

130956 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=2151677952

80 block groups

32768 blocks per group, 32768 fragments per group

8192 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632

Allocating group tables: done

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

[root@localhost Desktop]#

# Create the mount point and mount the array

[root@localhost Desktop]# mkdir /RAIDmd0

[root@localhost Desktop]# mount /dev/md0 /RAIDmd0

[root@localhost Desktop]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 18G 2.9G 15G 17% /

devtmpfs 905M 0 905M 0% /dev

tmpfs 914M 140K 914M 1% /dev/shm

tmpfs 914M 8.8M 905M 1% /run

tmpfs 914M 0 914M 0% /sys/fs/cgroup

/dev/sda1 497M 119M 379M 24% /boot

/dev/sr0 3.5G 3.5G 0 100% /run/media/root/RHEL-7.0 Server.x86_64

/dev/md0 9.8G 37M 9.2G 1% /RAIDmd0 # only half of the raw capacity is usable

[root@localhost Desktop]#

# Show detailed information about the disk array

[root@localhost Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Fri May 22 10:12:33 2020

Raid Level : raid10

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 4

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 10:15:27 2020

State : clean

Active Devices : 4

Working Devices : 4

Failed Devices : 0

Spare Devices : 0

Layout : near=2

Chunk Size : 512K

Name : localhost.localdomain:0 (local to host localhost.localdomain)

UUID : 613fa3ed:5abcf43f:dec36947:418a3848

Events : 17

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

1 8 32 1 active sync /dev/sdc

2 8 48 2 active sync /dev/sdd

3 8 64 3 active sync /dev/sde

[root@localhost Desktop]#

# Add the mount entry to /etc/fstab

[root@localhost Desktop]# vim /etc/fstab

#

# /etc/fstab

# Created by anaconda on Wed May 13 12:21:55 2020

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/rhel-root / xfs defaults 1 1

UUID=5b557056-83b0-4f51-ba26-82a3d5ee0d20 /boot xfs defaults 1 2

/dev/mapper/rhel-swap swap swap defaults 0 0

/dev/md0 /RAIDmd0 ext4 defaults 0 0

1.6 Simulating a Disk Failure and Repairing the Array

# Simulate a disk failure

[root@localhost Desktop]# mdadm /dev/md0 -f /dev/sdc

mdadm: set /dev/sdc faulty in /dev/md0

[root@localhost Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Fri May 22 10:12:33 2020

Raid Level : raid10

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 4

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 15:36:03 2020

State : clean, degraded

Active Devices : 3

Working Devices : 3

Failed Devices : 1

Spare Devices : 0

Layout : near=2

Chunk Size : 512K

Name : localhost.localdomain:0 (local to host localhost.localdomain)

UUID : 613fa3ed:5abcf43f:dec36947:418a3848

Events : 19

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

1 0 0 1 removed

2 8 48 2 active sync /dev/sdd

3 8 64 3 active sync /dev/sde

1 8 32 - faulty /dev/sdc

[root@localhost Desktop]#

# Because the failure was only simulated in software, it is enough to reboot and then add the disk back into the RAID array

[root@localhost Desktop]# umount /dev/md0

[root@localhost Desktop]# mdadm /dev/md0 -a /dev/sdc

mdadm: added /dev/sdc

[root@localhost Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Fri May 22 10:12:33 2020

Raid Level : raid10

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 4

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 15:46:46 2020

State : clean, degraded, recovering

Active Devices : 3

Working Devices : 4

Failed Devices : 0

Spare Devices : 1

Layout : near=2

Chunk Size : 512K

Rebuild Status : 80% complete

Name : localhost.localdomain:0 (local to host localhost.localdomain)

UUID : 613fa3ed:5abcf43f:dec36947:418a3848

Events : 41

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

4 8 32 1 spare rebuilding /dev/sdc

2 8 48 2 active sync /dev/sdd

3 8 64 3 active sync /dev/sde

[root@localhost Desktop]# mount -a # remount the file systems listed in /etc/fstab

[root@localhost Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Fri May 22 10:12:33 2020

Raid Level : raid10

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 4

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 15:47:14 2020

State : clean

Active Devices : 4

Working Devices : 4

Failed Devices : 0

Spare Devices : 0

Layout : near=2

Chunk Size : 512K

Name : localhost.localdomain:0 (local to host localhost.localdomain)

UUID : 613fa3ed:5abcf43f:dec36947:418a3848

Events : 48

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

4 8 32 1 active sync /dev/sdc

2 8 48 2 active sync /dev/sdd

3 8 64 3 active sync /dev/sde

[root@localhost Desktop]#
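Reading the State line by eye works for a one-off check; in a script it can be extracted from the mdadm -D output. A minimal sketch, assuming the "State :" and "Rebuild Status :" layout shown in the transcripts above; md_state and md_is_healthy are hypothetical helper names.

```shell
#!/usr/bin/env bash

# md_state: read `mdadm -D` output on stdin and print the value of the State line.
md_state() {
    awk -F' : ' '/^[[:space:]]*State :/ {
        sub(/[[:space:]]+$/, "", $2)   # drop trailing blanks, if any
        print $2
        exit
    }'
}

# md_is_healthy: succeed only when the state is exactly "clean" or "active".
md_is_healthy() {
    local state
    state=$(md_state)
    [ "$state" = "clean" ] || [ "$state" = "active" ]
}

# Sample taken from the degraded array above.
sample='          State : clean, degraded, recovering
 Rebuild Status : 80% complete'
printf '%s\n' "$sample" | md_state
```

On a live system the helpers would be fed from the real command, for example: mdadm -D /dev/md0 | md_is_healthy && echo "array healthy".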

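1.7 Disk Array with a Spare Disk

A RAID 10 array tolerates the failure of up to 50% of its disks, but there is an extreme case: if all disks in the same RAID 1 group fail, data is lost. In other words, if one disk of a RAID 1 pair fails and, while we are still on the way to replace it, the other disk in the same pair fails too, the data is gone for good. A spare disk reduces this risk: it sits idle until a member fails and then takes its place automatically.

The following uses RAID 5 to try out a spare disk. A RAID 5 array needs at least 3 disks, plus 1 more as the spare, so 4 disks are simulated in the virtual machine. In the mdadm command, -n 3 sets the number of member disks, -l 5 selects RAID 5, and -x 1 sets aside one spare disk.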

[root@localhost Desktop]# mdadm -Cv /dev/md1 -n 3 -l 5 -x 1 /dev/sdb /dev/sdc /dev/sdd /dev/sde

mdadm: layout defaults to left-symmetric

mdadm: layout defaults to left-symmetric

mdadm: chunk size defaults to 512K

mdadm: size set to 5238272K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md1 started.

[root@localhost Desktop]# mdadm -D /dev/md1

/dev/md1:

Version : 1.2

Creation Time : Fri May 22 16:09:06 2020

Raid Level : raid5

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 16:09:33 2020

State : clean

Active Devices : 3

Working Devices : 4

Failed Devices : 0

Spare Devices : 1

Layout : left-symmetric

Chunk Size : 512K

Name : localhost.localdomain:1 (local to host localhost.localdomain)

UUID : d9cf8ad6:03012179:442be227:8c6c0c50

Events : 18

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

1 8 32 1 active sync /dev/sdc

4 8 48 2 active sync /dev/sdd

3 8 64 - spare /dev/sde

[root@localhost Desktop]# mkfs.ext4 /dev/md1

mke2fs 1.42.9 (28-Dec-2013)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=128 blocks, Stripe width=256 blocks

655360 inodes, 2619136 blocks

130956 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=2151677952

80 block groups

32768 blocks per group, 32768 fragments per group

8192 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632

Allocating group tables: done

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

[root@localhost Desktop]# echo "/dev/md1 /RAIDmd1 ext4 defaults 0 0" >> /etc/fstab

[root@localhost Desktop]# cd /

[root@localhost /]# mkdir RAIDmd1

[root@localhost /]# mount /dev/md1 /RAIDmd1/

[root@localhost /]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 18G 2.9G 15G 17% /

devtmpfs 905M 4.0K 905M 1% /dev

tmpfs 914M 140K 914M 1% /dev/shm

tmpfs 914M 8.8M 905M 1% /run

tmpfs 914M 0 914M 0% /sys/fs/cgroup

/dev/sda1 497M 119M 379M 24% /boot

/dev/sr0 3.5G 3.5G 0 100% /run/media/root/RHEL-7.0 Server.x86_64

/dev/md1 9.8G 37M 9.2G 1% /RAIDmd1

[root@localhost /]#

# The array is mounted. Now simulate a disk failure

[root@localhost /]# mdadm /dev/md1 -f /dev/sdb

mdadm: set /dev/sdb faulty in /dev/md1

[root@localhost /]# mdadm -D /dev/md1

/dev/md1:

Version : 1.2

Creation Time : Fri May 22 16:09:06 2020

Raid Level : raid5

Array Size : 10476544 (9.99 GiB 10.73 GB)

Used Dev Size : 5238272 (5.00 GiB 5.36 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Fri May 22 16:22:45 2020

State : clean, degraded, recovering

Active Devices : 2

Working Devices : 3

Failed Devices : 1

Spare Devices : 1

Layout : left-symmetric

Chunk Size : 512K

Rebuild Status : 97% complete

Name : localhost.localdomain:1 (local to host localhost.localdomain)

UUID : d9cf8ad6:03012179:442be227:8c6c0c50

Events : 35

Number Major Minor RaidDevice State

3 8 64 0 spare rebuilding /dev/sde

1 8 32 1 active sync /dev/sdc

4 8 48 2 active sync /dev/sdd

0 8 16 - faulty /dev/sdb

# As shown above, when sdb failed, the spare disk sde automatically took over its slot and started rebuilding.

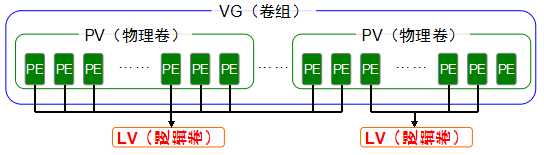

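2. LVM (Logical Volume Manager)

LVM lets users adjust disk resources dynamically. The Logical Volume Manager is a Linux mechanism for managing disk partitions. It was created to address the difficulty of resizing a partition after it has been created. Forcibly growing or shrinking a traditional partition is possible in theory, but it risks data loss. LVM adds a logical layer between disk partitions and file systems: it provides an abstract volume group into which several disks can be merged. Users can then resize storage dynamically without caring about the underlying layout of the physical disks.

Physical volumes (PV) are the lowest layer of LVM; a physical volume can be a whole disk, a disk partition, or a RAID array. A volume group (VG) is built on top of physical volumes; it can contain several of them, and more can be added after the group is created. Logical volumes (LV) are carved out of the free space in a volume group, and they can be extended or shrunk after creation.

2.1 Deploying Logical Volumes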

| Function | Physical volumes | Volume groups | Logical volumes |
|---|---|---|---|
| Scan | pvscan | vgscan | lvscan |
| Create | pvcreate | vgcreate | lvcreate |
| Display | pvdisplay | vgdisplay | lvdisplay |
| Remove | pvremove | vgremove | lvremove |
| Extend | | vgextend | lvextend |
| Reduce | | vgreduce | lvreduce |

# Step 1: initialize the two disks as LVM physical volumes

[root@localhost /]# pvcreate /dev/sdb /dev/sdc

Physical volume "/dev/sdb" successfully created

Physical volume "/dev/sdc" successfully created

# Step 2: add both disks to the volume group TEST, then check the volume group status

[root@localhost /]# vgcreate TEST /dev/sdb /dev/sdc

Volume group "TEST" successfully created

[root@localhost /]# vgdisplay

--- Volume group ---

VG Name TEST

System ID

Format lvm2

Metadata Areas 2

Metadata Sequence No 1

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 0

Open LV 0

Max PV 0

Cur PV 2

Act PV 2

VG Size 19.99 GiB

PE Size 4.00 MiB

Total PE 5118

Alloc PE / Size 0 / 0

Free PE / Size 5118 / 19.99 GiB

VG UUID RssVyX-Crh4-Pili-TNpG-YraS-ep1a-taHspM

--- Volume group ---

VG Name rhel

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 3

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 2

Max PV 0

Cur PV 1

Act PV 1

VG Size 19.51 GiB

PE Size 4.00 MiB

Total PE 4994

Alloc PE / Size 4994 / 19.51 GiB

Free PE / Size 0 / 0

VG UUID KOe8jK-JQtp-TTMc-IwVF-JdAY-Keyx-yS7MxN

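There are two units of measure when carving out a logical volume. The first is capacity, given with -L; for example, -L 150M requests a 150 MB logical volume. The second is a number of extents, given with -l; each extent is 4 MB by default, so -l 37 creates a logical volume of 37 × 4 MB = 148 MB.

# Step 3: carve out a logical volume of about 300 MB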
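The relationship between -L and -l is plain arithmetic on the extent size. The sketch below assumes the 4 MiB PE Size reported by vgdisplay above; the function names are illustrative. It also rounds a requested size up to a whole number of extents, which is how LVM treats sizes that are not a multiple of the extent size.

```shell
#!/usr/bin/env bash

pe_mib=4   # physical extent size in MiB, as reported by vgdisplay above

# extents_for_mib SIZE_MIB -> number of extents needed, rounded up
extents_for_mib() {
    echo $(( ($1 + pe_mib - 1) / pe_mib ))
}

# mib_for_extents COUNT -> resulting size in MiB
mib_for_extents() {
    echo $(( $1 * pe_mib ))
}

mib_for_extents 37     # -l 37   -> 148 MiB
extents_for_mib 300    # -L 300M -> 75 extents
extents_for_mib 150    # -L 150M -> 38 extents, i.e. 152 MiB after rounding up
```

The 75 extents computed for 300 MiB match the "Current LE 75" value that lvdisplay reports for the vo volume.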

[root@localhost /]# lvcreate -n vo -L 300M TEST

Logical volume "vo" created

[root@localhost /]# lvdisplay

--- Logical volume ---

LV Path /dev/TEST/vo

LV Name vo

VG Name TEST

LV UUID BgAH04-J1D2-hqBf-gmZV-qiZt-3LfV-gD70kO

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2020-05-22 16:59:10 +0800

LV Status available

# open 0

LV Size 300.00 MiB # the 300 MB that was carved out

Current LE 75

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:2

--- Logical volume ---

LV Path /dev/rhel/swap

LV Name swap

VG Name rhel

LV UUID hKPRws-tpxx-dTvW-LErF-QiU5-SNhd-pO3K1y

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 2

LV Size 2.00 GiB

Current LE 512

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1

--- Logical volume ---

LV Path /dev/rhel/root

LV Name root

VG Name rhel

LV UUID YPpU5s-3xA8-7Jwh-1g0l-emdO-L4xh-fA4zPq

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 1

LV Size 17.51 GiB

Current LE 4482

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:0

# Step 4: format the new logical volume and mount it

[root@localhost /]# mkfs.ext4 /dev/TEST/vo

mke2fs 1.42.9 (28-Dec-2013)

Filesystem label=

OS type: Linux

Block size=1024 (log=0)

Fragment size=1024 (log=0)

Stride=0 blocks, Stripe width=0 blocks

76912 inodes, 307200 blocks

15360 blocks (5.00%) reserved for the super user

First data block=1

Maximum filesystem blocks=33947648

38 block groups

8192 blocks per group, 8192 fragments per group

2024 inodes per group

Superblock backups stored on blocks:

8193, 24577, 40961, 57345, 73729, 204801, 221185

Allocating group tables: done

Writing inode tables: done

Creating journal (8192 blocks): done

Writing superblocks and filesystem accounting information: done

[root@localhost /]# mkdir TESTvo

[root@localhost /]# mount /dev/TEST/vo /TESTvo/

# Step 5: check the mount status and make the mount permanent

[root@localhost /]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 18G 2.9G 15G 17% /

devtmpfs 905M 0 905M 0% /dev

tmpfs 914M 140K 914M 1% /dev/shm

tmpfs 914M 8.8M 905M 1% /run

tmpfs 914M 0 914M 0% /sys/fs/cgroup

/dev/sda1 497M 119M 379M 24% /boot

/dev/mapper/TEST-vo 283M 2.1M 262M 1% /TESTvo

[root@localhost /]# echo "/dev/TEST/vo /TESTvo ext4 defaults 0 0" >> /etc/fstab

[root@localhost /]#

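2.2 Extending a Logical Volume

In the previous experiment the volume group was built from two disks. Users of the storage do not see the underlying layout and need not care how many disks are underneath; as long as the volume group has free space, the logical volume can keep growing. Before extending, remember to unmount the device from its mount point.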

[root@localhost /]# umount /TESTvo

# Step 1: extend the logical volume vo from the previous experiment to 500 MB.

[root@localhost /]# lvextend -L 500M /dev/TEST/vo # extend the volume

Extending logical volume vo to 500.00 MiB

Logical volume vo successfully resized

[root@localhost /]# e2fsck -f /dev/TEST/vo # check the file system before resizing it to fill the volume

e2fsck 1.42.9 (28-Dec-2013)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

/dev/TEST/vo: 11/76912 files (0.0% non-contiguous), 19977/307200 blocks

[root@localhost /]# resize2fs /dev/TEST/vo

resize2fs 1.42.9 (28-Dec-2013)

Resizing the filesystem on /dev/TEST/vo to 512000 (1k) blocks.

The filesystem on /dev/TEST/vo is now 512000 blocks long.

[root@localhost /]# mount /dev/TEST/vo /TESTvo/

[root@localhost /]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 18G 2.9G 15G 17% /

devtmpfs 905M 4.0K 905M 1% /dev

tmpfs 914M 140K 914M 1% /dev/shm

tmpfs 914M 8.8M 905M 1% /run

tmpfs 914M 0 914M 0% /sys/fs/cgroup

/dev/sda1 497M 119M 379M 24% /boot

/dev/mapper/TEST-vo 477M 2.3M 446M 1% /TESTvo

[root@localhost /]#

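2.3 Shrinking a Logical Volume

Shrinking a logical volume carries a higher risk of data loss than extending one, so in production always back up the data first. The file system must also be checked for integrity before an LVM logical volume is shrunk, which again protects the data. The file system is shrunk before the logical volume itself; reducing the volume first would cut off the end of the file system.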

[root@localhost /]# umount /TESTvo

# Step 1: check the integrity of the file system

[root@localhost /]# e2fsck -f /dev/TEST/vo

e2fsck 1.42.9 (28-Dec-2013)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

/dev/TEST/vo: 11/127512 files (0.0% non-contiguous), 26612/512000 blocks

# Step 2: shrink the file system to 120 MB, then reduce the logical volume to the same size

[root@localhost /]# resize2fs /dev/TEST/vo 120M

resize2fs 1.42.9 (28-Dec-2013)

The containing partition (or device) is only 122880 (1k) blocks.

You requested a new size of 204800 blocks.

[root@localhost /]# lvreduce -L 120M /dev/TEST/vo

WARNING: Reducing active logical volume to 120.00 MiB

THIS MAY DESTROY YOUR DATA (filesystem etc.)

Do you really want to reduce vo? [y/n]: y

Reducing logical volume vo to 120.00 MiB

Logical volume vo successfully resized

# Step 3: mount the volume again

[root@localhost /]# mount /dev/TEST/vo /TESTvo/

[root@localhost /]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 18G 3.0G 15G 17% /

devtmpfs 985M 0 985M 0% /dev

tmpfs 994M 80K 994M 1% /dev/shm

tmpfs 994M 8.8M 986M 1% /run

tmpfs 994M 0 994M 0% /sys/fs/cgroup

/dev/sr0 3.5G 3.5G 0 100% /media/cdrom

/dev/sda1 497M 119M 379M 24% /boot

/dev/mapper/TEST-vo 113M 1.6M 103M 2% /TESTvo

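2.4 Logical Volume Snapshots

LVM also provides snapshot volumes, a feature similar to the restore points of virtual machine software. For example, take a snapshot of a logical volume; if the data is later found to have been changed by mistake, the snapshot can be merged back to restore it. Two points to note about snapshot volumes:

The snapshot must be large enough to hold every change made to the logical volume while the snapshot exists; making it the same size as the logical volume, as done here, is the simple safe choice.

A snapshot volume can be used only once: after a restore it is deleted automatically.

# First, look at the volume group information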

[root@localhost /]# vgdisplay

--- Volume group ---

VG Name TEST

System ID

Format lvm2

Metadata Areas 2

Metadata Sequence No 4

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 1

Open LV 1

Max PV 0

Cur PV 2

Act PV 2

VG Size 19.99 GiB

PE Size 4.00 MiB

Total PE 5118

Alloc PE / Size 30 / 120.00 MiB

Free PE / Size 5088 / 19.88 GiB

VG UUID RssVyX-Crh4-Pili-TNpG-YraS-ep1a-taHspM

--- Volume group ---

VG Name rhel

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 3

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 2

Max PV 0

Cur PV 1

Act PV 1

VG Size 19.51 GiB

PE Size 4.00 MiB

Total PE 4994

Alloc PE / Size 4994 / 19.51 GiB

Free PE / Size 0 / 0

VG UUID KOe8jK-JQtp-TTMc-IwVF-JdAY-Keyx-yS7MxN

[root@localhost /]#

# The volume group output shows that 120 MB is in use and 19.88 GB is still free.

# Use redirection to write a file into the directory where the logical volume is mounted

[root@localhost /]# echo "Welcome to Linuxprobe.com" > /TESTvo/readme.txt

[root@localhost /]# ls -l /TESTvo/

total 14

drwx------. 2 root root 12288 May 22 17:35 lost+found

-rw-r--r--. 1 root root 26 May 22 17:52 readme.txt

[root@localhost /]#

Now for the snapshot itself.

# Step 1: create a snapshot volume with -s, using -L to set its size. The logical volume to snapshot is named at the end of the command.

[root@localhost /]# lvcreate -L 120M -s -n DEMO /dev/TEST/vo

Logical volume "DEMO" created

[root@localhost /]# lvdisplay

--- Logical volume ---

LV Path /dev/TEST/vo

LV Name vo

VG Name TEST

LV UUID BgAH04-J1D2-hqBf-gmZV-qiZt-3LfV-gD70kO

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2020-05-22 16:59:10 +0800

LV snapshot status source of

DEMO [active]

LV Status available

# open 1

LV Size 120.00 MiB

Current LE 30

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:2

--- Logical volume ---

LV Path /dev/TEST/DEMO

LV Name DEMO

VG Name TEST

LV UUID AEkQh7-GmBk-3qh3-wMWW-rpf2-EMAC-Cd3gqT

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2020-05-22 17:57:42 +0800

LV snapshot status active destination for vo

LV Status available

# open 0

LV Size 120.00 MiB

Current LE 30

COW-table size 120.00 MiB

COW-table LE 30

Allocated to snapshot 0.01%

Snapshot chunk size 4.00 KiB

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:3

--- Logical volume ---

LV Path /dev/rhel/swap

LV Name swap

VG Name rhel

LV UUID hKPRws-tpxx-dTvW-LErF-QiU5-SNhd-pO3K1y

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 2

LV Size 2.00 GiB

Current LE 512

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1

--- Logical volume ---

LV Path /dev/rhel/root

LV Name root

VG Name rhel

LV UUID YPpU5s-3xA8-7Jwh-1g0l-emdO-L4xh-fA4zPq

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 1

LV Size 17.51 GiB

Current LE 4482

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:0

[root@localhost /]#

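# Step 2: create a 100 MB file in the directory where the logical volume is mounted, then check the snapshot volume's status ("Allocated to snapshot" rises from 0.01% to 81.21%).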

[root@localhost /]# dd if=/dev/zero of=/TESTvo/files count=1 bs=100M

1+0 records in

1+0 records out

104857600 bytes (105 MB) copied, 0.356068 s, 294 MB/s

[root@localhost /]# lvdisplay

--- Logical volume ---

LV Path /dev/TEST/vo

LV Name vo

VG Name TEST

LV UUID BgAH04-J1D2-hqBf-gmZV-qiZt-3LfV-gD70kO

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2020-05-22 16:59:10 +0800

LV snapshot status source of

DEMO [active]

LV Status available

# open 1

LV Size 120.00 MiB

Current LE 30

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:2

--- Logical volume ---

LV Path /dev/TEST/DEMO

LV Name DEMO

VG Name TEST

LV UUID AEkQh7-GmBk-3qh3-wMWW-rpf2-EMAC-Cd3gqT

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2020-05-22 17:57:42 +0800

LV snapshot status active destination for vo

LV Status available

# open 0

LV Size 120.00 MiB

Current LE 30

COW-table size 120.00 MiB

COW-table LE 30

Allocated to snapshot 81.21%

Snapshot chunk size 4.00 KiB

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:3

--- Logical volume ---

LV Path /dev/rhel/swap

LV Name swap

VG Name rhel

LV UUID hKPRws-tpxx-dTvW-LErF-QiU5-SNhd-pO3K1y

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 2

LV Size 2.00 GiB

Current LE 512

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1

--- Logical volume ---

LV Path /dev/rhel/root

LV Name root

VG Name rhel

LV UUID YPpU5s-3xA8-7Jwh-1g0l-emdO-L4xh-fA4zPq

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 1

LV Size 17.51 GiB

Current LE 4482

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:0

[root@localhost /]#
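Because a snapshot becomes unusable once its copy-on-write space fills up, it can be worth watching the "Allocated to snapshot" figure. A minimal sketch, assuming the lvdisplay layout shown above; snapshot_usage and snapshot_nearly_full are hypothetical helper names, and the threshold is an arbitrary illustrative value.

```shell
#!/usr/bin/env bash

# snapshot_usage: read `lvdisplay` output on stdin and print the
# "Allocated to snapshot" percentage without the % sign.
snapshot_usage() {
    awk '/Allocated to snapshot/ { sub(/%/, "", $NF); print $NF; exit }'
}

# snapshot_nearly_full THRESHOLD: succeed when usage is at or above THRESHOLD.
snapshot_nearly_full() {
    local threshold=$1 used
    used=$(snapshot_usage)
    [ -n "$used" ] && awk -v u="$used" -v t="$threshold" 'BEGIN { exit !(u >= t) }'
}

# Sample line taken from the lvdisplay output above.
printf '  Allocated to snapshot  81.21%%\n' | snapshot_usage
```

On a live system the input would come from the real command, for example: lvdisplay /dev/TEST/DEMO | snapshot_nearly_full 80 && echo "snapshot almost full".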

# Step 3: to verify the snapshot volume, restore the logical volume from it. Unmount the logical volume from its directory first.

[root@localhost /]# umount /TESTvo/

[root@localhost /]# lvconvert --merge /dev/TEST/DEMO

Merging of volume DEMO started.

vo: Merged: 28.0%

vo: Merged: 100.0%

Merge of snapshot into logical volume vo has finished.

Logical volume "DEMO" successfully removed

[root@localhost /]#

# Step 4: the snapshot volume has been deleted automatically, and the 100 MB file created after the snapshot was taken is gone as well.

[root@localhost /]# mount /dev/TEST/vo /TESTvo/

[root@localhost /]# ls /TESTvo/

lost+found readme.txt

[root@localhost /]#

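2.5 Removing Logical Volumes

When LVM needs to be redeployed in production, or is no longer needed, it has to be removed. Back up important data first, then remove the logical volume, the volume group, and the physical volumes, in that order; the order must not be reversed.

# Step 1: unmount the logical volume from its directory and delete its permanent entry from the configuration file.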

[root@localhost /]# umount /TESTvo/

[root@localhost /]# vim /etc/fstab

#

# /etc/fstab

# Created by anaconda on Wed May 13 12:21:55 2020

#

# Accessible filesystems, by reference, are maintained under '/dev/disk'

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info

#

/dev/mapper/rhel-root / xfs defaults 1 1

UUID=5b557056-83b0-4f51-ba26-82a3d5ee0d20 /boot xfs defaults 1 2

/dev/mapper/rhel-swap swap swap defaults 0 0

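# Step 2: remove the logical volume, the volume group, and the physical volumes in turn; lvdisplay then no longer lists anything from the TEST volume group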

[root@localhost /]# lvremove /dev/TEST/vo

Do you really want to remove active logical volume vo? [y/n]: y

Logical volume "vo" successfully removed

[root@localhost /]# vgremove TEST

Volume group "TEST" successfully removed

[root@localhost /]# pvremove /dev/sdb /dev/sdc

Labels on physical volume "/dev/sdb" successfully wiped

Labels on physical volume "/dev/sdc" successfully wiped

[root@localhost /]# lvdisplay

--- Logical volume ---

LV Path /dev/rhel/swap

LV Name swap

VG Name rhel

LV UUID hKPRws-tpxx-dTvW-LErF-QiU5-SNhd-pO3K1y

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 2

LV Size 2.00 GiB

Current LE 512

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1

--- Logical volume ---

LV Path /dev/rhel/root

LV Name root

VG Name rhel

LV UUID YPpU5s-3xA8-7Jwh-1g0l-emdO-L4xh-fA4zPq

LV Write Access read/write

LV Creation host, time localhost, 2020-05-13 20:21:54 +0800

LV Status available

# open 1

LV Size 17.51 GiB

Current LE 4482

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:0

[root@localhost /]#
