Kubernetes集群上搭建KubeSphere 教程

Posted 爱上口袋的天空

tags:

篇首语:本文由小常识网(cha138.com)小编为大家整理,主要介绍了Kubernetes集群上搭建KubeSphere 教程相关的知识,希望对你有一定的参考价值。

一、简介

KubeSphere®️ 是基于 Kubernetes 构建的分布式、多租户、多集群、企业级开源容器平台,具有强大且完善的网络与存储能力,并通过极简的人机交互提供完善的多集群管理、CI / CD 、微服务治理、应用管理等功能,帮助企业在云、虚拟化及物理机等异构基础设施上快速构建、部署及运维容器架构,实现应用的敏捷开发与全生命周期管理。

二、安装的步骤以及机器要求

- 选择4核8G(master)、8核16G(node1)、8核16G(node2) 三台机器,按量付费进行实验,CentOS7.9

- 安装Docker

- 安装Kubernetes

- 安装KubeSphere前置环境

- 安装KubeSphere

三、安装Docker

1、执行如下的命令(3台一起)

sudo yum remove docker* sudo yum install -y yum-utils #配置docker的yum地址 sudo yum-config-manager \\ --add-repo \\ http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo #安装指定版本 sudo yum install -y docker-ce-20.10.7 docker-ce-cli-20.10.7 containerd.io-1.4.6 # 启动&开机启动docker systemctl enable docker --now # docker加速配置 sudo mkdir -p /etc/docker sudo tee /etc/docker/daemon.json <<-'EOF' "registry-mirrors": ["https://8nkiv83o.mirror.aliyuncs.com"] "exec-opts": ["native.cgroupdriver=systemd"], "log-driver": "json-file", "log-opts": "max-size": "100m" , "storage-driver": "overlay2" EOF sudo systemctl daemon-reload sudo systemctl restart docker2、检查docker

由上可以知道docker已经安装好了

四、安装Kubernetes

1、基本环境(3台机器都要操作)

每个机器使用内网ip互通

每个机器配置自己的hostname,不能用localhost

#设置每个机器自己的hostname hostnamectl set-hostname xxx # 将 SELinux 设置为 permissive 模式(相当于将其禁用) sudo setenforce 0 sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config #关闭swap swapoff -a sed -ri 's/.*swap.*/#&/' /etc/fstab #允许 iptables 检查桥接流量 cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf br_netfilter EOF cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf net.bridge.bridge-nf-call-ip6tables = 1 net.bridge.bridge-nf-call-iptables = 1 EOF sudo sysctl --system这里我们配置3台机器的主机名分别为k8s-master,node1以及node2

后面几个关闭swap安装命令执行即可

2、安装kubelet、kubeadm、kubectl(3台机器都要操作)

#配置k8s的yum源地址 cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo [kubernetes] name=Kubernetes baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64 enabled=1 gpgcheck=0 repo_gpgcheck=0 gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg EOF #安装 kubelet,kubeadm,kubectl sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 #启动kubelet sudo systemctl enable --now kubelet #所有机器配置master域名,下面的ip地址是主节点的ip地址 echo "ip地址 k8s-master" >> /etc/hosts3、初始化master节点(这个只用在master节点执行即可)

kubeadm init \\ --apiserver-advertise-address=主机ip地址 \\ --control-plane-endpoint=k8s-master \\ --image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \\ --kubernetes-version v1.20.9 \\ --service-cidr=10.96.0.0/16 \\ --pod-network-cidr=172.31.0.0/16--service-cidr=10.96.0.0/16:这个表示的是svc的网段地址范围

--pod-network-cidr=172.31.0.0/16:这个定义的是后面创建pod的网段地址范围

记录master执行完成后的日志,记录关键信息,下面的是:

Your Kubernetes control-plane has initialized successfully! To start using your cluster, you need to run the following as a regular user: mkdir -p $HOME/.kube sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config sudo chown $(id -u):$(id -g) $HOME/.kube/config Alternatively, if you are the root user, you can run: export KUBECONFIG=/etc/kubernetes/admin.conf You should now deploy a pod network to the cluster. Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at: https://kubernetes.io/docs/concepts/cluster-administration/addons/ You can now join any number of control-plane nodes by copying certificate authorities and service account keys on each node and then running the following as root: kubeadm join k8s-master:6443 --token negcwo.3xwa4834xtoeko66 \\ --discovery-token-ca-cert-hash sha256:b07662c84b743677cbc9e85d52274ca36fe6489c217fbe48b6d1001b458a2f9f \\ --control-plane Then you can join any number of worker nodes by running the following on each as root: kubeadm join k8s-master:6443 --token negcwo.3xwa4834xtoeko66 \\ --discovery-token-ca-cert-hash sha256:b07662c84b743677cbc9e85d52274ca36fe6489c217fbe48b6d1001b458a2f9f根据上面的关键信息,执行给与我们的命令:

mkdir -p $HOME/.kube sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config sudo chown $(id -u):$(id -g) $HOME/.kube/config执行完上面的命令我们就能够使用kubectl命令了:

4、安装Calico网络插件

curl https://docs.projectcalico.org/manifests/calico.yaml -O kubectl apply -f calico.yaml注意:上面的calio.yaml我们需要修改一下CALICO_IPV4POOL_CIDR属性,修改为我们上面定义的--pod-network-cidr=172.31.0.0/16,

等待这几个pod安装完成

5、加入worker节点

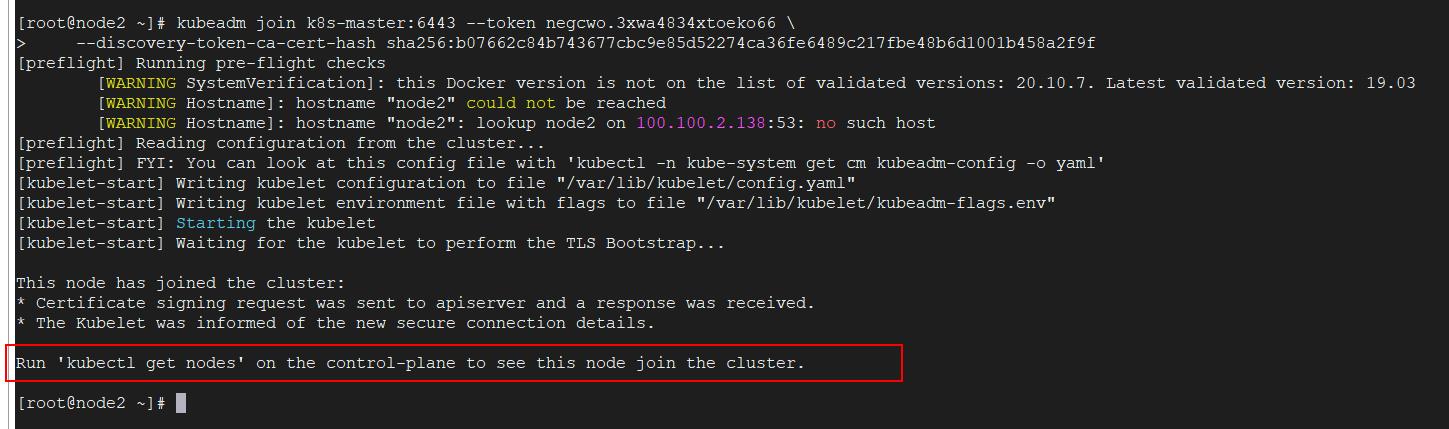

由上面的关键信息给的命令到两个从节点执行下面的命令:

kubeadm join k8s-master:6443 --token negcwo.3xwa4834xtoeko66 \\ --discovery-token-ca-cert-hash sha256:b07662c84b743677cbc9e85d52274ca36fe6489c217fbe48b6d1001b458a2f9f

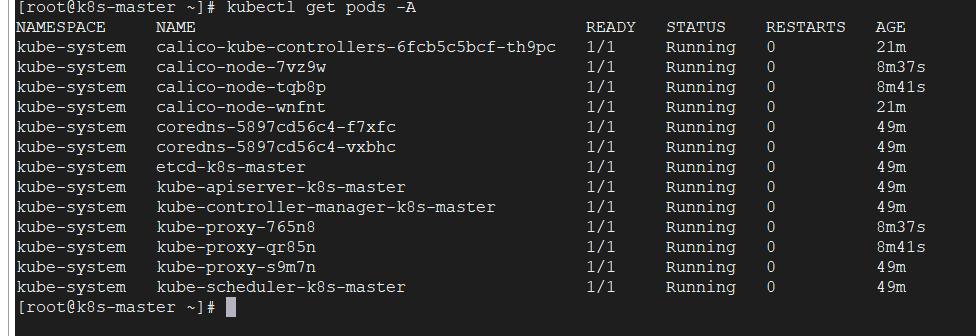

再次查看新增的pods是否成功:

可以看到全部成功(时间有点长)

到主节点查看集群是否安装成功:

五、安装KubeSphere前置环境

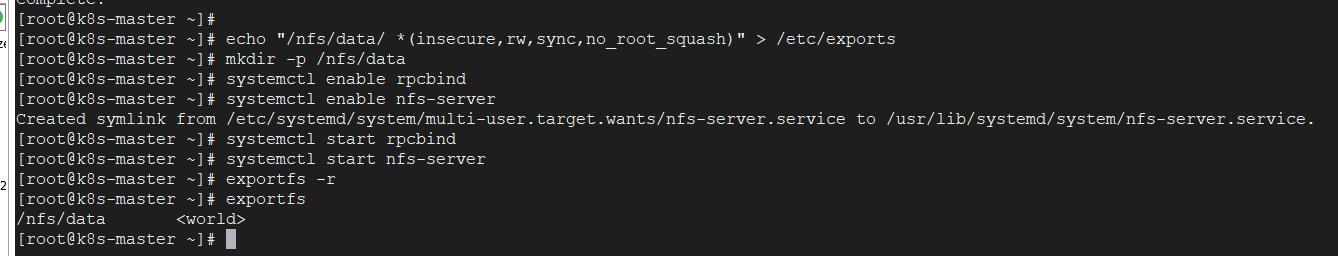

1、安装nfs-server

# 在每个机器。 yum install -y nfs-utils # 在master 执行以下命令 echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exports # 执行以下命令,启动 nfs 服务;创建共享目录,在master 执行 mkdir -p /nfs/data # 在master执行 systemctl enable rpcbind systemctl enable nfs-server systemctl start rpcbind systemctl start nfs-server # 使配置生效,在master 执行 exportfs -r #检查配置是否生效,在master 执行 exportfs

2、配置nfs-client(选做,在两台从节点操作)

showmount -e 主机ip地址 mkdir -p /nfs/data mount -t nfs 主机ip地址:/nfs/data /nfs/data[root@node1 ~]# showmount -e 192.168.0.164 Export list for 192.168.0.164: /nfs/data * [root@node1 ~]# mkdir -p /nfs/data [root@node1 ~]# mount -t nfs 192.168.0.164:/nfs/data /nfs/data [root@node1 ~]#3、配置默认存储

配置动态供应的默认存储类,之前我们配置过pv资源,必须要提前创建出来,然后创建pvc去申请合适的pv资源,那么现在我们想不需要提前创建pv资源,想提交pvc申请之后,动态的给我们分配一个指定大小的资源

下面创建一个sc.yaml文件,内容如下:

## 创建了一个存储类 apiVersion: storage.k8s.io/v1 kind: StorageClass metadata: name: nfs-storage annotations: storageclass.kubernetes.io/is-default-class: "true" provisioner: k8s-sigs.io/nfs-subdir-external-provisioner parameters: archiveOnDelete: "true" ## 删除pv的时候,pv的内容是否要备份 --- apiVersion: apps/v1 kind: Deployment metadata: name: nfs-client-provisioner labels: app: nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default spec: replicas: 1 strategy: type: Recreate selector: matchLabels: app: nfs-client-provisioner template: metadata: labels: app: nfs-client-provisioner spec: serviceAccountName: nfs-client-provisioner containers: - name: nfs-client-provisioner image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2 # resources: # limits: # cpu: 10m # requests: # cpu: 10m volumeMounts: - name: nfs-client-root mountPath: /persistentvolumes env: - name: PROVISIONER_NAME value: k8s-sigs.io/nfs-subdir-external-provisioner - name: NFS_SERVER value: 192.168.0.164 ## 指定自己nfs服务器地址 - name: NFS_PATH value: /nfs/data ## nfs服务器共享的目录 volumes: - name: nfs-client-root nfs: server: 192.168.0.164 path: /nfs/data --- apiVersion: v1 kind: ServiceAccount metadata: name: nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default --- kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v1 metadata: name: nfs-client-provisioner-runner rules: - apiGroups: [""] resources: ["nodes"] verbs: ["get", "list", "watch"] - apiGroups: [""] resources: ["persistentvolumes"] verbs: ["get", "list", "watch", "create", "delete"] - apiGroups: [""] resources: ["persistentvolumeclaims"] verbs: ["get", "list", "watch", "update"] - apiGroups: ["storage.k8s.io"] resources: ["storageclasses"] verbs: ["get", "list", "watch"] - apiGroups: [""] resources: ["events"] verbs: ["create", "update", "patch"] --- kind: ClusterRoleBinding apiVersion: rbac.authorization.k8s.io/v1 metadata: name: run-nfs-client-provisioner subjects: - kind: ServiceAccount name: nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default roleRef: kind: ClusterRole name: nfs-client-provisioner-runner apiGroup: rbac.authorization.k8s.io --- kind: Role apiVersion: rbac.authorization.k8s.io/v1 metadata: name: leader-locking-nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default rules: - apiGroups: [""] resources: ["endpoints"] verbs: ["get", "list", "watch", "create", "update", "patch"] --- kind: RoleBinding apiVersion: rbac.authorization.k8s.io/v1 metadata: name: leader-locking-nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default subjects: - kind: ServiceAccount name: nfs-client-provisioner # replace with namespace where provisioner is deployed namespace: default roleRef: kind: Role name: leader-locking-nfs-client-provisioner apiGroup: rbac.authorization.k8s.io

通过上面的kubectl apply -f sc.yaml执行之后,可以看到已经成功!

4、metrics-server

这个是集群指标监控组件,用来监控集群CPU等信息

创建一个metrics.yaml文件,内容如下:

apiVersion: v1 kind: ServiceAccount metadata: labels: k8s-app: metrics-server name: metrics-server namespace: kube-system --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRole metadata: labels: k8s-app: metrics-server rbac.authorization.k8s.io/aggregate-to-admin: "true" rbac.authorization.k8s.io/aggregate-to-edit: "true" rbac.authorization.k8s.io/aggregate-to-view: "true" name: system:aggregated-metrics-reader rules: - apiGroups: - metrics.k8s.io resources: - pods - nodes verbs: - get - list - watch --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRole metadata: labels: k8s-app: metrics-server name: system:metrics-server rules: - apiGroups: - "" resources: - pods - nodes - nodes/stats - namespaces - configmaps verbs: - get - list - watch --- apiVersion: rbac.authorization.k8s.io/v1 kind: RoleBinding metadata: labels: k8s-app: metrics-server name: metrics-server-auth-reader namespace: kube-system roleRef: apiGroup: rbac.authorization.k8s.io kind: Role name: extension-apiserver-authentication-reader subjects: - kind: ServiceAccount name: metrics-server namespace: kube-system --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRoleBinding metadata: labels: k8s-app: metrics-server name: metrics-server:system:auth-delegator roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: system:auth-delegator subjects: - kind: ServiceAccount name: metrics-server namespace: kube-system --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRoleBinding metadata: labels: k8s-app: metrics-server name: system:metrics-server roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: system:metrics-server subjects: - kind: ServiceAccount name: metrics-server namespace: kube-system --- apiVersion: v1 kind: Service metadata: labels: k8s-app: metrics-server name: metrics-server namespace: kube-system spec: ports: - name: https port: 443 protocol: TCP targetPort: https selector: k8s-app: metrics-server --- apiVersion: apps/v1 kind: Deployment metadata: labels: k8s-app: metrics-server name: metrics-server namespace: kube-system spec: selector: matchLabels: k8s-app: metrics-server strategy: rollingUpdate: maxUnavailable: 0 template: metadata: labels: k8s-app: metrics-server spec: containers: - args: - --cert-dir=/tmp - --kubelet-insecure-tls - --secure-port=4443 - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname - --kubelet-use-node-status-port image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/metrics-server:v0.4.3 imagePullPolicy: IfNotPresent livenessProbe: failureThreshold: 3 httpGet: path: /livez port: https scheme: HTTPS periodSeconds: 10 name: metrics-server ports: - containerPort: 4443 name: https protocol: TCP readinessProbe: failureThreshold: 3 httpGet: path: /readyz port: https scheme: HTTPS periodSeconds: 10 securityContext: readOnlyRootFilesystem: true runAsNonRoot: true runAsUser: 1000 volumeMounts: - mountPath: /tmp name: tmp-dir nodeSelector: kubernetes.io/os: linux priorityClassName: system-cluster-critical serviceAccountName: metrics-server volumes: - emptyDir: name: tmp-dir --- apiVersion: apiregistration.k8s.io/v1 kind: APIService metadata: labels: k8s-app: metrics-server name: v1beta1.metrics.k8s.io spec: group: metrics.k8s.io groupPriorityMinimum: 100 insecureSkipTLSVerify: true service: name: metrics-server namespace: kube-system version: v1beta1 versionPriority: 100执行上面的文件:kubectl apply -f metrics.yaml

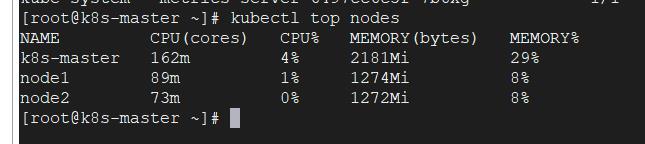

查看是否安装成功:kubectl top nodes

由上可以看到我们集群的CPU、内存使用情况

六、安装KubeSphere

1、下载核心文件

wget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/kubesphere-installer.yaml wget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/cluster-configuration.yaml执行上面的命令,下载下来下面的两个文件:

2、修改cluster-configuration.yaml文件

2.1、首先开启etcd的监控功能,ip地址是我们主节点ip

2.2、开启redis以及轻量级协议功能

2.3、开启系统告警、审计、devops、事件、日志功能

2.4、打开网络、指定calico、打开应用商店、打开servicemesh、kubeedge

3、执行安装

kubectl apply -f kubesphere-installer.yaml kubectl apply -f cluster-configuration.yaml

现在就是等待组件安装完成

上面这个安装器完成之后可以使用日志命令查看安装进度:

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-install -o jsonpath='.items[0].metadata.name') -f

由上面可以发现正在安装

注意,我们需要在我们阿里云的安全组中将k8s相关的端口都开放,k8s的端口范围

是30000-32767,

下面kubesphere安装完成,如下:

从上面可以看到默认的用户名和密码:

最好确认所有的pod都安装完:

上面这个prometheus-k8s-0需要安装一个东西,解决etcd监控证书找不到问题

kubectl -n kubesphere-monitoring-system create secret generic kube-etcd-client-certs --from-file=etcd-client-ca.crt=/etc/kubernetes/pki/etcd/ca.crt --from-file=etcd-client.crt=/etc/kubernetes/pki/apiserver-etcd-client.crt --from-file=etcd-client.key=/etc/kubernetes/pki/apiserver-etcd-client.key

上面所有的pod都安装完成结束

我们在k8s3台集群服务随机选择一个ip加上端口访问

地址:http://ip地址:30880

上面是登录进去后的界面

以上是关于Kubernetes集群上搭建KubeSphere 教程的主要内容,如果未能解决你的问题,请参考以下文章