Offline K8s Cluster Installation with kubeadm (Detailed Guide)

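Posted by Harry_z666

Preface: This article was compiled by the editors of 小常识网 (cha138.com). It covers offline installation of a K8s cluster with kubeadm in detail; we hope it is of some reference value to you.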

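1. Two ways to deploy K8s: kubeadm, or installation from binaries/source

# Deployment method used in this walkthrough:

kubeadm

kubeadm is a K8s deployment tool that provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster.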

2、环境准备

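# Server requirements:

Recommended minimum hardware: 2 CPU cores, 2 GB RAM, 20 GB disk

Ideally the servers can reach the Internet, because images are pulled from online registries. If they cannot, download the required images in advance and import them into the nodes.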
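The kubeadm init command used later passes --ignore-preflight-errors=all, so a host below these minimums will not be stopped automatically. A minimal pre-check sketch follows; the function names are hypothetical, and both thresholds are assumptions: 2 CPUs (the recommendation above) and 1700 MB of RAM (roughly the floor that kubeadm's own control-plane preflight check applies).

```bash
#!/usr/bin/env bash
# Hypothetical pre-check helpers; both thresholds are assumptions, adjust as needed.
MIN_CPUS=2        # recommended minimum from this guide
MIN_MEM_MB=1700   # roughly what kubeadm's control-plane preflight check expects

# mem_total_mb [FILE]: print MemTotal in MB (reads /proc/meminfo by default)
mem_total_mb() {
  awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 }' "${1:-/proc/meminfo}"
}

# meets_minimum CPUS MEM_MB: exit status 0 only if both values reach the thresholds
meets_minimum() {
  [ "$1" -ge "$MIN_CPUS" ] && [ "$2" -ge "$MIN_MEM_MB" ]
}

# Example on a node:
#   meets_minimum "$(nproc)" "$(mem_total_mb)" || echo "below the recommended minimum"
```

Note that a VM configured with "2 GB" usually reports somewhat less than 2048 MB as MemTotal, which is why the memory threshold sits below 2048.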

# Software environment:

Operating system: CentOS Linux release 7.8.2003 (Core)

Docker: 20.10.16

K8s: 1.23

| Name | IP |
|---|---|
| master | 192.168.32.128 |
| node1 | 192.168.32.129 |
| node2 | 192.168.32.130 |
| repo | 192.168.32.131 |

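3. Download the installation packages locally

# Pick a machine that can reach the Internet, download every required package and its dependencies there, and use it as the yum repository server

# yum repository server: 192.168.32.131

# Download the required tool packages (the netstat command is provided by the net-tools package)

yum install --downloadonly --downloaddir=/opt/repo ntpdate wget httpd createrepo vim telnet net-tools lrzsz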

[root@repo ~]# yum clean all

[root@repo ~]# yum makecache

[root@repo ~]# yum install -y wget

[root@repo ~]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

[root@repo ~]# yum install --downloadonly --downloaddir=/opt/repo docker-ce

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

# Download kubelet-1.23.0, kubeadm-1.23.0, kubectl-1.23.0 and all their dependencies

[root@repo ~]# yum install --downloadonly --downloaddir=/opt/repo kubelet-1.23.0 kubeadm-1.23.0 kubectl-1.23.0

[root@repo ~]# wget --no-check-certificate https://docs.projectcalico.org/manifests/calico.yaml

[root@repo ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml
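Offline, every image referenced by these two manifests has to be carried across as well. A sketch of a helper (hypothetical name manifest_images) that lists them on the repo host, assuming the manifests use one `image: <ref>` entry per line, optionally after `- ` and optionally quoted:

```bash
#!/usr/bin/env bash
# manifest_images FILE...: print the unique image references found in YAML manifests.
# Assumes one "image: <ref>" per line; "imagePullPolicy:" lines are not matched.
manifest_images() {
  grep -hE '^[[:space:]]*-?[[:space:]]*image:' "$@" \
    | sed -E 's/^[[:space:]]*-?[[:space:]]*image:[[:space:]]*//' \
    | tr -d "\"'" \
    | sort -u
}

# Example on the repo host:
#   manifest_images calico.yaml recommended.yaml | xargs -n1 docker pull
```

The pulled images can then be saved and copied in the same way as the kubeadm images.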

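4. Copy the packages locally and build a yum repository

# The yum download-only command:

yum install $package --downloadonly --downloaddir=$target_dir

e.g.: yum -y install httpd --downloadonly --downloaddir=/data/repo

yum -y install autossh --downloadonly --downloaddir=/data/repo

# Turn the directory holding the downloaded packages into a yum repository

[root@repo opt]# mkdir -p repo

[root@repo opt]# createrepo /opt/repo/

# After the command runs, a repodata directory is created in that directory, as shown below: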

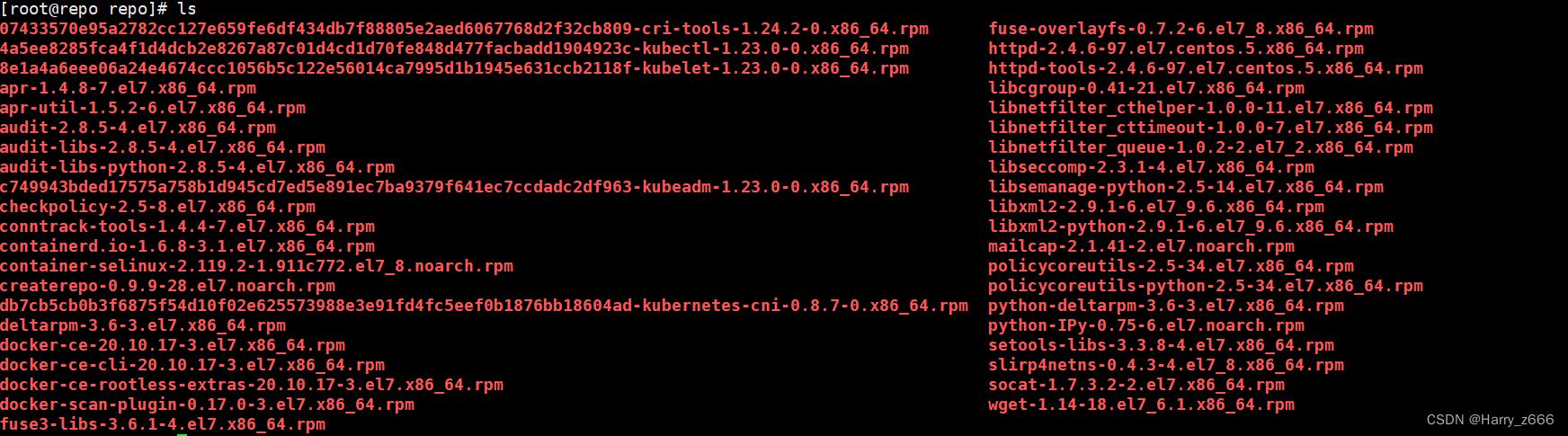

[root@repo repo]# ls

repodata

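# Copy all packages from the previous step to 192.168.32.131 and build the local yum repository there

# Update the repository metadata (rerun this whenever packages are added)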

[root@repo repo]# createrepo --update /opt/repo

cd /opt/repo

yum install -y httpd

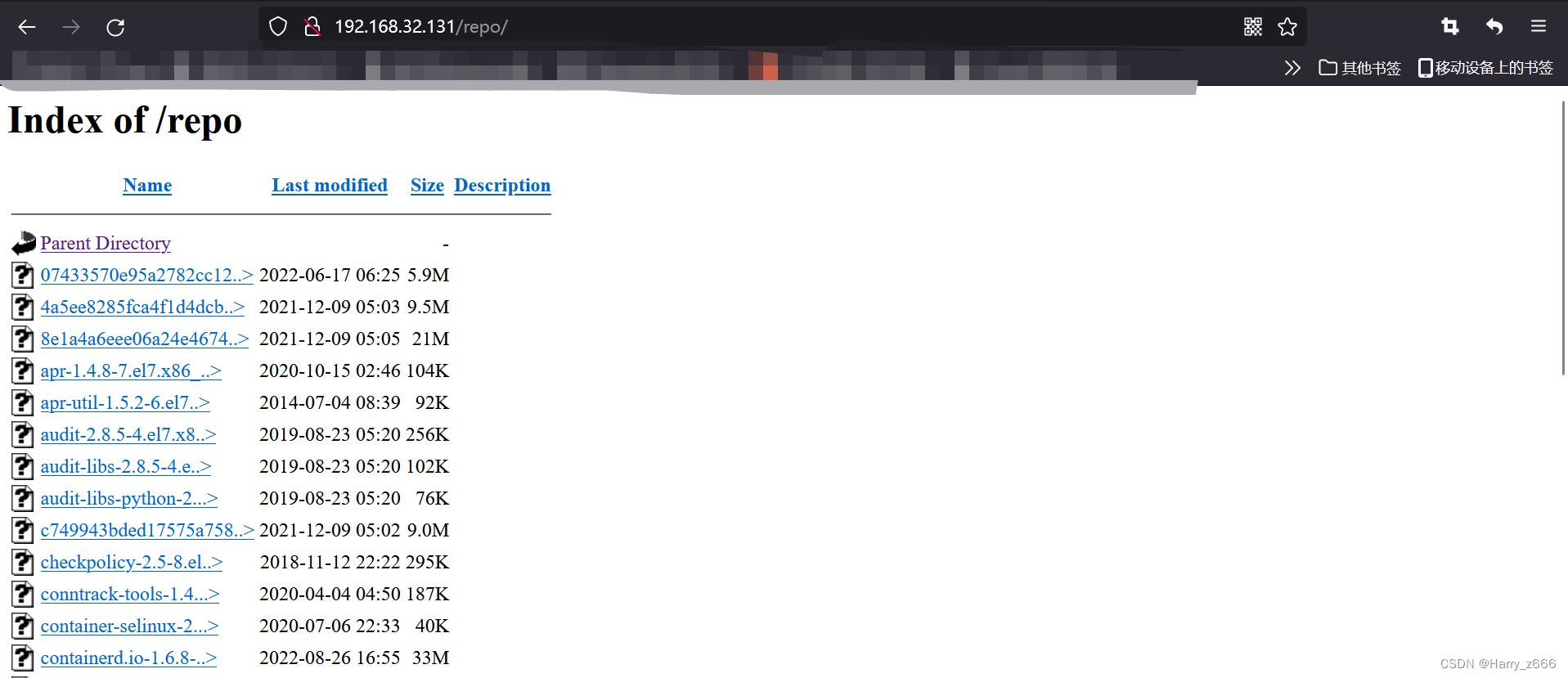

systemctl start httpd && systemctl enable httpd

ln -s /opt/repo /var/www/html/

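# The local yum repository is now ready and served at http://192.168.32.131/repo/

# Example

Download php-mysql into the createrepo repository (then rerun createrepo --update /opt/repo so clients can see it):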

yum install php-mysql --downloadonly --downloaddir=/opt/repo

[root@repo repo]# ls

libzip-0.10.1-8.el7.x86_64.rpm php-common-5.4.16-48.el7.x86_64.rpm php-mysql-5.4.16-48.el7.x86_64.rpm php-pdo-5.4.16-48.el7.x86_64.rpm repodata

# Search for php-mysql on an internal (offline) machine

[root@k8s-node2 yum.repos.d]# yum search php-mysql

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

================================================================== N/S matched: php-mysql ==================================================================

php-mysql.x86_64 : A module for PHP applications that use MySQL databases

Name and summary matches only, use "search all" for everything.

5. Initial configuration

5.1. Prepare the installation environment: run the following on all nodes.

# Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

# Disable SELinux

sed -i 's/enforcing/disabled/' /etc/selinux/config # permanent

setenforce 0 # temporary (current boot only)

# Disable swap

swapoff -a # temporary (current boot only)

sed -ri 's/.*swap.*/#&/' /etc/fstab # permanent
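To confirm that both the temporary and the permanent change took effect, a sketch of two checks (hypothetical helper names): one reads /proc/swaps, where every line after the header is an active swap device, and the other looks for swap entries in /etc/fstab that are not commented out, assuming the usual layout where the third field is the filesystem type.

```bash
#!/usr/bin/env bash
# swap_active [FILE]: exit status 0 if any swap device is active.
# /proc/swaps always starts with one header line; every further line is a device.
swap_active() {
  [ "$(wc -l < "${1:-/proc/swaps}")" -gt 1 ]
}

# fstab_has_swap [FILE]: exit status 0 if an uncommented entry of type "swap"
# (third field) is still present, i.e. swap would come back after a reboot
fstab_has_swap() {
  awk '!/^[ \t]*#/ && $3 == "swap" { found = 1 } END { exit !found }' "${1:-/etc/fstab}"
}

# Example:
#   swap_active && echo "swap is still on"
#   fstab_has_swap && echo "/etc/fstab still enables swap at boot"
```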

# Set the hostname according to the plan (see the hostnamectl command below)

# Pass bridged IPv4 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system # apply
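The two net.bridge.* keys only exist once the br_netfilter kernel module is loaded, so on a fresh host sysctl --system can fail to apply them. The container-runtime prerequisites in the official Kubernetes documentation load br_netfilter at boot and also set net.ipv4.ip_forward = 1. A sketch that writes both files (hypothetical helper names; the target directory is a parameter so the output can be inspected before writing into /etc):

```bash
#!/usr/bin/env bash
# write_k8s_modules DIR: have systemd-modules-load load br_netfilter at boot
write_k8s_modules() {
  echo br_netfilter > "$1/k8s.conf"
}

# write_k8s_sysctl DIR: pass bridged traffic to iptables and enable IPv4 forwarding
write_k8s_sysctl() {
  cat > "$1/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}

# Example on each node:
#   modprobe br_netfilter
#   write_k8s_modules /etc/modules-load.d
#   write_k8s_sysctl /etc/sysctl.d
#   sysctl --system
```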

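# Time synchronization

yum install ntpdate -y

ntpdate time.windows.com # needs Internet access; in a fully offline network, point ntpdate at an internal NTP server instead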

# e.g. on node2; on each host use its own name (master, k8s-node1, k8s-node2)

hostnamectl set-hostname k8s-node2

# Add the hosts entries on master

cat >> /etc/hosts << EOF

192.168.32.128 master

192.168.32.129 node1

192.168.32.130 node2

192.168.32.131 repo

EOF

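# On every node, create the repo definition for the offline environment. The baseurl directory must be exactly right and must end with "/" (keep comments on their own lines, not after the value).

cat > /etc/yum.repos.d/local.repo << EOF

[local]

name=local

baseurl=http://192.168.32.131/repo/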

enabled=1

gpgcheck=0

EOF
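Because the same file is needed on every node and the trailing slash matters, generating it from one function reduces typos. A sketch (hypothetical helper write_local_repo) that writes the same [local] section and appends the "/" when it is missing:

```bash
#!/usr/bin/env bash
# write_local_repo FILE BASEURL: write the [local] yum repo definition,
# making sure BASEURL ends with "/"
write_local_repo() {
  local url=$2
  case $url in
    */) ;;              # already ends with a slash
    *) url="$url/" ;;   # append the trailing slash
  esac
  cat > "$1" <<EOF
[local]
name=local
baseurl=$url
enabled=1
gpgcheck=0
EOF
}

# Example on each node:
#   write_local_repo /etc/yum.repos.d/local.repo http://192.168.32.131/repo
```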

# Simulate the offline state: move all other repo files aside and keep only local.repo

[root@k8s-master yum.repos.d]# cd /etc/yum.repos.d/

[root@k8s-master yum.repos.d]# mkdir bak

[root@k8s-master yum.repos.d]# mv *.repo bak/

[root@k8s-master yum.repos.d]# cd bak/

[root@k8s-master yum.repos.d]# mv local.repo ../

[root@k8s-master yum.repos.d]# yum clean all

[root@k8s-master yum.repos.d]# yum makecache

[root@k8s-master yum.repos.d]# yum repolist

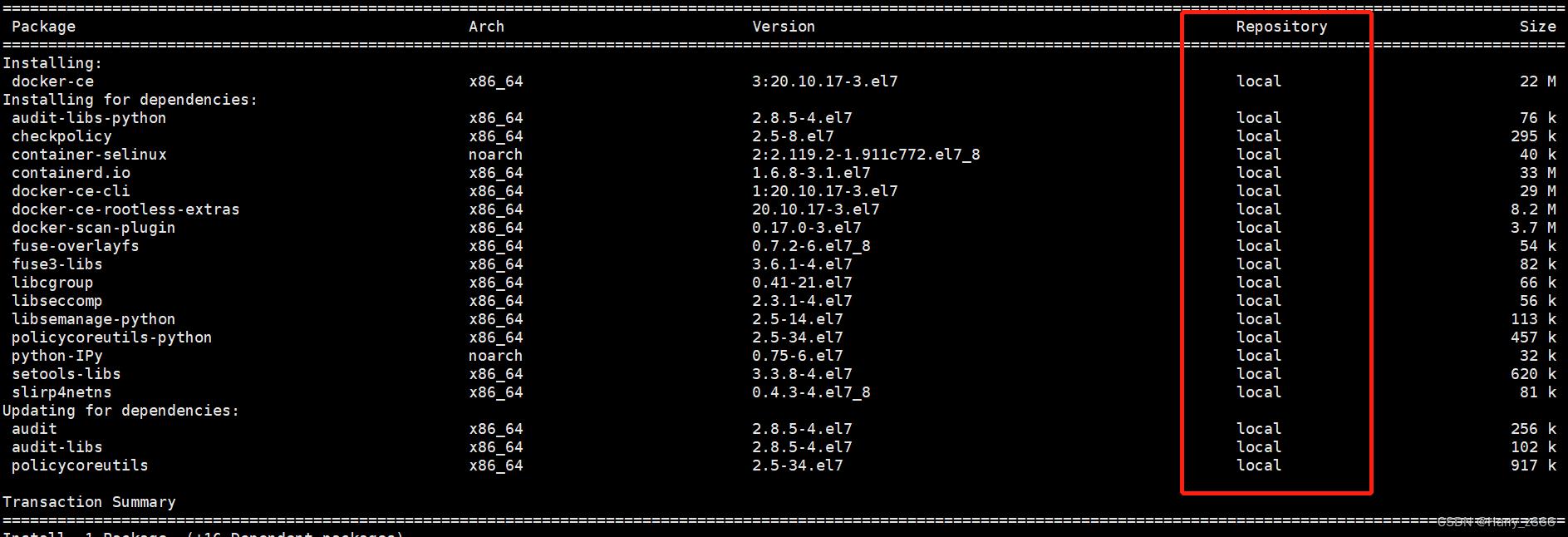

5.2. Install Docker, kubeadm, and kubelet [all nodes]

# Install Docker:

yum -y install docker-ce

systemctl enable docker && systemctl start docker

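# Configure a registry mirror and the systemd cgroup driver (kubeadm 1.22 and later configure kubelet with the systemd cgroup driver by default, so Docker must use the same driver). The file content is a single JSON object:

vim /etc/docker/daemon.json

{

"registry-mirrors": ["https://l51yxa8e.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}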

systemctl restart docker

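# Check docker info to confirm (Cgroup Driver should now be systemd)

docker info

# Install kubelet-1.23.0, kubeadm-1.23.0, kubectl-1.23.0 and their dependencies

yum install -y kubelet-1.23.0 kubeadm-1.23.0 kubectl-1.23.0

# Enable kubelet at boot (kubeadm init or join starts and configures it later)

systemctl enable kubelet

All of the packages above are installed from the local repository.

6. Deploy the K8s master [run on master]

6.1. Deploy with kubeadm

# kubeadm needs several images when it runs, so prepare them in advance.

# List the images it depends on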

[root@k8s-master ~]# kubeadm config images list

k8s.gcr.io/kube-apiserver:v1.23.0

k8s.gcr.io/kube-controller-manager:v1.23.0

k8s.gcr.io/kube-scheduler:v1.23.0

k8s.gcr.io/kube-proxy:v1.23.0

k8s.gcr.io/pause:3.6

k8s.gcr.io/etcd:3.5.1-0

k8s.gcr.io/coredns/coredns:v1.8.6

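# Run the following on 192.168.32.131

# In a production environment k8s.gcr.io is certainly unreachable, and it is also unreachable from machines on mainland-China networks, so the images have to be downloaded from a domestic mirror first.

# The fix is simple: search with docker on a machine that can reach the Internet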

[root@repo yum.repos.d]# docker search kube-apiserver

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

aiotceo/kube-apiserver end of support, please pull kubestation/kube… 20

mirrorgooglecontainers/kube-apiserver 19

kubesphere/kube-apiserver 7

kubeimage/kube-apiserver-amd64 k8s.gcr.io/kube-apiserver-amd64 5

empiregeneral/kube-apiserver-amd64 kube-apiserver-amd64 4 [OK]

k8simage/kube-apiserver 3

docker/desktop-kubernetes-apiserver Mirror of selected tags from k8s.gcr.io/kube… 1

mirrorgcrio/kube-apiserver mirror of k8s.gcr.io/kube-apiserver:v1.23.6 1

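# The most-starred result is aiotceo/kube-apiserver (its description marks it as end of support); this guide uses the Aliyun mirror registry.aliyuncs.com/google_containers instead

# Download the base images of the matching versions

# Images required by kubeadm: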

registry.aliyuncs.com/google_containers/kube-apiserver:v1.23.0

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.23.0

registry.aliyuncs.com/google_containers/kube-scheduler:v1.23.0

registry.aliyuncs.com/google_containers/kube-proxy:v1.23.0

registry.aliyuncs.com/google_containers/pause:3.6

registry.aliyuncs.com/google_containers/etcd:3.5.1-0

registry.aliyuncs.com/google_containers/coredns:v1.8.6

# Write the pull script:

vim pull_images.sh

#!/bin/bash

images=(

registry.aliyuncs.com/google_containers/kube-apiserver:v1.23.0

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.23.0

registry.aliyuncs.com/google_containers/kube-scheduler:v1.23.0

registry.aliyuncs.com/google_containers/kube-proxy:v1.23.0

registry.aliyuncs.com/google_containers/pause:3.6

registry.aliyuncs.com/google_containers/etcd:3.5.1-0

registry.aliyuncs.com/google_containers/coredns:v1.8.6

)

for pullimageName in "${images[@]}" ; do

docker pull "$pullimageName"

done

# Write the save script:

vim save_images.sh

#!/bin/bash

images=(

registry.aliyuncs.com/google_containers/kube-apiserver:v1.23.0

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.23.0

registry.aliyuncs.com/google_containers/kube-scheduler:v1.23.0

registry.aliyuncs.com/google_containers/kube-proxy:v1.23.0

registry.aliyuncs.com/google_containers/pause:3.6

registry.aliyuncs.com/google_containers/etcd:3.5.1-0

registry.aliyuncs.com/google_containers/coredns:v1.8.6

)

for imageName in "${images[@]}"; do

key=$(echo "$imageName" | awk -F '/' '{print $3}' | awk -F ':' '{print $1}')

docker save -o "$key.tar" "$imageName"

done
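The awk pipeline above assumes every image name has exactly three "/"-separated parts. Shell parameter expansion yields the same base name for any depth, including names without a registry such as nginx:latest. A sketch (hypothetical helper image_tar_name):

```bash
#!/usr/bin/env bash
# image_tar_name IMAGE: derive the archive base name used by the save/load scripts
image_tar_name() {
  local name=${1##*/}   # strip registry and namespace -> "kube-apiserver:v1.23.0"
  echo "${name%%:*}"    # strip the tag -> "kube-apiserver"
}

# Example inside the save loop:
#   docker save -o "$(image_tar_name "$imageName").tar" "$imageName"
```

As an alternative design, docker save also accepts several images at once and writes them into a single archive, which avoids per-image file names altogether.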

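# Copy the saved images to the master node. kube-proxy and the pause sandbox image also run on every worker node, so copy and load at least those archives on the workers as well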

[root@repo images]# ll

total 755536

-rw------- 1 root root 46967296 Sep 4 02:37 coredns.tar

-rw------- 1 root root 293936128 Sep 4 02:37 etcd.tar

-rw------- 1 root root 136559616 Sep 4 02:36 kube-apiserver.tar

-rw------- 1 root root 126385152 Sep 4 02:37 kube-controller-manager.tar

-rw------- 1 root root 114243584 Sep 4 02:37 kube-proxy.tar

-rw------- 1 root root 54864896 Sep 4 02:37 kube-scheduler.tar

-rw------- 1 root root 692736 Sep 4 02:37 pause.tar

scp *.tar root@192.168.32.128:/root/

# Run the following on the master node

# Write the load script:

vim load_images.sh

#!/bin/bash

images=(

kube-apiserver

kube-controller-manager

kube-scheduler

kube-proxy

pause

etcd

coredns

)

for imageName in "${images[@]}" ; do

key=.tar

docker load -i "$imageName$key"

done

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-apiserver v1.23.0 9ca5fafbe8dc 2 weeks ago 135MB

registry.aliyuncs.com/google_containers/kube-proxy v1.23.0 71b9bf9750e1 2 weeks ago 112MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.23.0 91a4a0d5de4e 2 weeks ago 125MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.23.0 d5c0efb802d9 2 weeks ago 53.5MB

registry.aliyuncs.com/google_containers/etcd 3.5.1-0 25f8c7f3da61 10 months ago 293MB

registry.aliyuncs.com/google_containers/coredns v1.8.6 a4ca41631cc7 11 months ago 46.8MB

registry.aliyuncs.com/google_containers/pause 3.6 6270bb605e12 12 months ago 683kB
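To confirm nothing is missing before running kubeadm init, the required list can be compared with what Docker actually holds. A sketch (hypothetical helper missing_images) that prints every line of the first file that does not appear verbatim in the second; it prints nothing when all images are present:

```bash
#!/usr/bin/env bash
# missing_images REQUIRED_FILE AVAILABLE_FILE: print each "repo:tag" line of
# REQUIRED_FILE that is absent from AVAILABLE_FILE (exact, fixed-string match)
missing_images() {
  grep -Fxv -f "$2" "$1"
}

# Example on the master:
#   kubeadm config images list --image-repository registry.aliyuncs.com/google_containers \
#     --kubernetes-version v1.23.0 > required.txt
#   docker images --format '{{.Repository}}:{{.Tag}}' > available.txt
#   missing_images required.txt available.txt
```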

kubeadm init \
  --apiserver-advertise-address=192.168.32.128 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16 \
  --ignore-preflight-errors=all

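After initialization, kubeadm prints a join command; copy it, because the worker nodes use it to join the master.

--apiserver-advertise-address: the address the API server advertises to the cluster

--image-repository: the default registry k8s.gcr.io is not reachable from mainland China, so the Aliyun mirror is specified here; for an offline install the images must already be present locally

--kubernetes-version: the K8s version, matching the packages installed above

--service-cidr: the cluster-internal virtual network for Services, which give Pods a stable access entry point

--pod-network-cidr: the Pod network; must match the CNI plugin YAML deployed later

--ignore-preflight-errors=all: ignores every preflight check failure; convenient in a lab, but it also hides real problems such as swap still being on or too little CPU/RAM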
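The same settings can also be kept in a kubeadm configuration file instead of command-line flags. A sketch, assuming the kubeadm.k8s.io/v1beta3 config API used by kubeadm 1.23; the helper name is hypothetical and the values are copied from the command above:

```bash
#!/usr/bin/env bash
# write_kubeadm_config FILE: write the init flags above as a kubeadm config file
write_kubeadm_config() {
  cat > "$1" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.32.128
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.23.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
EOF
}

# Example on the master:
#   write_kubeadm_config kubeadm-config.yaml
#   kubeadm config images list --config kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml
```

Keeping the values in one file means the image list and the actual init use exactly the same repository and version.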

# When initialization finishes, the output contains a token; record it, you will need it

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.32.128:6443 --token 6m4wt4.y90169m53e6nen8d \
--discovery-token-ca-cert-hash sha256:0ea734ba54d630659ed78463d0f38fc6c407fabe9c8a0d41913b626160981402

6.2. Copy the K8s credentials (kubeconfig)

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Check the nodes:

[root@master .kube]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane,master 6m46s v1.23.0

# Note: the network plugin has not been deployed yet, so the node is NotReady; continue with the next steps.

7. Configure the K8s worker nodes [run on the worker nodes]

# To add a new node to the cluster, run the kubeadm join command printed by kubeadm init

# Run the following on 192.168.32.129 and 192.168.32.130

[root@k8s-node1 ~]# kubeadm join 192.168.32.128:6443 --token 6m4wt4.y90169m53e6nen8d \
--discovery-token-ca-cert-hash sha256:0ea734ba54d630659ed78463d0f38fc6c407fabe9c8a0d41913b626160981402

[preflight] Running pre-flight checks

[WARNING Hostname]: hostname "k8s-node1" could not be reached

[WARNING Hostname]: hostname "k8s-node1": lookup k8s-node1 on 8.8.8.8:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

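# By default a token is valid for 24 hours; after it expires it can no longer be used and a new one must be created. This command creates a token and prints the complete join command: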

kubeadm token create --print-join-command
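If a token is created without --print-join-command, the --discovery-token-ca-cert-hash value can be recomputed on the master from the cluster CA. The pipeline inside the function is the one documented for kubeadm join, wrapped in a hypothetical helper; it assumes an RSA CA key, which is what kubeadm generates by default.

```bash
#!/usr/bin/env bash
# ca_cert_hash [CERT]: print the SHA-256 of the CA public key in DER form,
# i.e. the hex value kubeadm join expects after "sha256:"
ca_cert_hash() {
  openssl x509 -pubkey -in "${1:-/etc/kubernetes/pki/ca.crt}" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Example on the master:
#   echo "sha256:$(ca_cert_hash)"
```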

# On the master (192.168.32.128)

[root@master .kube]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-node1 NotReady <none> 47s v1.23.0

k8s-node2 NotReady <none> 8s v1.23.0

master NotReady control-plane,master 10m v1.23.0

# Note: the network plugin has not been deployed yet, so the nodes are NotReady; continue with the next steps.

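8. Deploy the container network (run on master)

# Calico is a pure Layer 3 data-center networking solution and one of the mainstream network options for Kubernetes today.

# Calico images: download them on 192.168.32.131 (the host with Internet access), then transfer them to the master

# Check the Pod CIDR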

[root@master .kube]# cat /etc/kubernetes/manifests/kube-controller-manager.yaml | grep cluster-cidr=

- --cluster-cidr=10.244.0.0/16
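Why the Calico value has to change: the default pool in calico.yaml, 192.168.0.0/16, overlaps the node network 192.168.32.0/24 used in this guide, whereas the Pod CIDR should stay clear of both the node network and the Service CIDR 10.96.0.0/12. A sketch of an IPv4 overlap check in plain shell arithmetic (hypothetical helper names, IPv4 only):

```bash
#!/usr/bin/env bash
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer
ip_to_int() {
  local IFS=.
  set -- $1   # split the address on dots into $1..$4
  echo "$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))"
}

# cidr_bounds A.B.C.D/N: print "first last" (as integers) for the block
cidr_bounds() {
  local base len mask first
  base=$(ip_to_int "${1%/*}")
  len=${1#*/}
  mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  first=$(( base & mask ))
  echo "$first $(( first | (~mask & 0xFFFFFFFF) ))"
}

# cidr_overlap CIDR1 CIDR2: exit status 0 if the two blocks share any address
cidr_overlap() {
  local a b
  a=$(cidr_bounds "$1")
  b=$(cidr_bounds "$2")
  # ranges overlap when a.first <= b.last and b.first <= a.last
  [ "${a% *}" -le "${b#* }" ] && [ "${b% *}" -le "${a#* }" ]
}

# Example:
#   cidr_overlap 192.168.0.0/16 192.168.32.0/24 && echo "Calico default clashes with the node network"
```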

# Change the Pod CIDR in calico.yaml to the CIDR given with --pod-network-cidr in kubeadm init.

Open the file in vim, search for 192, and edit the marked lines as follows:

# no effect. This should fall within `--cluster-cidr`.

# - name: CALICO_IPV4POOL_CIDR

# value: "192.168.0.0/16"

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"

After the change:

Remove both # characters together with the space that follows each, and change 192.168.0.0/16 to 10.244.0.0/16:

# no effect. This should fall within `--cluster-cidr`.

- name: CALICO_IPV4POOL_CIDR # here

value: "10.244.0.0/16" # here

# Disable file logging so `kubectl logs` works.

- name: CALICO_DISABLE_FILE_LOGGING

value: "true"
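The same edit can be scripted instead of made in vim. A sketch using GNU sed (hypothetical helper set_calico_pool), assuming the two lines look as in the stock manifest, i.e. `# - name: CALICO_IPV4POOL_CIDR` followed by `#   value: "192.168.0.0/16"` with the value indented two columns deeper after the "#" (check your copy): it deletes the leading "# " on each line and substitutes the new CIDR.

```bash
#!/usr/bin/env bash
# set_calico_pool FILE CIDR: uncomment CALICO_IPV4POOL_CIDR in FILE (in place)
# and set its value to CIDR; other lines are left untouched
set_calico_pool() {
  sed -i -E \
    -e 's/^([[:space:]]*)# (- name: CALICO_IPV4POOL_CIDR)/\1\2/' \
    -e 's|^([[:space:]]*)# ( *value: )"192\.168\.0\.0/16"|\1\2"'"$2"'"|' \
    "$1"
}

# Example on the master:
#   set_calico_pool calico.yaml 10.244.0.0/16
#   grep -A1 CALICO_IPV4POOL_CIDR calico.yaml   # verify before kubectl apply
```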

# Specify the NIC, otherwise creating Pods may fail

# Error message:

network: error getting ClusterInformation: connection is unauthorized: Unauthorized

# Cluster type to identify the deployment type

- name: CLUSTER_TYPE

value: "k8s,bgp"

# Add the following below it:

- name: IP_AUTODETECTION_METHOD

value: "interface=eth0"

# eth0 is the name of the local NIC (check yours with ip addr)

# Reference: https://blog.csdn.net/m0_61237221/article/details/125217833

# How to rename the NIC: https://www.freesion.com/article/991174666/

# Calico images (version)

docker.io/calico/cni:v3.22.1

docker.io/calico/pod2daemon-flexvol:v3.22.1

docker.io/calico/node:v3.22.1

docker.io/calico/kube-controllers:v3.22.1

vim save_calico_images.sh

#!/bin/bash

images=(

docker.io/calico/cni:v3.22.1

docker.io/calico/pod2daemon-flexvol:v3.22.1

docker.io/calico/node:v3.22.1

docker.io/calico/kube-controllers:v3.22.1

)

for imageName in "${images[@]}"; do

key=$(echo "$imageName" | awk -F '/' '{print $3}' | awk -F ':' '{print $1}')

docker save -o "$key.tar" "$imageName"

done

# On 192.168.32.128 (calico-node runs on every node, so load these images on the worker nodes as well)

vim load_calico_images.sh

#!/bin/bash

images=(

cni

kube-controllers

node

pod2daemon-flexvol

)

for imageName in "${images[@]}" ; do

key=.tar

docker load -i "$imageName$key"

done

# Dashboard images

kubernetesui/dashboard:v2.5.1

kubernetesui/metrics-scraper:v1.0.7

# Download the YAML (this assumes calico.yaml has been copied into /opt/repo on the repo server so httpd serves it):

wget http://192.168.32.131/repo/calico.yaml

# After downloading, change the Pod network (CALICO_IPV4POOL_CIDR) to match --pod-network-cidr from kubeadm init, and uncomment both lines:

# The default IPv4 pool to create on startup if none exists. Pod IPs will be

# chosen from this range. Changing this value after installation will have

# no effect. This should fall within `--cluster-cidr`.

- name: CALICO_IPV4POOL_CIDR

value: "10.244.0.0/16" # change here

# After editing the file, deploy it:

kubectl apply -f calico.yaml

kubectl get pods -n kube-system

# After applying, it takes a while until all Pods are Running

# Once the Calico Pods are Running, the nodes become Ready as well.
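Instead of re-running kubectl get nodes by hand, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` blocks until every node is Ready. For scripted checks on captured output, a sketch (hypothetical helper not_ready_nodes) that reads kubectl get nodes output on stdin and prints the nodes whose STATUS is not Ready; a cordoned node reports Ready,SchedulingDisabled and is treated as Ready:

```bash
#!/usr/bin/env bash
# not_ready_nodes: read "kubectl get nodes" output on stdin, skip the header,
# and print the NAME of every node whose STATUS is neither Ready nor Ready,<flags>
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" && $2 !~ /^Ready,/ { print $1 }'
}

# Example on the master:
#   kubectl get nodes | not_ready_nodes
```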

# Note: from now on, all YAML files are applied on the master node only.

# Installation directory: /etc/kubernetes/

# Component manifest directory: /etc/kubernetes/manifests/

[root@master ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready <none> 3h20m v1.23.0 192.168.32.129 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

k8s-node2 Ready <none> 3h19m v1.23.0 192.168.32.130 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

master Ready control-plane,master 3h30m v1.23.0 192.168.32.128 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

[root@master ~]# kubectl get nodes,svc -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

node/k8s-node1 Ready <none> 3h20m v1.23.0 192.168.32.129 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

node/k8s-node2 Ready <none> 3h19m v1.23.0 192.168.32.130 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

node/master Ready control-plane,master 3h30m v1.23.0 192.168.32.128 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.17

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 3h30m <none>

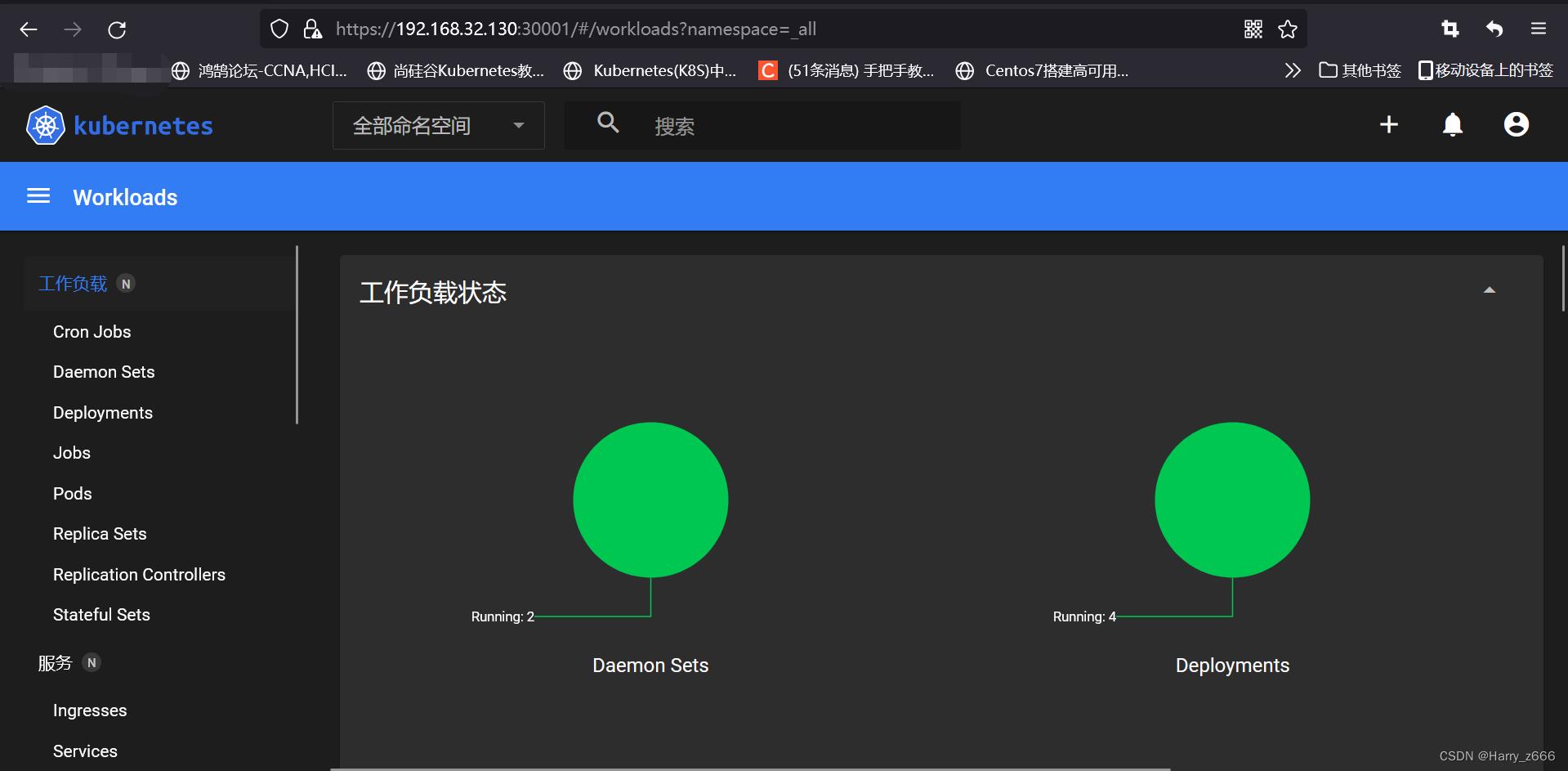

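9. Deploy the Dashboard (run on master)

# Reference: https://blog.csdn.net/qq_41980563/article/details/123182854

# Dashboard is the official web UI and can be used for basic management of K8s resources.

# https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml

# Download the YAML (from the repo server, assuming recommended.yaml was copied into /opt/repo): wget http://192.168.32.131/repo/recommended.yaml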

# By default the Dashboard is reachable only inside the cluster; change its Service to type NodePort to expose it externally:

vim recommended.yaml # downloaded in step 3

...

kind: Service

apiVersion: v1

metadata:

  labels:

    k8s-app: kubernetes-dashboard

  name: kubernetes-dashboard

  namespace: kubernetes-dashboard

spec:

  ports:

    - port: 443

      targetPort: 8443

      nodePort: 30001 # added

  selector:

    k8s-app: kubernetes-dashboard

  type: NodePort # added

...
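A nodePort must lie inside the API server's --service-node-port-range, which defaults to 30000-32767; 30001 here and 31090 used for nginx in section 10 both fall inside it. A trivial check sketch, assuming the default range was not changed (hypothetical helper node_port_ok):

```bash
#!/usr/bin/env bash
# node_port_ok PORT: exit status 0 if PORT is a plain number inside the
# default NodePort range 30000-32767
node_port_ok() {
  case $1 in
    '' | *[!0-9]*) return 1 ;;   # empty or not a plain number
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Example:
#   node_port_ok 30001 && echo "30001 is a valid NodePort"
```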

# Image versions:

kubernetesui/dashboard:v2.5.1

kubernetesui/metrics-scraper:v1.0.7

kubectl apply -f recommended.yaml

[root@master ~]# kubectl get pods -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-799d786dbf-4fh5r 1/1 Running 0 10m

kubernetes-dashboard-fb8648fd9-pk6jk 1/1 Running 0 10m

# Access URL: https://NodeIP:30001

# Create a service account and bind it to the default cluster-admin cluster role:

# Create the user

[root@master ~]# kubectl create serviceaccount dashboard-admin -n kube-system

serviceaccount/dashboard-admin created

# Grant permissions

[root@master ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

# Get the user's token

[root@master ~]# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Name: dashboard-admin-token-gr2bk

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: b3b13801-e4a6-481f-a3ea-2fb55b2f6f23

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1099 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6Ik1CLXR0QmxtSkhLZXRubXdLQ1c4X2paMk14dXRvQTRDMVBOdmw0SWtpX2cifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tZ3IyYmsiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiYjNiMTM4MDEtZTRhNi00ODFmLWEzZWEtMmZiNTViMmY2ZjIzIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.sH4z8gX5qdZXgw3pApBDzF9nlowdLjuFkkFfpdh1p0ljFdNOYdnfj84sRssU-PqoEx6ImCBF0czxQqfOJMXp6ha6-0XR44U15aWWjenqcfydw27RRJuV42z4N-PE7PdRETImCELIfvokkNU_r7uMlYahVE-cwRVi6Uj7ywL_Sjt6l8pv3qGDbCLnhWX-64mpZTqtjxhsH2xj1RUlOh_y_Q6UANtJzqxIvLmJwn5a1k7fNEWoI9F-9oBxbNEzias-AEygr9h4wjIdX3tB2LCR7EsDsulJ1KYLVNj-U72PqzU7j0IeFessB3F34TO50FXmQ6z6-fnHdK93aNYjY8BBcA
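The token printed above is a JWT: three base64url-encoded segments separated by dots, the middle one being a JSON payload. Decoding the payload is a quick way to check which service account the token belongs to; its sub claim should read system:serviceaccount:kube-system:dashboard-admin. A sketch assuming GNU base64 (hypothetical helper jwt_payload):

```bash
#!/usr/bin/env bash
# jwt_payload TOKEN: print the decoded JSON payload (middle segment) of a JWT
jwt_payload() {
  local seg
  # take the second dot-separated segment and map base64url to standard base64
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # restore the "=" padding that base64url omits
  case $(( ${#seg} % 4 )) in
    2) seg="$seg==" ;;
    3) seg="$seg=" ;;
  esac
  printf '%s' "$seg" | base64 -d
}

# Example on the master:
#   jwt_payload "$TOKEN"
#   # the token itself can also be read directly:
#   kubectl -n kube-system get secret dashboard-admin-token-gr2bk -o jsonpath='{.data.token}' | base64 -d
```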

# https://192.168.32.130:30001/#/login

10. Deploy an nginx service

# 1. Create the namespace YAML file

[root@k8s-master1 ~]# vi nginx-namespase.yaml

apiVersion: v1 # API version

kind: Namespace # resource type: Namespace

metadata:

  name: ssx-nginx-ns # namespace name

  labels:

    name: lb-ssx-nginx-ns

# Then apply it to the cluster:

[root@master ~]# kubectl create -f nginx-namespase.yaml

namespace/ssx-nginx-ns created

# 2. Create the nginx-deployment.yaml file

[root@k8s-master1 ~]# vi nginx-deployment.yaml

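apiVersion: apps/v1

kind: Deployment

metadata:

  labels:

    app: nginx # give this Deployment a label with key app and value nginx

  name: ssx-nginx-dm

  namespace: ssx-nginx-ns

spec:

  replicas: 2 # number of replicas

  selector: # label selector, works together with the labels above

    matchLabels: # select resources that carry the label app: nginx

      app: nginx

  template: # template of the Pods that are selected or created

    metadata: # Pod metadata

      labels: # Pod labels; the selector above selects Pods with the label app: nginx

        app: nginx

    spec: # desired Pod behavior (what is deployed in the Pod)

      containers: # containers to create, the same kind of container as in Docker

      - name: ssx-nginx-c

        image: nginx:latest # create the container from the nginx image; it listens on port 80 by default. Offline, the image must already be on every node, and with the :latest tag the default imagePullPolicy is Always, so also set imagePullPolicy: IfNotPresent or use a fixed tag

        ports:

        - containerPort: 80 # expose port 80 of this container

        volumeMounts: # mount the storage volume

        - name: volume # name of the mounted volume; must match volumes[*].name

          mountPath: /usr/share/nginx/html # mount path inside the container

      volumes:

      - name: volume # same name as above; this entry is the path on the host, the one above is the path inside the container

        hostPath:

          path: /opt/web/dist # this directory must be created in advance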

[root@master ~]# kubectl create -f nginx-deployment.yaml
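The Deployment mounts the hostPath /opt/web/dist, and with 2 replicas the Pods may land on either worker, so the directory should exist on every node that can run them. A sketch to run on each worker (hypothetical helper prepare_web_root; the placeholder page is an assumption) that creates the directory and an index.html unless one already exists, so nginx has something to serve:

```bash
#!/usr/bin/env bash
# prepare_web_root DIR: create DIR and a placeholder index.html,
# leaving any existing index.html untouched
prepare_web_root() {
  mkdir -p "$1"
  [ -e "$1/index.html" ] || echo "hello from $(uname -n)" > "$1/index.html"
}

# Example on each worker node:
#   prepare_web_root /opt/web/dist
```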

# 3. Create the nginx-service.yaml file

[root@k8s-master1 ~]# vi nginx-service.yaml

apiVersion: v1

kind: Service

metadata:

  labels:

    app: nginx

  name: ssx-nginx-sv

  namespace: ssx-nginx-ns

spec:

  ports:

  - port: 80 # Service port (the port nginx itself listens on)

    name: ssx-nginx-last

    protocol: TCP

    targetPort: 80 # container port exposed by nginx, already set in the Deployment above

    nodePort: 31090 # port for access from outside the cluster

  selector:

    app: nginx # select resources that carry the label app: nginx

  type: NodePort

kubectl create -f ./nginx-service.yaml

[root@master ~]# kubectl get pods,svc -n ssx-nginx-ns -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/ssx-nginx-dm-686cdf7d5-72hhv 1/1 Running 0 98s 10.244.169