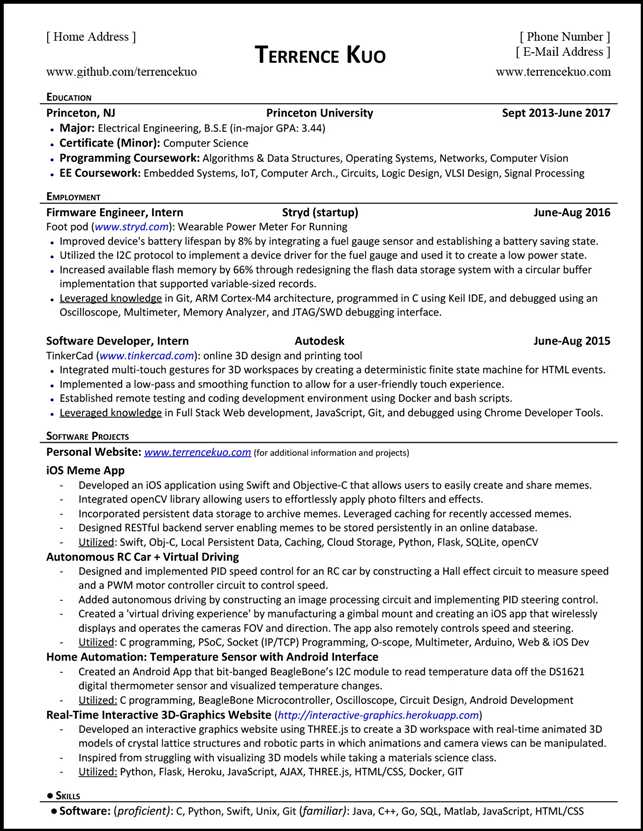

NLP: Calculate the similarity of any two articles (résumé version)

Posted by ldphoebe


gensim core tutorials: https://radimrehurek.com/gensim/auto_examples/index.html#core-tutorials

Calculate the similarity of any two courses

- Designed a program that uses NLP to retrieve similar courses on Coursera.

- Implemented, in Python, a tool that takes a Coursera course as input and returns the 10 most similar courses, with accuracy above 90%.

- Used a topic model: mapped the text content onto topic dimensions, then computed similarity in that space.

- Tools: Python, NLTK, gensim, topic models (LSI), TF-IDF

Corpus-based. I built this back when I was applying for exam-exempt admission to a master's program at Tsinghua University.

The idea is to map the text of the two articles onto topic dimensions and then compute their similarity there.
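To make "similarity in topic space" concrete: gensim represents a document in topic space as a sparse list of `(topic_id, weight)` pairs, and `similarities.MatrixSimilarity`, used in the script further down, ranks documents by cosine similarity. A minimal pure-Python sketch of that cosine computation on two such sparse vectors (`cosine_sparse` is a hypothetical helper, not a gensim API):

```python
import math


def cosine_sparse(vec_a, vec_b):
    """Cosine similarity of two sparse vectors given as [(topic_id, weight), ...]."""
    a = dict(vec_a)
    b = dict(vec_b)
    # Only topics present in both vectors contribute to the dot product
    dot = sum(weight * b[topic] for topic, weight in a.items() if topic in b)
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # an empty or all-zero vector is treated as dissimilar to everything
    return dot / (norm_a * norm_b)


# Two made-up topic vectors pointing in the same direction
doc1 = [(0, 1.0), (1, 2.0)]
doc2 = [(0, 2.0), (1, 4.0)]
print(cosine_sparse(doc1, doc2))  # 1.0 up to floating-point error
```

Cosine ignores vector length, so a long and a short document about the same topics can still score close to 1.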

Dataset download: https://www.aminer.cn/citation
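The AMiner citation dump is, as far as I know, a plain-text file where each paper is a block of lines separated by a blank line, with line prefixes such as `#*` (title), `#@` (authors), `#t` (year), `#c` (venue), `#index`, `#%` (cited paper ids) and `#!` (abstract); the script further down strips `#*` from titles. A minimal sketch of a record reader under that assumption (`parse_aminer_records` is a hypothetical helper that keeps only title and abstract):

```python
def parse_aminer_records(lines):
    """Group blank-line-separated lines into records, keeping title and abstract.

    Assumes '#*' marks the title and '#!' the abstract; all other prefixes
    (#@ authors, #t year, #c venue, #index, #% references) are ignored.
    """
    records = []
    current = {}
    for raw in lines:
        line = raw.strip()
        if not line:
            # A blank line ends the current record
            if current:
                records.append(current)
                current = {}
            continue
        if line.startswith("#*"):
            current["title"] = line[2:].strip()
        elif line.startswith("#!"):
            current["abstract"] = line[2:].strip()
    if current:  # the last record may not be followed by a blank line
        records.append(current)
    return records


# Made-up sample in the assumed format
sample = """#*Topic Models for Text
#@A. Author
#t2010
#!We study topic models.

#*Probabilistic Graphical Models
#t2009
"""
for rec in parse_aminer_records(sample.splitlines()):
    print(rec)
```

Joining each record's title and abstract then gives one "document" per paper for the similarity pipeline.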

Calculate the similarity of any two articles

Keyword-based approach: https://www.jiqizhixin.com/articles/2018-11-14-17

There are many ways to extract keywords.

One is to compute TF-IDF weights for the words and use them as the document's vector.
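A minimal sketch of TF-IDF keyword extraction over already-tokenized documents, assuming the plain textbook weighting tf × ln(N / df) with no normalization. gensim's `TfidfModel`, used in the script further down, has its own defaults (including normalizing each vector), so its numbers will differ; `tfidf_vectors` and `top_keywords` are hypothetical names:

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """docs: list of token lists. Returns one {term: weight} dict per document.

    weight = raw count in the document * ln(N / number of documents containing the term)
    """
    n_docs = len(docs)
    df = Counter()
    for doc in docs:
        df.update(set(doc))  # count each term at most once per document
    vectors = []
    for doc in docs:
        tf = Counter(doc)
        vectors.append({term: count * math.log(n_docs / df[term]) for term, count in tf.items()})
    return vectors


def top_keywords(vector, k=3):
    """Return the k highest-weighted terms, breaking ties alphabetically."""
    ranked = sorted(vector.items(), key=lambda kv: (-kv[1], kv[0]))
    return [term for term, _ in ranked[:k]]


docs = [["topic", "model", "lsi", "model"], ["neural", "network", "model"], ["topic", "graph"]]
vectors = tfidf_vectors(docs)
print(top_keywords(vectors[0]))  # ['lsi', 'model', 'topic']
```

`lsi` ranks first in the first toy document because it occurs in only one document; a term present in every document gets weight 0 and can never be a keyword.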

Based on the full English Wikipedia (over 4 million articles; more than 9 GB of corpus compressed).

With the LSI-based course index built, let's take Professor Andrew Ng's Machine Learning course as an example. It is on line 211 of our coursera_corpus file, i.e.:

>>> print(courses_name[210])

Machine Learning

Now we can use the LSI model to map this course into the 10-topic space and compute its similarity to the other courses:

>>> ml_course = texts[210]

>>> ml_bow = dictionary.doc2bow(ml_course)

>>> ml_lsi = lsi[ml_bow]

>>> print(ml_lsi)

[(0, 8.3270084238788673), (1, 0.91295652151975082), (2, -0.28296075112669405), (3, 0.0011599008827843801), (4, -4.1820134980024255), (5, -0.37889856481054851), (6, 2.0446999575052125), (7, 2.3297944485200031), (8, -0.32875594265388536), (9, -0.30389668455507612)]

>>> sims = index[ml_lsi]

>>> sort_sims = sorted(enumerate(sims), key=lambda item: -item[1])
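What `lsi[ml_bow]` and `index[ml_lsi]` compute can be written out with the standard LSI formulation (the post itself does not spell this out). Here $X$ is the term-document matrix the model was trained on, $U_k, \Sigma_k, V_k$ its top-$k$ singular vectors and values ($k = 10$ here), $q$ the course's term vector and $d_j$ the $j$-th course's term vector. If I read gensim's `LsiModel` correctly, its default projection is the unscaled $U_k^\top q$ (a `scaled` option additionally applies $\Sigma_k^{-1}$), and `MatrixSimilarity` then takes the cosine:

```latex
\begin{aligned}
X &\approx U_k \Sigma_k V_k^{\top}, \qquad k = 10 \\
\hat{q} &= U_k^{\top} q, \qquad \hat{d}_j = U_k^{\top} d_j \\
\operatorname{sim}(q, d_j) &= \frac{\hat{q}^{\top} \hat{d}_j}{\lVert \hat{q} \rVert \, \lVert \hat{d}_j \rVert}
\end{aligned}
```

Each entry of `sims` is one $\operatorname{sim}(q, d_j)$, which is why the query course scores exactly 1.0 against itself.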

Take the top 10 courses ranked by similarity:

>>> print(sort_sims[0:10])

[(210, 1.0), (174, 0.97812241), (238, 0.96428639), (203, 0.96283489), (63, 0.9605484), (189, 0.95390636), (141, 0.94975704), (184, 0.94269753), (111, 0.93654782), (236, 0.93601125)]

The first course is the query itself:

>>> print(courses_name[210])

Machine Learning

The second is the machine learning course by Pedro Domingos, another heavyweight on Coursera:

>>> print(courses_name[174])

Machine Learning

The third is the Probabilistic Graphical Models course by Professor Daphne Koller, Coursera's other co-founder and likewise a heavyweight:

>>> print(courses_name[238])

Probabilistic Graphical Models

The fourth is the neural networks course by Geoffrey Hinton, another giant of the field; some students call it a must-take course for deep learning.

>>> print(courses_name[203])

Neural Networks for Machine Learning
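The listing above shows the top hit is the query course itself, which is why the script further down skips it with `sort_sims[1:11]`. That slice assumes the query sorts first; if another document also scores 1.0 (an exact duplicate, say), the query could land at a later position and a duplicate would be dropped instead. A minimal sketch that excludes the query by index and uses `heapq.nlargest` instead of sorting every score (`top_k_similar` is a hypothetical helper; `sims` stands for the array returned by `index[ml_lsi]`):

```python
import heapq


def top_k_similar(sims, query_index, k=10):
    """Return the k (doc_index, score) pairs with the highest scores, skipping the query."""
    candidates = ((i, score) for i, score in enumerate(sims) if i != query_index)
    # nlargest returns results in descending order of score
    return heapq.nlargest(k, candidates, key=lambda item: item[1])


# Made-up scores; document 1 is the query
print(top_k_similar([0.2, 1.0, 0.9, 0.5], query_index=1, k=2))  # [(2, 0.9), (3, 0.5)]
```

For a few hundred courses the speed difference is negligible; the main benefit is that the query is excluded explicitly rather than by position.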

Feeding the text into the LSI topic model, end to end:

    import logging
    from collections import Counter
    from itertools import chain

    from gensim import corpora, models, similarities
    from nltk.corpus import stopwords
    from nltk.stem.lancaster import LancasterStemmer
    from nltk.tokenize import word_tokenize

    logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)

    # Format the text: records in the file 'aaa' are separated by blank lines,
    # and the first line of each record is the title, prefixed with '#*'
    courses = []
    courses_name = []
    record = []
    with open('aaa', encoding='utf-8') as f:
        lines = [line.strip() for line in f] + ['']  # trailing '' flushes the last record
    for line in lines:
        if line:
            record.append(line)
        elif record:
            courses.append(' '.join(record))
            courses_name.append(record[0].strip('#*'))
            record = []
    print(courses_name[0:3])

    # Tokenize and lowercase (needs NLTK's tokenizer data)
    texts_tokenized = [[word.lower() for word in word_tokenize(document)] for document in courses]
    print(texts_tokenized[0])

    # Remove stopwords (needs NLTK's stopwords data)
    english_stopwords = set(stopwords.words('english'))
    texts_filtered_stopwords = [[word for word in document if word not in english_stopwords]
                                for document in texts_tokenized]
    print(texts_filtered_stopwords[0])

    # Remove punctuation
    english_punctuations = [',', '.', ':', ';', '?', '(', ')', '[', ']', '&', '!', '*', '@', '#', '$', '%']
    texts_filtered = [[word for word in document if word not in english_punctuations]
                      for document in texts_filtered_stopwords]
    print(texts_filtered[0])

    # Stem each word
    st = LancasterStemmer()
    texts_stemmed = [[st.stem(word) for word in document] for document in texts_filtered]
    print(texts_stemmed[0])

    # Remove low-frequency stems (those occurring at most twice in the whole corpus)
    stem_counts = Counter(chain.from_iterable(texts_stemmed))
    rare_stems = {stem for stem, count in stem_counts.items() if count <= 2}
    texts = [[stem for stem in text if stem not in rare_stems] for text in texts_stemmed]
    print(texts[0])

    # Build the dictionary and the bag-of-words corpus
    dictionary = corpora.Dictionary(texts)
    corpus = [dictionary.doc2bow(text) for text in texts]

    # Compute TF-IDF
    tfidf = models.TfidfModel(corpus)
    corpus_tfidf = tfidf[corpus]

    # Train a 10-topic LSI model on the TF-IDF corpus
    lsi = models.LsiModel(corpus_tfidf, id2word=dictionary, num_topics=10)

    # Build the index; documents go through TF-IDF first, as during training
    index = similarities.MatrixSimilarity(lsi[corpus_tfidf], num_features=lsi.num_topics)

    # Take the 16th course as the query
    ml_course = texts[15]

    # Convert it to a bag-of-words vector
    ml_bow = dictionary.doc2bow(ml_course)

    # Map the course into the 10-topic LSI space and compute its similarity to the other courses
    ml_lsi = lsi[tfidf[ml_bow]]
    print(ml_lsi)
    sims = index[ml_lsi]
    sort_sims = sorted(enumerate(sims), key=lambda item: -item[1])
    print(sort_sims[1:11])  # top 10, skipping position 0 (the query course itself)
    #print courses_name[23
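The NLTK pipeline above needs NLTK's tokenizer and stopword data. For quick experiments without them, a rough dependency-free approximation is sketched below, assuming a regex tokenizer, a tiny hand-picked stopword list and no stemming; `preprocess`, `STOPWORDS` and `min_count` are hypothetical names, and the output will not match the NLTK version token for token:

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list; NLTK's English list is much longer
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "on", "with", "is", "this"}


def preprocess(documents, min_count=3):
    """Lowercase, tokenize, drop stopwords and punctuation, then drop rare tokens.

    The regex keeps only runs of letters and digits, so punctuation disappears during
    tokenization. Tokens whose total corpus count is below min_count are removed,
    similar in spirit to the low-frequency filter in the pipeline above.
    """
    tokenized = [
        [tok for tok in re.findall(r"[a-z0-9]+", doc.lower()) if tok not in STOPWORDS]
        for doc in documents
    ]
    counts = Counter(tok for doc in tokenized for tok in doc)
    return [[tok for tok in doc if counts[tok] >= min_count] for doc in tokenized]


docs = ["The topic model.", "A topic model, again!", "Topic graphs and topic model"]
print(preprocess(docs))  # [['topic', 'model'], ['topic', 'model'], ['topic', 'topic', 'model']]
```

The resulting token lists can be passed to `corpora.Dictionary` exactly like `texts` in the script.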
