Kubernetes in Practice: Deploying a Highly Available Cluster

Posted by leozhanggg


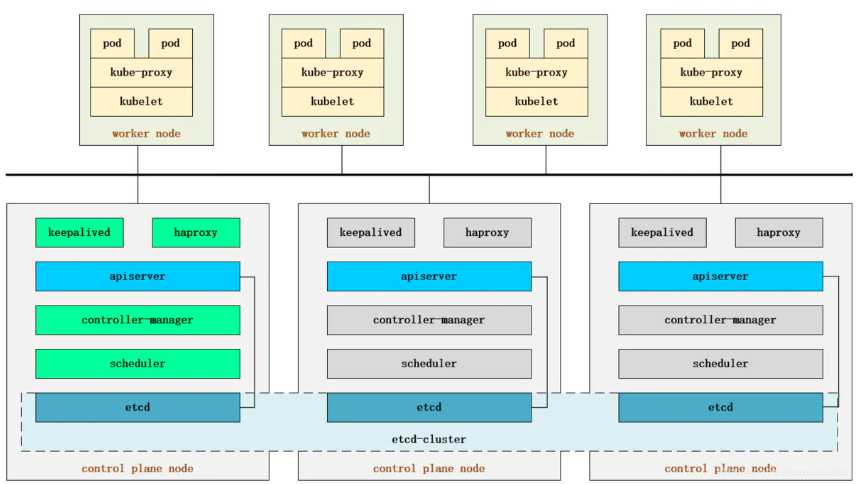

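A Kubernetes master node runs the following components:

- kube-apiserver: the single entry point for all resource operations; it also provides authentication, authorization, access control, and API registration and discovery.

- kube-scheduler: handles resource scheduling, placing Pods onto suitable machines according to the configured scheduling policy.

- kube-controller-manager: maintains cluster state, for example failure detection, auto scaling, and rolling updates.

- etcd: a distributed key-value store developed by CoreOS on top of Raft; it can be used for service discovery, shared configuration, and consistency guarantees (such as database leader election and distributed locks).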
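Because etcd is built on Raft, a write is committed only after a majority of members acknowledge it, which is why the rest of this post uses three master nodes. The arithmetic can be sketched in shell; the function names `quorum` and `fault_tolerance` are illustrative and not part of any etcd tooling.

```shell
# Majority needed for a Raft cluster of N members: floor(N/2) + 1.
quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Number of members that can fail while the cluster still has a majority.
fault_tolerance() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 2 3 5; do
    echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

Note that two members tolerate no more failures than one, so an odd member count is the usual choice.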

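High availability:

- kube-scheduler and kube-controller-manager can run as multiple instances. Leader election picks one working process, and the other instances stay blocked on standby.

- kube-apiserver can run as multiple instances, but the other components need a single, unified address through which to reach it.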
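To see which instance currently holds the leader lock, one option is to read the leader-election record that kube-scheduler and kube-controller-manager keep in `kube-system`. The sketch below assumes the record is stored in the `control-plane.alpha.kubernetes.io/leader` annotation of an Endpoints object and is a JSON string with a `holderIdentity` field, which I believe holds for v1.17. The helper name `holder_identity` and the sample record are made up for illustration.

```shell
# Extract the holderIdentity value from a leader-election JSON record on stdin.
holder_identity() {
    sed -n 's/.*"holderIdentity":"\([^"]*\)".*/\1/p'
}

# Hypothetical sample record shaped like the annotation value (not real output).
sample='{"holderIdentity":"k8s-141_0000","leaseDurationSeconds":15,"leaderTransitions":2}'
printf '%s\n' "$sample" | holder_identity

# On a live cluster (assumption: the annotation exists on the Endpoints object):
# kubectl -n kube-system get endpoints kube-scheduler \
#   -o jsonpath='{.metadata.annotations.control-plane\.alpha\.kubernetes\.io/leader}' \
#   | holder_identity
```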

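We use HAProxy + Keepalived to expose kube-apiserver through a virtual IP, which provides both high availability and load balancing. In detail:

- Keepalived provides the virtual IP (VIP) through which the apiserver is served.

- HAProxy listens on the Keepalived VIP.

- Nodes that run Keepalived and HAProxy are called LB (load balancer) nodes.

- Keepalived runs in a one-master, multiple-backup mode, so at least two LB nodes are required.

- While running, Keepalived periodically checks the state of the local HAProxy process. If it detects that HAProxy is unhealthy, it triggers a new master election and the VIP floats to the newly elected master, which keeps the VIP highly available.

- All components (apiserver, scheduler, and so on) reach the kube-apiserver service through the VIP on port 6444, where HAProxy listens.

- The apiserver's default port is 6443. HAProxy runs on the same master nodes, so to avoid a port conflict it listens on 6444, and all other components send their apiserver requests to that port.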
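The health check that drives failover boils down to asking whether something answers on the HAProxy port. The following is a minimal sketch of such a probe, not the script shipped in the Keepalived image; `check_port` is a hypothetical helper, and it assumes `bash` and `timeout` (coreutils) are installed.

```shell
# Return 0 if a TCP connection to host:port succeeds within 2 seconds.
# Relies on bash's /dev/tcp pseudo-device, so bash must be available.
check_port() {
    timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Example: probe the local HAProxy listener used in this post.
if check_port 127.0.0.1 6444; then
    echo "HAProxy port 6444 is reachable"
else
    echo "HAProxy port 6444 is NOT reachable"
fi
```

The same helper can be pointed at the VIP (for example `check_port 192.168.17.100 6444`) from any other machine to confirm that the address is being served.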

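1) Basic installation:

- Set the hostname and name resolution

- Install dependency packages and common tools

- Disable swap, SELinux, and firewalld

- Tune the kernel parameters

- Load the IPVS kernel modules

- Install and deploy Docker

- Install and deploy Kubernetes

For these basic steps, see my other post >>> Kubernetes in Practice: Deploying a Cluster with kubeadm (v1.17.4)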
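For orientation, two of the steps above (disabling swap and tuning kernel parameters) can be sketched as follows. This is not taken from the linked post: the helper names are hypothetical, and the sysctl keys are the ones commonly set for Kubernetes networking.

```shell
# Comment out active swap entries in fstab-formatted text (stdin -> stdout),
# so that swap stays off after a reboot.
comment_out_swap() {
    sed -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/'
}

# Print kernel parameters commonly required for Kubernetes networking.
print_k8s_sysctl() {
    cat <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF
}

# Dry run on sample input; nothing on the system is modified here.
printf '%s\n' '/dev/mapper/centos-root / xfs defaults 0 0' \
              '/dev/mapper/centos-swap swap swap defaults 0 0' | comment_out_swap
print_k8s_sysctl

# To apply for real (as root), something like:
#   swapoff -a
#   comment_out_swap < /etc/fstab > /tmp/fstab.new && cp /tmp/fstab.new /etc/fstab
#   print_k8s_sysctl > /etc/sysctl.d/kubernetes.conf && sysctl --system
```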

2) Deploy HAProxy and Keepalived

1. Write `haproxy.cfg`. Adjust the parameters to your needs; the key change is the master IPs at the very bottom.

```
global
    log 127.0.0.1 local0
    log 127.0.0.1 local1 notice
    maxconn 4096
    #chroot /usr/share/haproxy
    #user haproxy
    #group haproxy
    daemon

defaults
    log     global
    mode    http
    option  httplog
    option  dontlognull
    retries 3
    option  redispatch
    timeout connect 5000
    timeout client  50000
    timeout server  50000

frontend stats-front
    bind *:8081
    mode http
    default_backend stats-back

frontend fe_k8s_6444
    bind *:6444
    mode tcp
    timeout client 1h
    log global
    option tcplog
    default_backend be_k8s_6443
    acl is_websocket hdr(Upgrade) -i WebSocket
    acl is_websocket hdr_beg(Host) -i ws

backend stats-back
    mode http
    balance roundrobin
    stats uri /haproxy/stats
    stats auth pxcstats:secret

backend be_k8s_6443
    mode tcp
    timeout queue 1h
    timeout server 1h
    timeout connect 1h
    log global
    balance roundrobin
    server k8s-master01 192.168.17.128:6443
    server k8s-master02 192.168.17.129:6443
    server k8s-master03 192.168.17.130:6443
```
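Since only the `server` lines at the bottom of `haproxy.cfg` change from cluster to cluster, they can be generated from the list of master IPs. A small sketch; `render_backend_servers` is a hypothetical helper, and the `k8s-masterNN` names simply mirror the ones used in the configuration.

```shell
# Print one HAProxy "server" line per master IP, all on the same API port.
# Usage: render_backend_servers PORT IP [IP...]
render_backend_servers() {
    port=$1
    shift
    i=1
    for ip in "$@"; do
        printf '    server k8s-master%02d %s:%s\n' "$i" "$ip" "$port"
        i=$((i + 1))
    done
}

render_backend_servers 6443 192.168.17.128 192.168.17.129 192.168.17.130
```

Appending the `check` keyword to each generated line would additionally enable HAProxy's own health checks for the backends; the original configuration does not do this.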

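2. Write the startup script. Change the master IPs, the virtual IP, and the name of the network interface that carries the virtual IP.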

```shell
#!/bin/bash

# IP addresses of the three master nodes (edit)
MasterIP1=192.168.17.128
MasterIP2=192.168.17.129
MasterIP3=192.168.17.130
# Default kube-apiserver port
MasterPort=6443

# Virtual IP address (edit)
VIRTUAL_IP=192.168.17.100
# Network interface that carries the virtual IP (edit)
INTERFACE=ens33
# Netmask length of the virtual IP
NETMASK_BIT=24
# HAProxy service port
CHECK_PORT=6444
# Router ID
RID=10
# Virtual router ID
VRID=160
# Default IPv4 multicast address
MCAST_GROUP=224.0.0.18

echo "docker run haproxy-k8s..."
#CURDIR=$(cd `dirname $0`; pwd)
cp haproxy.cfg /etc/kubernetes/
docker run -dit --restart=always --name haproxy-k8s \
    -p "$CHECK_PORT:$CHECK_PORT" \
    -e MasterIP1="$MasterIP1" \
    -e MasterIP2="$MasterIP2" \
    -e MasterIP3="$MasterIP3" \
    -e MasterPort="$MasterPort" \
    -v /etc/kubernetes/haproxy.cfg:/usr/local/etc/haproxy/haproxy.cfg \
    wise2c/haproxy-k8s
echo

echo "docker run keepalived-k8s..."
docker run -dit --restart=always --name=keepalived-k8s \
    --net=host --cap-add=NET_ADMIN \
    -e INTERFACE="$INTERFACE" \
    -e VIRTUAL_IP="$VIRTUAL_IP" \
    -e NETMASK_BIT="$NETMASK_BIT" \
    -e CHECK_PORT="$CHECK_PORT" \
    -e RID="$RID" \
    -e VRID="$VRID" \
    -e MCAST_GROUP="$MCAST_GROUP" \
    wise2c/keepalived-k8s
```
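A typo in the variables of the startup script only surfaces once the containers are running, so a quick pre-flight check can help. The helpers below are illustrative and not part of the wise2c images; they rely on the fact that a VRRP virtual router ID must lie between 1 and 255.

```shell
# Return 0 if the argument is an integer between 1 and 255 (a valid VRRP VRID).
valid_vrid() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;
    esac
    [ "$1" -ge 1 ] && [ "$1" -le 255 ]
}

# Return 0 if the argument looks like a dotted-quad IPv4 address.
valid_ipv4() {
    octet='(25[0-5]|2[0-4][0-9]|1[0-9][0-9]|[1-9]?[0-9])'
    echo "$1" | grep -Eq "^($octet\.){3}$octet\$"
}

VIRTUAL_IP=192.168.17.100
VRID=160
if valid_ipv4 "$VIRTUAL_IP" && valid_vrid "$VRID"; then
    echo "parameters look sane"
else
    echo "check VIRTUAL_IP and VRID"
fi
```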

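3. Upload both files to every master node, then run the script there. (The sample output in this post was captured on hosts named k8s-141 to k8s-143, so its addresses differ from the 192.168.17.128 to 192.168.17.130 used in the configuration examples.)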

```text
[root@k8s-141 ~]# docker ps | grep k8s
d53e93ef4cd4   wise2c/keepalived-k8s   "/usr/bin/keepalived…"   7 hours ago   Up 3 minutes                            keepalived-k8s
028d18b75c3e   wise2c/haproxy-k8s      "/docker-entrypoint.…"   7 hours ago   Up 3 minutes   0.0.0.0:6444->6444/tcp   haproxy-k8s
```

3) Initialize the master node

```shell
# Generate the default init configuration, then edit it;
# the key change is adding the high-availability settings
kubeadm config print init-defaults > kubeadm-config.yaml
vim kubeadm-config.yaml
```

```yaml
---
apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  # IP address of this master node
  advertiseAddress: 192.168.17.128
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  # Hostname of this master node
  name: k8s-128
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
# Default certificate directory
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
# High-availability VIP address and HAProxy port
controlPlaneEndpoint: "192.168.17.100:6444"
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
# Image repository
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
# Kubernetes version
kubernetesVersion: v1.17.4
networking:
  dnsDomain: cluster.local
  # Pod subnet (must match the network plugin and must not overlap other networks)
  podSubnet: 10.11.0.0/16
  serviceSubnet: 10.96.0.0/12
scheduler: {}
---
# Enable IPVS mode
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs
```
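The one setting that ties this file to the load balancer is `controlPlaneEndpoint`, which has to equal the Keepalived VIP plus the HAProxy port. A small sketch for double-checking it before running `kubeadm init`; the helper name `control_plane_endpoint` is made up, and the parsing assumes the key sits unindented on a single line, as in the configuration shown in this post.

```shell
# Print the controlPlaneEndpoint value from a kubeadm config file,
# with surrounding quotes removed.
control_plane_endpoint() {
    sed -n 's/^controlPlaneEndpoint:[[:space:]]*//p' "$1" | tr -d "\"'" | head -n 1
}

VIRTUAL_IP=192.168.17.100
CHECK_PORT=6444
CONFIG=kubeadm-config.yaml

if [ -f "$CONFIG" ]; then
    actual=$(control_plane_endpoint "$CONFIG")
    if [ "$actual" = "$VIRTUAL_IP:$CHECK_PORT" ]; then
        echo "controlPlaneEndpoint matches the VIP"
    else
        echo "controlPlaneEndpoint is '$actual', expected $VIRTUAL_IP:$CHECK_PORT"
    fi
fi
```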

```shell
# Initialize the first master node
kubeadm init --config=kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

# As the init log instructs, copy the generated admin.conf to .kube/config
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```

4) Join the remaining master nodes and the worker nodes, then deploy the network

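```shell
# On each remaining master node: run the control-plane join command printed in the init log
# (--control-plane is the current name of the older --experimental-control-plane flag)
kubeadm join 192.168.17.100:6444 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:56d53268517... \
    --control-plane --certificate-key c4d1525b6cce4....

# On each worker node: run the worker join command printed in the init log
kubeadm join 192.168.17.100:6444 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:260796226d…………

# Apply the prepared network manifest
kubectl apply -f kube-flannel.yaml
```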
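If the init log is no longer at hand, the value for `--discovery-token-ca-cert-hash` can be recomputed from the cluster CA certificate with the openssl pipeline described in the kubeadm documentation. The wrapper name `ca_cert_hash` is mine, and the sketch assumes an RSA CA key and an installed `openssl` binary.

```shell
# Print the SHA-256 hash of the public key in a CA certificate,
# in the "sha256:<hex>" form expected by kubeadm join.
ca_cert_hash() {
    hash=$(openssl x509 -pubkey -noout -in "$1" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //')
    echo "sha256:$hash"
}

# Default CA location on a kubeadm master node.
CA=/etc/kubernetes/pki/ca.crt
if [ -f "$CA" ]; then
    ca_cert_hash "$CA"
fi
```

The bootstrap token in the configuration expires after 24 hours (`ttl: 24h0m0s`); after that, `kubeadm token create --print-join-command` on a master prints a fresh worker join command.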

5) Check cluster health

```text
[root@k8s-141 ~]# kubectl get cs
NAME                 STATUS    MESSAGE             ERROR
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}
scheduler            Healthy   ok

[root@k8s-141 ~]# kubectl get nodes
NAME      STATUS     ROLES    AGE   VERSION
k8s-132   NotReady   <none>   52m   v1.17.4
k8s-141   Ready      master   75m   v1.17.4
k8s-142   Ready      master   72m   v1.17.4
k8s-143   Ready      master   72m   v1.17.4

[root@k8s-141 ~]# kubectl get pod -n kube-system
NAME                              READY   STATUS    RESTARTS   AGE
coredns-9d85f5447-2x9lx           1/1     Running   1          28m
coredns-9d85f5447-tl6qh           1/1     Running   1          28m
etcd-k8s-141                      1/1     Running   2          75m
etcd-k8s-142                      1/1     Running   1          72m
etcd-k8s-143                      1/1     Running   1          73m
kube-apiserver-k8s-141            1/1     Running   2          75m
kube-apiserver-k8s-142            1/1     Running   1          71m
kube-apiserver-k8s-143            1/1     Running   1          73m
kube-controller-manager-k8s-141   1/1     Running   3          75m
kube-controller-manager-k8s-142   1/1     Running   2          71m
kube-controller-manager-k8s-143   1/1     Running   2          73m
kube-flannel-ds-amd64-5h7xw       1/1     Running   0          52m
kube-flannel-ds-amd64-dpj5x       1/1     Running   3          58m
kube-flannel-ds-amd64-fnwwl       1/1     Running   1          58m
kube-flannel-ds-amd64-xlfrg       1/1     Running   2          58m
kube-proxy-2nh48                  1/1     Running   1          72m
kube-proxy-dvhps                  1/1     Running   1          73m
kube-proxy-grrmr                  1/1     Running   2          75m
kube-proxy-zjtlt                  1/1     Running   0          52m
kube-scheduler-k8s-141            1/1     Running   3          75m
kube-scheduler-k8s-142            1/1     Running   1          71m
kube-scheduler-k8s-143            1/1     Running   2          73m
```

Check the high-availability status

```text
[root@k8s-141 ~]# ipvsadm -ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.96.0.1:443 rr
  -> 192.168.17.141:6443          Masq    1      0          0
  -> 192.168.17.142:6443          Masq    1      0          0
  -> 192.168.17.143:6443          Masq    1      1          0
TCP  10.96.0.10:53 rr
  -> 10.11.1.6:53                 Masq    1      0          0
  -> 10.11.2.8:53                 Masq    1      0          0
TCP  10.96.0.10:9153 rr
  -> 10.11.1.6:9153               Masq    1      0          0
  -> 10.11.2.8:9153               Masq    1      0          0
UDP  10.96.0.10:53 rr
  -> 10.11.1.6:53                 Masq    1      0          126
  -> 10.11.2.8:53                 Masq    1      0          80

[root@k8s-141 ~]# kubectl -n kube-system get cm kubeadm-config -oyaml
apiVersion: v1
data:
  ClusterConfiguration: |
    apiServer:
      extraArgs:
        authorization-mode: Node,RBAC
      timeoutForControlPlane: 4m0s
    apiVersion: kubeadm.k8s.io/v1beta2
    certificatesDir: /etc/kubernetes/pki
    clusterName: kubernetes
    controlPlaneEndpoint: 192.168.17.100:6444
    controllerManager: {}
    dns:
      type: CoreDNS
    etcd:
      local:
        dataDir: /var/lib/etcd
    imageRepository: registry.aliyuncs.com/google_containers
    kind: ClusterConfiguration
    kubernetesVersion: v1.17.4
    networking:
      dnsDomain: cluster.local
      podSubnet: 10.11.0.0/16
      serviceSubnet: 10.96.0.0/12
    scheduler: {}
  ClusterStatus: |
    apiEndpoints:
      k8s-141:
        advertiseAddress: 192.168.17.141
        bindPort: 6443
      k8s-142:
        advertiseAddress: 192.168.17.142
        bindPort: 6443
      k8s-143:
        advertiseAddress: 192.168.17.143
        bindPort: 6443
    apiVersion: kubeadm.k8s.io/v1beta2
    kind: ClusterStatus
kind: ConfigMap
metadata:
  creationTimestamp: "2020-04-14T03:01:16Z"
  name: kubeadm-config
  namespace: kube-system
  resourceVersion: "824"
  selfLink: /api/v1/namespaces/kube-system/configmaps/kubeadm-config
  uid: d303c1d1-5664-4fe1-9feb-d3dcc701a1e0

[root@k8s-141 ~]# kubectl -n kube-system exec etcd-k8s-141 -- etcdctl \
> --endpoints=https://192.168.17.141:2379 \
> --cacert=/etc/kubernetes/pki/etcd/ca.crt \
> --cert=/etc/kubernetes/pki/etcd/server.crt \
> --key=/etc/kubernetes/pki/etcd/server.key endpoint health
https://192.168.17.141:2379 is healthy: successfully committed proposal: took = 7.50323ms

[root@k8s-141 ~]# ip a |grep ens33
2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000
    inet 192.168.17.141/24 brd 192.168.17.255 scope global noprefixroute dynamic ens33
```
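To find out which LB node currently owns the VIP, the `ip` output on each master can be searched for the virtual address. A sketch; `has_vip` is a hypothetical helper that reads `ip -4 addr` style output on stdin, and 192.168.17.100 and ens33 are the VIP and interface configured in the startup script.

```shell
# Return 0 if the given IPv4 address appears as an "inet" entry on stdin.
has_vip() {
    grep -qF "inet $1/"
}

VIRTUAL_IP=192.168.17.100
INTERFACE=ens33

# Run on each master; only the current Keepalived master should report the VIP.
if ip -4 addr show dev "$INTERFACE" 2>/dev/null | has_vip "$VIRTUAL_IP"; then
    echo "$(uname -n) holds the VIP $VIRTUAL_IP"
else
    echo "$(uname -n) does not hold the VIP $VIRTUAL_IP"
fi
```

Stopping the `haproxy-k8s` container on the node that holds the VIP and re-running the check on the other masters is one way to watch the failover happen.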

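Reference >>> Kubernetes High-Availability Cluster

Author: Leozhanggg

Source: https://www.cnblogs.com/leozhanggg/p/12697237.html

Copyright of this article is shared by the author and cnblogs (博客园). Reposting is welcome, but unless the author agrees otherwise, this notice must be kept and a clearly visible link to the original must be given on the article page; otherwise the author reserves the right to pursue legal liability.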
