AI Deep Learning: How to Implement Text Classification with TensorFlow 2.0?

Posted by peijz


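1. IMDB dataset

Download

from tensorflow import keras

imdb = keras.datasets.imdb

# Keep only the 10,000 most frequent words; text_y holds the test labels.
(train_x, train_y), (test_x, text_y) = keras.datasets.imdb.load_data(num_words=10000)

Explore the IMDB data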

print("Training entries: {}, labels: {}".format(len(train_x), len(train_y)))

print(train_x[0])

print('len: ', len(train_x[0]), len(train_x[1]))

Training entries: 25000, labels: 25000

[1, 14, 22, 16, 43, 530, 973, 1622, 1385, 65, 458, 4468, 66, 3941, 4, 173, 36, 256, 5, 25, 100, 43, 838, 112, 50, 670, 2, 9, 35, 480, 284, 5, 150, 4, 172, 112, 167, 2, 336, 385, 39, 4, 172, 4536, 1111, 17, 546, 38, 13, 447, 4, 192, 50, 16, 6, 147, 2025, 19, 14, 22, 4, 1920, 4613, 469, 4, 22, 71, 87, 12, 16, 43, 530, 38, 76, 15, 13, 1247, 4, 22, 17, 515, 17, 12, 16, 626, 18, 2, 5, 62, 386, 12, 8, 316, 8, 106, 5, 4, 2223, 5244, 16, 480, 66, 3785, 33, 4, 130, 12, 16, 38, 619, 5, 25, 124, 51, 36, 135, 48, 25, 1415, 33, 6, 22, 12, 215, 28, 77, 52, 5, 14, 407, 16, 82, 2, 8, 4, 107, 117, 5952, 15, 256, 4, 2, 7, 3766, 5, 723, 36, 71, 43, 530, 476, 26, 400, 317, 46, 7, 4, 2, 1029, 13, 104, 88, 4, 381, 15, 297, 98, 32, 2071, 56, 26, 141, 6, 194, 7486, 18, 4, 226, 22, 21, 134, 476, 26, 480, 5, 144, 30, 5535, 18, 51, 36, 28, 224, 92, 25, 104, 4, 226, 65, 16, 38, 1334, 88, 12, 16, 283, 5, 16, 4472, 113, 103, 32, 15, 16, 5345, 19, 178, 32]

len: 218 189

Build the dictionaries that map between ids and words

word_index = imdb.get_word_index()

word2id = {k:(v+3) for k, v in word_index.items()}

word2id['<PAD>'] = 0

word2id['<START>'] = 1

word2id['<UNK>'] = 2

word2id['<UNUSED>'] = 3

id2word = {v:k for k, v in word2id.items()}
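The `+3` shift is needed because ids 0 to 3 are reserved for special tokens, while `get_word_index()` starts counting words at 1. The following is a minimal sketch of that idea on a made-up three-word vocabulary; `toy_index`, `toy_word2id`, `toy_id2word` and `decode` are hypothetical names used only for illustration, and the ranks are not taken from the real IMDB index.

```python
# Toy stand-in for imdb.get_word_index(); the words and ranks are made up.
toy_index = {"the": 1, "film": 2, "brilliant": 3}

# Shift every rank by 3 so that ids 0-3 stay free for the special tokens.
toy_word2id = {word: rank + 3 for word, rank in toy_index.items()}
toy_word2id["<PAD>"] = 0
toy_word2id["<START>"] = 1
toy_word2id["<UNK>"] = 2
toy_word2id["<UNUSED>"] = 3

# Invert the mapping so id sequences can be decoded back into text.
toy_id2word = {idx: word for word, idx in toy_word2id.items()}


def decode(ids):
    """Map a list of ids to a space-separated string; unknown ids become '?'."""
    return " ".join(toy_id2word.get(i, "?") for i in ids)


decoded = decode([1, 4, 5, 2, 6, 0, 99])
print(decoded)
```

Without the shift, the most frequent words would collide with the `<START>` and `<UNK>` markers that already appear in the loaded reviews.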

def get_words(sent_ids):
    # Decode a list of ids into text; ids missing from the dictionary become '?'.
    return ' '.join([id2word.get(i, '?') for i in sent_ids])

sent = get_words(train_x[0])

print(sent)

<START> this film was just brilliant casting location scenery story direction everyone's really suited the part they played and you could just imagine being there robert <UNK> is an amazing actor and now the same being director <UNK> father came from the same scottish island as myself so i loved the fact there was a real connection with this film the witty remarks throughout the film were great it was just brilliant so much that i bought the film as soon as it was released for <UNK> and would recommend it to everyone to watch and the fly fishing was amazing really cried at the end it was so sad and you know what they say if you cry at a film it must have been good and this definitely was also <UNK> to the two little boy's that played the <UNK> of norman and paul they were just brilliant children are often left out of the <UNK> list i think because the stars that play them all grown up are such a big profile for the whole film but these children are amazing and should be praised for what they have done don't you think the whole story was so lovely because it was true and was someone's life after all that was shared with us all

2. Prepare the data

# Pad at the end of each sentence so that every review has length 256

train_x = keras.preprocessing.sequence.pad_sequences(
    train_x, value=word2id['<PAD>'],
    padding='post', maxlen=256
)

test_x = keras.preprocessing.sequence.pad_sequences(
    test_x, value=word2id['<PAD>'],
    padding='post', maxlen=256
)
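What `padding='post'` with `maxlen=256` does to a single review can be sketched in plain Python. This is a simplified illustration, not the Keras implementation: `pad_post` is a hypothetical helper, and it assumes the default `truncating='pre'` behaviour, where sequences longer than `maxlen` lose their first items.

```python
def pad_post(seq, maxlen, value=0):
    """Pad `seq` on the right with `value`, or keep only its last `maxlen` items."""
    if len(seq) >= maxlen:
        # Mirrors truncating='pre': drop items from the front of the sequence.
        return list(seq[len(seq) - maxlen:])
    # Mirrors padding='post': append the pad value until the length is maxlen.
    return list(seq) + [value] * (maxlen - len(seq))


short_seq = pad_post([1, 14, 22], maxlen=5)
long_seq = pad_post([1, 14, 22, 16, 43, 530], maxlen=5)
print(short_seq)
print(long_seq)
```

After this step every row has the same length, which is what lets the reviews be stacked into one integer array for the model.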

print(train_x[0])

print('len: ', len(train_x[0]), len(train_x[1]))

[ 1 14 22 16 43 530 973 1622 1385 65 458 4468 66 3941

4 173 36 256 5 25 100 43 838 112 50 670 2 9

35 480 284 5 150 4 172 112 167 2 336 385 39 4

172 4536 1111 17 546 38 13 447 4 192 50 16 6 147

2025 19 14 22 4 1920 4613 469 4 22 71 87 12 16

43 530 38 76 15 13 1247 4 22 17 515 17 12 16

626 18 2 5 62 386 12 8 316 8 106 5 4 2223

5244 16 480 66 3785 33 4 130 12 16 38 619 5 25

124 51 36 135 48 25 1415 33 6 22 12 215 28 77

52 5 14 407 16 82 2 8 4 107 117 5952 15 256

4 2 7 3766 5 723 36 71 43 530 476 26 400 317

46 7 4 2 1029 13 104 88 4 381 15 297 98 32

2071 56 26 141 6 194 7486 18 4 226 22 21 134 476

26 480 5 144 30 5535 18 51 36 28 224 92 25 104

4 226 65 16 38 1334 88 12 16 283 5 16 4472 113

103 32 15 16 5345 19 178 32 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0]

len: 256 256

3. Build the model

import tensorflow.keras.layers as layers

vocab_size = 10000

model = keras.Sequential()

model.add(layers.Embedding(vocab_size, 16))

model.add(layers.GlobalAveragePooling1D())

model.add(layers.Dense(16, activation='relu'))

model.add(layers.Dense(1, activation='sigmoid'))

model.summary()

model.compile(optimizer='adam',
              loss='binary_crossentropy',
              metrics=['accuracy'])
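The parameter counts that `model.summary()` reports for this architecture can be checked by hand. The sketch below only does the arithmetic; the variable names (`embedding_dim`, `hidden_units` and so on) are chosen here for illustration and do not come from the original code.

```python
vocab_size = 10000     # number of word ids, as passed to Embedding
embedding_dim = 16     # size of each word vector
hidden_units = 16      # width of the first Dense layer

# Embedding: one 16-dimensional vector per word id, no bias.
embedding_params = vocab_size * embedding_dim

# GlobalAveragePooling1D only averages over the sequence axis: no weights.
pooling_params = 0

# Dense layers: one weight per (input, unit) pair plus one bias per unit.
dense_params = embedding_dim * hidden_units + hidden_units
output_params = hidden_units * 1 + 1

total_params = embedding_params + pooling_params + dense_params + output_params
print(embedding_params, dense_params, output_params, total_params)
```

Almost all of the weights sit in the embedding table, so the vocabulary size is the main knob for the size of this model.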

Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
embedding (Embedding)        (None, None, 16)          160000
_________________________________________________________________
global_average_pooling1d (Gl (None, 16)                0
_________________________________________________________________
dense (Dense)                (None, 16)                272
_________________________________________________________________
dense_1 (Dense)              (None, 1)                 17
=================================================================
Total params: 160,289

Trainable params: 160,289

Non-trainable params: 0

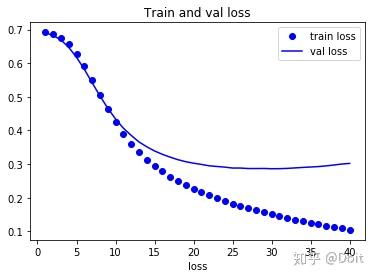

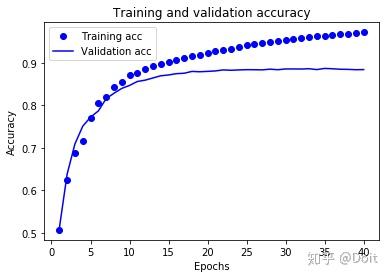

_________________________________________________________________

4. Model training and validation

x_val = train_x[:10000]

x_train = train_x[10000:]

y_val = train_y[:10000]

y_train = train_y[10000:]

history = model.fit(x_train, y_train,
                    epochs=40, batch_size=512,
                    validation_data=(x_val, y_val),
                    verbose=1)

result = model.evaluate(test_x, text_y)

print(result)
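The two numbers returned by `model.evaluate` are the loss and the accuracy chosen in `model.compile`. As a rough illustration of what they measure, here is a plain-Python sketch of binary cross-entropy and thresholded accuracy. The helper names, the `eps` clipping value and the 0.5 threshold are assumptions made for this example, and the labels and probabilities are invented rather than taken from the model.

```python
import math


def binary_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean of -[y*log(p) + (1-y)*log(1-p)] over all samples."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


def accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of samples whose thresholded prediction matches the label."""
    hits = sum(1 for y, p in zip(y_true, y_prob) if (p > threshold) == bool(y))
    return hits / len(y_true)


# Invented labels (1 = positive review) and sigmoid outputs.
labels = [1, 0, 1, 0]
probs = [0.9, 0.2, 0.4, 0.6]

toy_loss = binary_crossentropy(labels, probs)
toy_acc = accuracy(labels, probs)
print(toy_loss, toy_acc)
```

The loss penalises confident wrong predictions more heavily than the accuracy does, which is why the two values can move differently during training.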

Train on 15000 samples, validate on 10000 samples

Epoch 1/40

15000/15000 [==============================] - 1s 73us/sample - loss: 0.6919 - accuracy: 0.5071 - val_loss: 0.6901 - val_accuracy: 0.5101

...

Epoch 40/40

15000/15000 [==============================] - 1s 45us/sample - loss: 0.1046 - accuracy: 0.9721 - val_loss: 0.3022 - val_accuracy: 0.8843

25000/25000 [==============================] - 1s 22us/sample - loss: 0.3216 - accuracy: 0.8729

[0.32155542838573453, 0.87292]

5. View the loss and accuracy curves over the epochs

import matplotlib.pyplot as plt

history_dict = history.history

history_dict.keys()

acc = history_dict['accuracy']

val_acc = history_dict['val_accuracy']

loss = history_dict['loss']

val_loss = history_dict['val_loss']

epochs = range(1, len(acc)+1)

# 'bo' draws blue dots for the training curve, 'b' a solid blue line for validation
plt.plot(epochs, loss, 'bo', label='train loss')

plt.plot(epochs, val_loss, 'b', label='val loss')

plt.title('Train and val loss')

plt.xlabel('Epochs')

plt.ylabel('loss')

plt.legend()

plt.show()

plt.clf() # clear figure

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.xlabel('Epochs')

plt.ylabel('Accuracy')

plt.legend()

plt.show()
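The same history can also be inspected numerically instead of visually. The sketch below looks for the epoch with the lowest validation loss; `toy_history` is a hypothetical stand-in for `history.history` with invented values, so the result says nothing about the actual run above.

```python
# Invented per-epoch values standing in for history.history.
toy_history = {
    "loss": [0.69, 0.50, 0.35, 0.25, 0.18],
    "val_loss": [0.69, 0.52, 0.40, 0.38, 0.41],
}

toy_val_loss = toy_history["val_loss"]

# Index of the smallest validation loss; epochs are numbered from 1.
best_index = min(range(len(toy_val_loss)), key=toy_val_loss.__getitem__)
best_epoch = best_index + 1

# Gap between validation and training loss at that epoch.
gap = toy_val_loss[best_index] - toy_history["loss"][best_index]
print(best_epoch, round(gap, 2))
```

If the training loss keeps falling after the validation loss has started to rise, that is the usual sign that further epochs mostly fit the training set.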
