The Most Detailed, Easiest-to-Follow PyTorch CNN Case Study: Recognizing Handwritten Digits

Posted pythonlearner


First, let's look at the task: given an image of a handwritten digit, identify which digit it is.

dataset

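Let's start with the PyTorch Dataset class. I explained what a Dataset class is and how it works in "Learning PyTorch from Scratch, Lesson 2: The Dataset Class". Here is the one used for this task: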

class MNIST_data(Dataset):
    """MNIST data set"""

    def __init__(self, file_path,
                 transform=transforms.Compose([transforms.ToPILImage(), transforms.ToTensor(),
                                               transforms.Normalize(mean=(0.5,), std=(0.5,))])
                 ):
        df = pd.read_csv(file_path)

        # n_pixels (784) is a global computed in the full code at the end of the post
        if len(df.columns) == n_pixels:
            # test data
            self.X = df.values.reshape((-1, 28, 28)).astype(np.uint8)[:, :, :, None]
            self.y = None
        else:
            # training data
            self.X = df.iloc[:, 1:].values.reshape((-1, 28, 28)).astype(np.uint8)[:, :, :, None]
            self.y = torch.from_numpy(df.iloc[:, 0].values)

        self.transform = transform

    def __len__(self):
        return len(self.X)

    def __getitem__(self, idx):
        if self.y is not None:
            return self.transform(self.X[idx]), self.y[idx]
        else:
            return self.transform(self.X[idx])

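- In __init__, file_path is where the data is read from. Different competitions store their data in different layouts, so adapt this part to your own data.

- The default transform does three things: it converts the array to a PIL Image, converts that to a tensor (ToTensor), and normalizes the image data.

- We first read the file into the DataFrame df. The image data is stored in the DataFrame, one image per row as 1×784 values, and self.X holds the images reshaped to 28×28 (28 × 28 = 784). For the training set, self.y holds the labels (0 to 9); for the test set it is None.

- __getitem__, which the DataLoader calls, applies the transform to the image data.

That completes the dataset.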
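To make the data layout and the default transform concrete, here is a minimal pure-Python sketch (it needs neither pandas nor PyTorch). It assumes a made-up training row, a label followed by 784 pixel values; `row_to_image` and `normalize` are hypothetical helper names that do not appear in the original code. The arithmetic mirrors ToTensor (scale 0-255 to [0, 1]) followed by Normalize(mean=0.5, std=0.5), which maps pixel values into [-1, 1].

```python
# Hypothetical training row: the label first, then 784 pixel values (0-255).
row = [7] + [0] * 392 + [255] * 392


def row_to_image(pixels, side=28):
    """Reshape a flat list of side*side pixels into a side x side grid."""
    assert len(pixels) == side * side
    return [pixels[r * side:(r + 1) * side] for r in range(side)]


def normalize(pixel, mean=0.5, std=0.5):
    """Scale 0-255 to [0, 1] (as ToTensor does), then apply (x - mean) / std."""
    return (pixel / 255.0 - mean) / std


label, pixels = row[0], row[1:]
image = row_to_image(pixels)

print(label)                         # 7
print(len(image), len(image[0]))     # 28 28
print(normalize(0), normalize(255))  # -1.0 1.0
```

The real Dataset does the same thing for all rows at once with NumPy reshapes; the sketch only shows the per-image bookkeeping.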

Loading the data

batch_size = 64

train_dataset = MNIST_data('../input/train.csv', transform=transforms.Compose(
    [transforms.ToPILImage(), RandomRotation(degrees=20), RandomShift(3),
     transforms.ToTensor(), transforms.Normalize(mean=(0.5,), std=(0.5,))]))
test_dataset = MNIST_data('../input/test.csv')

train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size, shuffle=True)
test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size, shuffle=False)

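First, what goes into transforms.Compose()?

- transforms.ToPILImage()

- RandomRotation(degrees=20)

- RandomShift(3)

- transforms.ToTensor()

- transforms.Normalize(mean=(0.5,), std=(0.5,))

The first, fourth and fifth need no explanation; almost every CNN image pipeline has them. Of the second and third, RandomRotation rotates the image by a random angle within ±degrees, and RandomShift translates it by a random offset.

The torchvision version this code was written for did not ship rotation or shift transforms, so they are defined by hand as classes. (Current torchvision releases include transforms.RandomRotation and transforms.RandomAffine.) The code below was copied from GitHub and can be used as-is without modification, so bookmark it for next time: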

class RandomRotation(object):
    """
    https://github.com/pytorch/vision/tree/master/torchvision/transforms
    Rotate the image by angle.

    Args:
        degrees (sequence or float or int): Range of degrees to select from.
            If degrees is a number instead of sequence like (min, max), the range of degrees
            will be (-degrees, +degrees).
        resample ({PIL.Image.NEAREST, PIL.Image.BILINEAR, PIL.Image.BICUBIC}, optional):
            An optional resampling filter.
            See http://pillow.readthedocs.io/en/3.4.x/handbook/concepts.html#filters
            If omitted, or if the image has mode "1" or "P", it is set to PIL.Image.NEAREST.
        expand (bool, optional): Optional expansion flag.
            If true, expands the output to make it large enough to hold the entire rotated image.
            If false or omitted, make the output image the same size as the input image.
            Note that the expand flag assumes rotation around the center and no translation.
        center (2-tuple, optional): Optional center of rotation.
            Origin is the upper left corner.
            Default is the center of the image.
    """

    def __init__(self, degrees, resample=False, expand=False, center=None):
        if isinstance(degrees, numbers.Number):
            if degrees < 0:
                raise ValueError("If degrees is a single number, it must be positive.")
            self.degrees = (-degrees, degrees)
        else:
            if len(degrees) != 2:
                raise ValueError("If degrees is a sequence, it must be of len 2.")
            self.degrees = degrees

        self.resample = resample
        self.expand = expand
        self.center = center

    @staticmethod
    def get_params(degrees):
        """Get parameters for ``rotate`` for a random rotation.

        Returns:
            sequence: params to be passed to ``rotate`` for random rotation.
        """
        angle = np.random.uniform(degrees[0], degrees[1])

        return angle

    def __call__(self, img):
        """
            img (PIL Image): Image to be rotated.

        Returns:
            PIL Image: Rotated image.
        """

        def rotate(img, angle, resample=False, expand=False, center=None):
            """Rotate the image by angle and then (optionally) translate it by (n_columns, n_rows)

            Args:
                img (PIL Image): PIL Image to be rotated.
                angle ({float, int}): In degrees, counter-clockwise.
                resample ({PIL.Image.NEAREST, PIL.Image.BILINEAR, PIL.Image.BICUBIC}, optional):
                    An optional resampling filter.
                    See http://pillow.readthedocs.io/en/3.4.x/handbook/concepts.html#filters
                    If omitted, or if the image has mode "1" or "P", it is set to PIL.Image.NEAREST.
                expand (bool, optional): Optional expansion flag.
                    If true, expands the output image to make it large enough to hold the entire rotated image.
                    If false or omitted, make the output image the same size as the input image.
                    Note that the expand flag assumes rotation around the center and no translation.
                center (2-tuple, optional): Optional center of rotation.
                    Origin is the upper left corner.
                    Default is the center of the image.
            """
            return img.rotate(angle, resample, expand, center)

        angle = self.get_params(self.degrees)

        return rotate(img, angle, self.resample, self.expand, self.center)


class RandomShift(object):
    def __init__(self, shift):
        self.shift = shift

    @staticmethod
    def get_params(shift):
        """Get parameters for a random shift.

        Returns:
            tuple: horizontal and vertical shift, each drawn uniformly from [-shift, shift].
        """
        hshift, vshift = np.random.uniform(-shift, shift, size=2)

        return hshift, vshift

    def __call__(self, img):
        hshift, vshift = self.get_params(self.shift)

        # Affine coefficients (a, b, c, d, e, f): output (x, y) is read from input (x + hshift, y + vshift)
        return img.transform(img.size, Image.AFFINE, (1, 0, hshift, 0, 1, vshift), resample=Image.BICUBIC, fill=1)
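The six numbers passed to the affine transform in RandomShift are easier to read with a toy example. For an affine tuple (a, b, c, d, e, f), Pillow fills each output pixel (x, y) from input position (a·x + b·y + c, d·x + e·y + f). The sketch below is a simplified pure-Python stand-in, not Pillow itself: it assumes integer shifts and nearest-neighbour lookup (the original uses fractional shifts with bicubic resampling), and `shift_image` is a hypothetical name.

```python
def shift_image(image, hshift, vshift, fill=0):
    """Nearest-neighbour version of the affine map (1, 0, hshift, 0, 1, vshift).

    Each output pixel (x, y) is read from input position (x + hshift, y + vshift);
    positions that fall outside the input are set to `fill`.
    """
    height, width = len(image), len(image[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            src_x, src_y = x + hshift, y + vshift
            inside = 0 <= src_x < width and 0 <= src_y < height
            row.append(image[src_y][src_x] if inside else fill)
        out.append(row)
    return out


# A 4x4 toy image with a single bright pixel at column 2, row 1.
image = [[0, 0, 0, 0],
         [0, 0, 9, 0],
         [0, 0, 0, 0],
         [0, 0, 0, 0]]

shifted = shift_image(image, hshift=1, vshift=-1)
for row in shifted:
    print(row)
```

Because the coefficients describe where each output pixel is read from, a positive hshift moves the content to the left and a negative vshift moves it down: the bright pixel ends up at column 1, row 2.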

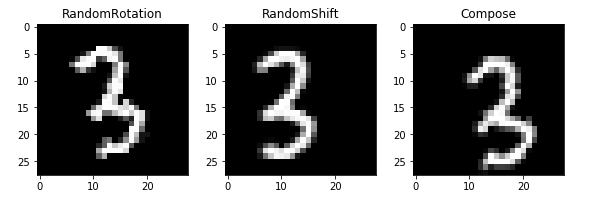

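The figure above visualizes the transforms in action. The first panel shows rotation, the second a random shift, and the third the two combined (rotation followed by shift).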

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()

        # Convolutional feature extractor: 1x28x28 -> 64x7x7
        self.features = nn.Sequential(
            nn.Conv2d(1, 32, kernel_size=3, stride=1, padding=1),
            nn.BatchNorm2d(32),
            nn.ReLU(inplace=True),
            nn.Conv2d(32, 32, kernel_size=3, stride=1, padding=1),
            nn.BatchNorm2d(32),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(32, 64, kernel_size=3, padding=1),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2)
        )

        # Fully connected classifier: 64*7*7 features -> 10 class scores
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(64 * 7 * 7, 512),
            nn.BatchNorm1d(512),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(512, 512),
            nn.BatchNorm1d(512),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(512, 10),
        )

        # Weight initialization for the convolutional part
        for m in self.features.children():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

        # Weight initialization for the classifier
        for m in self.classifier.children():
            if isinstance(m, nn.Linear):
                # xavier_uniform_ is the in-place replacement for the deprecated xavier_uniform
                nn.init.xavier_uniform_(m.weight)
            elif isinstance(m, nn.BatchNorm1d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 64*7*7)
        x = self.classifier(x)

        return x

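On to building the model. Do you remember the three essentials of building a model?

Learning PyTorch from Scratch, Lesson 5: The Three Essentials of Building a PyTorch Model

The model is built from blocks of a Conv2d layer, then a batch-normalization layer, then a ReLU activation. After the convolutional part come the linear (fully connected) layers.

The first Conv2d argument, 1, means the input image has one channel (the images are grayscale; for RGB images it would be 3). The second is the number of output channels, 32. The kernel size is 3, the stride is 1 and the padding is 1. For details, see:

nn.Conv2d Parameters in PyTorch: What Channels Mean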
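The 64 * 7 * 7 input size of the first Linear layer follows from these parameters. As a sanity check, the sketch below traces the spatial size through the feature extractor using the standard output-size formula floor((size + 2·padding − kernel) / stride) + 1. It is plain Python, and `conv_out` is a hypothetical helper name, not a PyTorch function.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 28                                             # MNIST images are 28x28
size = conv_out(size, kernel=3, stride=1, padding=1)  # Conv2d(1, 32)   -> 28
size = conv_out(size, kernel=3, stride=1, padding=1)  # Conv2d(32, 32)  -> 28
size = conv_out(size, kernel=2, stride=2)             # MaxPool2d       -> 14
size = conv_out(size, kernel=3, stride=1, padding=1)  # Conv2d(32, 64)  -> 14
size = conv_out(size, kernel=3, stride=1, padding=1)  # Conv2d(64, 64)  -> 14
size = conv_out(size, kernel=2, stride=2)             # MaxPool2d       -> 7

flattened = 64 * size * size
print(size, flattened)  # 7 3136
```

A 3×3 kernel with padding 1 and stride 1 keeps the size unchanged, so only the two pooling layers shrink the image, halving it each time.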

Optimizer

model = Net()

optimizer = optim.Adam(model.parameters(), lr=0.003)

criterion = nn.CrossEntropyLoss()

exp_lr_scheduler = lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)

if torch.cuda.is_available():
    model = model.cuda()
    criterion = criterion.cuda()

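The optimizer chosen here is Adam, and the model's parameters are passed to it.

Learning PyTorch from Scratch, Lesson 8: The PyTorch Optimizer Base Class

criterion defines the loss function, here nn.CrossEntropyLoss.

torch.optim.lr_scheduler provides ways to change the learning rate as the epochs go by.

StepLR multiplies the learning rate by gamma every step_size epochs. With step_size=7 and gamma=0.1, the learning rate drops to one tenth of its value every 7 epochs.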
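The resulting schedule can be written in closed form. The sketch below is plain Python rather than a call into PyTorch, and `step_lr` is a hypothetical helper name. It assumes the scheduler is stepped once per epoch and is not combined with other schedulers, in which case the learning rate at a given epoch is initial_lr · gamma^(epoch // step_size).

```python
def step_lr(initial_lr, epoch, step_size=7, gamma=0.1):
    """Learning rate after `epoch` scheduler steps under a StepLR-style decay."""
    return initial_lr * gamma ** (epoch // step_size)


# Values used in this post: lr=0.003, step_size=7, gamma=0.1
for epoch in (0, 6, 7, 13, 14, 21):
    print(epoch, step_lr(0.003, epoch))
```

Epochs 0 to 6 train at 0.003, epochs 7 to 13 at roughly 0.0003, epochs 14 to 20 at roughly 0.00003, and so on.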

if torch.cuda.is_available():
    model = model.cuda()
    criterion = criterion.cuda()

This checks whether a GPU is available and, if so, moves the model and the loss function onto it for training.

train

def train(epoch):
    model.train()

    for batch_idx, (data, target) in enumerate(train_loader):
        # Tensors can be used directly; the Variable wrapper is no longer needed
        if torch.cuda.is_available():
            data = data.cuda()
            target = target.cuda()

        optimizer.zero_grad()
        output = model(data)
        loss = criterion(output, target)

        loss.backward()
        optimizer.step()

        if (batch_idx + 1) % 100 == 0:
            # loss.item() replaces loss.data[0], which fails on 0-dim tensors in current PyTorch
            print('Train Epoch: {} [{}/{} ({:.0f}%)] Loss: {:.6f}'.format(
                epoch, (batch_idx + 1) * len(data), len(train_loader.dataset),
                100. * (batch_idx + 1) / len(train_loader), loss.item()))

    # Step the scheduler once per epoch, after the optimizer updates (PyTorch >= 1.1)
    exp_lr_scheduler.step()

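The loop takes batches of images and labels from train_loader and, if a GPU is available, moves them from CPU memory to GPU memory. It then zeroes the optimizer's gradients, runs the model to get the prediction output, computes the loss, backpropagates the loss, and lets the optimizer update the parameters.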
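To show what the "compute the loss" step measures, here is a pure-Python sketch of cross-entropy for a single sample. nn.CrossEntropyLoss takes raw scores (logits) and a class index and, by default, averages over the batch; for one sample the value is −log(softmax(logits)[target]). The function name `cross_entropy` and the example logits are made up for illustration.

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


peaked = [0.0, 0.0, 0.0, 10.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]

uniform = cross_entropy([0.0] * 10, target=3)  # no preference among 10 digits
confident = cross_entropy(peaked, target=3)    # high score on the correct digit
wrong = cross_entropy(peaked, target=5)        # high score on the wrong digit

print(uniform, confident, wrong)
```

An untrained network that scores all ten digits equally has a loss of ln(10), about 2.30. The loss approaches 0 as the correct class dominates and grows large when the model is confidently wrong, which is a useful reference when reading the printed training loss.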

Full code

# %% [markdown]

# # Convolutional Neural Network With PyTorch

# # ----------------------------------------------

# #

# # Here I only train for a few epochs as training takes couple of hours without GPU. But this network achieves 0.995 accuracy

# # after 50 epochs of training.

# %% [code]

import pandas as pd

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torch.optim import lr_scheduler

from torch.autograd import Variable

from torch.utils.data import DataLoader, Dataset

from torchvision import transforms

from torchvision.utils import make_grid

import math

import random

from PIL import Image, ImageOps, ImageEnhance

import numbers

import matplotlib.pyplot as plt

%matplotlib inline

# %% [markdown]

# ## Explore the Data

# %% [code]

train_df = pd.read_csv('../input/train.csv')

n_train = len(train_df)
n_pixels = len(train_df.columns) - 1
n_class = len(set(train_df['label']))

print('Number of training samples: {0}'.format(n_train))
print('Number of training pixels: {0}'.format(n_pixels))
print('Number of classes: {0}'.format(n_class))

# %% [code]

test_df = pd.read_csv('../input/test.csv')

n_test = len(test_df)
n_pixels = len(test_df.columns)

print('Number of test samples: {0}'.format(n_test))
print('Number of test pixels: {0}'.format(n_pixels))

# %% [markdown]

# ### Display some images

# %% [code]

random_sel = np.random.randint(n_train, size=8)

# .values replaces DataFrame.as_matrix(), which was removed in pandas 1.0
grid = make_grid(torch.Tensor((train_df.iloc[random_sel, 1:].values / 255.).reshape((-1, 28, 28))).unsqueeze(1), nrow=8)
plt.rcParams['figure.figsize'] = (16, 2)
plt.imshow(grid.numpy().transpose((1, 2, 0)))
plt.axis('off')
print(*list(train_df.iloc[random_sel, 0].values), sep=', ')

# %% [markdown]

# ### Histogram of the classes

# %% [code]

plt.rcParams['figure.figsize'] = (8, 5)
plt.bar(train_df['label'].value_counts().index, train_df['label'].value_counts())
plt.xticks(np.arange(n_class))
plt.xlabel('Class', fontsize=16)
plt.ylabel('Count', fontsize=16)
plt.grid(True, axis='y')

# %% [markdown]

# ## Data Loader

# %% [code]

class MNIST_data(Dataset):
    """MNIST data set"""

    def __init__(self, file_path,
                 transform=transforms.Compose([transforms.ToPILImage(), transforms.ToTensor(),
                                               transforms.Normalize(mean=(0.5,), std=(0.5,))])
                 ):
        df = pd.read_csv(file_path)

        # n_pixels (784) is the global computed in the data-exploration cell
        if len(df.columns) == n_pixels:
            # test data
            self.X = df.values.reshape((-1, 28, 28)).astype(np.uint8)[:, :, :, None]
            self.y = None
        else:
            # training data
            self.X = df.iloc[:, 1:].values.reshape((-1, 28, 28)).astype(np.uint8)[:, :, :, None]
            self.y = torch.from_numpy(df.iloc[:, 0].values)

        self.transform = transform

    def __len__(self):
        return len(self.X)

    def __getitem__(self, idx):
        if self.y is not None:
            return self.transform(self.X[idx]), self.y[idx]
        else:
            return self.transform(self.X[idx])

# %% [markdown]

# ### Random Rotation Transformation

# # Randomly rotate the image. Available in upcoming torchvision but not now.

# %% [code]

class RandomRotation(object):
    """
    https://github.com/pytorch/vision/tree/master/torchvision/transforms
    Rotate the image by angle.

    Args:
        degrees (sequence or float or int): Range of degrees to select from.
            If degrees is a number instead of sequence like (min, max), the range of degrees
            will be (-degrees, +degrees).
        resample ({PIL.Image.NEAREST, PIL.Image.BILINEAR, PIL.Image.BICUBIC}, optional):
            An optional resampling filter.
            See http://pillow.readthedocs.io/en/3.4.x/handbook/concepts.html#filters
            If omitted, or if the image has mode "1" or "P", it is set to PIL.Image.NEAREST.
        expand (bool, optional): Optional expansion flag.
            If true, expands the output to make it large enough to hold the entire rotated image.
            If false or omitted, make the output image the same size as the input image.
            Note that the expand flag assumes rotation around the center and no translation.
        center (2-tuple, optional): Optional center of rotation.
            Origin is the upper left corner.
            Default is the center of the image.
    """

    def __init__(self, degrees, resample=False, expand=False, center=None):
        if isinstance(degrees, numbers.Number):
            if degrees < 0:
                raise ValueError("If degrees is a single number, it must be positive.")
            self.degrees = (-degrees, degrees)
        else:
            if len(degrees) != 2:
                raise ValueError("If degrees is a sequence, it must be of len 2.")
            self.degrees = degrees

        self.resample = resample
        self.expand = expand
        self.center = center

    @staticmethod
    def get_params(degrees):
        """Get parameters for ``rotate`` for a random rotation.

        Returns:
            sequence: params to be passed to ``rotate`` for random rotation.
        """
        angle = np.random.uniform(degrees[0], degrees[1])

        return angle

    def __call__(self, img):
        """
            img (PIL Image): Image to be rotated.

        Returns:
            PIL Image: Rotated image.
        """

        def rotate(img, angle, resample=False, expand=False, center=None):
            """Rotate the image by angle and then (optionally) translate it by (n_columns, n_rows)

            Args:
                img (PIL Image): PIL Image to be rotated.
                angle ({float, int}): In degrees, counter-clockwise.
                resample ({PIL.Image.NEAREST, PIL.Image.BILINEAR, PIL.Image.BICUBIC}, optional):
                    An optional resampling filter.
                    See http://pillow.readthedocs.io/en/3.4.x/handbook/concepts.html#filters
                    If omitted, or if the image has mode "1" or "P", it is set to PIL.Image.NEAREST.
                expand (bool, optional): Optional expansion flag.
                    If true, expands the output image to make it large enough to hold the entire rotated image.
                    If false or omitted, make the output image the same size as the input image.
                    Note that the expand flag assumes rotation around the center and no translation.
                center (2-tuple, optional): Optional center of rotation.
                    Origin is the upper left corner.
                    Default is the center of the image.
            """
            return img.rotate(angle, resample, expand, center)

        angle = self.get_params(self.degrees)

        return rotate(img, angle, self.resample, self.expand, self.center)

# %% [markdown]

# ### Random Vertical and Horizontal Shift

# %% [code]

class RandomShift(object):
    def __init__(self, shift):
        self.shift = shift

    @staticmethod
    def get_params(shift):
        """Get parameters for a random shift.

        Returns:
            tuple: horizontal and vertical shift, each drawn uniformly from [-shift, shift].
        """
        hshift, vshift = np.random.uniform(-shift, shift, size=2)

        return hshift, vshift

    def __call__(self, img):
        hshift, vshift = self.get_params(self.shift)

        # Affine coefficients (a, b, c, d, e, f): output (x, y) is read from input (x + hshift, y + vshift)
        return img.transform(img.size, Image.AFFINE, (1, 0, hshift, 0, 1, vshift), resample=Image.BICUBIC, fill=1)

# %% [code]

np.random.uniform(-4, 4, size=2)

# %% [markdown]

# ## Load the Data into Tensors

# # For the training set, apply random rotation within the range of (-20, 20) degrees, shift by (-3, 3) pixels

# # and normalize pixel values to [-1, 1]. For the test set, only apply normalization.

# %% [code]

batch_size = 64

train_dataset = MNIST_data('../input/train.csv', transform=transforms.Compose(
    [transforms.ToPILImage(), RandomRotation(degrees=20), RandomShift(3),
     transforms.ToTensor(), transforms.Normalize(mean=(0.5,), std=(0.5,))]))
test_dataset = MNIST_data('../input/test.csv')

train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size, shuffle=True)
test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size, shuffle=False)

# %% [markdown]

# ### Visualize the Transformations

# %% [code]

rotate = RandomRotation(20)
shift = RandomShift(3)
composed = transforms.Compose([RandomRotation(20),
                               RandomShift(3)])

# Apply each of the above transforms on sample.
fig = plt.figure()
# .values replaces DataFrame.as_matrix(), which was removed in pandas 1.0
sample = transforms.ToPILImage()(train_df.iloc[65, 1:].values.reshape((28, 28)).astype(np.uint8)[:, :, None])
for i, tsfrm in enumerate([rotate, shift, composed]):
    transformed_sample = tsfrm(sample)

    ax = plt.subplot(1, 3, i + 1)
    plt.tight_layout()
    ax.set_title(type(tsfrm).__name__)
    ax.imshow(np.reshape(np.array(list(transformed_sample.getdata())), (-1, 28)), cmap='gray')

plt.show()

# %% [markdown]

# ## Network Structure

# %% [code]

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()

        # Convolutional feature extractor: 1x28x28 -> 64x7x7
        self.features = nn.Sequential(
            nn.Conv2d(1, 32, kernel_size=3, stride=1, padding=1),
            nn.BatchNorm2d(32),
            nn.ReLU(inplace=True),
            nn.Conv2d(32, 32, kernel_size=3, stride=1, padding=1),
            nn.BatchNorm2d(32),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(32, 64, kernel_size=3, padding=1),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=2, stride=2)
        )

        # Fully connected classifier: 64*7*7 features -> 10 class scores
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(64 * 7 * 7, 512),
            nn.BatchNorm1d(512),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(512, 512),
            nn.BatchNorm1d(512),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(512, 10),
        )

        # Weight initialization for the convolutional part
        for m in self.features.children():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

        # Weight initialization for the classifier
        for m in self.classifier.children():
            if isinstance(m, nn.Linear):
                # xavier_uniform_ is the in-place replacement for the deprecated xavier_uniform
                nn.init.xavier_uniform_(m.weight)
            elif isinstance(m, nn.BatchNorm1d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 64*7*7)
        x = self.classifier(x)

        return x

# %% [code]

model = Net()

optimizer = optim.Adam(model.parameters(), lr=0.003)

criterion = nn.CrossEntropyLoss()

exp_lr_scheduler = lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)

if torch.cuda.is_available():
    model = model.cuda()
    criterion = criterion.cuda()

# %% [markdown]

# ## Training and Evaluation

# %% [code]

def train(epoch):
    model.train()

    for batch_idx, (data, target) in enumerate(train_loader):
        # Tensors can be used directly; the Variable wrapper is no longer needed
        if torch.cuda.is_available():
            data = data.cuda()
            target = target.cuda()

        optimizer.zero_grad()
        output = model(data)
        loss = criterion(output, target)

        loss.backward()
        optimizer.step()

        if (batch_idx + 1) % 100 == 0:
            # loss.item() replaces loss.data[0], which fails on 0-dim tensors in current PyTorch
            print('Train Epoch: {} [{}/{} ({:.0f}%)] Loss: {:.6f}'.format(
                epoch, (batch_idx + 1) * len(data), len(train_loader.dataset),
                100. * (batch_idx + 1) / len(train_loader), loss.item()))

    # Step the scheduler once per epoch, after the optimizer updates (PyTorch >= 1.1)
    exp_lr_scheduler.step()

# %% [code]

def evaluate(data_loader):
    model.eval()
    loss = 0
    correct = 0

    # torch.no_grad() replaces the removed Variable(..., volatile=True)
    with torch.no_grad():
        for data, target in data_loader:
            if torch.cuda.is_available():
                data = data.cuda()
                target = target.cuda()

            output = model(data)

            # Sum per-sample losses (reduction='sum' replaces size_average=False)
            loss += F.cross_entropy(output, target, reduction='sum').item()

            pred = output.max(1, keepdim=True)[1]
            correct += pred.eq(target.view_as(pred)).cpu().sum().item()

    loss /= len(data_loader.dataset)

    print('\nAverage loss: {:.4f}, Accuracy: {}/{} ({:.3f}%)\n'.format(
        loss, correct, len(data_loader.dataset),
        100. * correct / len(data_loader.dataset)))

# %% [markdown]

# ### Train the network

# #

# # Reaches 0.995 accuracy on test set after 50 epochs

# %% [code]

n_epochs = 1

for epoch in range(n_epochs):
    train(epoch)
    evaluate(train_loader)

# %% [markdown]

# ## Prediction on Test Set

# %% [code]

def prediction(data_loader):
    model.eval()
    test_pred = torch.LongTensor()

    # torch.no_grad() replaces the removed Variable(..., volatile=True)
    with torch.no_grad():
        for i, data in enumerate(data_loader):
            if torch.cuda.is_available():
                data = data.cuda()

            output = model(data)

            pred = output.cpu().max(1, keepdim=True)[1]
            test_pred = torch.cat((test_pred, pred), dim=0)

    return test_pred

# %% [code]

test_pred = prediction(test_loader)

# %% [code]

out_df = pd.DataFrame(np.c_[np.arange(1, len(test_dataset) + 1)[:, None], test_pred.numpy()],
                      columns=['ImageId', 'Label'])

# %% [code] {"scrolled":true}

out_df.head()

# %% [code]

out_df.to_csv('submission.csv', index=False)
