Huawei Cloud AI: Diabetes Prediction with Deep Learning


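#!/usr/bin/env python2
# -*- coding: utf-8 -*-
"""
Created on Sat Sep 15 10:54:53 2018

@author: myhaspl
@email:[email protected]

Diabetes prediction (multi-layer network)
CSV columns: pregnancies, glucose, blood pressure, skin thickness, insulin, BMI, diabetes pedigree function, age, outcome
"""
import tensorflow as tf
import os

trainCount=10000      # number of training steps
inputNodeCount=8      # eight input features per sample
validateCount=50
sampleCount=200       # training batch size
testCount=10          # test batch size
outputNodeCount=1     # single output: diabetic or not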

g=tf.Graph()
with g.as_default():

    def getWeights(shape,wname):
        # Weights initialized from a truncated normal distribution
        weights=tf.Variable(tf.truncated_normal(shape,stddev=0.1),name=wname)
        return weights

    def getBias(shape,bname):
        # Biases initialized to the constant 0.1
        biases=tf.Variable(tf.constant(0.1,shape=shape),name=bname)
        return biases

    def inferenceInput(x):
        # Hidden ReLU layer followed by a linear output layer; returns logits
        layer1=tf.nn.relu(tf.add(tf.matmul(x,w1),b1))
        result=tf.add(tf.matmul(layer1,w2),b2)
        return result

    def inference(x):
        # Predicted probability of the positive class
        yp=inferenceInput(x)
        return tf.sigmoid(yp)

    def loss():
        # Mean sigmoid cross-entropy on the x and y placeholders
        yp=inferenceInput(x)
        return tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(labels=y,logits=yp))

    def train(learningRate,trainLoss,trainStep):
        trainOp=tf.train.AdamOptimizer(learningRate).minimize(trainLoss,global_step=trainStep)
        return trainOp

    def evaluate(x):
        # Threshold the probability at 0.5 to get a 0/1 prediction
        return tf.cast(inference(x)>0.5,tf.float32)

    def accuracy(x,y,count):
        # Fraction of predictions that match the labels (count is unused)
        yp=evaluate(x)
        return tf.reduce_mean(tf.cast(tf.equal(yp,y),tf.float32))

    def inputFromFile(fileName,skipLines=1):
        # Build the filename queue
        fileNameQueue=tf.train.string_input_producer([fileName])
        # Read one (key, value) record per CSV line, skipping the header
        reader=tf.TextLineReader(skip_header_lines=skipLines)
        key,value=reader.read(fileNameQueue)
        return value

    def getTestData(fileName,skipLines=1,n=10):
        # Build the filename queue
        testFileNameQueue=tf.train.string_input_producer([fileName])
        # Read one (key, value) record per CSV line, skipping the header
        testReader=tf.TextLineReader(skip_header_lines=skipLines)
        testKey,testValue=testReader.read(testFileNameQueue)
        # Nine float columns: eight features plus the outcome
        testRecordDefaults=[[1.],[1.],[1.],[1.],[1.],[1.],[1.],[1.],[1.]]
        testDecoded=tf.decode_csv(testValue,record_defaults=testRecordDefaults)
        pregnancies,glucose,bloodPressure,skinThickness,insulin,bmi,diabetespedigreefunction,age,outcome=tf.train.shuffle_batch(testDecoded,batch_size=n,capacity=1000,min_after_dequeue=1)
        # Stack the feature columns into an [n, 8] matrix and the labels into [n, 1]
        testFeatures=tf.transpose(tf.stack([pregnancies,glucose,bloodPressure,skinThickness,insulin,bmi,diabetespedigreefunction,age]))
        testY=tf.transpose([outcome])
        return (testFeatures,testY)

    def getNextBatch(n,values):
        # Decode CSV lines and return a shuffled batch of n samples
        recordDefaults=[[1.],[1.],[1.],[1.],[1.],[1.],[1.],[1.],[1.]]
        decoded=tf.decode_csv(values,record_defaults=recordDefaults)
        pregnancies,glucose,bloodPressure,skinThickness,insulin,bmi,diabetespedigreefunction,age,outcome=tf.train.shuffle_batch(decoded,batch_size=n,capacity=1000,min_after_dequeue=1)
        features=tf.transpose(tf.stack([pregnancies,glucose,bloodPressure,skinThickness,insulin,bmi,diabetespedigreefunction,age]))
        y=tf.transpose([outcome])
        return (features,y)

    with tf.name_scope("inputSample"):
        samples=inputFromFile("s3://myhaspl/tf_learn/diabetes.csv",1)
        inputDs=getNextBatch(sampleCount,samples)

    with tf.name_scope("validateSamples"):
        validateInputs=getNextBatch(validateCount,samples)

    with tf.name_scope("testSamples"):
        testInputs=getTestData("s3://myhaspl/tf_learn/diabetes_test.csv")

    with tf.name_scope("inputDatas"):
        x=tf.placeholder(dtype=tf.float32,shape=[None,inputNodeCount],name="input_x")
        y=tf.placeholder(dtype=tf.float32,shape=[None,outputNodeCount],name="input_y")

    with tf.name_scope("Variable"):
        # One hidden layer with 12 units; the scalar biases broadcast over each layer
        w1=getWeights([inputNodeCount,12],"w1")
        b1=getBias((),"b1")
        w2=getWeights([12,outputNodeCount],"w2")
        b2=getBias((),"b2")
        trainStep=tf.Variable(0,dtype=tf.int32,name="tcount",trainable=False)

    with tf.name_scope("train"):
        trainLoss=loss()
        trainOp=train(0.005,trainLoss,trainStep)

    init=tf.global_variables_initializer()

with tf.Session(graph=g) as sess:
    sess.run(init)
    # Start the queue-runner threads that feed the input pipelines
    coord = tf.train.Coordinator()
    threads = tf.train.start_queue_runners(coord=coord)
    while trainStep.eval()<trainCount:
        sampleX,sampleY=sess.run(inputDs)
        sess.run(trainOp,feed_dict={x:sampleX,y:sampleY})
        nowStep=sess.run(trainStep)
        if nowStep%500==0:
            # Note: this accuracy is measured on the current training batch;
            # validateInputs is built in the graph but never used.
            validate_acc=sess.run(accuracy(sampleX,sampleY,sampleCount))
            print "After %d steps => accuracy %g"%(nowStep,validate_acc)
        if nowStep>trainCount:
            break
    # Evaluate on one batch of test samples
    testInputX,testInputY=sess.run(testInputs)
    print "Test sample accuracy %g"%sess.run(accuracy(testInputX,testInputY,testCount))
    print testInputX,testInputY
    print sess.run(evaluate(testInputX))
    coord.request_stop()
    coord.join(threads)
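The script's loss() relies on tf.nn.sigmoid_cross_entropy_with_logits, and evaluate() thresholds the sigmoid output at 0.5. As a framework-free illustration of what those two steps compute per example, here is a minimal sketch in plain Python. The helper names and the sample logits are made up for this example and are not part of the original script; the stable form max(z, 0) - z*y + log(1 + exp(-|z|)) is the one given in the TensorFlow documentation for that op.

```python
import math

def sigmoid(z):
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sigmoid_cross_entropy(logit, label):
    """Numerically stable per-example loss for a logit z and a 0/1 label y."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))

def naive_cross_entropy(logit, label):
    """Textbook form; equal in exact arithmetic but fails for extreme logits."""
    p = sigmoid(logit)
    return -(label * math.log(p) + (1.0 - label) * math.log(1.0 - p))

# Hypothetical logits and labels, chosen only for illustration.
logits = [2.0, -1.0, 0.5]
labels = [1.0, 0.0, 1.0]

losses = [sigmoid_cross_entropy(z, y) for z, y in zip(logits, labels)]
mean_loss = sum(losses) / len(losses)           # what reduce_mean would return
predictions = [1.0 if sigmoid(z) > 0.5 else 0.0 for z in logits]

print(mean_loss)
print(predictions)
```

The stable form matters because the naive version evaluates log(1 - p) with p rounded to 1.0 when the logit is large, which raises a math domain error in plain Python.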

Output from one run:

After 500 steps => accuracy 0.67
After 1000 steps => accuracy 0.75
After 1500 steps => accuracy 0.81
After 2000 steps => accuracy 0.75
After 2500 steps => accuracy 0.775
After 3000 steps => accuracy 0.765
After 3500 steps => accuracy 0.84
After 4000 steps => accuracy 0.85
After 4500 steps => accuracy 0.77
After 5000 steps => accuracy 0.78
After 5500 steps => accuracy 0.775
After 6000 steps => accuracy 0.835
After 6500 steps => accuracy 0.84
After 7000 steps => accuracy 0.785
After 7500 steps => accuracy 0.805
After 8000 steps => accuracy 0.765
After 8500 steps => accuracy 0.83
After 9000 steps => accuracy 0.835
After 9500 steps => accuracy 0.78
After 10000 steps => accuracy 0.775
Test sample accuracy 0.7
[[1.00e+01 1.01e+02 7.60e+01 4.80e+01 1.80e+02 3.29e+01 1.71e-01 6.30e+01]
 [3.00e+00 7.80e+01 5.00e+01 3.20e+01 8.80e+01 3.10e+01 2.48e-01 2.60e+01]
 [2.00e+00 1.22e+02 7.00e+01 2.70e+01 0.00e+00 3.68e+01 3.40e-01 2.70e+01]
 [2.00e+00 8.80e+01 5.80e+01 2.60e+01 1.60e+01 2.84e+01 7.66e-01 2.20e+01]
 [1.00e+01 1.01e+02 7.60e+01 4.80e+01 1.80e+02 3.29e+01 1.71e-01 6.30e+01]
 [2.00e+00 1.22e+02 7.00e+01 2.70e+01 0.00e+00 3.68e+01 3.40e-01 2.70e+01]
 [1.00e+00 8.90e+01 6.60e+01 2.30e+01 9.40e+01 2.81e+01 1.67e-01 2.10e+01]
 [6.00e+00 1.48e+02 7.20e+01 3.50e+01 0.00e+00 3.36e+01 6.27e-01 5.00e+01]
 [1.00e+00 9.30e+01 7.00e+01 3.10e+01 0.00e+00 3.04e+01 3.15e-01 2.30e+01]
 [2.00e+00 1.22e+02 7.00e+01 2.70e+01 0.00e+00 3.68e+01 3.40e-01 2.70e+01]] [[0.]
 [1.]
 [0.]
 [0.]
 [0.]
 [0.]
 [0.]
 [1.]
 [0.]
 [0.]]

[[1.]

[0.]

[0.]

[0.]

[1.]

[0.]

[0.]

[1.]

[0.]

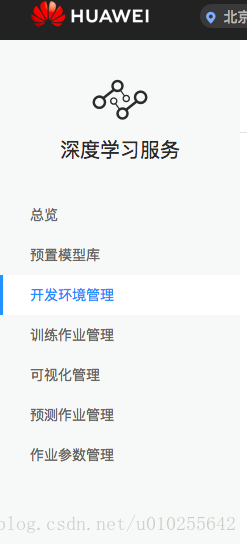

[0.]]感觉华为云中提供的深度学习服务,就是给你提供一个强大的服务器,然后,你自己编写代码。可能还提供了一些更多的功能
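The reported test accuracy can be checked by hand against the two arrays printed at the end of the run: the first ten-element column holds the true labels and the second holds the thresholded predictions. The sketch below copies those values and recomputes the accuracy along with a confusion count; the helper names are made up for this example.

```python
# Labels and predictions copied from the printed run output.
labels      = [0, 1, 0, 0, 0, 0, 0, 1, 0, 0]
predictions = [1, 0, 0, 0, 1, 0, 0, 1, 0, 0]

def accuracy(y_pred, y_true):
    """Fraction of positions where prediction and label agree."""
    matches = [1.0 if p == t else 0.0 for p, t in zip(y_pred, y_true)]
    return sum(matches) / len(matches)

def confusion_counts(y_pred, y_true):
    """Return (true_pos, false_pos, true_neg, false_neg) for 0/1 labels."""
    tp = sum(1 for p, t in zip(y_pred, y_true) if p == 1 and t == 1)
    fp = sum(1 for p, t in zip(y_pred, y_true) if p == 1 and t == 0)
    tn = sum(1 for p, t in zip(y_pred, y_true) if p == 0 and t == 0)
    fn = sum(1 for p, t in zip(y_pred, y_true) if p == 0 and t == 1)
    return tp, fp, tn, fn

test_accuracy = accuracy(predictions, labels)
counts = confusion_counts(predictions, labels)

print(test_accuracy)   # 0.7, matching the reported test accuracy
print(counts)          # (1, 2, 6, 1)
```

Seven of the ten predictions match the labels. Note that the printed feature batch contains repeated rows (one row appears three times and another twice), so this accuracy is effectively based on seven distinct samples; a plausible cause is that the input queue cycles through a small test file, although the post does not say so.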

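In addition, the service provides sample code for training on user-defined data.

One more concept worth introducing:

MoXing is the network model development API provided by the Huawei Cloud Deep Learning Service. Compared with native APIs such as TensorFlow and MXNet, the MoXing API makes model code simpler to write and automatically obtains high-performance distributed execution.

With MoXing, users only need to write the data input (input_fn) and model construction (model_fn) code to run any model with high performance on multiple GPUs and in distributed settings. MoXing-TensorFlow supports native TensorFlow, Keras, slim, and other APIs, and helps build models for image classification, object detection, generative adversarial networks, natural language processing, OCR, and more.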
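To make the input_fn / model_fn split concrete, here is a toy, framework-free sketch of the same pattern in plain Python. It is not MoXing code: run_training is a hypothetical driver written for this example, and the task (fitting y = 3x + 1 with mini-batch gradient descent) is chosen only because it is small enough to verify. The point is that the user supplies two functions and the driver owns the training loop, which is where a real framework could add multi-GPU or distributed execution.

```python
import random

def input_fn(batch_size, rng):
    """Data input: return one batch of (x, y) pairs sampled from y = 3x + 1."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(batch_size)]
    return [(x, 3.0 * x + 1.0) for x in xs]

def model_fn(batch, params):
    """Model construction: return the mean squared error and its gradients."""
    w, b = params
    n = float(len(batch))
    loss = sum((w * x + b - y) ** 2 for x, y in batch) / n
    grad_w = sum(2.0 * (w * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2.0 * (w * x + b - y) for x, y in batch) / n
    return loss, (grad_w, grad_b)

def run_training(input_fn, model_fn, steps, batch_size, learning_rate, seed=0):
    """Hypothetical driver: owns the loop, the optimizer step and the RNG."""
    rng = random.Random(seed)
    params = (0.0, 0.0)
    loss = None
    for _ in range(steps):
        batch = input_fn(batch_size, rng)
        loss, grads = model_fn(batch, params)
        # Plain gradient descent update on every parameter
        params = tuple(p - learning_rate * g for p, g in zip(params, grads))
    return params, loss

(w, b), final_loss = run_training(input_fn, model_fn, steps=2000,
                                  batch_size=50, learning_rate=0.1)
print(w, b, final_loss)
```

Because neither user function knows how the loop is executed, the driver could be swapped for a parallel one without touching input_fn or model_fn, which is the property the MoXing description emphasizes.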

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import tensorflow as tf
import moxing.tensorflow as mox

slim = tf.contrib.slim

# Define hyperparameters the native TensorFlow way
tf.flags.DEFINE_string('data_url', None, '')
tf.flags.DEFINE_string('train_dir', None, '')
flags = tf.flags.FLAGS

def train_my_model():

    def input_fn(run_mode, **kwargs):
        # Read the input dataset from TFRecord files
        keys_to_features = {
            'image/encoded': tf.FixedLenFeature((), tf.string, default_value=''),
            'image/format': tf.FixedLenFeature((), tf.string, default_value='raw'),
            'image/class/label': tf.FixedLenFeature(
                [1], tf.int64, default_value=tf.zeros([1], dtype=tf.int64)),
        }
        items_to_handlers = {
            'image': slim.tfexample_decoder.Image(shape=[28, 28, 1], channels=1),
            'label': slim.tfexample_decoder.Tensor('image/class/label', shape=[]),
        }
        # The dataset contains 60000 training images (file mnist_train.tfrecord)
        # and 10000 validation images (file mnist_test.tfrecord)
        dataset = mox.get_tfrecord(dataset_dir=flags.data_url,
                                   file_pattern='mnist_train.tfrecord' if run_mode == mox.ModeKeys.TRAIN else 'mnist_test.tfrecord',
                                   num_samples=60000 if run_mode == mox.ModeKeys.TRAIN else 10000,
                                   keys_to_features=keys_to_features,
                                   items_to_handlers=items_to_handlers,
                                   capacity=1000)
        image, label = dataset.get(['image', 'label'])
        # Convert pixel values to float and unify the image size
        image = tf.to_float(image)
        image = tf.image.resize_image_with_crop_or_pad(image, 28, 28)
        return image, label

    def model_fn(inputs, run_mode, **kwargs):
        # Unpack one batch of input data
        images, labels = inputs
        # Normalize the input images
        images = tf.subtract(images, 128.0)
        images = tf.div(images, 128.0)
        # Define the argument scope:
        # 1. The L2 regularization coefficient is 0 for all conv and fully connected layers
        # 2. All conv and fully connected layers initialize trainable variables from a truncated normal distribution
        # 3. All conv and fully connected layers use ReLU as the activation
        with slim.arg_scope(
                [slim.conv2d, slim.fully_connected],
                weights_regularizer=slim.l2_regularizer(scale=0.0),
                weights_initializer=tf.truncated_normal_initializer(stddev=0.1),
                activation_fn=tf.nn.relu):
            # Define the network
            net = slim.conv2d(images, 32, [5, 5])
            net = slim.max_pool2d(net, [2, 2], 2)
            net = slim.conv2d(net, 64, [5, 5])
            net = slim.max_pool2d(net, [2, 2], 2)
            net = slim.flatten(net)
            net = slim.fully_connected(net, 1024)
            # Dropout is hard-coded as active; this script only runs in TRAIN mode
            net = slim.dropout(net, 0.5, is_training=True)
            logits = slim.fully_connected(net, 10, activation_fn=None)
        labels_one_hot = slim.one_hot_encoding(labels, 10)
        # Define the cross-entropy loss
        loss = tf.losses.softmax_cross_entropy(
            logits=logits, onehot_labels=labels_one_hot,
            label_smoothing=0.0, weights=1.0)
        # Because the argument scope sets every L2 coefficient to 0, no L2 regularization terms are collected here
        regularization_losses = mox.get_collection(tf.GraphKeys.REGULARIZATION_LOSSES)
        if len(regularization_losses) > 0:
            regularization_loss = tf.add_n(regularization_losses)
            loss += regularization_loss
        # Define the evaluation metrics
        accuracy_top_1 = tf.reduce_mean(tf.cast(tf.nn.in_top_k(logits, labels, 1), tf.float32))
        accuracy_top_5 = tf.reduce_mean(tf.cast(tf.nn.in_top_k(logits, labels, 5), tf.float32))
        # model_fn must return a mox.ModelSpec
        return mox.ModelSpec(loss=loss,
                             log_info={'loss': loss, 'top1': accuracy_top_1, 'top5': accuracy_top_5})

    # Get a built-in optimizer
    optimizer_fn = mox.get_optimizer_fn('sgd', learning_rate=0.01)

    # Start training
    mox.run(input_fn=input_fn,
            model_fn=model_fn,
            optimizer_fn=optimizer_fn,
            run_mode=mox.ModeKeys.TRAIN,
            batch_size=50,
            log_dir=flags.train_dir,
            max_number_of_steps=2000,
            log_every_n_steps=10,
            save_summary_steps=50,
            save_model_secs=60)

if __name__ == '__main__':
    train_my_model()
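Two small steps in model_fn are easy to verify by hand: the pixel normalization (subtract 128, divide by 128) and the top-1 / top-5 accuracy metrics built on tf.nn.in_top_k. The following plain-Python sketch mirrors both on a tiny made-up batch with four classes. The function names and sample logits are assumptions for illustration, and ties between logits are broken by index order here, which may differ from TensorFlow's tie handling.

```python
def normalize_pixel(value):
    """Map a raw pixel in [0, 255] to roughly [-1, 1): (v - 128) / 128."""
    return (value - 128.0) / 128.0

def one_hot(label, num_classes):
    """Return a one-hot list with a 1.0 at position `label`."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]

def in_top_k(logits, label, k):
    """True if `label` is among the k classes with the largest logits."""
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    return label in ranked[:k]

def top_k_accuracy(batch_logits, labels, k):
    """Mean of the per-example in_top_k results over the batch."""
    hits = [1.0 if in_top_k(row, y, k) else 0.0
            for row, y in zip(batch_logits, labels)]
    return sum(hits) / len(hits)

# Hypothetical logits for three examples over four classes.
batch_logits = [
    [0.1, 2.0, 0.3, -1.0],   # highest logit: class 1
    [1.5, 0.2, 1.0, 0.0],    # highest logit: class 0, runner-up: class 2
    [0.0, 0.1, 0.2, 3.0],    # highest logit: class 3
]
labels = [1, 2, 0]

top1 = top_k_accuracy(batch_logits, labels, 1)   # only the first example hits
top2 = top_k_accuracy(batch_logits, labels, 2)   # the second example now hits too

print(normalize_pixel(0), normalize_pixel(128), normalize_pixel(255))
print(one_hot(2, 4))
print(top1, top2)
```

Top-k accuracy can only stay the same or increase as k grows, which is why the top-5 figure logged by the MoXing script is never lower than the top-1 figure.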