Learning MFS

Posted wuhg


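MooseFS (MFS) is a fault-tolerant, redundant distributed network file system. It spreads data across multiple physical servers, or across separate disks or partitions, and keeps several replicas of each piece of data. To a client or user, the whole cluster looks like a single resource, and file operations behave as they would on a Unix-like file system (ext3, ext4, NFS);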

https://moosefs.com/index.html

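Features:

Hierarchical directory tree;

Stores POSIX file attributes (permissions, last access time, modification time);

Supports special files: block devices, character devices, pipes, sockets, symbolic links and hard links;

Supports access control by IP address and password;

High reliability: each piece of data can have several replicas, stored on different hosts;

High scalability: storage capacity can be grown dynamically by adding hosts or disks (prefer putting a cache in front rather than expanding storage indiscriminately);

High fault tolerance: when configured, deleted files stay in a trash bin for a set period so they can be recovered;

High data consistency: a consistent snapshot of a file can be taken even while it is being written or read;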

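Advantages:

Lightweight, easy to configure and maintain;

Active development and community, plenty of documentation;

Low cost of expansion, with online expansion that does not affect running services;

Presented as an ordinary file system (for example, an image is stored on the chunkservers as binary chunk files but still appears as an image on the mounted MFS client);

Relatively high disk utilization; testing needs a fairly large amount of disk space;

The retention time for deleted files is configurable, which protects against losing accidentally deleted files or recovering them too late;

Load is balanced: reads and writes are spread across all servers;

The number of replicas per file is configurable (3 is the usual recommendation);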

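Disadvantages:

The master is a single point of failure (metadata is synchronized to a backup server, but recovery takes time and affects services). Workarounds: drbd+heartbeat or drbd+inotify; synchronization between master and backup is similar to MySQL master-slave replication;

The master needs a lot of memory (all metadata is loaded into RAM);

The backup (metalogger) copies metadata at long intervals (adjustable);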

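Use cases:

Large-scale, highly concurrent online data storage and access (small and large files);

Large-scale data processing such as log analysis; for small files where performance matters, do not use HDFS (Hadoop);

Distributed file systems in this space: Lustre; Ceph; GlusterFS; HDFS; MogileFS; FastDFS; FreeNAS; MooseFS;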

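MFS architecture (4 components):

master (managing server): the main server that manages the whole MFS. Only one master can be active at a time. Besides dispatching client requests, it stores the metadata of every file in the file system (size, attributes and path of files, directories, sockets, pipes, devices and so on). It resembles an LVS director, except that LVS dispatches requests purely by algorithm, whereas the master dispatches according to the metadata held in memory (which is also written to disk in real time);

backup (metadata backup server, or metalogger): there can be one or more. It backs up the master's metadata change logs (changelog_ml.*.mfs), so that when the master fails a new master can be brought up with a few simple steps. This resembles MySQL master-slave replication, except that, unlike a MySQL slave, it does not apply the data locally; it only receives the file-related metadata that the master records on writes;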

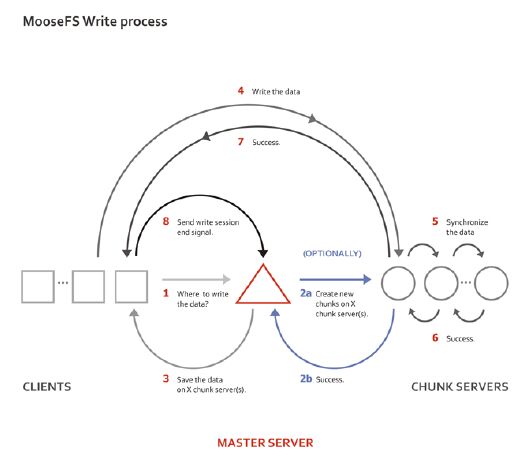

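data (data server, or chunk server): stores the actual file contents. This role can run on several physical servers, or on separate disks or partitions. When more than one replica is configured, data written to one data server is then replicated to other data servers according to an algorithm. It is comparable to the real servers (RS) in an LVS cluster;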

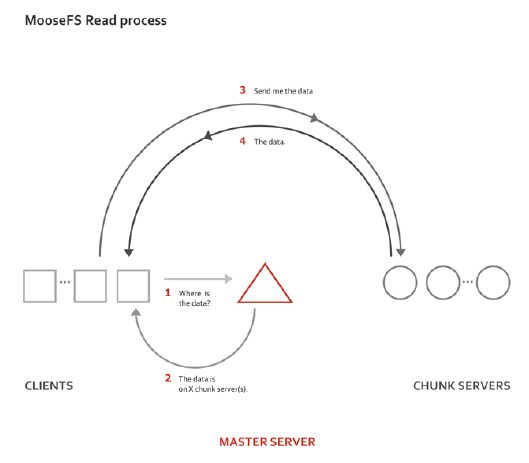

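client (client server): a host that mounts and uses MFS, i.e. the front-end application server that accesses the file system. The client first contacts the master to obtain a file's metadata, then uses that metadata to read or write the file contents on the data servers. The client mounts the file system through the FUSE mechanism;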

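High-stability requirements:

master (dual power supplies fed from separate A and B circuits, spread across racks where several are available; multiple disks in RAID 1 or RAID 10, or RAID 5, which reads well but writes slowly);

backup (if the backup is meant to take over when the master fails, give it the same hardware as the master; the alternative is heartbeat+drbd on the master);

data (all data servers should have disks of the same size, otherwise I/O is uneven; use at least 3 data servers in production);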

Procedure:

192.168.23.136 (mfsmaster)

192.168.23.137 (mfsbackup and mfsclient, two roles on one host)

192.168.23.138 (mfsdata)

[[email protected] ~]# uname -rm

2.6.32-431.el6.x86_64 x86_64

[[email protected] ~]# cat /etc/redhat-release

Red Hat Enterprise Linux Server release 6.5 (Santiago)

[[email protected] ~]# vim /etc/hosts #(the hosts file is identical on all three hosts)

192.168.23.136 mfsmaster

192.168.23.137 mfsbackup

192.168.23.138 mfsdata

mfsmaster-side:

[[email protected] ~]# groupadd mfs

[[email protected] ~]# useradd -g mfs -s /sbin/nologin mfs

[[email protected] ~]# yum -y install fuse-devel zlib-devel

[[email protected] ~]# tar xf mfs-1.6.27-5.tar.gz

[[email protected] ~]# cd mfs-1.6.27

[[email protected] mfs-1.6.27]# ./configure --help #(--disable-mfsmaster,--disable-mfschunkserver,--disable-mfsmount)

[[email protected] mfs-1.6.27]# ./configure --prefix=/ane/mfs-1.6.27 --with-default-user=mfs --with-default-group=mfs #(full install on every host; the roles differ only in which config files are used)

[[email protected] mfs-1.6.27]# make

[[email protected] mfs-1.6.27]# make install

[[email protected] mfs-1.6.27]# cd /ane

[[email protected] ane]# ln -sv mfs-1.6.27/ mfs

`mfs' -> `mfs-1.6.27/'

[[email protected] ane]# ll mfs/

total 20

drwxr-xr-x. 2 root root 4096 Apr 18 18:43 bin

drwxr-xr-x. 3 root root 4096 Apr 18 18:43 etc

drwxr-xr-x. 2 root root 4096 Apr 18 18:43 sbin

drwxr-xr-x. 4 root root 4096 Apr 18 18:43 share

drwxr-xr-x. 3 root root 4096 Apr 18 18:43 var

[[email protected] ane]# ll mfs/etc/mfs/

total 28

-rw-r--r--. 1 root root 548 Apr 18 18:43 mfschunkserver.cfg.dist

-rw-r--r--. 1 root root 4060 Apr 18 18:43 mfsexports.cfg.dist

-rw-r--r--. 1 root root 57 Apr 18 18:43 mfshdd.cfg.dist

-rw-r--r--. 1 root root 1023 Apr 18 18:43 mfsmaster.cfg.dist

-rw-r--r--. 1 root root 433 Apr 18 18:43 mfsmetalogger.cfg.dist

-rw-r--r--. 1 root root 404 Apr 18 18:43 mfsmount.cfg.dist

-rw-r--r--. 1 root root 1123 Apr 18 18:43 mfstopology.cfg.dist

[[email protected] ane]# ls mfs/bin

mfsappendchunks mfsdirinfo mfsgeteattr mfsmakesnapshot mfsrgettrashtime mfsseteattr mfssnapshot

mfscheckfile mfsfileinfo mfsgetgoal mfsmount mfsrsetgoal mfssetgoal mfstools

mfsdeleattr mfsfilerepair mfsgettrashtime mfsrgetgoal mfsrsettrashtime mfssettrashtime

[[email protected] ane]# ls mfs/sbin

mfscgiserv mfschunkserver mfsmaster mfsmetadump mfsmetalogger mfsmetarestore

[[email protected] ane]# cp mfs/etc/mfs/mfsmaster.cfg.dist mfs/etc/mfs/mfsmaster.cfg

[[email protected] ane]# vim mfs/etc/mfs/mfsmaster.cfg #(defaults are fine: 9419 is for master<-->metalogger, 9420 for master<-->chunkserver, 9421 for master<-->client)

……

# MATOML_LISTEN_HOST = *

# MATOML_LISTEN_PORT = 9419

# MATOML_LOG_PRESERVE_SECONDS = 600

# MATOCS_LISTEN_HOST = *

# MATOCS_LISTEN_PORT = 9420

# MATOCL_LISTEN_HOST = *

# MATOCL_LISTEN_PORT = 9421

……
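As a quick reference for the ports used throughout this walkthrough, here is a minimal lookup sketch. mfs_port_role is a hypothetical helper, not an MFS tool; 9419 to 9421 come from mfsmaster.cfg above, while 9422 (chunkserver) and 9425 (mfscgiserv) are the defaults that show up in the startup output further down.

```shell
# Hypothetical helper: map a default MFS port to the link it serves.
# Port numbers are the defaults seen in this walkthrough; a customized
# mfsmaster.cfg or mfschunkserver.cfg may use different values.
mfs_port_role() {
    case "$1" in
        9419) echo "master <-> metalogger" ;;
        9420) echo "master <-> chunkserver" ;;
        9421) echo "master <-> client" ;;
        9422) echo "chunkserver data port" ;;
        9425) echo "mfscgiserv web monitor" ;;
        *)    echo "unknown"; return 1 ;;
    esac
}

mfs_port_role 9419
```

This is handy when reading netstat output: any established connection to port 9420 on the master, for instance, belongs to a chunkserver.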

[[email protected] ane]# cp mfs/etc/mfs/mfsexports.cfg.dist mfs/etc/mfs/mfsexports.cfg

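[[email protected] ane]# vim mfs/etc/mfs/mfsexports.cfg

#* / rw,alldirs,maproot=0

* . rw #this entry is related to recovering accidentally deleted files

192.168.23.0/24 / rw,alldirs,mapall=mfs:mfs,password=passcode

Notes on mfsexports.cfg:

Column 1 (client address): a single IP; * for all IPs; an IP range such as f.f.f.f-t.t.t.t; ip/netmask; or ip/prefix length;

Column 2 (directory): / is the MFS root; . is the MFSMETA (meta) file system, the one mounted later with mfsmount -m to reach the trash;

Column 3 (options): ro shares read-only; rw shares read-write; alldirs allows mounting any subdirectory; maproot maps root to the given user (mapall, used above, maps every user); password sets the client password;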
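To make the three-column layout concrete, here is a small sketch that splits one mfsexports.cfg entry into its columns. describe_export is a hypothetical helper written for this article; it only does whitespace splitting and does not validate the options.

```shell
# Hypothetical helper: split one mfsexports.cfg entry into its three columns.
describe_export() {
    # read splits on whitespace and, unlike an unquoted $1, never
    # glob-expands the "*" used for "all addresses".
    printf '%s\n' "$1" | {
        read -r addr dir opts
        echo "address=$addr"
        echo "directory=$dir"
        echo "options=$opts"
    }
}

describe_export "192.168.23.0/24 / rw,alldirs,mapall=mfs:mfs,password=passcode"
```

Running it on the entry "* . rw" reports the directory as ".", i.e. the meta file system rather than a path under the MFS root.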

[[email protected] ane]# cd mfs/var/mfs/

[[email protected] mfs]# cp metadata.mfs.empty metadata.mfs

[[email protected] mfs]# vim /etc/profile.d/mfs.sh

export PATH=$PATH:/ane/mfs/bin:/ane/mfs/sbin

[[email protected] mfs]# . !$

. /etc/profile.d/mfs.sh

[[email protected] mfs]# mfsmaster -h

usage: mfsmaster [-vdu] [-t locktimeout] [-c cfgfile] [start|stop|restart|reload|test]

[[email protected] mfs]# mfsmaster start #(shut down with mfsmaster stop; if the process was killed and will not start normally, repair the metadata with mfsmetarestore)

working directory: /ane/mfs-1.6.27/var/mfs

lockfile created and locked

initializing mfsmaster modules ...

loading sessions ... file not found

if it is not fresh installation then you have to restart all active mounts !!!

exports file has been loaded

mfstopology configuration file (/ane/mfs-1.6.27/etc/mfstopology.cfg) not found - using defaults

loading metadata ...

create new empty filesystemmetadata file has been loaded

no charts data file - initializing empty charts

master <-> metaloggers module: listen on *:9419

master <-> chunkservers module: listen on *:9420

main master server module: listen on *:9421

mfsmaster daemon initialized properly

[[email protected] mfs]# tail -f /var/log/messages

Apr 18 22:21:13 localhost mfsmaster[54691]: set gid to 501

Apr 18 22:21:13 localhost mfsmaster[54691]: set uid to 501

Apr 18 22:21:13 localhost mfsmaster[54691]: can't load sessions, fopen error: ENOENT (No such file or directory)

Apr 18 22:21:13 localhost mfsmaster[54691]: exports file has been loaded

Apr 18 22:21:13 localhost mfsmaster[54691]: mfstopology configuration file (/ane/mfs-1.6.27/etc/mfstopology.cfg) not found - network topology not defined

Apr 18 22:21:13 localhost mfsmaster[54691]: create new empty filesystem

Apr 18 22:21:13 localhost mfsmaster[54691]: no charts data file - initializing empty charts

Apr 18 22:21:13 localhost mfsmaster[54691]: master <-> metaloggers module: listen on *:9419

Apr 18 22:21:13 localhost mfsmaster[54691]: master <-> chunkservers module: listen on *:9420

Apr 18 22:21:13 localhost mfsmaster[54691]: main master server module: listen on *:9421

Apr 18 22:21:13 localhost mfsmaster[54691]: open files limit: 5000

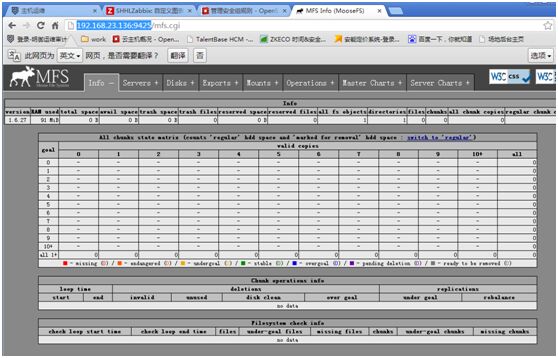

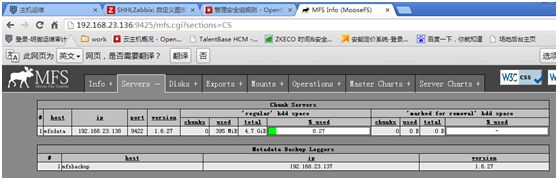

[[email protected] mfs]# mfscgiserv start #(the MFS graphical monitor, written in Python)

lockfile created and locked

starting simple cgi server (host: any , port: 9425 , rootpath: /ane/mfs-1.6.27/share/mfscgi)

[[email protected] mfs]# netstat -tnulp | grep :94

tcp 0 0 0.0.0.0:9419 0.0.0.0:* LISTEN 54691/mfsmaster

tcp 0 0 0.0.0.0:9420 0.0.0.0:* LISTEN 54691/mfsmaster

tcp 0 0 0.0.0.0:9421 0.0.0.0:* LISTEN 54691/mfsmaster

tcp 0 0 0.0.0.0:9425 0.0.0.0:* LISTEN 54806/python

mfsbackup-side:

Installation is the same as on mfsmaster;

[[email protected] ane]# cd mfs/etc/mfs/

[[email protected] mfs]# cp mfsmetalogger.cfg.dist mfsmetalogger.cfg

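[[email protected] mfs]# vim mfsmetalogger.cfg #(defaults are fine. META_DOWNLOAD_FREQ is how often the full metadata file is downloaded from the master; the default is 24 hours, i.e. once a day the metalogger fetches a metadata.mfs.back file. When mfsmaster fails, its metadata.mfs.back disappears, so restoring the whole file system means taking that file from the backup and replaying the changelog_ml_back.*.mfs logs on top of it. MASTER_HOST already resolves through the hosts file)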

# META_DOWNLOAD_FREQ = 24

# MASTER_HOST = mfsmaster

[[email protected] mfs]# telnet 192.168.23.136 9419

Trying 192.168.23.136...

Connected to 192.168.23.136.

Escape character is '^]'.

[[email protected] mfs]# vim /etc/profile.d/mfs.sh

export PATH=$PATH:/ane/mfs/bin:/ane/mfs/sbin

[[email protected] mfs]# . !$

. /etc/profile.d/mfs.sh

[[email protected] mfs]# mfsmetalogger start #(runs as a process only; it listens on no port)

working directory: /ane/mfs-1.6.27/var/mfs

lockfile created and locked

initializing mfsmetalogger modules ...

mfsmetalogger daemon initialized properly

[[email protected] mfs]# ll /ane/mfs/var/mfs/ #(location of the log files)

total 12

-rw-r-----. 1 mfs mfs 0 Apr 18 23:02 changelog_ml_back.0.mfs

-rw-r-----. 1 mfs mfs 0 Apr 18 23:02 changelog_ml_back.1.mfs

-rw-r--r--. 1 root root 8 Apr 18 22:47 metadata.mfs.empty

-rw-r-----. 1 mfs mfs 95 Apr 18 23:02 metadata_ml.mfs.back

-rw-r-----. 1 mfs mfs 10 Apr 18 23:04 sessions_ml.mfs

[[email protected] mfs]# netstat -an | grep ESTABLISHED

tcp 0 52 192.168.23.137:22 192.168.23.1:1555 ESTABLISHED

tcp 0 0 192.168.23.137:36622 192.168.23.136:9419 ESTABLISHED

mfsdata-side:

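Installation is the same as on mfsmaster;

Use at least 3 data servers in production;

[[email protected] ~]# df -h #(keep data on a dedicated disk; production setups usually use RAID 1, RAID 10 or RAID 5)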

……

/dev/sdb1 5.0G 138M 4.6G 3% /mfsdata

[[email protected] ~]# cd /ane/mfs/etc/mfs/

[[email protected] mfs]# cp mfschunkserver.cfg.dist mfschunkserver.cfg

[[email protected] mfs]# cp mfshdd.cfg.dist mfshdd.cfg

[[email protected] mfs]# vim mfschunkserver.cfg #(defaults are fine)

[[email protected] mfs]# vim mfshdd.cfg #(this file lists the mount points used for storage)

/mfsdata

[[email protected] mfs]# chown -R mfs.mfs /mfsdata/

[[email protected] mfs]# vim /etc/profile.d/mfs.sh

export PATH=$PATH:/ane/mfs/bin:/ane/mfs/sbin

[[email protected] mfs]# . !$

. /etc/profile.d/mfs.sh

[[email protected] mfs]# mfschunkserver start

working directory: /ane/mfs-1.6.27/var/mfs

lockfile created and locked

initializing mfschunkserver modules ...

hdd space manager: path to scan: /mfsdata/

hdd space manager: start background hdd scanning (searching for available chunks)

main server module: listen on *:9422

no charts data file - initializing empty charts

mfschunkserver daemon initialized properly

[[email protected] mfs]# ls /mfsdata/

00 0A 14 1E 28 32 3C 46 50 5A 64 6E 78 82 8C 96 A0 AA B4 BE C8 D2 DC E6 F0 FA

01 0B 15 1F 29 33 3D 47 51 5B……

[[email protected] mfs]# netstat -an | grep ESTABLISHED

tcp 0 52 192.168.23.138:22 192.168.23.1:1650 ESTABLISHED

tcp 0 0 192.168.23.138:53956 192.168.23.136:9420 ESTABLISHED

[[email protected] mfs]# df -h

Filesystem Size Used Avail Use% Mounted on

……

/dev/sdb1 5.0G 139M 4.6G 3% /mfsdata

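Note: the free space reported by df -h differs from what the web monitor shows by 256 MB. Once a data server has only 256 MB left, MFS stops storing data on it: the master requests space from data servers in units of at least 256 MB and stops requesting once less than that remains;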
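The 256 MB reservation is simple arithmetic; the sketch below shows it under the assumption that the reserve is a flat 256 MiB per data server, as this note describes. mfs_usable_mib is a hypothetical name and the input is the free space in MiB as df would report it.

```shell
# Hypothetical helper: space MFS will still use on a data server,
# assuming a flat 256 MiB reserve as described in the note above.
mfs_usable_mib() {
    avail=$1        # free space on the data disk, in MiB
    reserve=256     # MiB held back; at or below this nothing more is stored
    if [ "$avail" -le "$reserve" ]; then
        echo 0
    else
        echo $((avail - reserve))
    fi
}

mfs_usable_mib 4710   # roughly the 4.6G that df shows for /mfsdata
```

With about 4710 MiB free the result is 4454, which is in line with the gap between df and the web monitor.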

mfsclient-side (configured on mfsbackup):

[[email protected] mfs]# rpm -qa fuse #(#yum -y install fuse-devel)

fuse-2.8.3-4.el6.x86_64

[[email protected] mfs]# lsmod | grep fuse

fuse 73530 2

Note:

[[email protected] mfs]# echo "modprobe fuse" >> /etc/sysconfig/modules/fuse.modules #(loads fuse at boot; alternatively echo "modprobe fuse" >> /etc/rc.modules)

[[email protected] mfs]# chmod 755 !$

chmod 755 /etc/sysconfig/modules/fuse.modules

Note:

If you get "libfuse.so.2: cannot open shared object file ...":

#find / -name "libfuse.so.2"

#echo "/usr/local/lib" >> /etc/ld.so.conf #(ld.so.conf lists directories, so add the directory that find reported)

#ldconfig

[[email protected] mfs]# mkdir /mnt/mfs

[[email protected] mfs]# mfsmount -h

usage: mfsmount mountpoint [options]

-m --meta equivalent to '-o mfsmeta'

-H HOST equivalent to '-o mfsmaster=HOST'

-P PORT equivalent to '-o mfsport=PORT'

-p --password similar to '-o mfspassword=PASSWORD', but show prompt and ask user for password

-o mfspassword=PASSWORD authenticate to mfsmaster with password

[[email protected] mfs]# mfsmount /mnt/mfs/ -H 192.168.23.136 -o mfspassword=passcode #(pass the password with -o mfspassword=, or use -p to enter it interactively)

mfsmaster accepted connection with parameters: read-write,restricted_ip,map_all ; root mapped to mfs:mfs ; users mapped to root:root

[[email protected] mfs]# cd /mnt/mfs

[[email protected] mfs]# for i in `seq 10` ;do echo 123456 > test$i.txt ; done #(watch how changelog.0.mfs on the master and changelog_ml.0.mfs on the backup change)

[[email protected] mfs]# ls

test10.txt test1.txt test2.txt test3.txt test4.txt test5.txt test6.txt test7.txt test8.txt test9.txt

[[email protected] mfs]# find /mfsdata -type f #(how the data on mfsdata changed)

/mfsdata/04/chunk_0000000000000004_00000001.mfs

/mfsdata/.lock

/mfsdata/05/chunk_0000000000000005_00000001.mfs

/mfsdata/06/chunk_0000000000000006_00000001.mfs

/mfsdata/03/chunk_0000000000000003_00000001.mfs

/mfsdata/07/chunk_0000000000000007_00000001.mfs

/mfsdata/01/chunk_0000000000000001_00000001.mfs

/mfsdata/02/chunk_0000000000000002_00000001.mfs

/mfsdata/0A/chunk_000000000000000A_00000001.mfs

/mfsdata/08/chunk_0000000000000008_00000001.mfs

/mfsdata/09/chunk_0000000000000009_00000001.mfs
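The listing suggests a naming scheme: chunk_<16 hex digits of chunk id>_<8 hex digits of version>.mfs, placed in a subdirectory named after the low byte of the chunk id. The sketch below reproduces that pattern. It is inferred only from the paths above and the 00..FF directories seen earlier, so treat the layout as an assumption for this MFS version; chunk_path is a hypothetical helper.

```shell
# Hypothetical helper: rebuild the on-disk path of a chunk from its id and
# version, following the pattern visible in the find output above.
# Assumption: the subdirectory is the low byte of the chunk id in hex.
chunk_path() {
    # $1 = data directory, $2 = chunk id (decimal), $3 = version (decimal)
    printf '%s/%02X/chunk_%016X_%08X.mfs\n' "$1" $(( $2 % 256 )) "$2" "$3"
}

chunk_path /mfsdata 10 1   # prints /mfsdata/0A/chunk_000000000000000A_00000001.mfs
```

This makes it easier to go from the id and ver values shown by mfsfileinfo to the file on a data server.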

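Setting the number of replicas (run on mfsclient, i.e. mfsbackup):

Set the replica count (goal) at the very start, before the file system is in use;

Set it on directories under the mount point, not on the mount point itself;

Replicas are synchronized to the other mfsdata servers;

Related commands: mfssetgoal, mfsgetgoal, mfsfileinfo, mfscheckfile;

[[email protected] mfs]# mfssetgoal -h

usage: mfssetgoal [-nhHr] GOAL[-|+] name [name ...]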

-r - do it recursively

[[email protected] mfs]# mfsfileinfo test1.txt #(the default is 1 copy)

test1.txt:

chunk 0: 0000000000000001_00000001 / (id:1 ver:1)

copy 1: 192.168.23.138:9422

[[email protected] mfs]# mfsgetgoal test1.txt

test1.txt: 1

[[email protected] mfs]# pwd

/mnt/mfs

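[[email protected] mfs]# for i in `seq 5` ; do mkdir a$i ; done #(spread files across separate directories where possible; if one directory grows very large it can then be moved to its own cluster, which is impossible when everything sits in one place)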

[[email protected] mfs]# mfssetgoal -r 3 a1/

a1/:

inodes with goal changed: 1

inodes with goal not changed: 0

inodes with permission denied: 0

[[email protected] mfs]# mfsgetgoal a1/test.txt

a1/test.txt: 3

[[email protected] mfs]# mfsfileinfo a1/test.txt #(this environment has only one mfsdata, so only one copy exists; with three or more data servers in production this would not happen)

a1/test.txt:

chunk 0: 000000000000000B_00000001 / (id:11 ver:1)

copy 1: 192.168.23.138:9422
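Why goal 3 still yields a single copy here: each copy has to live on a different data server, so the number of copies that can actually exist is capped by the number of data servers. A minimal sketch of that rule, with effective_copies as a hypothetical helper:

```shell
# Hypothetical helper: copies that can actually be stored, given the goal
# and the number of data servers (one copy per server at most).
effective_copies() {
    goal=$1
    servers=$2
    if [ "$goal" -lt "$servers" ]; then
        echo "$goal"
    else
        echo "$servers"
    fi
}

effective_copies 3 1   # this lab: goal 3, one mfsdata
```

With three or more data servers the same goal of 3 would be met in full.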

Storing large files:

MFS stores data in chunks of at most 64 MB each; a file larger than 64 MB occupies several chunks;

[[email protected] mfs]# pwd

/mnt/mfs

[[email protected] mfs]# mfssetgoal -r 3 a2/

a2/:

inodes with goal changed: 1

inodes with goal not changed: 0

inodes with permission denied: 0

[[email protected] mfs]# dd if=/dev/zero of=a2/63.img bs=1M count=63

63+0 records in

63+0 records out

66060288 bytes (66 MB) copied, 2.15635 s, 30.6 MB/s

[[email protected] mfs]# dd if=/dev/zero of=a2/65.img bs=1M count=65

65+0 records in

65+0 records out

68157440 bytes (68 MB) copied, 2.16212 s, 31.5 MB/s

[[email protected] mfs]# mfsfileinfo a2/63.img

a2/63.img:

chunk 0: 000000000000000C_00000001 / (id:12 ver:1)

copy 1: 192.168.23.138:9422

[[email protected] mfs]# mfsfileinfo a2/65.img

a2/65.img:

chunk 0: 000000000000000D_00000001 / (id:13 ver:1)

copy 1: 192.168.23.138:9422

chunk 1: 000000000000000E_00000001 / (id:14 ver:1)

copy 1: 192.168.23.138:9422
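The chunk counts above follow from a ceiling division by the 64 MiB chunk size. A small sketch that reproduces them; chunk_count is a hypothetical helper and takes the file size in bytes, as printed by dd:

```shell
# Hypothetical helper: number of 64 MiB chunks a file of the given size needs.
chunk_count() {
    chunk=$((64 * 1024 * 1024))          # 64 MiB in bytes
    echo $(( ($1 + chunk - 1) / chunk )) # integer ceiling division
}

chunk_count 66060288   # 63.img -> 1
chunk_count 68157440   # 65.img -> 2
```

The 63 MiB file fits in one chunk, while the 65 MiB file spills one extra MiB into a second chunk, matching the mfsfileinfo output.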

Setting the retention (trash) time for deleted files:

The default is 86400 seconds (1 day);

[[email protected] mfs]# mfsgettrashtime a2/63.img

a2/63.img: 86400

[[email protected] mfs]# mfssettrashtime -h

usage: mfssettrashtime [-nhHr] SECONDS[-|+] name [name ...]

-r - do it recursively

[[email protected] mfs]# mfssettrashtime -r 1200 a3/ #(applies to every file under the directory)

a3/:

inodes with trashtime changed: 1

inodes with trashtime not changed: 0

inodes with permission denied: 0

[[email protected] mfs]# mfssettrashtime 1200 a2/65.img #(applies to this one file only)

a2/65.img: 1200

[[email protected] mfs]# rm -f a2/65.img

[[email protected] mfs]# mkdir /mnt/mfs-trash

[[email protected] mfs]# mfsmount /mnt/mfs-trash/ -m -H 192.168.23.136

mfsmaster accepted connection with parameters: read-write,restricted_ip

[[email protected] mfs]# ll /mnt/mfs-trash/trash/ #(to restore a file, move it from this directory into undel)

total 66560

-rw-r--r--. 1 mfs mfs 68157440 Apr 19 00:21 00000018|a2|65.img

d-w-------. 2 root root 0 Apr 19 00:38 undel

[[email protected] mfs]# ll /mnt/mfs-trash/trash/undel/

total 0

[[email protected] mfs]# mv '/mnt/mfs-trash/trash/00000018|a2|65.img' /mnt/mfs-trash/trash/undel/

[[email protected] mfs]# ll a2/

total 131072

-rw-r--r--. 1 mfs mfs 66060288 Apr 19 00:21 63.img

-rw-r--r--. 1 mfs mfs 68157440 Apr 19 00:21 65.img
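The trash entry name seen above, 00000018|a2|65.img, packs the inode number (hex) and the original path with each / replaced by |. That is also why the name must be quoted on the command line, since an unquoted | is a shell pipe. A small decoding sketch, with trash_entry_path as a hypothetical helper:

```shell
# Hypothetical helper: recover the original relative path from a trash
# entry name of the form <inode hex>|<path with "/" written as "|">.
trash_entry_path() {
    # Drop the first "|"-separated field (the inode), then map "|" back to "/".
    printf '%s\n' "$1" | cut -d'|' -f2- | tr '|' '/'
}

trash_entry_path '00000018|a2|65.img'   # prints a2/65.img
```

One limitation of this sketch: a file whose real name contains a | character cannot be told apart from a path separator.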

Verifying MFS disaster recovery:

[[email protected] mfs]# mfsmetarestore -h

mfsmetarestore [-f] [-b] [-i] [-x [-x]] -m <meta data file> -o <restored meta data file> [ <change log file> [ <change log file> [ .... ]]

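1. Stop the mfsdata servers one after another, watch the status in the web monitor, and check that the data is still available (replica count set to 3);

2. Simulate recovering a crashed master from the metadata kept on the master itself (

#mfsmetarestore -a #(mfsmaster-side)

#umount -lf /mnt/mfs ; mfsmount /mnt/mfs -H 192.168.23.136 -o mfspassword=passcode #(mfsclient));

3. Simulate recovering the master from the files on the backup (

#cd /ane/mfs/var/mfs/ ; mfsmetarestore -m metadata_ml.mfs.back* -o metadata.mfs changelog_ml*);

4. The master is down and the backup takes over as master (

#cd /ane/mfs/var/mfs ; mfsmetarestore -m metadata_ml.mfs.back* -o metadata.mfs changelog_ml*);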
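Before running mfsmetarestore on the backup it is worth checking that the inputs the command expects are actually there. A minimal pre-flight sketch, assuming the file names seen earlier in the metalogger's working directory (metadata_ml.mfs.back and changelog_ml*); restore_inputs_present is a hypothetical helper and does not call any MFS tool:

```shell
# Hypothetical helper: succeed only if the directory holds the files that
# the mfsmetarestore command above takes as input.
restore_inputs_present() {
    dir=$1
    # The metadata snapshot downloaded by mfsmetalogger.
    [ -f "$dir/metadata_ml.mfs.back" ] || return 1
    # At least one changelog to replay on top of the snapshot.
    for f in "$dir"/changelog_ml*; do
        [ -f "$f" ] && return 0
    done
    return 1
}

if restore_inputs_present /ane/mfs/var/mfs; then
    echo "restore inputs found"
else
    echo "restore inputs missing"
fi
```

If the check fails, the metalogger has probably not yet completed its first metadata download from the master.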

Startup order:

mfsmaster-->mfsdata-->mfsbackup-->client

Shutdown order:

client (umount first)-->mfsdata-->mfsbackup-->mfsmaster
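The ordering can be written down as a dry-run helper that only prints the commands, each of which is meant to be run on its own host. mfs_order is a hypothetical name; the commands are the ones used in this walkthrough. Assumptions: the mount point is /mnt/mfs, the master resolves as mfsmaster, and on shutdown the metalogger is stopped after the data servers and before the master.

```shell
# Hypothetical dry-run helper: print the per-role commands in order.
# Nothing is executed; run each printed command on the matching host.
mfs_order() {
    case "$1" in
        start)
            printf '%s\n' \
                "mfsmaster start" \
                "mfschunkserver start" \
                "mfsmetalogger start" \
                "mfsmount /mnt/mfs -H mfsmaster -p"
            ;;
        stop)
            # Assumption: metalogger goes down between data servers and master.
            printf '%s\n' \
                "umount /mnt/mfs" \
                "mfschunkserver stop" \
                "mfsmetalogger stop" \
                "mfsmaster stop"
            ;;
        *)
            echo "usage: mfs_order start|stop" >&2
            return 1
            ;;
    esac
}

mfs_order start
```

The key point in both directions is that the master is the first component up and the last one down.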

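Working around the master single point of failure:

1. Deploy several mfsbackup servers;

2. Use heartbeat+drbd for mfsmaster HA (preferably have drbd replicate the installation path as well);

3. Use keepalived+inotify; the inotify monitoring must be accurate;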
