Compiling and debugging Hive 2.3.9 in IDEA on Windows

Posted by 顧棟


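Environment

- IDEA 2021.2
- JDK 1.8 (I also tried JDK 17; it is incompatible and the build fails)
- A Hadoop cluster, from which the configuration files core-site.xml, hdfs-site.xml, yarn-site.xml, and mapred-site.xml are needed
- A Hadoop client configured on the Windows machine
- git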

Building from source

Run the build in Git Bash. For Hive 2.x the build command is:

mvn clean package -DskipTests -Pdist

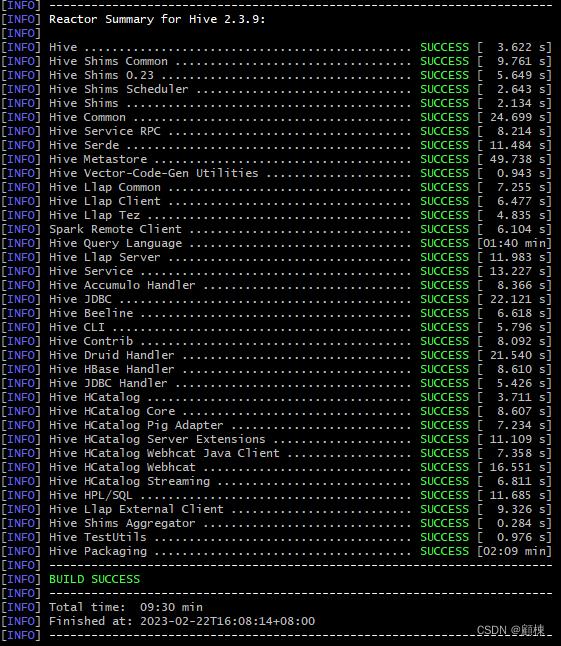

Build result

Build problem

[ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.8:run (generate-version-annotation) on project hive-standalone-metastore-common: An Ant BuildException has occured: exec returned: 127

[ERROR] around Ant part ...<exec failonerror="true" executable="bash">... @ 4:46 in F:\\subsys\\mystudy\\hive-2\\hive\\standalone-metastore\\metastore-common\\target\\antrun\\build-main.xml

[ERROR] -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <goals> -rf :hive-standalone-metastore-common

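The build invokes bash (the <exec executable="bash"> step named in the error above), so it needs a working shell environment. Exit status 127 is the shell's "command not found" code. I therefore ran the build in Git Bash.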
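Before starting a long build, it can help to confirm that the terminal you are in actually resolves the tools the build calls. The following is a minimal sketch, not part of the original setup: the function names `require_tool` and `preflight` are hypothetical, and the list of tools to check is an assumption.

```shell
#!/usr/bin/env bash
# Preflight sketch: report which tools the current shell can resolve on PATH.
# Exit status 127 is the conventional shell code for "command not found".

require_tool() {
  # command -v succeeds only if the shell can resolve the given name
  if command -v "$1" >/dev/null 2>&1; then
    echo "found: $1"
  else
    echo "missing: $1" >&2
    return 127
  fi
}

preflight() {
  # Check every tool and keep going, so all missing tools are listed at once
  local status=0 tool
  for tool in "$@"; do
    require_tool "$tool" || status=$?
  done
  return "$status"
}
```

For example, `preflight bash mvn java git` run in Git Bash lists each tool as found or missing and returns 127 if any of them cannot be resolved.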

Importing into IDEA

Open the project in IDEA.

Problem

Could not find artifact org.apache.directory.client.ldap:ldap-client-api:pom:0.1-SNAPSHOT

Cause: a dependency cannot be found. Solution: the community tracker already has an issue for this, HIVE-21777. Exclude the dependency in the pom.xml of the hive service module:

<dependency>
  <groupId>org.apache.directory.server</groupId>
  <artifactId>apacheds-server-integ</artifactId>
  <version>${apache-directory-server.version}</version>
  <scope>test</scope>
  <!-- added part -->
  <exclusions>
    <exclusion>
      <groupId>org.apache.directory.client.ldap</groupId>
      <artifactId>ldap-client-api</artifactId>
    </exclusion>
  </exclusions>
</dependency>

Starting the CLI

- Copy the cluster configuration files core-site.xml, hdfs-site.xml, yarn-site.xml, and mapred-site.xml into the resources directory of the hive-cli module (you need to create and configure this directory yourself), then create hive-log4j2.properties and hive-site.xml in the same directory.
- Change the configuration.

  In mapred-site.xml, add:

  <!-- allow cross-platform job submission -->
  <property>
    <name>mapreduce.app-submission.cross-platform</name>
    <value>true</value>
  </property>

  hive-site.xml:

  <?xml version="1.0" encoding="UTF-8"?>
  <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
  <configuration>
    <property>
      <name>hive.cli.print.current.db</name>
      <value>true</value>
    </property>
    <property>
      <name>hive.cli.print.header</name>
      <value>true</value>
    </property>
    <!-- Hive metastore URIs (HA) -->
    <property>
      <name>hive.metastore.uris</name>
      <value>thrift://192.168.1.1:9083,thrift://192.168.1.2:9083</value>
      <final>true</final>
    </property>
    <property>
      <name>hive.cluster.delegation.token.store.class</name>
      <value>org.apache.hadoop.hive.thrift.MemoryTokenStore</value>
      <description>Hive defaults to MemoryTokenStore, or ZooKeeperTokenStore</description>
    </property>
    <!-- local mode for the Hive client in the test environment -->
    <property>
      <name>hive.exec.mode.local.auto</name>
      <value>true</value>
    </property>
    <!-- maximum input size, default 128 MB -->
    <property>
      <name>hive.exec.mode.local.auto.inputbytes.max</name>
      <value>134217728</value>
    </property>
    <!-- maximum number of tasks -->
    <property>
      <name>hive.exec.mode.local.auto.input.files.max</name>
      <value>10</value>
    </property>
  </configuration>

  Changes in hive-log4j2.properties:

  property.hive.log.dir = /opt/hive/logs
  property.hive.log.file = hive-${sys:user.name}.log
- Edit the pom file of the hive-cli module and remove the test scope from the commons-io and disruptor dependencies.
- Configure the run configuration for CliDriver. JVM option: -Djline.WindowsTerminal.directConsole=false. Environment variables: HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native;HADOOP_OPTS=-Djava.library.path=$HADOOP_HOME/lib
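The file-related steps above are easy to get partly wrong, so a quick check of the resources directory can save a failed launch. This is a rough sketch under stated assumptions: `check_cli_resources` is a hypothetical helper, and the directory you pass in is whatever path you created for the hive-cli module. It only checks that the files exist and that mapred-site.xml mentions the cross-platform property, not that the values are correct.

```shell
#!/usr/bin/env bash
# Sketch: report which expected config files are absent from a resources directory.
# $1 is the resources directory you created for the hive-cli module (assumed path).

check_cli_resources() {
  local dir="$1" problems=0 f
  for f in core-site.xml hdfs-site.xml yarn-site.xml mapred-site.xml \
           hive-site.xml hive-log4j2.properties; do
    if [ ! -f "$dir/$f" ]; then
      echo "missing: $f"
      problems=1
    fi
  done
  # The article adds this property to mapred-site.xml for cross-platform submission
  if [ -f "$dir/mapred-site.xml" ] &&
     ! grep -q 'mapreduce.app-submission.cross-platform' "$dir/mapred-site.xml"; then
    echo "mapred-site.xml: mapreduce.app-submission.cross-platform not found"
    problems=1
  fi
  return "$problems"
}
```

Calling `check_cli_resources path/to/resources` prints one line per problem and returns 0 only when all six files are present and the property name appears in mapred-site.xml.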

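Problem: import org.apache.hive.tmpl.QueryProfileTmpl cannot be resolved

Solution: run mvn generate-sources in Git Bash, then copy the generated source files QueryProfileTmpl and QueryProfileTmplImpl from target/generated-jamon/org/apache/hive/tmpl under the hive service module into the required directory (which you have to create by hand).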
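The copy step can be scripted so it is repeatable after each clean build. The sketch below makes several assumptions: `copy_jamon_sources` is a hypothetical helper, the service module is assumed to live in a `service/` directory under the Hive checkout, and the destination root is a parameter because the article does not name the target directory. The package path is recreated under the destination so the Java package declaration still matches.

```shell
#!/usr/bin/env bash
# Sketch: copy the Jamon-generated template classes into a source root of your choice.
# $1 = Hive checkout root (assumed layout), $2 = destination source root (hypothetical).

copy_jamon_sources() {
  local hive_root="$1" dest_root="$2"
  local src="$hive_root/service/target/generated-jamon/org/apache/hive/tmpl"
  local dest="$dest_root/org/apache/hive/tmpl"

  if [ ! -d "$src" ]; then
    echo "no generated sources at $src (run mvn generate-sources first)" >&2
    return 1
  fi
  mkdir -p "$dest"            # the target package directory is not created for you
  cp "$src"/*.java "$dest"/   # expected: QueryProfileTmpl.java, QueryProfileTmplImpl.java
}
```

A call such as `copy_jamon_sources /path/to/hive /path/to/source-root` fails with a message if the generated directory does not exist yet.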

Basic operations in local mode

Create a database

create database testdb;

Create a table

use testdb;

create table test001(id int,dummy string) stored as orc;

Insert data

insert into table testdb.test001 values(1,"X");

Query data

select * from test001;

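Delete data: conditional deletion of one or more rows is not recommended. Instead, drop a partition or the whole table and then write the data again.

Execution log: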
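To illustrate partition-level and table-level removal, here is a small sketch that prints the corresponding HiveQL so it can be saved to a file and run with `hive -f`. The helper names are hypothetical, and the partitioned table in the usage note is an assumption, since test001 in this article has no partitions. Note that TRUNCATE TABLE applies to managed tables.

```shell
#!/usr/bin/env bash
# Sketch: print HiveQL that removes data at partition or table granularity.
# Table, column, and value arguments are supplied by the caller (hypothetical names).

drop_partition_hql() {
  # $1 = table, $2 = partition column, $3 = partition value
  printf "ALTER TABLE %s DROP IF EXISTS PARTITION (%s='%s');\n" "$1" "$2" "$3"
}

truncate_table_hql() {
  # $1 = table; TRUNCATE removes all rows but keeps the table definition
  printf "TRUNCATE TABLE %s;\n" "$1"
}
```

For instance, `truncate_table_hql testdb.test001 > cleanup.hql` followed by `hive -f cleanup.hql` empties the table, after which the data can be inserted again. For a hypothetical table `logs` partitioned by `dt`, `drop_partition_hql logs dt 2023-02-22` prints the statement that drops just that partition.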

hive (default)> create database testdb;

create database testdb;

OK

Time taken: 0.072 seconds

hive (default)> use testdb;

use testdb;

OK

Time taken: 0.045 seconds

hive (testdb)> create table test001(id int,dummy string) stored as orc;

create table test001(id int,dummy string) stored as orc;

OK

Time taken: 0.53 seconds

hive (testdb)> insert into table test001 values(1,"X");

insert into table test001 values(1,"X");

Automatically selecting local only mode for query

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = 19057578_20230222162806_f6ce7921-08d3-41db-8408-dfd18339e594

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2023-02-22 16:28:13,266 Stage-1 map = 0%, reduce = 0%

2023-02-22 16:28:14,295 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local2039626018_0001

Stage-4 is selected by condition resolver.

Stage-3 is filtered out by condition resolver.

Stage-5 is filtered out by condition resolver.

Moving data to directory hdfs://hadoopdemo/user/bigdata/hive/warehouse/testdb.db/test001/.hive-staging_hive_2023-02-22_16-28-06_738_8793114222801018317-1/-ext-10000

Loading data to table testdb.test001

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 4 HDFS Write: 348 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

Time taken: 8.293 seconds

_col0 _col1

hive (testdb)> select * from test001;

select * from test001;

OK

test001.id test001.dummy

1 X

hive (testdb)> Time taken: 0.241 seconds, Fetched: 1 row(s)

hive (testdb)> drop table test001;

drop table test001;

OK

Time taken: 0.218 seconds

hive (testdb)> show tables;

show tables;

OK

tab_name

values__tmp__table__1

hive (testdb)> Time taken: 0.063 seconds, Fetched: 1 row(s)

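values__tmp__table__1 is a session-scoped temporary table that Hive creates for the INSERT ... VALUES statement. It is removed when the client is closed and is not kept as a permanent table.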

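Open issues

Submitting a job to the YARN cluster fails

With local mode disabled, submitting a job to YARN produces an error. I believe this is a client-side environment configuration problem, but I have not resolved it on the client.

Log on YARN: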

************************************************************/

2023-02-22 16:35:04,263 INFO [main] org.apache.hadoop.security.SecurityUtil: Updating Configuration

2023-02-22 16:35:04,263 INFO [main] org.apache.hadoop.mapreduce.v2.app.MRAppMaster: Executing with tokens:

2023-02-22 16:35:04,263 INFO [main] org.apache.hadoop.mapreduce.v2.app.MRAppMaster: Kind: YARN_AM_RM_TOKEN, Service: , Ident: (appAttemptId application_id id: 1 cluster_timestamp: 1677047289113 attemptId: 2 keyId: 176967782)

2023-02-22 16:35:04,365 INFO [main] org.apache.hadoop.mapreduce.v2.app.MRAppMaster: OutputCommitter set in config org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter

2023-02-22 16:35:04,367 INFO [main] org.apache.hadoop.service.AbstractService: Service org.apache.hadoop.mapreduce.v2.app.MRAppMaster failed in state INITED; cause: java.lang.RuntimeException: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

java.lang.RuntimeException: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2427)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$2.call(MRAppMaster.java:545)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$2.call(MRAppMaster.java:522)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.callWithJobClassLoader(MRAppMaster.java:1764)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.createOutputCommitter(MRAppMaster.java:522)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.serviceInit(MRAppMaster.java:308)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$5.run(MRAppMaster.java:1722)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.initAndStartAppMaster(MRAppMaster.java:1719)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.main(MRAppMaster.java:1650)

Caused by: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2395)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2419)

... 12 more

Caused by: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:2299)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2393)

... 13 more

2023-02-22 16:35:04,369 FATAL [main] org.apache.hadoop.mapreduce.v2.app.MRAppMaster: Error starting MRAppMaster

java.lang.RuntimeException: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2427)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$2.call(MRAppMaster.java:545)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$2.call(MRAppMaster.java:522)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.callWithJobClassLoader(MRAppMaster.java:1764)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.createOutputCommitter(MRAppMaster.java:522)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.serviceInit(MRAppMaster.java:308)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster$5.run(MRAppMaster.java:1722)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.initAndStartAppMaster(MRAppMaster.java:1719)

at org.apache.hadoop.mapreduce.v2.app.MRAppMaster.main(MRAppMaster.java:1650)

Caused by: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2395)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2419)

... 12 more

Caused by: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found

at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:2299)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2393)

... 13 more

2023-02-22 16:35:04,372 INFO [main] org.apache.hadoop.util.ExitUtil: Exiting with status 1: java.lang.RuntimeException: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.hive.ql.io.HiveFileFormatUtils$NullOutputCommitter not found
