Object Detection with a Pretrained Model in TensorFlow: Using the Pretrained Model

Posted by vactor


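I. Running the Sample

Official tutorial: https://github.com/tensorflow/models/blob/master/research/object_detection/object_detection_tutorial.ipynb. I kept running into problems with it and could not get it to run, so I started with code that someone else had already written.

Here is a link to code that works on Ubuntu: https://gitee.com/bubbleit/JianDanWuTiShiBie. Run it with Python 2; Python 3 may cause problems.

This code is derived from https://gitee.com/talengu/JianDanWuTiShiBie/tree/master, with some adjustments and modifications of my own. The code is in the file ODtest.py, and the pretrained model is stored in /ssd_mobilenet_v1_coco_11_06_2017.

The original code is as follows: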

    import numpy as np
    from matplotlib import pyplot as plt
    import os
    import tensorflow as tf
    from PIL import Image
    from utils import label_map_util
    from utils import visualization_utils as vis_util
    import datetime

    # Suppress TensorFlow warnings
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3'

    detection_graph = tf.Graph()


    # Load the model data ----------------------------------------------
    def loading():
        with detection_graph.as_default():
            od_graph_def = tf.GraphDef()
            PATH_TO_CKPT = 'ssd_mobilenet_v1_coco_11_06_2017' + '/frozen_inference_graph.pb'
            with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
                serialized_graph = fid.read()
                od_graph_def.ParseFromString(serialized_graph)
                tf.import_graph_def(od_graph_def, name='')
        return detection_graph


    # Detection --------------------------------------------------------
    def load_image_into_numpy_array(image):
        (im_width, im_height) = image.size
        return np.array(image.getdata()).reshape(
            (im_height, im_width, 3)).astype(np.uint8)


    # List of the strings that is used to add the correct label for each box.
    PATH_TO_LABELS = os.path.join('data', 'mscoco_label_map.pbtxt')
    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(
        label_map, max_num_classes=90, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)


    def Detection(image_path="images/image1.jpg"):
        loading()
        with detection_graph.as_default():
            with tf.Session(graph=detection_graph) as sess:
                # for image_path in TEST_IMAGE_PATHS:
                image = Image.open(image_path)

                # The array-based representation of the image is used later to
                # prepare the result image with boxes and labels on it.
                image_np = load_image_into_numpy_array(image)

                # Expand dimensions since the model expects images to have
                # shape [1, None, None, 3].
                image_np_expanded = np.expand_dims(image_np, axis=0)
                image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')

                # Each box represents a part of the image where a particular
                # object was detected.
                boxes = detection_graph.get_tensor_by_name('detection_boxes:0')

                # Each score represents the level of confidence for one object.
                # The score is shown on the result image with the class label.
                scores = detection_graph.get_tensor_by_name('detection_scores:0')
                classes = detection_graph.get_tensor_by_name('detection_classes:0')
                num_detections = detection_graph.get_tensor_by_name('num_detections:0')

                # Actual detection.
                (boxes, scores, classes, num_detections) = sess.run(
                    [boxes, scores, classes, num_detections],
                    feed_dict={image_tensor: image_np_expanded})

                # Draw the detection results on the image.
                vis_util.visualize_boxes_and_labels_on_image_array(
                    image_np,
                    np.squeeze(boxes),
                    np.squeeze(classes).astype(np.int32),
                    np.squeeze(scores),
                    category_index,
                    use_normalized_coordinates=True,
                    line_thickness=8)

                # Print the first three detections.
                for i in range(3):
                    if classes[0][i] in category_index.keys():
                        class_name = category_index[classes[0][i]]['name']
                    else:
                        class_name = 'N/A'
                    print("物体:%s 概率:%s" % (class_name, scores[0][i]))

                # Show the image with matplotlib.
                # Size, in inches, of the output images.
                IMAGE_SIZE = (20, 12)
                plt.figure(figsize=IMAGE_SIZE)
                plt.imshow(image_np)
                plt.show()


    # Run
    Detection()

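After cloning the repository locally, running the script produced several errors.

Problem 1

Error message: UnicodeDecodeError: 'ascii' codec can't decode byte 0xe5 in position 1: ordinal not in range(128)

Solution (reference): https://www.cnblogs.com/QuLory/p/3615584.html

The main error is the Unicode decoding problem on the last line above. From what I found online, the default encoding used when reading is ASCII rather than UTF-8, which causes the error.

Adding the following lines to the code fixes it. This works on Python 2 only: in Python 3, reload is no longer a built-in and sys.setdefaultencoding does not exist.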

    import sys
    reload(sys)
    sys.setdefaultencoding('utf8')
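The error itself can be reproduced without TensorFlow. The following is a minimal sketch, not the fix used in this post: the helper name decode_bytes and the sample string are made up for illustration. It shows that bytes holding UTF-8 encoded Chinese text cannot be decoded as ASCII, but decode cleanly once the codec is named explicitly.

```python
# -*- coding: utf-8 -*-
# UTF-8 bytes of a Chinese string; the sample text is arbitrary.
raw = u"物体".encode("utf-8")


def decode_bytes(data, encoding):
    """Return the decoded text, or None if the bytes are invalid in that encoding."""
    try:
        return data.decode(encoding)
    except UnicodeDecodeError:
        return None


# ASCII cannot represent these bytes, so decoding fails.
print(decode_bytes(raw, "ascii") is None)
# Naming UTF-8 explicitly recovers the original text.
print(decode_bytes(raw, "utf-8") == u"物体")
```

Decoding explicitly at the point where bytes become text is an alternative to changing the interpreter-wide default, and it also works on Python 3.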

Problem 2

Error message: _tkinter.TclError: no display name and no $DISPLAY environment variable. Details:

    Traceback (most recent call last):
      File "ODtest.py", line 103, in <module>
        Detection()
      File "ODtest.py", line 96, in Detection
        plt.figure(figsize=IMAGE_SIZE)
      File "/usr/local/lib/python2.7/dist-packages/matplotlib/pyplot.py", line 533, in figure
        **kwargs)
      File "/usr/local/lib/python2.7/dist-packages/matplotlib/backend_bases.py", line 161, in new_figure_manager
        return cls.new_figure_manager_given_figure(num, fig)
      File "/usr/local/lib/python2.7/dist-packages/matplotlib/backends/_backend_tk.py", line 1046, in new_figure_manager_given_figure
        window = Tk.Tk(className="matplotlib")
      File "/usr/lib/python2.7/lib-tk/Tkinter.py", line 1822, in __init__
        self.tk = _tkinter.create(screenName, baseName, className, interactive, wantobjects, useTk, sync, use)
    _tkinter.TclError: no display name and no $DISPLAY environment variable

Solution (reference): https://blog.csdn.net/qq_22194315/article/details/77984423

Code-only solution

This is also the answer most people get on Q&A sites such as Stack Overflow: before importing pyplot or pylab, switch the matplotlib backend to "Agg". Here is the code:

    # Do this before importing pylab or pyplot
    import matplotlib
    matplotlib.use('Agg')
    import matplotlib.pyplot as plt
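A variation on the same idea is to switch to Agg only when no display is available, and to write the figure to a file, since Agg is a non-interactive backend and does not open a window. The sketch below is an assumption-laden illustration rather than part of the original script: the function names and the out_path parameter are hypothetical, and checking the DISPLAY variable is only meaningful on Linux/X11 systems like the Ubuntu machine used here.

```python
import os


def needs_headless_backend(environ=None):
    """Return True when no X11 display is configured (DISPLAY unset or empty)."""
    env = os.environ if environ is None else environ
    return not env.get("DISPLAY")


def render_to_file(image, out_path):
    """Draw an image array with matplotlib and save it to out_path (hypothetical helper)."""
    # matplotlib is imported lazily so the backend is chosen before pyplot loads.
    import matplotlib
    if needs_headless_backend():
        matplotlib.use("Agg")
    import matplotlib.pyplot as plt

    fig = plt.figure(figsize=(20, 12))  # same size, in inches, as IMAGE_SIZE in the script
    ax = fig.add_subplot(1, 1, 1)
    ax.imshow(image)
    fig.savefig(out_path)
    plt.close(fig)
```

In the detection script, render_to_file(image_np, "result.png") would take the place of the plt.figure / plt.imshow / plt.show calls; the file name is only an example.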

After these changes the code is:

    #!/usr/bin/python
    # -*- coding: utf-8 -*-
    import numpy as np
    # Select the Agg backend before pyplot is imported.
    import matplotlib
    matplotlib.use('Agg')
    from matplotlib import pyplot as plt
    import os
    import tensorflow as tf
    from PIL import Image
    from utils import label_map_util
    from utils import visualization_utils as vis_util
    import datetime

    # Python 2 only: make UTF-8 the default string encoding.
    import sys
    reload(sys)
    sys.setdefaultencoding('utf8')

    # Suppress TensorFlow warnings
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3'

    detection_graph = tf.Graph()


    # Load the model data ----------------------------------------------
    def loading():
        with detection_graph.as_default():
            od_graph_def = tf.GraphDef()
            PATH_TO_CKPT = 'ssd_mobilenet_v1_coco_11_06_2017' + '/frozen_inference_graph.pb'
            with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
                serialized_graph = fid.read()
                od_graph_def.ParseFromString(serialized_graph)
                tf.import_graph_def(od_graph_def, name='')
        return detection_graph


    # Detection --------------------------------------------------------
    def load_image_into_numpy_array(image):
        (im_width, im_height) = image.size
        return np.array(image.getdata()).reshape(
            (im_height, im_width, 3)).astype(np.uint8)


    # List of the strings that is used to add the correct label for each box.
    PATH_TO_LABELS = os.path.join('data', 'mscoco_label_map.pbtxt')
    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(
        label_map, max_num_classes=90, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)


    def Detection(image_path="images/image1.jpg"):
        loading()
        with detection_graph.as_default():
            with tf.Session(graph=detection_graph) as sess:
                # for image_path in TEST_IMAGE_PATHS:
                image = Image.open(image_path)

                # The array-based representation of the image is used later to
                # prepare the result image with boxes and labels on it.
                image_np = load_image_into_numpy_array(image)

                # Expand dimensions since the model expects images to have
                # shape [1, None, None, 3].
                image_np_expanded = np.expand_dims(image_np, axis=0)
                image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')

                # Each box represents a part of the image where a particular
                # object was detected.
                boxes = detection_graph.get_tensor_by_name('detection_boxes:0')

                # Each score represents the level of confidence for one object.
                # The score is shown on the result image with the class label.
                scores = detection_graph.get_tensor_by_name('detection_scores:0')
                classes = detection_graph.get_tensor_by_name('detection_classes:0')
                num_detections = detection_graph.get_tensor_by_name('num_detections:0')

                # Actual detection.
                (boxes, scores, classes, num_detections) = sess.run(
                    [boxes, scores, classes, num_detections],
                    feed_dict={image_tensor: image_np_expanded})

                # Draw the detection results on the image.
                vis_util.visualize_boxes_and_labels_on_image_array(
                    image_np,
                    np.squeeze(boxes),
                    np.squeeze(classes).astype(np.int32),
                    np.squeeze(scores),
                    category_index,
                    use_normalized_coordinates=True,
                    line_thickness=8)

                # Print the first three detections
                # ("gailv" is pinyin for "probability").
                for i in range(3):
                    if classes[0][i] in category_index.keys():
                        class_name = category_index[classes[0][i]]['name']
                    else:
                        class_name = 'N/A'
                    print("object:%s gailv:%s" % (class_name, scores[0][i]))

                # Show the image with matplotlib.
                # Size, in inches, of the output images.
                IMAGE_SIZE = (20, 12)
                plt.figure(figsize=IMAGE_SIZE)
                plt.imshow(image_np)
                # Note: Agg is a non-interactive backend, so plt.show() does not
                # open a window; plt.savefig can be used to write the figure to a file.
                plt.show()


    # Run
    Detection()
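The printing loop at the end of Detection() can also be pulled out into a small helper that is easy to test without a model. This is a sketch under stated assumptions: the name top_detections and the limit and min_score parameters are hypothetical, and the sample class IDs and scores below are invented values, not real model output. It takes one row of classes and scores (for example classes[0] and scores[0] from sess.run) and looks up names in a category_index shaped like the one built by label_map_util.

```python
def top_detections(classes, scores, category_index, limit=3, min_score=0.0):
    """Return up to `limit` (name, score) pairs, in the order given,
    skipping detections whose score is below `min_score`."""
    results = []
    for class_id, score in zip(classes, scores):
        if len(results) >= limit:
            break
        if score < min_score:
            continue
        # Class IDs come back as floats from the model, so cast before lookup.
        entry = category_index.get(int(class_id))
        name = entry['name'] if entry else 'N/A'
        results.append((name, float(score)))
    return results


# Invented sample data shaped like one row of the model output.
category_index = {1: {'id': 1, 'name': 'person'}, 18: {'id': 18, 'name': 'dog'}}
classes = [18.0, 1.0, 99.0, 1.0]
scores = [0.94, 0.81, 0.40, 0.05]

for name, score in top_detections(classes, scores, category_index, min_score=0.3):
    print("object:%s score:%s" % (name, score))
```

The min_score threshold is a design choice added here so that low-confidence boxes are not reported; the original loop prints the first three detections regardless of score.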

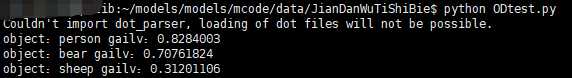

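Result:

If nothing goes wrong, all that remains is to add a timing function and call the pretrained model that has already been downloaded.

II. Using the Pretrained Model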
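The post does not show the timing function, so the following is only one possible sketch. The helper name timed is hypothetical; it uses timeit.default_timer from the standard library and returns the wrapped function's result together with the elapsed wall-clock time in seconds.

```python
from timeit import default_timer


def timed(func, *args, **kwargs):
    """Call func(*args, **kwargs) and return (result, elapsed_seconds)."""
    start = default_timer()
    result = func(*args, **kwargs)
    elapsed = default_timer() - start
    return result, elapsed


# Stand-in workload; in the script this would be the detection call.
result, seconds = timed(sum, [1, 2, 3])
print("result=%s elapsed=%.6fs" % (result, seconds))
```

In the script above the call would look like timed(Detection, "images/image1.jpg"). Because Detection() calls loading() each time, the measured time includes loading the frozen graph as well as running inference.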

