FPN

Posted hugeng007


contrast between Chinese and English

Feature Pyramid Networks for Object Detection

用于目标检测的特征金字塔网络

Abstract

摘要

Feature pyramids are a basic component in recognition systems for detecting objects at different scales. But recent deep learning object detectors have avoided pyramid representations, in part because they are compute and memory intensive. In this paper, we exploit the inherent multi-scale, pyramidal hierarchy of deep convolutional networks to construct feature pyramids with marginal extra cost. A top-down architecture with lateral connections is developed for building high-level semantic feature maps at all scales. This architecture, called a Feature Pyramid Network (FPN), shows significant improvement as a generic feature extractor in several applications. Using FPN in a basic Faster R-CNN system, our method achieves state-of-the-art single-model results on the COCO detection benchmark without bells and whistles, surpassing all existing single-model entries including those from the COCO 2016 challenge winners. In addition, our method can run at 6 FPS on a GPU and thus is a practical and accurate solution to multi-scale object detection. Code will be made publicly available.

特征金字塔是识别系统中用于检测不同尺度目标的基本组件。但最近的深度学习目标检测器已经避免了金字塔表示,部分原因是它们是计算和内存密集型的。在本文中,我们利用深度卷积网络内在的多尺度、金字塔分级来构造具有很少额外成本的特征金字塔。开发了一种具有横向连接的自顶向下架构,用于在所有尺度上构建高级语义特征映射。这种称为特征金字塔网络(FPN)的架构在几个应用程序中作为通用特征提取器表现出了显著的改进。在一个基本的Faster R-CNN系统中使用FPN,没有任何不必要的东西,我们的方法可以在COCO检测基准数据集上取得最先进的单模型结果,结果超过了所有现有的单模型输入,包括COCO 2016挑战赛的获奖者。此外,我们的方法可以在GPU上以6FPS运行,因此是多尺度目标检测的实用和准确的解决方案。代码将公开发布。

1.Introduction

1.引言

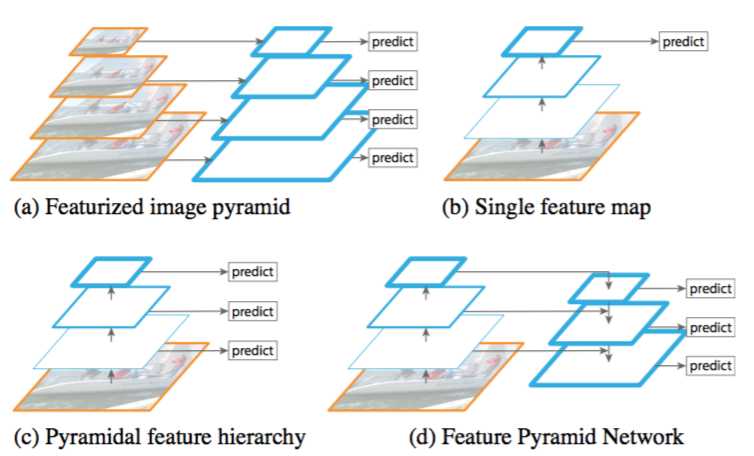

Recognizing objects at vastly different scales is a fundamental challenge in computer vision. Feature pyramids built upon image pyramids (for short we call these featurized image pyramids) form the basis of a standard solution [1] (Fig. 1(a)). These pyramids are scale-invariant in the sense that an object’s scale change is offset by shifting its level in the pyramid. Intuitively, this property enables a model to detect objects across a large range of scales by scanning the model over both positions and pyramid levels.

识别不同尺度的目标是计算机视觉中的一个基本挑战。建立在图像金字塔之上的特征金字塔(我们简称为特征化图像金字塔)构成了标准解决方案的基础[1](图1(a))。这些金字塔是尺度不变的,因为目标的尺度变化是通过在金字塔中移动它的层级来抵消的。直观地说,该属性使模型能够通过在位置和金字塔等级上扫描模型来检测大范围尺度内的目标。

Figure 1. (a) Using an image pyramid to build a feature pyramid. Features are computed on each of the image scales independently, which is slow. (b) Recent detection systems have opted to use only single scale features for faster detection. (c) An alternative is to reuse the pyramidal feature hierarchy computed by a ConvNet as if it were a featurized image pyramid. (d) Our proposed Feature Pyramid Network (FPN) is fast like (b) and (c), but more accurate. In this figure, feature maps are indicated by blue outlines and thicker outlines denote semantically stronger features.

图1。(a)使用图像金字塔构建特征金字塔。每个图像尺度上的特征都是独立计算的,速度很慢。(b)最近的检测系统选择只使用单一尺度特征进行更快的检测。(c)另一种方法是重用ConvNet计算的金字塔特征层次结构,就好像它是一个特征化的图像金字塔。(d)我们提出的特征金字塔网络(FPN)与(b)和(c)类似,但更准确。在该图中,特征映射用蓝色轮廓表示,较粗的轮廓表示语义上较强的特征。

Featurized image pyramids were heavily used in the era of hand-engineered features [5, 25]. They were so critical that object detectors like DPM [7] required dense scale sampling to achieve good results (e.g., 10 scales per octave). For recognition tasks, engineered features have largely been replaced with features computed by deep convolutional networks (ConvNets) [19, 20]. Aside from being capable of representing higher-level semantics, ConvNets are also more robust to variance in scale and thus facilitate recognition from features computed on a single input scale [15, 11, 29] (Fig. 1(b)). But even with this robustness, pyramids are still needed to get the most accurate results. All recent top entries in the ImageNet [33] and COCO [21] detection challenges use multi-scale testing on featurized image pyramids (e.g., [16, 35]). The principal advantage of featurizing each level of an image pyramid is that it produces a multi-scale feature representation in which all levels are semantically strong, including the high-resolution levels.

特征化图像金字塔在手工设计的时代被大量使用[5,25]。它们非常关键,以至于像DPM[7]这样的目标检测器需要密集的尺度采样才能获得好的结果(例如每组10个尺度,octave含义参考SIFT特征)。对于识别任务,工程特征大部分已经被深度卷积网络(ConvNets)[19,20]计算的特征所取代。除了能够表示更高级别的语义,ConvNets对于尺度变化也更加鲁棒,从而有助于从单一输入尺度上计算的特征进行识别[15,11,29](图1(b))。但即使有这种鲁棒性,金字塔仍然需要得到最准确的结果。在ImageNet[33]和COCO[21]检测挑战中,最近的所有排名靠前的输入都使用了针对特征化图像金字塔的多尺度测试(例如[16,35])。对图像金字塔的每个层次进行特征化的主要优势在于它产生了多尺度的特征表示,其中所有层次上在语义上都很强,包括高分辨率层。
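
To make "10 scales per octave" concrete, here is a minimal sketch. It assumes that the scales are geometrically spaced, so that every octave (a factor of 2 in image size) is split into equal steps in log-scale. The function names `pyramid_scales` and `level_shift` are hypothetical and chosen only for illustration.

```python
import math


def pyramid_scales(scales_per_octave, num_octaves):
    """Resize factors for an image pyramid, from 1.0 down to 2**-num_octaves.

    Assumption: geometric spacing, i.e. consecutive levels differ by a
    constant factor of 2**(1 / scales_per_octave).
    """
    total_steps = scales_per_octave * num_octaves
    return [2.0 ** (-i / scales_per_octave) for i in range(total_steps + 1)]


def level_shift(scale_change, scales_per_octave):
    """How many pyramid levels offset a given change in object scale."""
    return scales_per_octave * math.log2(scale_change)


scales = pyramid_scales(scales_per_octave=10, num_octaves=3)
print(len(scales))           # number of images the detector has to process
print(scales[10])            # resize factor one full octave down
print(level_shift(2.0, 10))  # an object twice as large moves this many levels
```

With this spacing, three octaves already require 31 resized copies of the input, which illustrates why dense featurized image pyramids are expensive.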

Nevertheless, featurizing each level of an image pyramid has obvious limitations. Inference time increases considerably (e.g., by four times [11]), making this approach impractical for real applications. Moreover, training deep networks end-to-end on an image pyramid is infeasible in terms of memory, and so, if exploited, image pyramids are used only at test time [15, 11, 16, 35], which creates an inconsistency between train/test-time inference. For these reasons, Fast and Faster R-CNN [11, 29] opt to not use featurized image pyramids under default settings.

尽管如此,特征化图像金字塔的每个层次都具有明显的局限性。推断时间显著增加(例如,四倍[11]),使得这种方法在实际应用中不切实际。此外,在图像金字塔上端对端地训练深度网络在内存方面是不可行的,所以如果被采用,图像金字塔仅在测试时被使用[15,11,16,35],这造成了训练/测试时推断的不一致性。出于这些原因,Fast和Faster R-CNN[11,29]选择在默认设置下不使用特征化图像金字塔。

However, image pyramids are not the only way to compute a multi-scale feature representation. A deep ConvNet computes a feature hierarchy layer by layer, and with subsampling layers the feature hierarchy has an inherent multi-scale, pyramidal shape. This in-network feature hierarchy produces feature maps of different spatial resolutions, but introduces large semantic gaps caused by different depths. The high-resolution maps have low-level features that harm their representational capacity for object recognition.

但是,图像金字塔并不是计算多尺度特征表示的唯一方法。深层ConvNet逐层计算特征层级,而对于下采样层,特征层级具有内在的多尺度金字塔形状。这种网内特征层级产生不同空间分辨率的特征映射,但引入了由不同深度引起的较大的语义差异。高分辨率映射具有损害其目标识别表示能力的低级特征。

The Single Shot Detector (SSD) [22] is one of the first attempts at using a ConvNet’s pyramidal feature hierarchy as if it were a featurized image pyramid (Fig. 1(c)). Ideally, the SSD-style pyramid would reuse the multi-scale feature maps from different layers computed in the forward pass and thus come free of cost. But to avoid using low-level features SSD forgoes reusing already computed layers and instead builds the pyramid starting from high up in the network (e.g., conv4_3 of VGG nets [36]) and then by adding several new layers. Thus it misses the opportunity to reuse the higher-resolution maps of the feature hierarchy. We show that these are important for detecting small objects.

单次检测器(SSD)[22]是首先尝试使用ConvNet的金字塔特征层级中的一个,好像它是一个特征化的图像金字塔(图1(c))。理想情况下,SSD风格的金字塔将重用正向传递中从不同层中计算的多尺度特征映射,因此是零成本的。但为了避免使用低级特征,SSD放弃重用已经计算好的图层,而从网络中的最高层开始构建金字塔(例如,VGG网络的conv4_3[36]),然后添加几个新层。因此它错过了重用特征层级的更高分辨率映射的机会。我们证明这些对于检测小目标很重要。

The goal of this paper is to naturally leverage the pyramidal shape of a ConvNet’s feature hierarchy while creating a feature pyramid that has strong semantics at all scales. To achieve this goal, we rely on an architecture that combines low-resolution, semantically strong features with high-resolution, semantically weak features via a top-down pathway and lateral connections (Fig. 1(d)). The result is a feature pyramid that has rich semantics at all levels and is built quickly from a single input image scale. In other words, we show how to create in-network feature pyramids that can be used to replace featurized image pyramids without sacrificing representational power, speed, or memory.

本文的目标是自然地利用ConvNet特征层级的金字塔形状,同时创建一个在所有尺度上都具有强大语义的特征金字塔。为了实现这个目标,我们依赖于一种架构,它通过自顶向下的路径和横向连接,将低分辨率、语义强的特征与高分辨率、语义弱的特征相结合(图1(d))。其结果是一个特征金字塔,在所有级别都具有丰富的语义,并且可以从单个输入图像尺度上进行快速构建。换句话说,我们展示了如何创建网络中的特征金字塔,可以用来代替特征化的图像金字塔,而不牺牲表示能力,速度或内存。

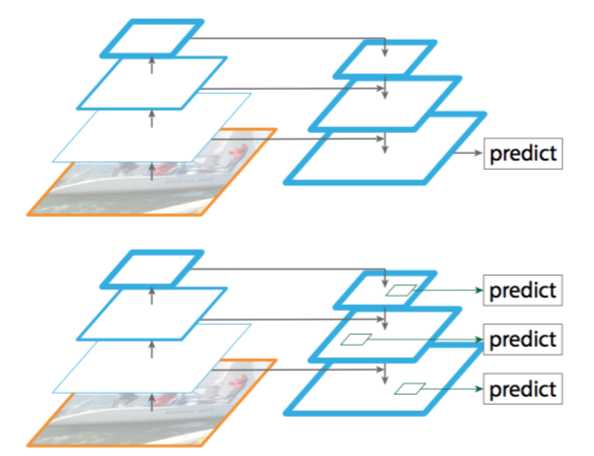

Similar architectures adopting top-down and skip connections are popular in recent research [28, 17, 8, 26]. Their goals are to produce a single high-level feature map of a fine resolution on which the predictions are to be made (Fig. 2 top). On the contrary, our method leverages the architecture as a feature pyramid where predictions (e.g., object detections) are independently made on each level (Fig. 2 bottom). Our model echoes a featurized image pyramid, which has not been explored in these works.

最近的研究[28,17,8,26]中流行采用自顶向下和跳跃连接的类似架构。他们的目标是生成具有高分辨率的单个高级特征映射,并在其上进行预测(图2顶部)。相反,我们的方法利用这个架构作为特征金字塔,其中预测(例如目标检测)在每个级别上独立进行(图2底部)。我们的模型反映了一个特征化的图像金字塔,这在这些研究中还没有探索过。

Figure 2. Top: a top-down architecture with skip connections, where predictions are made on the finest level (e.g., [28]). Bottom: our model that has a similar structure but leverages it as a feature pyramid, with predictions made independently at all levels.

图2。顶部:带有跳跃连接的自顶向下的架构,在最精细的层级上进行预测(例如,[28])。底部:我们的模型具有类似的结构,但将其用作特征金字塔,并在各个层级上独立进行预测。

We evaluate our method, called a Feature Pyramid Network (FPN), in various systems for detection and segmentation [11, 29, 27]. Without bells and whistles, we report a state-of-the-art single-model result on the challenging COCO detection benchmark [21] simply based on FPN and a basic Faster R-CNN detector [29], surpassing all existing heavily-engineered single-model entries of competition winners. In ablation experiments, we find that for bounding box proposals, FPN significantly increases the Average Recall (AR) by 8.0 points; for object detection, it improves the COCO-style Average Precision (AP) by 2.3 points and PASCAL-style AP by 3.8 points, over a strong single-scale baseline of Faster R-CNN on ResNets [16]. Our method is also easily extended to mask proposals and improves both instance segmentation AR and speed over state-of-the-art methods that heavily depend on image pyramids.

我们评估了我们称为特征金字塔网络(FPN)的方法,其在各种系统中用于检测和分割[11,29,27]。没有任何不必要的东西,我们在具有挑战性的COCO检测基准数据集上报告了最新的单模型结果,仅仅基于FPN和基本的Faster R-CNN检测器[29],就超过了竞赛获奖者所有现存的严重工程化的单模型竞赛输入。在消融实验中,我们发现对于边界框提议,FPN将平均召回率(AR)显著增加了8个百分点;对于目标检测,它将COCO型的平均精度(AP)提高了2.3个百分点,PASCAL型AP提高了3.8个百分点,超过了ResNet[16]上Faster R-CNN强大的单尺度基准线。我们的方法也很容易扩展掩模提议,改进实例分隔AR,加速严重依赖图像金字塔的最先进方法。

In addition, our pyramid structure can be trained end-to-end with all scales and is used consistently at train/test time, which would be memory-infeasible using image pyramids. As a result, FPNs are able to achieve higher accuracy than all existing state-of-the-art methods. Moreover, this improvement is achieved without increasing testing time over the single-scale baseline. We believe these advances will facilitate future research and applications. Our code will be made publicly available.

另外,我们的金字塔结构可以通过所有尺度进行端对端训练,并且在训练/测试时一致地使用,这在使用图像金字塔时是内存不可行的。因此,FPN能够比所有现有的最先进方法获得更高的准确度。此外,这种改进是在不增加单尺度基准测试时间的情况下实现的。我们相信这些进展将有助于未来的研究和应用。我们的代码将公开发布。

2.Related Work

2.相关工作

Hand-engineered features and early neural networks. SIFT features [25] were originally extracted at scale-space extrema and used for feature point matching. HOG features [5], and later SIFT features as well, were computed densely over entire image pyramids. These HOG and SIFT pyramids have been used in numerous works for image classification, object detection, human pose estimation, and more. There has also been significant interest in computing featurized image pyramids quickly. Dollár et al. [6] demonstrated fast pyramid computation by first computing a sparsely sampled (in scale) pyramid and then interpolating missing levels. Before HOG and SIFT, early work on face detection with ConvNets [38, 32] computed shallow networks over image pyramids to detect faces across scales.

手工设计特征和早期神经网络。SIFT特征[25]最初是从尺度空间极值中提取的,用于特征点匹配。HOG特征[5],以及后来的SIFT特征,都是在整个图像金字塔上密集计算的。这些HOG和SIFT金字塔已在许多工作中得到了应用,用于图像分类,目标检测,人体姿势估计等。这对快速计算特征化图像金字塔也很有意义。Dollar等人[6]通过先计算一个稀疏采样(尺度)金字塔,然后插入缺失的层级,从而演示了快速金字塔计算。在HOG和SIFT之前,使用ConvNet[38,32]的早期人脸检测工作计算了图像金字塔上的浅网络,以检测跨尺度的人脸。

Deep ConvNet object detectors. With the development of modern deep ConvNets [19], object detectors like OverFeat [34] and R-CNN [12] showed dramatic improvements in accuracy. OverFeat adopted a strategy similar to early neural network face detectors by applying a ConvNet as a sliding window detector on an image pyramid. R-CNN adopted a region proposal-based strategy [37] in which each proposal was scale-normalized before classifying with a ConvNet. SPPnet [15] demonstrated that such region-based detectors could be applied much more efficiently on feature maps extracted on a single image scale. Recent and more accurate detection methods like Fast R-CNN [11] and Faster R-CNN [29] advocate using features computed from a single scale, because it offers a good trade-off between accuracy and speed. Multi-scale detection, however, still performs better, especially for small objects.

Deep ConvNet目标检测器。随着现代深度卷积网络[19]的发展,像OverFeat[34]和R-CNN[12]这样的目标检测器在精度上显示出了显著的提高。OverFeat采用了一种类似于早期神经网络人脸检测器的策略,通过在图像金字塔上应用ConvNet作为滑动窗口检测器。R-CNN采用了基于区域提议的策略[37],其中每个提议在用ConvNet进行分类之前都进行了尺度归一化。SPPnet[15]表明,这种基于区域的检测器可以更有效地应用于在单个图像尺度上提取的特征映射。最近更准确的检测方法,如Fast R-CNN[11]和Faster R-CNN[29]提倡使用从单一尺度计算出的特征,因为它提供了精确度和速度之间的良好折衷。然而,多尺度检测性能仍然更好,特别是对于小型目标。

Methods using multiple layers. A number of recent approaches improve detection and segmentation by using different layers in a ConvNet. FCN [24] sums partial scores for each category over multiple scales to compute semantic segmentations. Hypercolumns [13] uses a similar method for object instance segmentation. Several other approaches (HyperNet [18], ParseNet [23], and ION [2]) concatenate features of multiple layers before computing predictions, which is equivalent to summing transformed features. SSD [22] and MS-CNN [3] predict objects at multiple layers of the feature hierarchy without combining features or scores.

使用多层的方法。一些最近的方法通过使用ConvNet中的不同层来改进检测和分割。FCN[24]将多个尺度上的每个类别的部分分数相加以计算语义分割。Hypercolumns[13]使用类似的方法进行目标实例分割。在计算预测之前,其他几种方法(HyperNet[18],ParseNet[23]和ION[2])将多个层的特征连接起来,这相当于累加转换后的特征。SSD[22]和MS-CNN[3]可预测特征层级中多个层的目标,而不需要组合特征或分数。
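
The claim that concatenating features before prediction is equivalent to summing transformed features can be checked with a small sketch. It assumes that the prediction is a single linear map (for example a 1x1 convolution evaluated at one spatial position) and that both feature vectors come from the same position. All names and numbers below are made up for illustration.

```python
def matvec(weights, vec):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weights]


# Two feature vectors taken from different layers at the same position.
feat_a = [1.0, 2.0]
feat_b = [3.0, 4.0, 5.0]

# One linear predictor applied to the concatenated feature [feat_a, feat_b].
weights = [
    [1.0, 0.0, 2.0, 1.0, 0.0],
    [0.5, 1.0, 0.0, 0.0, 2.0],
]
concat_pred = matvec(weights, feat_a + feat_b)

# The same predictor split column-wise into one transform per layer.
w_a = [row[:len(feat_a)] for row in weights]
w_b = [row[len(feat_a):] for row in weights]
sum_pred = [p + q for p, q in zip(matvec(w_a, feat_a), matvec(w_b, feat_b))]

print(concat_pred)
print(sum_pred)
```

Both lists hold the same values, because a linear map on a concatenation splits into one linear map per input followed by a sum.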

There are recent methods exploiting lateral/skip connections that associate low-level feature maps across resolutions and semantic levels, including U-Net [31] and SharpMask [28] for segmentation, Recombinator networks [17] for face detection, and Stacked Hourglass networks [26] for keypoint estimation. Ghiasi et al. [8] present a Laplacian pyramid representation for FCNs to progressively refine segmentation. Although these methods adopt architectures with pyramidal shapes, they are unlike featurized image pyramids [5, 7, 34], where predictions are made independently at all levels (see Fig. 2). In fact, for the pyramidal architecture in Fig. 2 (top), image pyramids are still needed to recognize objects across multiple scales [28].

最近有一些方法利用横向/跳跃连接将跨分辨率和语义层次的低级特征映射关联起来,包括用于分割的U-Net[31]和SharpMask[28],Recombinator网络[17]用于人脸检测以及Stacked Hourglass网络[26]用于关键点估计。Ghiasi等人[8]为FCN提出拉普拉斯金字塔表示,以逐步细化分割。尽管这些方法采用的是金字塔形状的架构,但它们不同于特征化的图像金字塔[5,7,34],其中所有层次上的预测都是独立进行的,参见图2。事实上,对于图2(顶部)中的金字塔结构,图像金字塔仍然需要跨多个尺度上识别目标[28]。

3.Feature Pyramid Networks

3.特征金字塔网络

Our goal is to leverage a ConvNet’s pyramidal feature hierarchy, which has semantics from low to high levels, and build a feature pyramid with high-level semantics throughout. The resulting Feature Pyramid Network is general-purpose and in this paper we focus on sliding window proposers (Region Proposal Network, RPN for short) [29] and region-based detectors (Fast R-CNN) [11]. We also generalize FPNs to instance segmentation proposals in Sec. 6.

我们的目标是利用ConvNet的金字塔特征层级,该层次结构具有从低到高的语义,并在整个过程中构建具有高级语义的特征金字塔。由此产生的特征金字塔网络是通用的,在本文中,我们侧重于滑动窗口提议(Region Proposal Network,简称RPN)[29]和基于区域的检测器(Fast R-CNN)[11]。在第6节中我们还将FPN泛化到实例细分提议。

Our method takes a single-scale image of an arbitrary size as input, and outputs proportionally sized feature maps at multiple levels, in a fully convolutional fashion. This process is independent of the backbone convolutional architectures (e.g., [19, 36, 16]), and in this paper we present results using ResNets [16]. The construction of our pyramid involves a bottom-up pathway, a top-down pathway, and lateral connections, as introduced in the following.

我们的方法以任意大小的单尺度图像作为输入,并以全卷积的方式输出多层适当大小的特征映射。这个过程独立于主卷积体系结构(例如[19,36,16]),在本文中,我们呈现了使用ResNets[16]的结果。如下所述,我们的金字塔结构包括自下而上的路径,自上而下的路径和横向连接。

Bottom-up pathway. The bottom-up pathway is the feed-forward computation of the backbone ConvNet, which computes a feature hierarchy consisting of feature maps at several scales with a scaling step of 2. There are often many layers producing output maps of the same size and we say these layers are in the same network stage. For our feature pyramid, we define one pyramid level for each stage. We choose the output of the last layer of each stage as our reference set of feature maps, which we will enrich to create our pyramid. This choice is natural since the deepest layer of each stage should have the strongest features.

自下而上的路径。自下向上的路径是主ConvNet的前馈计算,其计算由尺度步长为2的多尺度特征映射组成的特征层级。通常有许多层产生相同大小的输出映射,并且我们认为这些层位于相同的网络阶段。对于我们的特征金字塔,我们为每个阶段定义一个金字塔层。我们选择每个阶段的最后一层的输出作为我们的特征映射参考集,我们将丰富它来创建我们的金字塔。这种选择是自然的,因为每个阶段的最深层应具有最强大的特征。

Specifically, for ResNets [16] we use the feature activations output by each stage’s last residual block. We denote the output of these last residual blocks as \(\{C_2, C_3, C_4, C_5\}\) for conv2, conv3, conv4, and conv5 outputs, and note that they have strides of {4, 8, 16, 32} pixels with respect to the input image. We do not include conv1 into the pyramid due to its large memory footprint.

具体而言,对于ResNets[16],我们使用每个阶段的最后一个残差块输出的特征激活。对于conv2,conv3,conv4和conv5输出,我们将这些最后残差块的输出表示为\(\{C_2, C_3, C_4, C_5\}\),并注意相对于输入图像它们的步长为{4,8,16,32}个像素。由于其庞大的内存占用,我们不会将conv1纳入金字塔。
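
The strides quoted above fix the spatial size of each bottom-up feature map. A minimal sketch follows; it assumes that sizes round up when the input is not a multiple of the stride, which depends on the padding scheme of the actual backbone. The input size 800 x 1216 is an arbitrary example and `feature_map_sizes` is a hypothetical helper.

```python
# Strides of the last residual block of each stage, as stated in the text.
STRIDES = {"C2": 4, "C3": 8, "C4": 16, "C5": 32}


def feature_map_sizes(height, width):
    """Spatial size (rows, cols) of each bottom-up map for a given input.

    Assumption: ceiling division, i.e. the backbone pads so that sizes
    round up; a real network may round differently.
    """
    return {
        name: (-(-height // stride), -(-width // stride))
        for name, stride in STRIDES.items()
    }


sizes = feature_map_sizes(800, 1216)
for name, (rows, cols) in sizes.items():
    print(name, rows, cols)
```

Each level has half the height and width of the previous one, which matches the scaling step of 2 described for the bottom-up pathway.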

Top-down pathway and lateral connections. The top-down pathway hallucinates higher resolution features by upsampling spatially coarser, but semantically stronger, feature maps from higher pyramid levels. These features are then enhanced with features from the bottom-up pathway via lateral connections. Each lateral connection merges feature maps of the same spatial size from the bottom-up pathway and the top-down pathway. The bottom-up feature map is of lower-level semantics, but its activations are more accurately localized as it was subsampled fewer times.

自顶向下的路径和横向连接。自顶向下的路径通过上采样空间上更粗糙但在语义上更强的来自较高金字塔等级的特征映射来幻化更高分辨率的特征。这些特征随后通过来自自下而上路径上的特征经由横向连接进行增强。每个横向连接合并来自自下而上路径和自顶向下路径的具有相同空间大小的特征映射。自下而上的特征映射具有较低级别的语义,但其激活可以更精确地定位,因为它被下采样的次数更少。
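
One merge step of the top-down pathway can be sketched in plain Python. This is only an illustration under stated assumptions: the passage above does not say how the coarser map is upsampled or how the two maps are combined, so the sketch assumes nearest-neighbor upsampling by a factor of 2 and element-wise addition, works on a single channel, and leaves out any learned convolution. The function names are hypothetical.

```python
def upsample_nearest_2x(fmap):
    """Double height and width by repeating every value (nearest neighbor)."""
    out = []
    for row in fmap:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def merge_level(top_down, bottom_up):
    """Merge a coarse top-down map with a finer bottom-up map.

    Assumption: the merge is an element-wise sum of maps that have the
    same spatial size after upsampling.
    """
    upsampled = upsample_nearest_2x(top_down)
    if len(upsampled) != len(bottom_up) or len(upsampled[0]) != len(bottom_up[0]):
        raise ValueError("lateral connection needs maps of the same spatial size")
    return [
        [u + b for u, b in zip(up_row, bu_row)]
        for up_row, bu_row in zip(upsampled, bottom_up)
    ]


coarse = [[1.0, 2.0],
          [3.0, 4.0]]                   # semantically stronger, 2 x 2
fine = [[10.0] * 4 for _ in range(4)]   # better localized, 4 x 4
merged = merge_level(coarse, fine)
for row in merged:
    print(row)
```

Repeating this step from the coarsest level downward yields one merged map per pyramid level, each of which can then be used for prediction.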

Unknown words

| English | Chinese |
|---|---|
| authentication token | 身份令牌 |
| authentication | [o:thentikeition] 认证 |
| token | [‘touken] 象征,记号,代币 |
