Chapter 25: Deploying Prometheus on Kubernetes for Cluster Resource Monitoring

Posted by 青青子衿悠悠我心



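I. Monitoring Metrics

#1. Cluster monitoring

1) Node resource utilization
2) Number of nodes
3) Running pods

#2. Pod monitoring

1) Container metrics
2) Application metrics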
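As a rough illustration, each monitoring item above can be expressed as a PromQL query against the Prometheus HTTP API. The metric names are assumptions: `node_cpu_seconds_total` and `node_memory_MemAvailable_bytes` come from node_exporter 0.16 or later (older releases use `node_cpu` and `node_memory_MemAvailable`), the `pod`/`container` labels on cAdvisor metrics assume Kubernetes 1.16 or later (older clusters use `pod_name`/`container_name`), and the job names match the scrape jobs defined in the ConfigMap later in this chapter. The `QUERIES` names and `query_url` helper are hypothetical.

```python
from urllib.parse import urlencode

# Example PromQL per monitoring item (assumed metric and label names; adjust
# them to the exporter and kubelet versions running in your cluster).
QUERIES = {
    # Cluster monitoring
    "node_cpu_utilization": '1 - avg by (instance) (rate(node_cpu_seconds_total{mode="idle"}[5m]))',
    "node_memory_utilization": "1 - node_memory_MemAvailable_bytes / node_memory_MemTotal_bytes",
    "node_count": 'count(up{job="kubernetes-nodes"})',
    "running_pods": 'count(count by (namespace, pod) (container_memory_working_set_bytes{job="kubernetes-cadvisor", container!=""}))',
    # Pod monitoring
    "container_cpu": 'sum by (namespace, pod) (rate(container_cpu_usage_seconds_total{job="kubernetes-cadvisor", container!=""}[5m]))',
    "application_targets_up": 'up{job="kubernetes-pods"}',
}


def query_url(base_url: str, expr: str) -> str:
    """Build an instant-query URL for the Prometheus HTTP API (/api/v1/query)."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': expr})}"
```

For example, `query_url("http://<node-IP>:30003", QUERIES["node_count"])` yields a URL you can open in a browser or fetch with any HTTP client once the Prometheus Service below is running.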

II. Deploying Prometheus

1. Create the prometheus directory

```shell
[root@kubernetes-master-001 ~]# mkdir -p monitor && cd monitor/
[root@kubernetes-master-001 ~/monitor]# mkdir -p promethus && cd promethus
```

2. Create the Deployment file

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# vi promethus_deploy.yaml
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      containers:
      - image: prom/prometheus:v2.0.0
        name: prometheus
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus"
        - "--storage.tsdb.retention=24h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: "/prometheus"
          name: data
        - mountPath: "/etc/prometheus"
          name: config-volume
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
          limits:
            cpu: 500m
            memory: 2500Mi
      serviceAccountName: prometheus
      volumes:
      - name: data
        emptyDir: {}
      - name: config-volume
        configMap:
          name: prometheus-config
```
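The Deployment keeps 24 hours of data (`--storage.tsdb.retention=24h`) in an `emptyDir` volume, which lives only as long as the Pod: deleting or rescheduling the Pod discards the data. To size a persistent volume instead, the Prometheus storage documentation gives the rule of thumb `retention_time_seconds * ingested_samples_per_second * bytes_per_sample`, with roughly 1 to 2 bytes per sample. A minimal sketch of that arithmetic; the ingestion rate you plug in is your own measurement, not something this setup reports:

```python
def estimate_tsdb_bytes(retention_seconds: int, samples_per_second: float,
                        bytes_per_sample: float = 2.0) -> float:
    """Rough disk estimate from the Prometheus storage docs:
    retention_time_seconds * ingested_samples_per_second * bytes_per_sample.
    The 2-byte default is the upper end of the documented 1-2 bytes/sample.
    """
    return retention_seconds * samples_per_second * bytes_per_sample


# Matches --storage.tsdb.retention=24h in the Deployment above
RETENTION_24H = 24 * 60 * 60
```

With a hypothetical 10,000 samples per second, `estimate_tsdb_bytes(RETENTION_24H, 10_000)` gives 1,728,000,000 bytes, about 1.61 GiB, before any headroom for compaction or the write-ahead log.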

3. Create the Service file

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# vi promethus_svc.yaml
```

```yaml
kind: Service
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30003
  selector:
    app: prometheus
```
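A NodePort Service opens the same port on every node, and the value must fall inside kube-apiserver's `--service-node-port-range`, which defaults to 30000-32767; 30003 here and 31672 for node-exporter later both satisfy it. A small sketch that validates a port and lists the URLs to try; the helper names and node IPs are hypothetical:

```python
# kube-apiserver default for --service-node-port-range
DEFAULT_NODE_PORT_RANGE = (30000, 32767)


def node_port_ok(port: int, port_range=DEFAULT_NODE_PORT_RANGE) -> bool:
    """True if the port lies inside the (inclusive) NodePort range."""
    low, high = port_range
    return low <= port <= high


def node_urls(node_ips, node_port, port_range=DEFAULT_NODE_PORT_RANGE):
    """With the default externalTrafficPolicy (Cluster), any node IP reaches the Service."""
    if not node_port_ok(node_port, port_range):
        raise ValueError(f"nodePort {node_port} is outside {port_range}")
    return [f"http://{ip}:{node_port}" for ip in node_ips]
```

For example, `node_urls(["192.0.2.10", "192.0.2.11"], 30003)` returns one Prometheus URL per node, while `node_urls([...], 9090)` raises because 9090 is the container port, not a valid NodePort.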

4. Create the ConfigMap file

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# vi configmap.yaml
```

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: kube-system
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    scrape_configs:
    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
    - job_name: 'kubernetes-nodes'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics
    - job_name: 'kubernetes-cadvisor'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
    - job_name: 'kubernetes-services'
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__address__]
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115  # placeholder: a Blackbox Exporter must be deployed separately
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-ingresses'
      kubernetes_sd_configs:
      - role: ingress
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
        regex: (.+);(.+);(.+)
        replacement: ${1}://${2}${3}
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115  # placeholder: a Blackbox Exporter must be deployed separately
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_ingress_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_ingress_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name
```
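The least obvious step in this ConfigMap is the `__address__` rewrite in the `kubernetes-service-endpoints` and `kubernetes-pods` jobs. Prometheus joins the `source_labels` values with `;`, matches the fully anchored `regex`, and writes the expanded `replacement` into `target_label`; if the regex does not match, the label is left unchanged. The sketch below emulates the `replace` and `keep` actions with Python's `re` module, which treats these particular patterns the same way as Prometheus's RE2 engine. It is an illustration of the semantics, not Prometheus code, and the function names are hypothetical.

```python
import re


def relabel_replace(source_values, regex, replacement, separator=";"):
    """Emulate a Prometheus 'replace' relabel step.

    Returns the new target-label value, or None when the anchored regex
    does not match (Prometheus then leaves the target label unchanged).
    """
    joined = separator.join(source_values)
    m = re.fullmatch(regex, joined)  # Prometheus anchors regexes at both ends
    if m is None:
        return None
    # Translate Prometheus-style $1 / ${1} references to Python's \g<1>
    py_repl = re.sub(r"\$\{?(\d+)\}?", r"\\g<\1>", replacement)
    return m.expand(py_repl)


def relabel_keep(source_values, regex, separator=";"):
    """Emulate a 'keep' step: the target survives only if the regex matches."""
    return re.fullmatch(regex, separator.join(source_values)) is not None
```

For a Pod discovered at `10.244.1.5:8080` and annotated with `prometheus.io/port: "9100"`, `relabel_replace(["10.244.1.5:8080", "9100"], r"([^:]+)(?::\d+)?;(\d+)", "$1:$2")` yields `10.244.1.5:9100`; without the annotation the joined string ends in `;`, the regex fails, and the discovered address is scraped as-is.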

5. Create the RBAC file

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# vi rabc-setup.yaml
```

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  - networking.k8s.io   # Ingress lives here; extensions/v1beta1 Ingress was removed in Kubernetes 1.22
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: kube-system
```
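Once these objects exist, `kubectl auth can-i ... --as=system:serviceaccount:kube-system:prometheus` reports whether the ServiceAccount actually holds each permission the scrape jobs need (your own kubeconfig user must be allowed to impersonate, as cluster-admin is). A sketch that builds and runs those checks; the `CHECKS` list mirrors the ClusterRole above, `nodes/proxy` is left out because `can-i` would read it as a node named `proxy`, and the helper names are hypothetical:

```python
import shutil
import subprocess

SA = "system:serviceaccount:kube-system:prometheus"

# (verb, resource or non-resource URL) pairs granted by the ClusterRole above
CHECKS = [
    ("list", "nodes"),
    ("watch", "endpoints"),
    ("list", "pods"),
    ("list", "services"),
    ("list", "ingresses"),
    ("get", "/metrics"),
]


def can_i_argv(verb, resource, sa=SA):
    """Command line for one impersonated permission check."""
    return ["kubectl", "auth", "can-i", verb, resource, f"--as={sa}"]


def run_checks():
    """Run every check and print kubectl's yes/no answer (needs cluster access)."""
    if shutil.which("kubectl") is None:
        raise RuntimeError("kubectl not found on PATH")
    for verb, resource in CHECKS:
        result = subprocess.run(can_i_argv(verb, resource),
                                capture_output=True, text=True)
        print(f"{verb:6} {resource:12} {result.stdout.strip()}")
```

Calling `run_checks()` on the master node should print `yes` on every line; a `no` points at a missing rule or a binding in the wrong namespace.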

6. Create the resources and verify

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# kubectl create -f .
configmap/prometheus-config created
deployment.apps/prometheus created
service/prometheus created
clusterrole.rbac.authorization.k8s.io/prometheus created
serviceaccount/prometheus created
clusterrolebinding.rbac.authorization.k8s.io/prometheus created

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get pod -n kube-system
NAME                         READY   STATUS    RESTARTS   AGE
prometheus-68546b8d9-9mgft   1/1     Running   0          3m30s

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get svc -n kube-system
NAME             TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                  AGE
kube-dns         ClusterIP   10.96.0.10      <none>        53/UDP,53/TCP,9153/TCP   17d
metrics-server   ClusterIP   10.98.147.122   <none>        443/TCP                  17h
prometheus       NodePort    10.99.251.153   <none>        9090:30003/TCP           12m
```

III. Deploying Node Exporter

1. Write the YAML file

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# vi node_expoter.yaml
```

```yaml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: kube-system
  labels:
    k8s-app: node-exporter
spec:
  selector:
    matchLabels:
      k8s-app: node-exporter
  template:
    metadata:
      labels:
        k8s-app: node-exporter
    spec:
      containers:
      - image: prom/node-exporter
        name: node-exporter
        ports:
        - containerPort: 9100
          protocol: TCP
          name: http
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: node-exporter
  name: node-exporter
  namespace: kube-system
  annotations:
    # The 'kubernetes-service-endpoints' job only keeps Services with this annotation
    prometheus.io/scrape: "true"
spec:
  ports:
  - name: http
    port: 9100
    nodePort: 31672
    protocol: TCP
  type: NodePort
  selector:
    k8s-app: node-exporter
```
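node-exporter serves its metrics in the Prometheus text exposition format, so a quick sanity check is to fetch `http://<node-IP>:31672/metrics` and look at a few samples. The parser below is a simplified sketch under stated assumptions: it skips `# HELP`/`# TYPE` comment lines, ignores optional timestamps, does not unescape label values, and does not handle a `}` inside a label value. The helper names are hypothetical.

```python
import re
from urllib.request import urlopen

# One sample line: metric_name{label="value",...} value [timestamp]
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?'
    r'\s+(?P<value>\S+)(?:\s+-?\d+)?$'
)
LABEL_RE = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_samples(text):
    """Return (name, labels, value) tuples from exposition-format text."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and HELP/TYPE comments
        m = SAMPLE_RE.match(line)
        if m is None:
            continue  # anything this simplified parser does not understand
        labels = dict(LABEL_RE.findall(m.group("labels") or ""))
        samples.append((m.group("name"), labels, float(m.group("value"))))
    return samples


def fetch_metrics(node_ip, port=31672):
    """Fetch raw metrics through the node-exporter NodePort."""
    with urlopen(f"http://{node_ip}:{port}/metrics", timeout=5) as resp:
        return resp.read().decode("utf-8")
```

For example, `[s for s in parse_samples(fetch_metrics("192.0.2.10")) if s[0] == "node_load1"]` (with a hypothetical node IP) shows the one-minute load average reported by that node.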

2. Deploy node_exporter and verify

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# kubectl create -f node_expoter.yaml
daemonset.apps/node-exporter created
service/node-exporter created

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get pod -n kube-system
NAME                         READY   STATUS    RESTARTS   AGE
node-exporter-49fkx          1/1     Running   0          89s
node-exporter-6wdpp          1/1     Running   0          89s
prometheus-68546b8d9-9mgft   1/1     Running   0          24m

[root@kubernetes-master-001 ~/monitor/promethus]# kubectl get svc -n kube-system
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                  AGE
kube-dns         ClusterIP   10.96.0.10       <none>        53/UDP,53/TCP,9153/TCP   17d
metrics-server   ClusterIP   10.98.147.122    <none>        443/TCP                  18h
node-exporter    NodePort    10.107.177.177   <none>        9100:31672/TCP           91s
prometheus       NodePort    10.99.251.153    <none>        9090:30003/TCP           24m
```

IV. Deploying Grafana

1. Create the grafana directory

```shell
[root@kubernetes-master-001 ~/monitor/promethus]# cd ..
[root@kubernetes-master-001 ~/monitor]# mkdir grafana && cd grafana
```

2. Write the Deployment file

```shell
[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-deploy.yaml
```

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana-core
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  selector:
    matchLabels:
      app: grafana
  replicas: 1
  template:
    metadata:
      labels:
        app: grafana
        component: core
    spec:
      containers:
      - image: grafana/grafana:4.2.0
        name: grafana-core
        imagePullPolicy: IfNotPresent
        resources:
          # keep request = limit to keep this container in guaranteed class
          limits:
            cpu: 100m
            memory: 100Mi
          requests:
            cpu: 100m
            memory: 100Mi
        env:
          # The following env variables set up basic auth with the default admin user and admin password.
          - name: GF_AUTH_BASIC_ENABLED
            value: "true"
          - name: GF_AUTH_ANONYMOUS_ENABLED
            value: "false"
          # - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          #   value: Admin
          # does not really work, because of template variables in exported dashboards:
          # - name: GF_DASHBOARDS_JSON_ENABLED
          #   value: "true"
        readinessProbe:
          httpGet:
            path: /login
            port: 3000
          # initialDelaySeconds: 30
          # timeoutSeconds: 1
        volumeMounts:
        - name: grafana-persistent-storage
          mountPath: /var
      volumes:
      - name: grafana-persistent-storage
        emptyDir: {}
```
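The `readinessProbe` above makes the kubelet send `GET /login` to port 3000 and count any status from 200 to 399 as success; the Pod only receives Service traffic after it passes (with the default `periodSeconds` of 10). The sketch below applies the same success rule from outside the cluster, for instance against `http://<node-IP>:30369/login`, where 30369 is the NodePort that happened to be assigned in this walkthrough. The helpers are hypothetical, and `get_status` is injectable so the polling logic can be tested without a network.

```python
import time
import urllib.error
import urllib.request


def http_status(url, timeout=1.0):
    """Return the HTTP status code, or None if no connection could be made."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:  # 4xx/5xx still carry a status code
        return err.code
    except (urllib.error.URLError, OSError):
        return None


def probe_ok(status):
    """Same success rule as a kubelet httpGet probe: 200 <= code < 400."""
    return status is not None and 200 <= status < 400


def wait_until_ready(url, attempts=30, period=10.0, get_status=http_status):
    """Poll like a readiness probe; True as soon as one attempt succeeds."""
    for _ in range(attempts):
        if probe_ok(get_status(url)):
            return True
        time.sleep(period)
    return False
```

Note that `urlopen` follows redirects on its own, so a redirect from `/login` would be reported with the final page's status.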

3. Write the Service file

```shell
[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-svc.yaml
```

```yaml
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  type: NodePort
  ports:
  - port: 3000
  selector:
    app: grafana
    component: core
```

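4. Write the Ingress file (the `kubernetes.io/ingress.class: nginx` annotation assumes an NGINX Ingress controller is already installed)

```shell
[root@kubernetes-master-001 ~/monitor/grafana]# vi grafana-ing.yaml
```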

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: grafana
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: nginx
spec:
  rules:
  - host: grafana.test.mydomain.com
    http:
      paths:
      - path: "/"
        pathType: Prefix
        backend:
          service:
            name: grafana
            port:
              number: 3000
```
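The Ingress controller routes by the HTTP `Host` header, so the rule can be tested before `grafana.test.mydomain.com` exists in DNS by sending a request to the controller's address with that header set explicitly. A sketch, assuming a hypothetical controller IP and port (use the NodePort instead of 80 if your controller is exposed that way):

```python
import urllib.request


def ingress_request(controller_ip, host, path="/", port=80):
    """Build a request that the Ingress controller will match against spec.rules[].host."""
    req = urllib.request.Request(f"http://{controller_ip}:{port}{path}")
    req.add_header("Host", host)  # must equal the host in the Ingress rule
    return req
```

Passing the result to `urllib.request.urlopen(req, timeout=5)` should return the Grafana login page (HTTP 200); a 404 from the controller usually means the host or path did not match any rule.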

5. Deploy and verify

```shell
[root@kubernetes-master-001 ~/monitor/grafana]# kubectl create -f .
deployment.apps/grafana-core created
ingress.networking.k8s.io/grafana created
service/grafana created

[root@kubernetes-master-001 ~/monitor/grafana]# kubectl get pod -n kube-system
NAME                              READY   STATUS    RESTARTS   AGE
grafana-core-85587c9c49-945lv     1/1     Running   0          3m28s
metrics-server-599cdf68d4-cbngr   1/1     Running   0          18h
node-exporter-49fkx               1/1     Running   0          41m
node-exporter-6wdpp               1/1     Running   0          41m
prometheus-68546b8d9-9mgft        1/1     Running   0          64m

[root@kubernetes-master-001 ~/monitor/grafana]# kubectl get svc -n kube-system
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                  AGE
grafana          NodePort    10.111.128.51    <none>        3000:30369/TCP           3m42s
kube-dns         ClusterIP   10.96.0.10       <none>        53/UDP,53/TCP,9153/TCP   17d
metrics-server   ClusterIP   10.98.147.122    <none>        443/TCP                  18h
node-exporter    NodePort    10.107.177.177   <none>        9100:31672/TCP           41m
prometheus       NodePort    10.99.251.153    <none>        9090:30003/TCP           64m
```

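V. Verifying the Deployment in a Browser

1. Prometheus access test: open http://<node-IP>:30003, the NodePort of the prometheus Service.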
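Besides checking Status → Targets in the web UI, the same health check can be scripted against the HTTP API: the built-in `up` series is 1 for targets whose last scrape succeeded and 0 for those that failed. A sketch, assuming Prometheus is reachable at a URL such as `http://<node-IP>:30003`; the helper names are hypothetical:

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def fetch_up(base_url):
    """Run the instant query `up` and return the decoded JSON response."""
    url = f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': 'up'})}"
    with urlopen(url, timeout=5) as resp:
        return json.load(resp)


def down_targets(payload):
    """Return (job, instance) for every target whose `up` sample is 0."""
    if payload.get("status") != "success":
        raise RuntimeError(f"query failed: {payload.get('error')}")
    down = []
    for series in payload["data"]["result"]:
        _timestamp, value = series["value"]  # instant vector: [unix_ts, "value as string"]
        if float(value) == 0:
            labels = series["metric"]
            down.append((labels.get("job"), labels.get("instance")))
    return down
```

An empty list from `down_targets(fetch_up("http://<node-IP>:30003"))` means every discovered target is being scraped successfully.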

2. Grafana access test

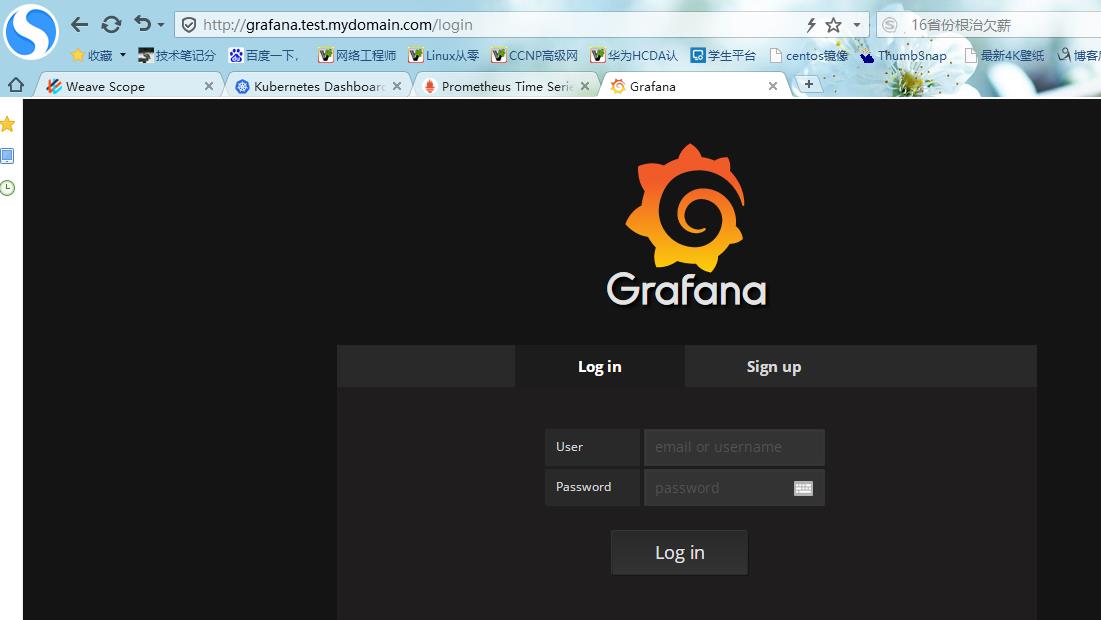

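#1. Open Grafana through the domain grafana.test.mydomain.com (the name must resolve to your Ingress controller). The default username and password are both admin.

#2. After logging in, the following page appears: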

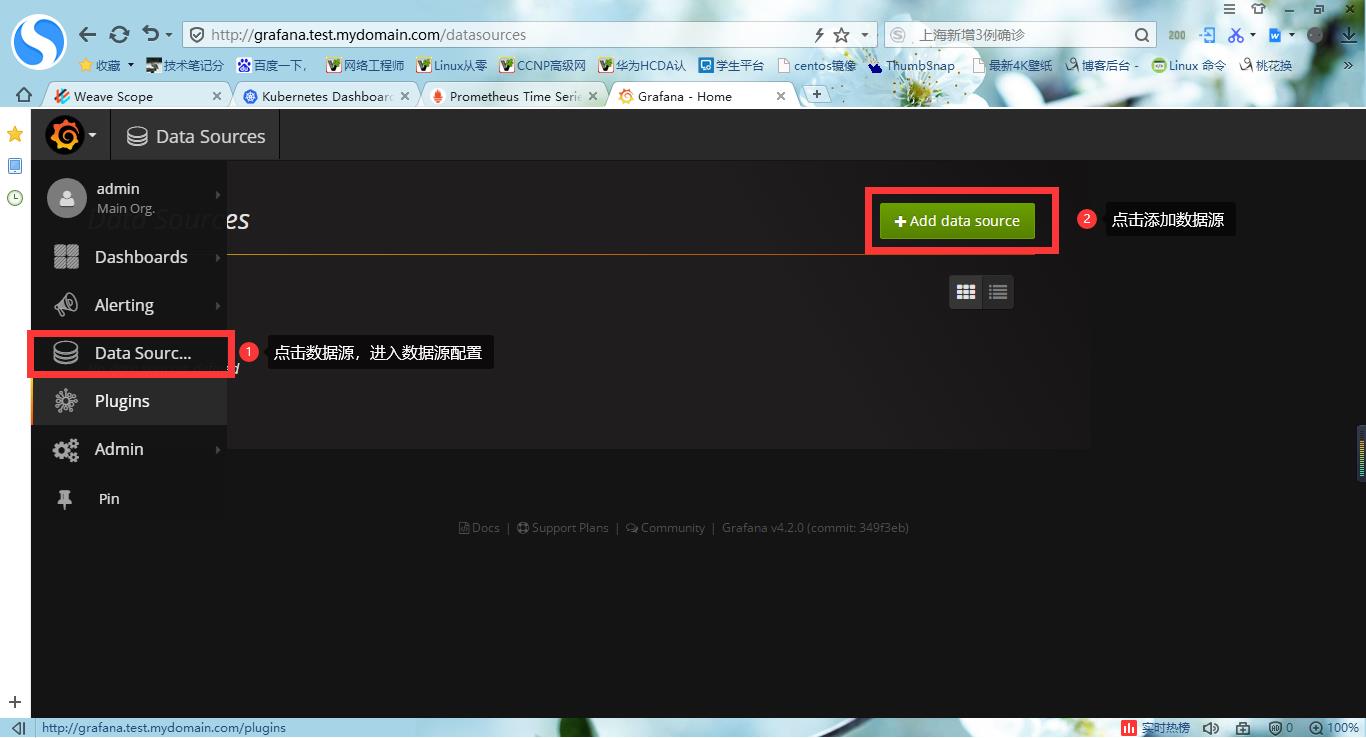

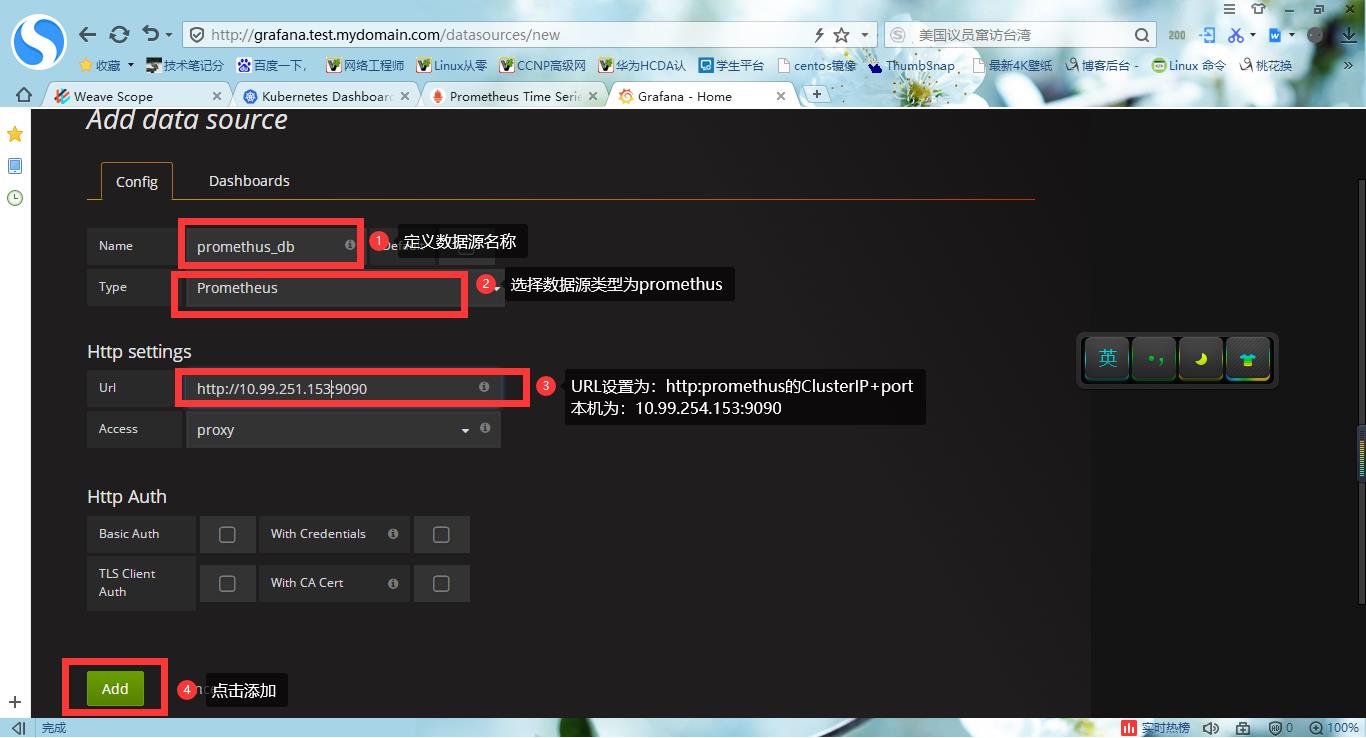

#3. Configure a data source of type Prometheus.
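The data source can also be created through Grafana's HTTP API (`POST /api/datasources`), using the admin credentials that the `GF_AUTH_BASIC_ENABLED` setting above allows over basic auth. In this sketch the data source name is hypothetical, and the URL `http://prometheus.kube-system.svc:9090` is an assumption that relies on cluster DNS resolving the prometheus Service; `access: proxy` makes the Grafana server, not the browser, query Prometheus.

```python
import base64
import json
import urllib.request


def basic_auth(user, password):
    """Value for the HTTP Authorization header using basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def datasource_payload(prom_url="http://prometheus.kube-system.svc:9090"):
    return {
        "name": "prometheus",  # hypothetical display name
        "type": "prometheus",
        "url": prom_url,
        "access": "proxy",     # Grafana's backend performs the queries
        "isDefault": True,
    }


def create_datasource(grafana_url, user="admin", password="admin", payload=None):
    """POST the data source definition and return Grafana's JSON reply."""
    body = json.dumps(payload or datasource_payload()).encode("utf-8")
    req = urllib.request.Request(
        f"{grafana_url.rstrip('/')}/api/datasources",
        data=body,
        method="POST",
        headers={"Content-Type": "application/json",
                 "Authorization": basic_auth(user, password)},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)
```

Calling `create_datasource("http://<node-IP>:30369")` once should be enough; a second call with the same name is rejected by Grafana because data source names must be unique.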

#4. Import a dashboard template to display the data.

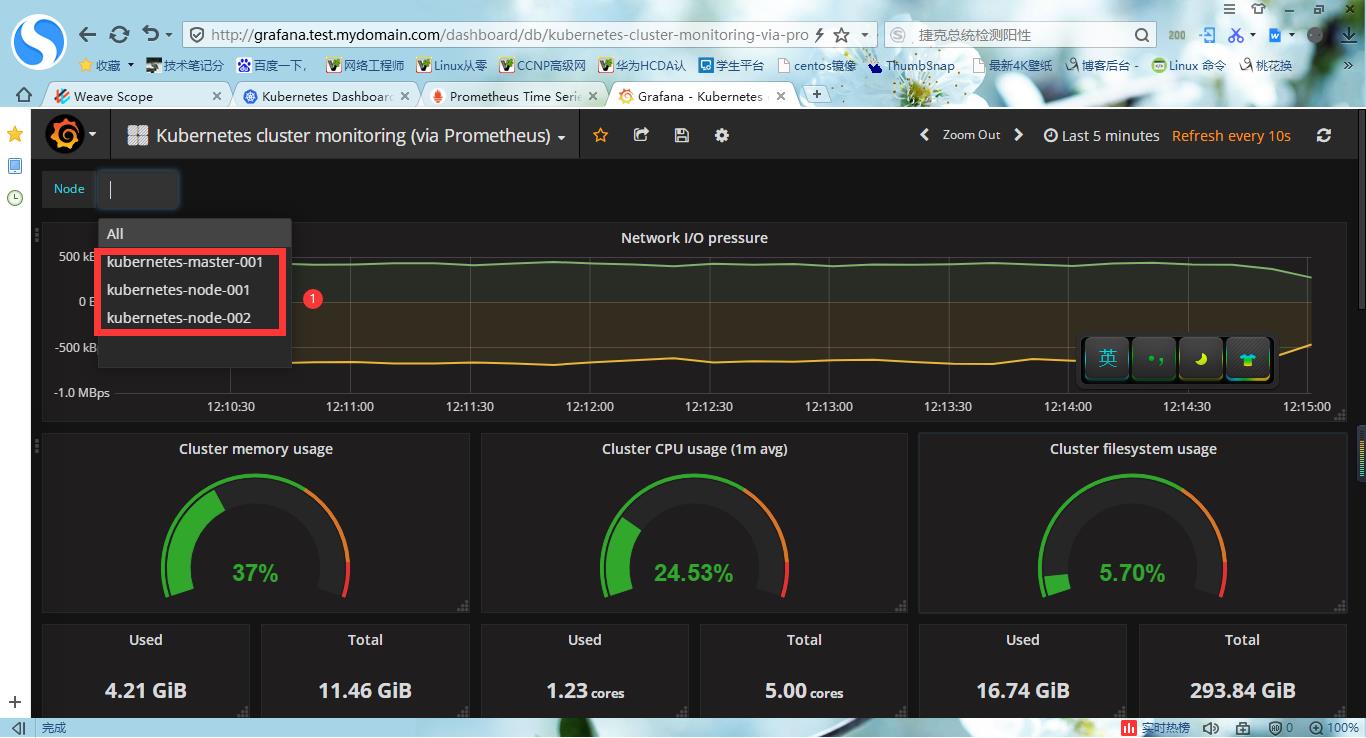

#5. View the dashboard.
