FocusBI: Using a Python Crawler to Prepare Data Sources for BI (Original)

Posted by FocusBI




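When implementing business intelligence for a company, most projects model and visualize internal data only. In the past very few companies had crawler engineers preparing external data for them, but over the last year Python crawlers have become extremely popular, and companies have started hiring crawler engineers to enrich their data sources.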

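I have used Python to scrape data from several websites, such as Meituan, Dianping, Yimutian and rental-listing sites. None of this data was used commercially; I crawled it out of personal interest, as practice. Here I take Yimutian as the example and crawl its data with the Scrapy framework.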

Yimutian (一亩田)

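Yimutian is an agricultural-products website that aggregates origin and market price information for most of China's farm products. It was founded by former Baidu staff, and in its early days it hired a large number of field representatives who went to rural areas to collect information and teach farmers to publish their product listings on Yimutian.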

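Yimutian started out as a website. Because of heavy crawler traffic, and because farmers working in the fields found a website inconvenient, it switched to an app and abandoned the web version; the Yimutian app has very strong anti-crawling protection. However, Yimutian still runs web versions of its origin-market quotes (产地行情) and wholesale-market quotes (市场行情), which also carry a large amount of information, so I chose to crawl the origin-market quotes.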

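The crawler is built on the Scrapy framework. I won't explain how the framework works or walk through a demo here; instead I go straight to the analysis approach and the source code for crawling Yimutian.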
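Since Yimutian is described above as actively fighting crawlers, it is worth keeping the crawl polite. A minimal `settings.py` sketch for the project might look like the following. The setting names are standard Scrapy settings, but every value, and the project name `mySpider` (inferred from the `mySpider.items` imports in the spiders), is an assumption rather than something taken from the original project.

```python
# settings.py (sketch): polite-crawling defaults for the mySpider project.
# Setting names are standard Scrapy settings; all values are assumptions.

BOT_NAME = "mySpider"

# Respect the robots.txt rules published by the site.
ROBOTSTXT_OBEY = True

# Keep pressure on hangqing.ymt.com low: few parallel requests, a base delay.
CONCURRENT_REQUESTS_PER_DOMAIN = 2
DOWNLOAD_DELAY = 1.0  # seconds between requests to the same domain

# Let AutoThrottle stretch the delay automatically when the server slows down.
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_START_DELAY = 1.0
AUTOTHROTTLE_MAX_DELAY = 30.0

# Retry transient failures a couple of times instead of hammering the server.
RETRY_ENABLED = True
RETRY_TIMES = 2
```

AutoThrottle adjusts the delay from observed latencies, so `DOWNLOAD_DELAY` acts as a floor rather than a fixed pace.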

How the Yimutian crawler was designed

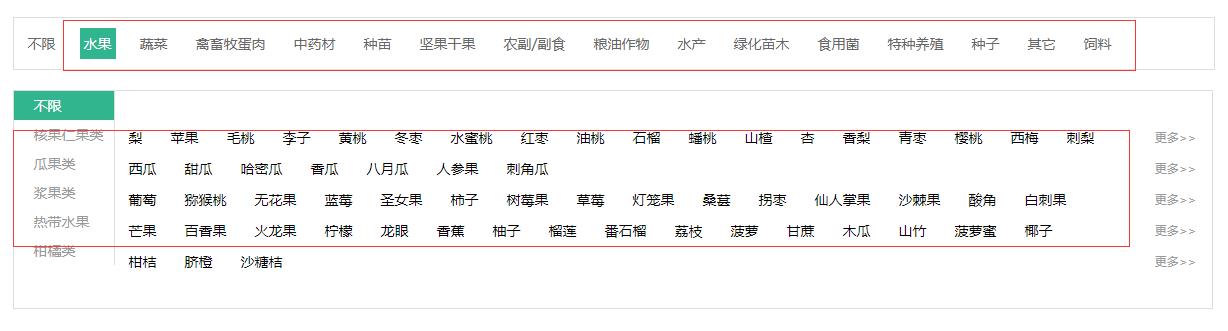

First open the Yimutian origin-market quotes page, http://hangqing.ymt.com/chandi, which lists the product categories.

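Click the Fruit category to see its many subcategories, then click Pear to open the price page for pears. That page lets you choose among all varieties and pick a specific region; selecting a province shows that day's prices and the price trend over the past month.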

I designed the crawl to follow this chain of pages, one level at a time.
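Following the chain level by level means each Scrapy callback fills in its own fields and hands a partially filled item to the next request through `meta`, which is why the spiders below call `deepcopy` before every hand-off. The pure-Python sketch below (no Scrapy; the deque "scheduler" and the sample names `fruit`, `pear`, `apple` are hypothetical stand-ins) shows what goes wrong without the copy: requests run later than they are queued, so every pending request would see the last values written into one shared dict.

```python
from collections import deque
from copy import deepcopy


def crawl(copy_items):
    """Simulate two callback levels that pass one item through request meta."""
    queue = deque()  # stands in for Scrapy's scheduler

    def parse_medium(meta):
        # Second level: runs later and reads whatever the item holds *now*.
        return [dict(meta["item"])]

    # First level: one parent item, one follow-up "request" per subcategory.
    item = {"big": "fruit"}
    for medium in ["pear", "apple"]:
        item["medium"] = medium
        payload = deepcopy(item) if copy_items else item
        queue.append((parse_medium, {"item": payload}))

    # The queued requests are "downloaded" only after the loop has finished.
    results = []
    while queue:
        callback, meta = queue.popleft()
        results.extend(callback(meta))
    return results


print(crawl(copy_items=True))
# [{'big': 'fruit', 'medium': 'pear'}, {'big': 'fruit', 'medium': 'apple'}]
print(crawl(copy_items=False))
# [{'big': 'fruit', 'medium': 'apple'}, {'big': 'fruit', 'medium': 'apple'}]
```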

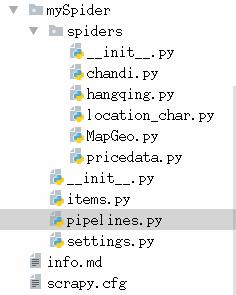

Yimutian crawler source code

1. First, create a Spider

2. Market quote data

Crawl the big, medium and small categories and the varieties: hangqing.py

```python
# -*- coding: utf-8 -*-
from copy import deepcopy

import scrapy
from mySpider.items import MyspiderItem


class HangqingSpider(scrapy.Spider):
    name = "hangqing"
    allowed_domains = ["hangqing.ymt.com"]
    start_urls = ["http://hangqing.ymt.com/"]

    # Big (top-level) categories
    def parse(self, response):
        a_list = response.xpath("//div[@id='purchase_wrapper']/div//a[@class='hide']")

        for a in a_list:
            items = MyspiderItem()
            items["ymt_bigsort_href"] = a.xpath("./@href").extract_first()
            items["ymt_bigsort_id"] = items["ymt_bigsort_href"].replace("http://hangqing.ymt.com/common/nav_chandi_", "")
            items["ymt_bigsort_name"] = a.xpath("./text()").extract_first()

            # Request the category's detail page
            yield scrapy.Request(
                items["ymt_bigsort_href"],
                callback=self.parse_medium_detail,
                meta={"item": deepcopy(items)}
            )

    # Medium categories (the small categories are on the same page)
    def parse_medium_detail(self, response):
        items = response.meta["item"]
        li_list = response.xpath("//div[@class='cate_nav_wrap']//a")
        for li in li_list:
            items["ymt_mediumsort_id"] = li.xpath("./@data-id").extract_first()
            items["ymt_mediumsort_name"] = li.xpath("./text()").extract_first()
            # Same URL again, so dont_filter=True keeps the duplicate filter from dropping it
            yield scrapy.Request(
                items["ymt_bigsort_href"],
                callback=self.parse_small_detail,
                meta={"item": deepcopy(items)},
                dont_filter=True
            )

    # Small categories
    def parse_small_detail(self, response):
        item = response.meta["item"]
        mediumsort_id = item["ymt_mediumsort_id"]
        # data-id can be missing, so check it before converting to int
        if mediumsort_id and int(mediumsort_id) > 0:
            nav_product_id = "nav-product-" + mediumsort_id
            a_list = response.xpath(
                "//div[@class='cate_content_1']//div[contains(@class,'{}')]//ul//a".format(nav_product_id)
            )
            for a in a_list:
                item["ymt_smallsort_id"] = a.xpath("./@data-id").extract_first()
                item["ymt_smallsort_href"] = a.xpath("./@href").extract_first()
                item["ymt_smallsort_name"] = a.xpath("./text()").extract_first()
                yield scrapy.Request(
                    item["ymt_smallsort_href"],
                    callback=self.parse_variety_detail,
                    meta={"item": deepcopy(item)}
                )

    # Varieties (breeds)
    def parse_variety_detail(self, response):
        item = response.meta["item"]
        li_list = response.xpath("//ul[@class='all_cate clearfix']//li")
        if len(li_list) > 0:
            for li in li_list:
                item["ymt_breed_href"] = li.xpath("./a/@href").extract_first()
                item["ymt_breed_name"] = li.xpath("./a/text()").extract_first()
                item["ymt_breed_id"] = item["ymt_breed_href"].split("_")[2]
                # Yield a copy: the same item object is overwritten on the next iteration
                yield deepcopy(item)
        else:
            # No variety list: emit the small category with placeholder variety fields
            item["ymt_breed_href"] = ""
            item["ymt_breed_name"] = ""
            item["ymt_breed_id"] = -1
            yield item
```
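The spider derives ids by slicing hrefs at fixed positions (`replace(...)`, `split("_")[2]`, `split("_")[-1]`), which quietly yields a wrong value, or raises, if an href is missing or its layout changes. A slightly more defensive helper is sketched below; `extract_id` is a hypothetical name, it keeps the same underscore-split idea but only accepts a numeric token, and the sample hrefs are illustrative, built from the prefixes that appear in the spider code (the id `7` is made up).

```python
from typing import Optional
from urllib.parse import urlsplit


def extract_id(href: Optional[str], index: int = -1) -> Optional[str]:
    """Return the numeric token at `index` of the underscore-split URL path.

    Mirrors the spider's href.split("_")[index] logic, but returns None
    instead of a wrong value or an exception when the href is missing,
    too short, or the token at that position is not numeric.
    """
    if not href:
        return None
    # Split only the path so the host or a query string cannot leak into the id.
    parts = urlsplit(href).path.split("_")
    try:
        token = parts[index]
    except IndexError:
        return None
    return token if token.isdigit() else None


print(extract_id("http://hangqing.ymt.com/common/nav_chandi_7"))  # 7
print(extract_id("http://hangqing.ymt.com/chandi_8031_0_0"))      # 0
print(extract_id("http://hangqing.ymt.com/chandi"))               # None
print(extract_id(None))                                           # None
```

Returning `None` lets the callback decide whether to skip the record or log it, rather than writing a bogus id into the dimension data.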

3. Origin data

Crawl provinces, cities and counties: chandi.py

```python
# -*- coding: utf-8 -*-
from copy import deepcopy

import scrapy
from mySpider.items import MyspiderChanDi


class ChandiSpider(scrapy.Spider):
    name = "chandi"
    allowed_domains = ["hangqing.ymt.com"]
    start_urls = ["http://hangqing.ymt.com/chandi_8031_0_0"]

    # Provinces
    def parse(self, response):
        # List of origins (provinces)
        li_list = response.xpath("//div[@class='fl sku_name']/ul//li")
        for li in li_list:
            items = MyspiderChanDi()
            items["ymt_province_href"] = li.xpath("./a/@href").extract_first()
            items["ymt_province_id"] = items["ymt_province_href"].split("_")[-1]
            items["ymt_province_name"] = li.xpath("./a/text()").extract_first()

            yield scrapy.Request(
                items["ymt_province_href"],
                callback=self.parse_city_detail,
                meta={"item": deepcopy(items)}
            )

    # Cities
    def parse_city_detail(self, response):
        item = response.meta["item"]
        option = response.xpath("//select[@class='location_select'][1]//option")

        if len(option) > 0:
            for op in option:
                name = op.xpath("./text()").extract_first()
                if name != "全部":  # skip the "All" option
                    item["ymt_city_name"] = name
                    item["ymt_city_href"] = op.xpath("./@data-url").extract_first()
                    item["ymt_city_id"] = item["ymt_city_href"].split("_")[-1]
                    yield scrapy.Request(
                        item["ymt_city_href"],
                        callback=self.parse_area_detail,
                        meta={"item": deepcopy(item)}
                    )
        else:
            # No city list: an empty URL cannot be requested (Scrapy raises ValueError),
            # so emit the item directly with the placeholders parse_area_detail would set.
            item["ymt_city_name"] = ""
            item["ymt_city_href"] = ""
            item["ymt_city_id"] = 0
            item["ymt_area_name"] = ""
            item["ymt_area_href"] = ""
            item["ymt_area_id"] = 0
            yield item

    # Counties / districts
    def parse_area_detail(self, response):
        item = response.meta["item"]
        area_list = response.xpath("//select[@class='location_select'][2]//option")

        if len(area_list) > 0:
            for area in area_list:
                name = area.xpath("./text()").extract_first()
                if name != "全部":  # skip the "All" option
                    item["ymt_area_name"] = name
                    item["ymt_area_href"] = area.xpath("./@data-url").extract_first()
                    item["ymt_area_id"] = item["ymt_area_href"].split("_")[-1]
                    # Yield a copy: the same item object is overwritten on the next iteration
                    yield deepcopy(item)
        else:
            item["ymt_area_name"] = ""
            item["ymt_area_href"] = ""
            item["ymt_area_id"] = 0
            yield item
```
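`location_char.py` later looks up `province_id` and `city_id` by name in the MySQL tables `ymt_1_dim_cdProvince` and `ymt_1_dim_cdCity`, so the `chandi` items presumably reach those dimension tables through an item pipeline the original does not show. The sketch below is a hypothetical version of such a pipeline: it swaps MySQL for the standard-library `sqlite3` so it runs anywhere, and the table schemas (including a `province_id` column on the city table) are assumptions. Scrapy only needs a pipeline to expose `open_spider`, `process_item(item, spider)` and `close_spider`, so plain dicts are enough to exercise it.

```python
import sqlite3


class ChandiDimensionPipeline:
    """Hypothetical pipeline: load province/city dimension rows from chandi items.

    sqlite3 stands in for the MySQL database (ymt_db) used in the article.
    """

    def __init__(self, db_path=":memory:"):
        self.db_path = db_path
        self.conn = None

    def open_spider(self, spider):
        self.conn = sqlite3.connect(self.db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS ymt_1_dim_cdProvince ("
            " province_id INTEGER PRIMARY KEY, province_name TEXT NOT NULL)"
        )
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS ymt_1_dim_cdCity ("
            " city_id INTEGER PRIMARY KEY, city_name TEXT NOT NULL,"
            " province_id INTEGER NOT NULL)"
        )

    def process_item(self, item, spider):
        province_id = int(item["ymt_province_id"])
        # INSERT OR IGNORE: the same province/city arrives once per county item.
        self.conn.execute(
            "INSERT OR IGNORE INTO ymt_1_dim_cdProvince VALUES (?, ?)",
            (province_id, item["ymt_province_name"]),
        )
        city_id = int(item["ymt_city_id"] or 0)
        if city_id > 0:  # the chandi spider uses 0 for "no city list"
            self.conn.execute(
                "INSERT OR IGNORE INTO ymt_1_dim_cdCity VALUES (?, ?, ?)",
                (city_id, item["ymt_city_name"], province_id),
            )
        self.conn.commit()
        return item  # pass the item on to any later pipeline

    def close_spider(self, spider):
        self.conn.close()
```

Loading these tables before running `location_char` matters, because that spider resolves every province and city name against them.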

4. Price distribution by region

location_char.py

```python
# -*- coding: utf-8 -*-
import datetime
import json
from copy import deepcopy

import pymysql
import scrapy
from mySpider.items import MySpiderSmallProvincePrice


class LocationCharSpider(scrapy.Spider):
    name = "location_char"
    allowed_domains = ["hangqing.ymt.com"]
    start_urls = ["http://hangqing.ymt.com/"]

    # JSON endpoint that returns the price distribution for a region
    location_char_url = "http://hangqing.ymt.com/chandi/location_charts"

    i = datetime.datetime.now()
    # Unpadded day key: ambiguous (2018-01-11 and 2018-11-01 both give "2018111").
    # It must match however ymt_price_small.dateKey was written.
    dateKey = str(i.year) + str(i.month) + str(i.day)
    db = pymysql.connect(
        host="127.0.0.1", port=3306,
        user="root", password="mysql",
        db="ymt_db", charset="utf8"
    )

    def closed(self, reason):
        # Called by Scrapy when the spider finishes: release the MySQL connection
        self.db.close()

    def parse(self, response):
        cur = self.db.cursor()
        # Small categories with a positive average price today (parameterized query)
        location_char_sql = "select small_id from ymt_price_small where dateKey = %s and day_avg_price > 0"
        cur.execute(location_char_sql, (self.dateKey,))
        location_chars = cur.fetchall()
        for ch in location_chars:
            item = MySpiderSmallProvincePrice()
            item["small_id"] = ch[0]
            # locationId "0": the response lists prices per province
            form_data = {
                "locationId": "0",
                "productId": str(item["small_id"]),
                "breedId": "0"
            }
            yield scrapy.FormRequest(
                self.location_char_url,
                formdata=form_data,
                callback=self.location_char,
                meta={"item": deepcopy(item)}
            )

    # Province-level prices for one small category
    def location_char(self, response):
        item = response.meta["item"]

        html_str = json.loads(response.text)
        if html_str["status"] == 0:
            item["unit"] = html_str["data"]["unit"]
            item["dateKey"] = self.dateKey
            for data in html_str["data"]["dataList"]:
                # Entries arrive either as [name, price] or as {"name": ..., "y": ...}
                if isinstance(data, list):
                    item["province_name"] = data[0]
                    item["province_price"] = data[1]
                elif isinstance(data, dict):
                    item["province_name"] = data["name"]
                    item["province_price"] = data["y"]
                else:
                    continue

                cur = self.db.cursor()
                cur.execute(
                    "select province_id from ymt_1_dim_cdProvince where province_name = %s",
                    (str(item["province_name"]),)
                )
                province_id = cur.fetchone()
                if province_id is None:
                    continue  # province not in the dimension table: skip instead of crashing
                item["province_id"] = province_id[0]

                # Drill down: locationId = province id returns the city-level prices
                form_data = {
                    "locationId": str(province_id[0]),
                    "productId": str(item["small_id"]),
                    "breedId": "0"
                }
                yield scrapy.FormRequest(
                    self.location_char_url,
                    formdata=form_data,
                    callback=self.location_char_province,
                    meta={"item": deepcopy(item)}
                )

    # City-level prices inside one province
    def location_char_province(self, response):
        item = response.meta["item"]

        html_str = json.loads(response.text)
        if html_str["status"] == 0:
            for data in html_str["data"]["dataList"]:
                if isinstance(data, list):
                    item["city_name"] = data[0]
                    item["city_price"] = data[1]
                elif isinstance(data, dict):
                    item["city_name"] = data["name"]
                    item["city_price"] = data["y"]
                else:
                    continue

                cur = self.db.cursor()
                cur.execute(
                    "select city_id from ymt_1_dim_cdCity where city_name = %s",
                    (str(item["city_name"]),)
                )
                city_id = cur.fetchone()
                if city_id is None:
                    continue  # city not in the dimension table: skip instead of crashing
                item["city_id"] = city_id[0]

                form_data = {
                    "locationId": str(city_id[0]),
                    "productId": str(item["small_id"]),
                    "breedId": "0"
                }
                yield scrapy.FormRequest(
                    self.location_char_url,
                    formdata=form_data,
                    callback=self.location_char_province_city,
                    meta={"item": deepcopy(item)}
                )

    # ... (the rest of the listing, including location_char_province_city, is cut off in the original)
```
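The `location_charts` endpoint returns each `dataList` entry either as a `[name, price]` pair or as a `{"name": ..., "y": ...}` object, and the spider branches on that shape in two callbacks. Pulling the branching into one helper keeps the callbacks shorter. This is a sketch that assumes only the response fields the spider itself reads (`status`, `data.unit`, `data.dataList`); `parse_location_chart` is a hypothetical name, and the sample payload is made up for illustration.

```python
import json
from typing import Any, List, Optional, Tuple


def parse_location_chart(text: str) -> Tuple[Optional[Any], List[Tuple[Any, Any]]]:
    """Normalize a location_charts response body into (unit, [(name, price), ...]).

    Mirrors the spider: only responses with status == 0 carry data, and each
    dataList entry is either [name, price] or {"name": ..., "y": ...}.
    Values are returned unchanged; entries of any other shape are skipped.
    """
    payload = json.loads(text)
    if payload.get("status") != 0:
        return None, []
    data = payload.get("data") or {}
    rows = []
    for entry in data.get("dataList") or []:
        if isinstance(entry, list) and len(entry) >= 2:
            rows.append((entry[0], entry[1]))
        elif isinstance(entry, dict) and "name" in entry and "y" in entry:
            rows.append((entry["name"], entry["y"]))
    return data.get("unit"), rows


# Made-up payload shaped after the fields the spider reads.
sample = json.dumps({
    "status": 0,
    "data": {"unit": "<unit>", "dataList": [["ProvinceA", 2.5], {"name": "ProvinceB", "y": 3.1}]},
})
print(parse_location_chart(sample))
# ('<unit>', [('ProvinceA', 2.5), ('ProvinceB', 3.1)])
```

Inside a callback, the loop then becomes `for name, price in rows:` followed by the dimension-table lookup and the drill-down request.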