Don't Play with Your Phone: An Image Classification Competition

Posted on 龙火火's blog


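1 Plagiarism between participants is prohibited; entries with identical results will be disqualified.

2 Modify the code starting from the baseline model. Third-party wrapper libraries, toolkits, or other tools are not allowed; violations score 0.

3 Each student must complete the competition independently. Discussing technical aspects of the competition with one another is not allowed, and asking others for code is strictly forbidden. Please follow the rules and act with integrity.

4 Submissions are not accepted after 12:00 midnight; late submissions score 0.

5 The result file must be generated by the program; manual editing or post-processing is not allowed.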

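Competition Background

Mobile phones have become an everyday tool that people cannot do without. With their rapid development, communication is no longer their main function: the phone has gradually become a portable mass-media terminal, sometimes even called the "fifth medium". University students make up a huge group of smartphone users. Since entering university, students born after 2000 have relied excessively on their phones in study and daily life; some feel at a loss and distracted if they forget to bring a phone to class. This competition asks participants to determine, from images captured by surveillance cameras and similar devices, whether the person in the frame is using a mobile phone.

Dataset

The training set contains 2180 images of people using a phone (in the directory data/data146247/train/0_phone/) and 1971 images of people not using a phone (in the directory data/data146247/train/1_no_phone/).

The test set contains 1849 images with no labels.

Overall Approach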

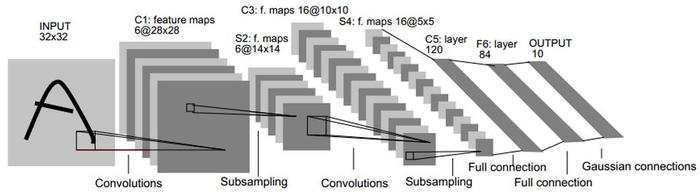

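This baseline uses the LeNet architecture. Participants can modify it, or use an entirely different network architecture to climb the leaderboard.

The LeNet network structure is as follows.

LeNet-5 has 7 layers, not counting the input, and every layer contains trainable parameters. Each layer has multiple feature maps; each feature map extracts one kind of feature from its input through one convolution filter, and each feature map contains multiple neurons.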
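The layer description above can be made concrete by tracing feature-map sizes through the convolution and pooling stages. This is a minimal sketch that assumes the commonly cited LeNet-5 configuration (32x32 input, 5x5 convolutions with stride 1 and no padding, 2x2 pooling with stride 2); those numbers and the helper names `out_size` and `trace_shapes` are illustrative assumptions and do not come from this post.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer."""
    return (n + 2 * padding - kernel) // stride + 1

# (name, output channels, kernel, stride): assumed classic LeNet-5 settings
LAYERS = [
    ("C1", 6, 5, 1),    # convolution
    ("S2", 6, 2, 2),    # pooling
    ("C3", 16, 5, 1),   # convolution
    ("S4", 16, 2, 2),   # pooling
    ("C5", 120, 5, 1),  # convolution that collapses the map to 1x1
]

def trace_shapes(input_size=32):
    """Return (layer name, channels, spatial size) for each stage."""
    size = input_size
    shapes = []
    for name, channels, kernel, stride in LAYERS:
        size = out_size(size, kernel, stride)
        shapes.append((name, channels, size))
    return shapes

for name, channels, size in trace_shapes():
    print(f"{name}: {channels} feature maps of {size}x{size}")
```

Two fully connected layers (usually given as 84 units and then the output layer) follow C5, which brings the total to the 7 layers mentioned above.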

- Preprocessing

  - Generate labels

  - Read the dataset

- Training

  - Build the model

  - Backpropagation

- Prediction

  - Run the model and save the results

!rm aug.csv
!rm test.csv
!rm train.csv
!rm -r augment
!rm -r train
!rm -r test
!unzip -oq /home/aistudio/data/data146247/train.zip
!unzip -oq /home/aistudio/data/data146247/test.zip  # unpack the dataset
!mkdir augment
!mkdir augment/0_phone
!mkdir augment/1_no_phone

import os

import numpy as np
import pandas as pd
import paddle
import paddle.nn as nn
import paddle.nn.functional as F
import paddle.vision.transforms as T
from paddle.io import Dataset, DataLoader, IterableDataset
from paddle.nn import Conv2D, BatchNorm2D, Linear, Dropout
from paddle.nn import AdaptiveAvgPool2D, MaxPool2D, AvgPool2D
from paddle.vision.transforms import Resize
from PIL import Image
from sklearn.utils import shuffle

PLACE = paddle.CUDAPlace(0)  # train on the GPU

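Preprocessing

Read the unpacked dataset and save all image file names to train.csv (training set) and test.csv (test set).

Once this is done, the dataset class can be defined and the dataset loaded.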

# Write the training-set CSV
list1 = []
for path in os.listdir("train/1_no_phone"):
    if path[-3:] == 'jpg':
        k = ["train/1_no_phone/" + path, 1]  # label 1: not using a phone
        list1.append(k)
for path in os.listdir("train/0_phone"):
    if path[-3:] == 'jpg':
        k = ["train/0_phone/" + path, 0]  # label 0: using a phone
        list1.append(k)
result_df = pd.DataFrame(list1)
result_df.columns = ['image_id', 'label']
data = shuffle(result_df)
data.to_csv('train.csv', index=False, header=True)

# Write the test-set CSV
list1 = []
for path in os.listdir("test"):
    if path[-3:] == 'jpg':
        k = ["test/" + path]
        list1.append(k)
result_df = pd.DataFrame(list1)
result_df.columns = ['image_id']
result_df.to_csv('test.csv', index=False, header=True)
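The two loops above hard-code one label per folder. As a hedged alternative, the label can be read from the folder name itself, since the class folders are named `0_phone` and `1_no_phone`. The helpers `label_from_path` and `list_images` below are hypothetical names for illustration and use only the standard library.

```python
import os

def label_from_path(path):
    """Return the integer label encoded in the class folder name, e.g. '0_phone' -> 0."""
    class_dir = os.path.basename(os.path.dirname(path))
    return int(class_dir.split("_")[0])

def list_images(root, exts=(".jpg",)):
    """Collect [path, label] pairs from root/<label>_<name>/ sub-folders."""
    rows = []
    for class_dir in sorted(os.listdir(root)):
        full_dir = os.path.join(root, class_dir)
        if not os.path.isdir(full_dir):
            continue  # skip stray files next to the class folders
        for name in sorted(os.listdir(full_dir)):
            if name.lower().endswith(exts):
                path = os.path.join(root, class_dir, name)
                rows.append([path, label_from_path(path)])
    return rows

print(label_from_path("train/1_no_phone/example.jpg"))
```

Calling `list_images("train")` would then yield rows in the same `[image_id, label]` layout that the CSV code above builds by hand.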

pic_list = pd.read_csv("train.csv")
pic_list = np.array(pic_list)
train_list = pic_list[:int(len(pic_list) * 0.8)]  # first 80%: training split
test_list = pic_list[int(len(pic_list) * 0.8):]   # last 20%: held-out validation split
print(len(train_list))
train_list

3320
array([['train/1_no_phone/tWuCoxIkbAedBjParJZQGn9XVYLK16hS.jpg', 1],
       ['train/1_no_phone/rkX9Rj7YqENc85bFxdDaw14Is0yCeAnp.jpg', 1],
       ['train/0_phone/wfhSF1W5B46doJKEc7VTU2OxbCtRDAI9.jpg', 0],
       ...,
       ['train/1_no_phone/uvHPyhakBK89o43fjJRXgZ2CIEpGzSAQ.jpg', 1],
       ['train/0_phone/rlw854paLXkqc3zhgo9N2idUSeyIPAWf.jpg', 0],
       ['train/0_phone/Wy59OfsandAo1lp2S7e3cEutPbi4zqYF.jpg', 0]],
      dtype=object)
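The 80/20 split above depends on an unseeded shuffle, so the exact split changes from run to run. A minimal sketch of a reproducible version, using only the standard library; the function name `split_train_valid` and the seed value are assumptions for illustration, and the placeholder file names stand in for the real images.

```python
import random

def split_train_valid(items, train_fraction=0.8, seed=0):
    """Shuffle a copy of items with a fixed seed, then cut it into two parts."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# 2180 + 1971 = 4151 labelled images, as stated in the dataset description
items = [(f"img_{i}.jpg", 0 if i < 2180 else 1) for i in range(4151)]
train_part, valid_part = split_train_valid(items)
print(len(train_part), len(valid_part))  # 3320 831
```

The training size of 3320 matches the length printed above. With scikit-learn, passing `random_state` to `shuffle` has a similar effect.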

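Offline Image Augmentation

Each image is randomly flipped horizontally with a probability of 70%, and the brightness, contrast, and saturation of the result are then jittered. The augmented images are saved to the augment folder, and their entries are appended to train_list.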

import paddle.vision.transforms as T

directory_name = "augment/"
for i in range(len(train_list)):
    img_path = train_list[i][0]   # e.g. "train/0_phone/xxx.jpg"
    rel_name = img_path[6:-4]     # drop the "train/" prefix and the ".jpg" suffix
    img = Image.open(img_path)
    img = T.RandomHorizontalFlip(0.7)(img)         # flip with probability 0.7
    img = T.ColorJitter(0.5, 0.5, 0.5, 0.0)(img)   # brightness, contrast, saturation; hue unchanged
    # The "-tramsforms" suffix (sic) is kept so file names match the output listed further down
    img.save(directory_name + rel_name + "-tramsforms.jpg")
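The output path in the loop above is built with fixed slice offsets, which only works while the prefix is exactly `train/` and the extension is exactly `.jpg`. A hedged sketch of the same mapping with `os.path`; the function name `augmented_path` and its default arguments are assumptions chosen to mirror the folders used in this post.

```python
import os

def augmented_path(src_path, src_root="train", dst_root="augment", suffix="-tramsforms"):
    """Map train/<class>/<name>.jpg to augment/<class>/<name><suffix>.jpg."""
    rel = os.path.relpath(src_path, src_root)   # e.g. "0_phone/abc.jpg"
    stem, ext = os.path.splitext(rel)           # split off the extension
    return os.path.join(dst_root, stem + suffix + ext)

print(augmented_path(os.path.join("train", "0_phone", "abc.jpg")))
```

Because the extension is split off rather than sliced, the same helper would also handle `.jpeg` or `.png` inputs.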

# Write the CSV for the augmented training images
list4 = []
for path in os.listdir("augment/1_no_phone"):
    if path[-3:] == 'jpg':
        k = ["augment/1_no_phone/" + path, 1]
        list4.append(k)
for path in os.listdir("augment/0_phone"):
    if path[-3:] == 'jpg':
        k = ["augment/0_phone/" + path, 0]
        list4.append(k)
result_df = pd.DataFrame(list4)
result_df.columns = ['image_id', 'label']
data = shuffle(result_df)
data.to_csv('aug.csv', index=False, header=True)

aug_list = pd.read_csv("aug.csv")
aug_list = np.array(aug_list)
train_list = np.append(train_list, aug_list, axis=0)  # append augmented samples to the training split
print(len(train_list))
train_list

6640
array([['train/1_no_phone/tWuCoxIkbAedBjParJZQGn9XVYLK16hS.jpg', 1],
       ['train/1_no_phone/rkX9Rj7YqENc85bFxdDaw14Is0yCeAnp.jpg', 1],
       ['train/0_phone/wfhSF1W5B46doJKEc7VTU2OxbCtRDAI9.jpg', 0],
       ...,
       ['augment/1_no_phone/WPNLg72lV8Jhn5TpokKMf34QR1Fd6IcH-tramsforms.jpg',
        1],
       ['augment/0_phone/qJcVtZH2yiAL6w34rOQopT9IemzEDjWK-tramsforms.jpg',
        0],
       ['augment/0_phone/qwoDX3ENaVuCk9IYjAnBHbv7TLUPR5Kr-tramsforms.jpg',
        0]], dtype=object)

class H2ZDateset(Dataset):
    def __init__(self, data_dir):
        super(H2ZDateset, self).__init__()
        self.pic_list = data_dir  # array of [image path, label] rows

    def __getitem__(self, idx):
        image_file, label = self.pic_list[idx]
        img = Image.open(image_file)  # read the image
        # Resize to a fixed size. Image.LANCZOS is the same filter as the older
        # Image.ANTIALIAS name, which was removed in Pillow 10.
        img = img.resize((256, 256), Image.LANCZOS)
        img = np.array(img).astype('float32')  # convert to a float32 array
        img = img.transpose((2, 0, 1))  # HWC -> CHW
        img = img / 255.0  # scale pixel values to the range 0-1
        return img, np.array(label, dtype='int64').reshape(-1)

    def __len__(self):
        return len(self.pic_list)

h2zdateset = H2ZDateset(train_list)
BATCH_SIZE = 32
loader = DataLoader(h2zdateset, places=PLACE, shuffle=True, batch_size=BATCH_SIZE, drop_last=False, num_workers=0, use_shared_memory=False)
data, label = next(iter(loader))  # fetch one batch as a sanity check
print("Shape of the loaded data:", data.shape, label.shape)

Shape of the loaded data: [32, 3, 256, 256] [32, 1]
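The batch shape above is [batch, channels, height, width] because the dataset class moves the channel axis to the front and scales pixel values to the range 0-1. The sketch below shows those two steps on a tiny example using plain nested lists instead of NumPy, so it runs without any dependencies; `hwc_to_chw` and `scale_to_unit` are hypothetical helper names.

```python
def hwc_to_chw(image):
    """Rearrange a height x width x channel nested list into channel x height x width."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    return [
        [[image[y][x][c] for x in range(width)] for y in range(height)]
        for c in range(channels)
    ]

def scale_to_unit(chw, max_value=255.0):
    """Scale pixel values from [0, max_value] to [0, 1]."""
    return [[[v / max_value for v in row] for row in plane] for plane in chw]

# A tiny 1x2 RGB "image": one red pixel and one white pixel
tiny = [[[255, 0, 0], [255, 255, 255]]]
chw = scale_to_unit(hwc_to_chw(tiny))
print(chw)  # [[[1.0, 1.0]], [[0.0, 1.0]], [[0.0, 1.0]]]
```

In the dataset class the same result comes from `img.transpose((2, 0, 1))` followed by `img / 255.0`.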

train_data = H2ZDateset(train_list)
test_data = H2ZDateset(test_list)
train_data_reader = DataLoader(train_data, places=PLACE, shuffle=True, batch_size=BATCH_SIZE, drop_last=False, num_workers=2, use_shared_memory=True)
test_data_reader = DataLoader(test_data, places=PLACE, shuffle=True, batch_size=BATCH_SIZE, drop_last=False, num_workers=2, use_shared_memory=True)

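Training

First, build the model. The baseline description refers to LeNet, but the code below uses ResNeXt instead.

Here the summary() method of paddle.Model is used to visualize the model. summary() quickly prints the network structure, and calling it runs the network once. In dynamic-graph mode, the input and output sizes of each layer otherwise have to be worked out by hand, and any mistake raises an error that is tedious to track down; summary() greatly shortens debugging time.

Define a custom ResNeXt class that loads the resnext101_64x4d network with pretrained weights enabled, and append a linear layer that turns the original 1000-class output into a 2-class output.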

class ResNeXt(nn.Layer):
    def __init__(self):
        super(ResNeXt, self).__init__()
        # Pretrained backbone with its original 1000-class head
        self.layer = paddle.vision.models.resnext101_64x4d(pretrained=True)
        # Extra head mapping the 1000 outputs to the 2 classes of this task
        self.fc = nn.Sequential(
            nn.Dropout(0.5),
            nn.Linear(1000, 2)
        )

    def forward(self, inputs):
        outputs = self.layer(inputs)
        outputs = self.fc(outputs)
        return outputs

model = ResNeXt()
paddle.Model(model).summary((-1, 3, 256, 256))

W0510 00:32:22.100802 8743 gpu_context.cc:244] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 11.2, Runtime API Version: 10.1

W0510 00:32:22.104816 8743 gpu_context.cc:272] device: 0, cuDNN Version: 7.6.

-------------------------------------------------------------------------------

Layer (type) Input Shape Output Shape Param #

===============================================================================

Conv2D-1 [[1, 3, 256, 256]] [1, 64, 128, 128] 9,408

BatchNorm-1 [[1, 64, 128, 128]] [1, 64, 128, 128] 256

ConvBNLayer-1 [[1, 3, 256, 256]] [1, 64, 128, 128] 0

MaxPool2D-1 [[1, 64, 128, 128]] [1, 64, 64, 64] 0

Conv2D-2 [[1, 64, 64, 64]] [1, 256, 64, 64] 16,384

BatchNorm-2 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-2 [[1, 64, 64, 64]] [1, 256, 64, 64] 0

Conv2D-3 [[1, 256, 64, 64]] [1, 256, 64, 64] 9,216

BatchNorm-3 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-3 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-4 [[1, 256, 64, 64]] [1, 256, 64, 64] 65,536

BatchNorm-4 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-4 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-5 [[1, 64, 64, 64]] [1, 256, 64, 64] 16,384

BatchNorm-5 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-5 [[1, 64, 64, 64]] [1, 256, 64, 64] 0

BottleneckBlock-1 [[1, 64, 64, 64]] [1, 256, 64, 64] 0

Conv2D-6 [[1, 256, 64, 64]] [1, 256, 64, 64] 65,536

BatchNorm-6 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-6 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-7 [[1, 256, 64, 64]] [1, 256, 64, 64] 9,216

BatchNorm-7 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-7 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-8 [[1, 256, 64, 64]] [1, 256, 64, 64] 65,536

BatchNorm-8 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-8 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

BottleneckBlock-2 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-9 [[1, 256, 64, 64]] [1, 256, 64, 64] 65,536

BatchNorm-9 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-9 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-10 [[1, 256, 64, 64]] [1, 256, 64, 64] 9,216

BatchNorm-10 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-10 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-11 [[1, 256, 64, 64]] [1, 256, 64, 64] 65,536

BatchNorm-11 [[1, 256, 64, 64]] [1, 256, 64, 64] 1,024

ConvBNLayer-11 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

BottleneckBlock-3 [[1, 256, 64, 64]] [1, 256, 64, 64] 0

Conv2D-12 [[1, 256, 64, 64]] [1, 512, 64, 64] 131,072

BatchNorm-12 [[1, 512, 64, 64]] [1, 512, 64, 64] 2,048

ConvBNLayer-12 [[1, 256, 64, 64]] [1, 512, 64, 64] 0

Conv2D-13 [[1, 512, 64, 64]] [1, 512, 32, 32] 36,864

BatchNorm-13 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-13 [[1, 512, 64, 64]] [1, 512, 32, 32] 0

Conv2D-14 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-14 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-14 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-15 [[1, 256, 64, 64]] [1, 512, 32, 32] 131,072

BatchNorm-15 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-15 [[1, 256, 64, 64]] [1, 512, 32, 32] 0

BottleneckBlock-4 [[1, 256, 64, 64]] [1, 512, 32, 32] 0

Conv2D-16 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-16 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-16 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-17 [[1, 512, 32, 32]] [1, 512, 32, 32] 36,864

BatchNorm-17 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-17 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-18 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-18 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-18 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

BottleneckBlock-5 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-19 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-19 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-19 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-20 [[1, 512, 32, 32]] [1, 512, 32, 32] 36,864

BatchNorm-20 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-20 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-21 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-21 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-21 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

BottleneckBlock-6 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-22 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-22 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-22 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-23 [[1, 512, 32, 32]] [1, 512, 32, 32] 36,864

BatchNorm-23 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-23 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-24 [[1, 512, 32, 32]] [1, 512, 32, 32] 262,144

BatchNorm-24 [[1, 512, 32, 32]] [1, 512, 32, 32] 2,048

ConvBNLayer-24 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

BottleneckBlock-7 [[1, 512, 32, 32]] [1, 512, 32, 32] 0

Conv2D-25 [[1, 512, 32, 32]] [1, 1024, 32, 32] 524,288

BatchNorm-25 [[1, 1024, 32, 32]] [1, 1024, 32, 32] 4,096

ConvBNLayer-25 [[1, 512, 32, 32]] [1, 1024, 32, 32] 0

Conv2D-26 [[1, 1024, 32, 32]] [1, 1024, 16, 16] 147,456

BatchNorm-26 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-26 [[1, 1024, 32, 32]] [1, 1024, 16, 16] 0

Conv2D-27 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-27 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-27 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-28 [[1, 512, 32, 32]] [1, 1024, 16, 16] 524,288

BatchNorm-28 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-28 [[1, 512, 32, 32]] [1, 1024, 16, 16] 0

BottleneckBlock-8 [[1, 512, 32, 32]] [1, 1024, 16, 16] 0

Conv2D-29 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-29 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-29 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-30 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-30 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-30 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-31 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-31 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-31 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-9 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-32 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-32 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-32 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-33 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-33 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-33 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-34 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-34 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-34 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-10 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-35 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-35 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-35 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-36 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-36 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-36 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-37 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-37 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-37 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-11 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-38 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-38 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-38 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-39 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-39 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-39 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-40 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-40 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-40 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-12 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-41 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-41 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-41 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-42 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-42 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-42 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-43 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-43 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-43 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-13 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-44 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-44 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-44 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-45 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-45 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-45 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-46 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-46 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-46 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-14 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-47 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-47 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-47 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-48 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-48 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-48 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-49 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-49 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-49 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-15 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-50 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-50 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-50 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-51 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-51 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-51 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-52 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-52 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-52 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-16 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-53 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-53 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-53 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-54 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-54 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-54 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-55 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-55 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-55 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-17 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-56 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-56 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-56 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-57 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-57 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-57 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-58 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-58 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-58 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-18 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-59 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-59 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-59 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-60 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-60 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-60 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-61 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-61 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-61 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-19 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-62 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-62 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-62 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-63 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-63 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-63 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-64 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-64 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-64 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-20 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-65 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-65 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-65 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-66 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-66 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-66 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-67 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-67 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-67 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-21 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-68 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-68 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-68 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-69 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-69 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-69 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-70 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-70 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-70 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-22 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-71 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-71 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-71 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-72 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-72 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-72 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-73 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-73 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-73 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-23 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-74 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-74 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-74 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-75 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-75 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-75 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-76 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-76 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-76 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-24 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-77 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-77 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-77 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-78 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-78 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-78 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-79 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-79 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-79 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-25 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-80 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-80 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-80 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-81 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-81 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-81 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-82 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-82 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-82 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-26 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-83 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-83 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-83 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-84 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-84 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-84 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-85 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-85 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-85 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-27 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-86 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-86 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-86 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-87 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-87 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-87 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-88 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-88 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-88 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-28 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-89 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-89 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-89 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-90 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-90 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-90 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-91 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-91 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-91 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-29 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-92 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-92 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-92 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-93 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 147,456

BatchNorm-93 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-93 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-94 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 1,048,576

BatchNorm-94 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 4,096

ConvBNLayer-94 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

BottleneckBlock-30 [[1, 1024, 16, 16]] [1, 1024, 16, 16] 0

Conv2D-95 [[1, 1024, 16, 16]] [1, 2048, 16, 16] 2,097,152

BatchNorm-95 [[1, 2048, 16, 16]] [1, 2048, 16, 16] 8,192

ConvBNLayer-95 [[1, 1024, 16, 16]] [1, 2048, 16, 16] 0

Conv2D-96 [[1, 2048, 16, 16]] [1, 2048, 8, 8] 589,824

BatchNorm-96 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-96 [[1, 2048, 16, 16]] [1, 2048, 8, 8] 0

Conv2D-97 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 4,194,304

BatchNorm-97 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-97 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-98 [[1, 1024, 16, 16]] [1, 2048, 8, 8] 2,097,152

BatchNorm-98 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-98 [[1, 1024, 16, 16]] [1, 2048, 8, 8] 0

BottleneckBlock-31 [[1, 1024, 16, 16]] [1, 2048, 8, 8] 0

Conv2D-99 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 4,194,304

BatchNorm-99 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-99 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-100 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 589,824

BatchNorm-100 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-100 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-101 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 4,194,304

BatchNorm-101 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-101 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

BottleneckBlock-32 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-102 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 4,194,304

BatchNorm-102 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-102 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-103 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 589,824

BatchNorm-103 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-103 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

Conv2D-104 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 4,194,304

BatchNorm-104 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 8,192

ConvBNLayer-104 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

BottleneckBlock-33 [[1, 2048, 8, 8]] [1, 2048, 8, 8] 0

AdaptiveAvgPool2D-1 [[1, 2048, 8, 8]] [1, 2048, 1, 1] 0

Linear-1 [[1, 2048]] [1, 1000] 2,049,000

ResNeXt-2 [[1, 3, 256, 256]] [1, 1000] 0

Dropout-1 [[1, 1000]] [1, 1000] 0

Linear-2 [[1, 1000]] [1, 2] 2,002

===============================================================================

Total params: 83,660,154

Trainable params: 83,254,394

Non-trainable params: 405,760

-------------------------------------------------------------------------------

Input size (MB): 0.75

Forward/backward pass size (MB): 1024.04

Params size (MB): 319.14

Estimated Total Size (MB): 1343.93

-------------------------------------------------------------------------------

{'total_params': 83660154, 'trainable_params': 83254394}
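Several parameter counts in the summary above can be reproduced by hand, which is a quick way to check that the table reads as expected. This is a sketch under assumptions: the 7x7 kernel of the first convolution and the 64 groups of the 3x3 convolutions are inferred from the counts and from the "64x4d" model name, not stated in the post, and the helper names are hypothetical.

```python
def conv2d_params(kernel, in_channels, out_channels, groups=1, bias=False):
    """Weights of a square-kernel 2-D convolution, optionally grouped."""
    weights = kernel * kernel * (in_channels // groups) * out_channels
    return weights + (out_channels if bias else 0)

def linear_params(in_features, out_features):
    """Weight matrix plus bias vector of a fully connected layer."""
    return in_features * out_features + out_features

def batchnorm_params(channels):
    """Scale, shift, running mean and running variance (the last two are not trained)."""
    return 4 * channels

print(conv2d_params(7, 3, 64))                # Conv2D-1
print(conv2d_params(3, 256, 256, groups=64))  # Conv2D-3
print(linear_params(2048, 1000))              # Linear-1
print(linear_params(1000, 2))                 # Linear-2
print(batchnorm_params(64))                   # BatchNorm-1
```

The printed values correspond to the 9,408, 9,216, 2,049,000, 2,002 and 256 entries in the table.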

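Prediction

Once the model is trained, prediction can begin.

After training for 5 epochs with the SGD optimizer with momentum, accuracy on the validation split (valid_list; named test_list in the code) already reaches 95.3%.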

epochs_num = 5  # number of epochs
# opt = paddle.optimizer.Adam(learning_rate=0.001, parameters=model.parameters())
opt = paddle.optimizer.Momentum(learning_rate=0.002, parameters=model.parameters(), weight_decay=0.005, momentum=0.9)
train_acc, train_loss, valid_acc = [], [], []

for pass_num in range(epochs_num):
    model.train()  # training mode
    accs = []
    for batch_id, data in enumerate(train_data_reader):
        images, labels = data
        predict = model(images)  # forward pass
        loss = F.cross_entropy(predict, labels)
        avg_loss = paddle.mean(loss)
        acc = paddle.metric.accuracy(predict, labels)  # batch accuracy
        accs.append(acc.numpy()[0])
        if batch_id % 20 == 0:
            print("epoch:{}, iter:{}, loss:{}, acc:{}".format(pass_num, batch_id, avg_loss.numpy(), acc.numpy()[0]))
        opt.clear_grad()
        avg_loss.backward()
        opt.step()
    # Note: the reported train_loss is the loss of the last batch in the epoch
    print("train_pass:{}, train_loss:{}, train_acc:{}".format(pass_num, avg_loss.numpy(), np.mean(accs)))
    train_acc.append(np.mean(accs))
    train_loss.append(avg_loss.numpy())

    # Evaluate on the validation split
    model.eval()
    val_accs = []
    for batch_id, data in enumerate(test_data_reader):
        images, labels = data
        predict = model(images)  # forward pass
        acc = paddle.metric.accuracy(predict, labels)  # batch accuracy
        val_accs.append(acc.numpy()[0])
    print('val_acc={}'.format(np.mean(val_accs)))
    valid_acc.append(np.mean(val_accs))
    paddle.save(model.state_dict(), 'resnext101_64x4d')  # save the model weights

epoch:0, iter:0, loss:[1.2695745], acc:0.5625

epoch:0, iter:20, loss:[0.72218126], acc:0.78125

epoch:0, iter:40, loss:[0.8521573], acc:0.625

epoch:0, iter:60, loss:[0.9325488], acc:0.78125

epoch:0, iter:80, loss:[0.43677044], acc:0.90625

epoch:0, iter:100, loss:[0.37998652], acc:0.84375

epoch:0, iter:120, loss:[0.17167747], acc:0.9375

epoch:0, iter:140, loss:[0.13679577], acc:0.9375

epoch:0, iter:160, loss:[0.27065688], acc:0.9375

epoch:0, iter:180, loss:[0.27734363], acc:0.875

epoch:0, iter:200, loss:[0.27193183], acc:0.90625

train_pass:0, train_loss:[0.37479421], train_acc:0.8610276579856873

val_acc=0.9120657444000244

epoch:1, iter:0, loss:[0.29650056], acc:0.875

epoch:1, iter:20, loss:[0.03968127], acc:0.96875

epoch:1, iter:40, loss:[0.0579604], acc:0.96875

epoch:1, iter:60, loss:[0.08308301], acc:0.96875

epoch:1, iter:80, loss:[0.02734788], acc:1.0

epoch:1, iter:100, loss:[0.04930278], acc:0.96875

epoch:1, iter:120, loss:[0.08352702], acc:0.96875

epoch:1, iter:140, loss:[0.180908], acc:0.96875

epoch:1, iter:160, loss:[0.00271969], acc:1.0

epoch:1, iter:180, loss:[0.23547903], acc:0.90625

epoch:1, iter:200, loss:[0.02033656], acc:1.0

train_pass:1, train_loss:[0.00160835], train_acc:0.9695011973381042

val_acc=0.9362205266952515

epoch:2, iter:0, loss:[0.06544636], acc:0.96875

epoch:2, iter:20, loss:[0.06581119], acc:0.96875

epoch:2, iter:40, loss:[0.00562927], acc:1.0

epoch:2, iter:60, loss:[0.01040748], acc:1.0

epoch:2, iter:80, loss:[0.03810382], acc:0.96875

epoch:2, iter:100, loss:[0.01447718], acc:1.0

epoch:2, iter:120, loss:[0.12421186], acc:0.96875

epoch:2, iter:140, loss:[0.00112416], acc:1.0

epoch:2, iter:160, loss:[0.003324], acc:1.0

epoch:2, iter:180, loss:[0.01755645], acc:1.0

epoch:2, iter:200, loss:[0.06159591], acc:0.96875

train_pass:2, train_loss:[0.00045859], train_acc:0.990234375

val_acc=0.9543269276618958

epoch:3, iter:0, loss:[0.00377628], acc:1.0

epoch:3, iter:20, loss:[0.0032537], acc:1.0

epoch:3, iter:40, loss:[0.00095566], acc:1.0

epoch:3, iter:60, loss:[0.1955388], acc:0.9375

epoch:3, iter:80, loss:[0.00345089], acc:1.0

epoch:3, iter:100, loss:[0.00279539], acc:1.0

epoch:3, iter:120, loss:[0.01505984], acc:1.0

epoch:3, iter:140, loss:[0.06922489], acc:0.96875

epoch:3, iter:160, loss:[0.05011526], acc:0.96875

epoch:3, iter:180, loss:[0.13640904], acc:0.96875

epoch:3, iter:200, loss:[0.01501912], acc:1.0

train_pass:3, train_loss:[0.00018014], train_acc:0.991135835647583

val_acc=0.944672703742981

epoch:4, iter:0, loss:[0.00011357], acc:1.0

epoch:4, iter:20, loss:[0.00145513], acc:1.0

epoch:4, iter:40, loss:[0.00594005], acc:1.0

epoch:4, iter:60, loss:[0.00403259], acc:1.0

epoch:4, iter:80, loss:[0.00525207], acc:1.0

epoch:4, iter:100, loss:[0.01096269], acc:1.0

epoch:4, iter:120, loss:[0.0166808], acc:1.0

epoch:4, iter:140, loss:[0.00298717], acc:1.0

epoch:4, iter:160, loss:[0.00306062], acc:1.0

epoch:4, iter:180, loss:[0.0080948], acc:1.0

epoch:4, iter:200, loss:[0.08889838], acc:0.9375

train_pass:4, train_loss:[0.07838897], train_acc:0.9887319803237915

val_acc=0.9530086517333984
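The optimizer in the training code above is SGD with momentum 0.9, learning rate 0.002 and weight decay 0.005. The sketch below shows one common form of that update rule on a single scalar parameter. It assumes the weight decay acts as L2 regularisation added to the gradient, which should be checked against the framework documentation; `momentum_step` is a hypothetical helper, not a Paddle function.

```python
def momentum_step(param, grad, velocity, lr=0.002, momentum=0.9, weight_decay=0.005):
    """One SGD-with-momentum update on a scalar parameter.

    Assumes weight decay is applied as L2 regularisation (added to the gradient).
    """
    grad = grad + weight_decay * param          # L2 penalty pulls the weight toward zero
    velocity = momentum * velocity + grad       # running, decayed sum of past gradients
    param = param - lr * velocity               # step along the smoothed direction
    return param, velocity

# Minimise f(x) = x**2 (gradient 2x) starting from x = 1.0
x, v = 1.0, 0.0
for step in range(3):
    x, v = momentum_step(x, 2.0 * x, v)
    print(step, x, v)
```

Because the velocity accumulates past gradients, consecutive steps in the same direction grow larger, while gradients that flip sign from batch to batch partly cancel.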

from tqdm import tqdm

test = pd.read_csv("test.csv")
test["label"] = 0
with tqdm(total=len(test)) as pbar:
    for i in range(len(test)):
        image_file = test["image_id"][i]
        pbar.set_description(f'Processing: {test["image_id"][i]}')
        img = Image.open(image_file)  # read the image
        # Same preprocessing as in training; Image.LANCZOS replaces the removed Image.ANTIALIAS name
        img = img.resize((256, 256), Image.LANCZOS)
        img = np.array(img).astype('float32')  # convert to a float32 array
        img = img.transpose((2, 0, 1))  # HWC -> CHW
        img = img / 255.0  # scale pixel values to the range 0-1
        img = paddle.to_tensor(img.reshape(-1, 3, 256, 256))  # add the batch dimension
        predict = model(img)
        result = int(np.argmax(predict.numpy()))  # index of the larger logit is the predicted label
        test.loc[i, "image_id"] = test.loc[i, "image_id"][5:]  # strip the "test/" prefix
        test.loc[i, "label"] = result
        pbar.update(1)
test.to_csv('predict_result.csv', index=False)
print("end")

Processing: test/yheWlrsgStOjfLdqHbYE7p1P28ViDBQ9.jpg: 100%|██████████| 1849/1849 [01:58<00:00, 15.65it/s]

end
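Since the rules require the result file to be produced by the program, a small automated check before submitting can catch formatting slips without any manual editing. This is a sketch only: the post does not state the required submission format, so the column names `image_id` and `label` are taken from the prediction code above, and `check_submission` is a hypothetical helper.

```python
import csv
import io

def check_submission(csv_text, expected_rows=None):
    """Return a list of problems found in a predict_result.csv-style file."""
    problems = []
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if expected_rows is not None and len(rows) != expected_rows:
        problems.append(f"expected {expected_rows} rows, found {len(rows)}")
    for line_no, row in enumerate(rows, start=2):  # line 1 is the header
        if (row.get("image_id") or "").startswith("test/"):
            problems.append(f"line {line_no}: image_id still has the 'test/' prefix")
        if row.get("label") not in ("0", "1"):
            problems.append(f"line {line_no}: label must be 0 or 1")
    return problems

sample = "image_id,label\nabc.jpg,0\ntest/def.jpg,2\n"
for problem in check_submission(sample, expected_rows=2):
    print(problem)
```

For the real file, the text could be read with `open('predict_result.csv').read()` and checked with `expected_rows=1849`, the test-set size given in the dataset description.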

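Directions for Improvement

- Tune hyperparameters and optimize the model on top of the baseline to improve results further

- Apply data augmentation to the training set to enlarge the training data and improve generalization

- Try deeper neural networks such as ResNet or VGG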

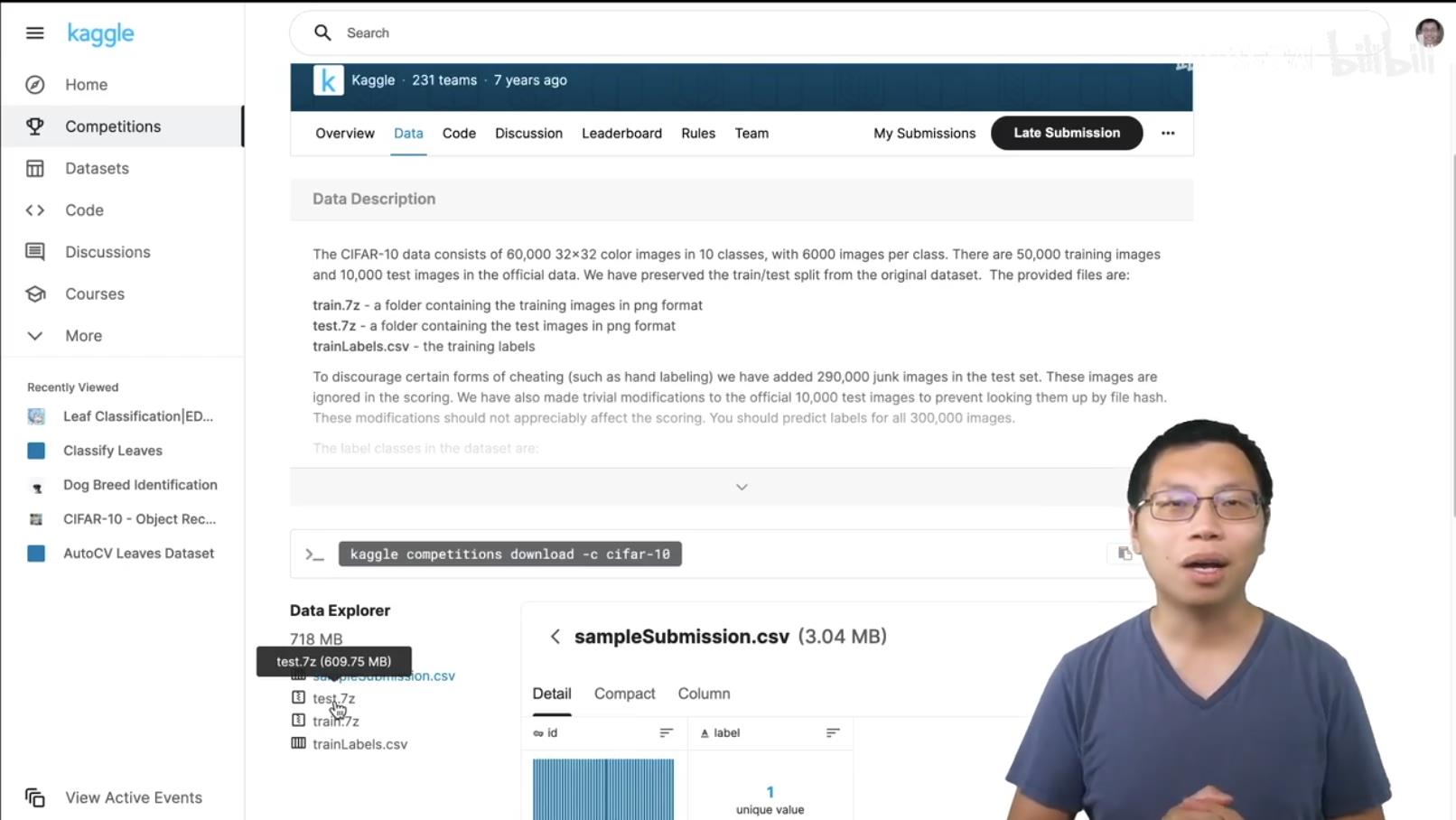

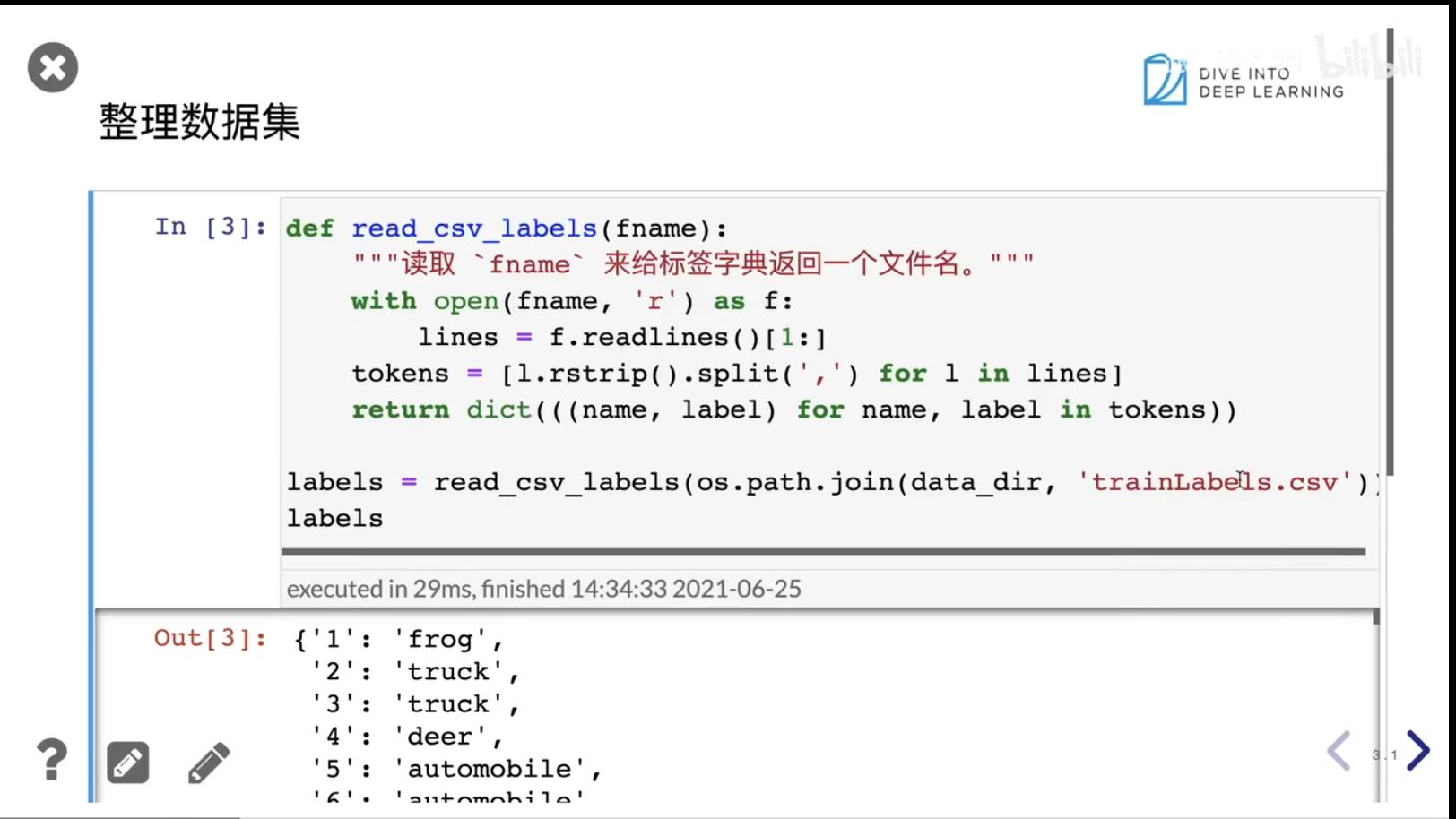

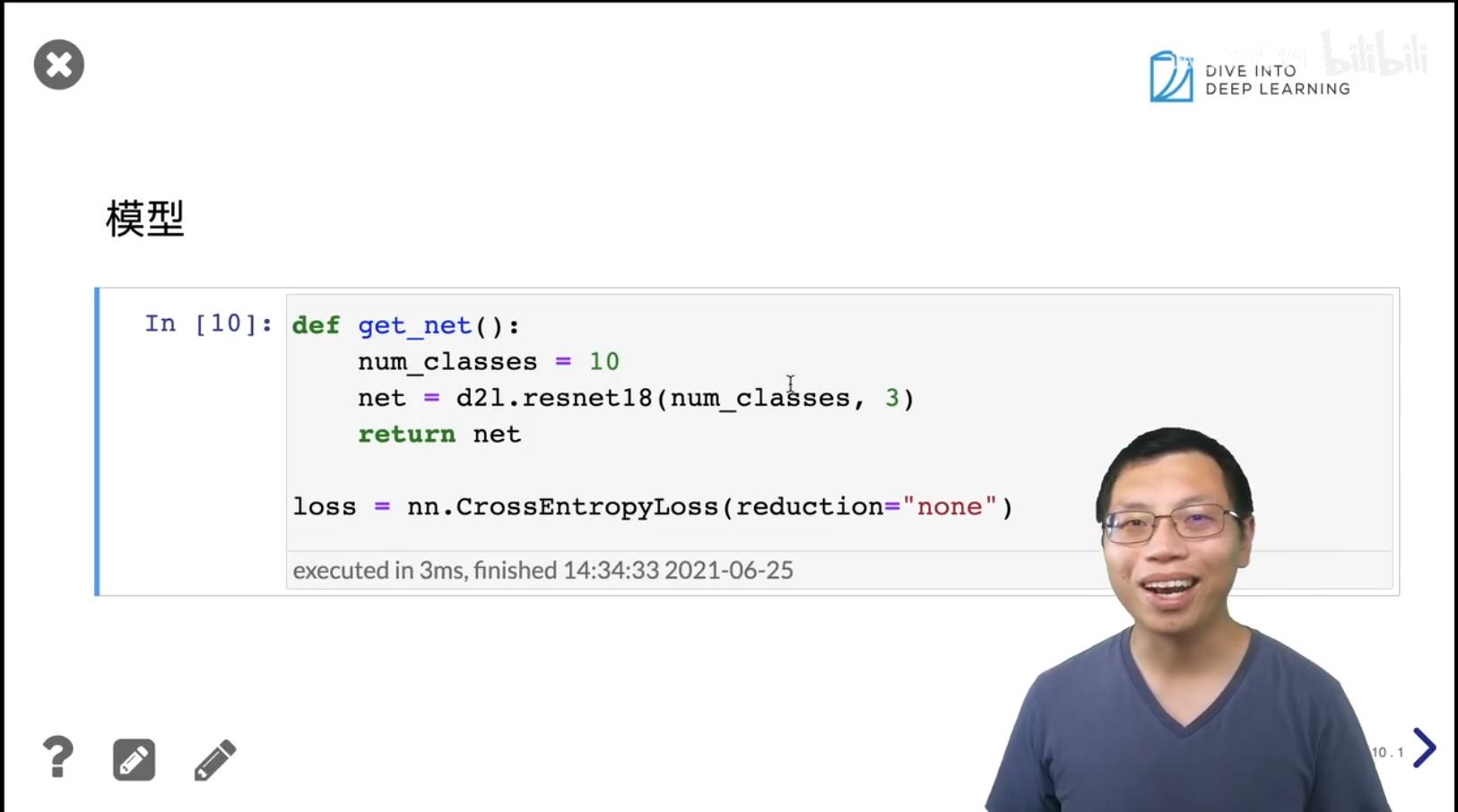

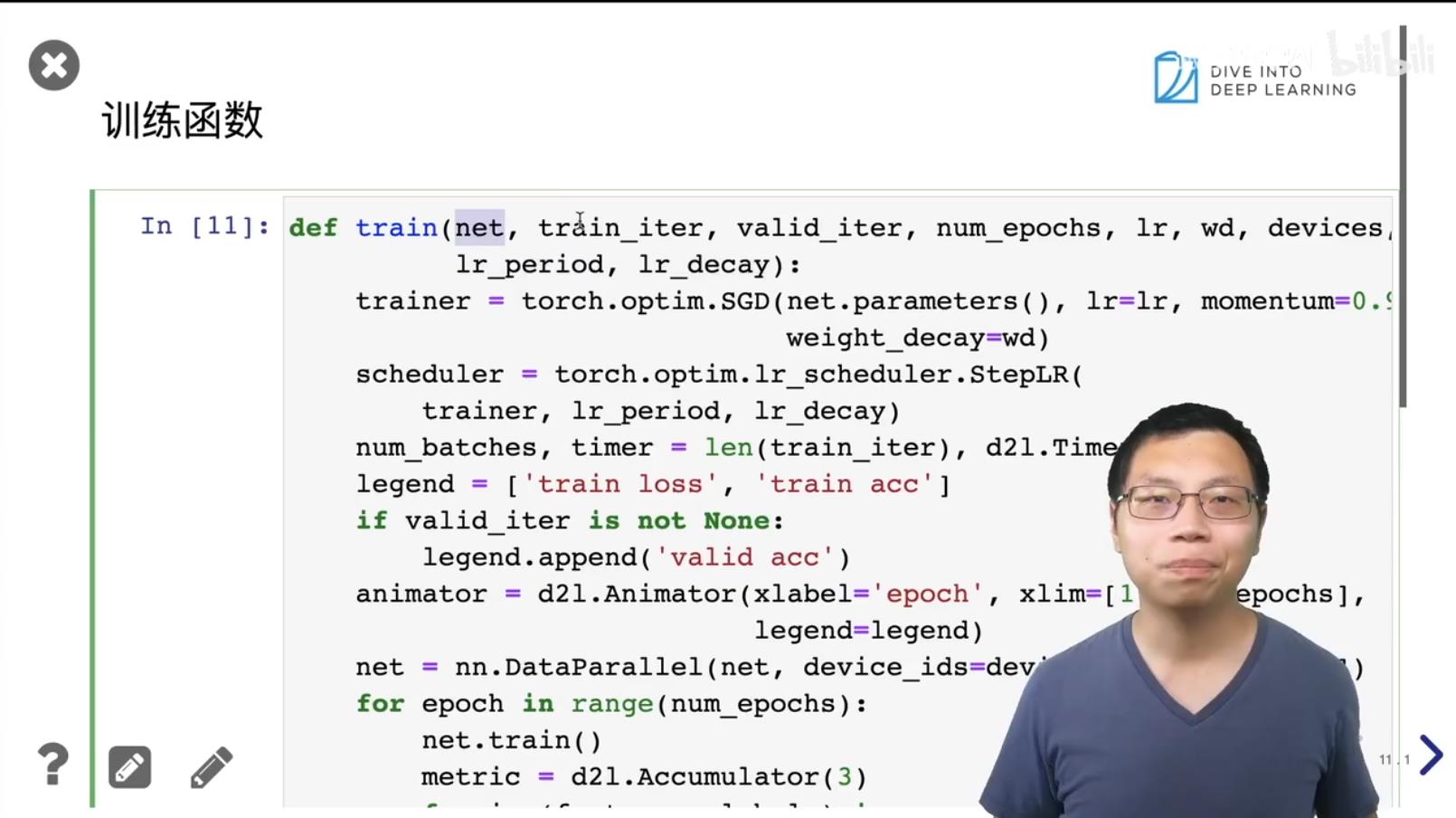

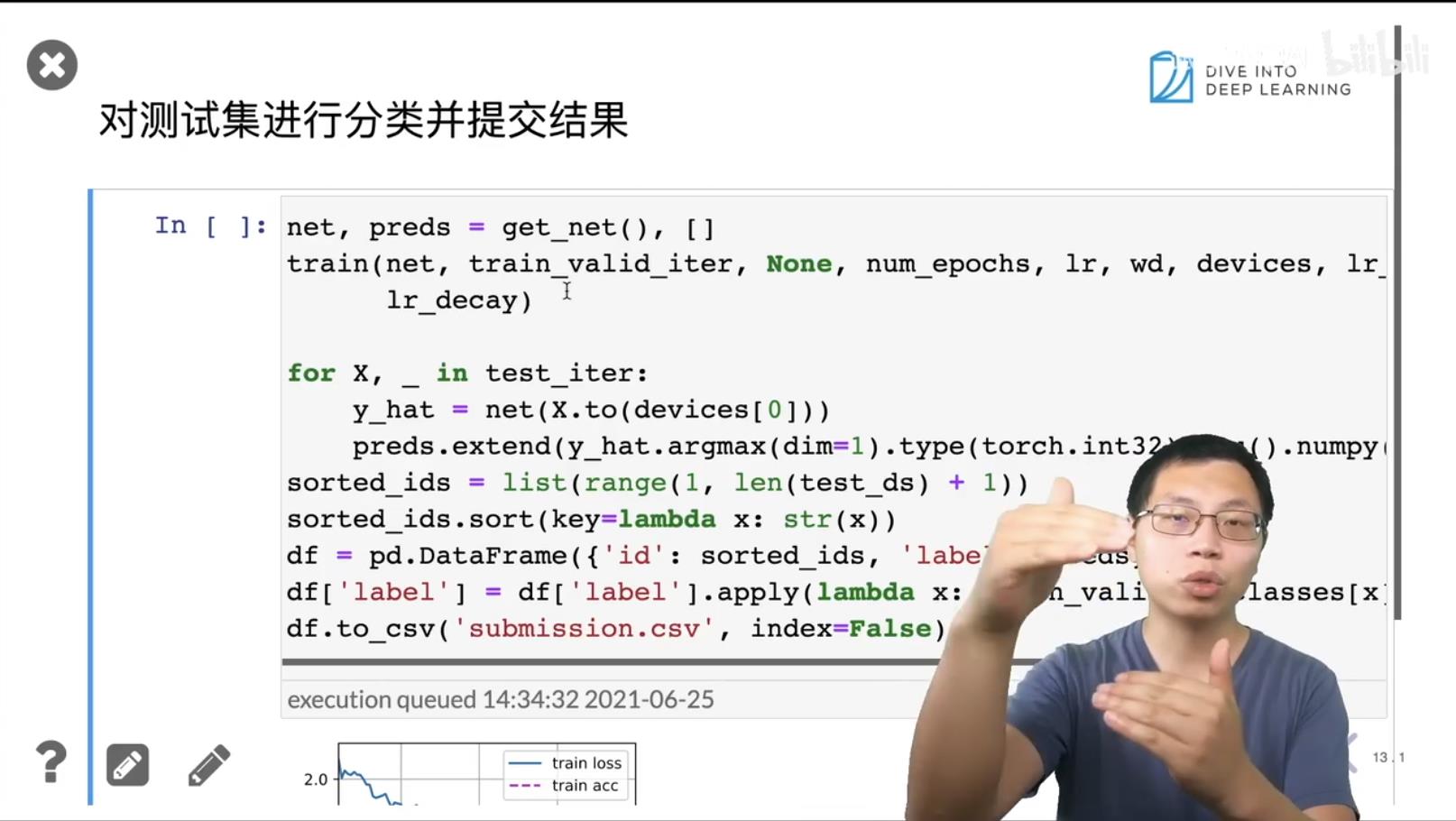

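Hands-on Kaggle Competition: Image Classification (CIFAR-10) | Dive into Deep Learning v2

1. Hands-on Kaggle Competition: Image Classification (CIFAR-10)

https://www.kaggle.com/c/cifar-10

2. Q&A

- Convexity means an optimal solution can be found. The loss function on its own is convex, but most neural networks are non-convex, so in general there is no guarantee of reaching the optimal solution.

- Momentum makes the optimization trajectory a bit smoother.

- A scheduler uses a larger learning rate early on and a smaller one later, much like exploring widely when you are young.

- SGD acts as a form of regularization, which is why it tends to do a bit better than other algorithms.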
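The scheduler answer above (large learning rate early, small learning rate late) can be sketched with cosine annealing. This is one common schedule chosen for illustration, not one the Q&A prescribes; the function name `cosine_lr` and the values `lr_max=0.002` and `lr_min=0.0` are arbitrary assumptions.

```python
import math

def cosine_lr(step, total_steps, lr_max=0.002, lr_min=0.0):
    """Cosine annealing: start at lr_max and decay smoothly to lr_min."""
    progress = min(step, total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))

total = 5
for epoch in range(total + 1):
    print(epoch, round(cosine_lr(epoch, total), 6))
```

The rate falls slowly at first, fastest in the middle of training, and flattens out again near the end.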

Reference

https://www.bilibili.com/video/BV1Gy4y1M7Cu?p=1
