Sentiment Analysis of E-commerce Product Review Data

Posted Mint-L


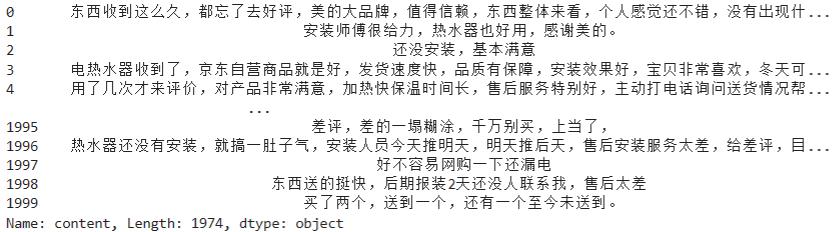

1. Code for deduplicating reviews

    import pandas as pd
    import re
    import jieba.posseg as psg
    import numpy as np

    # Deduplicate: drop rows that are exact duplicates
    reviews = pd.read_csv("./reviews.csv")
    reviews = reviews[['content', 'content_type']].drop_duplicates()
    content = reviews['content']

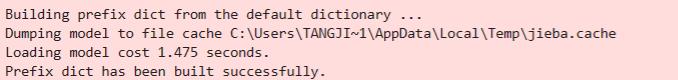

2. Code for data cleaning, word segmentation, part-of-speech tagging, and stop-word removal

    # Remove English letters and digits.
    # The reviews are mostly about a Midea electric water heater sold on JD,
    # so the brand and product words are removed as well.
    strinfo = re.compile('[0-9a-zA-Z]|京东|美的|电热水器|热水器')
    content = content.apply(lambda x: strinfo.sub('', x))

    # Simple segmentation helper: returns (word, part-of-speech) pairs
    worker = lambda s: [(x.word, x.flag) for x in psg.cut(s)]
    seg_word = content.apply(worker)

    # Build a long table: one column for the word, one for the ID of the review
    # it belongs to, and finally one for its position in that review
    n_word = seg_word.apply(lambda x: len(x))  # number of words in each review
    n_content = [[x+1]*y for x, y in zip(list(seg_word.index), list(n_word))]
    index_content = sum(n_content, [])  # flatten the nested list: review ID of each word

    seg_word = sum(seg_word, [])
    word = [x[0] for x in seg_word]    # words
    nature = [x[1] for x in seg_word]  # part-of-speech tags

    content_type = [[x]*y for x, y in zip(list(reviews['content_type']), list(n_word))]
    content_type = sum(content_type, [])  # review type

    result = pd.DataFrame({"index_content": index_content,
                           "word": word,
                           "nature": nature,
                           "content_type": content_type})

    # Remove punctuation
    result = result[result['nature'] != 'x']  # 'x' marks punctuation

    # Remove stop words
    with open("./stoplist.txt", 'r', encoding='UTF-8') as stop_file:
        stop = [x.replace('\n', '') for x in stop_file.readlines()]
    word = list(set(word) - set(stop))
    result = result[result['word'].isin(word)]

    # Position of each word within its review
    n_word = list(result.groupby(by=['index_content'])['index_content'].count())
    index_word = [list(np.arange(0, y)) for y in n_word]
    index_word = sum(index_word, [])

    # Final columns: review ID, word position in the review, word, part of speech, review type
    result['index_word'] = index_word
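
The long-table layout built in this step can be hard to picture from the pandas code alone. The sketch below rebuilds the same shape in plain Python. It assumes the reviews are already segmented (the `segmented` data and the `to_long_rows` helper are made up for illustration) and skips stop-word removal, so it shows only the structure, not the full pipeline.

```python
# Hypothetical, already-segmented reviews: each review is a list of
# (word, part-of-speech) pairs, as jieba.posseg would produce.
segmented = [
    [("安装", "v"), ("师傅", "n"), ("很", "d"), ("专业", "a")],
    [("加热", "v"), ("快", "a")],
]


def to_long_rows(segmented_reviews):
    """Flatten per-review token lists into (review_id, position, word, tag) rows."""
    rows = []
    # Review IDs start at 1, mirroring the `x+1` used for index_content.
    for review_id, tokens in enumerate(segmented_reviews, start=1):
        # Positions start at 0, mirroring np.arange(0, y) used for index_word.
        for position, (word, tag) in enumerate(tokens):
            rows.append((review_id, position, word, tag))
    return rows


rows = to_long_rows(segmented)
for row in rows:
    print(row)
```

Each row carries the review ID and the word's position, which is what the later negation check relies on to look at the words just before a sentiment word.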

3. Extracting reviews that contain nouns

    # Keep only reviews that contain at least one noun-type word
    ind = result[['n' in x for x in result['nature']]]['index_content'].unique()
    result = result[[x in ind for x in result['index_content']]]

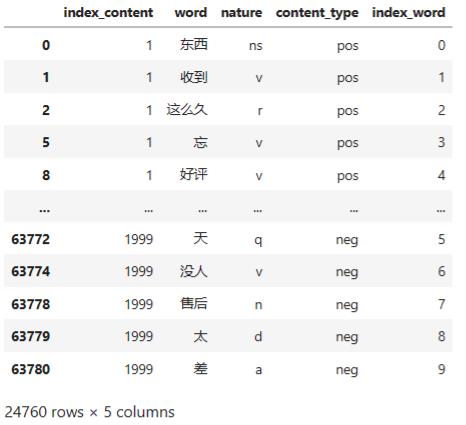

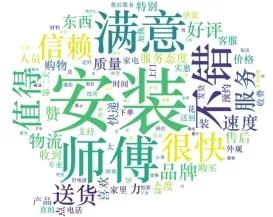

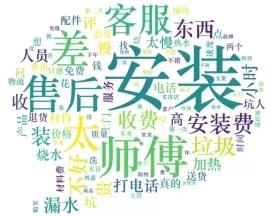

4. Drawing the word cloud

    import matplotlib.pyplot as plt
    from wordcloud import WordCloud

    # Word frequencies, highest first
    frequencies = result.groupby(by=['word'])['word'].count()
    frequencies = frequencies.sort_values(ascending=False)

    background_image = plt.imread('./pl.jpg')  # mask image that shapes the cloud
    wordcloud = WordCloud(font_path="C:\\Windows\\Fonts\\STZHONGS.ttf",
                          max_words=100,
                          background_color='white',
                          mask=background_image)
    my_wordcloud = wordcloud.fit_words(frequencies)
    plt.imshow(my_wordcloud)
    plt.axis('off')
    plt.show()

    # Write the result out
    result.to_csv("./word.csv", index=False, encoding='utf-8')

5. Matching sentiment words

    import pandas as pd
    import numpy as np

    word = pd.read_csv("./word.csv")

    # Read the positive and negative evaluation and emotion word lists.
    # Note: recent pandas releases may reject sep="\n"; reading the files
    # line by line with open() is an alternative.
    pos_comment = pd.read_csv("./正面评价词语(中文).txt", header=None, sep="\n",
                              encoding='utf-8', engine='python')
    neg_comment = pd.read_csv("./负面评价词语(中文).txt", header=None, sep="\n",
                              encoding='utf-8', engine='python')
    pos_emotion = pd.read_csv("./正面情感词语(中文).txt", header=None, sep="\n",
                              encoding='utf-8', engine='python')
    neg_emotion = pd.read_csv("./负面情感词语(中文).txt", header=None, sep="\n",
                              encoding='utf-8', engine='python')

    # Merge emotion words with evaluation words
    positive = set(pos_comment.iloc[:, 0]) | set(pos_emotion.iloc[:, 0])
    negative = set(neg_comment.iloc[:, 0]) | set(neg_emotion.iloc[:, 0])
    intersection = positive & negative  # words that appear in both lists
    positive = list(positive - intersection)
    negative = list(negative - intersection)
    positive = pd.DataFrame({"word": positive, "weight": [1]*len(positive)})
    negative = pd.DataFrame({"word": negative, "weight": [-1]*len(negative)})

    # DataFrame.append was removed in pandas 2.0; pd.concat does the same job
    posneg = pd.concat([positive, negative])

    # Merge the segmentation result with the sentiment lexicon to locate sentiment words
    data_posneg = posneg.merge(word, left_on='word', right_on='word', how='right')
    data_posneg = data_posneg.sort_values(by=['index_content', 'index_word'])
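
The lexicon step comes down to set arithmetic: take the union of each polarity's lists, drop words that appear on both sides, then assign +1 or -1. A minimal plain-Python sketch of that idea follows. The word lists and the `build_lexicon` helper are invented for illustration and are not the files used in this article.

```python
def build_lexicon(positive_words, negative_words):
    """Return {word: weight}, with +1 for positive and -1 for negative words.

    Words found in both lists are ambiguous and are left out entirely.
    """
    positive, negative = set(positive_words), set(negative_words)
    ambiguous = positive & negative
    lexicon = {w: 1 for w in positive - ambiguous}
    lexicon.update({w: -1 for w in negative - ambiguous})
    return lexicon


# Hypothetical word lists; "厉害" appears on both sides and is dropped.
lexicon = build_lexicon(["满意", "好", "厉害"], ["差", "失望", "厉害"])

# Looking up tokens plays the role of the right merge:
# words without a lexicon entry get None (NaN in the pandas version).
tokens = ["安装", "好", "噪音", "差", "厉害"]
weights = [lexicon.get(t) for t in tokens]
print(weights)
```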

6. Correcting the sentiment polarity

    # Adjust the sentiment value when a sentiment word is preceded by
    # a negation word or a double negation
    # Load the negation word list
    notdict = pd.read_csv("./not.csv")
    # `x in Series` tests the index, not the values, so compare against a set
    not_terms = set(notdict['term'])

    # Handle negation modifiers
    data_posneg = data_posneg.reset_index(drop=True)  # row labels now match the 'id' column
    data_posneg['amend_weight'] = data_posneg['weight']  # sentiment value after negation adjustment
    data_posneg['id'] = np.arange(0, len(data_posneg))

    # Keep only words that have a sentiment value
    only_inclination = data_posneg.dropna().reset_index(drop=True)
    index = only_inclination['id']

    for i in np.arange(0, len(only_inclination)):
        # All words of the review that contains the i-th sentiment word
        review = data_posneg[data_posneg['index_content'] ==
                             only_inclination['index_content'][i]]
        review = review.reset_index(drop=True)
        affective = int(only_inclination['index_word'][i])  # position of the sentiment word in its review
        # Count negation words among the (up to) two preceding words
        preceding = review['word'].iloc[max(affective - 2, 0):affective]
        ne = sum(w in not_terms for w in preceding)
        if ne == 1:  # one negation flips the polarity; a double negation cancels out
            data_posneg.loc[index[i], 'amend_weight'] = -data_posneg.loc[index[i], 'weight']

    # Refresh the sentiment-only rows so they include the adjusted values
    only_inclination = data_posneg.dropna()

    # Sentiment value of each review
    emotional_value = only_inclination.groupby(['index_content'],
                                               as_index=False)['amend_weight'].sum()

    # Drop reviews whose sentiment value is 0
    emotional_value = emotional_value[emotional_value['amend_weight'] != 0]
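
The negation rule is easier to check in isolation: look at up to two words before the sentiment word, flip the weight when exactly one of them is a negation, and leave it unchanged otherwise, so a double negation cancels out. The sketch below shows that rule on made-up inputs. The `amend_weight` function and the `negations` set are illustrative assumptions, not the contents of `not.csv`.

```python
def amend_weight(words, position, weight, negations):
    """Flip `weight` when exactly one of the two preceding words is a negation."""
    # Up to two words immediately before the sentiment word.
    window = words[max(position - 2, 0):position]
    negation_count = sum(1 for w in window if w in negations)
    return -weight if negation_count == 1 else weight


negations = {"不", "没有", "不是"}  # hypothetical negation list

single = amend_weight(["不", "满意"], 1, 1, negations)          # one negation: flipped
double = amend_weight(["不是", "不", "满意"], 2, 1, negations)  # two negations: unchanged
plain = amend_weight(["很", "满意"], 1, 1, negations)           # no negation: unchanged
print(single, double, plain)
```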

7. Inspecting the sentiment analysis results

    # Label reviews: sentiment value > 0 is 'pos', < 0 is 'neg'
    emotional_value['a_type'] = ''
    emotional_value.loc[emotional_value['amend_weight'] > 0, 'a_type'] = 'pos'
    emotional_value.loc[emotional_value['amend_weight'] < 0, 'a_type'] = 'neg'

    # Compare the predicted labels with the original review types
    result = emotional_value.merge(word, left_on='index_content',
                                   right_on='index_content', how='left')
    result = result[['index_content', 'content_type', 'a_type']].drop_duplicates()
    confusion_matrix = pd.crosstab(result['content_type'], result['a_type'],
                                   margins=True)  # cross table
    # Accuracy: correctly labelled reviews divided by all reviews
    accuracy = (confusion_matrix.iat[0, 0] + confusion_matrix.iat[1, 1]) / confusion_matrix.iat[2, 2]
    print(accuracy)

    # Extract the words of positive and negative reviews
    ind_pos = list(emotional_value[emotional_value['a_type'] == 'pos']['index_content'])
    ind_neg = list(emotional_value[emotional_value['a_type'] == 'neg']['index_content'])
    posdata = word[[i in ind_pos for i in word['index_content']]]
    negdata = word[[i in ind_neg for i in word['index_content']]]

    # Draw the word clouds
    import matplotlib.pyplot as plt
    from wordcloud import WordCloud

    # Word cloud for positive reviews
    freq_pos = posdata.groupby(by=['word'])['word'].count()
    freq_pos = freq_pos.sort_values(ascending=False)
    background_image = plt.imread('./pl.jpg')
    wordcloud = WordCloud(font_path="C:\\Windows\\Fonts\\STZHONGS.ttf",
                          max_words=100,
                          background_color='white',
                          mask=background_image)
    pos_wordcloud = wordcloud.fit_words(freq_pos)
    plt.imshow(pos_wordcloud)
    plt.rcParams['font.sans-serif'] = 'SimHei'  # enable Chinese text rendering
    plt.axis('off')
    plt.show()

    # Word cloud for negative reviews
    freq_neg = negdata.groupby(by=['word'])['word'].count()
    freq_neg = freq_neg.sort_values(ascending=False)
    neg_wordcloud = wordcloud.fit_words(freq_neg)
    plt.imshow(neg_wordcloud)
    plt.rcParams['font.sans-serif'] = 'SimHei'  # enable Chinese text rendering
    plt.axis('off')
    plt.show()

    # Write the results out, one word per row
    posdata.to_csv("./posdata.csv", index=False, encoding='utf-8')
    negdata.to_csv("./negdata.csv", index=False, encoding='utf-8')
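
The accuracy expression above reads cells out of the cross table by position, which hides what is being counted: reviews whose predicted label matches the original label, divided by all labelled reviews. A small plain-Python sketch of the same calculation, using invented labels and a hypothetical `accuracy` helper, makes that explicit.

```python
from collections import Counter


def accuracy(actual, predicted):
    """Return the (actual, predicted) pair counts and the share of matching pairs."""
    pairs = Counter(zip(actual, predicted))
    correct = sum(n for (a, p), n in pairs.items() if a == p)
    return pairs, correct / len(actual)


# Hypothetical labels: original review type versus lexicon-based prediction.
actual = ["pos", "pos", "neg", "neg", "pos"]
predicted = ["pos", "neg", "neg", "neg", "pos"]

table, acc = accuracy(actual, predicted)
print(dict(table))
print(acc)
```

The `table` counter holds the same four cells as the inner part of the cross table, and the matching pairs are its diagonal.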

8. Building the dictionary and corpus

    import pandas as pd
    import numpy as np
    import re
    import itertools
    import matplotlib.pyplot as plt
    from gensim import corpora, models

    # Load the data produced by the sentiment analysis
    posdata = pd.read_csv("./posdata.csv", encoding='utf-8')
    negdata = pd.read_csv("./negdata.csv", encoding='utf-8')

    # Build the dictionaries
    pos_dict = corpora.Dictionary([[i] for i in posdata['word']])  # positive
    neg_dict = corpora.Dictionary([[i] for i in negdata['word']])  # negative

    # Build the corpora
    pos_corpus = [pos_dict.doc2bow(j) for j in [[i] for i in posdata['word']]]  # positive
    neg_corpus = [neg_dict.doc2bow(j) for j in [[i] for i in negdata['word']]]  # negative
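
For readers new to gensim, a dictionary maps each distinct token to an integer ID, and a bag-of-words corpus stores each document as `(token_id, count)` pairs. The sketch below imitates that idea in plain Python. It is not gensim's implementation, the ID assignment may differ from what `corpora.Dictionary` produces, and `build_vocabulary` and `to_bow` are hypothetical helper names.

```python
from collections import Counter


def build_vocabulary(documents):
    """Assign an integer ID to each distinct token, in order of first appearance."""
    vocab = {}
    for doc in documents:
        for token in doc:
            vocab.setdefault(token, len(vocab))
    return vocab


def to_bow(doc, vocab):
    """Represent a document as sorted (token_id, count) pairs."""
    counts = Counter(vocab[t] for t in doc if t in vocab)
    return sorted(counts.items())


docs = [["安装", "师傅", "安装"], ["加热", "快"]]  # hypothetical tokenized documents
vocab = build_vocabulary(docs)
bows = [to_bow(d, vocab) for d in docs]
print(vocab)
print(bows)
```

Note that the article's code wraps every single word in its own one-element document (`[[i] for i in posdata['word']]`), so each bag of words there contains exactly one pair.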

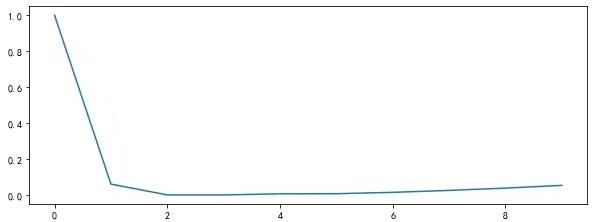

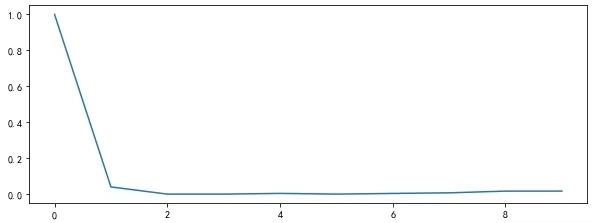

9. Finding the optimal number of topics

    # Cosine similarity between two vectors
    def cos(vector1, vector2):
        dot_product = 0.0
        normA = 0.0
        normB = 0.0
        for a, b in zip(vector1, vector2):
            dot_product += a*b
            normA += a**2
            normB += b**2
        if normA == 0.0 or normB == 0.0:
            return 0.0  # avoid returning None, which would break the sum below
        else:
            return dot_product / ((normA*normB)**0.5)

    # Search over the number of topics
    def lda_k(x_corpus, x_dict):
        # Initialise the list of average cosine similarities
        mean_similarity = []
        mean_similarity.append(1)

        # Train a model for each topic count and measure similarity between topics
        for i in np.arange(2, 11):
            lda = models.LdaModel(x_corpus, num_topics=i, id2word=x_dict)  # train the LDA model
            term = lda.show_topics(num_words=50)

            # Extract the top words of each topic
            top_word = []
            for k in np.arange(i):
                top_word.append([''.join(re.findall('"(.*)"', t))
                                 for t in term[k][1].split('+')])

            # Build word-frequency vectors
            word = sum(top_word, [])  # all topic words
            unique_word = set(word)   # without duplicates

            # One row per topic, one column per unique topic word
            mat = []
            for j in np.arange(i):
                top_w = top_word[j]
                mat.append(tuple([top_w.count(k) for k in unique_word]))

            p = list(itertools.permutations(list(np.arange(i)), 2))
            l = len(p)
            top_similarity = [0]
            for w in np.arange(l):
                vector1 = mat[p[w][0]]
                vector2 = mat[p[w][1]]
                top_similarity.append(cos(vector1, vector2))

            # Average cosine similarity across topic pairs
            mean_similarity.append(sum(top_similarity)/l)
        return mean_similarity

    # Average cosine similarity between topics
    pos_k = lda_k(pos_corpus, pos_dict)
    neg_k = lda_k(neg_corpus, neg_dict)

    # Plot the average cosine similarity
    from matplotlib.font_manager import FontProperties
    font = FontProperties(size=14)
    # Enable Chinese text rendering
    plt.rcParams['font.sans-serif'] = ['SimHei']
    plt.rcParams['axes.unicode_minus'] = False
    fig = plt.figure(figsize=(10, 8))
    ax1 = fig.add_subplot(211)
    ax1.plot(pos_k)
    ax1.set_xlabel(' ', fontproperties=font)

    ax2 = fig.add_subplot(212)
    ax2.plot(neg_k)
    ax2.set_xlabel(' ', fontproperties=font)
    plt.show()
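
The core of the search is one measurement: turn each topic's top words into a count vector over the shared vocabulary, then average the cosine similarity over all topic pairs. A lower average is usually read as better-separated topics. The sketch below works through that on three invented topics. It uses unordered pairs from `itertools.combinations`, which gives the same mean as the ordered pairs above because cosine similarity is symmetric; `cosine` and `mean_topic_similarity` are illustrative names.

```python
from itertools import combinations
from math import sqrt


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def mean_topic_similarity(topics):
    """Average pairwise cosine similarity between topics given as word lists."""
    vocab = sorted({w for topic in topics for w in topic})
    vectors = [[topic.count(w) for w in vocab] for topic in topics]
    pairs = list(combinations(range(len(topics)), 2))
    return sum(cosine(vectors[i], vectors[j]) for i, j in pairs) / len(pairs)


# Hypothetical top words: topics 0 and 1 share one word, topic 2 shares none.
topics = [
    ["安装", "师傅", "服务"],
    ["加热", "速度", "服务"],
    ["价格", "便宜", "实惠"],
]
similarity = mean_topic_similarity(topics)
print(round(similarity, 3))
```

Here only one of the three pairs overlaps (similarity 1/3), so the mean is about 0.111.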

10. LDA topic analysis

    # LDA topic analysis
    pos_lda = models.LdaModel(pos_corpus, num_topics=3, id2word=pos_dict)
    neg_lda = models.LdaModel(neg_corpus, num_topics=3, id2word=neg_dict)
    print(pos_lda.print_topics(num_words=10))
    print(neg_lda.print_topics(num_words=10))

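Sentiment analysis of e-commerce reviews in Python

Apps, WeChat subscription accounts, Weibo, and shopping sites all let users post opinions, attitudes, evaluations, and positions. Sentiment analysis can turn this data into useful information. Analyzing product reviews, for example, shows how satisfied customers are with a product and where it could be improved. Analyzing what one person posts shows how their mood changes and which emotions dominate, which in turn says something about their personality. So how can we tell which reviews are positive and which are negative, and how likely is a review to be positive?

The third-party Python module SnowNLP can predict the sentiment of review text. SnowNLP is built for Chinese text and supports word segmentation, part-of-speech tagging, sentiment analysis, text classification, keyword extraction, and text similarity. Its sentiment score is the estimated probability that a text is positive: as a rule of thumb, a score of 0.5 or higher counts as a positive review (positive sentiment) and a score below 0.5 counts as a negative review (negative sentiment).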
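
The 0.5 threshold rule can be sketched without SnowNLP itself. The scores below are invented stand-ins for SnowNLP sentiment scores, and `label_sentiment` is a hypothetical helper; the point is only how scores map to labels and to an overall positive rate.

```python
def label_sentiment(score, threshold=0.5):
    """Label a sentiment score: at or above the threshold is positive."""
    return "positive" if score >= threshold else "negative"


# Hypothetical scores in [0, 1], standing in for SnowNLP sentiment scores.
scores = [0.93, 0.5, 0.12, 0.48]
labels = [label_sentiment(s) for s in scores]

# Share of reviews labelled positive, formatted as a percentage.
positive_rate = labels.count("positive") / len(labels)
print(labels)
print(f"{positive_rate:.0%}")
```

A score of exactly 0.5 lands on the positive side, matching the "0.5 or higher" rule described above.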

Below, we analyze a set of reviews for a product on JD and plot the result as a line chart.

Sample of the source data:

Implementation:

    # Load the sentiment analysis module
    from snownlp import SnowNLP
    # from snownlp import sentiment
    import pandas as pd
    import matplotlib.pyplot as plt

    # Path to the sample data
    aa = 'F:\\python入门\\python编程锦囊\\Code(实例源码及使用说明)\\Code(实例源码及使用说明)\\Code(实例源码及使用说明)\\09\\data\\京东评论.xls'
    # Read the data
    df = pd.read_excel(aa)
    # Take the review text (the fourth column)
    df1 = df.iloc[:, 3]
    print('将提取的数据打印出来:\n', df1)

    # Predict the sentiment of every review.
    # The score is the probability of being positive:
    # 0.5 or higher is positive, lower is negative.
    values = [SnowNLP(i).sentiments for i in df1]

    # myval stores the predicted labels
    myval = []
    good = 0
    bad = 0
    for i in values:
        if i >= 0.5:
            myval.append("正面")
            good = good + 1
        else:
            myval.append("负面")
            bad = bad + 1
    df['预测值'] = values
    df['评价类别'] = myval

    # Write the result to Excel
    df.to_excel('F:\\python入门\\python编程锦囊\\Code(实例源码及使用说明)\\Code(实例源码及使用说明)\\Code(实例源码及使用说明)\\09\\data\\result2.xls')
    rate = good/(good+bad)
    print('好评率', '%.f%%' % (rate * 100))  # format as a percentage

    # Plot the scores
    y = values
    plt.rc('font', family='SimHei', size=10)
    plt.plot(y, marker='o', mec='r', mfc='w', label=u'评价分值')
    plt.xlabel('用户')
    plt.ylabel('评价分值')
    # Show the legend
    plt.legend()
    # Add a title
    plt.title('京东评论情感分析', family='SimHei', size=14, color='blue')
    plt.show()

Excel output:

Plot output:
