Kubernetes 1.15.1 High-Availability Deployment (CentOS 7.9)

Posted by 村里唯一的运维


1. Software versions

Upgrade the CentOS 7 kernel first, preferably to 4.4 or later (the default 3.10 kernel has bugs that show up when running Docker at scale).

| Software/System | Version | Notes |
|---|---|---|
| CentOS | 7.9 | Minimal install |
| k8s | 1.15.1 | |
| flannel | 0.11 | |
| etcd | 3.3.10 | |
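The kernel recommendation in this section can be checked before going further. The following is a minimal sketch, assuming GNU `sort -V` is available; the function name `kernel_at_least` is made up for this illustration.

```shell
#!/bin/sh
# kernel_at_least MIN [VERSION]
# Succeeds when VERSION (default: the running kernel) is MIN or newer.
# Assumption: GNU coreutils `sort -V` (version sort) is installed.
kernel_at_least() {
    min=$1
    cur=${2:-$(uname -r)}
    # Keep only the part before the first dash: 3.10.0-1160.el7.x86_64 -> 3.10.0
    cur=${cur%%-*}
    lowest=$(printf '%s\n%s\n' "$min" "$cur" | sort -V | head -n 1)
    [ "$lowest" = "$min" ]
}

if kernel_at_least 4.4; then
    echo "kernel $(uname -r) is 4.4 or newer"
else
    echo "kernel $(uname -r) is older than 4.4; consider upgrading first"
fi
```

Passing an explicit second argument, for example `kernel_at_least 4.4 3.10.0-1160.el7.x86_64`, checks a version string without touching the running system.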

2. Role assignment

| k8s role | Hostname | Node IP | Notes |
|---|---|---|---|
| master1+etcd1 | master1.host.com | 10.0.0.70 | Master node |
| master2+etcd2 | master2.host.com | 10.0.0.71 | |
| master3+etcd3 | master3.host.com | 10.0.0.72 | |
| node1 | node1.host.com | 10.0.0.73 | Worker node |
| node2 | node2.host.com | 10.0.0.74 | |
| haproxy1+keepalived | haproxy1.host.com | 10.0.0.75 | Load balancer (VIP: 10.0.0.80) |
| haproxy2+keepalived | haproxy2.host.com | 10.0.0.76 | |

3. Installation procedure

Step 1: Install basic tools

Run on all nodes.

yum install net-tools vim wget -y

Step 2: Disable the firewall and SELinux

Run on all nodes.

systemctl stop firewalld

systemctl disable firewalld

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

reboot

Step 3: Set the time zone

Run on all nodes.

\cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime -rf

Step 4: Disable swap

Run on all nodes.

swapoff -a

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
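Because the sed command edits /etc/fstab in place, it can be worth trying the expression on a scratch file first. The sketch below does that; the two sample fstab lines are hypothetical and only stand in for a typical CentOS layout. It assumes GNU sed (for `-i`) and `mktemp`.

```shell
#!/bin/sh
# Work on a scratch file, not on the real /etc/fstab.
demo_fstab=$(mktemp)
cat > "$demo_fstab" <<'EOF'
/dev/mapper/centos-root / xfs defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
EOF

# Prefix every line that contains " swap " with '#', leaving other lines alone.
sed -i '/ swap / s/^\(.*\)$/#\1/g' "$demo_fstab"

cat "$demo_fstab"
```

Only the swap entry ends up commented out, so the root filesystem line still mounts at boot.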

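Step 5: Set up time synchronization

Run on all nodes. Entries in /etc/crontab need a user field, so the job is declared to run as root.

yum install -y ntpdate

ntpdate -u ntp.aliyun.com

echo "*/5 * * * * root ntpdate ntp.aliyun.com >/dev/null 2>&1" >> /etc/crontab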

systemctl start crond.service

systemctl enable crond.service

Step 6: Configure host name resolution (/etc/hosts)

cat > /etc/hosts <<EOF

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.0.0.70 master1.host.com

10.0.0.71 master2.host.com

10.0.0.72 master3.host.com

10.0.0.73 node1.host.com

10.0.0.74 node2.host.com

10.0.0.75 haproxy1.host.com

10.0.0.76 haproxy2.host.com

EOF

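Step 7: Passwordless SSH login

Run the script on master1 only. It assumes that /root/.ssh/id_rsa.pub already exists (create it with ssh-keygen if it does not) and that the root password on every host is 123456. The heredoc delimiter is quoted so that $IP, $node and $? are written into the script literally instead of being expanded while the file is created.

yum install sshpass -y

cat > scp.sh << 'EOF'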

#!/bin/sh

IP="10.0.0.70

10.0.0.71

10.0.0.72

10.0.0.73

10.0.0.74

10.0.0.75

10.0.0.76

"

for node in $IP

do

sshpass -p123456 ssh-copy-id -i /root/.ssh/id_rsa.pub $node -o StrictHostKeyChecking=no &>/dev/null

if [ $? -eq 0 ];then

echo "$node: key copied"

else

echo "$node: key copy failed"

fi

done

EOF

Step 8: Tune kernel parameters

Run on the master and worker nodes.

cat >/etc/sysctl.d/kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

vm.swappiness=0

fs.file-max=52706963

fs.nr_open=52706963

EOF

modprobe br_netfilter

sysctl -p /etc/sysctl.d/kubernetes.conf

Step 9: Configure high availability

Install keepalived

Installation

Install on both haproxy hosts.

yum install -y keepalived

Write the configuration file

Configure haproxy1 as MASTER:

cat >/etc/keepalived/keepalived.conf<<EOF

! Configuration File for keepalived

global_defs {

    router_id KUB_LVS

}

vrrp_instance VI_1 {

    state MASTER

    interface eth0

    virtual_router_id 66

    priority 100

    advert_int 1

    authentication {

        auth_type PASS

        auth_pass 1111

    }

    virtual_ipaddress {

        10.0.0.80/24 dev eth0 label eth0:1

    }

}

EOF

Configure haproxy2 as BACKUP:

cat >/etc/keepalived/keepalived.conf<<EOF

! Configuration File for keepalived

global_defs {

    router_id KUB_LVS

}

vrrp_instance VI_1 {

    state BACKUP

    interface eth0

    virtual_router_id 66

    priority 80

    advert_int 1

    authentication {

        auth_type PASS

        auth_pass 1111

    }

    virtual_ipaddress {

        10.0.0.80/24 dev eth0 label eth0:1

    }

}

EOF
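The two keepalived configurations differ only in `state` and `priority`, so they can be produced from one template. This is a sketch, not part of the original procedure: the function name `render_keepalived` and its optional interface and VIP arguments are assumptions, with defaults taken from the values used in this walkthrough.

```shell
#!/bin/sh
# render_keepalived STATE PRIORITY [INTERFACE] [VIP]
# Prints a keepalived.conf to stdout; it writes nothing by itself.
render_keepalived() {
    state=$1
    priority=$2
    iface=${3:-eth0}
    vip=${4:-10.0.0.80/24}
    cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id KUB_LVS
}
vrrp_instance VI_1 {
    state $state
    interface $iface
    virtual_router_id 66
    priority $priority
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        $vip dev $iface label $iface:1
    }
}
EOF
}

# Preview the BACKUP variant. To install it, redirect the output instead:
#   render_keepalived MASTER 100 > /etc/keepalived/keepalived.conf   # on haproxy1
#   render_keepalived BACKUP 80  > /etc/keepalived/keepalived.conf   # on haproxy2
render_keepalived BACKUP 80
```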

Start keepalived and enable it at boot

systemctl start keepalived

systemctl enable keepalived

Install haproxy (on the second host only the host-specific addresses need changing)

Installation

1. Upload the source packages

root@haproxy1:/usr/local/src# ll

total 3792

drwxr-xr-x 4 root root 96 Jun 9 14:01 ./

drwxr-xr-x 10 root root 140 Jun 9 14:02 ../

drwxrwxr-x 13 root root 4096 Jun 9 14:16 haproxy-2.4.4/

-rw-r--r-- 1 root root 3570069 May 24 10:25 haproxy-2.4.4.tar.gz

drwxr-xr-x 4 1026 ygw 58 Jun 27 2018 lua-5.3.5/

-rw-r--r-- 1 root root 303543 Nov 16 2020 lua-5.3.5.tar.gz

2. Create symbolic links

ln -s /usr/local/src/lua-5.3.5 /usr/local/lua

ln -s /usr/local/src/haproxy-2.4.4 /usr/local/haproxy

3. Build Lua

1) Install the build dependencies

yum install gcc gcc-c++ readline-devel glibc glibc-devel pcre pcre-devel openssl-devel zlib-devel systemd-devel -y

cd /usr/local/lua && make linux

Check the version that was built

/usr/local/lua/src/lua -v

Lua 5.3.5 Copyright (C) 1994-2018 Lua.org, PUC-Rio

4. Build and install haproxy

cd /usr/local/src/haproxy-2.4.4 && make TARGET=linux-glibc USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_SYSTEMD=1 USE_LUA=1 LUA_INC=/usr/local/lua/src/ LUA_LIB=/usr/local/lua/src/ && make install PREFIX=/apps/haproxy

5. Link the binary into the PATH

ln -s /apps/haproxy/sbin/haproxy /usr/sbin/

6. Check the version

haproxy -v

Write the haproxy configuration file

mkdir /etc/haproxy

cat >/etc/haproxy/haproxy.cfg<<EOF

global

log 127.0.0.1 local2 info

chroot /apps/haproxy

pidfile /var/lib/haproxy/haproxy.pid

stats socket /var/lib/haproxy/haproxy.sock mode 600 level admin

maxconn 100000

user haproxy

group haproxy

daemon

defaults

mode http

option http-keep-alive

option forwardfor

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

maxconn 100000

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth haadmin:123456

listen k8s-api-6443

bind 10.0.0.80:6443

mode tcp

log global

server master1 10.0.0.70:6443 check inter 3000 fall 3 rise 5

server master2 10.0.0.71:6443 check inter 3000 fall 3 rise 5

server master3 10.0.0.72:6443 check inter 3000 fall 3 rise 5

EOF

Write the haproxy service unit file

cat >/etc/systemd/system/haproxy.service<<EOF

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

[Service]

ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q

ExecStart=/usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

ExecReload=/bin/kill -USR2 \$MAINPID

[Install]

WantedBy=multi-user.target

EOF

Create the haproxy user and its directory

mkdir /var/lib/haproxy

useradd -r -s /sbin/nologin -d /var/lib/haproxy haproxy

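Start haproxy and enable it at boot. On the host that does not currently hold the VIP, binding to 10.0.0.80:6443 fails unless net.ipv4.ip_nonlocal_bind=1 is set.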

systemctl daemon-reload

systemctl start haproxy

systemctl enable haproxy

Step 10: Configure certificates

Download the self-signed certificate tooling (cfssl)

Run on master1.

mkdir /soft && cd /soft

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

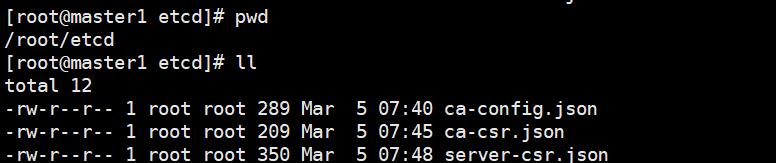

Generate the etcd certificates

Create the working directory (master1)

mkdir /root/etcd && cd /root/etcd

CA configuration

Run on master1.

cd /root/etcd

cat << EOF | tee ca-config.json

{

  "signing": {

    "default": {

      "expiry": "876000h"

    },

    "profiles": {

      "www": {

        "expiry": "876000h",

        "usages": [

          "signing",

          "key encipherment",

          "server auth",

          "client auth"

        ]

      }

    }

  }

}

EOF

Create the CA certificate signing request file

cd /root/etcd

cat << EOF | tee ca-csr.json

{

  "CA": { "expiry": "876000h" },

  "CN": "etcd CA",

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "Beijing",

      "ST": "Beijing"

    }

  ]

}

EOF

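Create the etcd certificate signing request file

List every etcd member in the hosts field (run on master1). The entries must match the addresses the members actually listen on, which in this walkthrough are the three master IPs and host names.

cd /root/etcd

cat << EOF | tee server-csr.json

{

  "CN": "etcd",

  "hosts": [

    "master1.host.com",

    "master2.host.com",

    "master3.host.com",

    "10.0.0.70",

    "10.0.0.71",

    "10.0.0.72"

  ],

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "Beijing",

      "ST": "Beijing"

    }

  ]

}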

EOF

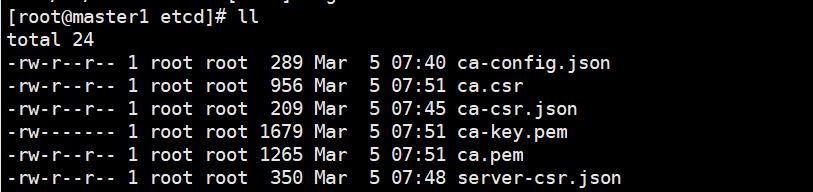

Generate the etcd CA certificate and key pair (run on master1)

cd /root/etcd/

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@master1 etcd]# ll

total 24

-rw-r--r-- 1 root root 289 Mar 5 07:40 ca-config.json # CA signing configuration

-rw-r--r-- 1 root root 956 Mar 5 07:51 ca.csr # generated CA signing request

-rw-r--r-- 1 root root 209 Mar 5 07:45 ca-csr.json # CA signing request definition

-rw------- 1 root root 1679 Mar 5 07:51 ca-key.pem # CA private key

-rw-r--r-- 1 root root 1265 Mar 5 07:51 ca.pem # CA certificate

-rw-r--r-- 1 root root 350 Mar 5 07:48 server-csr.json

Generate the etcd certificate (master1)

cd /root/etcd/

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

[root@master1 etcd]# ll

total 36

-rw-r--r-- 1 root root 289 Mar 5 07:40 ca-config.json

-rw-r--r-- 1 root root 956 Mar 5 07:51 ca.csr

-rw-r--r-- 1 root root 209 Mar 5 07:45 ca-csr.json

-rw------- 1 root root 1679 Mar 5 07:51 ca-key.pem

-rw-r--r-- 1 root root 1265 Mar 5 07:51 ca.pem

-rw-r--r-- 1 root root 1086 Mar 5 07:54 server.csr

-rw-r--r-- 1 root root 350 Mar 5 07:48 server-csr.json

-rw------- 1 root root 1679 Mar 5 07:54 server-key.pem # also used by etcd clients

-rw-r--r-- 1 root root 1415 Mar 5 07:54 server.pem

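Create the Kubernetes certificates

These certificates secure communication between the Kubernetes components and are separate from the etcd certificates created above (master1).

Create the working directory (master1)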

mkdir /root/kubernetes/ && cd /root/kubernetes/

Write the CA configuration (master1)

cd /root/kubernetes/

cat << EOF | tee ca-config.json

{

  "signing": {

    "default": {

      "expiry": "876000h"

    },

    "profiles": {

      "kubernetes": {

        "expiry": "876000h",

        "usages": [

          "signing",

          "key encipherment",

          "server auth",

          "client auth"

        ]

      }

    }

  }

}

EOF

[root@master1 kubernetes]# ll

total 4

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

Create the CA certificate signing request file (master1)

cd /root/kubernetes/

cat << EOF | tee ca-csr.json

{

  "CA": { "expiry": "876000h" },

  "CN": "kubernetes",

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "Beijing",

      "ST": "Beijing",

      "O": "k8s",

      "OU": "System"

    }

  ]

}

EOF

[root@master1 kubernetes]# ll

total 8

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

Create the API server certificate signing request file (master1)

In the hosts list, 192.168.0.1 is the first address of the Service range and 192.168.0.2 is the address reserved for cluster DNS. JSON does not allow comments, so these notes stay outside the file.

cd /root/kubernetes/

cat << EOF | tee server-csr.json

{

  "CN": "kubernetes",

  "hosts": [

    "192.168.0.1",

    "127.0.0.1",

    "192.168.0.2",

    "10.0.0.70",

    "10.0.0.71",

    "10.0.0.72",

    "10.0.0.73",

    "10.0.0.74",

    "10.0.0.80",

    "master1.host.com",

    "master2.host.com",

    "master3.host.com",

    "node1.host.com",

    "node2.host.com",

    "kubernetes",

    "kubernetes.default",

    "kubernetes.default.svc",

    "kubernetes.default.svc.cluster",

    "kubernetes.default.svc.cluster.local"

  ],

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "Beijing",

      "ST": "Beijing",

      "O": "k8s",

      "OU": "System"

    }

  ]

}

EOF

[root@master1 kubernetes]# ll

total 12

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

Create the kube-proxy certificate signing request file (master1)

cd /root/kubernetes/

cat << EOF | tee kube-proxy-csr.json

{

  "CN": "system:kube-proxy",

  "hosts": [],

  "key": {

    "algo": "rsa",

    "size": 2048

  },

  "names": [

    {

      "C": "CN",

      "L": "Beijing",

      "ST": "Beijing",

      "O": "k8s",

      "OU": "System"

    }

  ]

}

EOF

Generate the Kubernetes CA certificate and key pair

Generate the CA certificate (master1)

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@master1 kubernetes]# ll

total 28

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

Generate the API server certificate (master1)

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

[root@master1 kubernetes]# ll

total 40

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw-r--r-- 1 root root 1419 Mar 5 08:25 server.csr

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

-rw------- 1 root root 1679 Mar 5 08:25 server-key.pem

-rw-r--r-- 1 root root 1785 Mar 5 08:25 server.pem

Generate the kube-proxy certificate (master1)

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@master1 kubernetes]# ll

total 52

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 1009 Mar 5 08:27 kube-proxy.csr

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw------- 1 root root 1679 Mar 5 08:27 kube-proxy-key.pem

-rw-r--r-- 1 root root 1403 Mar 5 08:27 kube-proxy.pem

-rw-r--r-- 1 root root 1419 Mar 5 08:25 server.csr

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

-rw------- 1 root root 1679 Mar 5 08:25 server-key.pem

-rw-r--r-- 1 root root 1785 Mar 5 08:25 server.pem

Step 11: Deploy etcd

Download the etcd binaries (all masters)

mkdir -p /soft && cd /soft

wget https://github.com/etcd-io/etcd/releases/download/v3.3.10/etcd-v3.3.10-linux-amd64.tar.gz

tar -xvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64/

cp etcd etcdctl /usr/local/bin/

Write the etcd configuration file (all masters)

Change ETCD_NAME on each node.

Change the listen addresses on each node.

On master1:

mkdir -p /etc/etcd/{cfg,ssl}

cat >/etc/etcd/cfg/etcd.conf<<EOF

#[Member]

ETCD_NAME="master1.host.com"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.70:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.70:2379,http://10.0.0.70:2390"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.70:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.70:2379"

ETCD_INITIAL_CLUSTER="master1.host.com=https://10.0.0.70:2380,master2.host.com=https://10.0.0.71:2380,master3.host.com=https://10.0.0.72:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

On master2:

mkdir -p /etc/etcd/{cfg,ssl}

cat >/etc/etcd/cfg/etcd.conf<<EOF

#[Member]

ETCD_NAME="master2.host.com"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.71:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.71:2379,http://10.0.0.71:2390"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.71:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.71:2379"

ETCD_INITIAL_CLUSTER="master1.host.com=https://10.0.0.70:2380,master2.host.com=https://10.0.0.71:2380,master3.host.com=https://10.0.0.72:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

On master3:

mkdir -p /etc/etcd/{cfg,ssl}

cat >/etc/etcd/cfg/etcd.conf<<EOF

#[Member]

ETCD_NAME="master3.host.com"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.72:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.72:2379,http://10.0.0.72:2390"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.72:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.72:2379"

ETCD_INITIAL_CLUSTER="master1.host.com=https://10.0.0.70:2380,master2.host.com=https://10.0.0.71:2380,master3.host.com=https://10.0.0.72:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF
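The three etcd.conf files differ only in the member name and IP address, so a small template function can generate each one. A minimal sketch: `render_etcd_conf` is a hypothetical helper name, and the member list is hard-coded to the three masters used in this walkthrough.

```shell
#!/bin/sh
# render_etcd_conf NAME IP
# Prints the etcd.conf for one member to stdout.
render_etcd_conf() {
    name=$1
    ip=$2
    cat <<EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379,http://$ip:2390"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="master1.host.com=https://10.0.0.70:2380,master2.host.com=https://10.0.0.71:2380,master3.host.com=https://10.0.0.72:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Preview the file for master2. To install it on that host:
#   render_etcd_conf master2.host.com 10.0.0.71 > /etc/etcd/cfg/etcd.conf
render_etcd_conf master2.host.com 10.0.0.71
```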

Create the etcd systemd service (all masters)

cat > /usr/lib/systemd/system/etcd.service<<EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/etc/etcd/cfg/etcd.conf

ExecStart=/usr/local/bin/etcd \

--name=\$ETCD_NAME \

--data-dir=\$ETCD_DATA_DIR \

--listen-peer-urls=\$ETCD_LISTEN_PEER_URLS \

--listen-client-urls=\$ETCD_LISTEN_CLIENT_URLS,http://127.0.0.1:2379 \

--advertise-client-urls=\$ETCD_ADVERTISE_CLIENT_URLS \

--initial-advertise-peer-urls=\$ETCD_INITIAL_ADVERTISE_PEER_URLS \

--initial-cluster=\$ETCD_INITIAL_CLUSTER \

--initial-cluster-token=\$ETCD_INITIAL_CLUSTER_TOKEN \

--initial-cluster-state=new \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

--peer-cert-file=/etc/etcd/ssl/server.pem \

--peer-key-file=/etc/etcd/ssl/server-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

Copy the etcd certificates into place (master1)

\cp /root/etcd/*pem /etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.71:/etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.72:/etc/etcd/ssl/

Before copying to the worker nodes, create the directories on each of them first: mkdir -p /etc/etcd/{cfg,ssl}

scp /etc/etcd/ssl/* 10.0.0.73:/etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.74:/etc/etcd/ssl/

Start etcd (master nodes)

systemctl start etcd

systemctl enable etcd

systemctl status etcd

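On first start, the command on master1 appears to hang; it returns once the other members have been started and the cluster can form.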

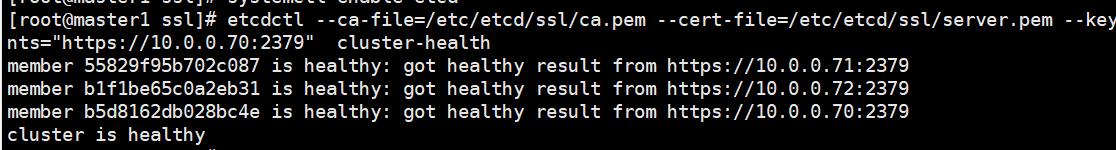

Check that the etcd cluster is healthy

etcdctl --ca-file=/etc/etcd/ssl/ca.pem --cert-file=/etc/etcd/ssl/server.pem --key-file=/etc/etcd/ssl/server-key.pem --endpoints="https://10.0.0.70:2379" cluster-health

member 55829f95b702c087 is healthy: got healthy result from https://10.0.0.71:2379

member b1f1be65c0a2eb31 is healthy: got healthy result from https://10.0.0.72:2379

member b5d8162db028bc4e is healthy: got healthy result from https://10.0.0.70:2379

cluster is healthy
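For scripted checks, the cluster-health output shown above can be reduced to a count of healthy members. This is a sketch only; `etcd_healthy_members` is a hypothetical helper that reads the command output on stdin and relies on the line format shown in this section.

```shell
#!/bin/sh
# etcd_healthy_members: read `etcdctl ... cluster-health` output on stdin
# and print how many members report as healthy.
etcd_healthy_members() {
    # grep -c exits non-zero when the count is 0, so mask the status.
    grep -c '^member .* is healthy' || true
}

# Demonstration with the output captured in this walkthrough.
etcd_healthy_members <<'EOF'
member 55829f95b702c087 is healthy: got healthy result from https://10.0.0.71:2379
member b1f1be65c0a2eb31 is healthy: got healthy result from https://10.0.0.72:2379
member b5d8162db028bc4e is healthy: got healthy result from https://10.0.0.70:2379
cluster is healthy
EOF
```

On a live cluster the same function would be fed by piping the etcdctl command from this step into it, and the result compared against the expected member count of 3.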

Step 12: Install Docker on all worker nodes

See the Docker installation documentation.

Step 13: Create the pod network range that flannel hands out to Docker (any master node)

etcdctl --ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/server.pem --key-file=/etc/etcd/ssl/server-key.pem \

--endpoints="https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379" \

set /coreos.com/network/config \

'{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

Verify

etcdctl \

--endpoints=https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379 \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

get /coreos.com/network/config

Step 14: Deploy flannel

Installation

Install on all nodes.

cd /soft

tar xf flannel-v0.11.0-linux-amd64.tar.gz

mv flanneld mk-docker-opts.sh /usr/local/bin/

Copy the binaries to the other nodes

scp /usr/local/bin/flanneld 10.0.0.71:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.71:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.72:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.72:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.73:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.73:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.74:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.74:/usr/local/bin
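The eight scp commands above follow a single pattern, so they can be written as a loop. The sketch below is an illustration only: `distribute` is a hypothetical helper, and passing `echo` as its argument gives a dry run that prints the commands instead of executing them.

```shell
#!/bin/sh
NODES="10.0.0.71 10.0.0.72 10.0.0.73 10.0.0.74"
FILES="/usr/local/bin/flanneld /usr/local/bin/mk-docker-opts.sh"

# distribute [PREFIX]
# PREFIX is put in front of every command: "echo" for a dry run,
# empty (no argument) to really run scp.
distribute() {
    for node in $NODES; do
        for file in $FILES; do
            $1 scp "$file" "$node:/usr/local/bin/"
        done
    done
}

# Dry run: print the commands that would be executed.
distribute echo
```

Calling `distribute` with no argument performs the copies, which relies on the passwordless SSH login set up earlier.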

Configure flannel

Run on all Kubernetes nodes.

mkdir -p /etc/flannel

cat > /etc/flannel/flannel.cfg<<EOF

FLANNEL_OPTIONS="-etcd-endpoints=https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379 -etcd-cafile=/etc/etcd/ssl/ca.pem -etcd-certfile=/etc/etcd/ssl/server.pem -etcd-keyfile=/etc/etcd/ssl/server-key.pem"

EOF

Write the flannel service unit file

Run on all Kubernetes nodes.

cat > /usr/lib/systemd/system/flanneld.service <<EOF

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/etc/flannel/flannel.cfg

ExecStart=/usr/local/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/usr/local/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

Start flannel

Run on all nodes.

systemctl daemon-reload

systemctl start flanneld.service

systemctl enable flanneld.service

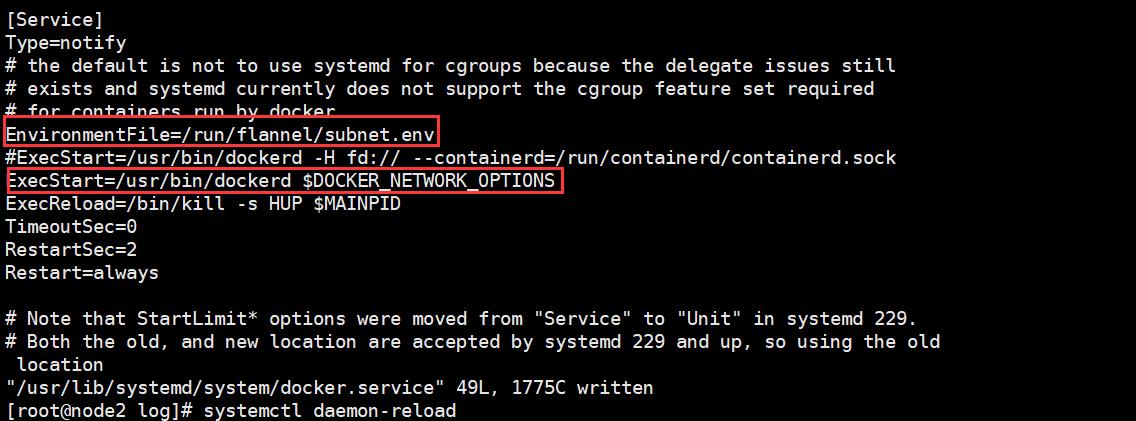

Make Docker start with the flannel-provided settings

The goal of this step is to put Docker and flannel on the same network range.

systemctl daemon-reload

systemctl restart docker
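The text of this step does not show the Docker unit change itself. One common way to wire it up, given that the flanneld unit above writes `DOCKER_NETWORK_OPTIONS` into /run/flannel/subnet.env, is a systemd drop-in for docker.service. The following is a sketch under assumptions: the drop-in path, the file name flannel.conf, the `/usr/bin/dockerd` location and the helper name `render_docker_dropin` are not from the original article, and replacing ExecStart drops any extra flags your packaged unit passes to dockerd.

```shell
#!/bin/sh
# render_docker_dropin: print a systemd drop-in that makes dockerd read
# the options generated by mk-docker-opts.sh.
render_docker_dropin() {
    # Quoted delimiter: $DOCKER_NETWORK_OPTIONS must reach systemd literally.
    cat <<'EOF'
[Service]
EnvironmentFile=/run/flannel/subnet.env
ExecStart=
ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS
EOF
}

# Preview only. To apply (assumed paths):
#   mkdir -p /etc/systemd/system/docker.service.d
#   render_docker_dropin > /etc/systemd/system/docker.service.d/flannel.conf
render_docker_dropin
```

The empty `ExecStart=` line clears the packaged command before the new one is set, which systemd requires when overriding ExecStart in a drop-in.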

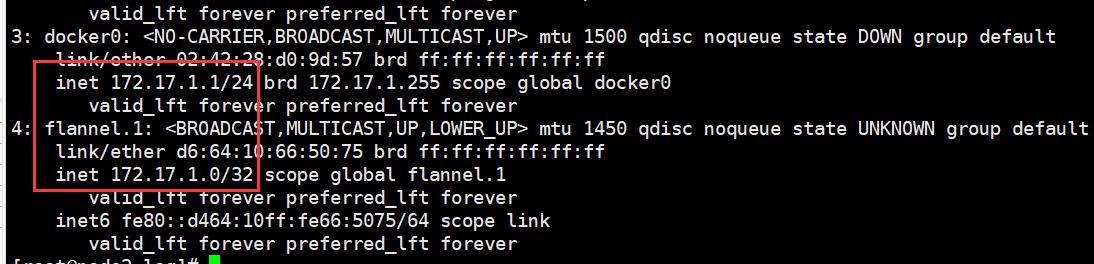

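Check that Docker is on the same network range as flannel

From any master, check whether a docker0 address in the flannel range (for example 172.17.1.1) answers ping.

On each worker node, verify that the docker0 address of the other nodes is reachable.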

Step 15: Install the master components

The master needs the following components:

kube-apiserver

kube-scheduler

kube-controller-manager

Install the API server

Download the Kubernetes binary package (1.15.1)

Unpack it on master1, then copy the binaries to the other masters.

cd /soft

tar xvf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin/

cp kube-scheduler kube-apiserver kube-controller-manager kubectl /usr/local/bin/

Copy the binaries to the other master nodes

scp /usr/local/bin/kube* 10.0.0.71:/usr/local/bin

scp /usr/local/bin/kube* 10.0.0.72:/usr/local/bin

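Configure the Kubernetes certificates

The Kubernetes components authenticate to each other with certificates, which must be copied to every master node (run from master1).

master1

mkdir -p /etc/kubernetes/{cfg,ssl}

cp /root/kubernetes/*.pem /etc/kubernetes/ssl/

master2

mkdir -p /etc/kubernetes/{cfg,ssl}

master3

mkdir -p /etc/kubernetes/{cfg,ssl}

Copy to the other master nodes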

scp /etc/kubernetes/ssl/* 10.0.0.71:/etc/kubernetes/ssl

scp /etc/kubernetes/ssl/* 10.0.0.72:/etc/kubernetes/ssl

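Create the TLS bootstrapping token (this part explains what the token is for; the actual step is the token file below)

TLS bootstrapping lets the kubelet first connect to the apiserver as a predefined low-privilege user and then request a certificate from it; the kubelet's certificate is issued dynamically.

The token can be any string carrying 128 bits of randomness; generate it with a secure random number generator:

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

Write the token file (all masters)

Fields: f89a76f197526a0d4bc2bf9c86e871c3 is the random token (generate your own); kubelet-bootstrap is the user name; 10001 is the UID; system:kubelet-bootstrap is the group.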

cat > /etc/kubernetes/cfg/token.csv << EOF

f89a76f197526a0d4bc2bf9c86e871c3,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF
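Token generation and the token.csv line format can be combined so the token is never copied by hand. A minimal sketch with two hypothetical helpers, `gen_bootstrap_token` and `token_csv_line`; it uses `od -t x1` (single bytes) so the output is one unbroken 32-character hex string.

```shell
#!/bin/sh
# gen_bootstrap_token: print 16 random bytes as 32 lowercase hex characters.
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# token_csv_line TOKEN: print one token.csv record in the format
# token,user,uid,"group" used in this walkthrough.
token_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

token=$(gen_bootstrap_token)
token_csv_line "$token"
# To install on a master (same token on every master and in the kubeconfig scripts):
#   token_csv_line "$token" > /etc/kubernetes/cfg/token.csv
```

The same token value has to be used on every master and as BOOTSTRAP_TOKEN when the bootstrap kubeconfig is generated.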

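Create the apiserver configuration file (all master nodes)

The file is essentially the same on every master; with several masters, only the IP addresses need changing.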

cat >/etc/kubernetes/cfg/kube-apiserver.cfg <<EOF

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--insecure-bind-address=0.0.0.0 \

--insecure-port=8080 \

--etcd-servers=https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--advertise-address=0.0.0.0 \

--allow-privileged=true \

--service-cluster-ip-range=192.168.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/etc/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/etc/kubernetes/ssl/server.pem \

--tls-private-key-file=/etc/kubernetes/ssl/server-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/server.pem \

--etcd-keyfile=/etc/etcd/ssl/server-key.pem"

EOF

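# Parameter notes

--logtostderr: write logs to stderr

--v=4: log verbosity level

--etcd-servers: etcd cluster endpoints, for example

--etcd-servers=https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379

--bind-address: listen address

--service-cluster-ip-range=192.168.0.0/24: this range must contain the Service addresses listed in the API server certificate's hosts field (192.168.0.1 and 192.168.0.2 in this walkthrough)

--secure-port: HTTPS port

--advertise-address: address advertised to the rest of the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth: enable bootstrap token authentication, used for TLS bootstrapping

--token-auth-file: token file

--service-node-port-range: port range allocated to NodePort Services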

Write the kube-apiserver service unit file (all master nodes)

cat >/usr/lib/systemd/system/kube-apiserver.service<<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-apiserver.cfg

ExecStart=/usr/local/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

Start the kube-apiserver service (all masters)

systemctl daemon-reload

systemctl start kube-apiserver.service

systemctl enable kube-apiserver.service

Verify

Check that the secure port is listening

[root@master1 ssl]# netstat -anltup | grep 6443

tcp6 0 0 :::6443 :::* LISTEN 49473/kube-apiserve

tcp6 0 0 ::1:43658 ::1:6443 ESTABLISHED 49473/kube-apiserve

tcp6 0 0 ::1:6443 ::1:43658 ESTABLISHED 49473/kube-apiserve

Check that the secure port is reachable (from a worker node)

[root@node1 ~]# telnet 10.0.0.70 6443

Trying 10.0.0.70...

Connected to 10.0.0.70.

Escape character is '^]'.

Step 16: Deploy the kube-scheduler service

Create the kube-scheduler configuration file (all master nodes)

cat >/etc/kubernetes/cfg/kube-scheduler.cfg<<EOF

KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --bind-address=0.0.0.0 --master=127.0.0.1:8080 --leader-elect"

EOF

Create the kube-scheduler service unit file (all masters)

cat >/usr/lib/systemd/system/kube-scheduler.service<<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-scheduler.cfg

ExecStart=/usr/local/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

Start the kube-scheduler service (all master nodes)

systemctl daemon-reload

systemctl start kube-scheduler.service

systemctl enable kube-scheduler.service

Check the status of the master components (any master)

[root@master3 ssl]# kubectl get cs

NAME STATUS MESSAGE ERROR

# controller-manager is Unhealthy here because it has not been installed yet

controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

Step 17: Deploy kube-controller-manager

Create the kube-controller-manager configuration file (all master nodes)

cat >/etc/kubernetes/cfg/kube-controller-manager.cfg<<EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=0.0.0.0 \

--service-cluster-ip-range=192.168.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem"

EOF

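Parameter notes

--master=127.0.0.1:8080: address of the API server

--leader-elect: leader election; one instance becomes the leader and the others stay on standby

--service-cluster-ip-range=192.168.0.0/24: use the same range as the apiserver; it must contain the Service addresses listed in the API server certificate (192.168.0.1 and 192.168.0.2 in this walkthrough)

--service-cluster-ip-range: IP address range for Kubernetes Services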

Create the kube-controller-manager service unit file (all master nodes)

cat >/usr/lib/systemd/system/kube-controller-manager.service<<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-controller-manager.cfg

ExecStart=/usr/local/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

Start the kube-controller-manager service (all master nodes)

systemctl daemon-reload

systemctl start kube-controller-manager.service

systemctl enable kube-controller-manager.service

systemctl status kube-controller-manager.service

Check the status of the master components

Deploy the worker node components only after every master component reports healthy.

[root@master1 ssl]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}
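Since the worker nodes should only be deployed once every component is healthy, the `kubectl get cs` output can be filtered for anything that is not. A small sketch; `unhealthy_components` is a hypothetical helper that reads the command output on stdin and assumes the column layout shown above.

```shell
#!/bin/sh
# unhealthy_components: read `kubectl get cs` output on stdin and print
# the NAME of every component whose STATUS column is not "Healthy".
unhealthy_components() {
    # NR > 1 skips the header row; NF >= 2 skips blank lines.
    awk 'NR > 1 && NF >= 2 && $2 != "Healthy" { print $1 }'
}

# Demonstration with output from before controller-manager was installed.
unhealthy_components <<'EOF'
NAME STATUS MESSAGE ERROR
controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused
scheduler Healthy ok
etcd-0 Healthy {"health":"true"}
EOF
```

On a master, `kubectl get cs | unhealthy_components` printing nothing means it is safe to continue.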

Step 18: Deploy the worker node components

Deploy the kubelet component

Copy the kubelet and kube-proxy binaries from the master to the worker nodes

Copy to node1

scp /soft/kubernetes/server/bin/kubelet 10.0.0.73:/usr/local/bin/

scp /soft/kubernetes/server/bin/kube-proxy 10.0.0.73:/usr/local/bin/

Copy to node2

scp /soft/kubernetes/server/bin/kubelet 10.0.0.74:/usr/local/bin/

scp /soft/kubernetes/server/bin/kube-proxy 10.0.0.74:/usr/local/bin/

Create the kubelet bootstrap.kubeconfig file

Run on master1.

mkdir /root/config ; cd /root/config

cat >/root/config/environment.sh<<EOF

# Create the kubelet bootstrapping kubeconfig

BOOTSTRAP_TOKEN=f89a76f197526a0d4bc2bf9c86e871c3

# Use the VIP address here

KUBE_APISERVER="https://10.0.0.80:6443"

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=\$KUBE_APISERVER \

--kubeconfig=bootstrap.kubeconfig

# Set the client credentials

kubectl config set-credentials kubelet-bootstrap \

--token=\$BOOTSTRAP_TOKEN \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Select the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

# Running this script with bash environment.sh produces bootstrap.kubeconfig.

EOF

Run the script

[root@master1 config]# pwd

/root/config

sh /root/config/environment.sh

Create the kube-proxy kubeconfig file (master1)

cat >/root/config/env_proxy.sh<<EOF

# Create the kube-proxy kubeconfig file

BOOTSTRAP_TOKEN=f89a76f197526a0d4bc2bf9c86e871c3

# Use the VIP address here

KUBE_APISERVER="https://10.0.0.80:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=\$KUBE_APISERVER \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=/etc/kubernetes/ssl/kube-proxy.pem \

--client-key=/etc/kubernetes/ssl/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

EOF

Run the script

[root@master1 config]# pwd

/root/config

sh /root/config/env_proxy.sh

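Copy the kubeconfig files and certificates to all worker nodes

Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to every worker node.

First create the directories on each worker node:

mkdir -p /etc/kubernetes/{cfg,ssl}

Copy the certificate files (from master1)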

scp /etc/kubernetes/ssl/* 10.0.0.73:/etc/kubernetes/ssl/

scp /etc/kubernetes/ssl/* 10.0.0.74:/etc/kubernetes/ssl/

Copy the kubeconfig files (from master1)

Copy to node1

scp /root/config/bootstrap.kubeconfig 10.0.0.73:/etc/kubernetes/cfg

scp /root/config/kube-proxy.kubeconfig 10.0.0.73:/etc/kubernetes/cfg

Copy to node2

scp /root/config/bootstrap.kubeconfig 10.0.0.74:/etc/kubernetes/cfg

scp /root/config/kube-proxy.kubeconfig 10.0.0.74:/etc/kubernetes/cfg

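Create the kubelet parameter file

Change the IP address for each worker node (run on the worker nodes; the first block is for node1, the second for node2).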

cat >/etc/kubernetes/cfg/kubelet.config<<EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 10.0.0.73

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["192.168.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

  anonymous:

    enabled: true

EOF

cat >/etc/kubernetes/cfg/kubelet.config<<EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 10.0.0.74

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["192.168.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

  anonymous:

    enabled: true

EOF

Create the kubelet options file

Change the IP address for each worker node (first block for node1, second for node2).

cat >/etc/kubernetes/cfg/kubelet<<EOF

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=10.0.0.73 \

--kubeconfig=/etc/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig \

--config=/etc/kubernetes/cfg/kubelet.config \

--cert-dir=/etc/kubernetes/ssl \

--pod-infra-container-image=docker.io/kubernetes/pause:latest"

EOF

cat >/etc/kubernetes/cfg/kubelet<<EOF

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=10.0.0.74 \

--kubeconfig=/etc/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig \

--config=/etc/kubernetes/cfg/kubelet.config \

--cert-dir=/etc/kubernetes/ssl \

--pod-infra-container-image=docker.io/kubernetes/pause:latest"

EOF

Create the kubelet service unit file (all worker nodes)

cat >/usr/lib/systemd/system/kubelet.service<<EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kubelet

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

Bind the kubelet-bootstrap user to the system cluster role

Run on master1.

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

Start the kubelet service (all worker nodes)

systemctl daemon-reload

systemctl start kubelet.service

systemctl enable kubelet.service

systemctl status kubelet.service

List and approve the CSR requests on the server side

Run on master1.

[root@master1 ~]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-I81WpTLkI1GcJL1RN_7AsH2gDtqkRuIGb9Cvkzktg00 23s kubelet-bootstrap Pending

node-csr-vaqrhpHnGVhoa6lFp3ADkZxKtcZLYFEbuXbJ1r9AtrM 13s kubelet-bootstrap Pending

Approve the requests

Run on a master node.

kubectl certificate approve node-csr-I81WpTLkI1GcJL1RN_7AsH2gDtqkRuIGb9Cvkzktg00

kubectl certificate approve node-csr-vaqrhpHnGVhoa6lFp3ADkZxKtcZLYFEbuXbJ1r9AtrM
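Copying each CSR name by hand becomes tedious with more nodes. The names of pending requests can be extracted from the `kubectl get csr` output instead. A minimal sketch; `pending_csrs` is a hypothetical helper that reads that output on stdin and assumes CONDITION is the last column, as in the listing above.

```shell
#!/bin/sh
# pending_csrs: read `kubectl get csr` output on stdin and print the
# names of all requests whose last column is "Pending".
pending_csrs() {
    awk '$NF == "Pending" { print $1 }'
}

# Demonstration with the listing from this walkthrough.
pending_csrs <<'EOF'
NAME AGE REQUESTOR CONDITION
node-csr-I81WpTLkI1GcJL1RN_7AsH2gDtqkRuIGb9Cvkzktg00 23s kubelet-bootstrap Pending
node-csr-vaqrhpHnGVhoa6lFp3ADkZxKtcZLYFEbuXbJ1r9AtrM 13s kubelet-bootstrap Pending
EOF

# On a master, approve everything that is pending (review the list first):
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Approving blindly trusts every node that presented the bootstrap token, so it is worth checking the printed names before piping them into the approve command.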

Check the node status

[root@master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

10.0.0.73 Ready <none> 5s v1.15.1

10.0.0.74 Ready <none> 32m v1.15.1

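Deploy the kube-proxy component

kube-proxy runs on every worker node, watches the apiserver for Service and Endpoint changes, and creates forwarding rules to load-balance traffic to Services.

Create the kube-proxy configuration file

Change the hostname-override address on each node (first block for node1, second for node2).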

cat >/etc/kubernetes/cfg/kube-proxy<<EOF

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--metrics-bind-address=0.0.0.0 \

--hostname-override=10.0.0.73 \

--cluster-cidr=192.168.0.0/24 \

--kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig"

EOF

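Parameter notes:

--hostname-override=10.0.0.73: the node's IP address

--cluster-cidr=192.168.0.0/24: the author reuses the Service range from the API server certificate here. Note that kube-proxy documents --cluster-cidr as the pod CIDR, which in this walkthrough is the flannel range 172.17.0.0/16.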

cat >/etc/kubernetes/cfg/kube-proxy<<EOF

KUBE_PROXY_OPTS="--logtostderr=true \\

--v=4 \\

--metrics-bind-address=0.0.0.0 \\

--hostname-override=10.0.0.74 \\

--cluster-cidr=192.168.0.0/24 \\

--kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig"

EOF
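The two configuration files above differ only in the --hostname-override value. As a sketch (the function name `render_kube_proxy_opts` is an assumption, not part of the original steps), the file can be generated from the node IP so that the same snippet works on every node:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the kube-proxy options file for one node.
# $1 is the node IP used for --hostname-override; all other values are
# copied from the configuration shown above.
render_kube_proxy_opts() {
  local node_ip="$1"
  # In an unquoted heredoc, "\\" becomes a single "\" in the output,
  # which keeps the line continuations inside the generated file.
  cat <<EOF
KUBE_PROXY_OPTS="--logtostderr=true \\
--v=4 \\
--metrics-bind-address=0.0.0.0 \\
--hostname-override=${node_ip} \\
--cluster-cidr=192.168.0.0/24 \\
--kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig"
EOF
}

# Demo: print the file content for node2.
render_kube_proxy_opts 10.0.0.74
```

On a node the output would be redirected, for example `render_kube_proxy_opts 10.0.0.73 > /etc/kubernetes/cfg/kube-proxy`.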

Create the kube-proxy systemd unit file (all node machines)

cat >/usr/lib/systemd/system/kube-proxy.service<<EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

Start the kube-proxy service

systemctl daemon-reload

systemctl start kube-proxy.service

systemctl enable kube-proxy.service

systemctl status kube-proxy.service

Run a demo project as a check (on any master)

[root@master1 ~]# kubectl run nginx --image=nginx --replicas=2

kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.

deployment.apps/nginx created

[root@master1 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

default nginx-7bb7cd8db5-8b5gs 0/1 ContainerCreating 0 7s

default nginx-7bb7cd8db5-dzc5q 0/1 ContainerCreating 0 7s

# Get the pod IPs and the nodes they run on

[root@master1 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7bb7cd8db5-8b5gs 1/1 Running 0 56s 172.17.1.2 10.0.0.74 <none> <none>

nginx-7bb7cd8db5-dzc5q 1/1 Running 0 56s 172.17.46.2 10.0.0.73 <none> <none>

# Expose the deployment through a NodePort Service

[root@master1 ~]# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# View the Service

[root@master1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 125m

nginx NodePort 192.168.0.147 <none> 88:39073/TCP 11s

# Access the pods through the node IPs

[root@master1 ~]# curl http://10.0.0.73:39073

[root@master1 ~]# curl http://10.0.0.74:39073

Delete the demo project

kubectl delete deployment nginx

kubectl delete pods nginx

kubectl delete svc -l run=nginx

Milestone 19: Deploy DNS

Copy the image archive to the node machines first, then import it

docker load -i coredns1.0.6.tar

Apply the yml file on master1

kubectl apply -f coredns-1.0.6.yml

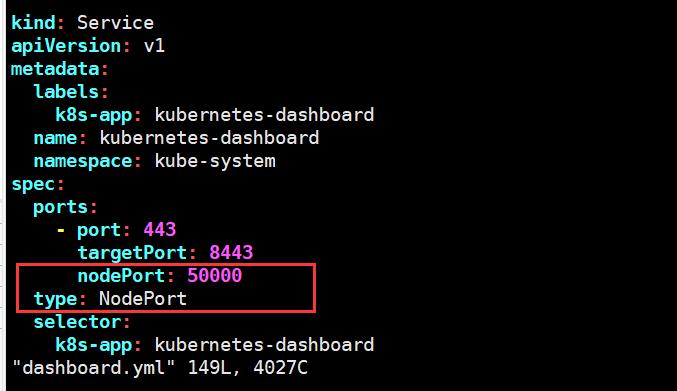

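Milestone 20: Deploy the dashboard

Deployment

After downloading the yml file, modify the following places

When accessing it, use https://<node IP>:50000

Create the user and grant permissions

Run on a master (any master node)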

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

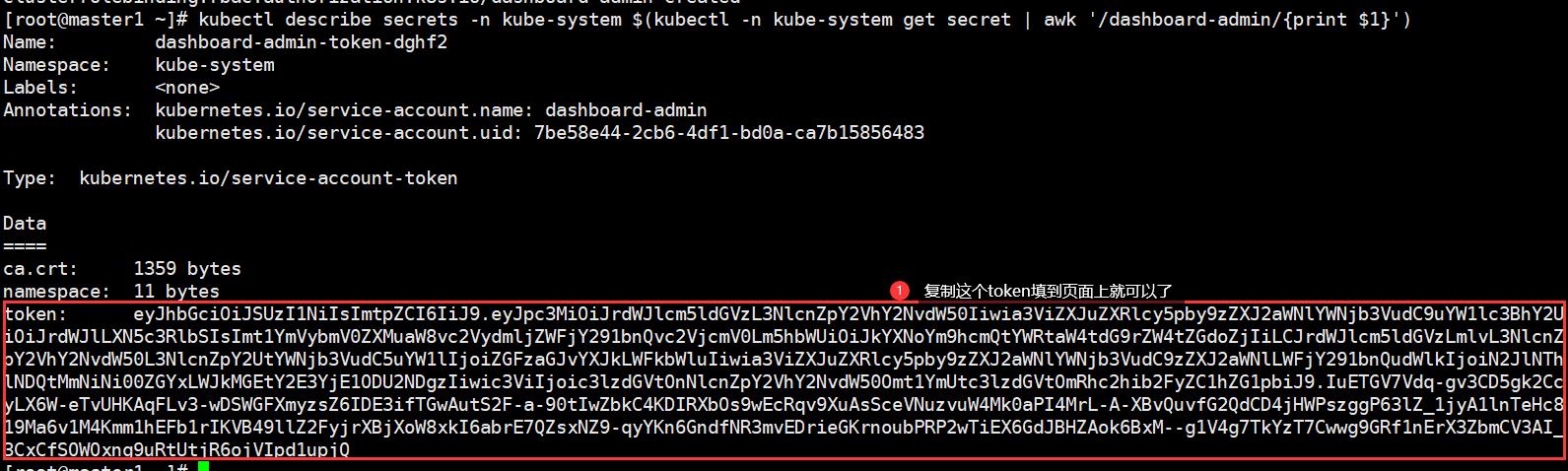

Get the token

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Milestone 21: Deploy Traefik 2.0

Create traefik-crd.yaml

CRD definitions themselves are cluster-scoped (not tied to a namespace)

mkdir /root/ingress && cd /root/ingress

[root@master1 ingress]# cat traefik-crd.yaml

apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ingressroutes.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: IngressRoute
    plural: ingressroutes
    singular: ingressroute
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ingressroutetcps.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: IngressRouteTCP
    plural: ingressroutetcps
    singular: ingressroutetcp
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: middlewares.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: Middleware
    plural: middlewares
    singular: middleware
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: tlsoptions.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: TLSOption
    plural: tlsoptions
    singular: tlsoption

Create

kubectl apply -f traefik-crd.yaml

Check

[root@master1 ingress]# kubectl get crd

NAME CREATED AT

ingressroutes.traefik.containo.us 2023-03-11T01:29:34Z

ingressroutetcps.traefik.containo.us 2023-03-11T01:29:34Z

middlewares.traefik.containo.us 2023-03-11T01:29:34Z

tlsoptions.traefik.containo.us 2023-03-11T01:29:34Z

Create the Traefik RBAC file

The RBAC resources are namespace-aware (the ServiceAccount lives in kube-system)

[root@master1 ingress]# cat traefik-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  namespace: kube-system
  name: traefik-ingress-controller
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
rules:
  - apiGroups: [""]
    resources: ["services","endpoints","secrets"]
    verbs: ["get","list","watch"]
  - apiGroups: ["extensions"]
    resources: ["ingresses"]
    verbs: ["get","list","watch"]
  - apiGroups: ["extensions"]
    resources: ["ingresses/status"]
    verbs: ["update"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["middlewares"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["ingressroutes"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["ingressroutetcps"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["tlsoptions"]
    verbs: ["get","list","watch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: traefik-ingress-controller
subjects:
  - kind: ServiceAccount
    name: traefik-ingress-controller
    namespace: kube-system

kubectl apply -f traefik-rbac.yaml

Create the Traefik ConfigMap

[root@master1 ingress]# cat traefik-config.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  name: traefik-config
  namespace: kube-system
data:
  traefik.yaml: |-
    serversTransport:
      insecureSkipVerify: true
    api:
      insecure: true
      dashboard: true
      debug: true
    metrics:
      prometheus: ""
    entryPoints:
      web:
        address: ":80"
      websecure:
        address: ":443"
    providers:
      kubernetesCRD: ""
    log:
      filePath: ""
      level: error
      format: json
    accessLog:
      filePath: ""
      format: json
      bufferingSize: 0
      filters:
        retryAttempts: true
        minDuration: 20
      fields:
        defaultMode: keep
        names:
          ClientUsername: drop
        headers:
          defaultMode: keep
          names:
            User-Agent: redact
            Authorization: drop
            Content-Type: keep

Create

kubectl apply -f traefik-config.yaml

Check

[root@master1 ingress]# kubectl get configmap -n kube-system

NAME DATA AGE

coredns 1 14h

extension-apiserver-authentication 1 15h

kubernetes-dashboard-settings 1 13h

traefik-config 1 4s

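Label the node

Because Traefik is deployed as a Kubernetes DaemonSet here, the target nodes must be labeled in advance so that the pods are scheduled only onto the labeled nodes. The node name 192.168.3.203 used in the command and output below comes from a different environment than the 10.0.0.x hosts used earlier; substitute a name reported by kubectl get nodes.

kubectl label nodes 192.168.3.203 IngressProxy=true

Check that the label was applied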

[root@master1 ingress]# kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

192.168.3.203 Ready <none> 13h v1.15.1 IngressProxy=true,beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=192.168.3.203,kubernetes.io/os=linux

192.168.3.204 Ready <none> 13h v1.15.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=192.168.3.204,kubernetes.io/os=linux

Make sure ports 80 and 443 are not already in use on any node

netstat -antupl | grep -E ':(80|443) '
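A plain pattern such as "80|443" also matches ports like 8080 or 4430 and any PID containing those digits. The sketch below is an illustration rather than the author's method: `ports_80_443_in_use` is a hypothetical helper that inspects only the local-address column of netstat-style output and requires the port to be exactly 80 or 443. The sample process names are made up.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `netstat -antupl` style lines on stdin and
# print the local address (column 4) when its port is exactly 80 or 443.
ports_80_443_in_use() {
  awk '$4 ~ /:(80|443)$/ { print $4 }'
}

# Demo with made-up sample lines: only the second one should be reported.
ports_80_443_in_use <<'EOF'
tcp 0 0 0.0.0.0:8080 0.0.0.0:* LISTEN 1201/example-a
tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 900/example-b
tcp6 0 0 :::4430 :::* LISTEN 901/example-c
EOF
```

On a node it could be used as `netstat -antupl | ports_80_443_in_use`; empty output suggests both ports are free.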

Create the Traefik deployment file

Because of the node label set above, the pods run only on the labeled nodes, even though a DaemonSet is used

[root@master1 ingress]# cat traefik-deploy.yaml

apiVersion: v1
kind: Service
metadata:
  name: traefik
  namespace: kube-system
spec:
  ports:
    - name: web
      port: 80
    - name: websecure
      port: 443
    - name: admin
      port: 8080
  selector:
    app: traefik
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
  labels:
    app: traefik
spec:
  selector:
    matchLabels:
      app: traefik
  template:
    metadata:
      name: traefik
      labels:
        app: traefik
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 1
      containers:
        - image: traefik:2.0.5
          name: traefik-ingress-lb
          ports:
            - name: web
              containerPort: 80
              hostPort: 80
            - name: websecure
              containerPort: 443
              hostPort: 443
            - name: admin
              containerPort: 8080
          resources:
            limits:
              cpu: 2000m
              memory: 1024Mi
            requests:
              cpu: 1000m
              memory: 1024Mi
          securityContext:
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
          args:
            - --configfile=/config/traefik.yaml
          volumeMounts:
            - mountPath: "/config"
              name: "config"
      volumes:
        - name: config
          configMap:
            name: traefik-config
      tolerations:
        - operator: "Exists"
      nodeSelector:
        IngressProxy: "true"

Create

kubectl apply -f traefik-deploy.yaml

Check the running state

[root@master1 ingress]# kubectl get DaemonSet -A

NAMESPACE NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

kube-system traefik-ingress-controller 1 1 0 1 0 IngressProxy=true 18s

Traefik route configuration

vim traefik-dashboard-route.yaml

apiVersion: traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: traefik-dashboard-route
  namespace: kube-system
spec:
  entryPoints:
    - web
  routes:
    - match: Host(`ingress.abcd.com`)
      kind: Rule
      services:
        - name: traefik
          port: 8080

Create

kubectl apply -f traefik-dashboard-route.yaml

Check

[root@master1 ingress]# kubectl get ingressroute.traefik.containo.us -A

NAMESPACE NAME AGE

kube-system traefik-dashboard-route 78s

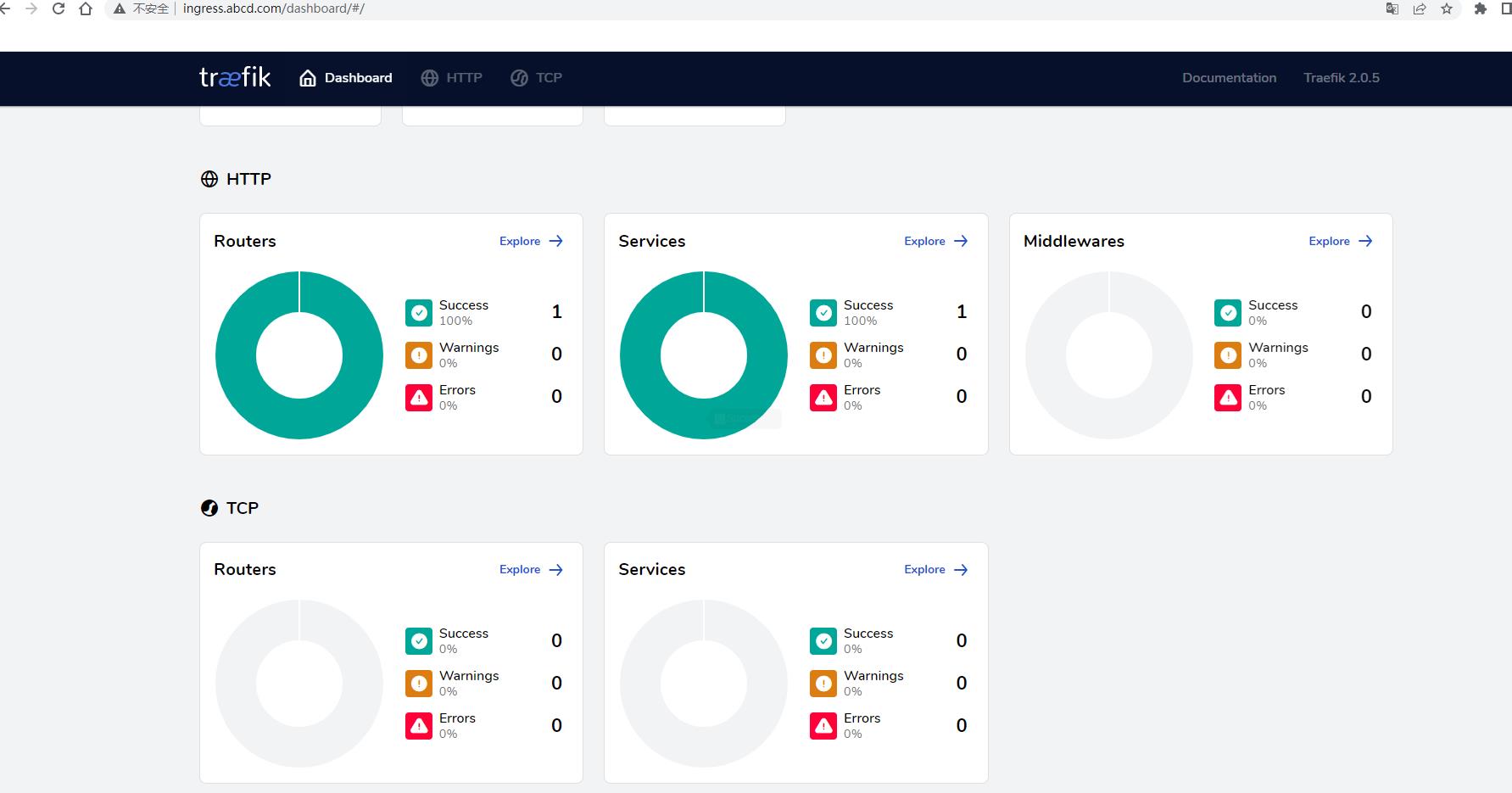

Access the Traefik dashboard from a client

Add an entry to the client machine's hosts file, or set up DNS resolution

/etc/hosts

192.168.3.203 ingress.abcd.com

Open the web page

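CentOS 8 binary high-availability installation of k8s 1.16.x

- Overview

This article demonstrates a binary, highly available installation of k8s 1.16.x on CentOS 8. Compared with other versions, the binary installation procedure is not much different. CentOS 8 is more convenient to operate than CentOS 7: for example, some services can be disabled permanently without editing configuration files. CentOS 8 ships with kernel 4.18 by default, so no kernel upgrade is needed while installing k8s. The system can be upgraded as required, and if you downloaded the latest CentOS 8 release, the system upgrade can be skipped as well.

- Basic environment configuration

Host information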

192.168.1.19 k8s-master01

192.168.1.18 k8s-master02

192.168.1.20 k8s-master03

192.168.1.88 k8s-master-lb

192.168.1.21 k8s-node01

192.168.1.22 k8s-node02

System environment

[root@k8s-master01 ~]# cat /etc/redhat-release

CentOS Linux release 8.0.1905 (Core)

[root@k8s-master01 ~]# uname -a

Linux k8s-master01 4.18.0-80.el8.x86_64 #1 SMP Tue Jun 4 09:19:46 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

Configure the hosts file on all nodes

[root@k8s-master01 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.1.19 k8s-master01

192.168.1.18 k8s-master02

192.168.1.20 k8s-master03

192.168.1.88 k8s-master-lb

192.168.1.21 k8s-node01

192.168.1.22 k8s-node02

On all nodes, disable firewalld, dnsmasq, NetworkManager, and SELinux

systemctl disable --now firewalld

systemctl disable --now dnsmasq

setenforce 0

Disable swap on all nodes

[root@k8s-master01 ~]# swapoff -a && sysctl -w vm.swappiness=0

vm.swappiness = 0

Synchronize the time on all nodes

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

ntpdate time2.aliyun.com

Generate an SSH key on Master01

[root@k8s-master01 ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:6uz2kI+jcMJIUQWKqRcDRbvpVxhCW3Tmqn0NKS+lT3U root@k8s-master01

The key's randomart image is:

+---[RSA 2048]----+

|.o++=.o |

|.+o+ + |

|oo* . . |

|. .* + . |

|..+ + = S E |

|.= o * * . |

|. * * B . |

| = Bo+ |

| .+*oo |

+----[SHA256]-----+

Configure passwordless login from Master01 to the other nodes

[root@k8s-master01 ~]# for i in k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

Install basic tools on all nodes

yum install wget jq psmisc vim net-tools yum-utils device-mapper-persistent-data lvm2 git -y

Download the installation files on Master01

[root@k8s-master01 ~]# git clone https://github.com/dotbalo/k8s-ha-install.git

Cloning into 'k8s-ha-install'...

remote: Enumerating objects: 12, done.

remote: Counting objects: 100% (12/12), done.

remote: Compressing objects: 100% (11/11), done.

remote: Total 461 (delta 2), reused 5 (delta 1), pack-reused 449

Receiving objects: 100% (461/461), 19.52 MiB | 4.04 MiB/s, done.

Resolving deltas: 100% (163/163), done.

Switch to the 1.16.x branch

cd k8s-ha-install && git checkout manual-installation-v1.16.x

- Basic component installation

Configure the Docker yum repository

[root@k8s-master01 k8s-ha-install]# curl https://download.docker.com/linux/centos/docker-ce.repo -o /etc/yum.repos.d/docker-ce.repo

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 2424 100 2424 0 0 3639 0 --:--:-- --:--:-- --:--:-- 3645

[root@k8s-master01 k8s-ha-install]# yum makecache

CentOS-8 - AppStream 559 kB/s | 6.3 MB 00:11

CentOS-8 - Base 454 kB/s | 7.9 MB 00:17

CentOS-8 - Extras 592 B/s | 2.1 kB 00:03

Docker CE Stable - x86_64 5.8 kB/s | 20 kB 00:03

Last metadata expiration check: 0:00:01 ago on Sat 02 Nov 2019 02:46:29 PM CST.

Metadata cache created.

Install the newer containerd on all nodes

[root@k8s-master01 k8s-ha-install]# wget https://download.docker.com/linux/centos/7/x86_64/edge/Packages/containerd.io-1.2.6-3.3.el7.x86_64.rpm

--2019-11-02 15:00:20-- https://download.docker.com/linux/centos/7/x86_64/edge/Packages/containerd.io-1.2.6-3.3.el7.x86_64.rpm

Resolving download.docker.com (download.docker.com)... 13.225.103.32, 13.225.103.65, 13.225.103.10, ...

Connecting to download.docker.com (download.docker.com)|13.225.103.32|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 27119348 (26M) [binary/octet-stream]

Saving to: ‘containerd.io-1.2.6-3.3.el7.x86_64.rpm’

containerd.io-1.2.6-3.3.el7.x86_64.rpm 100%[===================================================================================================================>] 25.86M 1.55MB/s in 30s

2019-11-02 15:00:51 (887 KB/s) - ‘containerd.io-1.2.6-3.3.el7.x86_64.rpm’ saved [27119348/27119348]

[root@k8s-master01 k8s-ha-install]# yum -y install containerd.io-1.2.6-3.3.el7.x86_64.rpm

Last metadata expiration check: 0:14:35 ago on Sat 02 Nov 2019 02:46:29 PM CST.

Install the latest Docker on all nodes

[root@k8s-master01 k8s-ha-install]# yum install docker-ce -y

Start Docker on all nodes and enable it at boot

[root@k8s-master01 k8s-ha-install]# systemctl enable --now docker

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /usr/lib/systemd/system/docker.service.

[root@k8s-master01 k8s-ha-install]# docker version

Client: Docker Engine - Community

Version: 19.03.4

API version: 1.40

Go version: go1.12.10

Git commit: 9013bf583a

Built: Fri Oct 18 15:52:22 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 19.03.4

API version: 1.40 (minimum version 1.12)

Go version: go1.12.10

Git commit: 9013bf583a

Built: Fri Oct 18 15:50:54 2019

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.2.6

GitCommit: 894b81a4b802e4eb2a91d1ce216b8817763c29fb

runc:

Version: 1.0.0-rc8

GitCommit: 425e105d5a03fabd737a126ad93d62a9eeede87f

docker-init:

Version: 0.18.0

GitCommit: fec3683

4. Installing the k8s components

Download the kubernetes 1.16.x package

https://dl.k8s.io/v1.16.2/kubernetes-server-linux-amd64.tar.gz

Download the etcd 3.3.15 package

[root@k8s-master01 ~]# wget https://github.com/etcd-io/etcd/releases/download/v3.3.15/etcd-v3.3.15-linux-amd64.tar.gz

Extract the kubernetes binaries

[root@k8s-master01 ~]# tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

Extract the etcd binaries

[root@k8s-master01 ~]# tar -zxvf etcd-v3.3.15-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.3.15-linux-amd64/etcd{,ctl}

Check the versions

[root@k8s-master01 ~]# etcd --version

etcd Version: 3.3.15

Git SHA: 94745a4ee

Go Version: go1.12.9

Go OS/Arch: linux/amd64

[root@k8s-master01 ~]# kubectl version

Client Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.2", GitCommit:"c97fe5036ef3df2967d086711e6c0c405941e14b", GitTreeState:"clean", BuildDate:"2019-10-15T19:18:23Z", GoVersion:"go1.12.10", Compiler:"gc", Platform:"linux/amd64"}

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Send the components to the other nodes

MasterNodes='k8s-master02 k8s-master03'

WorkNodes='k8s-node01 k8s-node02'

for NODE in $MasterNodes; do echo $NODE; scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/; scp /usr/local/bin/etcd* $NODE:/usr/local/bin/; done

for NODE in $WorkNodes; do scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/ ; done

Install CNI: download the CNI plugins

wget https://github.com/containernetworking/plugins/releases/download/v0.7.5/cni-plugins-amd64-v0.7.5.tgz

Create the /opt/cni/bin directory on all nodes

mkdir -p /opt/cni/bin

Extract the CNI plugins and send them to the other nodes

tar -zxf cni-plugins-amd64-v0.7.5.tgz -C /opt/cni/bin

for NODE in $MasterNodes; do ssh $NODE 'mkdir -p /opt/cni/bin'; scp /opt/cni/bin/* $NODE:/opt/cni/bin/; done

for NODE in $WorkNodes; do ssh $NODE 'mkdir -p /opt/cni/bin'; scp /opt/cni/bin/* $NODE:/opt/cni/bin/; done

5. Generating certificates

Download the certificate generation tools

wget "https://pkg.cfssl.org/R1.2/cfssl_linux-amd64" -O /usr/local/bin/cfssl

wget "https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64" -O /usr/local/bin/cfssljson

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

Create the etcd certificate directory on all master nodes

mkdir /etc/etcd/ssl -p

Generate the etcd certificates on Master01

[root@k8s-master01 pki]# pwd

/root/k8s-ha-install/pki

[root@k8s-master01 pki]# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

2019/11/02 20:39:26 [INFO] generating a new CA key and certificate from CSR

2019/11/02 20:39:26 [INFO] generate received request

2019/11/02 20:39:26 [INFO] received CSR

2019/11/02 20:39:26 [INFO] generating key: rsa-2048

2019/11/02 20:39:27 [INFO] encoded CSR

2019/11/02 20:39:27 [INFO] signed certificate with serial number 603981780847439086338945186785919518080443607000

[root@k8s-master01 pki]# cfssl gencert \
    -ca=/etc/etcd/ssl/etcd-ca.pem \
    -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
    -config=ca-config.json \
    -hostname=127.0.0.1,k8s-master01,k8s-master02,k8s-master03,192.168.1.19,192.168.1.18,192.168.1.20 \
    -profile=kubernetes \
    etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

2019/11/02 20:48:51 [INFO] generate received request

2019/11/02 20:48:51 [INFO] received CSR

2019/11/02 20:48:51 [INFO] generating key: rsa-2048

2019/11/02 20:48:52 [INFO] encoded CSR

2019/11/02 20:48:52 [INFO] signed certificate with serial number 584188914871773268065873129590581350709445633441
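The -hostname value passed to cfssl is a comma-separated list of every name and IP the etcd members are reached by, and it is easy to mistype. A small sketch (the helper name `join_by_comma` is an assumption, not something from the original article) builds the list from separate arguments:

```shell
#!/usr/bin/env bash
# Hypothetical helper: join all arguments with commas.
# "$*" joins the positional parameters using the first character of IFS.
join_by_comma() {
  local IFS=,
  echo "$*"
}

# Demo: rebuild the SAN list used for the etcd certificate above.
ETCD_SANS="$(join_by_comma 127.0.0.1 \
  k8s-master01 k8s-master02 k8s-master03 \
  192.168.1.19 192.168.1.18 192.168.1.20)"
echo "-hostname=${ETCD_SANS}"
```

The resulting string can then be passed to cfssl as `-hostname="$ETCD_SANS"`.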

Copy the certificates to the other nodes

[root@k8s-master01 pki]# MasterNodes='k8s-master02 k8s-master03'

[root@k8s-master01 pki]# WorkNodes='k8s-node01 k8s-node02'

[root@k8s-master01 pki]# for NODE in $MasterNodes; do
    ssh $NODE "mkdir -p /etc/etcd/ssl"
    for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do
        scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}
    done
done

etcd-ca-key.pem 100% 1675 424.0KB/s 00:00

etcd-ca.pem 100% 1363 334.4KB/s 00:00

etcd-key.pem 100% 1679 457.4KB/s 00:00

etcd.pem 100% 1505 254.5KB/s 00:00

etcd-ca-key.pem 100% 1675 308.3KB/s 00:00

etcd-ca.pem 100% 1363 479.0KB/s 00:00

etcd-key.pem 100% 1679 208.1KB/s 00:00

etcd.pem 100% 1505 398.1KB/s 00:00

Generate the kubernetes certificates

Create the kubernetes directories on all nodes

mkdir -p /etc/kubernetes/pki

[root@k8s-master01 pki]# cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

2019/11/02 20:56:39 [INFO] generating a new CA key and certificate from CSR

2019/11/02 20:56:39 [INFO] generate received request

2019/11/02 20:56:39 [INFO] received CSR

2019/11/02 20:56:39 [INFO] generating key: rsa-2048

2019/11/02 20:56:39 [INFO] encoded CSR

2019/11/02 20:56:39 [INFO] signed certificate with serial number 204375274212114571715224985915861594178740016435

[root@k8s-master01 pki]# cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=ca-config.json -hostname=10.96.0.1,192.168.1.88,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,192.168.1.19,192.168.1.18,192.168.1.20 -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

2019/11/02 20:58:37 [INFO] generate received request

2019/11/02 20:58:37 [INFO] received CSR

2019/11/02 20:58:37 [INFO] generating key: rsa-2048

2019/11/02 20:58:37 [INFO] encoded CSR

2019/11/02 20:58:37 [INFO] signed certificate with serial number 530242414195398724956103410222477752498137496229

[root@k8s-master01 pki]# cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca


2019/11/02 20:59:28 [INFO] generating a new CA key and certificate from CSR

2019/11/02 20:59:28 [INFO] generate received request

2019/11/02 20:59:28 [INFO] received CSR

2019/11/02 20:59:28 [INFO] generating key: rsa-2048

2019/11/02 20:59:29 [INFO] encoded CSR

2019/11/02 20:59:29 [INFO] signed certificate with serial number 400365003875188640769336046625866020115935574187

[root@k8s-master01 pki]#

[root@k8s-master01 pki]# cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

2019/11/02 20:59:29 [INFO] generate received request

2019/11/02 20:59:29 [INFO] received CSR

2019/11/02 20:59:29 [INFO] generating key: rsa-2048

2019/11/02 20:59:31 [INFO] encoded CSR

2019/11/02 20:59:31 [INFO] signed certificate with serial number 714876805420065531759406103649716563367283489841

[root@k8s-master01 pki]# cfssl gencert \
    -ca=/etc/kubernetes/pki/ca.pem \
    -ca-key=/etc/kubernetes/pki/ca-key.pem \
    -config=ca-config.json \
    -profile=kubernetes \
    manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

2019/11/02 20:59:56 [INFO] generate received request

2019/11/02 20:59:56 [INFO] received CSR

2019/11/02 20:59:56 [INFO] generating key: rsa-2048

2019/11/02 20:59:56 [INFO] encoded CSR

2019/11/02 20:59:56 [INFO] signed certificate with serial number 365163931170718211641188236585237940196007847195

2019/11/02 20:59:56 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master01 pki]#

[root@k8s-master01 pki]# kubectl config set-cluster kubernetes \
    --certificate-authority=/etc/kubernetes/pki/ca.pem \
    --embed-certs=true \
    --server=https://192.168.1.88:8443 \
    --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig
Cluster "kubernetes" set.

[root@k8s-master01 pki]# kubectl config set-credentials system:kube-controller-manager \
    --client-certificate=/etc/kubernetes/pki/controller-manager.pem \
    --client-key=/etc/kubernetes/pki/controller-manager-key.pem \
    --embed-certs=true \
    --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig
User "system:kube-controller-manager" set.

[root@k8s-master01 pki]# kubectl config set-context system:kube-controller-manager@kubernetes \
    --cluster=kubernetes \
    --user=system:kube-controller-manager \
    --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig
Context "system:kube-controller-manager@kubernetes" created.

[root@k8s-master01 pki]# kubectl config use-context system:kube-controller-manager@kubernetes \
    --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig
Switched to context "system:kube-controller-manager@kubernetes".

[root@k8s-master01 pki]# cfssl gencert \
    -ca=/etc/kubernetes/pki/ca.pem \
    -ca-key=/etc/kubernetes/pki/ca-key.pem \
    -config=ca-config.json \
    -profile=kubernetes \
    scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

2019/11/02 21:00:48 [INFO] generate received request

2019/11/02 21:00:48 [INFO] received CSR

2019/11/02 21:00:48 [INFO] generating key: rsa-2048

2019/11/02 21:00:49 [INFO] encoded CSR

2019/11/02 21:00:49 [INFO] signed certificate with serial number 536666325566380578973549296433116161533422391008

2019/11/02 21:00:49 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master01 pki]# kubectl config set-cluster kubernetes \
    --certificate-authority=/etc/kubernetes/pki/ca.pem \
    --embed-certs=true \
    --server=https://192.168.1.88:8443 \
    --kubeconfig=/etc/kubernetes/scheduler.kubeconfig
Cluster "kubernetes" set.

[root@k8s-master01 pki]# kubectl config set-credentials system:kube-scheduler \
    --client-certificate=/etc/kubernetes/pki/scheduler.pem \
    --client-key=/etc/kubernetes/pki/scheduler-key.pem \
    --embed-certs=true \
    --kubeconfig=/etc/kubernetes/scheduler.kubeconfig
User "system:kube-scheduler" set.

[root@k8s-master01 pki]# kubectl config set-context system:kube-scheduler@kubernetes \
    --cluster=kubernetes \
    --user=system:kube-scheduler \
    --kubeconfig=/etc/kubernetes/scheduler.kubeconfig
Context "system:kube-scheduler@kubernetes" created.

[root@k8s-master01 pki]# kubectl config use-context system:kube-scheduler@kubernetes \
    --kubeconfig=/etc/kubernetes/scheduler.kubeconfig
Switched to context "system:kube-scheduler@kubernetes".

[root@k8s-master01 pki]# cfssl gencert \
    -ca=/etc/kubernetes/pki/ca.pem \
    -ca-key=/etc/kubernetes/pki/ca-key.pem \
    -config=ca-config.json \
    -profile=kubernetes \
    admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

2019/11/02 21:01:56 [INFO] generate received request

2019/11/02 21:01:56 [INFO] received CSR

2019/11/02 21:01:56 [INFO] generating key: rsa-2048

2019/11/02 21:01:56 [INFO] encoded CSR

2019/11/02 21:01:56 [INFO] signed certificate with serial number 596648060489336426936623033491721470152303339581

2019/11/02 21:01:56 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master01 pki]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.1.88:8443 --kubeconfig=/etc/kubernetes/admin.kubeconfig


Cluster "kubernetes" set.

[root@k8s-master01 pki]#

[root@k8s-master01 pki]# kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig

User "kubernetes-admin" set.

[root@k8s-master01 pki]#

[root@k8s-master01 pki]# kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig

Context "kubernetes-admin@kubernetes" created.

[root@k8s-master01 pki]#

[root@k8s-master01 pki]# kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig

Switched to context "kubernetes-admin@kubernetes".
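The controller-manager, scheduler, and admin kubeconfig files are all produced by the same four kubectl config steps with different names. The sketch below is an illustration only: `kubeconfig_cmds` is a hypothetical helper that prints those four commands for review instead of executing them, with the CA path and the https://192.168.1.88:8443 endpoint taken from the transcript above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (not run) the four kubectl config commands
# that build one kubeconfig file.
#   $1 user name   $2 client cert   $3 client key   $4 kubeconfig path
kubeconfig_cmds() {
  local user="$1" cert="$2" key="$3" file="$4"
  local ca="/etc/kubernetes/pki/ca.pem"
  local server="https://192.168.1.88:8443"
  echo "kubectl config set-cluster kubernetes --certificate-authority=${ca} --embed-certs=true --server=${server} --kubeconfig=${file}"
  echo "kubectl config set-credentials ${user} --client-certificate=${cert} --client-key=${key} --embed-certs=true --kubeconfig=${file}"
  echo "kubectl config set-context ${user}@kubernetes --cluster=kubernetes --user=${user} --kubeconfig=${file}"
  echo "kubectl config use-context ${user}@kubernetes --kubeconfig=${file}"
}

# Demo: the commands for the scheduler kubeconfig.
kubeconfig_cmds system:kube-scheduler \
  /etc/kubernetes/pki/scheduler.pem \
  /etc/kubernetes/pki/scheduler-key.pem \
  /etc/kubernetes/scheduler.kubeconfig
```

Printing first keeps the step reviewable; once the output looks right it can be piped to `sh` on Master01.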

[root@k8s-master01 pki]# for NODE in k8s-master01 k8s-master02 k8s-master03; do
    cp kubelet-csr.json kubelet-$NODE-csr.json
    sed -i "s/\$NODE/$NODE/g" kubelet-$NODE-csr.json
    cfssl gencert \
        -ca=/etc/kubernetes/pki/ca.pem \
        -ca-key=/etc/kubernetes/pki/ca-key.pem \
        -config=ca-config.json \
        -hostname=$NODE \
        -profile=kubernetes \
        kubelet-$NODE-csr.json | cfssljson -bare /etc/kubernetes/pki/kubelet-$NODE
    rm -f kubelet-$NODE-csr.json
done

2019/11/02 21:03:19 [INFO] generate received request

2019/11/02 21:03:19 [INFO] received CSR

2019/11/02 21:03:19 [INFO] generating key: rsa-2048

2019/11/02 21:03:20 [INFO] encoded CSR

2019/11/02 21:03:20 [INFO] signed certificate with serial number 537071233304209996689594645648717291884343909273

2019/11/02 21:03:20 [INFO] generate received request

2019/11/02 21:03:20 [INFO] received CSR

2019/11/02 21:03:20 [INFO] generating key: rsa-2048

2019/11/02 21:03:20 [INFO] encoded CSR

2019/11/02 21:03:20 [INFO] signed certificate with serial number 93675644052244007817881327407761955585316699106

2019/11/02 21:03:20 [INFO] generate received request

2019/11/02 21:03:20 [INFO] received CSR

2019/11/02 21:03:20 [INFO] generating key: rsa-2048

2019/11/02 21:03:21 [INFO] encoded CSR

2019/11/02 21:03:21 [INFO] signed certificate with serial number 369432006714320793581219965286097236027135622831
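The per-node kubelet CSR is produced by substituting the node name into a template. The sketch below assumes the template contains the literal text `$NODE` as a placeholder (an assumption about kubelet-csr.json, which is not shown here) and demonstrates why the first `$` must be escaped: inside double quotes `\$NODE` reaches sed as the literal pattern, while the unescaped `${node}` is expanded by the shell.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the literal placeholder $NODE on stdin
# with the node name given as $1.
render_kubelet_csr() {
  local node="$1"
  # \$NODE -> sed sees the literal text "$NODE" (pattern)
  # ${node} -> expanded by the shell before sed runs (replacement)
  sed "s/\$NODE/${node}/g"
}

# Demo with a one-line stand-in for the template.
echo '{"CN": "system:node:$NODE"}' | render_kubelet_csr k8s-master01
```

Without the backslash, the shell would expand both sides to the same node name and sed would replace the name with itself, leaving the placeholder untouched.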

[root@k8s-master01 pki]# for NODE in k8s-master01 k8s-master02 k8s-master03; do
    ssh $NODE "mkdir -p /etc/kubernetes/pki"
    scp /etc/kubernetes/pki/ca.pem $NODE:/etc/kubernetes/pki/ca.pem
    scp /etc/kubernetes/pki/kubelet-$NODE-key.pem $NODE:/etc/kubernetes/pki/kubelet-key.pem
    scp /etc/kubernetes/pki/kubelet-$NODE.pem $NODE:/etc/kubernetes/pki/kubelet.pem
    rm -f /etc/kubernetes/pki/kubelet-$NODE-key.pem /etc/kubernetes/pki/kubelet-$NODE.pem
done

ca.pem 100% 1407 494.1KB/s 00:00

kubelet-k8s-master01-key.pem 100% 1679 549.9KB/s 00:00

kubelet-k8s-master01.pem 100% 1501 535.5KB/s 00:00

ca.pem 100% 1407 358.2KB/s 00:00

kubelet-k8s-master02-key.pem 100% 1675 539.8KB/s 00:00

kubelet-k8s-master02.pem 100% 1501 375.5KB/s 00:00

ca.pem 100% 1407 464.7KB/s 00:00

kubelet-k8s-master03-key.pem 100% 1679 566.2KB/s 00:00

kubelet-k8s-master03.pem 100% 1501 515.1KB/s 00:00

[root@k8s-master01 pki]# for NODE in k8s-master01 k8s-master02 k8s-master03; do
    ssh $NODE "cd /etc/kubernetes/pki && \
        kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.1.88:8443 --kubeconfig=/etc/kubernetes/kubelet.kubeconfig && \
        kubectl config set-credentials system:node:${NODE} --client-certificate=/etc/kubernetes/pki/kubelet.pem --client-key=/etc/kubernetes/pki/kubelet-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/kubelet.kubeconfig && \
        kubectl config set-context system:node:${NODE}@kubernetes --cluster=kubernetes --user=system:node:${NODE} --kubeconfig=/etc/kubernetes/kubelet.kubeconfig && \
        kubectl config use-context system:node:${NODE}@kubernetes --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"
done

Cluster "kubernetes" set.

User "system:node:k8s-master01" set.

Context "system:node:k8s-master01@kubernetes" created.

Switched to context "system:node:k8s-master01@kubernetes".

Cluster "kubernetes" set.

User "system:node:k8s-master02" set.

Context "system:node:k8s-master02@kubernetes" created.

Switched to context "system:node:k8s-master02@kubernetes".

Cluster "kubernetes" set.

User "system:node:k8s-master03" set.

Context "system:node:k8s-master03@kubernetes" created.

Switched to context "system:node:k8s-master03@kubernetes".

Create the ServiceAccount key

[root@k8s-master01 pki]# openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

Generating RSA private key, 2048 bit long modulus (2 primes)

...................................................................................+++++

...............+++++

e is 65537 (0x010001)

[root@k8s-master01 pki]# openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

writing RSA key

[root@k8s-master01 pki]# for NODE in k8s-master02 k8s-master03; do
    for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do
        scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}
    done
    for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do
        scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}
    done
done

admin.csr 100% 1025 303.5KB/s 00:00

admin-key.pem 100% 1675 544.2KB/s 00:00

admin.pem 100% 1440 447.6KB/s 00:00

apiserver.csr 100% 1029 251.9KB/s 00:00

apiserver-key.pem 100% 1679 579.5KB/s 00:00

apiserver.pem 100% 1692 592.0KB/s 00:00

ca.csr 100% 1025 372.6KB/s 00:00

ca-key.pem 100% 1679 535.5KB/s 00:00

ca.pem 100% 1407 496.0KB/s 00:00

controller-manager.csr 100% 1082 273.4KB/s 00:00

controller-manager-key.pem 100% 1679 552.8KB/s 00:00

controller-manager.pem 100% 1497 246.7KB/s 00:00

front-proxy-ca.csr 100% 891 322.2KB/s 00:00

front-proxy-ca-key.pem 100% 1679 408.7KB/s 00:00

front-proxy-ca.pem 100% 1143 436.9KB/s 00:00

front-proxy-client.csr 100% 903 283.5KB/s 00:00

front-proxy-client-key.pem 100% 1679 265.2KB/s 00:00

front-proxy-client.pem 100% 1188 358.8KB/s 00:00

kubelet-k8s-master01.csr 100% 1050 235.6KB/s 00:00

kubelet-k8s-master02.csr 100% 1050 385.3KB/s 00:00

kubelet-k8s-master03.csr 100% 1050 319.8KB/s 00:00

kubelet-key.pem 100% 1679 562.2KB/s 00:00

kubelet.pem 100% 1501 349.4KB/s 00:00

sa.key 100% 1679 455.2KB/s 00:00

sa.pub 100% 451 152.3KB/s 00:00

scheduler.csr 100% 1058 402.0KB/s 00:00

scheduler-key.pem 100% 1675 291.8KB/s 00:00

scheduler.pem 100% 1472 426.2KB/s 00:00

admin.kubeconfig 100% 6432 1.5MB/s 00:00

controller-manager.kubeconfig 100% 6568 1.1MB/s 00:00

scheduler.kubeconfig 100% 6496 601.6KB/s 00:00

admin.csr 100% 1025 368.2KB/s 00:00

admin-key.pem 100% 1675 542.3KB/s 00:00

admin.pem 100% 1440 247.7KB/s 00:00

apiserver.csr 100% 1029 212.4KB/s 00:00

apiserver-key.pem 100% 1679 530.8KB/s 00:00

apiserver.pem 100% 1692 557.3KB/s 00:00

ca.csr 100% 1025 266.4KB/s 00:00

ca-key.pem 100% 1679 408.6KB/s 00:00

ca.pem 100% 1407 404.6KB/s 00:00

controller-manager.csr 100% 1082 248.9KB/s 00:00

controller-manager-key.pem 100% 1679 495.4KB/s 00:00

controller-manager.pem 100% 1497 262.1KB/s 00:00

front-proxy-ca.csr 100% 891 140.9KB/s 00:00

front-proxy-ca-key.pem 100% 1679 418.7KB/s 00:00

front-proxy-ca.pem 100% 1143 362.3KB/s 00:00

front-proxy-client.csr 100% 903 305.6KB/s 00:00

front-proxy-client-key.pem 100% 1679 342.7KB/s 00:00

front-proxy-client.pem 100% 1188 235.1KB/s 00:00

kubelet-k8s-master01.csr 100% 1050 215.1KB/s 00:00

kubelet-k8s-master02.csr 100% 1050 354.0KB/s 00:00

kubelet-k8s-master03.csr 100% 1050 243.0KB/s 00:00

kubelet-key.pem 100% 1679 591.3KB/s 00:00

kubelet.pem 100% 1501 557.0KB/s 00:00

sa.key 100% 1679 387.5KB/s 00:00

sa.pub 100% 451 154.0KB/s 00:00

scheduler.csr 100% 1058 191.5KB/s 00:00

scheduler-key.pem 100% 1675 426.3KB/s 00:00

scheduler.pem 100% 1472 561.3KB/s 00:00

admin.kubeconfig 100% 6432 1.3MB/s 00:00

controller-manager.kubeconfig 100% 6568 1.7MB/s 00:00

scheduler.kubeconfig 100% 6496 1.3MB/s 00:00

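6. Kubernetes System Component Configuration

The etcd configuration is largely the same on every master node; only the node name and the node's own IP address need to be changed in each copy. The configuration for k8s-master01 is shown below.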

name: 'k8s-master01'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://192.168.1.19:2380'
listen-client-urls: 'https://192.168.1.19:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://192.168.1.19:2380'
advertise-client-urls: 'https://192.168.1.19:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'k8s-master01=https://192.168.1.19:2380,k8s-master02=https://192.168.1.18:2380,k8s-master03=https://192.168.1.20:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-output: default
force-new-cluster: false
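Because only the node name and the node's own IP differ between masters, the per-node files can be derived from the k8s-master01 version instead of being edited by hand. The sketch below is one possible way to do that with sed. The function name render_etcd_config is hypothetical, and it assumes the node-specific keys each sit on a single line at column 0, as in the file above. The initial-cluster line is left untouched because it is identical on all members.

```shell
#!/bin/sh
# render_etcd_config TEMPLATE NODE_NAME NODE_IP
# Prints TEMPLATE to stdout with the node-specific keys rewritten for
# NODE_NAME / NODE_IP. All other lines pass through unchanged.
render_etcd_config() {
    template=$1
    node_name=$2
    node_ip=$3
    sed -e "s|^name:.*|name: '${node_name}'|" \
        -e "s|^listen-peer-urls:.*|listen-peer-urls: 'https://${node_ip}:2380'|" \
        -e "s|^listen-client-urls:.*|listen-client-urls: 'https://${node_ip}:2379,http://127.0.0.1:2379'|" \
        -e "s|^initial-advertise-peer-urls:.*|initial-advertise-peer-urls: 'https://${node_ip}:2380'|" \
        -e "s|^advertise-client-urls:.*|advertise-client-urls: 'https://${node_ip}:2379'|" \
        "$template"
}

# Example, using the host names and IPs from initial-cluster above:
#   render_etcd_config /etc/etcd/etcd.config.yml k8s-master02 192.168.1.18 > /tmp/etcd.config.yml.master02
#   scp /tmp/etcd.config.yml.master02 k8s-master02:/etc/etcd/etcd.config.yml
```

The path /etc/etcd/etcd.config.yml is the one passed to --config-file in the service unit; the /tmp staging path is only an illustration.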

Create the etcd service on all master nodes and start it

[root@k8s-master01 pki]# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

[root@k8s-master01 pki]# mkdir /etc/kubernetes/pki/etcd

[root@k8s-master01 pki]# ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

[root@k8s-master01 pki]# systemctl daemon-reload

[root@k8s-master01 pki]# systemctl enable --now etcd

Created symlink /etc/systemd/system/etcd3.service → /usr/lib/systemd/system/etcd.service.

Created symlink /etc/systemd/system/multi-user.target.wants/etcd.service → /usr/lib/systemd/system/etcd.service.
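Once etcd has been started on all three masters, the cluster can be checked with etcdctl over the TLS client port. This is a hedged sketch rather than part of the original procedure: the helper names etcd_endpoints and check_etcd_health are hypothetical, the certificate paths are the ones from the etcd configuration above, and it assumes etcdctl is installed alongside etcd and is used with the v3 API.

```shell
#!/bin/sh
# etcd_endpoints IP...
# Joins the given IPs into a comma-separated list of https client endpoints.
etcd_endpoints() {
    out=""
    for ip in "$@"; do
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
}

# check_etcd_health IP...
# Asks every listed member whether it is healthy, using the etcd client certificates.
check_etcd_health() {
    ETCDCTL_API=3 etcdctl \
        --endpoints="$(etcd_endpoints "$@")" \
        --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem \
        --cert=/etc/kubernetes/pki/etcd/etcd.pem \
        --key=/etc/kubernetes/pki/etcd/etcd-key.pem \
        endpoint health
}

# Example, to be run on any master node:
#   check_etcd_health 192.168.1.19 192.168.1.18 192.168.1.20
```

A member that has not started yet, or that was given the wrong IP in its configuration, should show up as unhealthy in the output of that command.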

High-availability configuration

Install keepalived and haproxy on all master nodes

yum install keepalived haproxy -y

HAProxy configuration

[root@k8s-master01 pki]# cat /etc/haproxy/haproxy.cfg

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults