PyTorch in Practice: Notes

Posted GoAI


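Companion video course: "PyTorch深度学习实践" (PyTorch Deep Learning Practice), complete collection

Reference notes:

Learning_AI's blog (a first-year graduate student learning CV): PyTorch study notes

刘二大人 (Liu Er): PyTorch Deep Learning Practice (detailed code notes, suitable for beginners)

PyTorch hands-on tutorial (all in one post), 小星AI, CSDN blog

PyTorch study notes: collected code from 刘二大人's Bilibili PyTorch course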

Linear model

import numpy as np
import matplotlib.pyplot as plt

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

def forward(x):
    # Prediction of the model y_hat = x * w (w is the loop variable below)
    return x * w

def loss(x, y):
    # Squared error of a single sample
    y_pred = forward(x)
    return (y_pred - y) * (y_pred - y)

w_list = []
mse_list = []

# Sweep w over [0.0, 4.0] and record the mean squared error for each value
for w in np.arange(0.0, 4.1, 0.1):
    print('w=', w)
    l_sum = 0
    for x_val, y_val in zip(x_data, y_data):
        y_pred_val = forward(x_val)
        loss_val = loss(x_val, y_val)
        l_sum += loss_val
        print('\t', x_val, y_val, y_pred_val, loss_val)
    print('MSE=', l_sum / 3)
    w_list.append(w)
    mse_list.append(l_sum / 3)

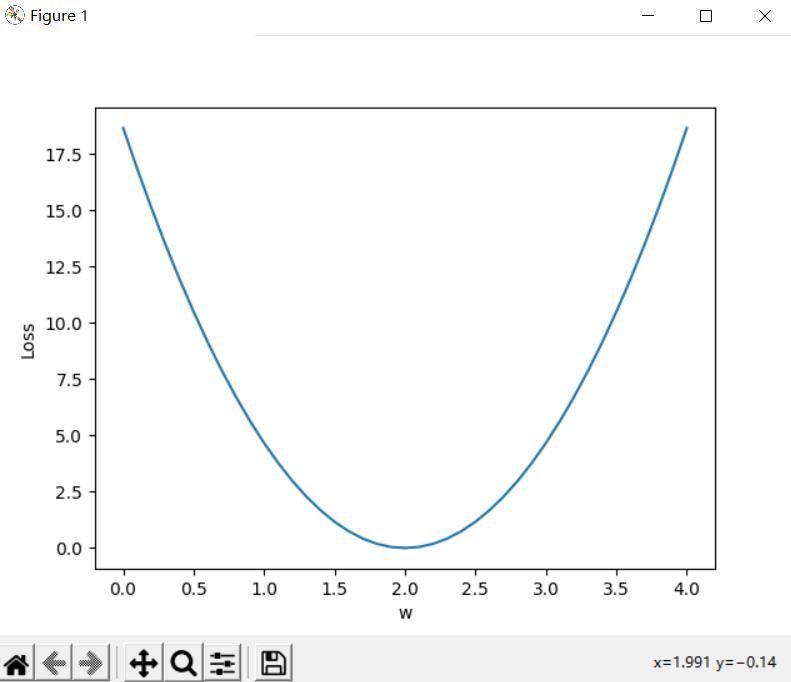

# Plot the MSE as a function of w
plt.plot(w_list, mse_list)
plt.ylabel('Loss')
plt.xlabel('w')
plt.show()

Screenshot of the run:
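The sweep above finds the minimum on a grid of candidate values. For this one-parameter model the minimizer can also be checked in closed form: setting the derivative of the MSE to zero gives w* = Σxy / Σx². The following is a minimal sketch of that check in plain Python; the names `mse` and `best_w` are introduced here for illustration and are not part of the original notes.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

def mse(w):
    """Mean squared error of the model y_hat = x * w on the training data."""
    return sum((x * w - y) ** 2 for x, y in zip(x_data, y_data)) / len(x_data)

# Setting d(MSE)/dw = 0 gives w* = sum(x * y) / sum(x * x) for this model.
best_w = sum(x * y for x, y in zip(x_data, y_data)) / sum(x * x for x in x_data)

print('best w =', best_w, 'MSE =', mse(best_w))
```

The result should agree with the lowest point of the plotted curve.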

梯度下降

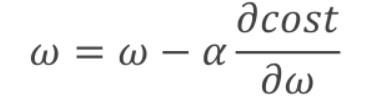

以模型  为例,梯度下降算法就是一种训练参数

为例,梯度下降算法就是一种训练参数  到最佳值的一种算法,

到最佳值的一种算法, 每次变化的趋势由

每次变化的趋势由  (学习率:一种超参数,由人手动设置调节),以及

(学习率:一种超参数,由人手动设置调节),以及 的导数来决定,具体公式如下:

的导数来决定,具体公式如下:

注: 此时 函数是指所有的损失函数之和

函数是指所有的损失函数之和

针对模型  的梯度下降算法的公式化简如下:

的梯度下降算法的公式化简如下:
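To make the update rule concrete, here is a sketch of a single gradient-descent step worked out by hand in plain Python, using the same data, initial weight and learning rate as these notes. The variable names `terms`, `grad` and `w_new` are chosen for this illustration only.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]
w = 1.0        # initial weight
alpha = 0.005  # learning rate

# Per-sample gradient terms 2 * x * (x * w - y)
terms = [2 * x * (x * w - y) for x, y in zip(x_data, y_data)]

# The gradient of the mean loss is the mean of the per-sample terms
grad = sum(terms) / len(terms)

# One update step: move w against the gradient
w_new = w - alpha * grad
print(terms, grad, w_new)
```

Because the gradient is negative at w = 1.0, the step increases w, moving it toward the value that fits the data.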

# Training data
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

# Initial parameters
w = 1.0        # initial weight
alpha = 0.005  # learning rate for gradient descent

# Compute y_hat
def forward(x):
    return x * w

# Compute the mean loss over all samples
def cost(xs, ys):
    sum_cost = 0
    for x, y in zip(xs, ys):  # zip pairs each x with its y
        y_pred = forward(x)
        sum_cost += (y_pred - y) ** 2
    return sum_cost / len(xs)

# Compute the gradient of the mean loss with respect to w
def gradient(xs, ys):
    grad = 0
    for x, y in zip(xs, ys):
        grad += 2 * x * (x * w - y)
    return grad / len(xs)

print('Predict (before training)', 4, forward(4))  # y_hat for the initial weight

for epoch in range(1000):
    cost_val = cost(x_data, y_data)      # mean loss
    grad_val = gradient(x_data, y_data)  # gradient
    w -= alpha * grad_val                # update the weight w
    print('Epoch', epoch, 'w = ', w, 'loss = ', cost_val)  # weight and mean loss for this epoch

print('Predict (after training)', 4, forward(4))  # y_hat for the trained weight

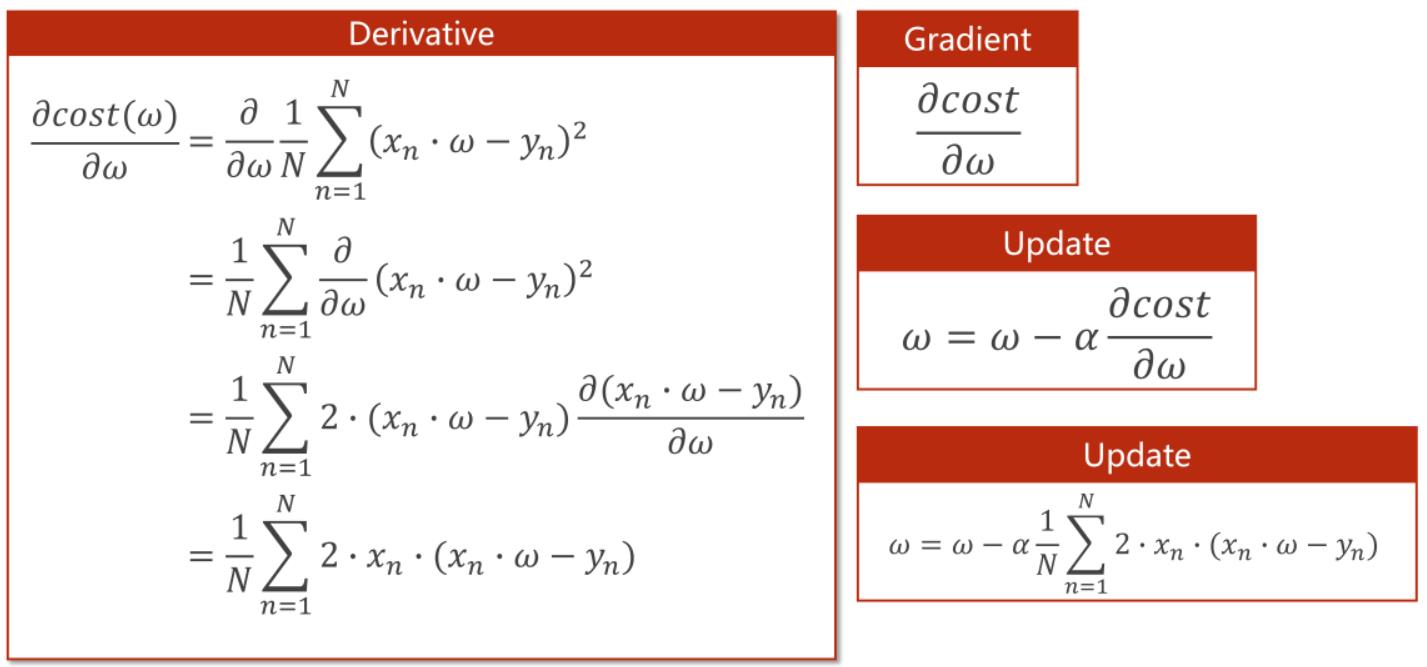

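Stochastic gradient descent:

Stochastic gradient descent differs from gradient descent in that it no longer differentiates the loss aggregated over all samples. Instead, it takes the loss of a single, randomly chosen sample, computes its derivative, and lets that one sample decide the next change of $w$:

$$w := w - \alpha \frac{\partial\, loss_n}{\partial w} = w - \alpha \cdot 2\, x_n\,(x_n w - y_n)$$

Note that the code that follows visits the samples in order within each epoch rather than drawing them at random.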
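For comparison, the following sketch shuffles the samples at the start of every epoch, which is one common way to get the random sample order the description refers to. It is an illustration in plain Python, not the code from the course; the function `sgd` and its `seed` parameter are assumptions made here so the run is reproducible.

```python
import random

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

def sgd(epochs=1000, alpha=0.005, seed=0):
    """Train y_hat = x * w with per-sample updates in a shuffled order."""
    rng = random.Random(seed)  # fixed seed so the visiting order is reproducible
    w = 1.0
    samples = list(zip(x_data, y_data))
    for _ in range(epochs):
        rng.shuffle(samples)            # new random order every epoch
        for x, y in samples:
            grad = 2 * x * (x * w - y)  # gradient of this sample's loss only
            w -= alpha * grad           # update immediately after each sample
    return w

w_trained = sgd()
print('w =', w_trained)
```

On this noise-free data every per-sample update pulls w toward the same value, so the order only affects the path, not the destination.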

# Training data
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

# Initial parameters
w = 1.0        # initial weight
alpha = 0.005  # learning rate

# Compute y_hat
def forward(x):
    return x * w

# Compute the loss of a single sample
def loss(x, y):
    y_pred = forward(x)      # prediction y_hat
    return (y_pred - y) ** 2  # squared error

# Compute the gradient of a single sample's loss with respect to w
def gradient(x, y):
    return 2 * x * (x * w - y)

print('Predict (before training)', 4, forward(4))  # y_hat for the initial weight

for epoch in range(1000):             # number of epochs
    for x, y in zip(x_data, y_data):  # iterate over the samples
        grad_val = gradient(x, y)     # gradient for the current sample
        w -= alpha * grad_val         # update the weight w
        print('\tgrad: ', x, y, grad_val)
        los = loss(x, y)              # loss for the current sample
    print('progress: ', epoch, 'w = ', w, 'loss = ', los)

print('Predict (after training)', 4, forward(4))  # y_hat for the trained weight

Backpropagation

import torch

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

w = torch.tensor([1.0], requires_grad=True)  # gradients are tracked for w

def forward(x):
    return x * w  # the result is a tensor

def loss(x, y):
    y_pred = forward(x)
    return (y_pred - y) ** 2

print('predict (before training)', 4, forward(4).item())

for epoch in range(100):
    for x, y in zip(x_data, y_data):
        l = loss(x, y)  # forward pass: compute the loss and build the graph
        l.backward()    # backward pass; the graph is freed afterwards
        print('\tgrad:', x, y, w.grad.item())  # .item() extracts a Python number and avoids holding on to the graph
        w.data -= 0.01 * w.grad.data  # update through .data so the update itself is not recorded in a graph
        w.grad.data.zero_()           # gradients accumulate unless they are reset to zero
    print("progress:", epoch, l.item())

print('predict (after training)', 4, forward(4).item())
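What `l.backward()` computes for one sample can be traced by hand with the chain rule. The sketch below does this in plain Python, without PyTorch, for the same graph (multiply, subtract, square). The function `forward_backward` and its intermediate names are hypothetical and exist only for this illustration.

```python
def forward_backward(x, y, w):
    """Compute loss = (x * w - y) ** 2 and d(loss)/dw by hand."""
    # Forward pass: keep the intermediate values of the graph.
    y_hat = x * w   # multiplication node
    r = y_hat - y   # residual node
    loss = r ** 2   # squaring node

    # Backward pass: multiply local derivatives from the loss back to w.
    dloss_dr = 2 * r   # d(r ** 2) / dr
    dr_dyhat = 1.0     # d(y_hat - y) / dy_hat
    dyhat_dw = x       # d(x * w) / dw
    grad_w = dloss_dr * dr_dyhat * dyhat_dw
    return loss, grad_w

loss, grad_w = forward_backward(x=1.0, y=2.0, w=1.0)
print(loss, grad_w)
```

The product of local derivatives reduces to 2 * x * (x * w - y), the same expression used in the hand-written `gradient` function earlier in these notes.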

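PyTorch in practice: linear regression

# 1. Compute the prediction
# 2. Compute the loss
# 3. Zero the gradients and backpropagate
# 4. Update the weights

import torch

x_data = torch.Tensor([[1.0], [2.0], [3.0]])
y_data = torch.Tensor([[2.0], [4.0], [6.0]])

# Build the linear model; later models follow the same template.
# At least two methods are needed: the constructor __init__ and forward().
# backward() is derived automatically from the computation graph.
# To define a custom computation block, subclass torch.autograd.Function.
class LinearModel(torch.nn.Module):
    def __init__(self):
        # Call the parent constructor
        super(LinearModel, self).__init__()
        # Create a Linear object, which applies a linear transformation to the input
        # class torch.nn.Linear(in_features, out_features, bias=True)
        # in_features - size of each input sample
        # out_features - size of each output sample
        # bias - if False, the layer does not learn a bias. Default: True
        self.linear = torch.nn.Linear(1, 1)

    def forward(self, x):
        y_pred = self.linear(x)
        return y_pred

model = LinearModel()  # instantiate; the instance is callable

# MSE (mean squared error) loss; reduction='sum' sums the squared errors instead of
# averaging them (it replaces the deprecated size_average=False)
criterion = torch.nn.MSELoss(reduction='sum')

# SGD from the optim module; the first argument is the set of parameters to optimize,
# and model.parameters() returns all weights of the model
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)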

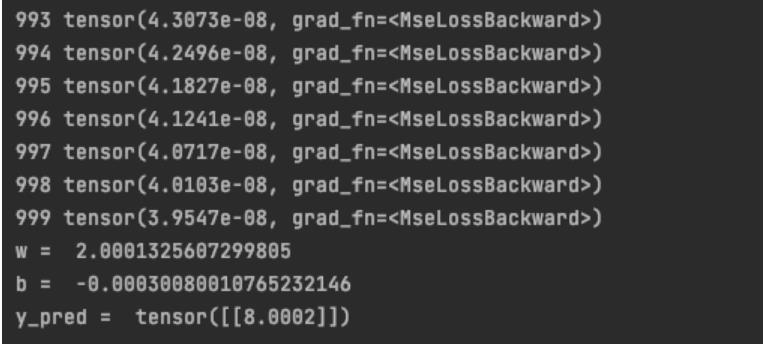

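for epoch in range(100):
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    # loss is a tensor; print() calls its __str__(), so printing it directly works.
    # Use loss.item() to get the plain Python number.
    print(epoch, loss)

    # Zero the gradients
    optimizer.zero_grad()
    # Backpropagate
    loss.backward()
    # Update the weights using the gradients and the configured learning rate
    optimizer.step()

# Print the weight and bias; weight holds a single value but is stored as a 1x1 matrix
print('w=', model.linear.weight.item())
print('b=', model.linear.bias.item())

# Test
x_test = torch.Tensor([4.0])
y_test = model(x_test)
print('y_pred=', y_test.data)

Result: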

PyTorch in practice: logistic regression

import torch
import numpy as np
import matplotlib.pyplot as plt

x_data = torch.Tensor([[1.0], [2.0], [3.0]])
y_data = torch.Tensor([[0], [0], [1]])

##
class LogisticRegressionModel(torch.nn.Module):
    def __init__(self):  # constructor
        super(LogisticRegressionModel, self).__init__()
        self.linear = torch.nn.Linear(1, 1)  # linear layer

    def forward(self, x):
        # Sigmoid activation; torch.sigmoid replaces the deprecated F.sigmoid
        y_pred = torch.sigmoid(self.linear(x))
        return y_pred

model = LogisticRegressionModel()

##
# Binary cross-entropy loss, summed over the batch (replaces the deprecated size_average=False)
criterion = torch.nn.BCELoss(reduction='sum')
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)  # optimizer

##
for epoch in range(1000):
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    print(epoch, loss.item())

    optimizer.zero_grad()  # zero the gradients
    loss.backward()        # backpropagate to compute the gradients
    optimizer.step()       # update the parameters

##
x = np.linspace(0, 10, 200)
x_t = torch.Tensor(x).view((200, 1))
y_t = model(x_t)
y = y_t.data.numpy()
plt.plot(x, y)
plt.plot([0, 10], [0.5, 0.5], c='r')
plt.xlabel('Hours')
plt.ylabel('Probability of Pass')
plt.grid()
plt.show()

RNN

1. Prepare the data

Define a dataset class that reads the data files.

from torch.utils.data import Dataset
import pandas as pd

class NameDataset(Dataset):
    """Dataset of (name, country index) pairs."""

    def __init__(self, is_train_set=True):
        filename = './name_data/names_train.csv' if is_train_set else './name_data/names_test.csv'
        data = pd.read_csv(filename, header=None)
        self.names = data[0]
        self.len = len(self.names)
        self.countries = data[1]
        self.country_list = list(sorted(set(self.countries)))
        self.country_dict = self.getCountryDict()
        self.country_num = len(self.country_list)

    def __getitem__(self, index):
        return self.names[index], self.country_dict[self.countries[index]]

    def __len__(self):
        return self.len

    def idx2country(self, index):
        return self.country_list[index]

    def getCountryDict(self):
        # Map each country name to its index in the sorted country list
        country_dict = dict()
        for idx, country_name in enumerate(self.country_list, 0):
            country_dict[country_name] = idx
        return country_dict

    def getCountriesNum(self):
        return self.country_num
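The label encoding done by `country_list` and `getCountryDict` can be tried in isolation. The sketch below applies the same idea (sort the unique labels, then map each to its position) to a small made-up list of labels in plain Python; the sample values are invented for this illustration and do not come from the data files.

```python
# Made-up sample labels, standing in for the country column of the CSV
countries = ['Russian', 'English', 'Russian', 'Chinese', 'English']

# Unique labels in a stable (sorted) order, so indices are reproducible
country_list = sorted(set(countries))

# Map each label to its index in that list
country_dict = {name: idx for idx, name in enumerate(country_list)}

# Encode every row as an integer class label
labels = [country_dict[c] for c in countries]
print(country_list, labels)
```

Sorting before enumerating matters: a bare `set` has no guaranteed order, so without it the same country could receive a different index on each run.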

Define functions that convert the loaded data into tensors.

import torch

def name2list(name):
    """Return the name as a list of ASCII codes, together with the list length."""
    arr = [ord(c) for c in name]
    return arr, len(arr)

def make_tensors(names, countries):
    # List of tuples, each holding a name as ASCII codes and its length
    sequences_and_lengths = [name2list(name) for name in names]
    # All names as ASCII-code lists
    name_sequences = [sl[0] for sl in sequences_and_lengths]
    # All sequence lengths
    seq_lengths = torch.LongTensor([sl[1] for sl in sequences_and_lengths])
    # Convert countries to long
    countries = countries.long()

    # Pad every name sequence with zeros so that all have the same length.
    # Start from an all-zero tensor of size (number of names) x (longest name length)
    seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()
    # Copy each name sequence over the zero tensor
    for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):
        seq_tensor[idx, :seq_len] = torch.LongTensor(seq)

    # Sort by sequence length in descending order, which pack_padded_sequence expects.
    # sort() returns the sorted lengths and the permutation indices
    seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)
    # Reorder the padded name tensor with the permutation indices
    seq_tensor = seq_tensor[perm_idx]
    # Reorder countries the same way so names and countries stay aligned
    # seq_tensor.shape : batch_size * max_seq_lengths
    # seq_lengths.shape : batch_size
    # countries.shape : batch_size
    countries = countries[perm_idx]
    return seq_tensor, seq_lengths, countries
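The padding and sorting steps are easier to follow on a tiny batch. The following sketch reproduces them with plain Python lists instead of tensors; the three names are made up for the example, and the variable names mirror those in `make_tensors` only loosely.

```python
names = ['Bob', 'Alexander', 'Li']  # made-up sample names

# Each name becomes a list of character codes
sequences = [[ord(c) for c in name] for name in names]
lengths = [len(seq) for seq in sequences]
max_len = max(lengths)

# Right-pad every sequence with zeros up to the longest length
padded = [seq + [0] * (max_len - len(seq)) for seq in sequences]

# Indices that sort the batch by length, longest first
perm_idx = sorted(range(len(names)), key=lambda i: lengths[i], reverse=True)
padded_sorted = [padded[i] for i in perm_idx]
lengths_sorted = [lengths[i] for i in perm_idx]

print(perm_idx, lengths_sorted)
for row in padded_sorted:
    print(row)
```

The same `perm_idx` must be applied to the labels, otherwise each name would be paired with another name's country after sorting.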

2. Define the model

import torch
from torch.nn.utils.rnn import pack_padded_sequence

class RNNClassifier(torch.nn.Module):
    # input_size=128, hidden_size=100, output_size=18
    def __init__(self, input_size, hidden_size, output_size, n_layers=1, bidirectional=True):
        super(RNNClassifier, self).__init__()
        self.hidden_size = hidden_size
        self.n_layers = n_layers
        self.n_directions = 2 if bidirectional else 1  # bidirectional or not

        # Embedding: vocabulary size 128, embedding size 100
        self.embedding = torch.nn.Embedding(input_size, hidden_size)
        # After the embedding the input size is 100, and the hidden size is also 100,
        # so both arguments are hidden_size
        self.gru = torch.nn.GRU(hidden_size, hidden_size, n_layers, bidirectional=bidirectional)
        # A bidirectional GRU yields two final hidden states that are concatenated, so the
        # linear layer takes hidden_size * self.n_directions inputs; its 18 outputs are the
        # scores for the 18 countries
        self.fc = torch.nn.Linear(hidden_size * self.n_directions, output_size)

    def _init_hidden(self, batch_size):
        hidden = torch.zeros(self.n_layers * self.n_directions, batch_size, self.hidden_size)
        return hidden

    def forward(self, input, seq_lengths):
        # Transpose the input: batch_size * max_seq_lengths -> max_seq_lengths * batch_size,
        # so that each column is one name
        input = input.t()
        batch_size = input.size(1)  # number of columns, i.e. the batch size

        hidden = self._init_hidden(batch_size)  # initial hidden state
        # embedding.shape : max_seq_lengths * batch_size * hidden_size, e.g. 12 * 64 * 100
        embedding = self.embedding(input)

        # Pack the padded batch so the GRU can skip the padding
        gru_input = pack_padded_sequence(embedding, seq_lengths)
        # Run the GRU
        output, hidden = self.gru(gru_input, hidden)

        # For a bidirectional GRU, concatenate the final hidden states of both directions
        if self.n_directions == 2:
            hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)
        else:
            hidden_cat = hidden[-1]

        # The linear layer produces 18 outputs
        fc_output = self.fc(hidden_cat)
        return fc_output
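Conceptually, packing a padded batch that is sorted by length lets the GRU process, at each time step, only the sequences that have not ended yet. The sketch below computes those per-step batch sizes in plain Python. It illustrates the idea and is not PyTorch's implementation; the helper `step_batch_sizes` and the sample lengths are made up for this example.

```python
def step_batch_sizes(sorted_lengths):
    """For lengths sorted in descending order, count the sequences still active at each time step."""
    max_len = sorted_lengths[0]
    return [sum(1 for n in sorted_lengths if n > t) for t in range(max_len)]

lengths = [5, 3, 3, 1]  # made-up sequence lengths, longest first
sizes = step_batch_sizes(lengths)

print(sizes)
print(sum(sizes), 'real steps vs', len(lengths) * lengths[0], 'padded steps')
```

The total number of processed positions equals the sum of the true lengths, which is why sorting and packing saves work when the names in a batch differ a lot in length.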

3. Define the training function

import time
import math

def time_since(since):
    # Elapsed time since `since`, formatted as minutes and seconds
    s = time.time() - since
    m = math.floor(s / 60)
    s -= m * 60
    return '%dm %ds' % (m, s)

def trainModel():
    total_loss = 0
    for i, (names, countries) in enumerate(trainloader, 1):  # the 1 makes i start from 1
        # make_tensors returns the names, lengths and countries sorted by descending length
        inputs, seq_lengths, target = make_tensors(names, countries)
        # Forward pass with the names and their lengths
        output = classifier(inputs, seq_lengths)
        loss = criterion(output, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_loss += loss.item()
        if i % 10 == 0:
            print(i)
            print(f'[{time_since(start)}] Epoch {epoch} ', end='')
            print(f'[{i * len(inputs)}/{len(trainset)}] ', end='')
            print(f'loss={total_loss / (i * len(inputs))}')
    return total_loss
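The elapsed-time formatting in `time_since` can be checked without waiting for a training run by passing the number of seconds directly. This is a small sketch under that assumption; `format_elapsed` is a hypothetical variant introduced here, not a function from the notes.

```python
import math

def format_elapsed(seconds):
    """Format a duration in seconds as '<minutes>m <seconds>s'."""
    m = math.floor(seconds / 60)
    s = seconds - m * 60
    # %d truncates the fractional part of the remaining seconds
    return '%dm %ds' % (m, s)

print(format_elapsed(125.7))
print(format_elapsed(59))
```

Separating the formatting from the clock call keeps the logic testable, while `time_since(start)` in the training loop can stay as it is.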

4. Define the test function, which is very similar to the training function

def testModel():
    correct = 0
    total = len(testset)
    print("evaluating trained model ...")
    with torch.no_grad():  # no gradients are needed for evaluation
        for i, (names, countries) in enumerate(testloader, 1):
            inputs, seq_lengths, target = make_tensors(names, countries)
            output = classifier(inputs, seq_lengths)
            # Index of the highest score in each row is the predicted class
            pred = output.max(dim=1, keepdim=True)[1]
            correct += pred.eq(target.view_as(pred)).sum().item()

        percent = '%.2f' % (100 * correct / total)
        print(f'Test set: Accuracy {correct}/{total} {percent}%')
    return correct / total
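The accuracy computation boils down to taking the index of the largest score in each row and comparing it with the target. The sketch below shows the same logic on plain Python lists; the scores and targets are made-up values, and the function `accuracy` is introduced only for this illustration.

```python
def accuracy(scores, targets):
    """Count rows whose highest-scoring class index equals the target."""
    correct = 0
    for row, target in zip(scores, targets):
        pred = max(range(len(row)), key=lambda k: row[k])  # index of the largest score
        correct += int(pred == target)
    return correct, correct / len(targets)

# Made-up scores for 3 samples and 3 classes
scores = [[0.1, 2.0, -1.0], [1.5, 0.2, 0.3], [0.0, 0.1, 0.9]]
targets = [1, 0, 1]

correct, acc = accuracy(scores, targets)
print(f'Accuracy {correct}/{len(targets)} {100 * acc:.2f}%')
```

No softmax is needed before taking the maximum, because softmax preserves the ordering of the scores.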

5. Main loop

from torch.utils.data import DataLoader
import time
import math

if __name__ == '__main__':
    N_EPOCHS = 30      # number of epochs
    HIDDEN_SIZE = 100  # hidden size, also the embedding output size
    BATCH_SIZE = 64
    N_COUNTRY = 18     # 18 country classes, the output size of the classifier
    N_LAYER = 2
    N_CHARS = 128      # size of the character vocabulary, the embedding input size

    trainset = NameDataset(is_train_set=True)
    trainloader = DataLoader(trainset, batch_size=BATCH_SIZE, shuffle=True)
    testset = NameDataset(is_train_set=False)
    testloader = DataLoader(testset, batch_size=BATCH_SIZE, shuffle=False)

    # Build the classifier
    classifier = RNNClassifier(N_CHARS, HIDDEN_SIZE, N_COUNTRY, N_LAYER)

    # Build the loss function and the optimizer
    criterion = torch.nn.CrossEntropyLoss()
    optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)

    start = time.time()
    print("Training for %d epochs..." % N_EPOCHS)
    acc_list = []
    for epoch in range(1, N_EPOCHS + 1):
        # Train cycle
        trainModel()
        acc = testModel()
        acc_list.append(acc)