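YOLOv7/YOLOv5 Improvements, Decoupled Detection Head Series | Plug-and-play: a regularly updated collection of high-accuracy and lightweight decoupled detection heads, covering multiple detection-head and decoupled-head modifications for efficient accuracy gains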

Posted 芒果汁没有芒果


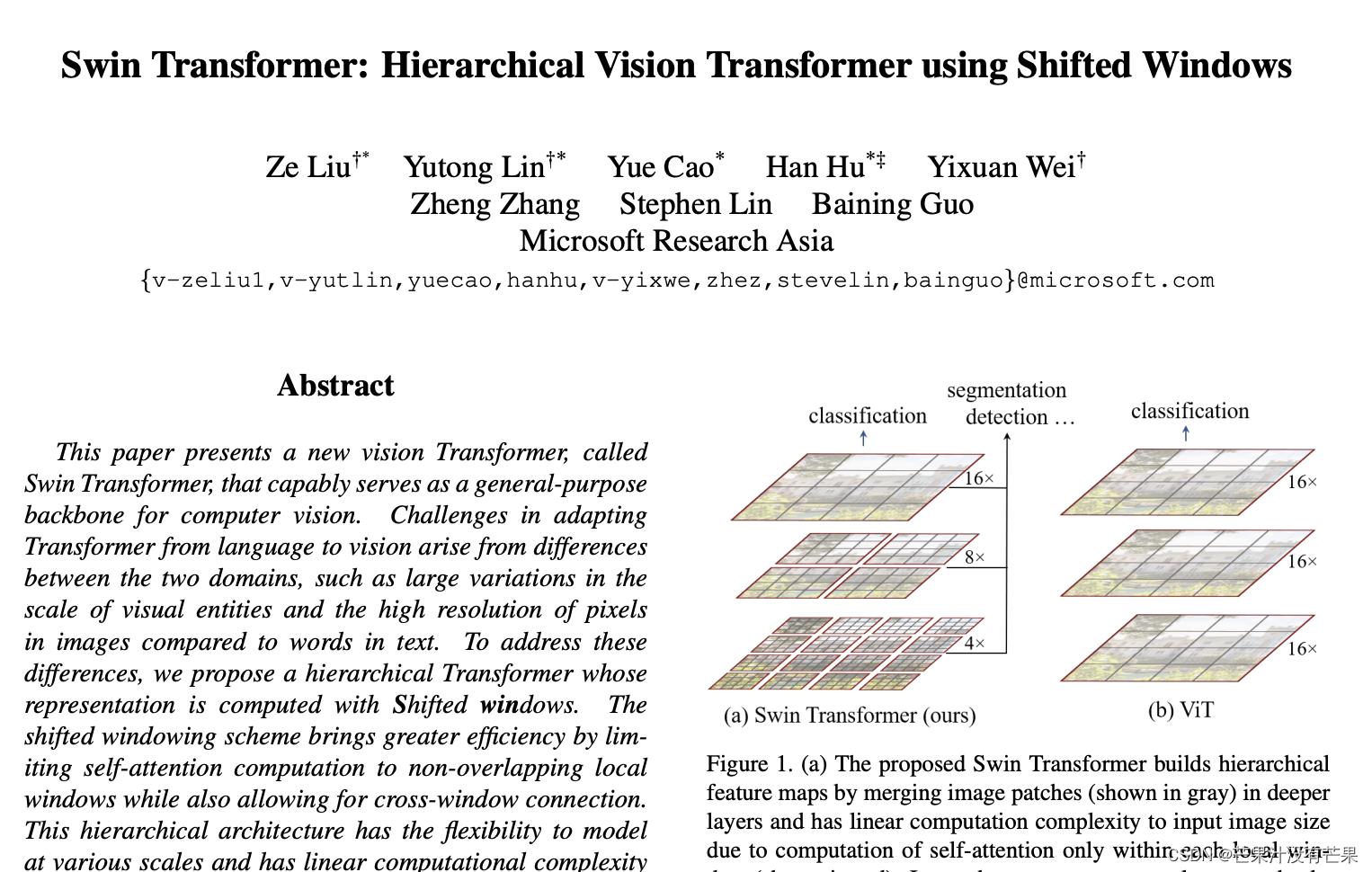

Improving the YOLOv5 series: adding a Swin Transformer small-object detection head

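- 💡 Everything is built on the YOLOv5 code framework; different modules are combined to construct different YOLO object detection models.

- 🌟 This project collects a large number of modifications and lowers the barrier to applying them. The modification points cover [Backbone], [Neck feature fusion], [Detection head], [Attention mechanisms], [IoU loss functions], [NMS], [Loss computation], [Self-attention], [Data augmentation], [Label assignment strategies], [Activation functions], and other parts of the pipeline.

This post is the code walkthrough for "Adding a Swin detection head 🚀".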

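Recommended posts on recent modifications

(🔥 Each post describes several model modifications, all of which apply to both the YOLOv5 series and the YOLOv7 series.)

- 💡🎈☁️ Improving the YOLOv7 series: combining several X-Transformer structures with an added small-object detection layer, so that small objects in YOLO detection tasks have nowhere to hide

- 💡🎈☁️ Improving the YOLOv7 series: combining Adaptively Spatial Feature Fusion to improve the scale invariance of features

- 💡🎈☁️ Improving the YOLOv5 series: combining the Extended efficient Layer Aggregation Networks structure, an efficient aggregation network design that improves performance

- 💡🎈☁️ Improving the YOLOv7 series: combining the CSPNeXt backbone (works with YOLOv7), a high-performance, low-latency backbone for single-stage detectors, with gains verified on the COCO dataset

- 💡🎈☁️ Improving the YOLOv7 series: combining the MOAT vision Transformer, which alternates mobile convolution and attention to build a strong vision model

- 💡🎈☁️ Improving the YOLOv7 series: combining the Centralized Feature Pyramid, with gains verified on the COCO dataset

- 💡🎈☁️ Improving the YOLOv7 series: building the RepLKDeXt structure from RepLKNet | CVPR 2022 very large convolution kernels, up to 31x31

- 💡🎈☁️ Improving the YOLOv5 series: 4. replacing the YOLOv5 backbone with MobileOne, Apple's ultra-lightweight mobile backbone with about 1 ms inference on mobile devices

- 💡🎈☁️ Improving the YOLOv7 series: HorNet applied to YOLOv7 | new HorBc structure, multiple combinations, plug-and-play | backbone with efficient high-order spatial interactions through recursive gated convolutions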


YOLOv5 network

1. Standard YOLOv5s network configuration

    # YOLOv5 🚀 by Ultralytics, GPL-3.0 license

    # Parameters
    nc: 80  # number of classes
    depth_multiple: 0.33  # model depth multiple
    width_multiple: 0.50  # layer channel multiple
    anchors:
      - [10,13, 16,30, 33,23]  # P3/8
      - [30,61, 62,45, 59,119]  # P4/16
      - [116,90, 156,198, 373,326]  # P5/32

    # YOLOv5 v6.0 backbone
    backbone:
      # [from, number, module, args]
      [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
       [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
       [-1, 3, C3, [128]],
       [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
       [-1, 6, C3, [256]],
       [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
       [-1, 9, C3, [512]],
       [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
       [-1, 3, C3, [1024]],
       [-1, 1, SPPF, [1024, 5]],  # 9
      ]

    # YOLOv5 v6.0 head
    head:
      [[-1, 1, Conv, [512, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 6], 1, Concat, [1]],  # cat backbone P4
       [-1, 3, C3, [512, False]],  # 13

       [-1, 1, Conv, [256, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 4], 1, Concat, [1]],  # cat backbone P3
       [-1, 3, C3, [256, False]],  # 17 (P3/8-small)

       [-1, 1, Conv, [256, 3, 2]],
       [[-1, 14], 1, Concat, [1]],  # cat head P4
       [-1, 3, C3, [512, False]],  # 20 (P4/16-medium)

       [-1, 1, Conv, [512, 3, 2]],
       [[-1, 10], 1, Concat, [1]],  # cat head P5
       [-1, 3, C3, [1024, False]],  # 23 (P5/32-large)

       [[17, 20, 23], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
      ]
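To make the two multipliers in this configuration concrete, here is a minimal sketch of how `depth_multiple` and `width_multiple` turn the listed values into the actual YOLOv5s layer sizes. It follows my understanding of the scaling done when YOLOv5 parses a model YAML (repeat counts are rounded and clamped to at least 1, channels are rounded up to a multiple of 8); the function names `make_divisible` and `scale_layer` are used here for illustration only.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scale_layer(number, out_channels, depth_multiple=0.33, width_multiple=0.50):
    """Return (repeats, channels) after applying the two multipliers."""
    # Only layers listed with number > 1 are scaled in depth.
    repeats = max(round(number * depth_multiple), 1) if number > 1 else number
    channels = make_divisible(out_channels * width_multiple, 8)
    return repeats, channels


# C3 entries from the backbone above, as (number, channels) pairs.
for number, channels in [(3, 128), (6, 256), (9, 512), (3, 1024)]:
    print((number, channels), "->", scale_layer(number, channels))

# Each Detect output cell predicts anchors_per_scale * (nc + 5) values.
nc, anchors_per_scale = 80, 3
outputs_per_cell = anchors_per_scale * (nc + 5)
print("Detect outputs per cell:", outputs_per_cell)
```

With the values above, the `[-1, 9, C3, [512]]` entry becomes 3 repeats at 256 channels, which is why the same YAML layout can describe the s, m, l and x models by changing only the two multipliers.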

2. Configuration with the added Swin Transformer small-object detection head

Add a new file, yolov5s6_swin.yaml:

    # YOLOv5 🚀 by Ultralytics, GPL-3.0 license

    # Parameters
    nc: 80  # number of classes
    depth_multiple: 0.33  # model depth multiple
    width_multiple: 0.50  # layer channel multiple
    anchors:
      - [19,27, 44,40, 38,94]  # P3/8
      - [96,68, 86,152, 180,137]  # P4/16
      - [140,301, 303,264, 238,542]  # P5/32
      - [436,615, 739,380, 925,792]  # P6/64

    # YOLOv5 v6.0 backbone
    backbone:
      # [from, number, module, args]
      [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
       [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
       [-1, 3, C3, [128]],
       [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
       [-1, 6, C3, [256]],
       [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
       [-1, 9, C3, [512]],
       [-1, 1, Conv, [768, 3, 2]],  # 7-P5/32
       [-1, 3, C3, [768]],
       [-1, 1, Conv, [1024, 3, 2]],  # 9-P6/64
       [-1, 3, C3, [1024]],
       [-1, 1, SPPF, [1024, 5]],  # 11
      ]

    # YOLOv5 v6.0 head
    head:
      [[-1, 1, Conv, [768, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 8], 1, Concat, [1]],  # cat backbone P5
       [-1, 3, C3, [768, False]],  # 15

       [-1, 1, Conv, [512, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 6], 1, Concat, [1]],  # cat backbone P4
       [-1, 3, C3, [512, False]],  # 19

       [-1, 1, Conv, [256, 1, 1]],
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],
       [[-1, 4], 1, Concat, [1]],  # cat backbone P3
       [-1, 3, C3, [256, False]],  # 23 (P3/8-small)

       [-1, 1, Conv, [256, 3, 2]],
       [[-1, 20], 1, Concat, [1]],  # cat head P4
       [-1, 3, C3, [512, False]],  # 26 (P4/16-medium)

       [-1, 1, Conv, [512, 3, 2]],
       [[-1, 16], 1, Concat, [1]],  # cat head P5
       [-1, 3, C3, [768, False]],  # 29 (P5/32-large)

       [-1, 1, Conv, [768, 3, 2]],
       [[-1, 12], 1, Concat, [1]],  # cat head P6
       [-1, 3, C3STR, [512]],  # 32 (P6/64-xlarge)

       [[23, 26, 29, 32], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5, P6)
      ]
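When layers are added to a config like this one, the absolute indices in the `from` column (8, 6, 4, 20, 16, 12 and the Detect inputs) are easy to get wrong. The sketch below is a small consistency check, not part of YOLOv5: it copies the layer list above into a hypothetical `CFG` list, tracks only the downsampling stride of each layer, and asserts that every Concat joins feature maps of equal stride. It assumes that Conv's third argument is its stride (default 1) and that C3, C3STR and SPPF keep the spatial size.

```python
# (from, module, args) for every layer of yolov5s6_swin.yaml; "number" is omitted.
CFG = [
    # backbone, layers 0-11
    (-1, "Conv", [64, 6, 2, 2]), (-1, "Conv", [128, 3, 2]), (-1, "C3", [128]),
    (-1, "Conv", [256, 3, 2]), (-1, "C3", [256]),
    (-1, "Conv", [512, 3, 2]), (-1, "C3", [512]),
    (-1, "Conv", [768, 3, 2]), (-1, "C3", [768]),
    (-1, "Conv", [1024, 3, 2]), (-1, "C3", [1024]), (-1, "SPPF", [1024, 5]),
    # head, layers 12-33
    (-1, "Conv", [768, 1, 1]), (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 8], "Concat", [1]), (-1, "C3", [768, False]),
    (-1, "Conv", [512, 1, 1]), (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 6], "Concat", [1]), (-1, "C3", [512, False]),
    (-1, "Conv", [256, 1, 1]), (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 4], "Concat", [1]), (-1, "C3", [256, False]),
    (-1, "Conv", [256, 3, 2]), ([-1, 20], "Concat", [1]), (-1, "C3", [512, False]),
    (-1, "Conv", [512, 3, 2]), ([-1, 16], "Concat", [1]), (-1, "C3", [768, False]),
    (-1, "Conv", [768, 3, 2]), ([-1, 12], "Concat", [1]), (-1, "C3STR", [512]),
    ([23, 26, 29, 32], "Detect", ["nc", "anchors"]),
]


def layer_strides(cfg):
    """Return, for each layer, the stride of its output relative to the input image."""
    strides = []
    for i, (frm, module, args) in enumerate(cfg):
        sources = [frm] if isinstance(frm, int) else frm
        inputs = []
        for f in sources:
            idx = i + f if f < 0 else f  # negative values are relative to layer i
            inputs.append(strides[idx] if idx >= 0 else 1)  # before layer 0 is the image
        if module == "Conv":
            out = inputs[0] * (args[2] if len(args) > 2 else 1)
        elif module == "nn.Upsample":
            out = inputs[0] // args[1]
        elif module == "Concat":
            assert len(set(inputs)) == 1, f"layer {i}: mismatched strides {inputs}"
            out = inputs[0]
        elif module == "Detect":
            out = inputs  # one stride per detection scale
        else:  # C3, C3STR, SPPF do not change the spatial size
            out = inputs[0]
        strides.append(out)
    return strides


strides = layer_strides(CFG)
detect_strides = strides[-1]
print("Detect strides:", detect_strides)
```

The check confirms that the four Detect inputs sit at strides 8, 16, 32 and 64, matching the P3 to P6 comments, and that the C3STR layer is the one on the stride-64 branch. At a 1280-pixel input, that branch works on a 20x20 grid.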

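3. Core code

See this author's earlier post: 👉 Improving the YOLOv5 series: 3. YOLOv5 combined with the Swin Transformer structure (ICCV 2021 best paper, a hierarchical vision Transformer using Shifted Windows)

The code below is from the common.py part of that post: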

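    # Goes into models/common.py, which already imports torch and torch.nn as nn
    # and defines Conv. SwinTransformerLayer is defined in the referenced post.
    class SwinTransformerBlock(nn.Module):
        def __init__(self, c1, c2, num_heads, num_layers, window_size=8):
            super().__init__()
            # Project to c2 channels only when the input width differs.
            self.conv = None
            if c1 != c2:
                self.conv = Conv(c1, c2)

            # remove input_resolution
            # Even layers use regular windows, odd layers use shifted windows.
            self.blocks = nn.Sequential(*[
                SwinTransformerLayer(dim=c2, num_heads=num_heads, window_size=window_size,
                                     shift_size=0 if (i % 2 == 0) else window_size // 2)
                for i in range(num_layers)])

        def forward(self, x):
            if self.conv is not None:
                x = self.conv(x)
            x = self.blocks(x)
            return x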
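The `shift_size` expression in `SwinTransformerBlock` alternates between regular and shifted windows from one layer to the next. As a small illustration (the helper name `shift_schedule` is made up for this example), the per-layer shift values can be listed like this:

```python
def shift_schedule(num_layers, window_size=8):
    """Shift size per layer: 0 for even layers, half a window for odd layers."""
    return [0 if i % 2 == 0 else window_size // 2 for i in range(num_layers)]


print(shift_schedule(4))     # window_size=8
print(shift_schedule(3, 7))  # odd window sizes round the shift down
```

With the default window size of 8, odd-numbered layers shift the windows by 4 positions, so tokens that sat on a window border in one layer fall inside a window in the next.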

    class WindowAttention(nn.Module):
        def __init__(self, dim, window_size, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0., proj_drop=0.):
            super().__init__()
            self.dim = dim
            self.window_size = window_size  # Wh, Ww
            self.num_heads = num_heads
            head_dim = dim // num_heads
            self.scale = qk_scale or head_dim ** -0.5

            # define a parameter table of relative position bias
            self.relative_position_bias_table = nn.Parameter(
                torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

            # get pair-wise relative position index for each token inside the window
            coords_h = torch.arange(self.window_size[0])
            coords_w = torch.arange(self.window_size[1])
            coords = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, Wh, Ww
            coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
            relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
            relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
            relative_coords[:, :, 0] += self.window_size[0] - 1  # shift to start from 0
            relative_coords[:, :, 1] += self.window_size[1] - 1
            relative_coords[:, :, 0] *= 2 * self.window_size[1] - 1
            relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
            self.register_buffer("relative_position_index", relative_position_index)

            self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
            self.attn_drop = nn.Dropout(attn_drop)
            self.proj = nn.Linear(dim, dim)
            self.proj_drop = nn.Dropout(proj_drop)

            nn.init.normal_(self.relative_position_bias_table, std=.02)
            self.softmax = nn.Softmax(dim=-1)

        def forward(self, x, mask=None):
            B_, N, C = x.shape
            # 3, B_, num_heads, N, C // num_heads
            qkv = self.qkv(x).reshape(B_, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
            q, k, v = qkv[0], qkv[1], qkv[2]  # each: B_, num_heads, N, C // num_heads
            # (the rest of forward() is not part of this listing)
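The relative position index built in `WindowAttention.__init__` is easier to follow on a tiny window. The sketch below recomputes the same index in plain Python, without torch, for a 2x2 window. The function name `relative_position_index` mirrors the buffer name, but the loop-based formulation is my own restatement of the tensor code, not the author's implementation.

```python
def relative_position_index(wh, ww):
    """Index into the (2*wh-1)*(2*ww-1) bias table for every ordered token pair."""
    # Tokens in row-major order, matching torch.flatten on the (h, w) grid.
    coords = [(h, w) for h in range(wh) for w in range(ww)]
    index = []
    for h1, w1 in coords:
        row = []
        for h2, w2 in coords:
            dh = h1 - h2 + wh - 1  # shift row offset to start from 0
            dw = w1 - w2 + ww - 1  # shift column offset to start from 0
            row.append(dh * (2 * ww - 1) + dw)  # flatten the 2-D offset to 1-D
        index.append(row)
    return index


idx = relative_position_index(2, 2)
for row in idx:
    print(row)
```

For the 2x2 window the table has (2*2-1)*(2*2-1) = 9 entries, and the index values range from 0 to 8. Token pairs with the same relative offset, such as every token paired with itself, share the same entry (4 here), which is what lets a small learned table cover all Wh*Ww by Wh*Ww pairs.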