Trying out Apache Flink with docker-compose

Posted by rongfengliang-荣锋亮


Apache Flink is a stream processing framework. An official Docker image is available, along with instructions for running it with docker-compose.

docker-compose file

version: "2.1"

services:

jobmanager:

image: flink

expose:

- "6123"

ports:

- "8081:8081"

command: jobmanager

environment:

- JOB_MANAGER_RPC_ADDRESS=jobmanager

taskmanager:

image: flink

expose:

- "6121"

- "6122"

depends_on:

- jobmanager

command: taskmanager

links:

- "jobmanager:jobmanager"

environment:

- JOB_MANAGER_RPC_ADDRESS=jobmanager运行

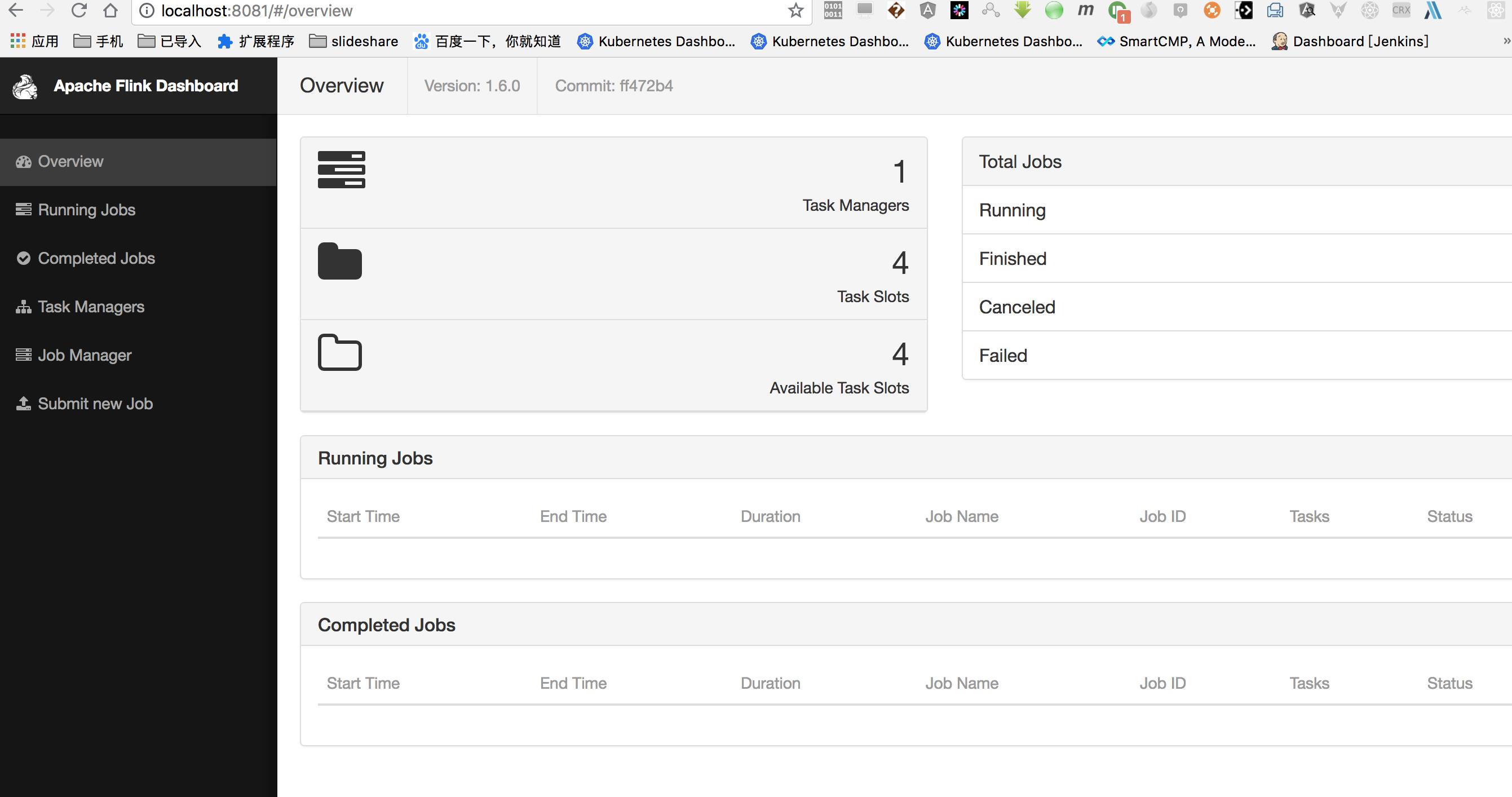

docker-compose up -d效果
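Besides opening the web UI on the published port 8081, the cluster can be checked from code. The following is a minimal sketch, assuming Java 11 or later and that the JobManager's REST endpoint `/overview` is reachable on `localhost:8081` as published by the compose file above. The class name `FlinkOverviewCheck` and its helper method are made up for this illustration.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

public class FlinkOverviewCheck {

    // Hypothetical helper: builds the URI of the cluster overview endpoint.
    static URI overviewUri(String host, int port) {
        return URI.create("http://" + host + ":" + port + "/overview");
    }

    public static void main(String[] args) {
        HttpClient client = HttpClient.newBuilder()
                .connectTimeout(Duration.ofSeconds(5))
                .build();
        // Assumption: port 8081 is the one mapped in the compose file.
        HttpRequest request = HttpRequest.newBuilder(overviewUri("localhost", 8081))
                .timeout(Duration.ofSeconds(5))
                .GET()
                .build();
        try {
            HttpResponse<String> response =
                    client.send(request, HttpResponse.BodyHandlers.ofString());
            // The body is a JSON document describing the cluster.
            System.out.println("HTTP " + response.statusCode());
            System.out.println(response.body());
        } catch (IOException | InterruptedException e) {
            // Typically means the containers are not up yet.
            System.out.println("Cluster not reachable: " + e);
        }
    }
}
```

If the request fails right after `docker-compose up -d`, waiting a few seconds and retrying is usually enough, since the containers need a moment to start.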

Writing a simple job

Using Maven

- Generate the scaffold

Enter the groupId and other details when prompted.

    mvn archetype:generate \
        -DarchetypeGroupId=org.apache.flink \
        -DarchetypeArtifactId=flink-quickstart-java \
        -DarchetypeVersion=1.6.0

- Modify the code

Default project structure

    ├── pom.xml
    └── src
        └── main
            ├── java
            │   └── com
            │       └── dalong
            │           └── app
            │               ├── BatchJob.java
            │               └── StreamingJob.java
            └── resources
                └── log4j.properties

Add a word-count batch job

Project structure after the change

    ├── pom.xml
    └── src
        └── main
            ├── java
            │   └── com
            │       └── dalong
            │           └── app
            │               ├── BatchJob.java
            │               ├── StreamingJob.java
            │               └── util
            │                   └── WordCountData.java
            └── resources
                └── log4j.properties

Code walkthrough:

BatchJob.java

    package com.dalong.app;

    import org.apache.flink.api.common.functions.FlatMapFunction;
    import org.apache.flink.api.common.functions.ReduceFunction;
    import org.apache.flink.api.java.DataSet;
    import org.apache.flink.api.java.ExecutionEnvironment;
    import org.apache.flink.api.java.utils.ParameterTool;
    import org.apache.flink.core.fs.FileSystem.WriteMode;
    import com.dalong.app.util.WordCountData;
    import org.apache.flink.util.Collector;

    public class BatchJob {

        public static class Word {

            // fields
            private String word;
            private int frequency;

            // constructors
            public Word() {}

            public Word(String word, int i) {
                this.word = word;
                this.frequency = i;
            }

            // getters setters
            public String getWord() {
                return word;
            }

            public void setWord(String word) {
                this.word = word;
            }

            public int getFrequency() {
                return frequency;
            }

            public void setFrequency(int frequency) {
                this.frequency = frequency;
            }

            @Override
            public String toString() {
                return "Word=" + word + " freq=" + frequency;
            }
        }

        public static void main(String[] args) throws Exception {

            final ParameterTool params = ParameterTool.fromArgs(args);

            // set up the execution environment
            final ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

            // make parameters available in the web interface
            env.getConfig().setGlobalJobParameters(params);

            // get input data
            DataSet<String> text;
            if (params.has("input")) {
                // read the text file from given input path
                text = env.readTextFile(params.get("input"));
            } else {
                // get default test text data
                System.out.println("Executing WordCount example with default input data set.");
                System.out.println("Use --input to specify file input.");
                text = WordCountData.getDefaultTextLineDataSet(env);
            }

            DataSet<Word> counts =
                // split up the lines into Word objects (with frequency = 1)
                text.flatMap(new Tokenizer())
                    // group by the field word and sum up the frequency
                    .groupBy("word")
                    .reduce(new ReduceFunction<Word>() {
                        @Override
                        public Word reduce(Word value1, Word value2) throws Exception {
                            return new Word(value1.word, value1.frequency + value2.frequency);
                        }
                    });

            if (params.has("output")) {
                counts.writeAsText(params.get("output"), WriteMode.OVERWRITE);
                // execute program
                env.execute("WordCount-Pojo Example");
            } else {
                System.out.println("Printing result to stdout. Use --output to specify output path.");
                // print() triggers execution itself, so no env.execute() call is needed here
                counts.print();
            }
        }

        public static final class Tokenizer implements FlatMapFunction<String, Word> {

            @Override
            public void flatMap(String value, Collector<Word> out) {
                // normalize and split the line on runs of non-word characters
                String[] tokens = value.toLowerCase().split("\\W+");

                // emit one Word per non-empty token
                for (String token : tokens) {
                    if (token.length() > 0) {
                        out.collect(new Word(token, 1));
                    }
                }
            }
        }
    }
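To make the `flatMap` / `groupBy` / `reduce` pipeline easier to follow, here is a plain-Java sketch of the same computation without Flink. It is an illustration of what the job computes, not of how Flink executes it. The class `WordCountSketch` and the method `countWords` are names invented for this example.

```java
import java.util.Map;
import java.util.TreeMap;

public class WordCountSketch {

    // Hypothetical helper mirroring the job's logic on an in-memory input.
    static Map<String, Integer> countWords(String... lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            // Same normalization as Tokenizer: lowercase, split on non-word runs.
            for (String token : line.toLowerCase().split("\\W+")) {
                if (!token.isEmpty()) {
                    // Plays the role of groupBy("word") followed by the summing reduce.
                    counts.merge(token, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, Integer> counts =
                countWords("To be, or not to be,--that is the question:--");
        // Printed in the same shape as Word.toString() in the job.
        counts.forEach((word, freq) -> System.out.println("Word=" + word + " freq=" + freq));
    }
}
```

For the single input line used here, `to` and `be` each end up with a frequency of 2 and every other word with 1. The Flink job produces the same kind of per-word totals, only distributed across the TaskManagers.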

util/WordCountData.java

    package com.dalong.app.util;

    import org.apache.flink.api.java.DataSet;
    import org.apache.flink.api.java.ExecutionEnvironment;

    public class WordCountData {

        public static final String[] WORDS = new String[] {
            "To be, or not to be,--that is the question:--",
            "Whether 'tis nobler in the mind to suffer",
            "The slings and arrows of outrageous fortune",
            "Or to take arms against a sea of troubles,",
            "And by opposing end them?--To die,--to sleep,--",
            "No more; and by a sleep to say we end",
            "The heartache, and the thousand natural shocks",
            "That flesh is heir to,--'tis a consummation",
            "Devoutly to be wish'd. To die,--to sleep;--",
            "To sleep! perchance to dream:--ay, there's the rub;",
            "For in that sleep of death what dreams may come,",
            "When we have shuffled off this mortal coil,",
            "Must give us pause: there's the respect",
            "That makes calamity of so long life;",
            "For who would bear the whips and scorns of time,",
            "The oppressor's wrong, the proud man's contumely,",
            "The pangs of despis'd love, the law's delay,",
            "The insolence of office, and the spurns",
            "That patient merit of the unworthy takes,",
            "When he himself might his quietus make",
            "With a bare bodkin? who would these fardels bear,",
            "To grunt and sweat under a weary life,",
            "But that the dread of something after death,--",
            "The undiscover'd country, from whose bourn",
            "No traveller returns,--puzzles the will,",
            "And makes us rather bear those ills we have",
            "Than fly to others that we know not of?",
            "Thus conscience does make cowards of us all;",
            "And thus the native hue of resolution",
            "Is sicklied o'er with the pale cast of thought;",
            "And enterprises of great pith and moment,",
            "With this regard, their currents turn awry,",
            "And lose the name of action.--Soft you now!",
            "The fair Ophelia!--Nymph, in thy orisons",
            "Be all my sins remember'd."
        };

        public static DataSet<String> getDefaultTextLineDataSet(ExecutionEnvironment env) {
            return env.fromElements(WORDS);
        }
    }

- 构建

cd flink-app

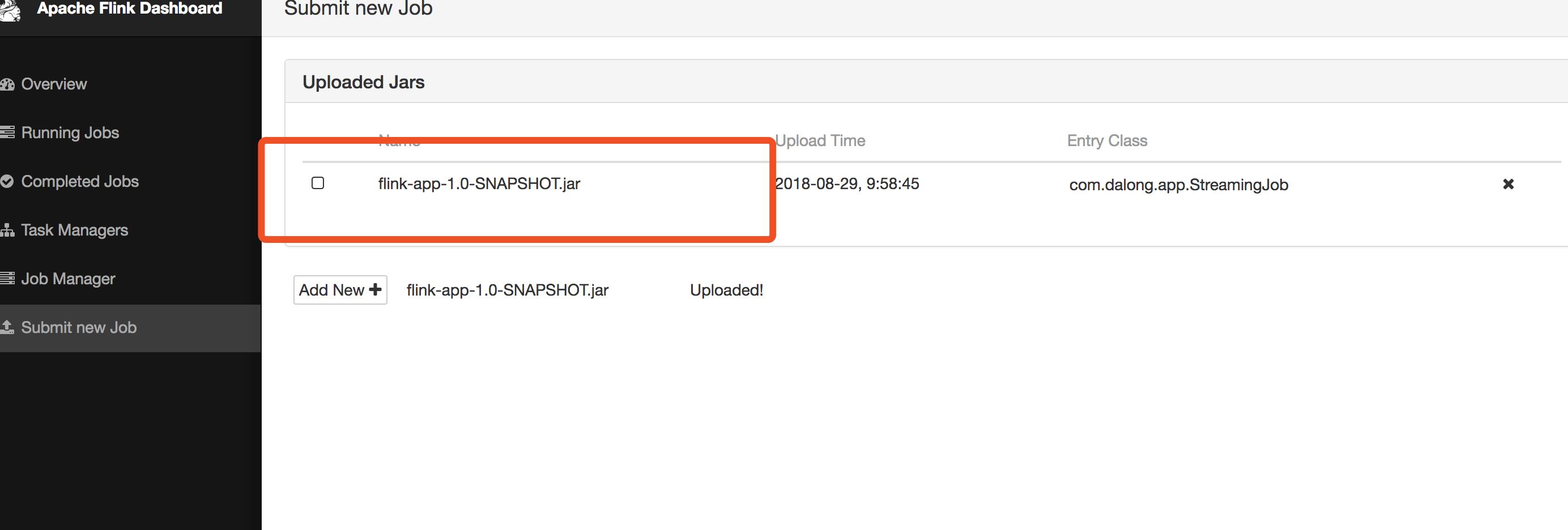

mvn clean package- 提交job

生成的代码很简单

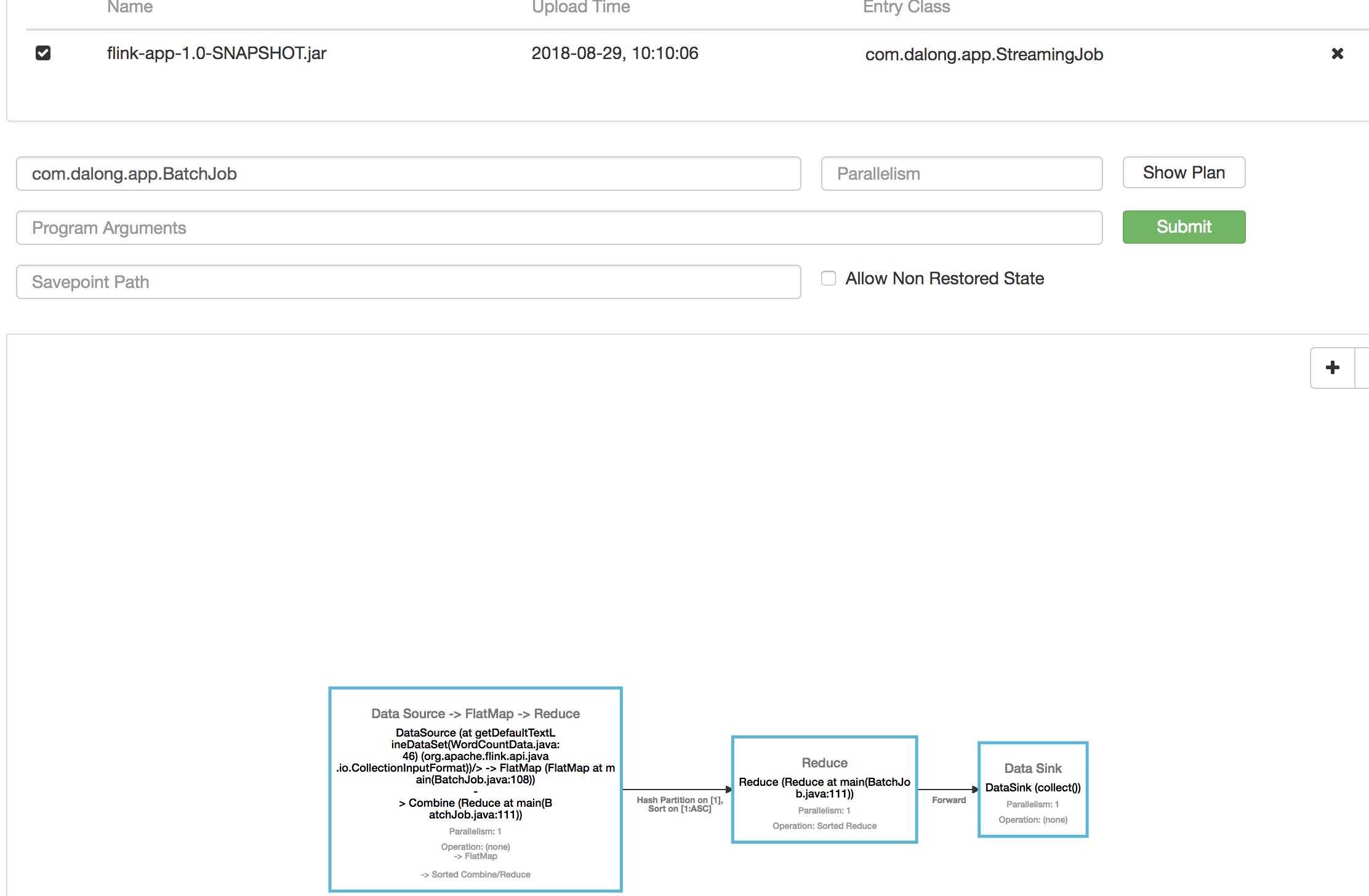

- 运行job

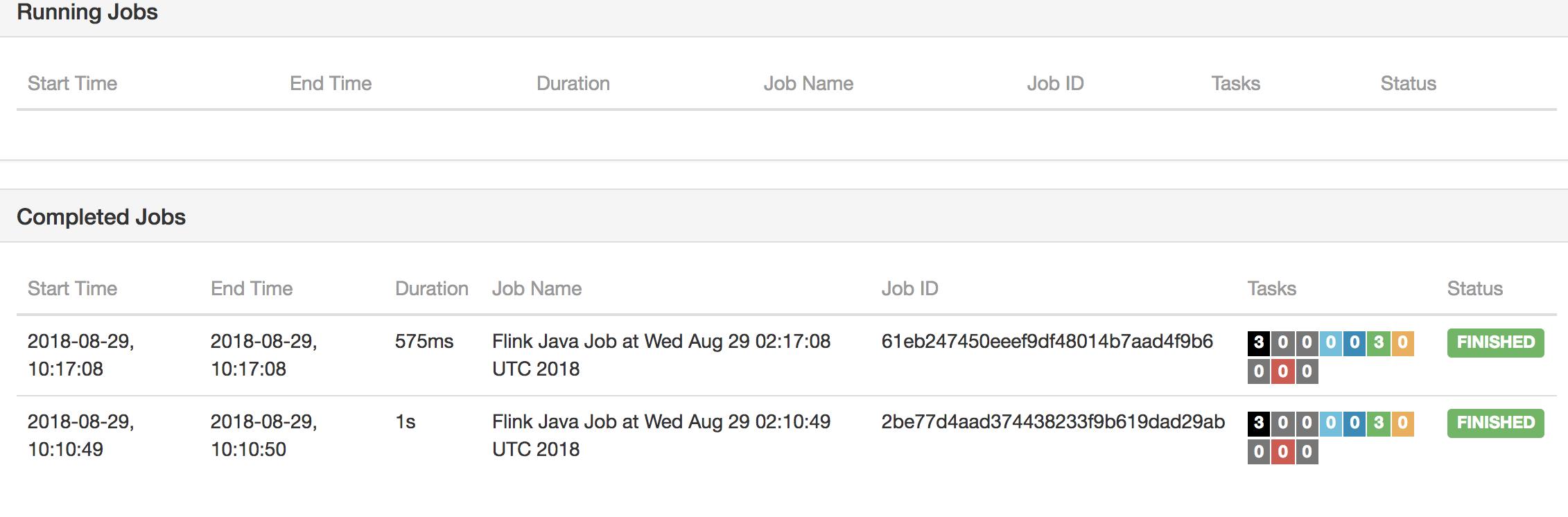

- 效果
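When the job is run, `BatchJob` looks for the optional program arguments `--input` and `--output` through `ParameterTool`. The sketch below approximates in plain Java how such `--key value` pairs turn into a lookup table. It is a simplified stand-in, not Flink's implementation, and the class `ArgsSketch`, the method `parse`, and the file paths are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class ArgsSketch {

    // Hypothetical helper: collects "--key value" pairs into a map.
    // A key without a following value is stored with an empty string.
    static Map<String, String> parse(String[] args) {
        Map<String, String> params = new LinkedHashMap<>();
        for (int i = 0; i < args.length; i++) {
            if (args[i].startsWith("--")) {
                String key = args[i].substring(2);
                boolean hasValue = i + 1 < args.length && !args[i + 1].startsWith("--");
                params.put(key, hasValue ? args[++i] : "");
            }
        }
        return params;
    }

    public static void main(String[] args) {
        // Example paths only; any readable input file and writable output path would do.
        Map<String, String> params = parse(new String[] {
            "--input", "/tmp/words.txt", "--output", "/tmp/counts"
        });
        // Mirrors the two branches in BatchJob.main.
        System.out.println(params.containsKey("input")
                ? "reading from " + params.get("input")
                : "using the default data set");
        System.out.println(params.containsKey("output")
                ? "writing to " + params.get("output")
                : "printing to stdout");
    }
}
```

With neither argument supplied, the job falls back to the built-in text from `WordCountData` and prints the counts to stdout.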

References

https://hub.docker.com/r/library/flink/

https://github.com/apache/flink/blob/master/flink-examples/flink-examples-batch/src/main/java/org/apache/flink/examples/java/wordcount/

https://github.com/rongfengliang/flink-docker-compose-demo
