Introduction to K8S + Deploying a K8S Cluster on CentOS 7

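1. Preface

- Kubernetes (K8S for short) is an open-source container cluster management system that automates the deployment, scaling, and maintenance of container clusters. It is both a container orchestration tool and a leading distributed-architecture solution built on container technology. Building on Docker, it gives containerized applications deployment, resource scheduling, service discovery, and dynamic scaling, which makes large container clusters much easier to manage. [In short: Kubernetes is a container cluster management tool.]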

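2. Kubernetes Architecture Diagram

3. Key Concepts

3.1 cluster

- A cluster is the collection of compute, storage, and network resources that k8s uses to run container-based applications.

3.2 master

- The master is the brain of the cluster. Its main job is scheduling, that is, deciding where each application runs. The master runs on Linux and can be a physical or a virtual machine. For high availability, several masters can be run.

3.3 node

- A node runs the containerized applications. Nodes are managed by the master; each node monitors and reports container status and manages container lifecycles as the master directs. Nodes run on Linux and can be physical or virtual machines.

3.4 pod

- A pod is the smallest unit of work in k8s. Each pod contains one or more containers, and the containers in a pod are scheduled by the master onto a single node as one unit.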

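3.5 controller

- k8s usually does not create pods directly; it manages them through controllers. A controller defines how pods are deployed, for example how many replicas there are and what kind of node they run on. To cover different workloads, k8s provides several controllers, including deployment, replicaset, daemonset, statefulset, and job.

3.6 deployment

- The most commonly used controller. A deployment manages multiple replicas of a pod and keeps them running in the desired state.

3.7 replicaset

- Implements multi-replica management of pods. A replicaset is created automatically when you use a deployment; in other words, a deployment manages its pod replicas through a replicaset, so you rarely need to use a replicaset directly.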
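To make the relationship between a deployment, its replicaset, and its pods more concrete, here is a minimal sketch that writes a Deployment manifest to a scratch directory. The name `web-demo`, the label `app: web-demo`, and the `nginx` image are illustrative assumptions and do not come from this article.

```shell
# Write a minimal Deployment manifest to a scratch directory.
# The name, label, and image are illustrative assumptions.
manifest_dir=$(mktemp -d)
cat > "$manifest_dir/web-demo-deployment.yaml" <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-demo
  labels:
    app: web-demo
spec:
  replicas: 3                # desired number of pod replicas
  selector:
    matchLabels:
      app: web-demo          # must match the pod template labels below
  template:
    metadata:
      labels:
        app: web-demo
    spec:
      containers:
      - name: web
        image: nginx
EOF

echo "manifest written to $manifest_dir/web-demo-deployment.yaml"
# On a working cluster it could then be applied and inspected with:
#   kubectl apply -f "$manifest_dir/web-demo-deployment.yaml"
#   kubectl get deployment,replicaset,pod -l app=web-demo
```

Listing the three resource types together should show one deployment, one replicaset created on its behalf, and the pods owned by that replicaset.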

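3.8 daemonset

- Used when each node should run at most one replica of a pod. As the name suggests, a daemonset is typically used to run daemons.

3.9 statefulset

- Guarantees that each pod replica keeps the same name for its whole lifetime, which the other controllers do not. With the other controllers, a pod that fails and is deleted and restarted comes back under a different name. A statefulset also ensures that replicas are started, updated, and deleted in a fixed order.

3.10 job

- Used for applications that run to completion and are then removed, whereas pods under the other controllers normally run continuously.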

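3.11 service

- A k8s service defines how the outside world reaches a specific group of pods. A service has its own IP and port and load-balances across its pods.

- Running pods and giving access to them are two separate jobs in k8s, handled by controllers and services respectively.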
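As a rough illustration of how a service picks its pods, the sketch below writes a Service manifest that selects pods by label. It assumes pods labeled `app: web-demo` that listen on port 80; both the label and the port are hypothetical and not taken from this article.

```shell
# Write a minimal Service manifest to a scratch directory.
# The name, label, and ports are illustrative assumptions.
svc_dir=$(mktemp -d)
cat > "$svc_dir/web-demo-service.yaml" <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: web-demo
spec:
  selector:
    app: web-demo      # traffic is load-balanced across pods carrying this label
  ports:
  - port: 80           # port exposed on the service IP
    targetPort: 80     # port the containers listen on
EOF

echo "manifest written to $svc_dir/web-demo-service.yaml"
# On a working cluster:
#   kubectl apply -f "$svc_dir/web-demo-service.yaml"
#   kubectl get service web-demo
```

The controller decides which pods exist; the service only selects whatever pods currently match its label selector, which is why the two can be changed independently.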

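3.12 namespace

- A namespace logically divides one physical cluster into several virtual clusters; each virtual cluster is a namespace. Resources in different namespaces are logically isolated from one another: a name only has to be unique within its namespace, although pod network traffic is not blocked across namespaces by default.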

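4. Installing and Deploying the K8S Cluster

Note: steps 2 through 9 (sections 4.2 to 4.9) must be run on every machine; steps 10 and 11 (sections 4.10 and 4.11) run on the master; step 12 runs on the worker nodes.

If step 10, 11, or 12 fails, run kubeadm reset to clean up the environment and start over.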

4.1 Machine preparation

| IP address | hostname |
| --- | --- |
| 192.168.182.135 | k8s-master |
| 192.168.182.136 | k8s-node1 |
| 192.168.182.137 | k8s-node2 |

4.2 Disable the firewall

- systemctl stop firewalld

- systemctl disable firewalld

4.3 Disable SELinux

- setenforce 0 # temporary, until the next reboot

- sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config # permanent

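4.4 Disable swap

- swapoff -a # temporary; swap is turned off mainly for performance, and by default kubelet refuses to start while swap is enabled

- free # check that swap is off (the Swap row should show 0)

- sed -ri 's/.*swap.*/#&/' /etc/fstab # permanent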
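Before editing the real `/etc/fstab`, it can help to preview the substitution on a copy. The sketch below uses a made-up sample file (the device names are assumptions) and a slightly stricter variant of the expression that skips lines that are already commented, so running it twice does not stack up `#` characters.

```shell
# Work on a throwaway copy so the real /etc/fstab is never touched.
workdir=$(mktemp -d)
cat > "$workdir/fstab" <<'EOF'
/dev/mapper/centos-root /    xfs  defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
#/dev/sdb1              swap swap defaults 0 0
EOF

# Comment out active swap entries only; lines already starting with '#' are skipped.
sed -r '/^[[:space:]]*#/!s/.*swap.*/#&/' "$workdir/fstab" > "$workdir/fstab.new"

# Print the result for review.
cat "$workdir/fstab.new"
```

Once the preview looks right, the same expression can be run with `-i` against `/etc/fstab` as root.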

4.5 Map hostnames to IP addresses

- $ vim /etc/hosts

- Add the following entries:

192.168.182.135 k8s-master
192.168.182.136 k8s-node1
192.168.182.137 k8s-node2
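If this step is scripted rather than done in an editor, appending blindly would duplicate entries on every run. A minimal sketch of an idempotent alternative follows; the helper name `add_host_entry` is hypothetical, and the demo writes to a scratch file instead of `/etc/hosts`.

```shell
# Append "IP hostname" to a hosts file only if the hostname is not already listed.
# Arguments: hosts file path, IP address, hostname.
add_host_entry() {
  hosts_file=$1
  entry_ip=$2
  entry_name=$3
  if ! grep -qE "[[:space:]]${entry_name}([[:space:]]|\$)" "$hosts_file"; then
    printf '%s %s\n' "$entry_ip" "$entry_name" >> "$hosts_file"
  fi
}

# Demonstrate on a scratch file; pass /etc/hosts (as root) to apply it for real.
hosts_copy=$(mktemp)
for pass in 1 2; do   # the second pass shows that nothing is duplicated
  add_host_entry "$hosts_copy" 192.168.182.135 k8s-master
  add_host_entry "$hosts_copy" 192.168.182.136 k8s-node1
  add_host_entry "$hosts_copy" 192.168.182.137 k8s-node2
done
cat "$hosts_copy"
```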

Set the hostname

k8s-master:

[root@localhost ~]# hostname
localhost.localdomain
[root@localhost ~]# hostname k8s-master ## temporary, lost on reboot
[root@localhost ~]# hostnamectl set-hostname k8s-master ## persistent across reboots

k8s-node1:

[root@localhost ~]# hostname
localhost.localdomain
[root@localhost ~]# hostname k8s-node1 ## temporary, lost on reboot
[root@localhost ~]# hostnamectl set-hostname k8s-node1 ## persistent across reboots

k8s-node2:

[root@localhost ~]# hostname
localhost.localdomain
[root@localhost ~]# hostname k8s-node2 ## temporary, lost on reboot
[root@localhost ~]# hostnamectl set-hostname k8s-node2 ## persistent across reboots

4.6 Pass bridged IPv4 traffic to the iptables chains

$ cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
$ sysctl --system
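The `net.bridge.*` keys only exist while the `br_netfilter` kernel module is loaded, so `sysctl --system` may report them as missing on a fresh machine. The following sketch checks for the keys; the helper name `bridge_keys_present` is hypothetical, and its optional argument exists only so the check can be pointed at a test directory.

```shell
# Return success if both bridge netfilter keys exist under the given
# procfs-style root (defaults to the real /proc/sys).
bridge_keys_present() {
  sys_root=${1:-/proc/sys}
  [ -e "$sys_root/net/bridge/bridge-nf-call-iptables" ] &&
    [ -e "$sys_root/net/bridge/bridge-nf-call-ip6tables" ]
}

if bridge_keys_present; then
  echo "bridge sysctl keys are available"
else
  echo "bridge sysctl keys not found; loading br_netfilter may be needed:"
  echo "  modprobe br_netfilter && sysctl --system"
fi
```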

4.7 Install Docker

# Add the Docker yum repository
$ wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# Install
$ yum -y install docker-ce

# Start on boot
$ systemctl enable docker

# Start Docker
$ systemctl start docker

4.8 Add the Aliyun YUM repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[k8s]
name=k8s
enabled=1
gpgcheck=0
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
EOF

4.9 Install kubeadm, kubelet, and kubectl

kubelet # runs on every node in the cluster and starts pods and containers.

kubeadm # initializes the cluster.

kubectl # the Kubernetes command-line tool, used to deploy and manage applications, inspect resources, and create, delete, and update components.

- The master and worker nodes must run the same Kubernetes version; mismatched versions lead to hard-to-diagnose problems.

- With yum on CentOS, a specific version can be installed by appending it to each package name:

- yum install -y kubelet-<version> kubectl-<version> kubeadm-<version>

# Here the latest version is installed
$ yum install kubelet kubeadm kubectl -y

# Versions installed at the time of writing: kubeadm.x86_64 0:1.17.0-0; kubectl.x86_64 0:1.17.0-0; kubelet.x86_64 0:1.17.0-0
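Because installing "the latest" gives different results over time, pinning the version keeps all nodes consistent. The sketch below only builds and prints the pinned install command; the `K8S_VERSION` variable and the `pinned_packages` helper are hypothetical names, and 1.17.0 is used because it is the version this article ended up with.

```shell
# Version to pin; 1.17.0 matches the version installed in this article.
K8S_VERSION=${K8S_VERSION:-1.17.0}

# Print the three package names with the version appended.
pinned_packages() {
  echo "kubelet-$1 kubeadm-$1 kubectl-$1"
}

install_cmd="yum install -y $(pinned_packages "$K8S_VERSION")"
echo "$install_cmd"

# Available versions in the configured repository can be listed with:
#   yum list kubeadm --showduplicates
```

Run the printed command as root on every node so that the master and workers end up on the same version.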

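Enable kubelet

# kubelet cannot be started yet because its configuration does not exist until kubeadm init (or kubeadm join) has run; for now, only enable it at boot
$ systemctl enable kubelet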

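4.10 Deploy the Kubernetes master

1) Initialize with kubeadm

$ kubeadm init \
--apiserver-advertise-address=192.168.182.135 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.17.0 \
--service-cidr=10.1.0.0/16 \
--pod-network-cidr=10.244.0.0/16

# --image-repository string: where images are pulled from (available since v1.13). The default is k8s.gcr.io; here it is set to a mirror reachable from mainland China: registry.aliyuncs.com/google_containers

# --kubernetes-version string: the Kubernetes version. The default, stable-1, makes kubeadm fetch the latest version number from https://dl.k8s.io/release/stable-1.txt; pinning a fixed version (v1.17.0 here) skips that network request.

# --apiserver-advertise-address: which interface on the master is used to talk to the other nodes in the cluster. If the master has several interfaces, specify this explicitly; otherwise kubeadm picks the interface that has the default gateway.

# --pod-network-cidr: the pod network range. Kubernetes supports several network add-ons, and each has its own requirements for --pod-network-cidr. It is set to 10.244.0.0/16 here because that is the network configured in the flannel manifest used in section 4.11, and the two must match.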
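The same settings can also be kept in a kubeadm configuration file and passed with `kubeadm init --config`, which makes them easier to review and version. The sketch below is an assumption-laden equivalent of the flags above, written for the `kubeadm.k8s.io/v1beta2` config API that kubeadm v1.17 accepts; the file name is hypothetical, and the file is written to a scratch directory rather than applied.

```shell
# Write a kubeadm config file that mirrors the command-line flags above.
# The file name is hypothetical; nothing is applied to the system here.
cfg_dir=$(mktemp -d)
cat > "$cfg_dir/kubeadm-config.yaml" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.182.135   # --apiserver-advertise-address
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.17.0            # --kubernetes-version
imageRepository: registry.aliyuncs.com/google_containers   # --image-repository
networking:
  serviceSubnet: 10.1.0.0/16          # --service-cidr
  podSubnet: 10.244.0.0/16            # --pod-network-cidr
EOF

echo "config written to $cfg_dir/kubeadm-config.yaml"
# On the master it would then be used as:
#   kubeadm init --config "$cfg_dir/kubeadm-config.yaml"
```

Use either the flags or the config file for these settings, not both at once.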

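Notes:

- At least 2 CPUs and 2 GB of RAM are recommended. This is not a hard requirement; a cluster can also be built with 1 CPU and 1 GB, but:

- With 1 CPU, initializing the master reports [WARNING NumCPU]: the number of available CPUs 1 is less than the required 2

- Deploying add-ons or pods may report warning: FailedScheduling: Insufficient cpu, Insufficient memory

- If you see these messages, the master VM has only 1 CPU core; increase the number of cores assigned to it.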
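The resource recommendation above can be checked before running `kubeadm init`. This is a minimal sketch, not kubeadm's own preflight logic: the helper name `meets_minimum` is hypothetical, and the default memory threshold of 1740800 kB (1700 MiB) is an assumption chosen because a VM with 2 GB assigned reports somewhat less than that in `MemTotal`.

```shell
# Succeed only if the CPU count and memory (in kB) meet the given minimums.
# Arguments: cpu count, memory in kB, [minimum cpus], [minimum memory in kB].
meets_minimum() {
  have_cpus=$1
  have_mem_kb=$2
  min_cpus=${3:-2}
  min_mem_kb=${4:-1740800}   # assumption: about 1.7 GiB, see the note above
  [ "$have_cpus" -ge "$min_cpus" ] && [ "$have_mem_kb" -ge "$min_mem_kb" ]
}

host_cpus=$(nproc)
host_mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)

if meets_minimum "$host_cpus" "$host_mem_kb"; then
  echo "ok: $host_cpus CPUs, $host_mem_kb kB RAM"
else
  echo "warning: $host_cpus CPUs, $host_mem_kb kB RAM is below the suggested 2 CPUs / 2 GB"
fi
```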

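Initialization finishes with output like this:

# Run this on the other nodes. After a reset the token and hash are different, so use the values printed by your own kubeadm init (node)
kubeadm join 192.168.182.135:6443 --token 7rpjfp.n3vg39zrgstzr0rs \
--discovery-token-ca-cert-hash sha256:8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234

$ docker images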

2) Set up kubectl

Copy and run the following commands (master)

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

kubectl can now be used directly (master)

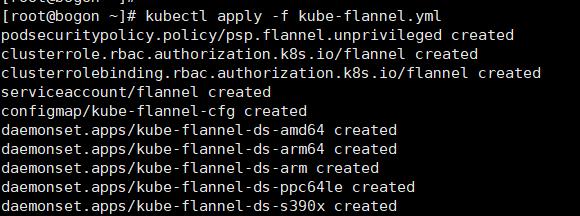

4.11 Install a pod network add-on (CNI) (master)

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Or:

kubectl apply -f kube-flannel.yml

$ cat kube-flannel.yml

--- apiVersion: policy/v1beta1 kind: PodSecurityPolicy metadata: name: psp.flannel.unprivileged annotations: seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default spec: privileged: false volumes: - configMap - secret - emptyDir - hostPath allowedHostPaths: - pathPrefix: "/etc/cni/net.d" - pathPrefix: "/etc/kube-flannel" - pathPrefix: "/run/flannel" readOnlyRootFilesystem: false # Users and groups runAsUser: rule: RunAsAny supplementalGroups: rule: RunAsAny fsGroup: rule: RunAsAny # Privilege Escalation allowPrivilegeEscalation: false defaultAllowPrivilegeEscalation: false # Capabilities allowedCapabilities: [\'NET_ADMIN\'] defaultAddCapabilities: [] requiredDropCapabilities: [] # Host namespaces hostPID: false hostIPC: false hostNetwork: true hostPorts: - min: 0 max: 65535 # SELinux seLinux: # SELinux is unused in CaaSP rule: \'RunAsAny\' --- kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v1beta1 metadata: name: flannel rules: - apiGroups: [\'extensions\'] resources: [\'podsecuritypolicies\'] verbs: [\'use\'] resourceNames: [\'psp.flannel.unprivileged\'] - apiGroups: - "" resources: - pods verbs: - get - apiGroups: - "" resources: - nodes verbs: - list - watch - apiGroups: - "" resources: - nodes/status verbs: - patch --- kind: ClusterRoleBinding apiVersion: rbac.authorization.k8s.io/v1beta1 metadata: name: flannel roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: flannel subjects: - kind: ServiceAccount name: flannel namespace: kube-system --- apiVersion: v1 kind: ServiceAccount metadata: name: flannel namespace: kube-system --- kind: ConfigMap apiVersion: v1 metadata: name: kube-flannel-cfg namespace: kube-system labels: tier: node app: flannel data: cni-conf.json: | { "name": "cbr0", "cniVersion": 
"0.3.1", "plugins": [ { "type": "flannel", "delegate": { "hairpinMode": true, "isDefaultGateway": true } }, { "type": "portmap", "capabilities": { "portMappings": true } } ] } net-conf.json: | { "Network": "10.244.0.0/16", "Backend": { "Type": "vxlan" } } --- apiVersion: apps/v1 kind: DaemonSet metadata: name: kube-flannel-ds-amd64 namespace: kube-system labels: tier: node app: flannel spec: selector: matchLabels: app: flannel template: metadata: labels: tier: node app: flannel spec: affinity: nodeAffinity: requiredDuringSchedulingIgnoredDuringExecution: nodeSelectorTerms: - matchExpressions: - key: beta.kubernetes.io/os operator: In values: - linux - key: beta.kubernetes.io/arch operator: In values: - amd64 hostNetwork: true tolerations: - operator: Exists effect: NoSchedule serviceAccountName: flannel initContainers: - name: install-cni image: quay.io/coreos/flannel:v0.11.0-amd64 command: - cp args: - -f - /etc/kube-flannel/cni-conf.json - /etc/cni/net.d/10-flannel.conflist volumeMounts: - name: cni mountPath: /etc/cni/net.d - name: flannel-cfg mountPath: /etc/kube-flannel/ containers: - name: kube-flannel image: quay.io/coreos/flannel:v0.11.0-amd64 command: - /opt/bin/flanneld args: - --ip-masq - --kube-subnet-mgr resources: requests: cpu: "100m" memory: "50Mi" limits: cpu: "100m" memory: "50Mi" securityContext: privileged: false capabilities: add: ["NET_ADMIN"] env: - name: POD_NAME valueFrom: fieldRef: fieldPath: metadata.name - name: POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace volumeMounts: - name: run mountPath: /run/flannel - name: flannel-cfg mountPath: /etc/kube-flannel/ volumes: - name: run hostPath: path: /run/flannel - name: cni hostPath: path: /etc/cni/net.d - name: flannel-cfg configMap: name: kube-flannel-cfg --- apiVersion: apps/v1 kind: DaemonSet metadata: name: kube-flannel-ds-arm64 namespace: kube-system labels: tier: node app: flannel spec: selector: matchLabels: app: flannel template: metadata: labels: tier: node app: 
flannel spec: affinity: nodeAffinity: requiredDuringSchedulingIgnoredDuringExecution: nodeSelectorTerms: - matchExpressions: - key: beta.kubernetes.io/os operator: In values: - linux - key: beta.kubernetes.io/arch operator: In values: - arm64 hostNetwork: true tolerations: - operator: Exists effect: NoSchedule serviceAccountName: flannel initContainers: - name: install-cni image: quay.io/coreos/flannel:v0.11.0-arm64 command: - cp args: - -f - /etc/kube-flannel/cni-conf.json - /etc/cni/net.d/10-flannel.conflist volumeMounts: - name: cni mountPath: /etc/cni/net.d - name: flannel-cfg mountPath: /etc/kube-flannel/ containers: - name: kube-flannel image: quay.io/coreos/flannel:v0.11.0-arm64 command: - /opt/bin/flanneld args: - --ip-masq - --kube-subnet-mgr resources: requests: cpu: "100m" memory: "50Mi" limits: cpu: "100m" memory: "50Mi" securityContext: privileged: false capabilities: add: ["NET_ADMIN"] env: - name: POD_NAME valueFrom: fieldRef: fieldPath: metadata.name - name: POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace volumeMounts: - name: run mountPath: /run/flannel - name: flannel-cfg mountPath: /etc/kube-flannel/ volumes: - name: run hostPath: path: /run/flannel - name: cni hostPath: path: /etc/cni/net.d - name: flannel-cfg configMap: name: kube-flannel-cfg --- apiVersion: apps/v1 kind: DaemonSet metadata: name: kube-flannel-ds-arm namespace: kube-system labels: tier: node app: flannel spec: selector: matchLabels: app: flannel template: metadata: labels: tier: node app: flannel spec: affinity: nodeAffinity: requiredDuringSchedulingIgnoredDuringExecution: nodeSelectorTerms: - matchExpressions: - key: beta.kubernetes.io/os operator: In values: - linux - key: beta.kubernetes.io/arch operator: In values: - arm hostNetwork: true tolerations: - operator: Exists effect: NoSchedule serviceAccountName: flannel initContainers: - name: install-cni image: quay.io/coreos/flannel:v0.11.0-arm command: - cp args: - -f - /etc/kube-flannel/cni-conf.json - 
/etc/cni/net.d/10-flannel.conflist volumeMounts: - name: cni mountPath: /etc/cni/net.d - name: flannel-cfg mountPath: /etc/kube-flannel/ containers: - name: kube-flannel image: quay.io/coreos/flannel:v0.11.0-arm command: - /opt/bin/flanneld args: - --ip-masq - --kube-subnet-mgr resources: requests: cpu: "100m" memory: "50Mi" limits: cpu: "100m" memory: "50Mi" securityContext: privileged: false capabilities: add: ["NET_ADMIN"] env: - name: POD_NAME valueFrom: fieldRef: fieldPath: metadata.name - name: POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace volumeMounts: - name: run mountPath: /run/flannel - name: flannel-cfg mountPath: /etc/kube-flannel/ volumes: - name: run hostPath: path: /run/flannel - name: cni hostPath: path: /etc/cni/net.d - name: flannel-cfg configMap: name: kube-flannel-cfg --- apiVersion: apps/v1 kind: DaemonSet metadata: name: kube-flannel-ds-ppc64le namespace: kube-system labels: tier: node app: flannel spec: selector: matchLabels: app: flannel template: metadata: labels: tier: node app: flannel spec: affinity: nodeAffinity: requiredDuringSchedulingIgnoredDuringExecution: nodeSelectorTerms: - matchExpressions: - key: beta.kubernetes.io/os operator: In values: - linux - key: beta.kubernetes.io/arch operator: In values: - ppc64le hostNetwork: true tolerations: - operator: Exists effect: NoSchedule serviceAccountName: flannel initContainers: - name: install-cni image: quay.io/coreos/flannel:v0.11.0-ppc64le command: - cp args: - -f - /etc/kube-flannel/cni-conf.json - /etc/cni/net.d/10-flannel.conflist volumeMounts: - name: cni mountPath: /etc/cni/net.d - name: flannel-cfg mountPath: /etc/kube-flannel/ containers: - name: kube-flannel image: quay.io/coreos/flannel:v0.11.0-ppc64le command: - /opt/bin/flanneld args: - --ip-masq - --kube-subnet-mgr resources: requests: cpu: "100m" memory: "50Mi" limits: cpu: "100m" memory: "50Mi" securityContext: privileged: false capabilities: add: ["NET_ADMIN"] env: - name: POD_NAME valueFrom: 
fieldRef: fieldPath: metadata.name - name: POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace volumeMounts: - name: run mountPath: /run/flannel - name: flannel-cfg mountPath: /etc/kube-flannel/ volumes: - name: run hostPath: path: /run/flannel - name: cni hostPath: path: /etc/cni/net.d - name: flannel-cfg configMap: name: kube-flannel-cfg --- apiVersion: apps/v1 kind: DaemonSet metadata: name: kube-flannel-ds-s390x namespace: kube-system labels: tier: node app: flannel spec: selector: matchLabels: app: flannel template: metadata: labels: tier: node app: flannel spec: affinity: nodeAffinity: requiredDuringSchedulingIgnoredDuringExecution: nodeSelectorTerms: - matchExpressions: - key: beta.kubernetes.io/os operator: In values: - linux - key: beta.kubernetes.io/arch operator: In values: - s390x hostNetwork: true tolerations: - operator: Exists effect: NoSchedule serviceAccountName: flannel initContainers: - name: install-cni image: quay.io/coreos/flannel:v0.11.0-s390x command: - cp args: - -f - /etc/kube-flannel/cni-conf.json - /etc/cni/net.d/10-flannel.conflist volumeMounts: - name: cni mountPath: /etc/cni/net.d - name: flannel-cfg mountPath: /etc/kube-flannel/ containers: - name: kube-flannel image: quay.io/coreos/flannel:v0.11.0-s390x command: - /opt/bin/flanneld args: - --ip-masq - --kube-subnet-mgr resources: requests: cpu: "100m" memory: "50Mi" limits: cpu: "100m" memory: "50Mi" securityContext: privileged: false capabilities: add: ["NET_ADMIN"] env: - name: POD_NAME valueFrom: fieldRef: fieldPath: metadata.name - name: POD_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.namespace volumeMounts: - name: run mountPath: /run/flannel - name: flannel-cfg mountPath: /etc/kube-flannel/ volumes: - name: run hostPath: path: /run/flannel - name: cni hostPath: path: /etc/cni/net.d - name: flannel-cfg configMap: name: kube-flannel-cfg

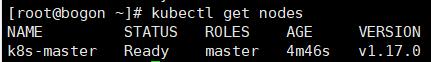

2) Check that the deployment succeeded

3) Check the nodes again; their status should now be Ready

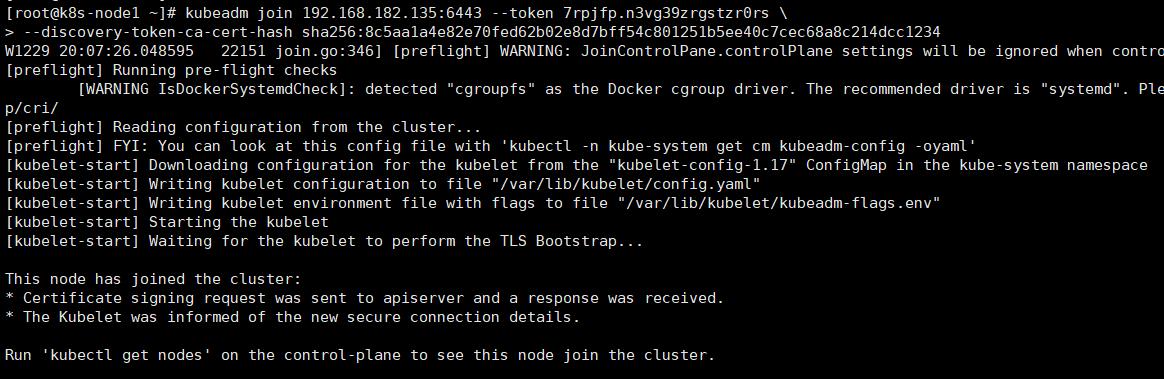

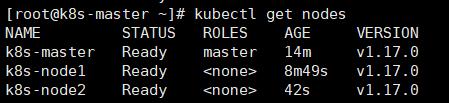

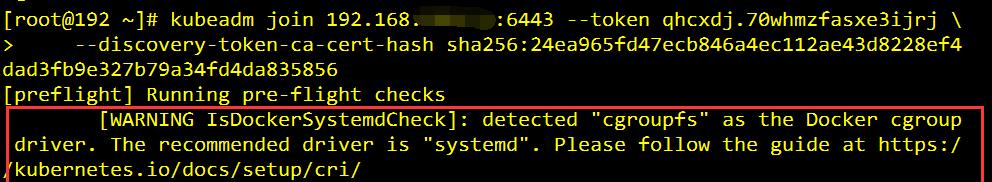

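12. Join the worker nodes to the cluster (node)

- To add a node to the cluster, run the kubeadm join command printed by kubeadm init.

- Copy the command above and run it on each node; remember to use your own master IP.

- The --token value comes from the kubeadm init output. If you did not record it, run kubeadm token list on the master.

kubeadm join 192.168.182.135:6443 --token 7rpjfp.n3vg39zrgstzr0rs \
--discovery-token-ca-cert-hash sha256:8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234

Check on the master: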

Note: before re-initializing, run these steps first

docker rm -f `docker ps -a -q`
rm -rf /etc/kubernetes/*
rm -rf /var/lib/etcd/
kubeadm reset

Problem 1: No networks found in /etc/cni/net.d

- Fix: make sure the flannel CNI configuration exists with the content below (check with cat /etc/cni/net.d/10-flannel.conflist)

{
  "name": "cbr0",
  "cniVersion": "0.3.1",
  "plugins": [
    {
      "type": "flannel",
      "delegate": {
        "hairpinMode": true,
        "isDefaultGateway": true
      }
    },
    {
      "type": "portmap",
      "capabilities": {
        "portMappings": true
      }
    }
  ]
}

Problem 2: if the following error appears:

- Fix:

# Change Docker's startup options
$ vim /usr/lib/systemd/system/docker.service
#ExecStart=/usr/bin/dockerd
ExecStart=/usr/bin/dockerd --exec-opt native.cgroupdriver=systemd

# Restart Docker
$ systemctl daemon-reload
$ systemctl restart docker
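An alternative to editing the systemd unit is to set the same option in Docker's `/etc/docker/daemon.json`, which survives package upgrades that replace `docker.service`. The sketch below writes the file to a staging directory for review; the staging path is an assumption, and the option should be set in only one place, since Docker refuses to start when the same setting appears both as a flag and in `daemon.json`.

```shell
# Stage a daemon.json that selects the systemd cgroup driver.
staging=$(mktemp -d)
cat > "$staging/daemon.json" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

cat "$staging/daemon.json"
# To apply as root (after reverting any ExecStart edit so the option is not set twice):
#   cp "$staging/daemon.json" /etc/docker/daemon.json
#   systemctl restart docker
#   docker info | grep -i 'cgroup driver'
```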

13. Addendum: removing a node (run on the master)

Step 1: put the node into maintenance mode (k8s-node1 is the node name)

[root@k8s-master ~]# kubectl drain k8s-node1 --delete-local-data --force --ignore-daemonsets
node/k8s-node1 cordoned
node/k8s-node1 drained

Step 2: delete the node

[root@k8s-master ~]# kubectl delete node k8s-node1
node "k8s-node1" deleted

Step 3: list the nodes

[root@k8s-master ~]# kubectl get nodes
NAME         STATUS   ROLES    AGE    VERSION
k8s-master   Ready    master   18m    v1.17.0
k8s-node2    Ready    <none>   5m7s   v1.17.0

k8s-node1 has been removed.

14. Re-adding the removed node takes the following steps

Step 1: stop kubelet (on the node being re-added)

[root@k8s-node1 ~]# systemctl stop kubelet

Step 2: delete the old configuration files

[root@k8s-node1 ~]# rm -rf /etc/kubernetes/*

Step 3: join the cluster

kubeadm join 192.168.182.135:6443 --token 7rpjfp.n3vg39zrgstzr0rs \
--discovery-token-ca-cert-hash sha256:8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234

Step 4: list the nodes

[root@k8s-master ~]# kubectl get nodes
NAME         STATUS     ROLES    AGE   VERSION
k8s-master   Ready      master   24m   v1.17.0
k8s-node1    NotReady   <none>   6s    v1.17.0
k8s-node2    Ready      <none>   10m   v1.17.0

15. Rejoining the cluster after losing the token

Step 1: get the token (on the master)

[root@k8s-master ~]# kubeadm token list
TOKEN                     TTL   EXPIRES                     USAGES                   DESCRIPTION                                                EXTRA GROUPS
7rpjfp.n3vg39zrgstzr0rs   23h   2019-12-30T20:01:50+08:00   authentication,signing   The default bootstrap token generated by 'kubeadm init'.   system:bootstrappers:kubeadm:default-node-token

Step 2: get the SHA-256 hash of the CA certificate (on the master)

[root@k8s-master ~]# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234

Step 3: stop kubelet (on the worker node)

[root@k8s-node1 ~]# systemctl stop kubelet

Step 4: delete the old configuration files (on the worker node)

[root@k8s-node1 ~]# rm -rf /etc/kubernetes/*

Step 5: join the cluster

Use the master's IP with port 6443, and prefix the certificate hash with sha256:

kubeadm join 192.168.182.135:6443 --token 7rpjfp.n3vg39zrgstzr0rs --discovery-token-ca-cert-hash sha256:8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234
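The join command is just the master address, the token, and the certificate hash put together. A small sketch of that assembly follows; the helper name `build_join_command` is hypothetical, and the values passed to it are the sample ones from this article, which will differ on your cluster.

```shell
# Assemble a "kubeadm join" command from its three variable parts.
# Arguments: master address, bootstrap token, CA cert hash (hex, without "sha256:").
build_join_command() {
  printf 'kubeadm join %s:6443 --token %s --discovery-token-ca-cert-hash sha256:%s\n' \
    "$1" "$2" "$3"
}

build_join_command 192.168.182.135 7rpjfp.n3vg39zrgstzr0rs \
  8c5aa1a4e82e70fed62b02e8d7bff54c801251b5ee40c7cec68a8c214dcc1234

# kubeadm can also generate a fresh token and print a ready-made join command
# when run on the master:
#   kubeadm token create --print-join-command
```

The second form is convenient when the original token has expired, since the token listed above shows a TTL of under 24 hours.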

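16. Common commands

16.1 List nodes

# -o wide adds extra columns (such as IP addresses and OS details) to the plain table output
kubectl get node -o wide

16.2 Create a deployment

# On v1.17, as used here, kubectl run with --replicas creates a deployment; from v1.18 on, kubectl run only creates a single pod
kubectl run net-test --image=alpine --replicas=2 sleep 10

16.3 Show deployment details

kubectl describe deployment net-test

16.4 Delete a deployment

kubectl delete deployment net-test -n default

16.5 List pods

kubectl get pod -o wide

16.6 Show pod details

kubectl describe pod net-test-5767cb94df-7lwtq

16.7 Scale up and down manually

# Scale a deployment up directly with the scale command:
$ kubectl scale deployment net-test --replicas=4
# To scale down, just lower the replica count:
$ kubectl scale deployment net-test --replicas=2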

17. Set up the K8S Dashboard

1. Download the dashboard manifest:

curl -o kubernetes-dashboard.yaml https://raw.githubusercontent.com/kubernetes/dashboard/master/aio/deploy/recommended/kubernetes-dashboard.yaml

If that URL is unavailable, use the kubernetes-dashboard.yaml below, which exposes the dashboard through a NodePort:

apiVersion: v1 kind: Secret metadata: labels: k8s-app: kubernetes-dashboard name: kubernetes-dashboard-certs namespace: kube-system type: Opaque --- apiVersion: v1 kind: ServiceAccount metadata: labels: k8s-app: kubernetes-dashboard name: kubernetes-dashboard namespace: kube-system --- kind: Role apiVersion: rbac.authorization.k8s.io/v1 metadata: name: kubernetes-dashboard-minimal namespace: kube-system rules: - apiGroups: [""] resources: ["secrets"] verbs: ["create"] - apiGroups: [""] resources: ["configmaps"] verbs: ["create"] - apiGroups: [""] resources: ["secrets"] resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"] verbs: ["get", "update", "delete"] - apiGroups: [""] resources: ["configmaps"] resourceNames: ["kubernetes-dashboard-settings"] verbs: ["get", "update"] - apiGroups: [""] resources: ["services"] resourceNames: ["heapster"] verbs: ["proxy"] - apiGroups: [""] resources: ["services/proxy"] resourceNames: ["heapster", "http:heapster:", "https:heapster:"] verbs: ["get"] --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRoleBinding metadata: name: kubernetes-dashboard-minimal namespace: kube-system roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: cluster-admin subjects: - kind: ServiceAccount name: kubernetes-dashboard namespace: kube-system --- kind: Deployment apiVersion: apps/v1 metadata: labels: k8s-app: kubernetes-dashboard name: kubernetes-dashboard namespace: kube-system spec: replicas: 1 revisionHistoryLimit: 10 selector: matchLabels: k8s-app: kubernetes-dashboard template: metadata: labels: k8s-app: kubernetes-dashboard spec: containers: - name: kubernetes-dashboard image: 192.168.182.135:9090/library/kubernetes-dashboard-amd64:v1.8.3 ports: - containerPort: 8443 protocol: TCP args: - --auto-generate-certificates volumeMounts: - name: kubernetes-dashboard-certs mountPath: /certs - mountPath: /tmp name: tmp-volume livenessProbe: httpGet: scheme: HTTPS path: / port: 8443 initialDelaySeconds: 
30 timeoutSeconds: 30 volumes: - name: kubernetes-dashboard-certs secret: secretName: kubernetes-dashboard-certs - name: tmp-volume emptyDir: {} serviceAccountName: kubernetes-dashboard tolerations: - key: node-role.kubernetes.io/master effect: NoSchedule --- kind: Service apiVersion: v1 metadata: labels: k8s-app: kubernetes-dashboard name: kubernetes-dashboard namespace: kube-system spec: type: NodePort ports: - port: 443 targetPort: 8443 nodePort: 31080 selector: k8s-app: kubernetes-dashboard

2. Download the image [k8s.gcr.io#kubernetes-dashboard-amd64.tar]

Link: https://pan.baidu.com/s/1vQUGpx89TZw-HyxCVE972w Extraction code: 5pui

3. Create kubernetes-dashboard. Note: first change the image address in kubernetes-dashboard.yaml

kubectl create -f kubernetes-dashboard.yaml

4. Because I had installed it once before, this failed with:

Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": secrets "kubernetes-dashboard-certs" already exists
Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": serviceaccounts "kubernetes-dashboard" already exists
Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": roles.rbac.authorization.k8s.io "kubernetes-dashboard-minimal" already exists
Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": rolebindings.rbac.authorization.k8s.io "kubernetes-dashboard-minimal" already exists
Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": deployments.apps "kubernetes-dashboard" already exists
Error from server (AlreadyExists): error when creating "kubernetes-dashboard.yaml": services "kubernetes-dashboard" already exists

5. Remove the previous installation:

kubectl delete -f kubernetes-dashboard.yaml

6. Reinstall the dashboard:

kubectl create -f kubernetes-dashboard.yaml

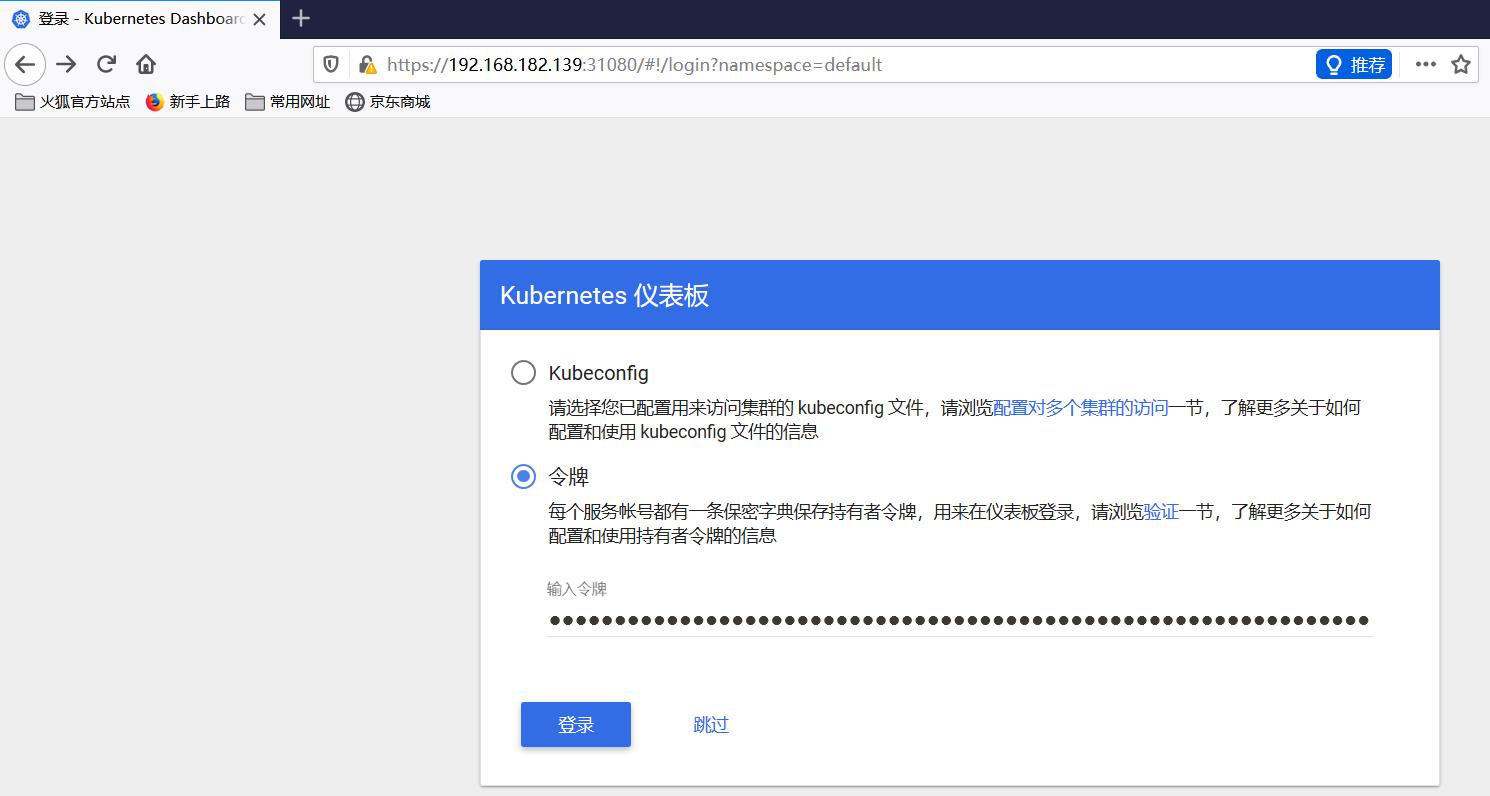

7. 获取token:

kubectl -n kube-system describe $(kubectl -n kube-system get secret -n kube-system -o name | grep namespace) | grep token

8.如果谷歌浏览器访问不了,则用火狐访问:http://nodeIp:nodePort

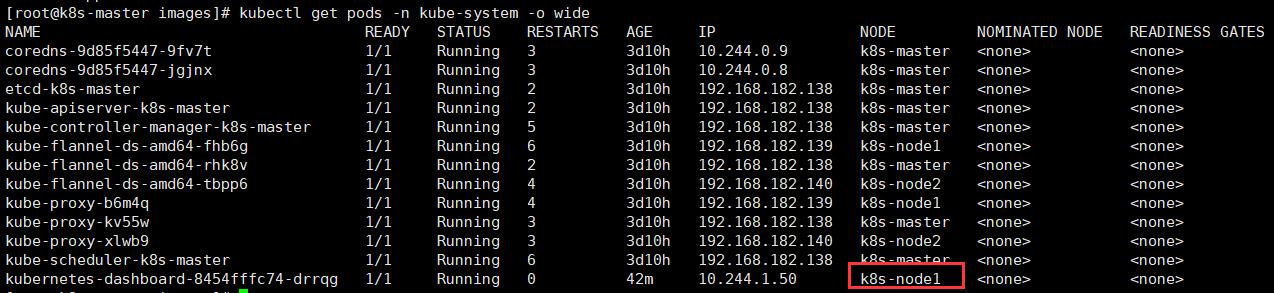

查看服务被分配到哪个节点上:$ kubectl get pods -n kube-system -o wide

9. 访问以下链接时,将获取的token粘贴到输入框中:

之后就可以通过令牌登录了。

Fixing certificate problems:

# Define an alias
$ alias ksys='kubectl -n kube-system'
# List the secrets
$ ksys get secret
# Delete the existing certificate secret
$ ksys delete secret kubernetes-dashboard-certs
# Create a new self-signed certificate
$ openssl genrsa -out dashboard.key 2048
$ openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=192.168.182.139'
$ openssl x509 -req -in dashboard.csr -signkey dashboard.key -out dashboard.crt
$ openssl x509 -in dashboard.crt -text -noout
$ ksys create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt

(To be continued)
