Strengthening Spring Cloud Service Governance with Flomesh

Posted by CSDN Cloud Computing (CSDN云计算)


Author | Addo Zhang

Source | 云原生指北

Foreword

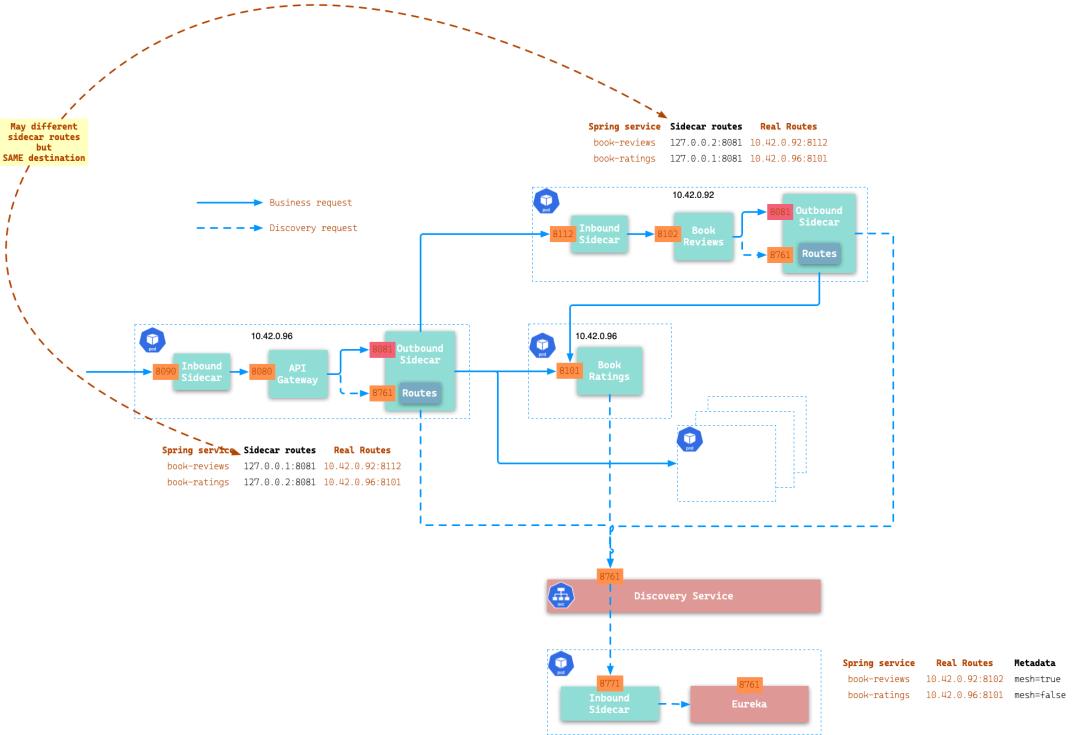

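This article shows how to use the Flomesh[1] service mesh to strengthen Spring Cloud's service governance capabilities, lower the barrier to adopting a service mesh in a Spring Cloud microservice architecture, and keep the stack independent and under your own control.

Architecture

Environment Setup

Set up a Kubernetes environment. You can build the cluster with kubeadm, or use minikube, k3s, Kind, and so on. This article uses k3s.

Install k3s[4] with k3d[3]. k3d runs k3s inside Docker containers, so Docker must already be installed.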
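Because the setup depends on several command-line tools being present, it can help to check for them before creating the cluster. The following is a minimal sketch, not part of the original demo; `require_tools` is a hypothetical helper name, and the tool list in the usage comment is an assumption based on the commands used in this article.

```shell
# require_tools NAME...
# Reports every listed command that is not on PATH; fails if any is missing.
require_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing required tool: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage (assumed tool list for this walkthrough):
#   require_tools docker k3d kubectl git && echo "all tools found"
```

This only checks that the binaries exist; it does not verify that the Docker daemon is actually running.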

$ k3d cluster create spring-demo -p "81:80@loadbalancer" --k3s-server-arg '--no-deploy=traefik'

Install Flomesh

Clone the code from https://github.com/flomesh-io/flomesh-bookinfo-demo.git, then change into the flomesh-bookinfo-demo/kubernetes directory.

All Flomesh components and the YAML files used by the demo are located in this directory.

Install Cert Manager

$ kubectl apply -f artifacts/cert-manager-v1.3.1.yaml

customresourcedefinition.apiextensions.k8s.io/certificaterequests.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/certificates.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/challenges.acme.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/clusterissuers.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/issuers.cert-manager.io created

customresourcedefinition.apiextensions.k8s.io/orders.acme.cert-manager.io created

namespace/cert-manager created

serviceaccount/cert-manager-cainjector created

serviceaccount/cert-manager created

serviceaccount/cert-manager-webhook created

clusterrole.rbac.authorization.k8s.io/cert-manager-cainjector created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-issuers created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-clusterissuers created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-certificates created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-orders created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-challenges created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-ingress-shim created

clusterrole.rbac.authorization.k8s.io/cert-manager-view created

clusterrole.rbac.authorization.k8s.io/cert-manager-edit created

clusterrole.rbac.authorization.k8s.io/cert-manager-controller-approve:cert-manager-io created

clusterrole.rbac.authorization.k8s.io/cert-manager-webhook:subjectaccessreviews created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-cainjector created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-issuers created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-clusterissuers created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-certificates created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-orders created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-challenges created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-ingress-shim created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-controller-approve:cert-manager-io created

clusterrolebinding.rbac.authorization.k8s.io/cert-manager-webhook:subjectaccessreviews created

role.rbac.authorization.k8s.io/cert-manager-cainjector:leaderelection created

role.rbac.authorization.k8s.io/cert-manager:leaderelection created

role.rbac.authorization.k8s.io/cert-manager-webhook:dynamic-serving created

rolebinding.rbac.authorization.k8s.io/cert-manager-cainjector:leaderelection created

rolebinding.rbac.authorization.k8s.io/cert-manager:leaderelection created

rolebinding.rbac.authorization.k8s.io/cert-manager-webhook:dynamic-serving created

service/cert-manager created

service/cert-manager-webhook created

deployment.apps/cert-manager-cainjector created

deployment.apps/cert-manager created

deployment.apps/cert-manager-webhook created

mutatingwebhookconfiguration.admissionregistration.k8s.io/cert-manager-webhook created

validatingwebhookconfiguration.admissionregistration.k8s.io/cert-manager-webhook created

Note: make sure all pods in the cert-manager namespace are running normally:

$ kubectl get pod -n cert-manager
NAME READY STATUS RESTARTS AGE
cert-manager-webhook-56fdcbb848-q7fn5 1/1 Running 0 98s
cert-manager-59f6c76f4b-z5lgf 1/1 Running 0 98s
cert-manager-cainjector-59f76f7fff-flrr7 1/1 Running 0 98s

Install Pipy Operator

$ kubectl apply -f artifacts/pipy-operator.yaml

After running the command, you should see output similar to the following:

namespace/flomesh created

customresourcedefinition.apiextensions.k8s.io/proxies.flomesh.io created

customresourcedefinition.apiextensions.k8s.io/proxyprofiles.flomesh.io created

serviceaccount/operator-manager created

role.rbac.authorization.k8s.io/leader-election-role created

clusterrole.rbac.authorization.k8s.io/manager-role created

clusterrole.rbac.authorization.k8s.io/metrics-reader created

clusterrole.rbac.authorization.k8s.io/proxy-role created

rolebinding.rbac.authorization.k8s.io/leader-election-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/manager-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/proxy-rolebinding created

configmap/manager-config created

service/operator-manager-metrics-service created

service/proxy-injector-svc created

service/webhook-service created

deployment.apps/operator-manager created

deployment.apps/proxy-injector created

certificate.cert-manager.io/serving-cert created

issuer.cert-manager.io/selfsigned-issuer created

mutatingwebhookconfiguration.admissionregistration.k8s.io/mutating-webhook-configuration created

mutatingwebhookconfiguration.admissionregistration.k8s.io/proxy-injector-webhook-cfg created

validatingwebhookconfiguration.admissionregistration.k8s.io/validating-webhook-configuration created

Note: make sure all pods in the flomesh namespace are running normally:

$ kubectl get pod -n flomesh
NAME READY STATUS RESTARTS AGE
proxy-injector-5bccc96595-spl6h 1/1 Running 0 39s
operator-manager-c78bf8d5f-wqgb4 1/1 Running 0 39s

Install the Ingress Controller: ingress-pipy

$ kubectl apply -f ingress/ingress-pipy.yaml

namespace/ingress-pipy created

customresourcedefinition.apiextensions.k8s.io/ingressparameters.flomesh.io created

serviceaccount/ingress-pipy created

role.rbac.authorization.k8s.io/ingress-pipy-leader-election-role created

clusterrole.rbac.authorization.k8s.io/ingress-pipy-role created

rolebinding.rbac.authorization.k8s.io/ingress-pipy-leader-election-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/ingress-pipy-rolebinding created

configmap/ingress-config created

service/ingress-pipy-cfg created

service/ingress-pipy-controller created

service/ingress-pipy-defaultbackend created

service/webhook-service created

deployment.apps/ingress-pipy-cfg created

deployment.apps/ingress-pipy-controller created

deployment.apps/ingress-pipy-manager created

certificate.cert-manager.io/serving-cert created

issuer.cert-manager.io/selfsigned-issuer created

mutatingwebhookconfiguration.admissionregistration.k8s.io/mutating-webhook-configuration configured

validatingwebhookconfiguration.admissionregistration.k8s.io/validating-webhook-configuration configured

Check the status of the pods in the ingress-pipy namespace:

$ kubectl get pod -n ingress-pipy
NAME READY STATUS RESTARTS AGE
svclb-ingress-pipy-controller-8pk8k 1/1 Running 0 71s
ingress-pipy-cfg-6bc649cfc7-8njk7 1/1 Running 0 71s
ingress-pipy-controller-76cd866d78-m7gfp 1/1 Running 0 71s
ingress-pipy-manager-5f568ff988-tw5w6 0/1 Running 0 70s

At this point, all Flomesh components are installed, including the operator and the ingress controller.
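The pod listings above are checked by eye. As a hedged sketch, the same check can be scripted by parsing the output of `kubectl get pod --no-headers`. The helper names `all_pods_ready` and `wait_for_namespaces` are hypothetical and not part of Flomesh or kubectl; the retry count and sleep interval are arbitrary assumptions.

```shell
# Reads `kubectl get pod --no-headers` output on stdin and succeeds only if
# at least one pod is listed and every pod is Running with all containers
# ready (READY column of the form n/n).
all_pods_ready() {
  awk '
    NF >= 3 {
      seen = 1
      split($2, ready, "/")
      if ($3 != "Running" || ready[1] != ready[2]) {
        bad = 1
        print "not ready: " $1
      }
    }
    END { exit (bad || !seen) ? 1 : 0 }
  '
}

# Hypothetical helper: poll each namespace until all of its pods are ready,
# giving up after 60 attempts (about 5 minutes).
wait_for_namespaces() {
  for ns in "$@"; do
    tries=0
    until kubectl get pod -n "$ns" --no-headers | all_pods_ready; do
      tries=$((tries + 1))
      [ "$tries" -ge 60 ] && { echo "timed out waiting for $ns" >&2; return 1; }
      sleep 5
    done
    echo "namespace $ns is ready"
  done
}

# Usage:
#   wait_for_namespaces cert-manager flomesh ingress-pipy
```

Note that a pod shown as 0/1, like ingress-pipy-manager in the listing above, is reported as not ready by this check until its container passes readiness.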

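Middleware

The demo needs middleware to store logs and metrics. For convenience, we mock it with pipy here and simply print the data to the console.

In addition, the service governance configuration is provided by a mocked pipy config service.

log & metrics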

$ cat > middleware.js <<EOF
pipy()

// Both the log port (8123) and the metrics port (9001) feed the same mock pipeline.
.listen(8123)
  .link('mock')

.listen(9001)
  .link('mock')

// Print each request body to the console and reply with "OK".
.pipeline('mock')
  .decodeHttpRequest()
  .replaceMessage(
    req => (
      console.log(req.body.toString()),
      new Message('OK')
    )
  )
  .encodeHttpResponse()
EOF

$ docker run --rm --name middleware --entrypoint "pipy" -v $PWD:/script -p 8123:8123 -p 9001:9001 flomesh/pipy-pjs:0.4.0-118 /script/middleware.js

pipy config

$ cat > mock-config.json <<EOF
{
  "ingress": {},
  "inbound": {
    "rateLimit": -1,
    "dataLimit": -1,
    "circuitBreak": false,
    "blacklist": []
  },
  "outbound": {
    "rateLimit": -1,
    "dataLimit": -1
  }
}
EOF
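The test sections later in this article change individual values under inbound in mock-config.json by hand. As an alternative, here is a minimal sketch of a helper that makes such an edit from the command line. `set_inbound_value` is a hypothetical name; the sketch assumes the file keeps one key per line, with "inbound" appearing before "outbound", and it only handles scalar values such as numbers, true, or false (not the blacklist array).

```shell
# set_inbound_value FILE KEY VALUE
# Rewrites the value of KEY inside the "inbound" section of FILE, leaving the
# key of the same name under "outbound" untouched. Writes through a temporary
# file so the original is only replaced if awk succeeds.
set_inbound_value() {
  awk -v key="\"$2\"" -v val="$3" '
    /"inbound"/  { inside = 1 }
    /"outbound"/ { inside = 0 }
    inside && index($0, key) {
      # Replace everything between the colon and the next comma or space.
      sub(/:[ \t]*[^, \t]+/, ": " val)
    }
    { print }
  ' "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}

# Usage:
#   set_inbound_value mock-config.json circuitBreak true
#   set_inbound_value mock-config.json rateLimit 1
```

Since the mock config server reads the file for each request, an edited file should be served without restarting the container, but how quickly the sidecars pick up the new values is not something this sketch can guarantee.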

$ cat > mock.js <<EOF
pipy({
  _CONFIG_FILENAME: 'mock-config.json',

  // Serve a file with an etag derived from its modification time.
  _serveFile: (req, filename, type) => (
    new Message(
      {
        bodiless: req.head.method === 'HEAD',
        headers: {
          'etag': os.stat(filename)?.mtime | 0,
          'content-type': type,
        },
      },
      req.head.method === 'HEAD' ? null : os.readFile(filename),
    )
  ),

  // Route /config to the JSON file; everything else returns 404.
  _router: new algo.URLRouter({
    '/config': req => _serveFile(req, _CONFIG_FILENAME, 'application/json'),
    '/*': () => new Message({ status: 404 }, 'Not found'),
  }),
})

// Config
.listen(9000)
  .decodeHttpRequest()
  .replaceMessage(
    req => (
      _router.find(req.head.path)(req)
    )
  )
  .encodeHttpResponse()
EOF

$ docker run --rm --name mock --entrypoint "pipy" -v $PWD:/script -p 9000:9000 flomesh/pipy-pjs:0.4.0-118 /script/mock.js

Run the Demo

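The demo runs in a separate namespace, flomesh-spring. Run kubectl apply -f base/namespace.yaml to create it. If you describe the namespace, you will see that it carries the flomesh.io/inject=true label.

This label tells the operator's admission webhook to intercept the creation of pods in the labeled namespace.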
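Besides reading the describe output, the label can be checked programmatically. The sketch below uses kubectl's jsonpath output with the dot in the label key escaped; `inject_label` and `injection_enabled` are hypothetical helper names introduced only for illustration.

```shell
# Prints the value of the flomesh.io/inject label on a namespace (empty if
# the label is not set). The dot inside the label key is escaped so that
# jsonpath treats "flomesh.io/inject" as a single key.
inject_label() {
  kubectl get namespace "$1" -o jsonpath='{.metadata.labels.flomesh\.io/inject}'
}

# Succeeds only when the namespace is labeled for sidecar injection.
injection_enabled() {
  [ "$(inject_label "$1")" = "true" ]
}

# Usage:
#   injection_enabled flomesh-spring && echo "pods here will be intercepted"
```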

$ kubectl describe ns flomesh-spring

Name: flomesh-spring

Labels: app.kubernetes.io/name=spring-mesh

app.kubernetes.io/version=1.19.0

flomesh.io/inject=true

kubernetes.io/metadata.name=flomesh-spring

Annotations: <none>

Status: Active

No resource quota.

No LimitRange resource.

Let's first look at ProxyProfile, the CRD provided by Flomesh. In this demo it defines the sidecar container fragment and the scripts it uses. See sidecar/proxy-profile.yaml for details. Run the following command to create the resource.

$ kubectl apply -f sidecar/proxy-profile.yaml

Check that it was created successfully:

$ kubectl get pf -o wide
NAME NAMESPACE DISABLED SELECTOR CONFIG AGE
proxy-profile-002-bookinfo flomesh-spring false {"matchLabels":{"sys":"bookinfo-samples"}} {"flomesh-spring":"proxy-profile-002-bookinfo-fsmcm-b67a9e39-0418"} 27s

As the services have startup dependencies, you need to deploy them one by one in strict order. Before starting, check the Endpoints section of base/clickhouse.yaml.

To provide the access endpoints for the middleware, change the IP addresses in base/clickhouse.yaml, base/metrics.yaml, and base/config.yaml to the IP address of your local machine (not 127.0.0.1).

After making the changes, run the following commands:

$ kubectl apply -f base/clickhouse.yaml

$ kubectl apply -f base/metrics.yaml

$ kubectl apply -f base/config.yaml

$ kubectl get endpoints samples-clickhouse samples-metrics samples-config

NAME ENDPOINTS AGE

samples-clickhouse 192.168.1.101:8123 3m
samples-metrics 192.168.1.101:9001 3s
samples-config 192.168.1.101:9000 3m

Deploy the Registry

$ kubectl apply -f base/discovery-server.yaml

Check the status of the registry pod and make sure all 3 containers are running.

$ kubectl get pod
NAME READY STATUS RESTARTS AGE
samples-discovery-server-v1-85798c47d4-dr72k 3/3 Running 0 96s

Deploy the Config Center

$ kubectl apply -f base/config-service.yaml

Deploy the API Gateway and the bookinfo Services

$ kubectl apply -f base/bookinfo-v1.yaml

$ kubectl apply -f base/bookinfo-v2.yaml

$ kubectl apply -f base/productpage-v1.yaml

$ kubectl apply -f base/productpage-v2.yaml

Check the pod status. Pods with injected sidecar containers show 3/3 in the READY column.

$ kubectl get pods
samples-discovery-server-v1-85798c47d4-p6zpb 3/3 Running 0 19h
samples-config-service-v1-84888bfb5b-8bcw9 1/1 Running 0 19h
samples-api-gateway-v1-75bb6456d6-nt2nl 3/3 Running 0 6h43m
samples-bookinfo-ratings-v1-6d557dd894-cbrv7 3/3 Running 0 6h43m
samples-bookinfo-details-v1-756bb89448-dxk66 3/3 Running 0 6h43m
samples-bookinfo-reviews-v1-7778cdb45b-pbknp 3/3 Running 0 6h43m
samples-api-gateway-v2-7ddb5d7fd9-8jgms 3/3 Running 0 6h37m
samples-bookinfo-ratings-v2-845d95fb7-txcxs 3/3 Running 0 6h37m
samples-bookinfo-reviews-v2-79b4c67b77-ddkm2 3/3 Running 0 6h37m
samples-bookinfo-details-v2-7dfb4d7c-jfq4j 3/3 Running 0 6h37m
samples-bookinfo-productpage-v1-854675b56-8n2xd 1/1 Running 0 7m1s
samples-bookinfo-productpage-v2-669bd8d9c7-8wxsf 1/1 Running 0 6m57s

Add Ingress Rules

Run the following command to add the Ingress rules.

$ kubectl apply -f ingress/ingress.yaml

Preparing for Testing

All access to the demo services goes through the ingress controller, so first obtain the IP address of the load balancer.

# Obtain the controller IP
# Here, we append the port.
ingressAddr=`kubectl get svc ingress-pipy-controller -n ingress-pipy -o jsonpath='{.spec.clusterIP}'`:81

Here we use a k3s cluster created by k3d, and the -p 81:80@loadbalancer option was passed when creating it, so the ingress controller can be reached at 127.0.0.1:81. In this setup, run ingressAddr=127.0.0.1:81 instead.

Each Ingress rule specifies a host, so every request must supply the matching host through the HTTP Host request header.
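To avoid repeating the Host header on every request, a small wrapper around curl can add it. This is a sketch under stated assumptions: `demo_curl` is a hypothetical helper name, and the default address 127.0.0.1:81 is the k3d port mapping used in this article.

```shell
# Address of the ingress controller; defaults to the k3d port mapping.
ingressAddr="${ingressAddr:-127.0.0.1:81}"

# demo_curl HOST PATH [extra curl args...]
# Sends a request to the ingress controller with the Host header that the
# Ingress rule for HOST expects. Extra arguments are passed through to curl.
demo_curl() {
  _host="$1"
  _path="$2"
  shift 2
  curl -s -H "Host: $_host" "$@" "http://$ingressAddr$_path"
}

# Usage:
#   demo_curl api-v1.flomesh.cn /actuator/health
#   demo_curl api-v1.flomesh.cn /actuator/health -i
```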

Alternatively, add records to /etc/hosts:

$ kubectl get ing ingress-pipy-bookinfo -n flomesh-spring -o jsonpath="{range .spec.rules[*]}{.host}{'\n'}{end}"

api-v1.flomesh.cn

api-v2.flomesh.cn

fe-v1.flomesh.cn

fe-v2.flomesh.cn
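The host list printed above can be turned into a single /etc/hosts line mechanically. The following is a minimal sketch; `hosts_entry` is a hypothetical helper name, and the IP address is whatever address the ingress controller is reachable on (127.0.0.1 in this k3d setup).

```shell
# hosts_entry IP
# Reads host names (one per line) on stdin and prints a single /etc/hosts
# entry that maps all of them to IP. Prints nothing if no hosts are given.
hosts_entry() {
  awk -v ip="$1" '
    NF  { line = line " " $1 }
    END { if (line != "") print ip line }
  '
}

# Usage (appending to /etc/hosts requires root privileges):
#   printf 'api-v1.flomesh.cn\nfe-v1.flomesh.cn\n' | hosts_entry 127.0.0.1
#   ... | hosts_entry 127.0.0.1 | sudo tee -a /etc/hosts
```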

# Add the records to /etc/hosts
127.0.0.1 api-v1.flomesh.cn api-v2.flomesh.cn fe-v1.flomesh.cn fe-v2.flomesh.cn

Verify

$ curl http://127.0.0.1:81/actuator/health -H 'Host: api-v1.flomesh.cn'
{"status":"UP","groups":["liveness","readiness"]}

# OR
$ curl http://api-v1.flomesh.cn:81/actuator/health
{"status":"UP","groups":["liveness","readiness"]}

Testing

灰度

在 v1 版本的服务中,我们为 book 添加 rating 和 review。

# rate a book

$ curl -X POST http://$ingressAddr/bookinfo-ratings/ratings \\

-H "Content-Type: application/json" \\

-H "Host: api-v1.flomesh.cn" \\

-d '"reviewerId":"9bc908be-0717-4eab-bb51-ea14f669ef20","productId":"2099a055-1e21-46ef-825e-9e0de93554ea","rating":3'

$ curl http://$ingressAddr/bookinfo-ratings/ratings/2099a055-1e21-46ef-825e-9e0de93554ea -H "Host: api-v1.flomesh.cn"

# review a book

$ curl -X POST http://$ingressAddr/bookinfo-reviews/reviews \\

-H "Content-Type: application/json" \\

-H "Host: api-v1.flomesh.cn" \\

-d '"reviewerId":"9bc908be-0717-4eab-bb51-ea14f669ef20","productId":"2099a055-1e21-46ef-825e-9e0de93554ea","review":"This was OK.","rating":3'

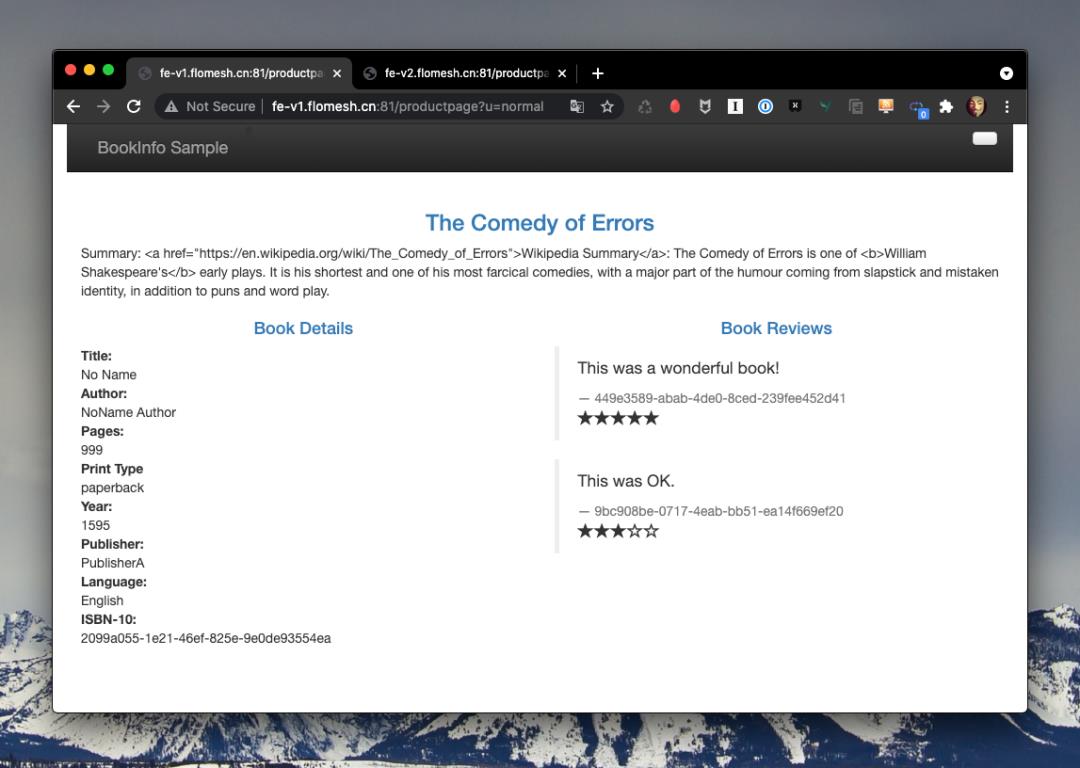

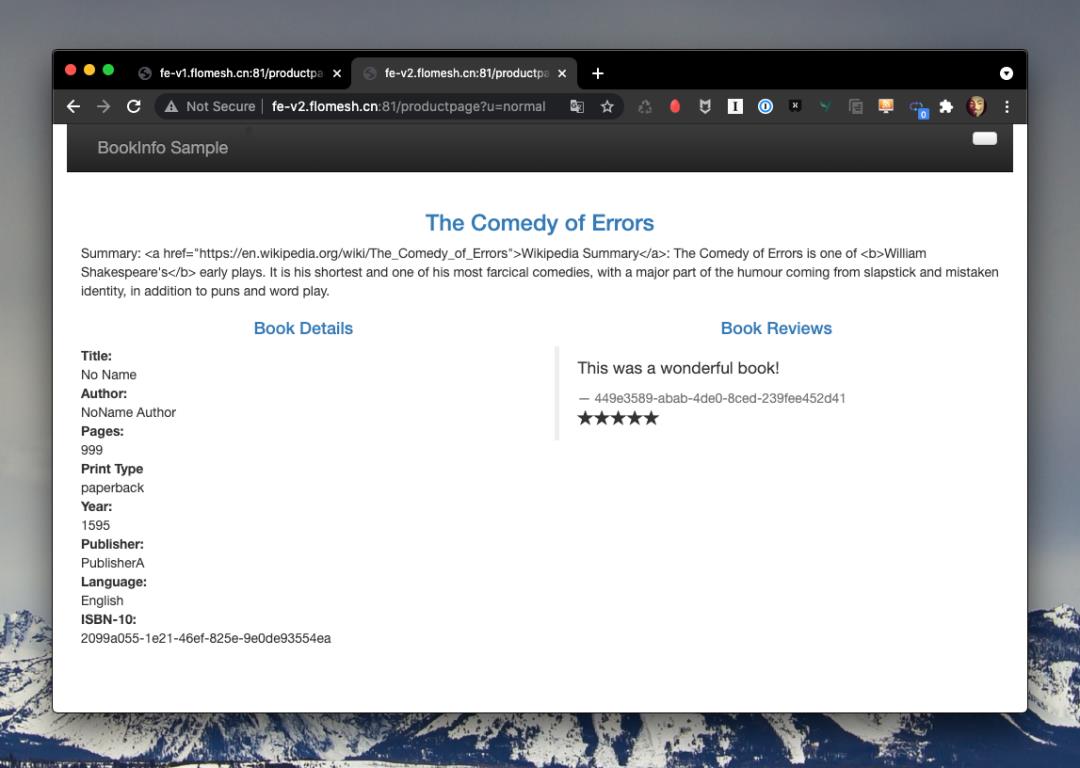

$ curl http://$ingressAddr/bookinfo-reviews/reviews/2099a055-1e21-46ef-825e-9e0de93554ea -H "Host: api-v1.flomesh.cn"执行上面的命令之后,我们可以在浏览器中访问前端服务(http://fe-v1.flomesh.cn:81/productpage?u=normal、 http://fe-v2.flomesh.cn:81/productpage?u=normal),只有 v1 版本的前端中才能看到刚才添加的记录。

Circuit Breaking

Here we force the circuit breaker open for the service by setting inbound.circuitBreak to true in mock-config.json:

{
  "ingress": {},
  "inbound": {
    "rateLimit": -1,
    "dataLimit": -1,
    "circuitBreak": true, // here
    "blacklist": []
  },
  "outbound": {
    "rateLimit": -1,
    "dataLimit": -1
  }
}

$ curl http://$ingressAddr/actuator/health -H 'Host: api-v1.flomesh.cn'
HTTP/1.1 503 Service Unavailable
Connection: keep-alive
Content-Length: 27

Service Circuit Break Open

Rate Limiting

Modify the pipy config, setting inbound.rateLimit to 1.

{
  "ingress": {},
  "inbound": {
    "rateLimit": 1, // here
    "dataLimit": -1,
    "circuitBreak": false,
    "blacklist": []
  },
  "outbound": {
    "rateLimit": -1,
    "dataLimit": -1
  }
}

We use wrk to simulate requests, with 20 threads and 20 connections for 30s:

$ wrk -t20 -c20 -d30s --latency http://$ingressAddr/actuator/health -H 'Host: api-v1.flomesh.cn'
Running 30s test @ http://127.0.0.1:81/actuator/health
  20 threads and 20 connections
  Thread Stats   Avg      Stdev     Max   +/- Stdev
    Latency   951.51ms  206.23ms   1.04s    93.55%
    Req/Sec     0.61      1.71    10.00     93.55%
  Latency Distribution
     50%    1.00s
     75%    1.01s
     90%    1.02s
     99%    1.03s
  620 requests in 30.10s, 141.07KB read
Requests/sec:     20.60
Transfer/sec:      4.69KB

The result is 20.60 req/s overall, which is roughly 1 req/s per connection.
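The per-connection figure follows from the totals in the wrk output: 620 requests over 30.10 seconds, divided across 20 connections. A short sketch of that arithmetic, where `per_connection_rate` is a hypothetical helper name:

```shell
# per_connection_rate REQUESTS SECONDS CONNECTIONS
# Prints the overall request rate and the rate per connection.
per_connection_rate() {
  awk -v n="$1" -v t="$2" -v c="$3" 'BEGIN {
    total = n / t
    printf "%.2f req/s total, %.2f req/s per connection\n", total, total / c
  }'
}

per_connection_rate 620 30.10 20
# prints: 20.60 req/s total, 1.03 req/s per connection
```

The small excess over exactly 1 req/s per connection is consistent with each connection getting its first request through immediately before the limit takes effect, though the source does not state this.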

Blacklist and Whitelist

Modify mock-config.json of the pipy config service as follows. The IP address used is the pod IP of the ingress controller.

$ kubectl get pod -n ingress-pipy ingress-pipy-controller-76cd866d78-4cqqn -o jsonpath='{.status.podIP}'
10.42.0.78

{
  "ingress": {},
  "inbound": {
    "rateLimit": -1,
    "dataLimit": -1,
    "circuitBreak": false,
    "blacklist": ["10.42.0.78"] // here
  },
  "outbound": {
    "rateLimit": -1,
    "dataLimit": -1
  }
}

Access the gateway endpoint again:

$ curl http://$ingressAddr/actuator/health -H 'Host: api-v1.flomesh.cn'
HTTP/1.1 503 Service Unavailable
content-type: text/plain
Connection: keep-alive
Content-Length: 20

Service Unavailable

References

[1] Flomesh: https://flomesh.cn/

[2] github: https://github.com/flomesh-io/flomesh-bookinfo-demo

[3] k3d: https://k3d.io/

[4] k3s: https://github.com/k3s-io/k3s
