Building the AlexNet Network Model in PyTorch

Posted by 为了维护世界和平_

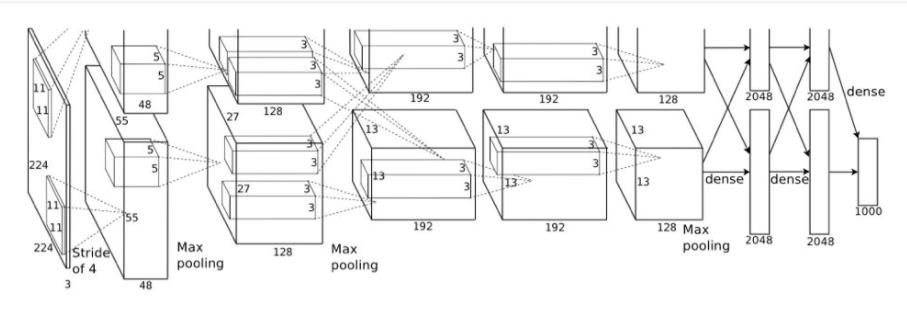

The AlexNet network model

Network highlights

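- One of the first networks to use GPUs to accelerate training (roughly 20-50 times faster than on a CPU)

- Uses the ReLU activation function instead of the traditional sigmoid and tanh activation functions

- Uses LRN (Local Response Normalization)

- Applies Dropout, which randomly deactivates neurons, in the first two fully connected layers to reduce overfitting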
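To make the ReLU bullet concrete, here is a minimal sketch in plain Python (no PyTorch required) that compares the derivatives of sigmoid, tanh, and ReLU. The helper names are illustrative and do not come from the original post. The post itself does not give a reason for the switch; the commonly cited one, shown here, is that sigmoid and tanh gradients shrink toward zero for large inputs while the ReLU gradient stays at 1 for any positive input.

```python
import math


def sigmoid_grad(x: float) -> float:
    """Derivative of sigmoid: s(x) * (1 - s(x)). Its maximum is 0.25 at x = 0."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)


def tanh_grad(x: float) -> float:
    """Derivative of tanh: 1 - tanh(x)^2. Its maximum is 1 at x = 0."""
    return 1.0 - math.tanh(x) ** 2


def relu_grad(x: float) -> float:
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0


# Compare the three gradients as the input moves away from zero.
for x in (0.5, 2.0, 6.0):
    print(
        f"x={x}: sigmoid'={sigmoid_grad(x):.4f}, "
        f"tanh'={tanh_grad(x):.4f}, relu'={relu_grad(x):.1f}"
    )
```

When many layers are stacked, these per-layer factors multiply during backpropagation, so a factor that stays at 1 is easier to train through than one that is at most 0.25.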

PyTorch model implementation

import torch
import torch.nn as nn


# Uses only half as many convolution kernels per layer as the paper
class AlexNet(nn.Module):
    def __init__(self, num_classes=1000, init_weights=False):
        super(AlexNet, self).__init__()
        # Feature extraction network
        self.features = nn.Sequential(
            nn.Conv2d(3, 48, kernel_size=11, stride=4, padding=2),  # input[3, 224, 224] output[48, 55, 55]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output[48, 27, 27]
            nn.Conv2d(48, 128, kernel_size=5, padding=2),           # output[128, 27, 27]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output[128, 13, 13]
            nn.Conv2d(128, 192, kernel_size=3, padding=1),          # output[192, 13, 13]
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 192, kernel_size=3, padding=1),          # output[192, 13, 13]
            nn.ReLU(inplace=True),
            nn.Conv2d(192, 128, kernel_size=3, padding=1),          # output[128, 13, 13]
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),                  # output[128, 6, 6], the final feature map size
        )
        # Classification network
        self.classifier = nn.Sequential(
            nn.Dropout(p=0.5),
            nn.Linear(128 * 6 * 6, 2048),
            nn.ReLU(inplace=True),
            nn.Dropout(p=0.5),
            nn.Linear(2048, 2048),
            nn.ReLU(inplace=True),
            nn.Linear(2048, num_classes),
        )
        if init_weights:
            self._initialize_weights()

    def forward(self, x):
        x = self.features(x)
        x = torch.flatten(x, start_dim=1)  # flatten everything except the batch dimension
        x = self.classifier(x)
        return x

    def _initialize_weights(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d):  # initialization for convolution layers
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
                if m.bias is not None:  # constant initialization for the bias
                    nn.init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):  # initialization for linear layers
                nn.init.normal_(m.weight, 0, 0.01)  # mean 0, standard deviation 0.01
                nn.init.constant_(m.bias, 0)


alexnet_model = AlexNet()
print(alexnet_model)
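The shape comments in the `features` block can be checked by hand. The sketch below is plain Python and assumes the usual output-size rule for `nn.Conv2d` and `nn.MaxPool2d` with dilation 1 and floor rounding, `out = (n + 2 * padding - kernel) // stride + 1`. The names `out_size`, `layers`, and `trace_sizes` are illustrative and not part of the original post.

```python
def out_size(n: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a conv or max-pool layer (dilation 1, floor rounding)."""
    return (n + 2 * padding - kernel) // stride + 1


# (name, kernel, stride, padding) for every size-changing layer in `features`.
# ReLU layers are left out because they do not change the spatial size.
layers = [
    ("conv1", 11, 4, 2),
    ("pool1", 3, 2, 0),
    ("conv2", 5, 1, 2),
    ("pool2", 3, 2, 0),
    ("conv3", 3, 1, 1),
    ("conv4", 3, 1, 1),
    ("conv5", 3, 1, 1),
    ("pool3", 3, 2, 0),
]


def trace_sizes(n: int = 224) -> list:
    """Return (layer name, output height/width) pairs for a square n x n input."""
    sizes = []
    for name, kernel, stride, padding in layers:
        n = out_size(n, kernel, stride, padding)
        sizes.append((name, n))
    return sizes


for name, n in trace_sizes(224):
    print(f"{name}: {n} x {n}")
```

The last pooling layer yields a 6 x 6 map with 128 channels, which is where the `128 * 6 * 6` input size of the first `nn.Linear` layer comes from.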

Printed network output

AlexNet(
  (features): Sequential(
    (0): Conv2d(3, 48, kernel_size=(11, 11), stride=(4, 4), padding=(2, 2))
    (1): ReLU(inplace=True)
    (2): MaxPool2d(kernel_size=3, stride=2, padding=0, dilation=1, ceil_mode=False)
    (3): Conv2d(48, 128, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (4): ReLU(inplace=True)
    (5): MaxPool2d(kernel_size=3, stride=2, padding=0, dilation=1, ceil_mode=False)
    (6): Conv2d(128, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
    (7): ReLU(inplace=True)
    (8): Conv2d(192, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
    (9): ReLU(inplace=True)
    (10): Conv2d(192, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
    (11): ReLU(inplace=True)
    (12): MaxPool2d(kernel_size=3, stride=2, padding=0, dilation=1, ceil_mode=False)
  )
  (classifier): Sequential(
    (0): Dropout(p=0.5, inplace=False)
    (1): Linear(in_features=4608, out_features=2048, bias=True)
    (2): ReLU(inplace=True)
    (3): Dropout(p=0.5, inplace=False)
    (4): Linear(in_features=2048, out_features=2048, bias=True)
    (5): ReLU(inplace=True)
    (6): Linear(in_features=2048, out_features=1000, bias=True)
  )
)
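As a rough sanity check on the printed layers, the number of trainable parameters can be tallied by hand. This is a sketch in plain Python; the helper names `conv_params` and `linear_params` are illustrative, and it assumes every layer has a bias, as the printout indicates for the linear layers and as is the default for `nn.Conv2d`.

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights (c_out * c_in * k * k) plus one bias per output channel."""
    return c_out * (c_in * k * k + 1)


def linear_params(n_in: int, n_out: int) -> int:
    """Weights (n_out * n_in) plus one bias per output feature."""
    return n_out * (n_in + 1)


# (in_channels, out_channels, kernel_size) copied from the printed Conv2d layers
convs = [(3, 48, 11), (48, 128, 5), (128, 192, 3), (192, 192, 3), (192, 128, 3)]
# (in_features, out_features) copied from the printed Linear layers
linears = [(4608, 2048), (2048, 2048), (2048, 1000)]

feature_params = sum(conv_params(*c) for c in convs)
classifier_params = sum(linear_params(*fc) for fc in linears)
total_params = feature_params + classifier_params

print(f"features:   {feature_params:,}")
print(f"classifier: {classifier_params:,}")
print(f"total:      {total_params:,}")
```

With PyTorch installed, the total should agree with `sum(p.numel() for p in model.parameters())` on an `AlexNet()` instance. Most of the parameters sit in the first fully connected layer, not in the convolutions.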
