TensorFlow 1.x Hands-On Code Series: MNIST Handwritten Digit Recognition

Posted by 白马金羁侠少年



Hands-on 1: MNIST Handwritten Digit Recognition

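This example recognizes MNIST digits with a plain single-layer softmax (logistic regression) model rather than a deep network. To improve accuracy, you can swap in a CNN yourself.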
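To make the model concrete before the full listing: it computes `pred = softmax(xW + b)` and minimizes the cross-entropy against one-hot labels. The dependency-free sketch below is only an illustration and is not part of the original code; the helper names `softmax` and `cross_entropy` are hypothetical. It shows one consequence of initializing `W` and `b` to zeros: every class starts with probability 0.1, so the loss before any training step is ln 10, roughly 2.3026.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(one_hot, probs):
    """-sum(y * log(p)) for one sample; terms with y == 0 contribute nothing."""
    return -sum(y * math.log(p) for y, p in zip(one_hot, probs) if y > 0)

# With W and b all zeros, x @ W + b is a vector of 10 zeros for any input x.
logits = [0.0] * 10
probs = softmax(logits)

label = [0.0] * 10
label[3] = 1.0  # one-hot label for digit 3 (arbitrary choice for the demo)

initial_loss = cross_entropy(label, probs)
print(probs[0], initial_loss)
```

Seen this way, the logged costs in the run (about 0.47 after 5 epochs) are already well below the starting value of ln 10.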

'''

A logistic regression learning algorithm example using TensorFlow library.

This example is using the MNIST database of handwritten digits

(http://yann.lecun.com/exdb/mnist/)

Author: Aymeric Damien

Project: https://github.com/aymericdamien/TensorFlow-Examples/

'''

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

# Import MNIST data (https://aitechtogether.com/ai-question/19979.html)

from tensorflow.examples.tutorials.mnist import input_data

# https://github.com/tensorflow/tensorflow/tree/0a3f4e15331b3fbd0ccb60d2b00c1f384f6a32ec/tensorflow/examples/tutorials

mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

# Parameters

learning_rate = 0.01

training_epochs = 50

batch_size = 100

display_step = 5

# tf Graph Input

x = tf.placeholder(tf.float32, [None, 784]) # mnist data image of shape 28*28=784

y = tf.placeholder(tf.float32, [None, 10]) # 0-9 digits recognition => 10 classes

# [None, 784]: the batch size is not known in advance, but the last dimension must be 784

# Set model weights

W = tf.Variable(tf.zeros([784, 10]))

b = tf.Variable(tf.zeros([10]))

# Construct model

pred = tf.nn.softmax(tf.matmul(x, W) + b) # Softmax

# pred: [batch_size, 10]

# Minimize error using cross entropy (cross-entropy loss averaged over one batch)

# softmax loss: https://zhuanlan.zhihu.com/p/83772845

cost = tf.reduce_mean(-tf.reduce_sum(y * tf.log(pred), axis=1))

# Gradient Descent

optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

# Initialize the variables (i.e. assign their default value)

init = tf.global_variables_initializer()

# Start training

with tf.Session() as sess:

    # Run the initializer

    sess.run(init)

    # Training cycle

    for epoch in range(training_epochs):

        avg_cost = 0.

        total_batch = int(mnist.train.num_examples / batch_size)

        # Loop over all batches

        for i in range(total_batch):

            batch_xs, batch_ys = mnist.train.next_batch(batch_size)

            # batch_xs: [batch_size, 784]

            # batch_ys: [batch_size, 10]

            # out = sess.run(pred, feed_dict={x: batch_xs, y: batch_ys})

            # print(out.shape)

            # Run optimization op (backprop) and cost op (to get loss value)

            _, c = sess.run([optimizer, cost], feed_dict={x: batch_xs, y: batch_ys})

            # c: cross-entropy loss averaged over one batch

            # Compute average loss

            avg_cost += c / total_batch

        # Display logs per epoch step

        if (epoch + 1) % display_step == 0:

            print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(avg_cost))

    print("Optimization Finished!")

    # Test model

    correct_prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))

    # Calculate accuracy

    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

    # test.images: (10000, 784), test.labels: (10000, 10)

    # accuracy.eval(feed) is shorthand for sess.run(accuracy, feed) in the default session

    # When debugging, you can set input = {x: mnist.test.images, y: mnist.test.labels}

    print("Accuracy:", accuracy.eval({x: mnist.test.images, y: mnist.test.labels}))

Epoch: 0005 cost= 0.465507917

Epoch: 0010 cost= 0.392346543

Epoch: 0015 cost= 0.362667364

Epoch: 0020 cost= 0.345452763

Epoch: 0025 cost= 0.333730606

Epoch: 0030 cost= 0.325053533

Epoch: 0035 cost= 0.318299953

Epoch: 0040 cost= 0.312863539

Epoch: 0045 cost= 0.308358982

Epoch: 0050 cost= 0.304474182

Optimization Finished!

Accuracy: 0.9193


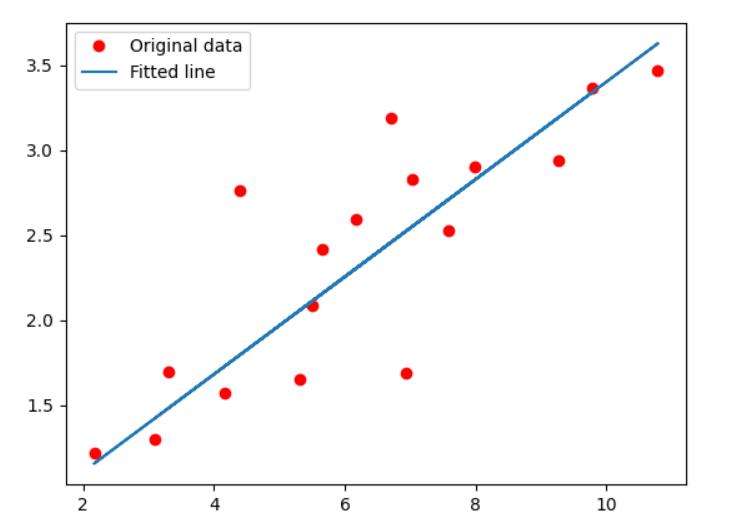
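The test step in the listing compares `tf.argmax(pred, 1)` with `tf.argmax(y, 1)` and averages the matches. Here is a minimal pure-Python sketch of that computation, run on made-up three-class data rather than MNIST. The helper names `argmax` and `accuracy` are hypothetical and only serve the illustration.

```python
def argmax(values):
    """Index of the largest element (the first one on ties)."""
    return max(range(len(values)), key=lambda i: values[i])

def accuracy(pred_rows, label_rows):
    """Fraction of rows whose predicted class matches the one-hot label."""
    hits = [1.0 if argmax(p) == argmax(y) else 0.0
            for p, y in zip(pred_rows, label_rows)]
    return sum(hits) / len(hits)

# Toy predictions and one-hot labels (invented for illustration).
preds = [[0.7, 0.2, 0.1],
         [0.1, 0.3, 0.6],
         [0.5, 0.4, 0.1],
         [0.2, 0.6, 0.2]]
labels = [[1, 0, 0],
          [0, 0, 1],
          [0, 1, 0],
          [0, 1, 0]]

acc = accuracy(preds, labels)
print(acc)  # 3 of the 4 rows match
```

Because the labels are one-hot, taking the argmax of a label row simply recovers the digit class, which is why the listing can compare the two argmax results directly.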

Hands-on 2: Linear Regression

'''

A linear regression learning algorithm example using TensorFlow library.

Author: Aymeric Damien

Project: https://github.com/aymericdamien/TensorFlow-Examples/

'''

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()

import numpy

import matplotlib.pyplot as plt

rng = numpy.random

# Parameters

learning_rate = 0.01

training_epochs = 1000

display_step = 50

# Training Data

train_X = numpy.asarray([3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,

7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1])

train_Y = numpy.asarray([1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,

2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3])

n_samples = train_X.shape[0]

# tf Graph Input

X = tf.placeholder(tf.float32) # If the shape is not specified, you can feed a tensor of any shape.

Y = tf.placeholder("float")

# Set model weights

W = tf.Variable(rng.randn(), name="weight")

b = tf.Variable(rng.randn(), name="bias")

# Construct a linear model

pred = tf.add(tf.multiply(X, W), b)

# Mean squared error

cost = tf.reduce_sum(tf.pow(pred - Y, 2)) / (2 * n_samples)

# Gradient descent

# Note, minimize() knows to modify W and b because Variable objects are trainable=True by default

optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

# Initialize the variables (i.e. assign their default value)

init = tf.global_variables_initializer()

# Start training

with tf.Session() as sess:

    # Run the initializer

    sess.run(init)

    # Fit all training data

    for epoch in range(training_epochs):

        # One gradient step per (x, y) sample

        for (x, y) in zip(train_X, train_Y):

            sess.run(optimizer, feed_dict={X: x, Y: y})

        # Display logs per epoch step

        if (epoch + 1) % display_step == 0:

            # MSE loss over the whole training set

            c = sess.run(cost, feed_dict={X: train_X, Y: train_Y})

            print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(c), "W=", sess.run(W), "b=", sess.run(b))

    print("Optimization Finished!")

    training_cost = sess.run(cost, feed_dict={X: train_X, Y: train_Y})

    print("Training cost=", training_cost, "W=", sess.run(W), "b=", sess.run(b), '\n')

    # Graphic display

    plt.plot(train_X, train_Y, 'ro', label='Original data')

    plt.plot(train_X, sess.run(W) * train_X + sess.run(b), label='Fitted line')

    plt.legend()

    plt.show()

    # Testing example, as requested (Issue #2)

    test_X = numpy.asarray([6.83, 4.668, 8.9, 7.91, 5.7, 8.7, 3.1, 2.1])

    test_Y = numpy.asarray([1.84, 2.273, 3.2, 2.831, 2.92, 3.24, 1.35, 1.03])

    print("Testing... (Mean square loss Comparison)")

    # Same function as cost above, but normalized by the test set size

    testing_cost = sess.run(tf.reduce_sum(tf.pow(pred - Y, 2)) / (2 * test_X.shape[0]), feed_dict={X: test_X, Y: test_Y})

    print("Testing cost=", testing_cost)

    print("Absolute mean square loss difference:", abs(training_cost - testing_cost))

    plt.plot(test_X, test_Y, 'bo', label='Testing data')

    plt.plot(train_X, sess.run(W) * train_X + sess.run(b), label='Fitted line')

    plt.legend()

    plt.show()

Epoch: 0050 cost= 0.120824270 W= 0.3667477 b= -0.041331705

Epoch: 0100 cost= 0.115755573 W= 0.35978004 b= 0.008793428

Epoch: 0150 cost= 0.111272469 W= 0.3532267 b= 0.05593741

Epoch: 0200 cost= 0.107307263 W= 0.34706315 b= 0.10027754

Epoch: 0250 cost= 0.103800252 W= 0.34126616 b= 0.14198062

Epoch: 0300 cost= 0.100698456 W= 0.33581394 b= 0.18120334

Epoch: 0350 cost= 0.097955085 W= 0.33068597 b= 0.21809348

Epoch: 0400 cost= 0.095528767 W= 0.325863 b= 0.2527896

Epoch: 0450 cost= 0.093382917 W= 0.3213269 b= 0.28542197

Epoch: 0500 cost= 0.091485016 W= 0.3170605 b= 0.31611422

Epoch: 0550 cost= 0.089806609 W= 0.313048 b= 0.3449799

Epoch: 0600 cost= 0.088322178 W= 0.30927402 b= 0.37212968

Epoch: 0650 cost= 0.087009393 W= 0.30572447 b= 0.39766482

Epoch: 0700 cost= 0.085848458 W= 0.3023862 b= 0.42168018

Epoch: 0750 cost= 0.084821783 W= 0.29924637 b= 0.4442676

Epoch: 0800 cost= 0.083913833 W= 0.2962933 b= 0.46551228

Epoch: 0850 cost= 0.083110899 W= 0.2935158 b= 0.48549363

Epoch: 0900 cost= 0.082400911 W= 0.29090345 b= 0.5042859

Epoch: 0950 cost= 0.081773058 W= 0.2884467 b= 0.52196056

Epoch: 1000 cost= 0.081217892 W= 0.28613582 b= 0.5385841

Optimization Finished!

Training cost= 0.08121789 W= 0.28613582 b= 0.5385841

Testing... (Mean square loss Comparison)
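The logged cost is still falling at epoch 1000, which suggests gradient descent has not fully converged. As a cross-check that does not need TensorFlow, the sketch below recomputes the cost at the logged `W` and `b` and compares it with the closed-form ordinary least squares fit on the same training data. This is an added illustration under the assumption that the cost is `sum((W*x + b - y)^2) / (2 * n)` as in the listing; the helper name `half_mse` is hypothetical.

```python
train_X = [3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
           7.042, 10.791, 5.313, 7.997, 5.654, 9.27, 3.1]
train_Y = [1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
           2.827, 3.465, 1.65, 2.904, 2.42, 2.94, 1.3]

def half_mse(w, b, xs, ys):
    """sum((w*x + b - y)^2) / (2 * n), the cost used in the listing."""
    n = len(xs)
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / (2 * n)

# Cost at the parameters logged after epoch 1000.
logged_cost = half_mse(0.28613582, 0.5385841, train_X, train_Y)

# Closed-form ordinary least squares for a single feature.
n = len(train_X)
mean_x = sum(train_X) / n
mean_y = sum(train_Y) / n
w_ls = (sum((x - mean_x) * (y - mean_y) for x, y in zip(train_X, train_Y))
        / sum((x - mean_x) ** 2 for x in train_X))
b_ls = mean_y - w_ls * mean_x
ls_cost = half_mse(w_ls, b_ls, train_X, train_Y)

print("cost at logged W, b:", logged_cost)
print("least squares: W=", w_ls, "b=", b_ls, "cost=", ls_cost)
```

The least squares cost is the minimum this cost function can reach on the training set, so the gap between the two printed costs indicates how much further training (or a larger learning rate) could still gain.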
