Big Data Technology: ZooKeeper

Posted @从一到无穷大


1 Getting Started with ZooKeeper

1.1 Overview

ZooKeeper is an open-source Apache project that provides coordination services for distributed applications.

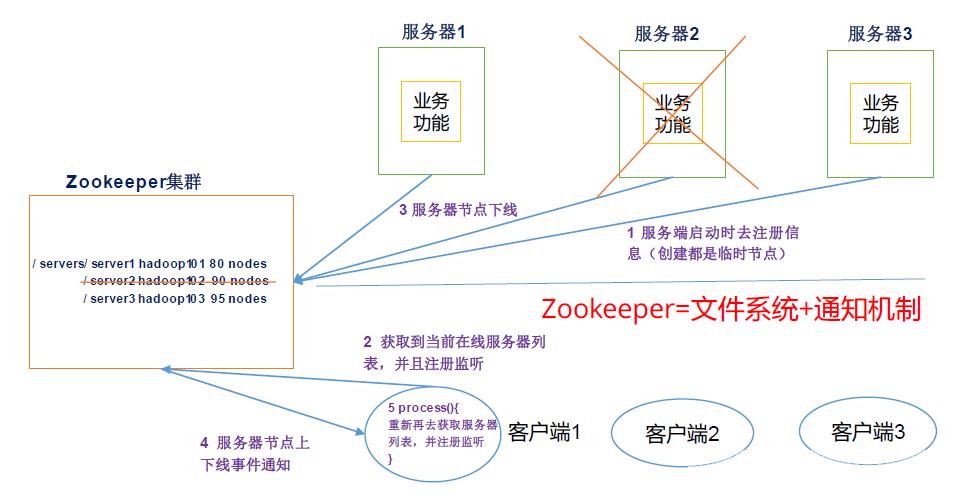

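How ZooKeeper works

From a design-pattern point of view, ZooKeeper is a distributed service management framework built on the observer pattern. It stores and manages the data that all participants care about and accepts registrations from observers. Once the state of that data changes, ZooKeeper notifies the observers registered with it so that they can react.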
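The observer idea described above can be sketched in a few lines of plain Java. This is only an in-memory illustration, not the ZooKeeper API: the class `ObserverSketch` and its methods `register` and `set` are hypothetical names chosen for this example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.BiConsumer;

// Hypothetical in-memory registry that mimics "store data, accept observers, notify on change".
class ObserverSketch {
    private final Map<String, String> data = new HashMap<>();
    private final Map<String, List<BiConsumer<String, String>>> observers = new HashMap<>();

    // An observer registers interest in a single key.
    void register(String key, BiConsumer<String, String> observer) {
        observers.computeIfAbsent(key, k -> new ArrayList<>()).add(observer);
    }

    // Writing a value notifies every observer registered on that key.
    void set(String key, String value) {
        data.put(key, value);
        List<BiConsumer<String, String>> registered =
                observers.getOrDefault(key, Collections.emptyList());
        for (BiConsumer<String, String> observer : registered) {
            observer.accept(key, value);
        }
    }

    public static void main(String[] args) {
        ObserverSketch registry = new ObserverSketch();
        registry.register("/config", (k, v) -> System.out.println(k + " changed to " + v));
        registry.set("/config", "v1");
    }
}
```

Real ZooKeeper watches differ in detail. For example, a standard watch in this version fires once and has to be registered again, whereas the observers in this sketch stay registered.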

1.2 ZooKeeper Features

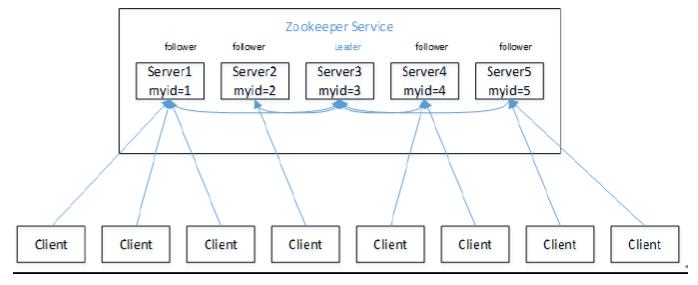

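(1) ZooKeeper runs as a cluster made up of one Leader and multiple Followers.

(2) The cluster keeps serving requests as long as more than half of its nodes are alive.

(3) Globally consistent data: every Server keeps an identical copy of the data, so a Client sees the same data no matter which Server it connects to.

(4) Update requests are applied in order: updates from the same Client are executed in the order in which they were sent.

(5) Atomic updates: a data update either succeeds or fails as a whole.

(6) Timeliness: within a bounded time window, a Client can read the latest data.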
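The "more than half" rule in item (2) is simple arithmetic, sketched below. The class and method names (`QuorumSketch`, `majority`, `canServe`) are made up for this illustration and are not part of ZooKeeper.

```java
// Minimal sketch of the majority rule: a cluster serves only while more than half its nodes are alive.
class QuorumSketch {
    // Smallest number of live servers that is strictly more than half of the cluster.
    static int majority(int clusterSize) {
        return clusterSize / 2 + 1;
    }

    static boolean canServe(int clusterSize, int aliveServers) {
        return aliveServers >= majority(clusterSize);
    }

    public static void main(String[] args) {
        for (int n = 3; n <= 6; n++) {
            System.out.println(n + " servers: need " + majority(n)
                    + " alive, tolerate " + (n - majority(n)) + " failures");
        }
    }
}
```

Under this rule a 4-node cluster tolerates one failure, the same as a 3-node cluster, which is why clusters are usually sized with an odd number of nodes.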

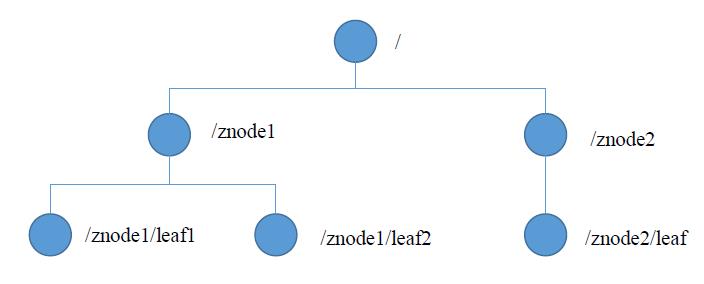

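1.3 Data Structure

The ZooKeeper data model is very similar to a Unix file system. As a whole it can be viewed as a tree in which every node is called a ZNode. By default each ZNode can store up to 1 MB of data, and every ZNode is uniquely identified by its path.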
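To make the tree model concrete, here is a small in-memory sketch in which each node is addressed by its absolute path. It is not how ZooKeeper is implemented: `ZNodeTreeSketch`, its methods, and the `MAX_DATA_BYTES` constant (a rounded stand-in for the 1 MB default mentioned above) are assumptions for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical path-keyed tree: every node has a unique absolute path and a small data payload.
class ZNodeTreeSketch {
    static final int MAX_DATA_BYTES = 1024 * 1024; // assumed stand-in for the 1 MB default

    private final Map<String, byte[]> nodes = new TreeMap<>();

    ZNodeTreeSketch() {
        nodes.put("/", new byte[0]); // the root always exists
    }

    // "/sanguo/shuguo" -> "/sanguo", "/sanguo" -> "/"
    static String parentOf(String path) {
        int idx = path.lastIndexOf('/');
        return idx == 0 ? "/" : path.substring(0, idx);
    }

    void create(String path, byte[] data) {
        if (!path.startsWith("/") || path.length() < 2 || path.endsWith("/")) {
            throw new IllegalArgumentException("invalid path: " + path);
        }
        if (data.length > MAX_DATA_BYTES) {
            throw new IllegalArgumentException("data too large for " + path);
        }
        if (nodes.containsKey(path)) {
            throw new IllegalStateException("node already exists: " + path);
        }
        if (!nodes.containsKey(parentOf(path))) {
            throw new IllegalStateException("parent does not exist: " + parentOf(path));
        }
        nodes.put(path, data.clone());
    }

    byte[] get(String path) {
        return nodes.get(path);
    }

    public static void main(String[] args) {
        ZNodeTreeSketch tree = new ZNodeTreeSketch();
        tree.create("/sanguo", "shuguo".getBytes(StandardCharsets.UTF_8));
        tree.create("/sanguo/shuguo", "liubei".getBytes(StandardCharsets.UTF_8));
        System.out.println(new String(tree.get("/sanguo/shuguo"), StandardCharsets.UTF_8));
    }
}
```

As in a file system, a child can only be created under an existing parent, so `/sanguo` has to exist before `/sanguo/shuguo`.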

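1.4 Use Cases

The services ZooKeeper provides include unified naming, unified configuration management, unified cluster management, dynamic server online/offline detection, and soft load balancing.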

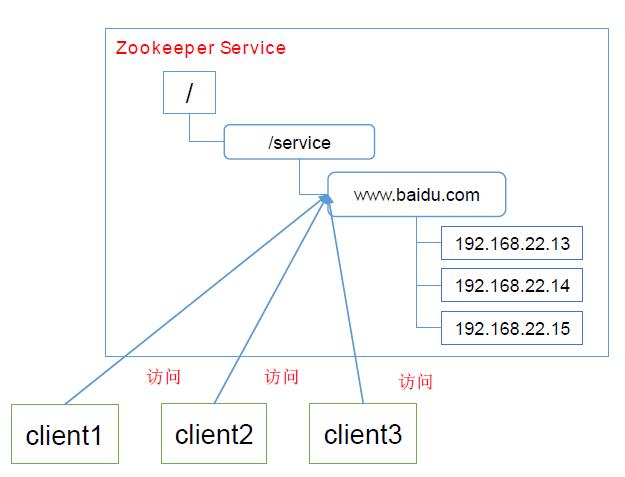

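Unified naming service

In a distributed environment, applications and services often need a uniform name so that they are easy to identify. For example, an IP address is hard to remember while a domain name is easy.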

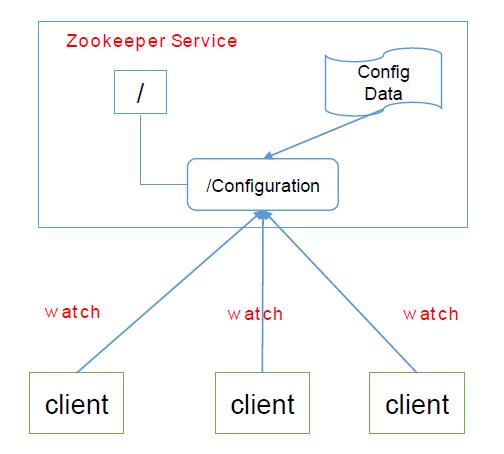

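Unified configuration management

(1) Synchronizing configuration files is very common in a distributed environment.

a. All nodes in a cluster are usually required to have the same configuration, for example in a Kafka cluster.

b. After a configuration file is modified, the change should reach every node quickly.

(2) Configuration management can be delegated to ZooKeeper.

a. Write the configuration into a ZNode on ZooKeeper.

b. Each client server watches this ZNode.

c. Once the data in the ZNode is modified, ZooKeeper notifies each client server.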

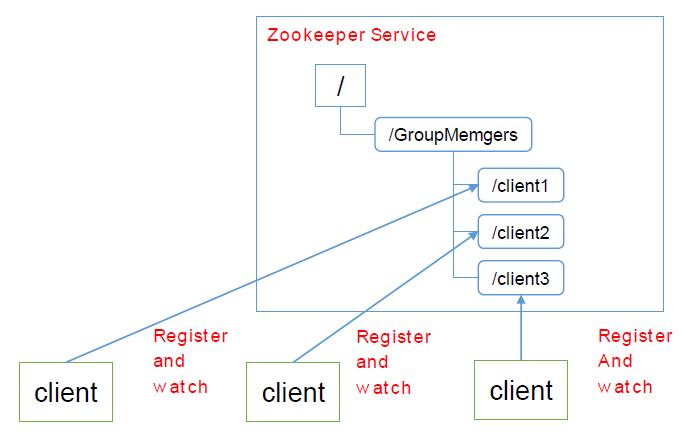

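Unified cluster management

(1) In a distributed environment it is necessary to know the state of every node in real time, so that adjustments can be made based on that state.

(2) ZooKeeper can monitor node state changes in real time. Node information is written to a ZNode on ZooKeeper, and watching that ZNode reveals its state changes as they happen.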

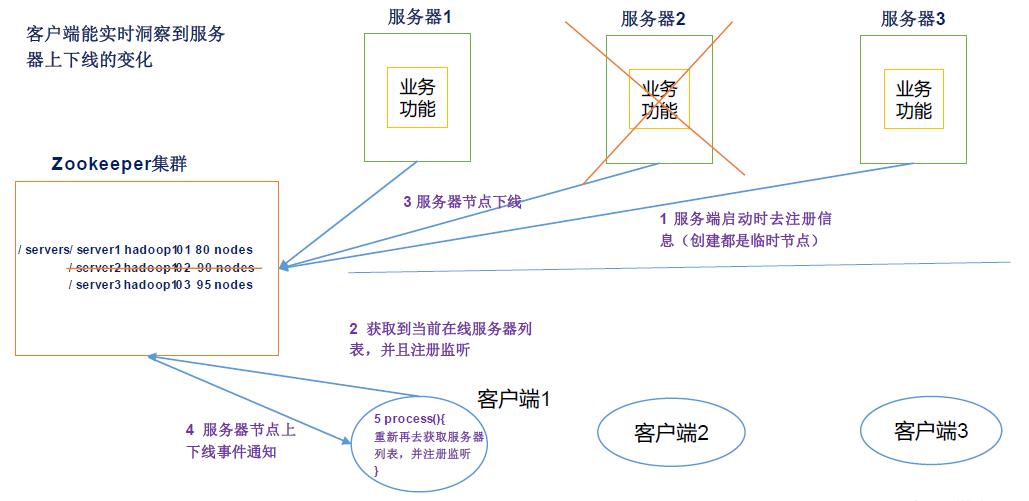

Dynamic server online/offline detection

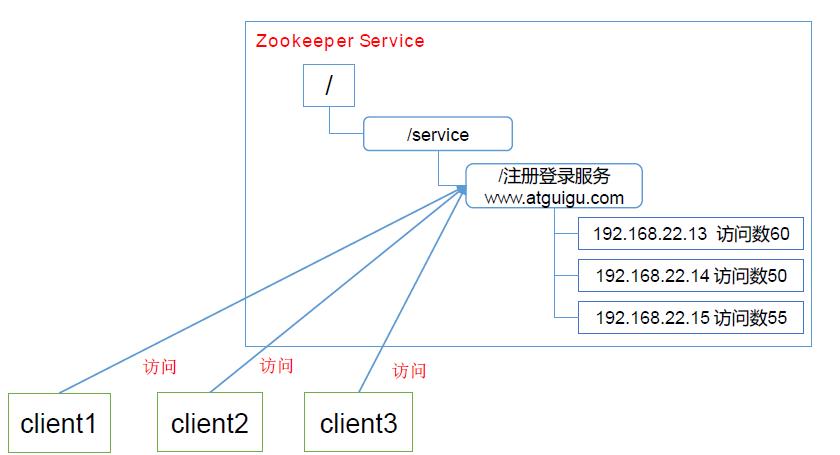

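Soft load balancing

ZooKeeper records the number of accesses for each server, and the server with the fewest accesses handles the newest client request.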
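The selection rule above can be sketched as follows. The access counts are held in an ordinary map here. In a real setup they would be stored in ZooKeeper, and the class name `LeastAccessSketch` and the sample counts are purely illustrative.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Minimal sketch: route the next request to the server with the fewest recorded accesses.
class LeastAccessSketch {
    // Returns the server with the smallest access count, or null if the map is empty.
    static String pick(Map<String, Integer> accessCounts) {
        String best = null;
        int bestCount = Integer.MAX_VALUE;
        for (Map.Entry<String, Integer> entry : accessCounts.entrySet()) {
            if (entry.getValue() < bestCount) {
                best = entry.getKey();
                bestCount = entry.getValue();
            }
        }
        return best;
    }

    public static void main(String[] args) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        counts.put("hadoop102", 12); // sample values, not measured data
        counts.put("hadoop103", 7);
        counts.put("hadoop104", 9);
        String target = pick(counts);
        counts.merge(target, 1, Integer::sum); // record the request just routed
        System.out.println(target + " now has " + counts.get(target) + " accesses");
    }
}
```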

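2 Installing ZooKeeper

2.1 Local (Standalone) Mode Installation

1 Preparation

(1) Install the JDK

(2) Copy the ZooKeeper installation package to the Linux system

(3) Extract it to the target directory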

[Tom@hadoop102 software]$ tar -zxvf zookeeper-3.5.9.tar.gz -C /opt/module/

2 Configuration changes

In /opt/module/zookeeper-3.5.9/conf, rename zoo_sample.cfg to zoo.cfg

[Tom@hadoop102 conf]$ mv zoo_sample.cfg zoo.cfg

Create a zkData folder under /opt/module/zookeeper-3.5.9/

[Tom@hadoop102 zookeeper-3.5.9]$ mkdir zkData

3 Operating ZooKeeper

(1) Start ZooKeeper

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkServer.sh start

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

(2) Check that the process has started

[Tom@hadoop102 zookeeper-3.5.9]$ jps

2257 QuorumPeerMain

2291 Jps

(3) Check the status

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Mode: standalone

(4) Start the client

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkCli.sh

(5) Quit the client

[zk: localhost:2181(CONNECTED) 0] quit

(6) Stop ZooKeeper

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkServer.sh stop

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Stopping zookeeper ... STOPPED

2.2 Configuration Parameters Explained

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/opt/module/zookeeper-3.5.9/zkData

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

#######################cluster##########################

server.2=hadoop102:2888:3888

server.3=hadoop103:2888:3888

server.4=hadoop104:2888:3888

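The parameters in the ZooKeeper configuration file zoo.cfg have the following meanings:

1. tickTime=2000: the heartbeat interval between ZooKeeper servers and clients, in milliseconds

This is the basic time unit used by ZooKeeper. It is the interval at which heartbeats are exchanged between servers, or between a client and a server: one heartbeat is sent every tickTime milliseconds.

It drives the heartbeat mechanism and also sets the minimum session timeout, which is twice the heartbeat interval (2 * tickTime).

2. initLimit=10: Leader-Follower initial communication limit

The maximum number of heartbeats (multiples of tickTime) that can be tolerated while a Follower makes its initial connection to the Leader. It bounds the time a ZooKeeper server in the cluster may take to connect to the Leader.

3. syncLimit=5: Leader-Follower synchronization limit

The maximum response time between the Leader and a Follower, measured in ticks. If a response takes longer than syncLimit * tickTime, the Leader considers the Follower dead and removes it from the server list.

4. dataDir: data file directory and persistence path

Mainly used to store ZooKeeper's data.

5. clientPort=2181: client connection port

The port on which the server listens for client connections.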
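The time limits above are all multiples of tickTime, so the effective values for this zoo.cfg can be worked out directly. The sketch below only encodes the relationships stated in this section. `TickTimingSketch` and its method names are illustrative, and only the lower bound on the session timeout is modeled.

```java
// Minimal sketch of the tickTime-derived limits described above.
class TickTimingSketch {
    // Minimum session timeout: 2 * tickTime.
    static int minSessionTimeoutMs(int tickTimeMs) {
        return 2 * tickTimeMs;
    }

    // Time a Follower has for its initial connection to the Leader: initLimit ticks.
    static int initWindowMs(int tickTimeMs, int initLimit) {
        return initLimit * tickTimeMs;
    }

    // Time allowed for a Leader-Follower response: syncLimit ticks.
    static int syncWindowMs(int tickTimeMs, int syncLimit) {
        return syncLimit * tickTimeMs;
    }

    // A requested session timeout below the minimum is raised to the minimum.
    static int effectiveSessionTimeoutMs(int requestedMs, int tickTimeMs) {
        return Math.max(requestedMs, minSessionTimeoutMs(tickTimeMs));
    }

    public static void main(String[] args) {
        int tickTime = 2000; // values from the zoo.cfg above
        System.out.println("min session timeout ms: " + minSessionTimeoutMs(tickTime));
        System.out.println("init window ms: " + initWindowMs(tickTime, 10));
        System.out.println("sync window ms: " + syncWindowMs(tickTime, 5));
    }
}
```

With tickTime=2000, initLimit=10 and syncLimit=5, this gives a minimum session timeout of 4000 ms, an initial connection window of 20000 ms, and a synchronization window of 10000 ms.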

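3 ZooKeeper in Practice (Development Focus)

3.1 Distributed Installation and Deployment

1. Cluster planning

Deploy ZooKeeper on three nodes: hadoop102, hadoop103, and hadoop104.

2. Extract and install

(1) Extract the ZooKeeper installation package into /opt/module/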

[Tom@hadoop102 software]$ tar -zxvf zookeeper-3.5.9.tar.gz -C /opt/module/

(2) Sync the contents of /opt/module/zookeeper-3.5.9 to hadoop103 and hadoop104

[Tom@hadoop102 module]$ xsync zookeeper-3.5.9/

3. Configure the server ID

(1) Create zkData under /opt/module/zookeeper-3.5.9/

[Tom@hadoop102 zookeeper-3.5.9]$ mkdir -p zkData

(2) Create a file named myid in /opt/module/zookeeper-3.5.9/zkData

[Tom@hadoop102 zkData]$ touch myid

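Be sure to create the myid file on Linux itself. A file created in Notepad++ is likely to end up with garbled characters.

(3) Edit the myid file

[Tom@hadoop102 zkData]$ vim myid

Add the number that matches this server's entry in the configuration: 2

(4) Copy the configured ZooKeeper to the other machines

[Tom@hadoop102 zkData]$ xsync myid

Then change the contents of myid on hadoop103 and hadoop104 to 3 and 4 respectively.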

4. Configure the zoo.cfg file

(1) In /opt/module/zookeeper-3.5.9/conf, rename zoo_sample.cfg to zoo.cfg

[Tom@hadoop102 conf]$ mv zoo_sample.cfg zoo.cfg

(2) Open zoo.cfg

[Tom@hadoop102 conf]$ vim zoo.cfg

Change the data storage path

dataDir=/opt/module/zookeeper-3.5.9/zkData

Add the following configuration

#######################cluster##########################

server.2=hadoop102:2888:3888

server.3=hadoop103:2888:3888

server.4=hadoop104:2888:3888

(3) Sync the zoo.cfg file to the other nodes

[Tom@hadoop102 conf]$ xsync zoo.cfg

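(4) Configuration parameters explained

server.A=B:C:D

A is a number that identifies which server this is.

In cluster mode each server has a file named myid in its dataDir directory. The file contains a single value, which is A. At startup ZooKeeper reads this file and compares the value with the entries in zoo.cfg to determine which server it is.

B is the address of this server.

C is the port this server uses as a Follower to exchange information with the Leader of the cluster.

D is the election port. If the Leader goes down, a new Leader has to be elected, and the servers use this port to communicate with each other during the election.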
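The four fields can be pulled out of an entry with a few string operations, as in the sketch below. It handles only the simple `server.A=B:C:D` form used in this article. The class `ServerEntrySketch` and its field names are hypothetical and this is not ZooKeeper's own configuration parser.

```java
// Minimal sketch: split "server.A=B:C:D" into its four parts.
class ServerEntrySketch {
    final int id;           // A: server number, the same value stored in myid
    final String host;      // B: server address
    final int quorumPort;   // C: Follower-to-Leader information exchange
    final int electionPort; // D: leader election traffic

    ServerEntrySketch(int id, String host, int quorumPort, int electionPort) {
        this.id = id;
        this.host = host;
        this.quorumPort = quorumPort;
        this.electionPort = electionPort;
    }

    static ServerEntrySketch parse(String line) {
        String[] keyValue = line.trim().split("=", 2);
        if (keyValue.length != 2 || !keyValue[0].startsWith("server.")) {
            throw new IllegalArgumentException("not a server entry: " + line);
        }
        String[] parts = keyValue[1].split(":");
        if (parts.length != 3) {
            throw new IllegalArgumentException("expected host:port:port in: " + line);
        }
        return new ServerEntrySketch(
                Integer.parseInt(keyValue[0].substring("server.".length())),
                parts[0],
                Integer.parseInt(parts[1]),
                Integer.parseInt(parts[2]));
    }

    public static void main(String[] args) {
        ServerEntrySketch entry = parse("server.2=hadoop102:2888:3888");
        System.out.println("id=" + entry.id + " host=" + entry.host
                + " quorumPort=" + entry.quorumPort + " electionPort=" + entry.electionPort);
    }
}
```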

5. Cluster operations

(1) Start ZooKeeper on each node

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkServer.sh start

[Tom@hadoop103 zookeeper-3.5.9]$ bin/zkServer.sh start

[Tom@hadoop104 zookeeper-3.5.9]$ bin/zkServer.sh start

(2) Check the status

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

[Tom@hadoop103 zookeeper-3.5.9]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: leader

[Tom@hadoop104 zookeeper-3.5.9]$ bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /opt/module/zookeeper-3.5.9/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

3.2 Client Command-Line Operations

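| Command syntax | Description |
|---|---|
| help | Show all available commands |
| ls path [watch] | List the children of the given znode |
| ls2 path [watch] | Show the node's children together with details such as update counts (deprecated in 3.5.9, use `ls -s path`) |
| create | Create a node: -s for a sequential node, -e for an ephemeral node (removed on restart or session timeout) |
| get path [watch] | Get the value of a node |
| set | Set the value of a node |
| stat | Show the status of a node |
| delete | Delete a node |
| rmr | Recursively delete a node (deprecated in 3.5.9, use `deleteall`) |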

1. Start the client

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkCli.sh

2. Show all commands

[zk: localhost:2181(CONNECTED) 0] help

ZooKeeper -server host:port cmd args

addauth scheme auth

close

config [-c] [-w] [-s]

connect host:port

create [-s] [-e] [-c] [-t ttl] path [data] [acl]

delete [-v version] path

deleteall path

delquota [-n|-b] path

get [-s] [-w] path

getAcl [-s] path

history

listquota path

ls [-s] [-w] [-R] path

ls2 path [watch]

printwatches on|off

quit

reconfig [-s] [-v version] [[-file path] | [-members serverID=host:port1:port2;port3[,...]*]] | [-add serverId=host:port1:port2;port3[,...]]* [-remove serverId[,...]*]

redo cmdno

removewatches path [-c|-d|-a] [-l]

rmr path

set [-s] [-v version] path data

setAcl [-s] [-v version] [-R] path acl

setquota -n|-b val path

stat [-w] path

sync path

Command not found: Command not found help

3. List the contents of the current znode

[zk: localhost:2181(CONNECTED) 1] ls /

[admin, brokers, cluster, config, consumers, controller_epoch, hbase, isr_change_notification, latest_producer_id_block, zookeeper]

4. View detailed data for the current node

[zk: localhost:2181(CONNECTED) 2] ls2 /

'ls2' has been deprecated. Please use 'ls [-s] path' instead.

[cluster, controller_epoch, brokers, zookeeper, admin, isr_change_notification, consumers, latest_producer_id_block, config, hbase]

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0xa00000004

cversion = 48

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 10

[zk: localhost:2181(CONNECTED) 3] ls -s /

[admin, brokers, cluster, config, consumers, controller_epoch, hbase, isr_change_notification, latest_producer_id_block, zookeeper]cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0xa00000004

cversion = 48

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 10

5. Create two regular nodes

[zk: localhost:2181(CONNECTED) 4] create /sanguo "shuguo"

Created /sanguo

[zk: localhost:2181(CONNECTED) 5] create /sanguo/shuguo "liubei"

Created /sanguo/shuguo

6. Get the value of a node

[zk: localhost:2181(CONNECTED) 6] get /sanguo

shuguo

[zk: localhost:2181(CONNECTED) 7] get /sanguo/shuguo

liubei

7. Create an ephemeral node

[zk: localhost:2181(CONNECTED) 8] create -e /sanguo/wuguo "zhouyu"

Created /sanguo/wuguo

(1) The node is visible from the current client

[zk: localhost:2181(CONNECTED) 9] ls /sanguo

[shuguo, wuguo]

(2) Quit the current client, then start the client again

[zk: localhost:2181(CONNECTED) 10] quit

[Tom@hadoop102 zookeeper-3.5.9]$ bin/zkCli.sh

(3) List /sanguo again: the ephemeral node has been deleted

[zk: localhost:2181(CONNECTED) 0] ls /sanguo

[shuguo]

8. Create sequential nodes

(1) First create a regular parent node /sanguo/weiguo

[zk: localhost:2181(CONNECTED) 0] create /sanguo/weiguo "xuchu"

Created /sanguo/weiguo

(2) Create the sequential nodes

[zk: localhost:2181(CONNECTED) 1] create -s /sanguo/weiguo/simayi "zhongda"

Created /sanguo/weiguo/simayi0000000000

[zk: localhost:2181(CONNECTED) 2] create -s /sanguo/weiguo/xuyou "zhongda"

Created /sanguo/weiguo/xuyou0000000001

[zk: localhost:2181(CONNECTED) 3] create -s /sanguo/weiguo/caozhen "zhongda"

Created /sanguo/weiguo/caozhen0000000002

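If the parent node has no children yet, sequence numbers start at 0 and increase by one each time. If the parent already has 2 children, numbering starts at 2, and so on.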
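The names shown in the output above are the requested path followed by a ten-digit, zero-padded counter. The sketch below reproduces that naming under the simplified rule described in this section: the counter starts at the number of existing children. `SequentialNameSketch` is a hypothetical helper, not ZooKeeper code.

```java
// Minimal sketch: append a 10-digit zero-padded sequence number to a requested path.
class SequentialNameSketch {
    private int nextSequence; // per-parent counter, simplified as described in the lead-in

    SequentialNameSketch(int existingChildren) {
        this.nextSequence = existingChildren;
    }

    // Returns the final node name and advances the counter.
    String create(String path) {
        return String.format("%s%010d", path, nextSequence++);
    }

    public static void main(String[] args) {
        SequentialNameSketch weiguo = new SequentialNameSketch(0);
        System.out.println(weiguo.create("/sanguo/weiguo/simayi"));
        System.out.println(weiguo.create("/sanguo/weiguo/xuyou"));
        System.out.println(weiguo.create("/sanguo/weiguo/caozhen"));
    }
}
```

Because the counter belongs to the parent, nodes with different name prefixes (simayi, xuyou, caozhen) still share one increasing sequence.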

9. Change the data of a node

[zk: localhost:2181(CONNECTED) 4] set /sanguo/weiguo "simayi"

10. Watch for changes to a node's value

(1) On hadoop104, register a watch for data changes on /sanguo

[zk: localhost:2181(CONNECTED) 0] get /sanguo watch

'get path [watch]' has been deprecated. Please use 'get [-s] [-w] path' instead.

shuguo

(2) On hadoop103, modify the data of /sanguo

[zk: localhost:2181(CONNECTED) 0] set /sanguo "caorui"

(3) Observe that hadoop104 receives the data-change notification

[zk: localhost:2181(CONNECTED) 1]

WATCHER::

WatchedEvent state:SyncConnected type:NodeDataChanged path:/sanguo

11. Watch for changes to a node's children (path changes)

(1) On hadoop104, register a watch for changes to the children of /sanguo

[zk: localhost:2181(CONNECTED) 1] ls /sanguo watch

'ls path [watch]' has been deprecated. Please use 'ls [-w] path' instead.

[shuguo, weiguo]

[zk: localhost:2181(CONNECTED) 2] ls -w /sanguo

[shuguo, weiguo]

(2) On hadoop103, create a child node under /sanguo

[zk: localhost:2181(CONNECTED) 1] create /sanguo/jinguo "simayi"

Created /sanguo/jinguo

(3) Observe that hadoop104 receives the child-change notification

[zk: localhost:2181(CONNECTED) 3]

WATCHER::

WatchedEvent state:SyncConnected type:NodeChildrenChanged path:/sanguo

12. Delete a node

[zk: localhost:2181(CONNECTED) 5] delete /sanguo/jinguo

13. Recursively delete a node

[zk: localhost:2181(CONNECTED) 7] rmr /sanguo/shuguo

The command 'rmr' has been deprecated. Please use 'deleteall' instead.

14. View the status of a node

[zk: localhost:2181(CONNECTED) 11] stat /sanguo

cZxid = 0x1300000002

ctime = Wed Aug 25 09:13:58 CST 2021

mZxid = 0x1400000009

mtime = Wed Aug 25 15:51:32 CST 2021

pZxid = 0x140000000c

cversion = 7

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 6

numChildren = 1

3.3 Using the API

1. Create a Maven project

2. Add the dependencies to the pom file

<dependencies>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>RELEASE</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>2.8.2</version>

</dependency>

<!--https://mvnrepository.com/artifact/org.apache.zookeeper/zookeeper -->

<dependency>

<groupId>org.apache.zookeeper</groupId>

<artifactId>zookeeper</artifactId>

<version>3.5.9</version>

</dependency>

</dependencies>

3. Add a log4j.properties file to the project

In the project's src/main/resources directory, create a new file named log4j.properties and fill in the following contents.

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] -%m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] -%m%n

4. Write the ZooKeeper test code

package com.Tom.zookeeper;

import org.apache.zookeeper.*;

import org.apache.zookeeper.data.Stat;

import org.junit.Before;

import org.junit.Test;

import java.io.IOException;

import java.util.List;

public class TestZookeeper {

private String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";

private int sessionTimeout = 2000;

private ZooKeeper zkClient;

@Before

public void init() throws IOException {

zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {

@Override

public void process(WatchedEvent event) {

System.out.println("----------start----------");

List<String> children;

try {

children = zkClient.getChildren("/", true);

for (String child: children) {

System.out.println(child);

}

System.out.println("------------end----------");

} catch (KeeperException e) {

e.printStackTrace();

} catch (InterruptedException e) {

e.printStackTrace();

}

}

});

}

// 1 Create a node

@Test

public void createNode() throws KeeperException, InterruptedException {

String path = zkClient.create("/sanguo1", "simayi".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);

System.out.println(path);

}

// 2 Get the child nodes and watch for changes

@Test

public void getDataAndWatch() throws KeeperException, InterruptedException {

List<String> children = zkClient.getChildren("/", true);

for (String child: children) {

System.out.println(child);

}

Thread.sleep(Long.MAX_VALUE);

}

// 3 Check whether a node exists

@Test

public void exist() throws KeeperException, InterruptedException {

Stat stat = zkClient.exists("/weigu", false);