Hefei University of Technology Linux Lab 2: Typesetting a Scientific Paper with LaTeX

Posted by 上衫_



I. Objective

By typesetting a scientific paper in LaTeX, learn how to format documents with LaTeX.

II. Tasks and Requirements

Take the PDF file provided by the instructor, which follows the IEEE journal paper format, re-typeset it with LaTeX, and generate an identical PDF file.

III. Procedure and Results

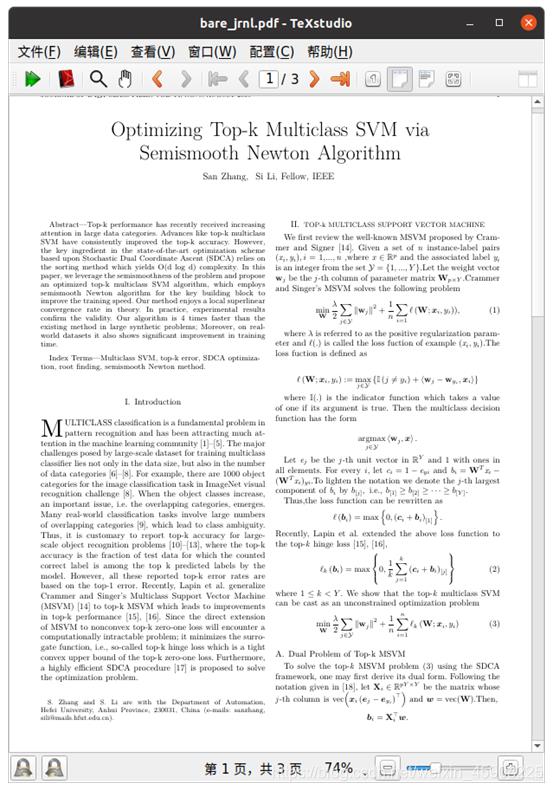

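1. Open the IEEE template provided by the instructor.

2. Following the IEEE template, first build the overall skeleton of the paper: the title and the body text.

3. Add the mathematical formulas.

4. Add the figures.

5. Add the citations.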
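The five steps above can be illustrated with a small, self-contained IEEEtran document. This is a minimal sketch, not the lab's actual file: it assumes a TeX distribution that ships the IEEEtran class and the mwe package (which provides the placeholder image `example-image-a`), and the title, the labels `eq:topk` and `fig:demo`, and the reference key `demo-ref` are all hypothetical. Unlike the full source, which hard-codes numbers such as "problem (3)", it lets LaTeX number the equation and the figure through `\label`, `\eqref` and `\ref`.

```latex
\documentclass[journal]{IEEEtran}
\usepackage{amsmath}   % equation environments, \eqref, \boldsymbol
\usepackage{graphicx}  % \includegraphics
\usepackage{cite}      % sorts and compresses numeric citations

\begin{document}

% Step 2: title, author, running heads, abstract, and body skeleton
\title{A Minimal IEEEtran Example}
\author{First~Author%
\thanks{F. Author is with a hypothetical department.}}
\markboth{Journal of \LaTeX\ Class Files}%
{Author: A Minimal IEEEtran Example}
\maketitle

\begin{abstract}
A one-paragraph abstract.
\end{abstract}

\begin{IEEEkeywords}
LaTeX, IEEEtran.
\end{IEEEkeywords}

\section{Introduction}
\IEEEPARstart{T}{his} paragraph cites a reference~\cite{demo-ref}.

% Step 3: a numbered equation with a label, referenced via \eqref
\begin{equation}
\ell_{k}(\boldsymbol{b}_{i}) = \max\Bigl\{0, \frac{1}{k}\sum_{j=1}^{k}
  (\boldsymbol{c}_{i}+\boldsymbol{b}_{i})_{[j]}\Bigr\}
\label{eq:topk}
\end{equation}
Equation~\eqref{eq:topk} is numbered automatically.

% Step 4: a figure; example-image-a comes from the mwe package
\begin{figure}[!t]
\centering
\includegraphics[width=0.8\columnwidth]{example-image-a}
\caption{A placeholder figure.}
\label{fig:demo}
\end{figure}
Fig.~\ref{fig:demo} is referenced by its label.

% Step 5: an inline bibliography (the full paper uses BibTeX instead)
\begin{thebibliography}{1}
\bibitem{demo-ref} A. Author, ``A hypothetical reference,''
  \emph{Hypothetical Journal}, 2015.
\end{thebibliography}

\end{document}
```

Run pdflatex twice so that the cross-references resolve. The full paper in the next section uses `\bibliography{projection}` instead, so it needs the usual sequence pdflatex, bibtex, pdflatex, pdflatex, with `projection.bib` next to the `.tex` file.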

IV. Source Code

\\documentclass[journal]{IEEEtran}

\\hyphenation{op-tical net-works semi-conduc-tor}

\\makeatletter

\\newcommand{\\rmnum}[1]{\\romannumeral #1}

\\newcommand{\\Rmnum}[1]{\\expandafter\\@slowromancap\\romannumeral #1@}

\\makeatother

\\usepackage{amssymb}

\\usepackage{amsmath}

\\usepackage{multirow}

\\usepackage[text={190mm,250mm},centering]{geometry}

\\usepackage{indentfirst}

\\usepackage{graphicx}

\\usepackage{epstopdf}

\\usepackage{cite}

\\usepackage{stfloats}

\\begin{document}

\\title{Optimizing Top-k Multiclass SVM via\\\\Semismooth Newton Algorithm}

\\author{San~Zhang,~Si~Li,~\\IEEEmembership{Fellow,~IEEE}

\\thanks{S. Zhang and S. Li are with the Department of Automation, Hefei University, Anhui Province, 230031, China (e-mails: \\{sanzhang, sili\\}@mails.hfut.edu.cn).}}

\\markboth{Journal of \\LaTeX\\ Class Files,~Vol.~14, No.~8, August~2015}%
{Shell \\MakeLowercase{\\textit{et al.}}: Bare Demo of IEEEtran.cls for IEEE Journals}

\\maketitle

\\begin{abstract}

Top-$k$ performance has recently received increasing attention in problems with a large number of data categories. Advances like top-$k$ multiclass SVM have consistently improved the top-$k$ accuracy. However, the key ingredient in the state-of-the-art optimization scheme based upon Stochastic Dual Coordinate Ascent (SDCA) relies on a sorting method, which yields $O(d \\log d)$ complexity. In this paper, we leverage the semismoothness of the problem and propose an optimized top-$k$ multiclass SVM algorithm, which employs the semismooth Newton algorithm for the key building block to improve the training speed. Our method enjoys a local superlinear convergence rate in theory. In practice, experimental results confirm its effectiveness: our algorithm is 4 times faster than the existing method on large synthetic problems, and it also shows a significant improvement in training time on real-world datasets.

\\end{abstract}

\\begin{IEEEkeywords}

Multiclass SVM, top-$k$ error, SDCA optimization, root finding, semismooth Newton method.

\\end{IEEEkeywords}

\\IEEEpeerreviewmaketitle

\\section{Introduction}

\\IEEEPARstart{M}{ULTICLASS} classification is a fundamental problem in pattern recognition and has been attracting much attention in the machine learning community \\cite{Bishop2006Pattern,Hsu2002comparison,Rifkin2004In,Yuan2012Recent,Rocha2014Multiclass}. The major challenges posed by large-scale datasets for training multiclass classifiers lie not only in the data size but also in the number of data categories \\cite{Deng2010What,Zhou2014Learning,Russakovsky2015Imagenet}. For example, there are 1000 object categories in the image classification task of the ImageNet visual recognition challenge \\cite{Russakovsky2015Imagenet}. As the number of object classes increases, an important issue emerges, i.e., overlapping categories. Many real-world classification tasks involve large numbers of overlapping categories \\cite{Cai2007Exploiting}, resulting in class ambiguity. Thus, it is customary to report top-$k$ accuracy for large-scale object recognition problems \\cite{Krizhevsky2012Imagenet,Simonyan2014Very,Szegedy2015Going,He2016Deep}, where the top-$k$ accuracy is the fraction of test data for which the correct label is among the top $k$ labels predicted by the model. However, all these reported top-$k$ error rates are obtained from models trained to minimize the top-1 error. Recently, Lapin et al. generalized Crammer and Singer's Multiclass Support Vector Machine (MSVM) \\cite{Crammer2001algorithmic} to top-$k$ MSVM, which leads to improvements in top-$k$ performance \\cite{Lapin2015Top}, \\cite{Lapin2016Loss}.

Since directly extending MSVM to the nonconvex top-$k$ zero-one loss leads to a computationally intractable problem, top-$k$ MSVM instead minimizes a surrogate function, the so-called top-$k$ hinge loss, which is a tight convex upper bound of the top-$k$ zero-one loss. Furthermore, a highly efficient SDCA procedure \\cite{Zhang2015Stochastic} is proposed to solve the resulting optimization problem.

\\section{Top-$k$ Multiclass Support Vector Machine}

We first review the well-known MSVM proposed by Crammer and Singer \\cite{Crammer2001algorithmic}. Suppose we are given a set of $n$ instance-label pairs $(\\boldsymbol{x}_{i}, y_{i})$, $i = 1, \\ldots, n$, where $\\boldsymbol{x}_{i} \\in \\mathbb{R}^{p}$ and the associated label $y_{i}$ is an integer from the set $\\mathcal{Y} = \\{1, \\ldots, Y\\}$. Let the weight vector $\\mathbf{w}_{j}$ be the $j$-th column of the parameter matrix $\\mathbf{W} \\in \\mathbb{R}^{p \\times Y}$. Crammer and Singer's MSVM solves the following problem

\\begin{equation}
\\min _{\\mathbf{W}} \\frac{\\lambda}{2} \\sum_{j \\in \\mathcal{Y}}\\left\\|\\mathbf{w}_{j}\\right\\|^{2}+\\frac{1}{n} \\sum_{i=1}^{n} \\ell\\left(\\mathbf{W} ; \\boldsymbol{x}_{i}, y_{i}\\right),
\\end{equation}

where $\\lambda > 0$ is the regularization parameter and $\\ell(\\cdot)$ is the loss function of example $(\\boldsymbol{x}_{i}, y_{i})$. The loss function is defined as

$$\\ell\\left(\\mathbf{W} ; \\boldsymbol{x}_{i}, y_{i}\\right):=\\max _{j \\in \\mathcal{Y}}\\left\\{\\mathbb{I}\\left(j \\neq y_{i}\\right)+\\left\\langle\\mathbf{w}_{j}-\\mathbf{w}_{y_{i}}, \\boldsymbol{x}_{i}\\right\\rangle\\right\\}$$

where $\\mathbb{I}(\\cdot)$ is the indicator function, which takes the value one if its argument is true and zero otherwise. The multiclass decision function then has the form

$$\\underset{j \\in \\mathcal{Y}}{\\operatorname{argmax}}\\left\\langle\\mathbf{w}_{j}, \\boldsymbol{x}\\right\\rangle.$$

Let $\\boldsymbol{e}_{j}$ be the $j$-th unit vector in $\\mathbb{R}^{Y}$ and $\\mathbf{1}$ the vector with ones in all elements. For every $i$, let $\\boldsymbol{c}_{i} = \\mathbf{1} - \\boldsymbol{e}_{y_{i}}$ and $\\boldsymbol{b}_{i} = \\mathbf{W}^{\\top}\\boldsymbol{x}_{i} - (\\mathbf{W}^{\\top}\\boldsymbol{x}_{i})_{y_{i}}\\mathbf{1}$. To lighten the notation, we denote the $j$-th largest component of $\\boldsymbol{b}_{i}$ by $b_{[j]}$, i.e., $b_{[1]} \\geq b_{[2]} \\geq \\cdots \\geq b_{[Y]}$.

Thus, the loss function can be rewritten as $$\\ell\\left(\\boldsymbol{b}_{i}\\right)=\\max \\left\\{0,\\left(\\boldsymbol{c}_{i}+\\boldsymbol{b}_{i}\\right)_{[1]}\\right\\}.$$

Recently, Lapin et al. extended the above loss function to the top-$\\mathit{k}$ hinge loss \\cite{Lapin2015Top}, \\cite{Lapin2016Loss},

\\begin{equation}
\\ell_{k}\\left(\\boldsymbol{b}_{i}\\right)=\\max \\left\\{0, \\frac{1}{k} \\sum_{j=1}^{k}\\left(\\boldsymbol{c}_{i}+\\boldsymbol{b}_{i}\\right)_{[j]}\\right\\},
\\end{equation}

where $1 \\leq k < Y$. The top-$k$ multiclass SVM can then be cast as the unconstrained optimization problem

\\begin{equation}
\\min _{\\mathbf{W}} \\frac{\\lambda}{2} \\sum_{j \\in \\mathcal{Y}}\\left\\|\\mathbf{w}_{j}\\right\\|^{2}+\\frac{1}{n} \\sum_{i=1}^{n} \\ell_{k}\\left(\\mathbf{W} ; \\boldsymbol{x}_{i}, y_{i}\\right).
\\end{equation}

\\subsection{Dual Problem of Top-k MSVM}

To solve the top-$k$ MSVM problem (3) using the SDCA framework, one may first derive its dual form. Following the notation given in \\cite{Shalev-Shwartz2016Accelerated}, let $\\mathbf{X}_{i} \\in \\mathbb{R}^{p Y \\times Y}$ be the matrix whose $j$-th column is $\\operatorname{vec}\\left(\\boldsymbol{x}_{i}\\left(\\boldsymbol{e}_{j}-\\boldsymbol{e}_{y_{i}}\\right)^{\\top}\\right)$, and let $\\boldsymbol{w}=\\operatorname{vec}(\\mathbf{W})$. Then,

$$ \\boldsymbol{b}_{i}=\\mathbf{X}_{i}^{\\top} \\boldsymbol{w}.$$

Hence we can reformulate the primal optimization problem of top-$\\mathit{k}$ MSVM as

\\begin{equation}
\\min _{\\boldsymbol{w} \\in \\mathbb{R}^{p Y}} P(\\boldsymbol{w}):=\\frac{\\lambda}{2} \\boldsymbol{w}^{\\top} \\boldsymbol{w}+\\frac{1}{n} \\sum_{i=1}^{n} \\ell_{k}\\left(\\boldsymbol{w} ; \\mathbf{X}_{i}, y_{i}\\right).
\\end{equation}

For the $i$-th example, the dual coordinate update is obtained by solving the following optimization problem

\\begin{equation}
\\begin{aligned}
\\min_{\\boldsymbol{\\alpha}_{i}} \\quad & \\frac{1}{2}\\left\\|\\boldsymbol{\\alpha}_{i}\\right\\|_{2}^{2}+\\boldsymbol{a}_{i}^{\\top} \\boldsymbol{\\alpha}_{i}+\\frac{1}{2}\\left(\\mathbf{1}^{\\top} \\boldsymbol{\\alpha}_{i}\\right)^{2} \\\\
\\text{s.t.} \\quad & 0 \\leq -\\boldsymbol{\\alpha}_{i} \\leq \\frac{1}{k} \\sum_{j}\\left(-\\alpha_{i}^{j}\\right), \\\\
& \\sum_{j}\\left(-\\alpha_{i}^{j}\\right) \\leq 1, \\quad \\alpha_{i}^{y_{i}}=0,
\\end{aligned}
\\end{equation}

where

$$\\boldsymbol{a}_{i}=\\frac{1}{\\rho_{i}}\\left(\\boldsymbol{c}_{i}+\\mathbf{X}_{i}^{\\top} \\hat{\\boldsymbol{w}}\\right), \\rho_{i}=\\frac{1}{n \\lambda}\\left\\|\\boldsymbol{x}_{i}\\right\\|^{2}$$

Here, calculating $\\mathbf{X}_{i}^{\\top} \\hat{\\boldsymbol{w}}$ directly still takes $O\\left(p Y^{2}\\right)$ operations, which is too expensive. Instead, we reshape the vector $\\hat{\\boldsymbol{w}}$ into a $p$-by-$Y$ matrix $\\hat{\\boldsymbol{W}}$. Thus the computation

$$\\mathbf{X}_{i}^{\\top} \\hat{\\boldsymbol{w}}=\\hat{\\boldsymbol{W}}^{\\top} \\boldsymbol{x}_{i}-\\left(\\hat{\\boldsymbol{W}}^{\\top} \\boldsymbol{x}_{i}\\right)_{y_{i}}\\mathbf{1}$$

takes $O(p Y)$ operations. To avoid heavy notation, we drop the subscript of $\\boldsymbol{a}_{i}$ and let $\\boldsymbol{z} = -\\boldsymbol{\\alpha}_{i}^{\\backslash y_{i}}$ and $s = \\sum_{j} z_{j}$, where $\\boldsymbol{\\alpha}_{i}^{\\backslash y_{i}}$ denotes $\\boldsymbol{\\alpha}_{i}$ with its $y_{i}$-th entry removed. Then problem (5) can be rewritten as

\\begin{equation}
\\begin{aligned}
\\min_{\\boldsymbol{z}, s} \\quad & \\frac{1}{2}\\|\\boldsymbol{z}-\\boldsymbol{a}\\|^{2}+\\frac{1}{2} s^{2} \\\\
\\text{s.t.} \\quad & s=\\sum_{j} z_{j}, \\quad s \\leq 1, \\\\
& 0 \\leq z_{j} \\leq s / k.
\\end{aligned}
\\end{equation}

Once problem (6) is solved, a sufficient increase of the dual objective is achieved, while for the primal problem the process leads to the update

\\begin{equation}
\\boldsymbol{w}=\\boldsymbol{w}+\\frac{1}{n \\lambda} \\mathbf{X}_{i}\\left(\\boldsymbol{\\alpha}_{i}-\\boldsymbol{\\alpha}_{i}^{\\text {old }}\\right).
\\end{equation}

The pseudo-code of the SDCA algorithm for top-$k$ MSVM is given in Algorithm 1. To obtain the initial $\\boldsymbol{w}$, we can initialize $\\boldsymbol{\\alpha}_{i} = \\mathbf{0}$ for all $i$, which gives $\\boldsymbol{w} = \\mathbf{0}$.

\\begin{table}[h]
\\begin{tabular}{llllllll}
\\hline
\\multicolumn{8}{l}{\\begin{tabular}[c]{@{}l@{}}Algorithm 1 Stochastic Dual Coordinate Ascent Algorithm\\\\ for Top-$k$ MSVM\\end{tabular}} \\\\ \\hline
\\multicolumn{8}{l}{\\textbf{Require: $\\alpha,\\lambda,k,\\epsilon$}} \\\\
\\multicolumn{8}{l}{\\begin{tabular}[c]{@{}l@{}}
1:$\\boldsymbol{w} \\leftarrow \\sum_{i} \\frac{1}{n \\lambda} \\mathbf{X}_{i} \\boldsymbol{\\alpha}_{i}$ \\\\
2:while $\\alpha$ is not optimal do \\\\
3:~~~~~Randomly permute the training examples \\\\
4:~~~~~for $i = 1,\\ldots,n$ do \\\\
5:~~~~~~~~~$\\boldsymbol{\\alpha}_{i}^{\\text{old}} \\leftarrow \\boldsymbol{\\alpha}_{i}$ \\\\
6:~~~~~~~~~Update $\\boldsymbol{\\alpha}_{i}$ by solving sub-problem (6) \\\\
7:~~~~~~~~~$\\boldsymbol{w} \\leftarrow \\boldsymbol{w}+\\frac{1}{n \\lambda} \\mathbf{X}_{i}\\left(\\boldsymbol{\\alpha}_{i}-\\boldsymbol{\\alpha}_{i}^{\\text{old}}\\right)$ \\\\
8:~~~~~end for \\\\
9:end while
\\end{tabular}} \\\\
\\multicolumn{8}{l}{\\multirow{2}{*}{\\textbf{Ensure: $\\boldsymbol{w},\\alpha$}}} \\\\
\\multicolumn{8}{l}{} \\\\ \\hline
\\end{tabular}
\\end{table}

\\section{ EXPERIMENTS}

In this section, we first demonstrate the performance of our semismooth Newton method on synthetic data. Then, we apply our algorithm to the top-$k$ multiclass SVM problem to show its efficiency compared with the existing method in \\cite{Lapin2015Top}. Our algorithms are implemented in C with a Matlab interface and run on a 3.1\\,GHz Intel Xeon (E5-2687W) Linux machine with 128\\,GB of memory. The compiler is GCC 4.8.4, and both our code and the libsdca package of \\cite{Lapin2015Top} are compiled with the “-O3” optimization flag. The experiments are carried out in Matlab 2016a. The full implementation will be released publicly online.

\\subsection{Efficiency of the Proposed Algorithm}

To investigate the scalability of our algorithm with respect to the problem dimension, three synthetic problems are randomly generated with $d$ ranging between 50,000 and 2,000,000. In the first test problem, $a_{j}$ is randomly chosen from the uniform distribution $U(15, 25)$ as in \\cite{Cominetti2014Newtons}, \\cite{Kiwiel2008Variable}. In the second test problem, following the setup of \\cite{Lapin2015Top}, \\cite{Gong2011Efficient}, data entries are sampled from the normal distribution $\\mathcal{N}(0, 1)$. In the third test problem, $a_{j}$ is chosen by independent draws from the uniform distribution $U(-1, 1)$. For a clean comparison, we consider the problem without the constraint $s \\le r$. Thus, the knapsack problem, which corresponds to the case $s = r$, does not arise in these synthetic problems.

We first present numerical results to investigate the scalability of our proposed algorithm compared with the sorting-based method for different values of $k = 1, 5, 10$. Fig.~1(a), 1(b), and 1(c) correspond to the first, second, and third test problems, respectively. They show that the running times of both the sorting-based method and our proposed algorithm grow linearly with the problem size. However, our algorithm is consistently much faster than the sorting-based method. When the problem size reaches $d = 2 \\times 10^{6}$, our proposed algorithm is 2.5 times faster in the first problem and 4 times faster in both the second and third problems. In addition to its superlinear convergence, the semismooth Newton method reaches accurate solutions within a few iterations: our numerical results suggest that it usually takes 3 to 5 iterations to converge.

\\begin{table}[h]
\\caption{Datasets used in the experimental evaluation.}
\\begin{tabular}{l|llll}
\\hline
Dataset & Classes & Features & Training size & Testing size \\\\ \\hline
FMD & 10 & 2,048 & 500 & 500 \\\\
News20 & 20 & 15,478 & 15,935 & 3,993 \\\\
Letter & 26 & 16 & 15,000 & 5,000 \\\\
Indoor67 & 67 & 4,096 & 5,360 & 1,340 \\\\
Caltech101 & 101 & 784 & 4,100 & 4,100 \\\\
Flowers & 102 & 2,048 & 2,040 & 6,149 \\\\
CUB & 200 & 2,048 & 5,994 & 5,794 \\\\
SUN397 & 397 & 4,096 & 19,850 & 19,850 \\\\
ALOI & 1,000 & 128 & 86,400 & 21,600 \\\\
ImageNet & 1,000 & 2,048 & 1,281,167 & 50,000 \\\\ \\hline
\\end{tabular}
\\end{table}

\\ifCLASSOPTIONcaptionsoff

\\newpage

\\fi

\\section{CONCLUDING REMARKS}

In this paper, we leverage the semismoothness of the optimization problem and develop an optimized top-$k$ multiclass SVM. Our semismooth Newton method enjoys a local superlinear convergence rate, and we also present an efficient algorithm for obtaining the starting point, which works well in practice for the Newton iteration. Experimental results on both synthetic and real-world datasets show that our proposed method scales better with larger numbers of categories and converges faster than the existing sorting-based algorithm. We note that there are many other semismooth scenarios, such as the ReLU activation function in deep neural networks and the hinge loss in empirical risk minimization problems. Exploiting such semismooth structure to design more efficient machine learning algorithms is a promising direction for future work.

\\begin{figure*}[ht]
\\centering
\\includegraphics[width=5.5cm]{scale1.eps}
\\hspace{10pt}% horizontal spacing between images
\\includegraphics[width=5.5cm]{scale2.eps}
\\hspace{10pt}%
\\includegraphics[width=5.5cm]{scale3.eps}
\\caption{Scaling of our algorithm compared with the sorting-based method. Left: $a_{j} \\sim U(10,25)$. Middle: $a_{j} \\sim U(0,1)$. Right: $a_{j} \\sim U(-1,1)$.}
\\end{figure*}

\\section*{ACKNOWLEDGEMENTS}

The authors would like to thank the reviewers for their valuable suggestions on improving this paper. Thanks also go to Wu Wang for the helpful email exchange.

\\bibliographystyle{ieeetr}

\\bibliography{projection}

\\end{document}
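The Algorithm 1 block in the source above is built from nested `tabular` environments, with `~` used for indentation. One common alternative, swapped in here rather than taken from the lab, is the `algorithm` float together with `algpseudocode` from the algorithmicx bundle, which numbers lines, indents loops, and captions the float automatically. This is a sketch assuming both packages are installed (they are part of TeX Live) and that `amsmath` is loaded as in the source; the label `alg:sdca` is hypothetical.

```latex
% Preamble additions
\usepackage{algorithm}      % floating "Algorithm n" environment with \caption
\usepackage{algpseudocode}  % \State, \While, \For, ... from algorithmicx

% Body: a replacement for the table-based Algorithm 1
\begin{algorithm}[h]
\caption{Stochastic Dual Coordinate Ascent Algorithm for Top-$k$ MSVM}
\label{alg:sdca}
\begin{algorithmic}[1] % [1] numbers every line
\Require $\alpha, \lambda, k, \epsilon$
\Ensure $\boldsymbol{w}, \alpha$
\State $\boldsymbol{w} \gets \sum_{i} \frac{1}{n\lambda} \mathbf{X}_{i} \boldsymbol{\alpha}_{i}$
\While{$\alpha$ is not optimal}
  \State Randomly permute the training examples
  \For{$i = 1, \ldots, n$}
    \State $\boldsymbol{\alpha}_{i}^{\text{old}} \gets \boldsymbol{\alpha}_{i}$
    \State Update $\boldsymbol{\alpha}_{i}$ by solving sub-problem (6)
    \State $\boldsymbol{w} \gets \boldsymbol{w} + \frac{1}{n\lambda} \mathbf{X}_{i}\left(\boldsymbol{\alpha}_{i} - \boldsymbol{\alpha}_{i}^{\text{old}}\right)$
  \EndFor
\EndWhile
\end{algorithmic}
\end{algorithm}
```

With this approach the text can write `Algorithm~\ref{alg:sdca}` instead of a hard-coded "Algorithm 1". Note that `algpseudocode` prints Require/Ensure at the top, so the layout differs slightly from the target PDF, where Ensure sits at the bottom.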
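The experiments text refers to Fig. 1(a), 1(b), and 1(c), but the figure in the source places three unlabeled images side by side and describes them as Left/Middle/Right in the caption. A sketch of labeled panels using the subfig package, loaded with the `caption=false` option that the IEEEtran documentation suggests; the image file names are the lab's, the panel captions reuse the source caption's distributions, and the `fig:scale*` labels are hypothetical. It assumes the source's preamble (graphicx and epstopdf for the `.eps` files).

```latex
% Preamble addition
\usepackage[caption=false,font=footnotesize]{subfig}

% Body: three panels that subfig labels (a), (b), (c)
\begin{figure*}[!t]
\centering
\subfloat[$a_{j} \sim U(10,25)$]{\includegraphics[width=5.5cm]{scale1.eps}%
  \label{fig:scale-a}}
\hfil
\subfloat[$a_{j} \sim U(0,1)$]{\includegraphics[width=5.5cm]{scale2.eps}%
  \label{fig:scale-b}}
\hfil
\subfloat[$a_{j} \sim U(-1,1)$]{\includegraphics[width=5.5cm]{scale3.eps}%
  \label{fig:scale-c}}
\caption{Scaling of our algorithm compared with the sorting-based method.}
\label{fig:scale}
\end{figure*}
```

The panels can then be cited through their labels rather than by hand-written "(a)", "(b)", "(c)".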
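Section II-A of the paper states that reshaping reduces the cost of computing `X_i^T w` from O(pY^2) to O(pY). The step can be checked entry by entry using only the definition of the j-th column of `X_i` and the identity vec(A)^T vec(B) = tr(A^T B). The derivation below is a worked sketch written for a generic `W`; the hatted quantities behave the same way.

```latex
\begin{align*}
\bigl(\mathbf{X}_{i}^{\top}\boldsymbol{w}\bigr)_{j}
  &= \operatorname{vec}\bigl(\boldsymbol{x}_{i}(\boldsymbol{e}_{j}-\boldsymbol{e}_{y_{i}})^{\top}\bigr)^{\top}
     \operatorname{vec}(\mathbf{W})
   = \operatorname{tr}\bigl((\boldsymbol{e}_{j}-\boldsymbol{e}_{y_{i}})\,\boldsymbol{x}_{i}^{\top}\mathbf{W}\bigr) \\
  &= \boldsymbol{x}_{i}^{\top}\mathbf{W}(\boldsymbol{e}_{j}-\boldsymbol{e}_{y_{i}})
   = (\mathbf{W}^{\top}\boldsymbol{x}_{i})_{j} - (\mathbf{W}^{\top}\boldsymbol{x}_{i})_{y_{i}}.
\end{align*}
```

Forming all Y entries naively costs Y inner products of length pY, i.e. O(pY^2), whereas computing `W^T x_i` once costs O(pY) and each entry then needs only one subtraction.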

V. Experimental Results
